Nvidia releases AI chip H200: performance soars by 90%, Llama 2 inference speed doubles

DoNews, November 14: NVIDIA released its next generation of artificial intelligence supercomputer chips on November 13, Beijing time. These chips will play an important role in deep learning and in large language models (LLMs) such as OpenAI's GPT-4.

The new chips are a significant step up from the previous generation and will be widely used in data centers and supercomputers to handle complex tasks such as weather and climate prediction, drug research and development, and quantum computing.

The key product in the release is the HGX H200, based on NVIDIA's "Hopper" architecture. It succeeds the H100 GPU and is the company's first chip to use HBM3e memory. HBM3e is faster and offers more capacity, which makes it well suited to large language models.

NVIDIA said that with HBM3e, the H200 delivers 141GB of memory at 4.8TB per second, nearly double the capacity and 2.4 times the bandwidth of the A100.
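As a rough check of those ratios, the short sketch below divides the H200 figures quoted above by A100 figures. The A100 numbers are assumptions, not from this article: they are the 80GB A100 variant's capacity and a rounded memory bandwidth of about 2.0TB per second.

```python
# H200 figures are the ones quoted in the article.
H200_CAPACITY_GB = 141
H200_BANDWIDTH_TBPS = 4.8

# Assumed baseline (not from the article): the 80GB A100 variant,
# with its memory bandwidth rounded to 2.0 TB/s.
A100_CAPACITY_GB = 80
A100_BANDWIDTH_TBPS = 2.0


def ratio(new: float, old: float) -> float:
    """Return how many times larger `new` is than `old`."""
    return new / old


capacity_ratio = ratio(H200_CAPACITY_GB, A100_CAPACITY_GB)
bandwidth_ratio = ratio(H200_BANDWIDTH_TBPS, A100_BANDWIDTH_TBPS)

print(f"Capacity:  {capacity_ratio:.2f}x the assumed A100 baseline")
print(f"Bandwidth: {bandwidth_ratio:.2f}x the assumed A100 baseline")
```

Under these assumptions the capacity ratio comes out a little under 2 and the bandwidth ratio at about 2.4, which is consistent with the "almost twice" and "2.4 times" wording.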

For AI workloads, NVIDIA claims that the HGX H200 runs inference on Llama 2 (a 70-billion-parameter LLM) twice as fast as the H100. The HGX H200 will be available in 4-way and 8-way configurations and is compatible with the software and hardware of H100 systems.

It can be deployed in every type of data center (on-premises, cloud, hybrid cloud and edge), will be offered by Amazon Web Services, Google Cloud, Microsoft Azure and Oracle Cloud Infrastructure, among others, and is scheduled to roll out in Q2 2024.

The other key product in this release is the GH200 Grace Hopper "superchip", which combines the HGX H200 GPU with the Arm-based NVIDIA Grace CPU through the company's NVLink-C2C interconnect. According to NVIDIA, it is designed for supercomputers and allows "scientists and researchers to solve the world's most challenging problems by accelerating complex AI and HPC applications running terabytes of data."

The GH200 will be used in "more than 40 AI supercomputers at research centers, system manufacturers and cloud providers around the world," including systems from Dell, Eviden, Hewlett Packard Enterprise (HPE), Lenovo, QCT and Supermicro.

Notably, HPE's Cray EX2500 supercomputer will use a four-way GH200 configuration and can scale to tens of thousands of Grace Hopper superchip nodes.


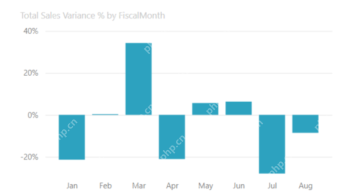

Most Used 10 Power BI Charts - Analytics VidhyaApr 16, 2025 pm 12:05 PM

Most Used 10 Power BI Charts - Analytics VidhyaApr 16, 2025 pm 12:05 PMHarnessing the Power of Data Visualization with Microsoft Power BI Charts In today's data-driven world, effectively communicating complex information to non-technical audiences is crucial. Data visualization bridges this gap, transforming raw data i

Expert Systems in AIApr 16, 2025 pm 12:00 PM

Expert Systems in AIApr 16, 2025 pm 12:00 PMExpert Systems: A Deep Dive into AI's Decision-Making Power Imagine having access to expert advice on anything, from medical diagnoses to financial planning. That's the power of expert systems in artificial intelligence. These systems mimic the pro

Three Of The Best Vibe Coders Break Down This AI Revolution In CodeApr 16, 2025 am 11:58 AM

Three Of The Best Vibe Coders Break Down This AI Revolution In CodeApr 16, 2025 am 11:58 AMFirst of all, it’s apparent that this is happening quickly. Various companies are talking about the proportions of their code that are currently written by AI, and these are increasing at a rapid clip. There’s a lot of job displacement already around

Runway AI's Gen-4: How Can AI Montage Go Beyond AbsurdityApr 16, 2025 am 11:45 AM

Runway AI's Gen-4: How Can AI Montage Go Beyond AbsurdityApr 16, 2025 am 11:45 AMThe film industry, alongside all creative sectors, from digital marketing to social media, stands at a technological crossroad. As artificial intelligence begins to reshape every aspect of visual storytelling and change the landscape of entertainment

How to Enroll for 5 Days ISRO AI Free Courses? - Analytics VidhyaApr 16, 2025 am 11:43 AM

How to Enroll for 5 Days ISRO AI Free Courses? - Analytics VidhyaApr 16, 2025 am 11:43 AMISRO's Free AI/ML Online Course: A Gateway to Geospatial Technology Innovation The Indian Space Research Organisation (ISRO), through its Indian Institute of Remote Sensing (IIRS), is offering a fantastic opportunity for students and professionals to

Local Search Algorithms in AIApr 16, 2025 am 11:40 AM

Local Search Algorithms in AIApr 16, 2025 am 11:40 AMLocal Search Algorithms: A Comprehensive Guide Planning a large-scale event requires efficient workload distribution. When traditional approaches fail, local search algorithms offer a powerful solution. This article explores hill climbing and simul

OpenAI Shifts Focus With GPT-4.1, Prioritizes Coding And Cost EfficiencyApr 16, 2025 am 11:37 AM

OpenAI Shifts Focus With GPT-4.1, Prioritizes Coding And Cost EfficiencyApr 16, 2025 am 11:37 AMThe release includes three distinct models, GPT-4.1, GPT-4.1 mini and GPT-4.1 nano, signaling a move toward task-specific optimizations within the large language model landscape. These models are not immediately replacing user-facing interfaces like

The Prompt: ChatGPT Generates Fake PassportsApr 16, 2025 am 11:35 AM

The Prompt: ChatGPT Generates Fake PassportsApr 16, 2025 am 11:35 AMChip giant Nvidia said on Monday it will start manufacturing AI supercomputers— machines that can process copious amounts of data and run complex algorithms— entirely within the U.S. for the first time. The announcement comes after President Trump si
