The importance of cross-validation cannot be ignored!

First, we need to figure out why cross-validation is needed.

Cross-validation is a technique commonly used in machine learning and statistics to evaluate the performance and generalization ability of a predictive model. It is extremely valuable when data are limited or when you need to evaluate how well the model generalizes to new, unseen data.

When is cross-validation used?

- Model performance evaluation: Cross-validation helps estimate the performance of the model on unseen data. By training and evaluating the model on multiple subsets of the data, cross-validation provides a more robust estimate of model performance than a single train-test split.

- Data efficiency: When data is limited, cross-validation makes full use of all available samples. Every sample is used for both training and evaluation, though never within the same fold, which provides a more reliable assessment of model performance.

- Hyperparameter tuning: Cross-validation is often used to select the best hyperparameters for a model. By evaluating each hyperparameter setting across the different subsets of the data, you can identify the values with the best average performance.

- Detecting overfitting: Cross-validation helps detect whether the model is overfitting the training data. If the model performs significantly better on the training set than on the validation set, it may indicate overfitting and requires adjustments, such as regularization or choosing a simpler model.

- Generalization ability evaluation: Cross-validation provides an evaluation of the model's ability to generalize to unseen data. By evaluating the model on multiple data splits, it helps evaluate the model's ability to capture underlying patterns in the data without relying on randomness or a specific train-test split.
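The hyperparameter-tuning and overfitting points above can be sketched roughly as follows. This is only an illustration: the Ridge model, the alpha grid, the synthetic data, and the seeds are assumptions and do not come from the original article. `GridSearchCV` with `return_train_score=True` records both training and validation scores for each setting.

```python
from sklearn.datasets import make_regression
from sklearn.linear_model import Ridge
from sklearn.model_selection import GridSearchCV, KFold

# Synthetic regression data; sizes, noise level, and seed are illustrative.
X, y = make_regression(n_samples=200, n_features=20, noise=10.0, random_state=0)

# Candidate regularization strengths (a hypothetical grid).
param_grid = {"alpha": [0.01, 0.1, 1.0, 10.0, 100.0]}

search = GridSearchCV(
    Ridge(),
    param_grid,
    cv=KFold(n_splits=5, shuffle=True, random_state=0),
    scoring="r2",
    return_train_score=True,  # keep training scores to compare with validation scores
)
search.fit(X, y)

print("best alpha:", search.best_params_["alpha"])
for params, train, val in zip(
    search.cv_results_["params"],
    search.cv_results_["mean_train_score"],
    search.cv_results_["mean_test_score"],
):
    # A large gap between the two means hints at overfitting for that setting.
    print(f"alpha={params['alpha']}: train R2={train:.3f}, validation R2={val:.3f}")
```

A training score far above the validation score for a given setting is the warning sign described in the overfitting bullet.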

The general idea of cross-validation can be shown with a figure of 5-fold cross-validation. In each iteration, a new model is trained on four sub-datasets and tested on the one remaining held-out sub-dataset, which ensures that all of the data is used. Indicators such as the average score and standard deviation then give a realistic measure of model performance.
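A minimal sketch of that 5-fold procedure, assuming a plain `LinearRegression` model and synthetic data (both chosen only for illustration): `cross_val_score` trains one model per fold and returns the five held-out scores, from which the mean and standard deviation are computed.

```python
from sklearn.datasets import make_regression
from sklearn.linear_model import LinearRegression
from sklearn.model_selection import KFold, cross_val_score

# Synthetic data; sizes, noise level, and seed are illustrative.
X, y = make_regression(n_samples=100, n_features=5, noise=5.0, random_state=0)

# Five folds: each iteration trains on four folds and scores on the fifth.
cv = KFold(n_splits=5, shuffle=True, random_state=0)
scores = cross_val_score(LinearRegression(), X, y, cv=cv, scoring="r2")

print("per-fold R2:", scores.round(3))
print(f"mean={scores.mean():.3f}, std={scores.std():.3f}")
```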

It all starts with K-fold cross-validation.

KFold

K-fold cross-validation has been integrated in Sklearn. Here is a 7-fold example:

    from sklearn.datasets import make_regression
    from sklearn.model_selection import KFold

    x, y = make_regression(n_samples=100)

    # Init the splitter
    cross_validation = KFold(n_splits=7)

Another common operation is to shuffle the data before splitting. This destroys the original order of the samples, which further reduces the risk of overfitting to that order:

    cross_validation = KFold(n_splits=7, shuffle=True)

In this way, a simple k-fold cross-validation is set up. Be sure to check out the source code!
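To see what the splitter actually produces, the following sketch iterates over the folds. The placeholder array `X` and the fixed `random_state` are assumptions added here so the run is repeatable; they are not in the original example.

```python
import numpy as np
from sklearn.model_selection import KFold

X = np.arange(100).reshape(-1, 1)  # 100 placeholder samples

cross_validation = KFold(n_splits=7, shuffle=True, random_state=0)

test_sizes = []
seen = []
for fold, (train_idx, test_idx) in enumerate(cross_validation.split(X)):
    test_sizes.append(len(test_idx))
    seen.extend(test_idx.tolist())
    print(f"fold {fold}: train={len(train_idx)}, test={len(test_idx)}")

# Every sample is held out exactly once across the 7 folds.
print(sorted(seen) == list(range(100)))
```

With 100 samples and 7 folds the test folds cannot all be the same size: the first two hold 15 samples and the remaining five hold 14.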

StratifiedKFold

StratifiedKFold is specially designed for classification problems.

In some classification problems, the target distribution should remain unchanged even when the data is divided into multiple sets. For example, in most cases a binary target with a class ratio of 30 to 70 should keep the same ratio in the training set and the test set. Ordinary KFold breaks this rule: it ignores the class labels, so once the data is shuffled before splitting, the class proportions are not maintained.

To solve this problem, another splitter class specifically for classification is used in Sklearn - StratifiedKFold:

    from sklearn.datasets import make_classification
    from sklearn.model_selection import StratifiedKFold

    x, y = make_classification(n_samples=100, n_classes=2)

    cross_validation = StratifiedKFold(n_splits=7, shuffle=True, random_state=1121218)

Although it looks similar to KFold, the class proportions now remain consistent across all splits and iterations.
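A small check of that claim, assuming a hand-built target with an exact 70:30 class ratio (a hypothetical stand-in for real labels, used here so the counts are easy to verify):

```python
import numpy as np
from sklearn.model_selection import StratifiedKFold

# Hypothetical binary target with a 70:30 class ratio.
y = np.array([0] * 70 + [1] * 30)
X = np.zeros((len(y), 1))  # features are irrelevant for the split itself

cross_validation = StratifiedKFold(n_splits=7, shuffle=True, random_state=0)

positives_per_fold = []
for fold, (train_idx, test_idx) in enumerate(cross_validation.split(X, y)):
    positives_per_fold.append(int(y[test_idx].sum()))
    print(
        f"fold {fold}: test size={len(test_idx)}, "
        f"positive share in train={y[train_idx].mean():.2f}, "
        f"in test={y[test_idx].mean():.2f}"
    )
```

Because 30 positives cannot be divided evenly by 7, each test fold receives 4 or 5 of them, which keeps the share close to 30% in every split.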

ShuffleSplit

Repeating a random train/test split several times is very similar to cross-validation.

Logically speaking, generating multiple training/test sets with different random seeds should, given enough iterations, approximate a robust cross-validation process.

Scikit-learn also provides a corresponding interface:

    from sklearn.model_selection import ShuffleSplit

    cross_validation = ShuffleSplit(n_splits=7, train_size=0.75, test_size=0.25)

TimeSeriesSplit

When the data set is a time series, traditional cross-validation cannot be used, because it would completely disrupt the temporal order. To solve this problem, Sklearn provides another splitter, TimeSeriesSplit:

    from sklearn.model_selection import TimeSeriesSplit

    cross_validation = TimeSeriesSplit(n_splits=7)

The validation set is always located after the last index of the training set, as a graph of the splits shows. Because the index is a date, this means we cannot accidentally train a time series model on future dates and then make predictions for earlier ones.
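The ordering guarantee can be checked on a toy example. The 8 placeholder samples and `n_splits=3` are assumptions chosen to keep the output short:

```python
import numpy as np
from sklearn.model_selection import TimeSeriesSplit

X = np.arange(8).reshape(-1, 1)  # 8 time-ordered placeholder samples

cross_validation = TimeSeriesSplit(n_splits=3)

splits = [(tr.tolist(), te.tolist()) for tr, te in cross_validation.split(X)]
for train_idx, test_idx in splits:
    # The training window grows; the test window always comes after it.
    print("train:", train_idx, "test:", test_idx)
```

In every split the largest training index is smaller than the smallest test index, so the model never sees data from the period it is evaluated on.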

Cross-validation of data that is not independent and identically distributed (non-IID)

The methods above are designed for independent and identically distributed (IID) data sets, that is, data in which the generation of each sample is not affected by other samples.

However, in some cases the data does not meet the independent and identically distributed (IID) condition, that is, some samples depend on each other. This situation also occurs in Kaggle competitions, such as the Google Brain Ventilator Pressure competition. Its data records the air pressure values of an artificial lung over thousands of breaths (inhalation and exhalation), recorded at every moment of each breath. Each breath accounts for approximately 80 rows of data, and these rows are related to each other. In this case, traditional cross-validation methods cannot be used, because a split may "happen just in the middle of a breathing process".

This can be understood as the need to "group" the data, because the data within a group are related. For example, when medical data is collected from multiple patients, each patient has multiple samples. These samples are likely to be affected by individual patient differences and therefore also need to be grouped.

Often we hope that a model trained on specific groups will generalize well to other, unseen groups, so when performing cross-validation we "tag" the data with these groups and tell the splitter how to distinguish them from each other.

Several interfaces are provided in Sklearn to handle these situations:

- GroupKFold

- StratifiedGroupKFold

- LeaveOneGroupOut

- LeavePGroupsOut

- GroupShuffleSplit
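As a sketch of how the group "tag" is passed to one of these splitters, the example below uses GroupKFold with made-up patient IDs. The six patients with four samples each are hypothetical data, not taken from the article or from the Kaggle competition.

```python
import numpy as np
from sklearn.model_selection import GroupKFold

# Hypothetical data: 6 patients with 4 samples each.
groups = np.repeat(np.arange(6), 4)
X = np.zeros((len(groups), 1))
y = np.zeros(len(groups))

cross_validation = GroupKFold(n_splits=3)

overlaps = []
for fold, (train_idx, test_idx) in enumerate(
    cross_validation.split(X, y, groups=groups)
):
    train_groups = set(groups[train_idx].tolist())
    test_groups = set(groups[test_idx].tolist())
    # A patient must never appear on both sides of a split.
    overlaps.append(train_groups & test_groups)
    print(f"fold {fold}: test patients={sorted(test_groups)}")
```

The `groups` array is what keeps all rows of one patient (or one breath) together, so no split can fall in the middle of a group.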

It is strongly recommended to understand both the idea behind cross-validation and how it is implemented; reading the Sklearn source code is a good way to do this. In addition, you need a clear understanding of your own data set, and data preprocessing is really important.

The above is the detailed content of The importance of cross-validation cannot be ignored!. For more information, please follow other related articles on the PHP Chinese website!

What is Graph of Thought in Prompt EngineeringApr 13, 2025 am 11:53 AM

What is Graph of Thought in Prompt EngineeringApr 13, 2025 am 11:53 AMIntroduction In prompt engineering, “Graph of Thought” refers to a novel approach that uses graph theory to structure and guide AI’s reasoning process. Unlike traditional methods, which often involve linear s

Optimize Your Organisation's Email Marketing with GenAI AgentsApr 13, 2025 am 11:44 AM

Optimize Your Organisation's Email Marketing with GenAI AgentsApr 13, 2025 am 11:44 AMIntroduction Congratulations! You run a successful business. Through your web pages, social media campaigns, webinars, conferences, free resources, and other sources, you collect 5000 email IDs daily. The next obvious step is

Real-Time App Performance Monitoring with Apache PinotApr 13, 2025 am 11:40 AM

Real-Time App Performance Monitoring with Apache PinotApr 13, 2025 am 11:40 AMIntroduction In today’s fast-paced software development environment, ensuring optimal application performance is crucial. Monitoring real-time metrics such as response times, error rates, and resource utilization can help main

ChatGPT Hits 1 Billion Users? 'Doubled In Just Weeks' Says OpenAI CEOApr 13, 2025 am 11:23 AM

ChatGPT Hits 1 Billion Users? 'Doubled In Just Weeks' Says OpenAI CEOApr 13, 2025 am 11:23 AM“How many users do you have?” he prodded. “I think the last time we said was 500 million weekly actives, and it is growing very rapidly,” replied Altman. “You told me that it like doubled in just a few weeks,” Anderson continued. “I said that priv

Pixtral-12B: Mistral AI's First Multimodal Model - Analytics VidhyaApr 13, 2025 am 11:20 AM

Pixtral-12B: Mistral AI's First Multimodal Model - Analytics VidhyaApr 13, 2025 am 11:20 AMIntroduction Mistral has released its very first multimodal model, namely the Pixtral-12B-2409. This model is built upon Mistral’s 12 Billion parameter, Nemo 12B. What sets this model apart? It can now take both images and tex

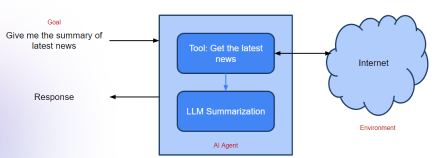

Agentic Frameworks for Generative AI Applications - Analytics VidhyaApr 13, 2025 am 11:13 AM

Agentic Frameworks for Generative AI Applications - Analytics VidhyaApr 13, 2025 am 11:13 AMImagine having an AI-powered assistant that not only responds to your queries but also autonomously gathers information, executes tasks, and even handles multiple types of data—text, images, and code. Sounds futuristic? In this a

Applications of Generative AI in the Financial SectorApr 13, 2025 am 11:12 AM

Applications of Generative AI in the Financial SectorApr 13, 2025 am 11:12 AMIntroduction The finance industry is the cornerstone of any country’s development, as it drives economic growth by facilitating efficient transactions and credit availability. The ease with which transactions occur and credit

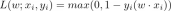

Guide to Online Learning and Passive-Aggressive AlgorithmsApr 13, 2025 am 11:09 AM

Guide to Online Learning and Passive-Aggressive AlgorithmsApr 13, 2025 am 11:09 AMIntroduction Data is being generated at an unprecedented rate from sources such as social media, financial transactions, and e-commerce platforms. Handling this continuous stream of information is a challenge, but it offers an

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

DVWA

Damn Vulnerable Web App (DVWA) is a PHP/MySQL web application that is very vulnerable. Its main goals are to be an aid for security professionals to test their skills and tools in a legal environment, to help web developers better understand the process of securing web applications, and to help teachers/students teach/learn in a classroom environment Web application security. The goal of DVWA is to practice some of the most common web vulnerabilities through a simple and straightforward interface, with varying degrees of difficulty. Please note that this software

VSCode Windows 64-bit Download

A free and powerful IDE editor launched by Microsoft

MinGW - Minimalist GNU for Windows

This project is in the process of being migrated to osdn.net/projects/mingw, you can continue to follow us there. MinGW: A native Windows port of the GNU Compiler Collection (GCC), freely distributable import libraries and header files for building native Windows applications; includes extensions to the MSVC runtime to support C99 functionality. All MinGW software can run on 64-bit Windows platforms.

ZendStudio 13.5.1 Mac

Powerful PHP integrated development environment

WebStorm Mac version

Useful JavaScript development tools