
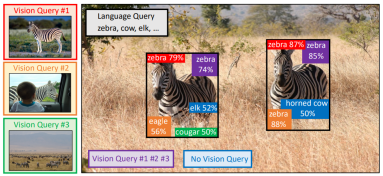

Showing large models pictures works better than typing! New NeurIPS 2023 research proposes a multi-modal query method that improves accuracy by 7.8%

Large models are so good at "reading pictures", so why do they still find the wrong things?

For example, they confuse a bat (the animal) with a bat (the baseball bat), even though the two look nothing alike, or they fail to recognize rare fish in some datasets...

This is because when we ask a large model to "look for something", we usually give it only a text prompt.

If the description is ambiguous or too obscure, such as "bat" (the animal or the baseball bat?) or "Devils Hole pupfish" (Cyprinodon diabolis), the AI gets badly confused.

As a result, when large models are used for object detection, especially open-world detection (detecting objects in unseen scenes), the results often fall short of expectations.

Now, a paper accepted at NeurIPS 2023 offers a solution to this problem.

The paper proposes MQ-Det, an object detection method based on multi-modal queries: simply adding image examples to the query greatly improves how accurately large models find things.

On the LVIS detection benchmark, without any fine-tuning on downstream tasks, MQ-Det improves the accuracy of GLIP, a mainstream large detection model, by about 7.8% on average; on 13 few-shot downstream benchmark tasks, it improves average accuracy by 6.3%.

How does it work? Let's take a look. The following is reproduced from the paper's author and Zhihu blogger @沁园夏:

Contents

- MQ-Det: a large open-world object detection model with multi-modal queries

- 1.1 From text query to multi-modal query

- 1.2 MQ-Det plug-and-play multi-modal query model architecture

- 1.3 MQ-Det efficient training strategy

- 1.4 Experimental results: Finetuning-free evaluation

- 1.5 Experimental results: Few-shot evaluation

- 1.6 Prospects for multi-modal query object detection

Paper title: Multi-modal Queried Object Detection in the Wild

Paper link: https://www.php.cn/link/9c6947bd95ae487c81d4e19d3ed8cd6f

Code: https://www.php.cn/link/2307ac1cfee5db3a5402aac9db25cc5d

1.1 From text query to multi-modal query

A picture is worth a thousand words: with the rise of image-text pre-training, and thanks to the open semantics of text, object detection has gradually entered the stage of open-world perception. Accordingly, many large detection models follow a text-query paradigm, using category text descriptions to query potential objects in the target image. However, this approach often turns out to be "broad but not precise".

For example: (1) in the fine-grained detection of fish species in Figure 1, it is hard to describe the many fine-grained species with limited text; (2) category names can be ambiguous ("bat" can mean either the animal or the baseball bat).
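To make the text-query paradigm concrete, here is a minimal sketch, not GLIP's or MQ-Det's actual code. It assumes the detector has already produced one feature vector per candidate region and one embedding per category text, and labels each region with the category whose text embedding it matches best, loosely in the spirit of GLIP's region-text alignment scores. The cosine scoring, the threshold, and all function names are illustrative assumptions.

```python
import math

def dot(a, b):
    """Inner product of two equal-length feature vectors."""
    return sum(x * y for x, y in zip(a, b))

def normalize(v):
    """Scale a vector to unit L2 norm so that dot products become cosine similarities."""
    n = math.sqrt(dot(v, v))
    return list(v) if n == 0 else [x / n for x in v]

def text_query_detect(region_feats, text_queries, threshold=0.5):
    """Label each candidate region with the best-matching category text.

    region_feats: one feature vector per candidate box.
    text_queries: dict mapping category name -> text embedding.
    Returns one label per region, or None when no score reaches the threshold.
    """
    labels = []
    for region in region_feats:
        r = normalize(region)
        scores = {name: dot(r, normalize(t)) for name, t in text_queries.items()}
        best = max(scores, key=scores.get)
        labels.append(best if scores[best] >= threshold else None)
    return labels

# Toy 3-d embeddings, made up for illustration only.
queries = {"cat": [1.0, 0.0, 0.0], "dog": [0.0, 1.0, 0.0]}
regions = [[0.9, 0.1, 0.0], [0.1, 0.8, 0.1], [0.0, 0.0, 1.0]]
print(text_query_detect(regions, queries))  # ['cat', 'dog', None]
```

The third region matches neither category text and is dropped. When a category is hard to put into words, such as a rare fish species, its single text embedding is a weak target for this matching, which is the gap that image examples are meant to fill.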

These problems, however, can be addressed with image examples. Compared with text, images provide richer feature cues about the target object, while text, for its part, generalizes far better.

Organically combining the two query modes is therefore a natural idea.

Difficulties in obtaining multi-modal query capabilities: building such a model with multi-modal queries faces three challenges: (1) fine-tuning directly on a limited number of image examples is very difficult and easily causes catastrophic forgetting; (2) training a large detection model from scratch generalizes well but is extremely expensive; for example, training GLIP on a single GPU would take 480 days with 30 million training samples.

Multi-modal query object detection: based on these considerations, the authors propose MQ-Det, a simple and effective model design and training strategy.

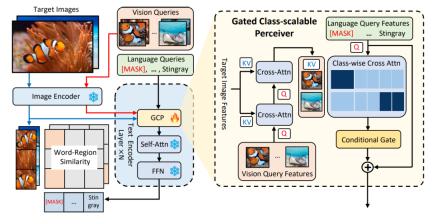

MQ-Det inserts a small number of gated perception modules (GCP) into an existing, frozen large text-query detection model so that it can take input from visual examples. It also introduces a vision-conditioned masked language prediction training strategy. Together, these efficiently yield a high-performance detector with multi-modal queries.

1.2 MQ-Det plug-and-play multi-modal query model architecture

Gated perception module (GCP)

As shown in Figure 1, the authors insert GCP modules layer by layer into the text-encoder side of an existing frozen text-query detection model. GCP works as follows. Each visual example $v_i$ first interacts with the target image $I$ through cross-attention (X-MHA), giving $\hat{v}_i$ with enhanced representation ability. Each category text $t_i$ then cross-attends to the visual examples of its own category, giving $\hat{t}_i$. Finally, the original text $t_i$ and the visually augmented text $\hat{t}_i$ are fused through a gating module $\mathrm{gate}$ to obtain the output $\bar{t}_i$ of the current layer:

$$
\hat{v}_i = \text{X-MHA}(v_i, I), \qquad
\hat{t}_i = \text{X-MHA}(t_i, \hat{v}_i), \qquad
\bar{t}_i = \mathrm{gate}(t_i, \hat{t}_i)
$$

This simple design follows three principles: (1) category scalability; (2) semantic completion; (3) anti-forgetting. See the original paper for a detailed discussion.
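The three GCP steps can be pictured with a toy, pure-Python sketch. It is not MQ-Det's implementation and rests on our own assumptions: single-head attention without learned query/key/value projections; visual examples, image features, and text features as plain vectors of the same size; and a gating module of the form $\bar{t}_i = t_i + \tanh(g)\,\hat{t}_i$ with a learnable scalar $g$ (a Flamingo-style zero-initialized gate), which may differ from the paper's actual gate. `gcp_layer` and its arguments are hypothetical names.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of scores."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    s = sum(exps)
    return [e / s for e in exps]

def cross_attention(query, keys):
    """Single-head scaled dot-product attention of one query over a set of keys.

    Keys double as values; learned Q/K/V projections are omitted (assumption).
    """
    d = len(query)
    scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(d) for key in keys]
    weights = softmax(scores)
    return [sum(w * key[j] for w, key in zip(weights, keys)) for j in range(d)]

def gcp_layer(text_feats, visual_examples, image_feats, gate_param=0.0):
    """One hypothetical GCP-style layer.

    text_feats: dict category -> text feature t_i
    visual_examples: dict category -> list of example features v_i
    image_feats: list of target-image features I
    gate_param: learnable scalar g; tanh(0) = 0 leaves the text features unchanged.
    """
    g = math.tanh(gate_param)
    out = {}
    for cat, t in text_feats.items():
        # v_hat = X-MHA(v, I): condition each visual example on the target image.
        v_hat = [cross_attention(v, image_feats) for v in visual_examples.get(cat, [])]
        if not v_hat:
            # Text-only query for this category: nothing to fuse.
            out[cat] = list(t)
            continue
        # t_hat = X-MHA(t, v_hat): the text attends only to its own category's examples.
        t_hat = cross_attention(t, v_hat)
        # Assumed gated residual fusion: t_bar = t + tanh(g) * t_hat.
        out[cat] = [a + g * b for a, b in zip(t, t_hat)]
    return out

text = {"bat (animal)": [1.0, 0.0], "bat (baseball)": [0.0, 1.0]}
examples = {"bat (animal)": [[0.8, 0.2]]}   # one toy visual example
image = [[1.0, 0.0], [0.0, 1.0]]           # two toy image features
print(gcp_layer(text, examples, image, gate_param=0.0) == text)  # True
print(gcp_layer(text, examples, image, gate_param=1.0))
```

With `gate_param=0.0` the layer returns the frozen text features unchanged, which is one way to read the anti-forgetting principle. Because each category's text attends only to that category's own examples, adding a category leaves the others untouched, which mirrors category scalability.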

1.3 MQ-Det efficient training strategy

Modulated training based on a frozen language-query detector

Because current large pre-trained text-query detection models already generalize well, the authors argue that the original text features only need to be slightly adjusted with visual details. Experiments in the paper also show that unfreezing the original pre-trained parameters for fine-tuning easily leads to catastrophic forgetting, so the model loses its open-world detection ability instead. MQ-Det therefore keeps the pre-trained text-query detector frozen and trains only the inserted GCP modules, which efficiently injects visual information into an existing text-query detector. In the paper, the authors apply MQ-Det's architecture and training technique to two current SOTA models, GLIP and GroundingDINO, to verify that the method generalizes.

Vision-conditioned masked language prediction training strategy

The authors also propose a vision-conditioned masked language prediction training strategy to address the learning inertia caused by the frozen pre-trained model. Learning inertia means that, during training, the detector tends to keep relying on the original text-query features and ignores the newly added visual-query features. To counter this, MQ-Det randomly replaces text tokens with [MASK] tokens during training, forcing the model to learn from the visual-query features, that is:

$$
t_i \leftarrow
\begin{cases}
[\mathrm{MASK}], & \text{with probability } p \\
t_i, & \text{otherwise}
\end{cases}
$$
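The two training ideas in this section, a frozen detector with only the inserted modules trained and random [MASK] replacement of text tokens, can be sketched in a few lines. This is an illustration under stated assumptions, not the authors' training code: the `gcp.` parameter-name prefix, the helper names, and the 0.4 masking rate are all hypothetical. In a PyTorch implementation, freezing would typically amount to setting `requires_grad=False` on every parameter outside the inserted modules.

```python
import random

MASK = "[MASK]"

def trainable_params(param_names, inserted_prefix="gcp."):
    """Keep only parameters of the inserted modules; everything else stays frozen.

    The "gcp." prefix is a hypothetical naming convention for the inserted layers.
    """
    return [name for name in param_names if name.startswith(inserted_prefix)]

def mask_text_tokens(tokens, mask_prob, rng):
    """Replace each text token with [MASK] independently with probability mask_prob.

    A category whose text is masked can only be matched through its visual-query
    features, which counters the tendency to rely on the original text features.
    """
    return [MASK if rng.random() < mask_prob else tok for tok in tokens]

params = [
    "backbone.layer1.weight",           # frozen
    "text_encoder.layer3.attn.weight",  # frozen
    "gcp.layer3.xattn.weight",          # trained
    "gcp.layer3.gate",                  # trained
]
print(trainable_params(params))  # ['gcp.layer3.xattn.weight', 'gcp.layer3.gate']

rng = random.Random(0)
# 0.4 is an arbitrary illustrative masking rate, not the paper's setting.
print(mask_text_tokens(["cat", "dog", "bat"], mask_prob=0.4, rng=rng))
```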

1.4 Experimental results: Finetuning-free evaluation

Finetuning-free: whereas traditional zero-shot evaluation tests with category text only, MQ-Det proposes a more realistic evaluation protocol, finetuning-free, defined as follows: without any downstream fine-tuning, users can detect objects using category text, image examples, or a combination of both.
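From the user's side, the finetuning-free protocol means each category can be queried with text, image examples, or both. The sketch below shows one way such queries might be assembled; `CategoryQuery` and `build_queries` are hypothetical, not MQ-Det's API, the example "images" are just file names, and the default `k=5` mirrors the 5 visual examples per category used in the LVIS experiments.

```python
import random
from dataclasses import dataclass, field
from typing import List, Optional

@dataclass
class CategoryQuery:
    """A query for one category: text, image examples, or both (hypothetical structure)."""
    name: str
    text: Optional[str] = None
    examples: List[str] = field(default_factory=list)  # e.g. paths to example crops

    def __post_init__(self):
        # A query needs at least one modality.
        if self.text is None and not self.examples:
            raise ValueError(f"category {self.name!r} needs text, image examples, or both")

    @property
    def modality(self) -> str:
        if self.text is not None and self.examples:
            return "text+image"
        return "text" if self.text is not None else "image"

def build_queries(categories, example_pool, k=5, use_text=True, seed=0):
    """Build one query per category, sampling up to k image examples from a pool."""
    rng = random.Random(seed)
    queries = []
    for name in categories:
        pool = example_pool.get(name, [])
        picked = rng.sample(pool, min(k, len(pool)))
        queries.append(CategoryQuery(name, name if use_text else None, picked))
    return queries

pool = {"bat": [f"bat_{i}.jpg" for i in range(10)], "pupfish": ["p0.jpg", "p1.jpg"]}
for q in build_queries(["bat", "pupfish", "zebra"], pool, k=5):
    print(q.name, q.modality, len(q.examples))
# bat text+image 5
# pupfish text+image 2
# zebra text 0
```

A category with no examples silently falls back to a text-only query, which is exactly what a text-only detector would receive, so the same interface covers all three query modes.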

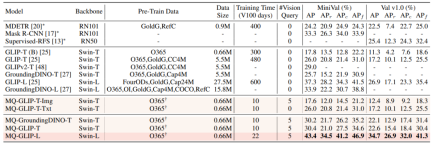

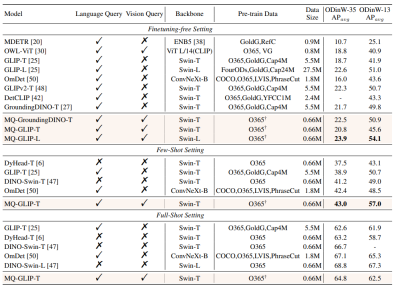

Under the finetuning-free setting, MQ-Det uses 5 visual examples per category together with the category text for detection, whereas other existing models do not support visual queries and can only detect objects from plain-text descriptions. The following table shows detection results on LVIS MiniVal and LVIS v1.0: introducing multi-modal queries greatly improves open-world object detection.

△Table 1 Finetuning-free performance of each detection model on the LVIS benchmark

As Table 1 shows, MQ-GLIP-L raises AP by more than 7% over GLIP-L, a very significant gain!

1.5 Experimental results: Few-shot evaluation

△Table 2 Performance of each model on the 35 detection tasks of ODinW-35 and on its 13-task subset ODinW-13

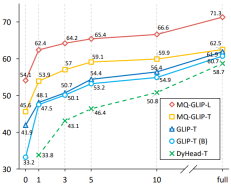

The authors further ran comprehensive experiments on ODinW-35, a suite of 35 downstream detection tasks. As Table 2 shows, besides its strong finetuning-free performance, MQ-Det also has good few-shot detection ability, which further confirms the potential of multi-modal queries. Figure 2 also shows MQ-Det's significant improvement over GLIP.

△Figure 2 Comparison of data efficiency; horizontal axis: number of training samples; vertical axis: average AP on ODinW-13

1.6 Prospects for multi-modal query object detection

As an application-driven research field, object detection places great emphasis on putting algorithms into practice.

Although earlier text-only query detection models have shown good generalization, text can hardly capture fine-grained information in real open-world detection, and the rich, fine-grained information in images fills exactly this gap.

In short, text is general but imprecise, while images are precise but not general. Effectively combining the two, that is, multi-modal queries, will push open-world object detection further forward.

MQ-Det takes a first step toward multi-modal queries, and its significant performance gains demonstrate the great potential of multi-modal query object detection.

Supporting both text descriptions and visual examples also gives users more choices, making object detection more flexible and user-friendly.

The above is the detailed content of Let's look at pictures of large models more effectively than typing! New research in NeurIPS 2023 proposes a multi-modal query method, increasing the accuracy by 7.8%. For more information, please follow other related articles on the PHP Chinese website!

从VAE到扩散模型:一文解读以文生图新范式Apr 08, 2023 pm 08:41 PM

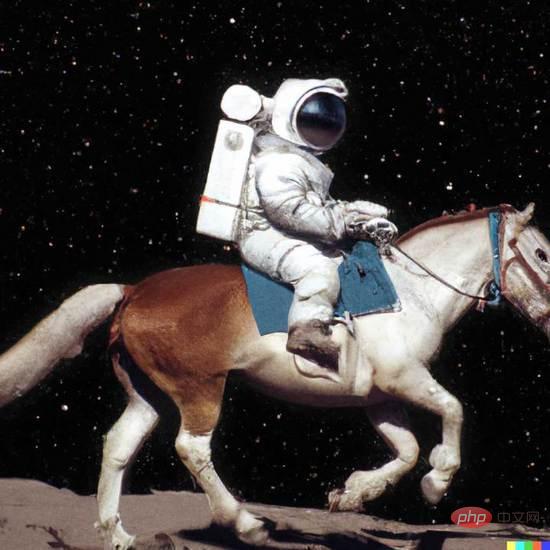

从VAE到扩散模型:一文解读以文生图新范式Apr 08, 2023 pm 08:41 PM1 前言在发布DALL·E的15个月后,OpenAI在今年春天带了续作DALL·E 2,以其更加惊艳的效果和丰富的可玩性迅速占领了各大AI社区的头条。近年来,随着生成对抗网络(GAN)、变分自编码器(VAE)、扩散模型(Diffusion models)的出现,深度学习已向世人展现其强大的图像生成能力;加上GPT-3、BERT等NLP模型的成功,人类正逐步打破文本和图像的信息界限。在DALL·E 2中,只需输入简单的文本(prompt),它就可以生成多张1024*1024的高清图像。这些图像甚至

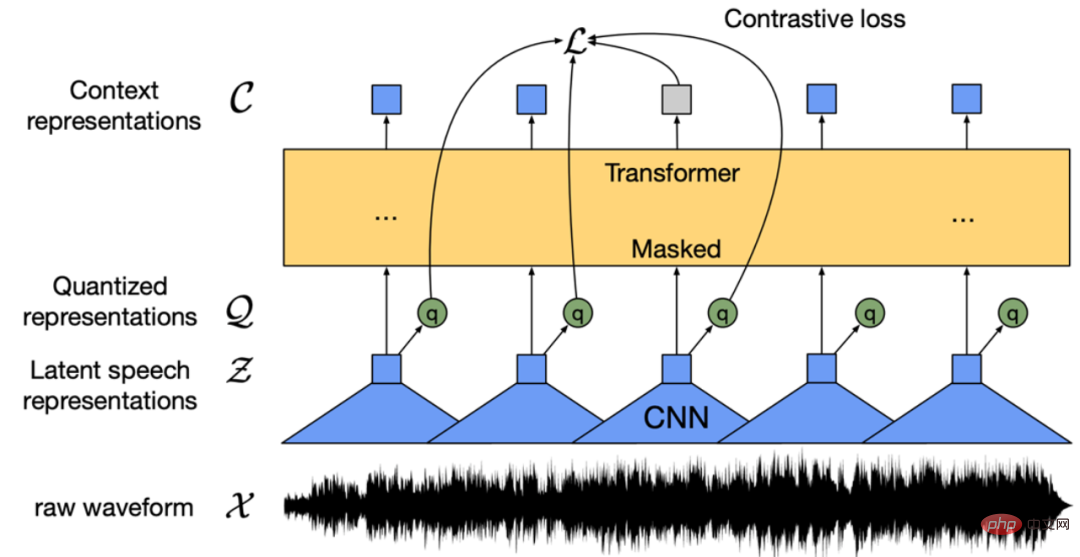

找不到中文语音预训练模型?中文版 Wav2vec 2.0和HuBERT来了Apr 08, 2023 pm 06:21 PM

找不到中文语音预训练模型?中文版 Wav2vec 2.0和HuBERT来了Apr 08, 2023 pm 06:21 PMWav2vec 2.0 [1],HuBERT [2] 和 WavLM [3] 等语音预训练模型,通过在多达上万小时的无标注语音数据(如 Libri-light )上的自监督学习,显著提升了自动语音识别(Automatic Speech Recognition, ASR),语音合成(Text-to-speech, TTS)和语音转换(Voice Conversation,VC)等语音下游任务的性能。然而这些模型都没有公开的中文版本,不便于应用在中文语音研究场景。 WenetSpeech [4] 是

普林斯顿陈丹琦:如何让「大模型」变小Apr 08, 2023 pm 04:01 PM

普林斯顿陈丹琦:如何让「大模型」变小Apr 08, 2023 pm 04:01 PM“Making large models smaller”这是很多语言模型研究人员的学术追求,针对大模型昂贵的环境和训练成本,陈丹琦在智源大会青源学术年会上做了题为“Making large models smaller”的特邀报告。报告中重点提及了基于记忆增强的TRIME算法和基于粗细粒度联合剪枝和逐层蒸馏的CofiPruning算法。前者能够在不改变模型结构的基础上兼顾语言模型困惑度和检索速度方面的优势;而后者可以在保证下游任务准确度的同时实现更快的处理速度,具有更小的模型结构。陈丹琦 普

解锁CNN和Transformer正确结合方法,字节跳动提出有效的下一代视觉TransformerApr 09, 2023 pm 02:01 PM

解锁CNN和Transformer正确结合方法,字节跳动提出有效的下一代视觉TransformerApr 09, 2023 pm 02:01 PM由于复杂的注意力机制和模型设计,大多数现有的视觉 Transformer(ViT)在现实的工业部署场景中不能像卷积神经网络(CNN)那样高效地执行。这就带来了一个问题:视觉神经网络能否像 CNN 一样快速推断并像 ViT 一样强大?近期一些工作试图设计 CNN-Transformer 混合架构来解决这个问题,但这些工作的整体性能远不能令人满意。基于此,来自字节跳动的研究者提出了一种能在现实工业场景中有效部署的下一代视觉 Transformer——Next-ViT。从延迟 / 准确性权衡的角度看,

Stable Diffusion XL 现已推出—有什么新功能,你知道吗?Apr 07, 2023 pm 11:21 PM

Stable Diffusion XL 现已推出—有什么新功能,你知道吗?Apr 07, 2023 pm 11:21 PM3月27号,Stability AI的创始人兼首席执行官Emad Mostaque在一条推文中宣布,Stable Diffusion XL 现已可用于公开测试。以下是一些事项:“XL”不是这个新的AI模型的官方名称。一旦发布稳定性AI公司的官方公告,名称将会更改。与先前版本相比,图像质量有所提高与先前版本相比,图像生成速度大大加快。示例图像让我们看看新旧AI模型在结果上的差异。Prompt: Luxury sports car with aerodynamic curves, shot in a

五年后AI所需算力超100万倍!十二家机构联合发表88页长文:「智能计算」是解药Apr 09, 2023 pm 07:01 PM

五年后AI所需算力超100万倍!十二家机构联合发表88页长文:「智能计算」是解药Apr 09, 2023 pm 07:01 PM人工智能就是一个「拼财力」的行业,如果没有高性能计算设备,别说开发基础模型,就连微调模型都做不到。但如果只靠拼硬件,单靠当前计算性能的发展速度,迟早有一天无法满足日益膨胀的需求,所以还需要配套的软件来协调统筹计算能力,这时候就需要用到「智能计算」技术。最近,来自之江实验室、中国工程院、国防科技大学、浙江大学等多达十二个国内外研究机构共同发表了一篇论文,首次对智能计算领域进行了全面的调研,涵盖了理论基础、智能与计算的技术融合、重要应用、挑战和未来前景。论文链接:https://spj.scien

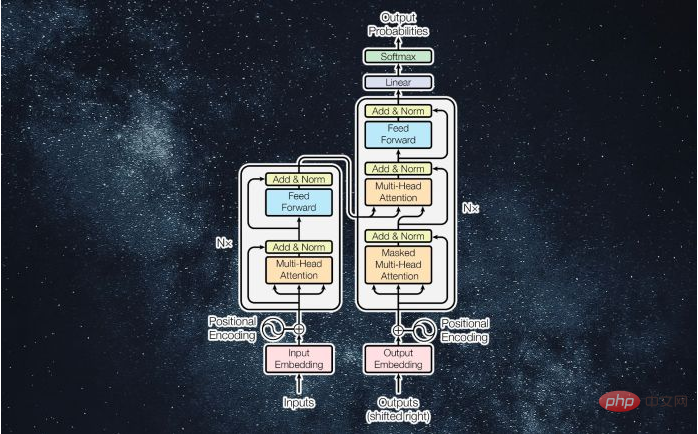

什么是Transformer机器学习模型?Apr 08, 2023 pm 06:31 PM

什么是Transformer机器学习模型?Apr 08, 2023 pm 06:31 PM译者 | 李睿审校 | 孙淑娟近年来, Transformer 机器学习模型已经成为深度学习和深度神经网络技术进步的主要亮点之一。它主要用于自然语言处理中的高级应用。谷歌正在使用它来增强其搜索引擎结果。OpenAI 使用 Transformer 创建了著名的 GPT-2和 GPT-3模型。自从2017年首次亮相以来,Transformer 架构不断发展并扩展到多种不同的变体,从语言任务扩展到其他领域。它们已被用于时间序列预测。它们是 DeepMind 的蛋白质结构预测模型 AlphaFold

AI模型告诉你,为啥巴西最可能在今年夺冠!曾精准预测前两届冠军Apr 09, 2023 pm 01:51 PM

AI模型告诉你,为啥巴西最可能在今年夺冠!曾精准预测前两届冠军Apr 09, 2023 pm 01:51 PM说起2010年南非世界杯的最大网红,一定非「章鱼保罗」莫属!这只位于德国海洋生物中心的神奇章鱼,不仅成功预测了德国队全部七场比赛的结果,还顺利地选出了最终的总冠军西班牙队。不幸的是,保罗已经永远地离开了我们,但它的「遗产」却在人们预测足球比赛结果的尝试中持续存在。在艾伦图灵研究所(The Alan Turing Institute),随着2022年卡塔尔世界杯的持续进行,三位研究员Nick Barlow、Jack Roberts和Ryan Chan决定用一种AI算法预测今年的冠军归属。预测模型图

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

SAP NetWeaver Server Adapter for Eclipse

Integrate Eclipse with SAP NetWeaver application server.

EditPlus Chinese cracked version

Small size, syntax highlighting, does not support code prompt function

MantisBT

Mantis is an easy-to-deploy web-based defect tracking tool designed to aid in product defect tracking. It requires PHP, MySQL and a web server. Check out our demo and hosting services.

SublimeText3 Linux new version

SublimeText3 Linux latest version

PhpStorm Mac version

The latest (2018.2.1) professional PHP integrated development tool