Wall-E the robot is here! Disney unveils a new robot that uses RL to learn to walk and can also interact socially

Ta-da! The "WALL-E robot" has arrived!

It has a flat head and a boxy body. If you point it at the ground, it will tilt its head to express confusion.

However, it is not WALL-E; the real WALL-E looks like this!

This cute little robot was developed by the Disney Research team and was on display at the 2023 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS) in Detroit.

As soon as it appeared, the little robot drew curious looks from everyone around it.

With its extremely expressive head, two swaying antennae, and short legs, the child-sized body conveys a lot of emotional body language.

Everyone who sees it is filled with affection.

What sets this robot apart from other small bipedal robots is its unique way of walking: it makes sounds as it moves, which makes it feel like a unique little living creature.

Sure enough, after the video from IROS was uploaded to the Internet, netizens found it adorable.

"It's so cute, love, love, love."

"It is very cute."

Many netizens also want to take it home right away.

"I need one!"

"I can't wait to see it walking around my room!"

Next, let's see how this little robot was created.

The Journey to the Birth of Disney’s Little Robot

Programming robots to express emotional movements is Disney’s specialty.

In 1971, the Hall of Presidents at Disney World began using animated robotics technology.

But as robots have become more advanced and more mobile, it has become increasingly difficult for robot designers and robot animators to develop expressive behaviors that both exploit and remain compatible with real-world constraints.

Disney Research has spent the past year developing a new system that uses reinforcement learning to turn animators' imaginations into expressive movements.

These movements are robust enough to work in almost any venue, whether at IROS, at a Disney theme park, or in the woods of Switzerland.

The purpose of this new system is to let robots show more emotion and expression, making them more engaging and useful in different settings.

The robot was developed by a Disney Research team in Zurich led by Moritz Bächer. It is built mainly from 3D-printed parts, using modular hardware and actuators.

This design allowed it to be developed and refined very quickly: going from the initial concept to the robot seen in the video took less than a year.

The robot has a four-degree-of-freedom head that can look up, down, left, and right, and tilt.

In addition, it has five-degree-of-freedom legs with hip joints, allowing it to walk while maintaining dynamic balance.

This enables the robot to perform more complex actions and interactions with higher flexibility.

At Disney, though, walking alone is not enough: the robots need to be able to walk, jump, trot, or roam gracefully to convey the emotions required of them.

Disney has professional animators who are good at expressing emotions through movement, as well as roboticists who are good at building mechanical systems.

“What we’re trying to bring to these robots actually stems from our history of character animation,” explains Michael Hopkins, Disney’s chief development engineer.

"We have an animator on our team and together we are able to leverage their knowledge and our technical expertise to create the best possible performance."

Creating an effective robot character requires combining the talents of an animator and a roboticist.

This is a fairly time-consuming process that involves a lot of trial and error to ensure the robot communicates the animator's intent without falling over.

"It's not just about walking. Walking is just one of the inputs to a reinforcement learning system, but another important input is how it walks," Pope added.

To bridge this gap, Disney Research developed a system based on reinforcement learning.

The system uses simulation to reconcile the animator's vision with robust robot motion and strike a balance between the two. Because it accounts for the constraints of the physical world, animators can develop highly expressive movements.

These artists want their imagined movements brought to life as close to the robot's physical limits as possible.
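To make the idea of balancing expressiveness against robustness concrete, here is a minimal Python sketch of a reward function that a reinforcement learning system of this kind might use. It is not Disney's actual reward: the function names, the weights, the scale factors, and the use of a single torso tilt angle as the stability signal are all assumptions made for illustration.

```python
import math


def imitation_reward(joint_angles, reference_angles, scale=5.0):
    """Return a value in (0, 1], highest when the pose matches the animator's reference.

    `scale` is a hypothetical sharpness parameter, not taken from the article.
    """
    squared_error = sum((q - r) ** 2 for q, r in zip(joint_angles, reference_angles))
    return math.exp(-scale * squared_error)


def upright_reward(torso_tilt_rad, scale=10.0):
    """Return a value in (0, 1], highest when the torso is upright (tilt of zero)."""
    return math.exp(-scale * torso_tilt_rad ** 2)


def total_reward(joint_angles, reference_angles, torso_tilt_rad,
                 w_imitation=0.7, w_upright=0.3):
    """Weighted sum that trades off expressiveness against stability.

    The weights are illustrative assumptions; a real system would tune them.
    """
    return (w_imitation * imitation_reward(joint_angles, reference_angles)
            + w_upright * upright_reward(torso_tilt_rad))
```

Raising `w_imitation` in this sketch would push the learned motion closer to the animator's reference at the cost of stability, while raising `w_upright` would do the opposite.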

Disney's pipeline can train a robot on a new behavior on a PC, packing years of training into just a few hours.

In addition, reinforcement learning makes the movements produced by the little robot extremely stable.

The system trains movements iteratively, and can also adjust aspects such as motor performance, mass distribution, and friction between the robot and the ground.

This ensures that whatever situation the robot encounters in the real world, it knows how to handle it while still showing the appropriate emotions. It is very important for a robot to maintain its own personality.
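One common way to prepare a simulated robot for varying motor performance, mass, and friction is to randomize those properties for every training episode, a technique usually called domain randomization. The article does not confirm that Disney's system works exactly this way, so the Python sketch below is an assumption: the `SimParams` fields, the sampling ranges, and the `train` loop are hypothetical, and the simulator and policy update are left as a comment.

```python
import random
from dataclasses import dataclass


@dataclass
class SimParams:
    motor_strength: float   # multiplier on nominal motor torque (hypothetical)
    mass_scale: float       # multiplier on nominal link masses (hypothetical)
    ground_friction: float  # friction coefficient between foot and ground


def sample_sim_params(rng):
    """Draw one random set of physical properties; the ranges are illustrative."""
    return SimParams(
        motor_strength=rng.uniform(0.8, 1.2),
        mass_scale=rng.uniform(0.9, 1.1),
        ground_friction=rng.uniform(0.4, 1.0),
    )


def train(num_episodes, seed=0):
    """Sample fresh physical properties for every episode and record them."""
    rng = random.Random(seed)
    history = []
    for _ in range(num_episodes):
        params = sample_sim_params(rng)
        # A real pipeline would reset the simulator with `params` here,
        # roll out the current policy, and apply a policy update.
        history.append(params)
    return history
```

Because the policy never sees the same physical properties twice, it cannot rely on any single value of friction or motor strength, which is what lets the behavior carry over from simulation to the real robot.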

Next destination

Disney's researchers are proud to say that although the little robot is very cute, what matters more is the system behind it.

This system is what makes the little robot so lively and cute, and it is a promising first step on Disney's future journey.

Disney’s next plan is to use this technology to develop more physical robot characters and push the envelope with faster, more dynamic movements.

While the little robot doesn't have an official name yet, Disney says it's just a prelude to more animated robots to come.

It’s really exciting.

The above is the detailed content of Wall-E the robot is here! Disney unveils new robot, uses RL to learn to walk, and can also interact socially. For more information, please follow other related articles on the PHP Chinese website!

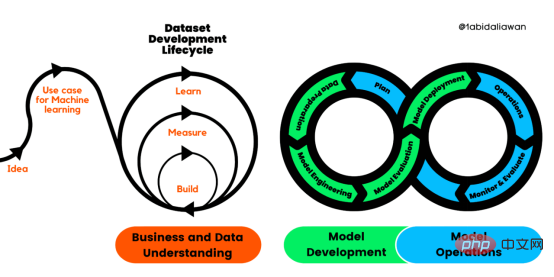

解读CRISP-ML(Q):机器学习生命周期流程Apr 08, 2023 pm 01:21 PM

解读CRISP-ML(Q):机器学习生命周期流程Apr 08, 2023 pm 01:21 PM译者 | 布加迪审校 | 孙淑娟目前,没有用于构建和管理机器学习(ML)应用程序的标准实践。机器学习项目组织得不好,缺乏可重复性,而且从长远来看容易彻底失败。因此,我们需要一套流程来帮助自己在整个机器学习生命周期中保持质量、可持续性、稳健性和成本管理。图1. 机器学习开发生命周期流程使用质量保证方法开发机器学习应用程序的跨行业标准流程(CRISP-ML(Q))是CRISP-DM的升级版,以确保机器学习产品的质量。CRISP-ML(Q)有六个单独的阶段:1. 业务和数据理解2. 数据准备3. 模型

人工智能的环境成本和承诺Apr 08, 2023 pm 04:31 PM

人工智能的环境成本和承诺Apr 08, 2023 pm 04:31 PM人工智能(AI)在流行文化和政治分析中经常以两种极端的形式出现。它要么代表着人类智慧与科技实力相结合的未来主义乌托邦的关键,要么是迈向反乌托邦式机器崛起的第一步。学者、企业家、甚至活动家在应用人工智能应对气候变化时都采用了同样的二元思维。科技行业对人工智能在创建一个新的技术乌托邦中所扮演的角色的单一关注,掩盖了人工智能可能加剧环境退化的方式,通常是直接伤害边缘人群的方式。为了在应对气候变化的过程中充分利用人工智能技术,同时承认其大量消耗能源,引领人工智能潮流的科技公司需要探索人工智能对环境影响的

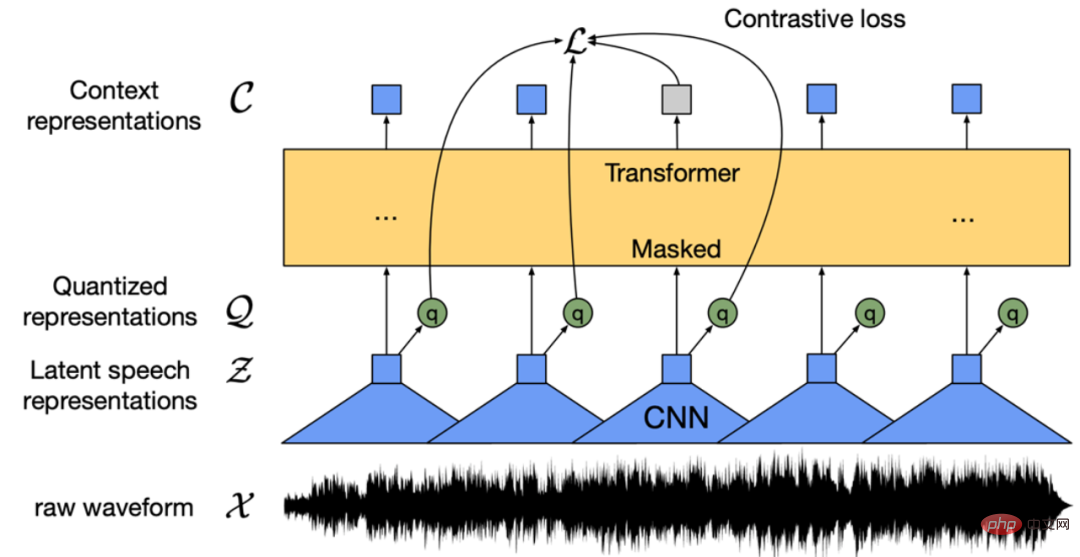

找不到中文语音预训练模型?中文版 Wav2vec 2.0和HuBERT来了Apr 08, 2023 pm 06:21 PM

找不到中文语音预训练模型?中文版 Wav2vec 2.0和HuBERT来了Apr 08, 2023 pm 06:21 PMWav2vec 2.0 [1],HuBERT [2] 和 WavLM [3] 等语音预训练模型,通过在多达上万小时的无标注语音数据(如 Libri-light )上的自监督学习,显著提升了自动语音识别(Automatic Speech Recognition, ASR),语音合成(Text-to-speech, TTS)和语音转换(Voice Conversation,VC)等语音下游任务的性能。然而这些模型都没有公开的中文版本,不便于应用在中文语音研究场景。 WenetSpeech [4] 是

条形统计图用什么呈现数据Jan 20, 2021 pm 03:31 PM

条形统计图用什么呈现数据Jan 20, 2021 pm 03:31 PM条形统计图用“直条”呈现数据。条形统计图是用一个单位长度表示一定的数量,根据数量的多少画成长短不同的直条,然后把这些直条按一定的顺序排列起来;从条形统计图中很容易看出各种数量的多少。条形统计图分为:单式条形统计图和复式条形统计图,前者只表示1个项目的数据,后者可以同时表示多个项目的数据。

自动驾驶车道线检测分类的虚拟-真实域适应方法Apr 08, 2023 pm 02:31 PM

自动驾驶车道线检测分类的虚拟-真实域适应方法Apr 08, 2023 pm 02:31 PMarXiv论文“Sim-to-Real Domain Adaptation for Lane Detection and Classification in Autonomous Driving“,2022年5月,加拿大滑铁卢大学的工作。虽然自主驾驶的监督检测和分类框架需要大型标注数据集,但光照真实模拟环境生成的合成数据推动的无监督域适应(UDA,Unsupervised Domain Adaptation)方法则是低成本、耗时更少的解决方案。本文提出对抗性鉴别和生成(adversarial d

数据通信中的信道传输速率单位是bps,它表示什么Jan 18, 2021 pm 02:58 PM

数据通信中的信道传输速率单位是bps,它表示什么Jan 18, 2021 pm 02:58 PM数据通信中的信道传输速率单位是bps,它表示“位/秒”或“比特/秒”,即数据传输速率在数值上等于每秒钟传输构成数据代码的二进制比特数,也称“比特率”。比特率表示单位时间内传送比特的数目,用于衡量数字信息的传送速度;根据每帧图像存储时所占的比特数和传输比特率,可以计算数字图像信息传输的速度。

数据分析方法有哪几种Dec 15, 2020 am 09:48 AM

数据分析方法有哪几种Dec 15, 2020 am 09:48 AM数据分析方法有4种,分别是:1、趋势分析,趋势分析一般用于核心指标的长期跟踪;2、象限分析,可依据数据的不同,将各个比较主体划分到四个象限中;3、对比分析,分为横向对比和纵向对比;4、交叉分析,主要作用就是从多个维度细分数据。

聊一聊Python 实现数据的序列化操作Apr 12, 2023 am 09:31 AM

聊一聊Python 实现数据的序列化操作Apr 12, 2023 am 09:31 AM在日常开发中,对数据进行序列化和反序列化是常见的数据操作,Python提供了两个模块方便开发者实现数据的序列化操作,即 json 模块和 pickle 模块。这两个模块主要区别如下:json 是一个文本序列化格式,而 pickle 是一个二进制序列化格式;json 是我们可以直观阅读的,而 pickle 不可以;json 是可互操作的,在 Python 系统之外广泛使用,而 pickle 则是 Python 专用的;默认情况下,json 只能表示 Python 内置类型的子集,不能表示自定义的

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SAP NetWeaver Server Adapter for Eclipse

Integrate Eclipse with SAP NetWeaver application server.

EditPlus Chinese cracked version

Small size, syntax highlighting, does not support code prompt function

PhpStorm Mac version

The latest (2018.2.1) professional PHP integrated development tool

SublimeText3 Chinese version

Chinese version, very easy to use