Introducing RWKV: The rise of linear Transformers and exploring alternatives

Here is a summary of some of my thoughts on the RWKV podcast: https://www.php.cn/link/9bde76f262285bb1eaeb7b40c758b53e

Why do alternatives matter so much?

With the artificial intelligence revolution in 2023, the Transformer architecture is currently at its peak. However, in the rush to adopt the successful Transformer architecture, it is easy to overlook the alternatives that can be learned from.

As engineers, we should not take a one-size-fits-all approach and apply the same solution to every problem. We should weigh the pros and cons in every situation. Otherwise we risk being trapped within the limitations of a particular platform, feeling "satisfied" only because we do not know that alternatives exist, and that can set development back years overnight.

This problem is not unique to the field of artificial intelligence, but a historical pattern that has been repeated from ancient times to the present.

Consider a page from the history of the SQL wars. In this story, various database management systems, such as Oracle, MySQL, and SQL Server, competed fiercely for market share and technical advantage. The competition played out not only in performance and functionality, but also in business strategy, marketing, and user satisfaction. These systems kept introducing new features and improvements to attract more users and businesses. This chapter of history witnessed the development and transformation of the database management system industry, and it offers us valuable experience and lessons.

A notable recent example in software development is the NoSQL trend that emerged when SQL servers began to hit physical limits. Startups around the world turned to NoSQL for "scale" reasons, even though they were nowhere near those scales. Over time, however, with the advent of eventual consistency and the management overhead of NoSQL, and with the huge leap in hardware capabilities in terms of SSD speed and capacity, SQL servers have recently made a comeback thanks to their simplicity of use, and they now offer sufficient scalability for over 90% of startups.

SQL and NoSQL are two different database technologies. SQL stands for Structured Query Language and is mainly used to process structured data. NoSQL refers to non-relational databases, which are suited to unstructured or semi-structured data. Some people argue that SQL is better than NoSQL, or vice versa, but in reality each technology has its own pros, cons, and use cases. SQL may be better suited to complex relational data, while NoSQL may be better suited to large-scale unstructured data.

This does not mean that only one technology can be chosen. In practice, many applications and systems use hybrid solutions that combine SQL and NoSQL, selecting the most appropriate technology for the specific needs and data types involved. It is therefore important to understand the characteristics and applicable scenarios of each technology and to make an informed choice for the situation at hand. Both SQL and NoSQL have their own lessons and preferred use cases, and similar technologies can learn from and cross-pollinate one another.

What is the biggest pain point of the current Transformer architecture?

Typically, the pain points include compute, context size, dataset, and alignment. In this discussion we will focus on compute and context length:

- The quadratic compute cost caused by the O(N^2) increase as more tokens are used or generated. This makes context sizes larger than 100,000 very expensive, affecting both inference and training.

- Context size limits the attention mechanism, which severely restricts "intelligent agent" use cases (such as smol-dev) and forces workarounds. Larger contexts require fewer workarounds.
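To make the quadratic cost concrete, here is a minimal Python sketch that only counts operations. It assumes causal self-attention in which each token attends to itself and every earlier token, and compares that with one state update per token for a recurrent model. The function names are illustrative and not taken from any library, and the counts ignore constant factors and all non-attention layers.

```python
def causal_attention_pairs(n_tokens: int) -> int:
    """Count query-key score computations for causal self-attention.

    Assumption: token i attends to tokens 1..i, so the total is
    1 + 2 + ... + n = n * (n + 1) / 2, which grows as O(N^2).
    """
    return n_tokens * (n_tokens + 1) // 2


def recurrent_steps(n_tokens: int) -> int:
    """Count state updates for a recurrent model: one per token, O(N)."""
    return n_tokens


if __name__ == "__main__":
    # Print both counts side by side for a few context sizes.
    for n in (1_000, 10_000, 100_000):
        print(n, causal_attention_pairs(n), recurrent_steps(n))
```

Under these assumptions, growing the context 100x, from 1,000 to 100,000 tokens, multiplies the attention pair count by roughly 10,000x, while the recurrent step count grows only 100x.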

So, how do we solve this problem?

Introducing RWKV: a linear Transformer / modern large RNN

RWKV and Microsoft RetNet are the first in a new category called "Linear Transformers".

It directly addresses the above three limitations by supporting:

- Linear computational cost, independent of context size.

- Lower requirements in RNN mode, allowing reasonable tokens/second output on CPUs (especially ARM).

- No hard context size limit, because it is an RNN. Any limits in the documentation are guidelines, and you can fine-tune beyond them.
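The fixed-state idea behind RNN mode can be sketched in a few lines of Python. This is a deliberately simplified, hypothetical recurrence (an exponentially decayed weighted average over scalar keys and values), not RWKV's actual formulation; the function name `recurrent_mix` and the `decay` parameter are assumptions made for illustration. What it shows is that each new token updates a constant-size state, so per-token cost and memory do not depend on how many tokens came before.

```python
import math


def recurrent_mix(keys, values, decay=0.5):
    """Process a sequence one token at a time with a fixed-size state.

    Hypothetical illustration only: the state is two floats (num, den),
    so memory stays constant and each step costs O(1), whatever the
    sequence length.
    """
    num, den = 0.0, 0.0
    w = math.exp(-decay)  # factor by which older tokens fade at each step
    outputs = []
    for k, v in zip(keys, values):
        weight = math.exp(k)          # importance of the current token
        num = w * num + weight * v    # decayed running weighted sum
        den = w * den + weight        # decayed running sum of weights
        outputs.append(num / den)     # weighted average seen so far
    return outputs


if __name__ == "__main__":
    # With no decay and equal keys this is a plain running mean.
    print(recurrent_mix([0.0, 0.0], [1.0, 3.0], decay=0.0))  # [1.0, 2.0]
```

A standard attention layer would instead keep every past key and value around, which is where the growth in memory and compute with context size comes from.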

As we continue to scale AI models to context sizes of 100k and beyond, the quadratic computational cost grows rapidly.

Linear Transformers, however, did not abandon the recurrent neural network architecture. Instead, they resolved the bottlenecks that had forced its replacement.

The redesigned RNN applies the scalability lessons learned from Transformers, allowing it to work similarly to a Transformer while eliminating these bottlenecks.

This puts RNNs back in contention with Transformers on training speed: they run efficiently at O(N) cost and scale beyond 1 billion parameters in training, while maintaining similar performance levels.

Chart: Linear Transformer computation cost scaling linearly per token, versus the quadratic growth of the Transformer

Comparing quadratic with linear scaling, you get more than 10x at a 2k token count and more than 100x at a 100k token length.

At 14B parameters, RWKV is the largest open-source linear Transformer, on par with GPT NeoX and other models trained on similar datasets (such as the Pile).

Various benchmarks show that the performance of RWKV models is comparable to existing Transformer models of similar size.

But in simpler terms, what does this mean?

Advantages

- Inference and training are 10x or more cheaper than with a Transformer at larger context sizes

- In RNN mode, it can run, albeit slowly, on very limited hardware

- Similar performance to a Transformer on the same dataset

- As an RNN, it has no technical context size limit (unlimited context!)

Disadvantages

- Sliding window problem, lossy memory beyond a certain point

- Not yet proven to scale beyond 14B parameters

- Not as well optimized or as widely adopted as Transformers

So while RWKV has not yet reached the 60B parameter scale of LLaMA2, with the right support and resources it has the potential to do so at lower cost and in a wider range of environments, especially as the trend moves toward smaller, more efficient models.

Consider it if efficiency is important for your use case. However, it is not the final solution; the key lies in having healthy alternatives.

Other alternatives we should consider learning from, and their benefits

Diffusion models: slower to train on text, but extremely resilient to multi-epoch training. Finding out why could help alleviate the token crisis.

Generative adversarial networks/agents: these techniques could be used to train toward a specific target without a dataset, even for text-based models.

Original title: Introducing RWKV: The Rise of Linear Transformers and Exploring Alternatives, Author: picocreator

https://www.php.cn/link/b433da1b32b5ca96c0ba7fcb9edba97d

The above is the detailed content of Introducing RWKV: The rise of linear Transformers and exploring alternatives. For more information, please follow other related articles on the PHP Chinese website!

Personal Hacking Will Be A Pretty Fierce BearMay 11, 2025 am 11:09 AM

Personal Hacking Will Be A Pretty Fierce BearMay 11, 2025 am 11:09 AMCyberattacks are evolving. Gone are the days of generic phishing emails. The future of cybercrime is hyper-personalized, leveraging readily available online data and AI to craft highly targeted attacks. Imagine a scammer who knows your job, your f

Pope Leo XIV Reveals How AI Influenced His Name ChoiceMay 11, 2025 am 11:07 AM

Pope Leo XIV Reveals How AI Influenced His Name ChoiceMay 11, 2025 am 11:07 AMIn his inaugural address to the College of Cardinals, Chicago-born Robert Francis Prevost, the newly elected Pope Leo XIV, discussed the influence of his namesake, Pope Leo XIII, whose papacy (1878-1903) coincided with the dawn of the automobile and

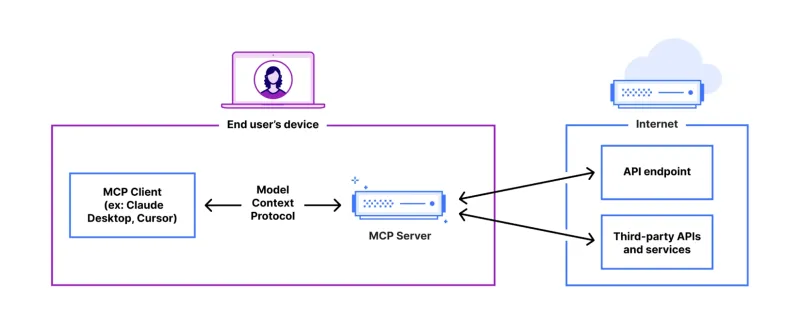

FastAPI-MCP Tutorial for Beginners and Experts - Analytics VidhyaMay 11, 2025 am 10:56 AM

FastAPI-MCP Tutorial for Beginners and Experts - Analytics VidhyaMay 11, 2025 am 10:56 AMThis tutorial demonstrates how to integrate your Large Language Model (LLM) with external tools using the Model Context Protocol (MCP) and FastAPI. We'll build a simple web application using FastAPI and convert it into an MCP server, enabling your L

Dia-1.6B TTS : Best Text-to-Dialogue Generation Model - Analytics VidhyaMay 11, 2025 am 10:27 AM

Dia-1.6B TTS : Best Text-to-Dialogue Generation Model - Analytics VidhyaMay 11, 2025 am 10:27 AMExplore Dia-1.6B: A groundbreaking text-to-speech model developed by two undergraduates with zero funding! This 1.6 billion parameter model generates remarkably realistic speech, including nonverbal cues like laughter and sneezes. This article guide

3 Ways AI Can Make Mentorship More Meaningful Than EverMay 10, 2025 am 11:17 AM

3 Ways AI Can Make Mentorship More Meaningful Than EverMay 10, 2025 am 11:17 AMI wholeheartedly agree. My success is inextricably linked to the guidance of my mentors. Their insights, particularly regarding business management, formed the bedrock of my beliefs and practices. This experience underscores my commitment to mentor

AI Unearths New Potential In The Mining IndustryMay 10, 2025 am 11:16 AM

AI Unearths New Potential In The Mining IndustryMay 10, 2025 am 11:16 AMAI Enhanced Mining Equipment The mining operation environment is harsh and dangerous. Artificial intelligence systems help improve overall efficiency and security by removing humans from the most dangerous environments and enhancing human capabilities. Artificial intelligence is increasingly used to power autonomous trucks, drills and loaders used in mining operations. These AI-powered vehicles can operate accurately in hazardous environments, thereby increasing safety and productivity. Some companies have developed autonomous mining vehicles for large-scale mining operations. Equipment operating in challenging environments requires ongoing maintenance. However, maintenance can keep critical devices offline and consume resources. More precise maintenance means increased uptime for expensive and necessary equipment and significant cost savings. AI-driven

Why AI Agents Will Trigger The Biggest Workplace Revolution In 25 YearsMay 10, 2025 am 11:15 AM

Why AI Agents Will Trigger The Biggest Workplace Revolution In 25 YearsMay 10, 2025 am 11:15 AMMarc Benioff, Salesforce CEO, predicts a monumental workplace revolution driven by AI agents, a transformation already underway within Salesforce and its client base. He envisions a shift from traditional markets to a vastly larger market focused on

AI HR Is Going To Rock Our Worlds As AI Adoption SoarsMay 10, 2025 am 11:14 AM

AI HR Is Going To Rock Our Worlds As AI Adoption SoarsMay 10, 2025 am 11:14 AMThe Rise of AI in HR: Navigating a Workforce with Robot Colleagues The integration of AI into human resources (HR) is no longer a futuristic concept; it's rapidly becoming the new reality. This shift impacts both HR professionals and employees, dem

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

SublimeText3 Linux new version

SublimeText3 Linux latest version

WebStorm Mac version

Useful JavaScript development tools