
Microsoft's super powerful small model sparks heated discussion: exploring the role of textbook-quality data


As large models fuel a new wave of AI enthusiasm, people have begun to ask: where do the powerful capabilities of large models come from?

So far, large models have been driven by ever-growing amounts of "big data", and "big model + big data" seems to have become the standard paradigm for building models. However, as model size and data volume keep growing, the demand for computing power grows rapidly as well, and some researchers are exploring new approaches.

In June, Microsoft released a paper titled "Textbooks Are All You Need", in which it trained phi-1, a 1.3B-parameter model, on a dataset of only 7B tokens. Although both its dataset and its model size are orders of magnitude smaller than those of its competitors, phi-1 achieved a pass@1 accuracy of 50.6% on HumanEval and 55.5% on MBPP.

phi-1 showed that high-quality "small data" can give a model strong performance. Microsoft has now published a follow-up paper, "Textbooks Are All You Need II: phi-1.5 technical report", which further investigates the potential of high-quality "small data".

Paper address: https://arxiv.org/abs/2309.05463

Model Introduction

Architecture

Following the phi-1 approach but shifting the focus to common sense reasoning in natural language, the research team developed phi-1.5, a Transformer language model with 1.3B parameters. Its architecture is identical to phi-1's: 24 layers, 32 attention heads with a dimension of 64 each, rotary embeddings with a rotary dimension of 32, and a context length of 2048.
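To make these hyperparameters concrete, here is a minimal Python sketch that records them in a config object and derives two quantities from them. The class and field names are hypothetical (not taken from the paper or any library), and the parameter count uses the common 12·L·d² rule of thumb, which assumes a standard Transformer block with a 4×-wide MLP and ignores embeddings, biases and LayerNorms. It is only a rough sanity check against the reported 1.3B, not the authors' own accounting.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TransformerConfig:
    """Hypothetical container for the phi-1.5 hyperparameters quoted above."""
    n_layers: int = 24          # Transformer blocks
    n_heads: int = 32           # attention heads per block
    head_dim: int = 64          # dimension of each head
    rotary_dim: int = 32        # dimensions per head that receive rotary embeddings
    context_length: int = 2048  # maximum sequence length in tokens

    @property
    def hidden_size(self) -> int:
        # Model width is the number of heads times the per-head dimension.
        return self.n_heads * self.head_dim

    def approx_block_params(self) -> int:
        # Rule of thumb (assumption): ~4*d^2 for the Q, K, V and output
        # projections plus ~8*d^2 for a 4x-wide MLP, per layer.
        d = self.hidden_size
        return 12 * self.n_layers * d * d


cfg = TransformerConfig()
print(cfg.hidden_size)                   # 2048
print(f"{cfg.approx_block_params():,}")  # 1,207,959,552
```

Under these assumptions the Transformer blocks alone account for roughly 1.2B parameters; the token embeddings make up most of the remainder of the reported 1.3B.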

Training also uses flash-attention for speed, and the model uses the codegen-mono tokenizer.

Training data

The training data for phi-1.5 consists of phi-1's training data (7B tokens) plus newly created "textbook-quality" data (about 20B tokens). The new data is designed to teach the model common sense reasoning, and the research team carefully selected 20K topics to seed its generation.

Notably, to study the importance of web data (which is commonly used to train LLMs), the team also built two additional models: phi-1.5-web-only and phi-1.5-web.

According to the research team, creating a robust and comprehensive dataset requires more than raw computing power: it also takes careful iteration, strategic topic selection, and a deep understanding of knowledge. Together, these elements ensure the quality and diversity of the data.

Experimental results

The team evaluated phi-1.5 on language understanding tasks using several datasets, including PIQA, HellaSwag, OpenbookQA, SQuAD and MMLU. As shown in Table 3, phi-1.5 performs comparably to models 5 times its size.

Results on common sense reasoning benchmarks are shown in the table below:

On more complex reasoning tasks, such as grade-school math and basic coding, phi-1.5 outperforms most non-frontier LLMs.

The research team believes that phi-1.5 once again demonstrates the power of high-quality "small data".

Questions and Discussions

Perhaps because the "big model + big data" paradigm is so deeply entrenched, this work has met with skepticism from some researchers in the machine learning community, and some even suspect that phi-1.5 was trained directly on the test benchmark datasets.

Susan Zhang ran a series of checks and pointed out: "phi-1.5 gives completely correct answers to the original problems in the GSM8K dataset, but as soon as the format is changed even slightly (for example, by adding line breaks), phi-1.5 fails to answer."

Changing the numbers in a question also makes phi-1.5 "hallucinate" while answering. For example, in a food-ordering problem, changing only the price of the pizza is enough to make phi-1.5's answer wrong.

Moreover, phi-1.5 seems to "remember" the final answer: it gives the original answer even after the numbers have been changed and that answer is no longer correct.
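The two probes described above (reformatting a prompt, and changing its numbers while recomputing the true answer) can be sketched in Python roughly as follows. This is a hypothetical illustration, not Susan Zhang's actual test code: the template is an invented GSM8K-style problem rather than a real GSM8K item, the function names are made up, and the step that queries the model is left out. A variant is flagged when the model repeats the original problem's answer even though the recomputed answer differs, which is the memorization pattern the tests point to.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Variant:
    prompt: str
    expected: int  # ground-truth answer recomputed for this variant


# Hypothetical GSM8K-style template (not an actual GSM8K problem).
TEMPLATE = ("A pizza costs ${pizza} and a drink costs ${drink}. "
            "Sam orders {n} pizzas and one drink. How many dollars does Sam pay?")


def make_variants(seed: int = 0, count: int = 3) -> list[Variant]:
    """Create number-perturbed problems, each in two surface formats."""
    rng = random.Random(seed)
    variants = []
    for _ in range(count):
        pizza, drink, n = rng.randint(5, 20), rng.randint(1, 5), rng.randint(2, 4)
        text = TEMPLATE.format(pizza=pizza, drink=drink, n=n)
        expected = n * pizza + drink
        variants.append(Variant(text, expected))                         # original layout
        variants.append(Variant(text.replace(". ", ".\n\n"), expected))  # extra line breaks
    return variants


def memorization_flags(answers: list[int], variants: list[Variant],
                       original_answer: int) -> list[bool]:
    # True when the model repeats the original answer although this variant's answer differs.
    return [a == original_answer and v.expected != original_answer
            for a, v in zip(answers, variants)]
```

Because both layouts of a variant share the same expected answer, a model that is robust to formatting should answer each pair identically; a correct answer on one layout and a failure on the other is the "fragility" Zhang describes.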

Ronen Eldan, one of the paper's authors, quickly responded, explaining and disputing the issues raised by these tests:

Zhang, however, restated her position: the tests show that phi-1.5's answers are very "fragile" with respect to prompt formatting. She also questioned the authors' response:

Li Yuanzhi, the paper's first author, replied: "phi-1.5 is indeed less robust than GPT-4, but 'fragile' is not an accurate term. In fact, for any model, pass@k accuracy is much higher than pass@1 (so whether any single answer is correct is partly a matter of chance)."
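The gap between pass@k and pass@1 can be made concrete with the unbiased pass@k estimator introduced in OpenAI's Codex paper (Chen et al., 2021). The article does not say how the phi-1.5 authors computed pass@k, so treat this as a generic sketch rather than their evaluation code: for a problem with n sampled answers of which c are correct, pass@k = 1 - C(n - c, k) / C(n, k), and the benchmark score is the average over problems.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k estimate for one problem.

    n: number of sampled answers, c: number of correct ones, k: attempt budget.
    Returns the probability that at least one of k answers drawn without
    replacement from the n samples is correct.
    """
    if not 0 <= c <= n or not 1 <= k <= n:
        raise ValueError("need 0 <= c <= n and 1 <= k <= n")
    if n - c < k:
        # Every size-k subset must contain at least one correct answer.
        return 1.0
    return 1.0 - comb(n - c, k) / comb(n, k)


def mean_pass_at_k(results: list[tuple[int, int]], k: int) -> float:
    # Average the per-problem estimates over a benchmark of (n, c) pairs.
    return sum(pass_at_k(n, c, k) for n, c in results) / len(results)


# A problem where 2 of 10 sampled answers are correct:
print(round(pass_at_k(10, 2, 1), 3))  # 0.2
print(round(pass_at_k(10, 2, 5), 3))  # 0.778
```

With only 2 correct answers out of 10 samples, a single attempt succeeds about 20% of the time, while a budget of 5 attempts succeeds about 78% of the time. This is the kind of gap Li is pointing to when he argues that a single wrong answer does not by itself show a model is "fragile".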

After following this exchange, one commenter remarked: "The easiest way to respond is to make the synthetic dataset public."

What do you think of this?

The above is the detailed content of Microsoft's super powerful small model sparks heated discussion: exploring the huge role of textbook-level data. For more information, please follow other related articles on the PHP Chinese website!

An easy-to-understand explanation of how to make inventory management more efficient using ChatGPT!May 14, 2025 am 03:44 AM

An easy-to-understand explanation of how to make inventory management more efficient using ChatGPT!May 14, 2025 am 03:44 AMEasy to implement even for small and medium-sized businesses! Smart inventory management with ChatGPT and Excel Inventory management is the lifeblood of your business. Overstocking and out-of-stock items have a serious impact on cash flow and customer satisfaction. However, the current situation is that introducing a full-scale inventory management system is high in terms of cost. What you'd like to focus on is the combination of ChatGPT and Excel. In this article, we will explain step by step how to streamline inventory management using this simple method. Automate tasks such as data analysis, demand forecasting, and reporting to dramatically improve operational efficiency. moreover,

An easy-to-understand explanation of how to check and switch versions of ChatGPT!May 14, 2025 am 03:43 AM

An easy-to-understand explanation of how to check and switch versions of ChatGPT!May 14, 2025 am 03:43 AMUse AI wisely by choosing a ChatGPT version! A thorough explanation of the latest information and how to check ChatGPT is an ever-evolving AI tool, but its features and performance vary greatly depending on the version. In this article, we will explain in an easy-to-understand manner the features of each version of ChatGPT, how to check the latest version, and the differences between the free version and the paid version. Choose the best version and make the most of your AI potential. Click here for more information about OpenAI's latest AI agent, OpenAI Deep Research ⬇️ [ChatGPT] OpenAI D

Explaining the reasons why you cannot use your credit card with ChatGPT's paid plan and how to deal with itMay 14, 2025 am 03:32 AM

Explaining the reasons why you cannot use your credit card with ChatGPT's paid plan and how to deal with itMay 14, 2025 am 03:32 AMTroubleshooting Guide for Credit Card Payment with ChatGPT Paid Subscriptions Credit card payments may be problematic when using ChatGPT paid subscription. This article will discuss the reasons for credit card rejection and the corresponding solutions, from problems solved by users themselves to the situation where they need to contact a credit card company, and provide detailed guides to help you successfully use ChatGPT paid subscription. OpenAI's latest AI agent, please click ⬇️ for details of "OpenAI Deep Research" 【ChatGPT】Detailed explanation of OpenAI Deep Research: How to use and charging standards Table of contents Causes of failure in ChatGPT credit card payment Reason 1: Incorrect input of credit card information Original

An easy-to-understand explanation of how to create a VBA macro in ChatGPT!May 14, 2025 am 02:40 AM

An easy-to-understand explanation of how to create a VBA macro in ChatGPT!May 14, 2025 am 02:40 AMFor beginners and those interested in business automation, writing VBA scripts, an extension to Microsoft Office, may find it difficult. However, ChatGPT makes it easy to streamline and automate business processes. This article explains in an easy-to-understand manner how to develop VBA scripts using ChatGPT. We will introduce in detail specific examples, from the basics of VBA to script implementation using ChatGPT integration, testing and debugging, and benefits and points to note. With the aim of improving programming skills and improving business efficiency,

I can't use the ChatGPT plugin function! Explaining what to do in case of an errorMay 14, 2025 am 01:56 AM

I can't use the ChatGPT plugin function! Explaining what to do in case of an errorMay 14, 2025 am 01:56 AMChatGPT plugin cannot be used? This guide will help you solve your problem! Have you ever encountered a situation where the ChatGPT plugin is unavailable or suddenly fails? The ChatGPT plugin is a powerful tool to enhance the user experience, but sometimes it can fail. This article will analyze in detail the reasons why the ChatGPT plug-in cannot work properly and provide corresponding solutions. From user setup checks to server troubleshooting, we cover a variety of troubleshooting solutions to help you efficiently use plug-ins to complete daily tasks. OpenAI Deep Research, the latest AI agent released by OpenAI. For details, please click ⬇️ [ChatGPT] OpenAI Deep Research Detailed explanation:

Does ChatGPT not follow the character count specification? A thorough explanation of how to deal with this!May 14, 2025 am 01:54 AM

Does ChatGPT not follow the character count specification? A thorough explanation of how to deal with this!May 14, 2025 am 01:54 AMWhen writing a sentence using ChatGPT, there are times when you want to specify the number of characters. However, it is difficult to accurately predict the length of sentences generated by AI, and it is not easy to match the specified number of characters. In this article, we will explain how to create a sentence with the number of characters in ChatGPT. We will introduce effective prompt writing, techniques for getting answers that suit your purpose, and teach you tips for dealing with character limits. In addition, we will explain why ChatGPT is not good at specifying the number of characters and how it works, as well as points to be careful about and countermeasures. This article

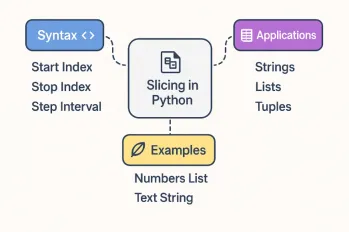

All About Slicing Operations in PythonMay 14, 2025 am 01:48 AM

All About Slicing Operations in PythonMay 14, 2025 am 01:48 AMFor every Python programmer, whether in the domain of data science and machine learning or software development, Python slicing operations are one of the most efficient, versatile, and powerful operations. Python slicing syntax a

An easy-to-understand explanation of how to use ChatGPT to create quotes!May 14, 2025 am 01:44 AM

An easy-to-understand explanation of how to use ChatGPT to create quotes!May 14, 2025 am 01:44 AMThe evolution of AI technology has accelerated business efficiency. What's particularly attracting attention is the creation of estimates using AI. OpenAI's AI assistant, ChatGPT, contributes to improving the estimate creation process and improving accuracy. This article explains how to create a quote using ChatGPT. We will introduce efficiency improvements through collaboration with Excel VBA, specific examples of application to system development projects, benefits of AI implementation, and future prospects. Learn how to improve operational efficiency and productivity with ChatGPT. Op

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

mPDF

mPDF is a PHP library that can generate PDF files from UTF-8 encoded HTML. The original author, Ian Back, wrote mPDF to output PDF files "on the fly" from his website and handle different languages. It is slower than original scripts like HTML2FPDF and produces larger files when using Unicode fonts, but supports CSS styles etc. and has a lot of enhancements. Supports almost all languages, including RTL (Arabic and Hebrew) and CJK (Chinese, Japanese and Korean). Supports nested block-level elements (such as P, DIV),

SublimeText3 Chinese version

Chinese version, very easy to use

WebStorm Mac version

Useful JavaScript development tools

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver Mac version

Visual web development tools