Backend Development

Backend Development Python Tutorial

Python Tutorial Scrapy practice: crawling and analyzing data from a game forum

Scrapy practice: crawling and analyzing data from a game forumIn recent years, the use of Python for data mining and analysis has become more and more popular. Scrapy is a popular tool when it comes to scraping website data. In this article, we will introduce how to use Scrapy to crawl data from a game forum for subsequent data analysis.

1. Select the target

First, we need to select a target website. Here, we choose a game forum.

As shown in the picture below, this forum contains various resources, such as game guides, game downloads, player communication, etc.

Our goal is to obtain the post title, author, publishing time, number of replies and other information for subsequent data analysis.

2. Create a Scrapy project

Before we start crawling data, we need to create a Scrapy project. At the command line, enter the following command:

scrapy startproject forum_spider

This will create a new project named "forum_spider".

3. Configure Scrapy settings

In the settings.py file, we need to configure some settings to ensure that Scrapy can successfully crawl the required data from the forum website. The following are some commonly used settings:

BOT_NAME = 'forum_spider' SPIDER_MODULES = ['forum_spider.spiders'] NEWSPIDER_MODULE = 'forum_spider.spiders' ROBOTSTXT_OBEY = False # 忽略robots.txt文件 DOWNLOAD_DELAY = 1 # 下载延迟 COOKIES_ENABLED = False # 关闭cookies

4. Writing Spider

In Scrapy, Spider is the class used to perform the actual work (ie, crawl the website). We need to define a spider to get the required data from the forum.

We can use Scrapy's Shell to test and debug our Spider. At the command line, enter the following command:

scrapy shell "https://forum.example.com"

This will open an interactive Python shell with the target forum.

In the shell, we can use the following command to test the required Selector:

response.xpath("xpath_expression").extract()Here, "xpath_expression" should be the XPath expression used to select the required data.

For example, the following code is used to obtain all threads in the forum:

response.xpath("//td[contains(@id, 'td_threadtitle_')]").extract()After we have determined the XPath expression, we can create a Spider.

In the spiders folder, we create a new file called "forum_spider.py". The following is the code of Spider:

import scrapy

class ForumSpider(scrapy.Spider):

name = "forum"

start_urls = [

"https://forum.example.com"

]

def parse(self, response):

for thread in response.xpath("//td[contains(@id, 'td_threadtitle_')]"):

yield {

'title': thread.xpath("a[@class='s xst']/text()").extract_first(),

'author': thread.xpath("a[@class='xw1']/text()").extract_first(),

'date': thread.xpath("em/span/@title").extract_first(),

'replies': thread.xpath("a[@class='xi2']/text()").extract_first()

}In the above code, we first define the name of Spider as "forum" and set a starting URL. Then, we defined the parse() method to handle the response of the forum page.

In the parse() method, we use XPath expressions to select the data we need. Next, we use the yield statement to generate the data into a Python dictionary and return it. This means that our Spider will crawl all the threads in the forum homepage one by one and extract the required data.

5. Run Spider

Before executing Spider, we need to ensure that Scrapy has been configured correctly. We can test whether the spider is working properly using the following command:

scrapy crawl forum

This will start our spider and output the captured data in the console.

6. Data Analysis

After we successfully crawl the data, we can use some Python libraries (such as Pandas and Matplotlib) to analyze and visualize the data.

We can first store the crawled data as a CSV file to facilitate data analysis and processing.

import pandas as pd

df = pd.read_csv("forum_data.csv")

print(df.head())This will display the first five rows of data in the CSV file.

We can use libraries such as Pandas and Matplotlib to perform statistical analysis and visualization of data.

Here is a simple example where we sort the data by posting time and plot the total number of posts.

import matplotlib.pyplot as plt

import pandas as pd

df = pd.read_csv("forum_data.csv")

df['date'] = pd.to_datetime(df['date']) #将时间字符串转化为时间对象

df['month'] = df['date'].dt.month

grouped = df.groupby('month')

counts = grouped.size()

counts.plot(kind='bar')

plt.title('Number of Threads by Month')

plt.xlabel('Month')

plt.ylabel('Count')

plt.show()In the above code, we convert the release time into a Python Datetime object and group the data according to month. We then used Matplotlib to create a histogram to show the number of posts published each month.

7. Summary

This article introduces how to use Scrapy to crawl data from a game forum, and shows how to use Python's Pandas and Matplotlib libraries for data analysis and visualization. These tools are Python libraries that are very popular in the field of data analysis and can be used to explore and visualize website data.

The above is the detailed content of Scrapy practice: crawling and analyzing data from a game forum. For more information, please follow other related articles on the PHP Chinese website!

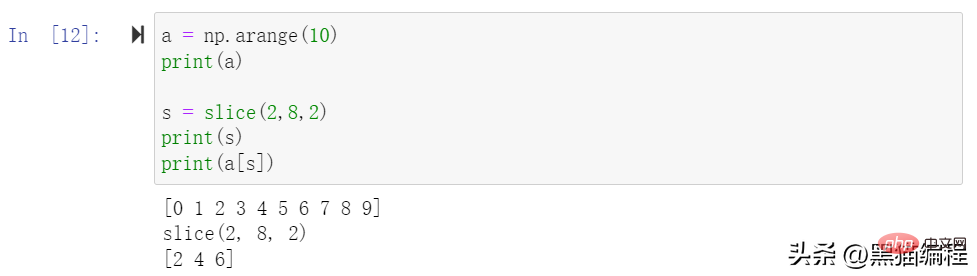

一文详解Python数据分析模块Numpy切片、索引和广播Apr 10, 2023 pm 02:56 PM

一文详解Python数据分析模块Numpy切片、索引和广播Apr 10, 2023 pm 02:56 PMNumpy切片和索引ndarray对象的内容可以通过索引或切片来访问和修改,与 Python 中 list 的切片操作一样。ndarray 数组可以基于 0 ~ n-1 的下标进行索引,切片对象可以通过内置的 slice 函数,并设置 start, stop 及 step 参数进行,从原数组中切割出一个新数组。切片还可以包括省略号 …,来使选择元组的长度与数组的维度相同。 如果在行位置使用省略号,它将返回包含行中元素的 ndarray。高级索引整数数组索引以下实例获取数组中 (0,0),(1,1

Python中的机器学习是什么?Jun 04, 2023 am 08:52 AM

Python中的机器学习是什么?Jun 04, 2023 am 08:52 AM近年来,机器学习(MachineLearning)成为了IT行业中最热门的话题之一,Python作为一种高效的编程语言,已经成为了许多机器学习实践者的首选。本文将会介绍Python中机器学习的概念、应用和实现。一、机器学习概念机器学习是一种让机器通过对数据的分析、学习和优化,自动改进性能的技术。其主要目的是让机器能够在数据中发现存在的规律,从而获得对未来

如何利用 Go 语言进行数据分析和机器学习?Jun 10, 2023 am 09:21 AM

如何利用 Go 语言进行数据分析和机器学习?Jun 10, 2023 am 09:21 AM随着互联网技术的发展和大数据的普及,越来越多的公司和机构开始关注数据分析和机器学习。现在,有许多编程语言可以用于数据科学,其中Go语言也逐渐成为了一种不错的选择。虽然Go语言在数据科学上的应用不如Python和R那么广泛,但是它具有高效、并发和易于部署等特点,因此在某些场景中表现得非常出色。本文将介绍如何利用Go语言进行数据分析和机器学习

数据挖掘和数据分析的区别是什么?Dec 07, 2020 pm 03:16 PM

数据挖掘和数据分析的区别是什么?Dec 07, 2020 pm 03:16 PM区别:1、“数据分析”得出的结论是人的智力活动结果,而“数据挖掘”得出的结论是机器从学习集【或训练集、样本集】发现的知识规则;2、“数据分析”不能建立数学模型,需要人工建模,而“数据挖掘”直接完成了数学建模。

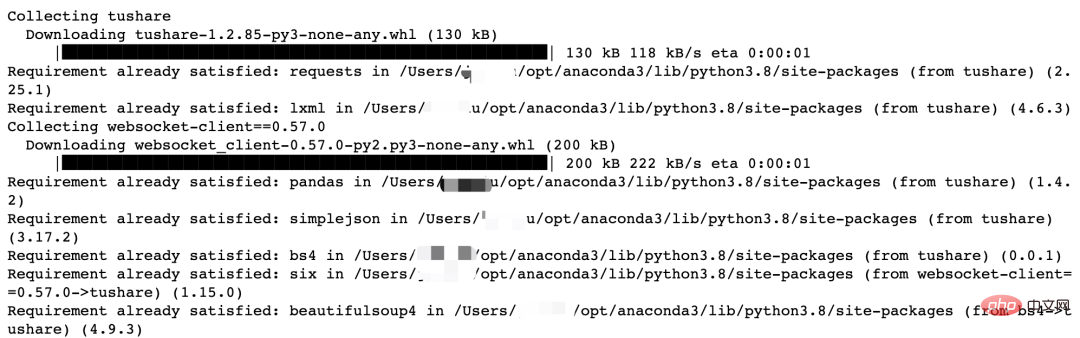

Python量化交易实战:获取股票数据并做分析处理Apr 15, 2023 pm 09:13 PM

Python量化交易实战:获取股票数据并做分析处理Apr 15, 2023 pm 09:13 PM量化交易(也称自动化交易)是一种应用数学模型帮助投资者进行判断,并且根据计算机程序发送的指令进行交易的投资方式,它极大地减少了投资者情绪波动的影响。量化交易的主要优势如下:快速检测客观、理性自动化量化交易的核心是筛选策略,策略也是依靠数学或物理模型来创造,把数学语言变成计算机语言。量化交易的流程是从数据的获取到数据的分析、处理。数据获取数据分析工作的第一步就是获取数据,也就是数据采集。获取数据的方式有很多,一般来讲,数据来源主要分为两大类:外部来源(外部购买、网络爬取、免费开源数据等)和内部来源

MySQL中的大数据分析技巧Jun 14, 2023 pm 09:53 PM

MySQL中的大数据分析技巧Jun 14, 2023 pm 09:53 PM随着大数据时代的到来,越来越多的企业和组织开始利用大数据分析来帮助自己更好地了解其所面对的市场和客户,以便更好地制定商业策略和决策。而在大数据分析中,MySQL数据库也是经常被使用的一种工具。本文将介绍MySQL中的大数据分析技巧,为大家提供参考。一、使用索引进行查询优化索引是MySQL中进行查询优化的重要手段之一。当我们对某个列创建了索引后,MySQL就可

为何军事人工智能初创公司近年来备受追捧Apr 13, 2023 pm 01:34 PM

为何军事人工智能初创公司近年来备受追捧Apr 13, 2023 pm 01:34 PM俄乌冲突爆发 2 周后,数据分析公司 Palantir 的首席执行官亚历山大·卡普 (Alexander Karp) 向欧洲领导人提出了一项建议。在公开信中,他表示欧洲人应该在硅谷的帮助下实现武器现代化。Karp 写道,为了让欧洲“保持足够强大以战胜外国占领的威胁”,各国需要拥抱“技术与国家之间的关系,以及寻求摆脱根深蒂固的承包商控制的破坏性公司与联邦政府部门之间的资金关系”。而军队已经开始响应这项号召。北约于 6 月 30 日宣布,它正在创建一个 10 亿美元的创新基金,将投资于早期创业公司和

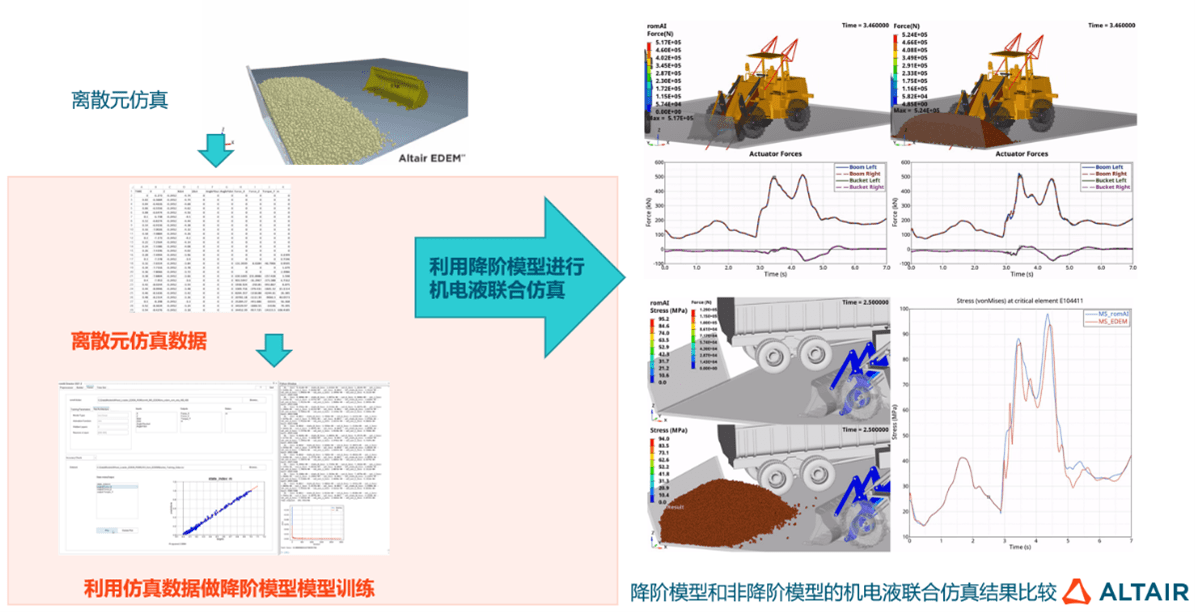

AI牵引工业软件新升级,数据分析与人工智能在探索中进化Jun 05, 2023 pm 04:04 PM

AI牵引工业软件新升级,数据分析与人工智能在探索中进化Jun 05, 2023 pm 04:04 PMCAE和AI技术双融合已成为企业研发设计环节数字化转型的重要应用趋势,但企业数字化转型绝不仅是单个环节的优化,而是全流程、全生命周期的转型升级,数据驱动只有作用于各业务环节,才能真正助力企业持续发展。数字化浪潮席卷全球,作为数字经济核心驱动,数字技术逐步成为企业发展新动能,助推企业核心竞争力进化,在此背景下,数字化转型已成为所有企业的必选项和持续发展的前提,拥抱数字经济成为企业的共同选择。但从实际情况来看,面向C端的产业如零售电商、金融等领域在数字化方面走在前列,而以制造业、能源重工等为代表的传

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Dreamweaver CS6

Visual web development tools

Notepad++7.3.1

Easy-to-use and free code editor

Safe Exam Browser

Safe Exam Browser is a secure browser environment for taking online exams securely. This software turns any computer into a secure workstation. It controls access to any utility and prevents students from using unauthorized resources.

SublimeText3 English version

Recommended: Win version, supports code prompts!

ZendStudio 13.5.1 Mac

Powerful PHP integrated development environment