Technology peripherals

Technology peripherals AI

AI 'Censored' during image generation: Failure cases of stable diffusion are affected by four major factors

'Censored' during image generation: Failure cases of stable diffusion are affected by four major factors'Censored' during image generation: Failure cases of stable diffusion are affected by four major factors

Text-to-image diffusion generation models, such as Stable Diffusion, DALL-E 2 and mid-journey, have been in a state of vigorous development and have strong text-to-image generation capabilities, but "overturned ” Cases will occasionally appear.

As shown in the figure below, when given a text prompt: "A photo of a warthog", the Stable Diffusion model can generate a corresponding, clear and realistic photo of a warthog. However, when we slightly modify this text prompt and change it to: "A photo of a warthog and a traitor", what about the warthog? How did it become a car?

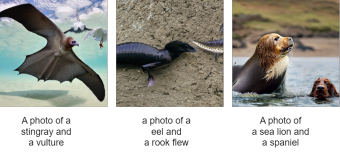

Let’s take a look at the next few examples. What new species are these?

What causes these strange phenomena? These generation failure cases all come from a recently published paper "Stable Diffusion is Unstable":

- Paper address: https://arxiv.org/abs/2306.02583

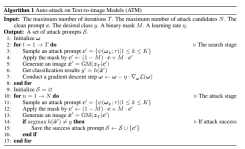

In this paper A gradient-based adversarial algorithm for text-to-image models is proposed for the first time. This algorithm can efficiently and effectively generate a large number of offensive text prompts, and can effectively explore the instability of the Stable diffusion model. This algorithm achieved an attack success rate of 91.1% on short text prompts and 81.2% on long text prompts. In addition, this algorithm provides rich cases for studying the failure modes of text-to-image generation models, laying a foundation for research on the controllability of image generation.

Based on a large number of generation failure cases generated by this algorithm, the researcher summarized four reasons for generation failure, which are:

- Difference in generation speed

- Similarity of coarse-grained features

- Ambiguity of words

- The position of the word in the prompt

Difference in generation speed

When a prompt (prompt) contains multiple generation targets, we often encounter There is an issue where a certain target disappears during the generation process. Theoretically, all targets within the same cue should share the same initial noise. As shown in Figure 4, the researchers generated one thousand category targets on ImageNet under the condition of fixed initial noise. They used the last image generated by each target as a reference image and calculated the Structural Similarity Index (SSIM) score between the image generated at each time step and the image generated at the last step to demonstrate the different targets. Differences in build speed.

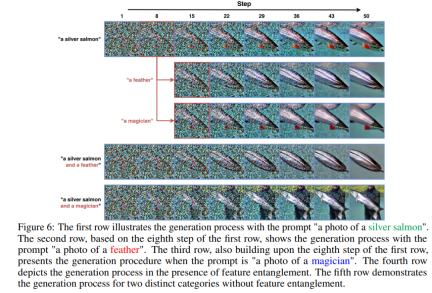

Coarse-grained feature similarity

In the diffusion generation process, the researcher found that when When there is global or local coarse-grained feature similarity between two types of targets, problems will arise when calculating cross attention weights. This is because the two target nouns may focus on the same block of the same picture at the same time, resulting in feature entanglement. For example, in Figure 6, feather and silver salmon have certain similarities in coarse-grained features, which results in feather being able to continue to complete its generation task in the eighth step of the generation process based on silver salmon. For two types of targets without entanglement, such as silver salmon and magician, magician cannot complete its generation task on the intermediate step image based on silver salmon.

Polysemy

In this chapter, researchers explore in depth what happens when a word has multiple meanings time generation. What they found was that, without any outside perturbation, the resulting image often represented a specific meaning of the word. Take "warthog" as an example. The first line in Figure A4 is generated based on the meaning of the word "warthog".

However, researchers also found that when other words are injected into the original prompt , which may cause semantic shifts. For example, when the word "traitor" is introduced in a prompt describing "warthog", the generated image content may deviate from the original meaning of "warthog" and generate entirely new content.

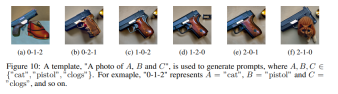

The position of the word in prompt

In Figure 10, the researcher observed an interesting phenomenon. Although from a human perspective, the prompts arranged in different orders generally have the same meaning, they are all describing a picture of a cat, clogs, and a pistol. However, for the language model, that is, the CLIP text encoder, the order of the words affects its understanding of the text to a certain extent, which in turn changes the content of the generated images. This phenomenon shows that although our descriptions are semantically consistent, the model may produce different understanding and generation results due to the different order of words. This not only reveals that the way models process language and understands semantics is different from humans, but also reminds us that we need to pay more attention to the impact of word order when designing and using such models.

Model structure

As shown in Figure 1 below, without changing the original target noun in the prompt Under the premise, the researcher continuousizes the discrete process of word replacement or expansion by learning the Gumbel Softmax distribution, thereby ensuring the differentiability of perturbation generation. After generating the image, the CLIP classifier and margin loss are used to optimize ω, aiming to generate CLIP For images that cannot be correctly classified, in order to ensure that offensive cues have a certain similarity with clean cues, researchers have further used semantic similarity constraints and text fluency constraints.

Once this distribution is learned, the algorithm is able to sample multiple text prompts with attack effects for the same clean text prompt.

# See the original article for more details.

The above is the detailed content of 'Censored' during image generation: Failure cases of stable diffusion are affected by four major factors. For more information, please follow other related articles on the PHP Chinese website!

AI Game Development Enters Its Agentic Era With Upheaval's Dreamer PortalMay 02, 2025 am 11:17 AM

AI Game Development Enters Its Agentic Era With Upheaval's Dreamer PortalMay 02, 2025 am 11:17 AMUpheaval Games: Revolutionizing Game Development with AI Agents Upheaval, a game development studio comprised of veterans from industry giants like Blizzard and Obsidian, is poised to revolutionize game creation with its innovative AI-powered platfor

Uber Wants To Be Your Robotaxi Shop, Will Providers Let Them?May 02, 2025 am 11:16 AM

Uber Wants To Be Your Robotaxi Shop, Will Providers Let Them?May 02, 2025 am 11:16 AMUber's RoboTaxi Strategy: A Ride-Hail Ecosystem for Autonomous Vehicles At the recent Curbivore conference, Uber's Richard Willder unveiled their strategy to become the ride-hail platform for robotaxi providers. Leveraging their dominant position in

AI Agents Playing Video Games Will Transform Future RobotsMay 02, 2025 am 11:15 AM

AI Agents Playing Video Games Will Transform Future RobotsMay 02, 2025 am 11:15 AMVideo games are proving to be invaluable testing grounds for cutting-edge AI research, particularly in the development of autonomous agents and real-world robots, even potentially contributing to the quest for Artificial General Intelligence (AGI). A

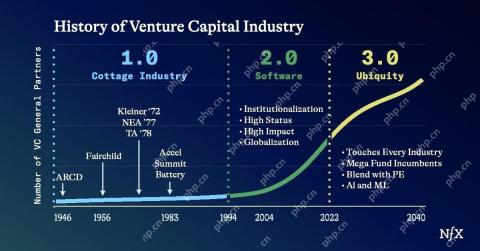

The Startup Industrial Complex, VC 3.0, And James Currier's ManifestoMay 02, 2025 am 11:14 AM

The Startup Industrial Complex, VC 3.0, And James Currier's ManifestoMay 02, 2025 am 11:14 AMThe impact of the evolving venture capital landscape is evident in the media, financial reports, and everyday conversations. However, the specific consequences for investors, startups, and funds are often overlooked. Venture Capital 3.0: A Paradigm

Adobe Updates Creative Cloud And Firefly At Adobe MAX London 2025May 02, 2025 am 11:13 AM

Adobe Updates Creative Cloud And Firefly At Adobe MAX London 2025May 02, 2025 am 11:13 AMAdobe MAX London 2025 delivered significant updates to Creative Cloud and Firefly, reflecting a strategic shift towards accessibility and generative AI. This analysis incorporates insights from pre-event briefings with Adobe leadership. (Note: Adob

Everything Meta Announced At LlamaConMay 02, 2025 am 11:12 AM

Everything Meta Announced At LlamaConMay 02, 2025 am 11:12 AMMeta's LlamaCon announcements showcase a comprehensive AI strategy designed to compete directly with closed AI systems like OpenAI's, while simultaneously creating new revenue streams for its open-source models. This multifaceted approach targets bo

The Brewing Controversy Over The Proposition That AI Is Nothing More Than Just Normal TechnologyMay 02, 2025 am 11:10 AM

The Brewing Controversy Over The Proposition That AI Is Nothing More Than Just Normal TechnologyMay 02, 2025 am 11:10 AMThere are serious differences in the field of artificial intelligence on this conclusion. Some insist that it is time to expose the "emperor's new clothes", while others strongly oppose the idea that artificial intelligence is just ordinary technology. Let's discuss it. An analysis of this innovative AI breakthrough is part of my ongoing Forbes column that covers the latest advancements in the field of AI, including identifying and explaining a variety of influential AI complexities (click here to view the link). Artificial intelligence as a common technology First, some basic knowledge is needed to lay the foundation for this important discussion. There is currently a large amount of research dedicated to further developing artificial intelligence. The overall goal is to achieve artificial general intelligence (AGI) and even possible artificial super intelligence (AS)

Model Citizens, Why AI Value Is The Next Business YardstickMay 02, 2025 am 11:09 AM

Model Citizens, Why AI Value Is The Next Business YardstickMay 02, 2025 am 11:09 AMThe effectiveness of a company's AI model is now a key performance indicator. Since the AI boom, generative AI has been used for everything from composing birthday invitations to writing software code. This has led to a proliferation of language mod

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

SublimeText3 Chinese version

Chinese version, very easy to use

SAP NetWeaver Server Adapter for Eclipse

Integrate Eclipse with SAP NetWeaver application server.

SublimeText3 English version

Recommended: Win version, supports code prompts!

mPDF

mPDF is a PHP library that can generate PDF files from UTF-8 encoded HTML. The original author, Ian Back, wrote mPDF to output PDF files "on the fly" from his website and handle different languages. It is slower than original scripts like HTML2FPDF and produces larger files when using Unicode fonts, but supports CSS styles etc. and has a lot of enhancements. Supports almost all languages, including RTL (Arabic and Hebrew) and CJK (Chinese, Japanese and Korean). Supports nested block-level elements (such as P, DIV),

SecLists

SecLists is the ultimate security tester's companion. It is a collection of various types of lists that are frequently used during security assessments, all in one place. SecLists helps make security testing more efficient and productive by conveniently providing all the lists a security tester might need. List types include usernames, passwords, URLs, fuzzing payloads, sensitive data patterns, web shells, and more. The tester can simply pull this repository onto a new test machine and he will have access to every type of list he needs.