An article on how to optimize the performance of an LLM using a local knowledge base

Yesterday, a 220-hour fine-tuning run finished. Its main goal was to fine-tune, on top of ChatGLM-6B, a dialogue model that diagnoses database error messages more accurately.

However, the final result of this run, which I waited nearly ten days for, was disappointing. Compared with the earlier run I did with smaller sample coverage, the difference is still quite large.

This model has basically no practical value. It seems the parameters and the training set need to be readjusted and the training run again. Training large language models is an arms race, and you cannot play without good equipment. It seems we must also upgrade our lab equipment; otherwise we do not have many ten-day stretches to waste.

Judging from this recent failed run, fine-tuning is not easy to get right. Different task objectives were mixed together in one training run. Different objectives may need different training parameters, so the final result cannot meet the needs of some of the tasks. P-Tuning is therefore suitable only for a single, well-defined task and not necessarily for mixed tasks; a model aimed at mixed tasks may need full fine-tuning. This is similar to what came up when I talked with a friend a few days ago.

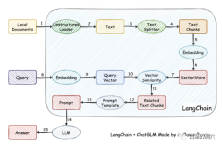

In fact, because training a model is so difficult, some people have given up on training their own. Instead, they vectorize a local knowledge base for more accurate retrieval, then use auto-prompting to turn the retrieved results into an automatically generated prompt that is put to the language model. This is easy to achieve with langchain.

It works as follows: a loader reads the local documents in as text, the text is split into fragments, and the fragments are encoded and written to a vector store for querying. Once the query results come back, a Prompt Template automatically assembles them into a prompt that is put to the LLM, and the LLM generates the final answer.
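The flow described here can be sketched in plain Python. This is a minimal illustration, not langchain's API: `split_text`, `embed`, `VectorStore`, `build_prompt`, and the sample ORA text are all hypothetical names and data made up for the example, and the bag-of-words encoder is a toy stand-in for a real embedding model.

```python
import math
import re
from collections import Counter


def split_text(text, chunk_size=120):
    """Split text into fragments of roughly chunk_size characters, on sentence boundaries."""
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    chunks, current = [], ""
    for sentence in sentences:
        if current and len(current) + 1 + len(sentence) > chunk_size:
            chunks.append(current)
            current = sentence
        else:
            current = f"{current} {sentence}".strip()
    if current:
        chunks.append(current)
    return chunks


def embed(text):
    """Toy encoder (assumption): word counts instead of a real embedding model."""
    return Counter(re.findall(r"[a-z0-9\-]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class VectorStore:
    """In-memory store holding (vector, fragment) pairs."""

    def __init__(self):
        self.items = []

    def add(self, chunks):
        for chunk in chunks:
            self.items.append((embed(chunk), chunk))

    def search(self, query, k=2):
        q = embed(query)
        ranked = sorted(self.items, key=lambda item: cosine(q, item[0]), reverse=True)
        return [chunk for _, chunk in ranked[:k]]


PROMPT_TEMPLATE = (
    "Answer the question using only the known information below.\n"
    "Known information:\n{context}\n\n"
    "Question: {question}\n"
)


def build_prompt(store, question, k=2):
    """Retrieve the k closest fragments and fill them into the prompt template."""
    context = "\n".join(store.search(question, k))
    return PROMPT_TEMPLATE.format(context=context, question=question)


# Hypothetical knowledge-base text standing in for the loaded local documents.
document = (
    "ORA-01555 means snapshot too old. "
    "It is raised when undo data needed for a consistent read has been overwritten. "
    "ORA-00942 means table or view does not exist. "
    "Check the object name and the privileges of the user."
)

store = VectorStore()
store.add(split_text(document))
question = "What causes ORA-01555 snapshot too old?"
prompt = build_prompt(store, question, k=1)
print(prompt)  # this string is what would be sent to the LLM
```

In a real setup, the loader, splitter, encoder, and store would come from a library such as langchain, and the printed prompt would be passed to the LLM instead of being displayed.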

One important point in this work is searching the local knowledge base more accurately, which is handled by the vector store during retrieval. Many vectorization and search solutions currently exist for Chinese and English local knowledge bases, so you can choose the one that suits your knowledge base best.
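To illustrate why the choice of vectorization scheme depends on the language of the knowledge base, here is a small sketch. The two vectorizers and the sample sentences are assumptions made up for this example; a real system would more likely compare actual embedding models. It shows that splitting on whitespace, which works tolerably for English, finds nothing in unsegmented Chinese text, while character bigrams do find the related entry.

```python
import math
from collections import Counter


def word_vector(text):
    """Whitespace tokens: reasonable for English, useless for unsegmented Chinese."""
    return Counter(text.lower().split())


def char_bigram_vector(text):
    """Character bigrams: a crude scheme that needs no word segmentation."""
    compact = "".join(text.lower().split())
    return Counter(compact[i:i + 2] for i in range(len(compact) - 1))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def best_match(query, docs, vectorize):
    """Return the best-scoring document and its score under the given vectorizer."""
    q = vectorize(query)
    scores = [cosine(q, vectorize(doc)) for doc in docs]
    best = max(range(len(docs)), key=scores.__getitem__)
    return docs[best], scores[best]


# Hypothetical knowledge-base entries (Chinese on purpose):
docs = [
    "数据库连接超时的处理方法",  # "How to handle database connection timeouts"
    "表空间不足时如何扩容",      # "How to extend a tablespace that is running out of space"
]
query = "连接超时怎么办"  # "What to do about a connection timeout"

word_hit, word_score = best_match(query, docs, word_vector)
bigram_hit, bigram_score = best_match(query, docs, char_bigram_vector)
print("whitespace tokens:", word_score)
print("character bigrams:", bigram_hit, round(bigram_score, 3))
```

With whitespace tokens, each Chinese sentence becomes a single token, so the query matches nothing. With character bigrams, the query shares fragments with the connection-timeout entry and retrieves it.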

The above is a Q&A about OB run on vicuna-13b with a knowledge base. The first answer uses only the LLM's own capability, without the local knowledge base; the answer that follows it was produced after loading the local knowledge base. The improvement is quite obvious.

Let's go back to the ORA error question from earlier. Before the local knowledge base was used, the LLM was basically talking nonsense, but after the local knowledge base was loaded, the answer is satisfactory. The typos in the answer come from errors in our knowledge base. In fact, the training set used for P-Tuning was also generated from this local knowledge base.

We can draw some lessons from the pitfalls we have hit recently. First, P-Tuning is much harder than we expected: although it needs less hardware than full fine-tuning, the training itself is not easy at all. Second, using a local knowledge base through langchain and auto-prompting is a good way to improve LLM capabilities. For most enterprise applications, as long as the local knowledge base is well organized and a suitable vectorization solution is chosen, you should get results no worse than P-Tuning or fine-tuning. Third, as I said last time, the capability of the LLM itself is crucial. You must pick a strong LLM as the base model; any embedded model can only improve capability partially and cannot play a decisive role. Fourth, for database-related knowledge, vicuna-13b is really quite capable.

I have to visit a client early this morning, so time is short and I will only write these few lines. If you have thoughts on this, please leave a message to discuss (the discussion is visible only to you and me). I am walking this road alone too, and I hope fellow travelers can give me some advice.
