In the field of customer service, changes related to ChatGPT have begun

In recent years, more and more businesses have adopted artificial intelligence technology to automate contact centers to handle the calls, chats and text messages of millions of customers. Now, ChatGPT's superior communication skills are being merged with key capabilities integrated into business-specific systems such as internal knowledge bases and CRMs.

The application of large-scale language models (LLM) can enhance automated contact centers, enabling them to resolve customer requests from start to finish like human customer service, and has achieved remarkable results. On the other hand, as more customers become aware of ChatGPT's human-like capabilities, you can imagine they will start to become more frustrated with legacy systems that often require them to wait 45 minutes for their credit card information to be updated.

But don’t be afraid. While using AI to solve customer problems may seem outdated to early adopters, the timing is actually perfect.

LLM Can Stop Decline in Customer Satisfaction

Satisfaction levels in the customer service industry have fallen to their lowest levels in decades due to a lack of seats and increased demand. The rise of LLM is bound to make artificial intelligence a core issue for every boardroom trying to rebuild customer loyalty.

Businesses that had turned to expensive outsourcing options, or eliminated contact centers entirely, suddenly saw a sustainable path to growth.

The blueprint has been drawn. AI can help achieve three primary goals of a call center: resolve customer issues in the first ring, reduce overall costs, and reduce agent burden (and by doing so, increase agent retention).

Over the past few years, enterprise-level contact centers have deployed artificial intelligence to handle their most common requests (e.g., billing, account management, and even outbound calls), and this trend looks set to continue in 2023 The years go on.

By doing this, they have been able to reduce wait times, allow their agents to focus on revenue-generating or value-added calls, and free themselves from outdated strategies designed to drive customers away from agents and solutions.

All of this can lead to cost savings, and Gartner predicts that the deployment of artificial intelligence will reduce contact center costs by more than $80 billion by 2026.

LLM makes automation easier and better than ever

LLM is trained on massive public datasets. This broad knowledge of the world lends itself well to customer service. They are able to accurately understand a customer's actual needs, regardless of the caller's way of speaking or presenting them.

LLM has been integrated into existing automation platforms, effectively improving the platform's ability to understand unstructured human conversations while reducing the occurrence of errors. This results in better resolution rates, fewer conversation steps, shorter call times and less need for an agent.

Customers can talk to the machine using any natural sentences, including asking multiple questions, asking the machine to wait or sending information via text. A key improvement to LLM is improved call resolution, allowing more customers to get the answers they need without having to speak to an agent.

LLM also significantly reduces the time required to customize and deploy artificial intelligence. With the right API, a short-staffed contact center can have a solution up and running in a matter of weeks without having to manually train artificial intelligence to understand the various requests a customer might make.

Contact centers face huge challenges and must simultaneously meet strict SLA metrics and keep call duration to a minimum. With LLM, they can not only answer more calls but also resolve issues end-to-end.

Call Center Automation Reduces ChatGPT Risk

While LLM is impressive, there are also many documented cases of inappropriate answers and "hallucinations" - on the machine When it doesn't know what to say, it makes up answers.

For enterprises, this is the number one reason why LLMs like ChatGPT cannot connect directly with customers, let alone integrate them with specific business systems, rules and platforms.

Existing AI platforms, such as Dialpad, Replicant and Five9, are providing contact centers with safeguards to better leverage the power of LLM while reducing risk. These solutions comply with SOC2, HIPAA and PCI standards to ensure maximum protection of customers' personal information.

And, because conversations are configured specifically for each use case, contact centers can control every word spoken or written by their machines, eliminating the need for prompt input (i.e. users trying to “trick” the LLM). Unpredictable risks caused by circumstances).

In the rapidly changing world of artificial intelligence, contact centers have more technology solutions to evaluate than ever before.

Customer expectations are rising and ChatGPT level service will soon become the common standard. All signs point to customer service being one of the sectors that has benefited most from past technological revolutions.

The above is the detailed content of In the field of customer service, changes related to ChatGPT have begun. For more information, please follow other related articles on the PHP Chinese website!

Sakana AI's 'AI Scientist': The Next Einstein or Just a Tool?Apr 14, 2025 am 09:27 AM

Sakana AI's 'AI Scientist': The Next Einstein or Just a Tool?Apr 14, 2025 am 09:27 AMIntroduction In artificial intelligence, a groundbreaking development has emerged that promises to reshape the very process of scientific discovery. In collaboration with the Foerster Lab for AI Research at the University of O

How to Prepare for an AI Job Interview? - Analytics VidhyaApr 14, 2025 am 09:25 AM

How to Prepare for an AI Job Interview? - Analytics VidhyaApr 14, 2025 am 09:25 AMIntroduction It could be challenging to prepare for an AI job interview due to the vast nature of the field and the wide variety of knowledge and abilities needed. The expansion of the AI industry corresponds with a growing re

Optimizing LLM Tasks with AdalFlowApr 14, 2025 am 09:21 AM

Optimizing LLM Tasks with AdalFlowApr 14, 2025 am 09:21 AMAdalFlow: A PyTorch Library for Streamlining LLM Task Pipelines AdalFlow, spearheaded by Li Yin, bridges the gap between Retrieval-Augmented Generation (RAG) research and practical application. Leveraging PyTorch, it addresses the limitations of exi

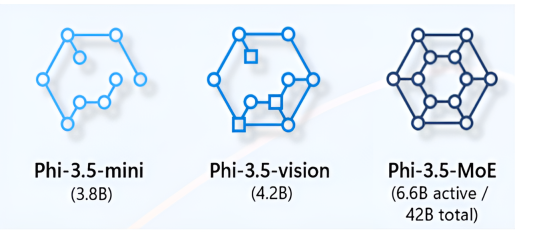

What Makes Phi 3.5 SLMs a Game-Changer for Generative AI?Apr 14, 2025 am 09:13 AM

What Makes Phi 3.5 SLMs a Game-Changer for Generative AI?Apr 14, 2025 am 09:13 AMMicrosoft Unveils Phi-3.5: A Family of Efficient and Powerful Small Language Models Microsoft's latest generation of Small Language Models (SLMs), the Phi-3.5 family, boasts superior performance across diverse benchmarks encompassing language, reason

A Guide to Python functions and Lambdas - Analytics VidhyaApr 14, 2025 am 09:12 AM

A Guide to Python functions and Lambdas - Analytics VidhyaApr 14, 2025 am 09:12 AMPython: Mastering Functions and Lambda Functions for Efficient and Readable Code We've explored Python's versatility; now let's delve into its capabilities for enhancing code efficiency and readability. Maintaining code modularity in production-leve

What is Graph of Thought in Prompt EngineeringApr 13, 2025 am 11:53 AM

What is Graph of Thought in Prompt EngineeringApr 13, 2025 am 11:53 AMIntroduction In prompt engineering, “Graph of Thought” refers to a novel approach that uses graph theory to structure and guide AI’s reasoning process. Unlike traditional methods, which often involve linear s

Optimize Your Organisation's Email Marketing with GenAI AgentsApr 13, 2025 am 11:44 AM

Optimize Your Organisation's Email Marketing with GenAI AgentsApr 13, 2025 am 11:44 AMIntroduction Congratulations! You run a successful business. Through your web pages, social media campaigns, webinars, conferences, free resources, and other sources, you collect 5000 email IDs daily. The next obvious step is

Real-Time App Performance Monitoring with Apache PinotApr 13, 2025 am 11:40 AM

Real-Time App Performance Monitoring with Apache PinotApr 13, 2025 am 11:40 AMIntroduction In today’s fast-paced software development environment, ensuring optimal application performance is crucial. Monitoring real-time metrics such as response times, error rates, and resource utilization can help main

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

ZendStudio 13.5.1 Mac

Powerful PHP integrated development environment

Dreamweaver Mac version

Visual web development tools

SecLists

SecLists is the ultimate security tester's companion. It is a collection of various types of lists that are frequently used during security assessments, all in one place. SecLists helps make security testing more efficient and productive by conveniently providing all the lists a security tester might need. List types include usernames, passwords, URLs, fuzzing payloads, sensitive data patterns, web shells, and more. The tester can simply pull this repository onto a new test machine and he will have access to every type of list he needs.

VSCode Windows 64-bit Download

A free and powerful IDE editor launched by Microsoft

Dreamweaver CS6

Visual web development tools