Andrew Ng's ChatGPT class went viral: AI gave up writing words backwards, but understood the whole world

Who would have expected ChatGPT to still be making silly mistakes like this?

Andrew Ng pointed it out in his latest class:

ChatGPT cannot reverse words!

For example, ask it to reverse the word "lollipop" and the output is "pilollol", which is completely scrambled.

Oh, this is indeed a bit surprising.

So much so that when someone who took the class posted about it on Reddit, the post immediately drew a crowd and quickly reached 6k views.

And this is not a one-off bug. Users found that ChatGPT really cannot complete this task, and our own testing gave the same result.

OpenAI's help documentation gives these rules of thumb for tokens:

- 1 token ≈ 4 English characters ≈ three-quarters of a word;

- 100 tokens ≈ 75 words;

- 1-2 sentences ≈ 30 tokens;

- A paragraph ≈ 100 tokens; 1,500 words ≈ 2,048 tokens.
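
These rules of thumb translate directly into a rough estimator. The following is a minimal Python sketch, not a real tokenizer: the function names are made up for illustration, and actual token counts depend on the model's tokenizer and on the language of the text.

```python
import math

# Rules of thumb quoted in the list above (English text only).
CHARS_PER_TOKEN = 4
WORDS_PER_TOKEN = 0.75


def estimate_tokens_from_chars(text: str) -> int:
    """Rough token estimate from the character count (hypothetical helper)."""
    return math.ceil(len(text) / CHARS_PER_TOKEN)


def estimate_tokens_from_words(word_count: int) -> int:
    """Rough token estimate from a word count (hypothetical helper)."""
    return math.ceil(word_count / WORDS_PER_TOKEN)


print(estimate_tokens_from_words(75))          # 100
print(estimate_tokens_from_words(1500))        # 2000, close to the 2,048 quoted
print(estimate_tokens_from_chars("lollipop"))  # 2
```

The estimate is only useful for budgeting; for exact counts a real tokenizer has to be used.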

The higher the token-to-character (or token-to-word) ratio, the higher the processing cost. This is why tokenizing and processing Chinese text is more expensive than English.

Tokens can be understood as the way large models make sense of the human world. The approach is simple, and it greatly reduces memory and time complexity.

But tokenizing words has a drawback: it makes it harder for the model to learn meaningful input representations. The most visible symptom is that the model may fail to grasp what a word means.

Transformers were optimized to address this. For example, a complex, uncommon word is split into a meaningful token and a separate, standalone token.

For instance, "annoyingly" is split into two parts, "annoying" and "ly": the former keeps its own meaning, while the latter is a piece that appears in many words.
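
To make the splitting concrete, here is a toy greedy longest-match splitter in Python. It is only a sketch: the vocabulary `TOY_VOCAB` and the function `split_into_subwords` are invented for this example, and real tokenizers learn much larger vocabularies from data and may split these words differently.

```python
def split_into_subwords(word: str, vocab: set[str]) -> list[str]:
    """Greedily take the longest vocabulary entry at each position.

    Falls back to a single character when nothing in the vocabulary matches.
    """
    pieces = []
    start = 0
    while start < len(word):
        # Try the longest candidate first and shrink until one matches.
        for end in range(len(word), start, -1):
            piece = word[start:end]
            if piece in vocab or end == start + 1:
                pieces.append(piece)
                start = end
                break
    return pieces


# Hypothetical vocabulary, chosen only to illustrate the idea.
TOY_VOCAB = {"annoying", "ly", "lol", "pop", "l", "i"}

print(split_into_subwords("annoyingly", TOY_VOCAB))  # ['annoying', 'ly']
print(split_into_subwords("lollipop", TOY_VOCAB))    # ['lol', 'l', 'i', 'pop']
```

With this toy vocabulary the model would see "lollipop" as four chunks rather than eight letters.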

This is part of what gives ChatGPT and other large-model products their impressive ability to understand human language.

As for failing at a task as small as word reversal, there are of course workarounds.

The simplest and most direct way is to split the word into letters yourself.

Or you can have ChatGPT work step by step, first tokenizing each letter separately.

Or have it write a program that reverses the letters; the program's output is then correct. (Only half joking.)
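
Two of these workarounds are easy to sketch in Python. The helper names are illustrative assumptions: `spell_out` prepares a word so that each letter can be handled separately in a prompt, and `reverse_word` is the kind of program ChatGPT could be asked to write.

```python
def spell_out(word: str, sep: str = "-") -> str:
    """Separate the letters of a word so each one stands alone."""
    return sep.join(word)


def reverse_word(word: str) -> str:
    """Reverse a word character by character."""
    return word[::-1]


print(spell_out("lollipop"))     # l-o-l-l-i-p-o-p
print(reverse_word("lollipop"))  # popillol
```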

Alternatively, you can use GPT-4, which showed no such problem in our testing.

△ GPT-4 in our test

In short, tokens are the cornerstone of AI's understanding of natural language.

As the bridge through which AI understands human language, tokens have become increasingly important.

They have become a key determinant of AI model performance and the billing unit for large models.

There is even token literature

As mentioned above, tokens help the model capture finer-grained semantic information such as word meaning, word order, and grammatical structure. In sequence modeling tasks (language modeling, machine translation, text generation, and so on), position and order are very important to the model.

Only when the model accurately understands the position and context of each token in the sequence can it predict content accurately and produce reasonable output.

Therefore, the quality and quantity of tokens directly affect model performance.

Since the start of this year, as more and more large models have been released, token counts have been emphasized. For example, leaked details about Google's PaLM 2 mention that it was trained on 3.6 trillion tokens.

Many big names in the industry have also said that tokens are crucial!

Andrej Karpathy, an AI scientist who moved from Tesla to OpenAI this year, said in a talk:

More tokens enable models to think better.

He also emphasized that model performance is not determined by parameter count alone.

For example, LLaMA has far fewer parameters than GPT-3 (65B vs. 175B), but because it was trained on more tokens (1.4T vs. 300B), LLaMA is more powerful.
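
One way to read these figures is as training tokens per parameter. The short Python calculation below uses only the numbers quoted in this comparison; the function name `tokens_per_parameter` is an illustrative assumption.

```python
def tokens_per_parameter(train_tokens: float, params: float) -> float:
    """Ratio of training tokens to model parameters (hypothetical helper)."""
    return train_tokens / params


# Figures quoted in the comparison: parameters and training tokens.
models = {
    "LLaMA": {"params": 65e9, "train_tokens": 1.4e12},
    "GPT-3": {"params": 175e9, "train_tokens": 300e9},
}

for name, m in models.items():
    ratio = tokens_per_parameter(m["train_tokens"], m["params"])
    print(f"{name}: {ratio:.1f} training tokens per parameter")
# LLaMA: 21.5 training tokens per parameter
# GPT-3: 1.7 training tokens per parameter
```

By this measure LLaMA saw roughly an order of magnitude more training tokens per parameter than GPT-3.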

Besides directly affecting model performance, tokens are also the billing unit for AI models.

Take OpenAI's pricing as an example: it charges per 1K tokens, and prices differ by model and by token type.
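
Billing per 1K tokens can be sketched as a simple calculation. In the Python example below the prices are placeholders chosen for illustration, not OpenAI's actual rates, and the split into prompt and completion tokens is assumed as the two token types; check the pricing page for real numbers.

```python
def request_cost(
    prompt_tokens: int,
    completion_tokens: int,
    prompt_price_per_1k: float,
    completion_price_per_1k: float,
) -> float:
    """Cost of one request when each token type is billed per 1K tokens."""
    prompt_cost = prompt_tokens / 1000 * prompt_price_per_1k
    completion_cost = completion_tokens / 1000 * completion_price_per_1k
    return prompt_cost + completion_cost


# Placeholder prices (assumptions for this example, in dollars per 1K tokens).
PROMPT_PRICE = 0.01
COMPLETION_PRICE = 0.02

cost = request_cost(1500, 500, PROMPT_PRICE, COMPLETION_PRICE)
print(f"${cost:.3f}")  # $0.025
```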

In short, once you step into the field of large AI models, you will find that tokens are an unavoidable topic.

They have even spawned a "token literature" of their own...

It is worth mentioning, though, that how "token" should be translated into Chinese has not been fully settled yet.

The literal translation of "token" is always a bit weird.

GPT-4 suggests calling it "word element" or "tag". What do you think?

Reference links:

[1] https://www.reddit.com/r/ChatGPT/comments/13xxehx/chatgpt_is_unable_to_reverse_words/

[2] https://help.openai.com/en/articles/4936856-what-are-tokens-and-how-to-count-them

[3] https://openai.com/pricing

The above is the detailed content of Andrew Ng's ChatGPT class went viral: AI gave up writing words backwards, but understood the whole world. For more information, please follow other related articles on the PHP Chinese website!

ai合并图层的快捷键是什么Jan 07, 2021 am 10:59 AM

ai合并图层的快捷键是什么Jan 07, 2021 am 10:59 AMai合并图层的快捷键是“Ctrl+Shift+E”,它的作用是把目前所有处在显示状态的图层合并,在隐藏状态的图层则不作变动。也可以选中要合并的图层,在菜单栏中依次点击“窗口”-“路径查找器”,点击“合并”按钮。

ai橡皮擦擦不掉东西怎么办Jan 13, 2021 am 10:23 AM

ai橡皮擦擦不掉东西怎么办Jan 13, 2021 am 10:23 AMai橡皮擦擦不掉东西是因为AI是矢量图软件,用橡皮擦不能擦位图的,其解决办法就是用蒙板工具以及钢笔勾好路径再建立蒙板即可实现擦掉东西。

谷歌超强AI超算碾压英伟达A100!TPU v4性能提升10倍,细节首次公开Apr 07, 2023 pm 02:54 PM

谷歌超强AI超算碾压英伟达A100!TPU v4性能提升10倍,细节首次公开Apr 07, 2023 pm 02:54 PM虽然谷歌早在2020年,就在自家的数据中心上部署了当时最强的AI芯片——TPU v4。但直到今年的4月4日,谷歌才首次公布了这台AI超算的技术细节。论文地址:https://arxiv.org/abs/2304.01433相比于TPU v3,TPU v4的性能要高出2.1倍,而在整合4096个芯片之后,超算的性能更是提升了10倍。另外,谷歌还声称,自家芯片要比英伟达A100更快、更节能。与A100对打,速度快1.7倍论文中,谷歌表示,对于规模相当的系统,TPU v4可以提供比英伟达A100强1.

ai可以转成psd格式吗Feb 22, 2023 pm 05:56 PM

ai可以转成psd格式吗Feb 22, 2023 pm 05:56 PMai可以转成psd格式。转换方法:1、打开Adobe Illustrator软件,依次点击顶部菜单栏的“文件”-“打开”,选择所需的ai文件;2、点击右侧功能面板中的“图层”,点击三杠图标,在弹出的选项中选择“释放到图层(顺序)”;3、依次点击顶部菜单栏的“文件”-“导出”-“导出为”;4、在弹出的“导出”对话框中,将“保存类型”设置为“PSD格式”,点击“导出”即可;

ai顶部属性栏不见了怎么办Feb 22, 2023 pm 05:27 PM

ai顶部属性栏不见了怎么办Feb 22, 2023 pm 05:27 PMai顶部属性栏不见了的解决办法:1、开启Ai新建画布,进入绘图页面;2、在Ai顶部菜单栏中点击“窗口”;3、在系统弹出的窗口菜单页面中点击“控制”,然后开启“控制”窗口即可显示出属性栏。

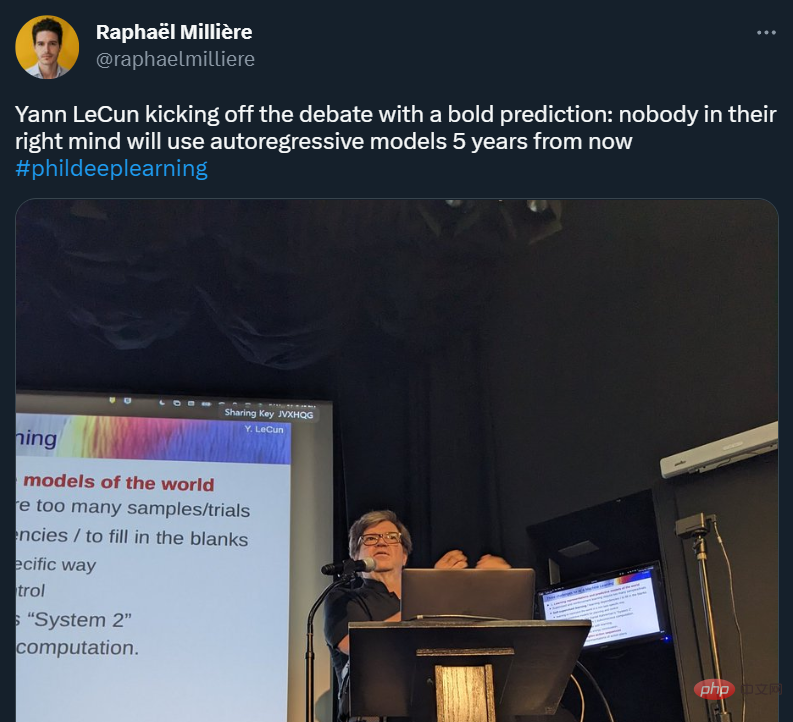

GPT-4的研究路径没有前途?Yann LeCun给自回归判了死刑Apr 04, 2023 am 11:55 AM

GPT-4的研究路径没有前途?Yann LeCun给自回归判了死刑Apr 04, 2023 am 11:55 AMYann LeCun 这个观点的确有些大胆。 「从现在起 5 年内,没有哪个头脑正常的人会使用自回归模型。」最近,图灵奖得主 Yann LeCun 给一场辩论做了个特别的开场。而他口中的自回归,正是当前爆红的 GPT 家族模型所依赖的学习范式。当然,被 Yann LeCun 指出问题的不只是自回归模型。在他看来,当前整个的机器学习领域都面临巨大挑战。这场辩论的主题为「Do large language models need sensory grounding for meaning and u

强化学习再登Nature封面,自动驾驶安全验证新范式大幅减少测试里程Mar 31, 2023 pm 10:38 PM

强化学习再登Nature封面,自动驾驶安全验证新范式大幅减少测试里程Mar 31, 2023 pm 10:38 PM引入密集强化学习,用 AI 验证 AI。 自动驾驶汽车 (AV) 技术的快速发展,使得我们正处于交通革命的风口浪尖,其规模是自一个世纪前汽车问世以来从未见过的。自动驾驶技术具有显着提高交通安全性、机动性和可持续性的潜力,因此引起了工业界、政府机构、专业组织和学术机构的共同关注。过去 20 年里,自动驾驶汽车的发展取得了长足的进步,尤其是随着深度学习的出现更是如此。到 2015 年,开始有公司宣布他们将在 2020 之前量产 AV。不过到目前为止,并且没有 level 4 级别的 AV 可以在市场

ai移动不了东西了怎么办Mar 07, 2023 am 10:03 AM

ai移动不了东西了怎么办Mar 07, 2023 am 10:03 AMai移动不了东西的解决办法:1、打开ai软件,打开空白文档;2、选择矩形工具,在文档中绘制矩形;3、点击选择工具,移动文档中的矩形;4、点击图层按钮,弹出图层面板对话框,解锁图层;5、点击选择工具,移动矩形即可。

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

SublimeText3 Linux new version

SublimeText3 Linux latest version

WebStorm Mac version

Useful JavaScript development tools

Dreamweaver CS6

Visual web development tools

SAP NetWeaver Server Adapter for Eclipse

Integrate Eclipse with SAP NetWeaver application server.

SublimeText3 Chinese version

Chinese version, very easy to use