Three OpenAI leaders personally wrote this article: How should we govern superintelligence?

AI has never before affected human life so extensively, nor brought people so many worries and troubles, as it does today.

Like every other major technological innovation, the development of AI has two sides, one facing good and the other facing evil. This is one of the main reasons regulators around the world have begun to intervene actively.

In any case, the development of AI technology appears to be an unstoppable general trend. How to make AI comply with human values and ethics, and how to develop and deploy it more safely, are questions that every AI practitioner now needs to consider seriously.

Today, three of OpenAI's co-founders (CEO Sam Altman, President Greg Brockman, and Chief Scientist Ilya Sutskever) co-authored an article discussing how to govern superintelligence. They argue that now is a good time to start thinking about the governance of superintelligence, meaning future artificial intelligence systems that are even more capable than AGI.

Based on what we have seen so far, it is conceivable that within the next ten years, artificial intelligence (AI) will exceed expert skill level in most fields and carry out as much productive activity as one of today's largest companies.

In terms of both potential benefits and drawbacks, superintelligence will be more powerful than the other technologies humanity has had to face in the past. We can have an incredibly prosperous future, but we must manage risk to get there. Given the possibility of existential risk, we cannot just respond passively. Nuclear power is one example of a technology with this property; another is synthetic biology.

Likewise, we must mitigate the risks of current AI technologies, but superintelligence will require special handling and coordination.

A Starting Point

Many ideas matter for our chances of successfully navigating this development; here we offer initial reflections on three of them.

First, we need some coordination among the leading development efforts to ensure that superintelligence is developed in a way that both keeps us safe and helps these systems integrate smoothly with society. There are many ways to achieve this: the world's major governments could come together to establish a project that many current efforts would become part of, or we could collectively agree (with the backing of a new organization, as suggested below) to limit the growth of cutting-edge AI capabilities to a certain rate each year.

Of course, individual companies should also be held to extremely high standards to develop responsibly.

Second, we may eventually need something similar to the International Atomic Energy Agency (IAEA) to regulate the development of superintelligence. Any effort that exceeds a certain threshold of capability (or of compute and other resources) would need to be overseen by this international body, which could inspect systems, require audits, test for compliance with safety standards, impose limits on the extent of deployment and on security levels, and so on. Tracking compute and energy usage would be of great help and gives us some hope that this idea is actually achievable. As a first step, companies could voluntarily agree to begin implementing elements that such a body might one day require; as a second step, individual countries could implement it. Importantly, such an agency should focus on reducing existential risk rather than on issues that should be left to individual countries, such as defining what AI should be allowed to say.

Third, we need the technical capability to make superintelligence safe. This is an open research question into which we and others are investing considerable effort.

Out of scope

We believe it is important to allow companies and open source projects to develop models below a significant capability threshold without the kind of regulation we describe here (including cumbersome mechanisms such as licensing or auditing).

Today's systems will create tremendous value for the world, and while they do have risks, those risk levels appear comparable to those of other Internet technologies, and society's likely responses seem appropriate.

By contrast, the systems we are focusing on will have power beyond that of any technology to date, and we should be careful not to dilute attention to them by applying similar standards to technology far below this threshold.

Public Participation and Potential

But the governance of the most powerful systems, and the decisions surrounding their deployment, must be subject to strong public oversight. We believe that people around the world should democratically determine the boundaries and defaults of AI systems. We don't yet know how to design such a mechanism, but we plan to run some experiments. We continue to believe that, within these broad boundaries, individual users should have significant control over how the AI they use behaves.

Given the risks and difficulties, it's worth thinking about why we're building this technology.

At OpenAI, we have two basic reasons. First, we believe it will lead to a better world than we can imagine today (we are already seeing early examples of this in areas such as education, creative work, and personal productivity). The world faces a lot of problems, and we need more help solving them; this technology can improve our society, and we're sure to be surprised by what everyone creates with these new tools. The economic growth and improvement in quality of life will be staggering.

Second, we believe that stopping the building of superintelligence would be unintuitively risky and fraught with difficulty. Because the advantages are so large, the cost of building it falls every year, the number of builders is increasing rapidly, and it is essentially part of the technological path we are on, stopping it would require something like a global surveillance regime, and even such a regime is no guarantee of success. Therefore, we must get it right.

The above is the detailed content of Three OpenAI leaders personally wrote this article: How should we govern superintelligence?. For more information, please follow other related articles on the PHP Chinese website!

ai合并图层的快捷键是什么Jan 07, 2021 am 10:59 AM

ai合并图层的快捷键是什么Jan 07, 2021 am 10:59 AMai合并图层的快捷键是“Ctrl+Shift+E”,它的作用是把目前所有处在显示状态的图层合并,在隐藏状态的图层则不作变动。也可以选中要合并的图层,在菜单栏中依次点击“窗口”-“路径查找器”,点击“合并”按钮。

ai橡皮擦擦不掉东西怎么办Jan 13, 2021 am 10:23 AM

ai橡皮擦擦不掉东西怎么办Jan 13, 2021 am 10:23 AMai橡皮擦擦不掉东西是因为AI是矢量图软件,用橡皮擦不能擦位图的,其解决办法就是用蒙板工具以及钢笔勾好路径再建立蒙板即可实现擦掉东西。

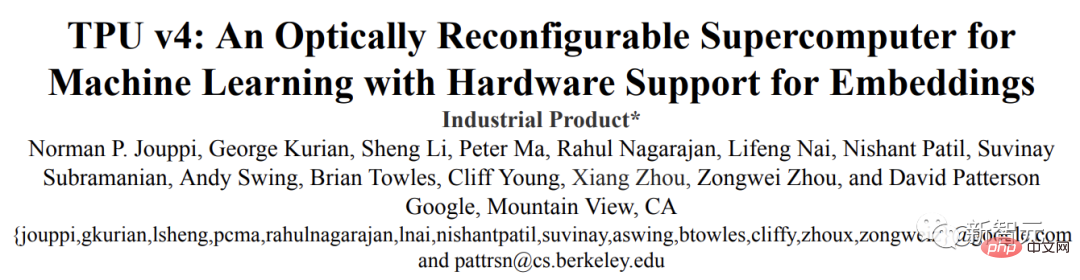

谷歌超强AI超算碾压英伟达A100!TPU v4性能提升10倍,细节首次公开Apr 07, 2023 pm 02:54 PM

谷歌超强AI超算碾压英伟达A100!TPU v4性能提升10倍,细节首次公开Apr 07, 2023 pm 02:54 PM虽然谷歌早在2020年,就在自家的数据中心上部署了当时最强的AI芯片——TPU v4。但直到今年的4月4日,谷歌才首次公布了这台AI超算的技术细节。论文地址:https://arxiv.org/abs/2304.01433相比于TPU v3,TPU v4的性能要高出2.1倍,而在整合4096个芯片之后,超算的性能更是提升了10倍。另外,谷歌还声称,自家芯片要比英伟达A100更快、更节能。与A100对打,速度快1.7倍论文中,谷歌表示,对于规模相当的系统,TPU v4可以提供比英伟达A100强1.

ai可以转成psd格式吗Feb 22, 2023 pm 05:56 PM

ai可以转成psd格式吗Feb 22, 2023 pm 05:56 PMai可以转成psd格式。转换方法:1、打开Adobe Illustrator软件,依次点击顶部菜单栏的“文件”-“打开”,选择所需的ai文件;2、点击右侧功能面板中的“图层”,点击三杠图标,在弹出的选项中选择“释放到图层(顺序)”;3、依次点击顶部菜单栏的“文件”-“导出”-“导出为”;4、在弹出的“导出”对话框中,将“保存类型”设置为“PSD格式”,点击“导出”即可;

ai顶部属性栏不见了怎么办Feb 22, 2023 pm 05:27 PM

ai顶部属性栏不见了怎么办Feb 22, 2023 pm 05:27 PMai顶部属性栏不见了的解决办法:1、开启Ai新建画布,进入绘图页面;2、在Ai顶部菜单栏中点击“窗口”;3、在系统弹出的窗口菜单页面中点击“控制”,然后开启“控制”窗口即可显示出属性栏。

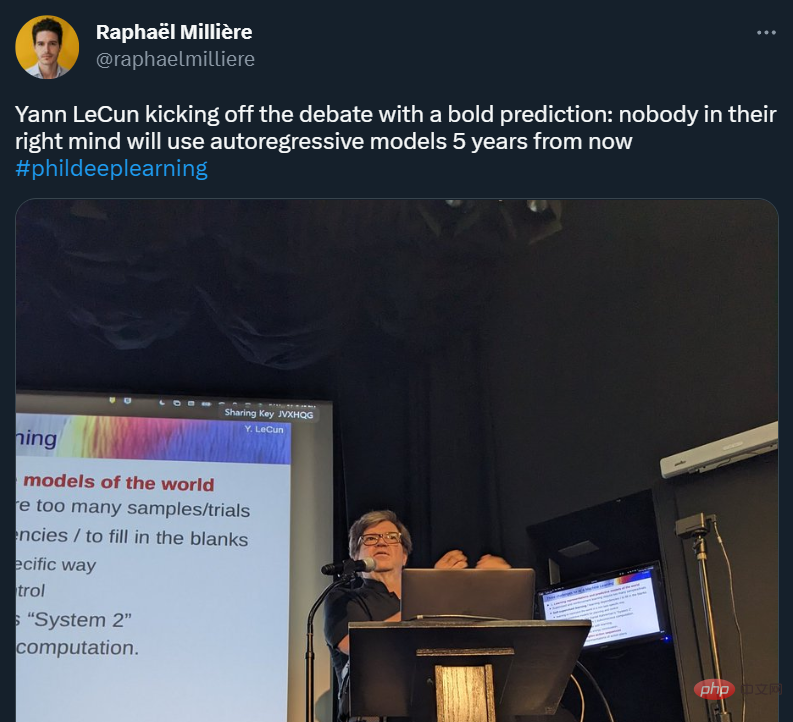

GPT-4的研究路径没有前途?Yann LeCun给自回归判了死刑Apr 04, 2023 am 11:55 AM

GPT-4的研究路径没有前途?Yann LeCun给自回归判了死刑Apr 04, 2023 am 11:55 AMYann LeCun 这个观点的确有些大胆。 「从现在起 5 年内,没有哪个头脑正常的人会使用自回归模型。」最近,图灵奖得主 Yann LeCun 给一场辩论做了个特别的开场。而他口中的自回归,正是当前爆红的 GPT 家族模型所依赖的学习范式。当然,被 Yann LeCun 指出问题的不只是自回归模型。在他看来,当前整个的机器学习领域都面临巨大挑战。这场辩论的主题为「Do large language models need sensory grounding for meaning and u

强化学习再登Nature封面,自动驾驶安全验证新范式大幅减少测试里程Mar 31, 2023 pm 10:38 PM

强化学习再登Nature封面,自动驾驶安全验证新范式大幅减少测试里程Mar 31, 2023 pm 10:38 PM引入密集强化学习,用 AI 验证 AI。 自动驾驶汽车 (AV) 技术的快速发展,使得我们正处于交通革命的风口浪尖,其规模是自一个世纪前汽车问世以来从未见过的。自动驾驶技术具有显着提高交通安全性、机动性和可持续性的潜力,因此引起了工业界、政府机构、专业组织和学术机构的共同关注。过去 20 年里,自动驾驶汽车的发展取得了长足的进步,尤其是随着深度学习的出现更是如此。到 2015 年,开始有公司宣布他们将在 2020 之前量产 AV。不过到目前为止,并且没有 level 4 级别的 AV 可以在市场

ai移动不了东西了怎么办Mar 07, 2023 am 10:03 AM

ai移动不了东西了怎么办Mar 07, 2023 am 10:03 AMai移动不了东西的解决办法:1、打开ai软件,打开空白文档;2、选择矩形工具,在文档中绘制矩形;3、点击选择工具,移动文档中的矩形;4、点击图层按钮,弹出图层面板对话框,解锁图层;5、点击选择工具,移动矩形即可。

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

SecLists

SecLists is the ultimate security tester's companion. It is a collection of various types of lists that are frequently used during security assessments, all in one place. SecLists helps make security testing more efficient and productive by conveniently providing all the lists a security tester might need. List types include usernames, passwords, URLs, fuzzing payloads, sensitive data patterns, web shells, and more. The tester can simply pull this repository onto a new test machine and he will have access to every type of list he needs.

MinGW - Minimalist GNU for Windows

This project is in the process of being migrated to osdn.net/projects/mingw, you can continue to follow us there. MinGW: A native Windows port of the GNU Compiler Collection (GCC), freely distributable import libraries and header files for building native Windows applications; includes extensions to the MSVC runtime to support C99 functionality. All MinGW software can run on 64-bit Windows platforms.

SublimeText3 Mac version

God-level code editing software (SublimeText3)

SAP NetWeaver Server Adapter for Eclipse

Integrate Eclipse with SAP NetWeaver application server.

Zend Studio 13.0.1

Powerful PHP integrated development environment