
Generate a virtual 3D wife in 30 seconds on a single GPU! Text-to-3D produces high-precision digital humans with visible pore detail and connects seamlessly with Maya, Unity, and other production tools

May 23, 2023

ChatGPT has given the AI industry a shot of adrenaline: things once unthinkable are now routine.

Text-to-3D keeps advancing and is regarded as the next frontier of AIGC after Diffusion (images) and GPT (text). It is receiving unprecedented attention.

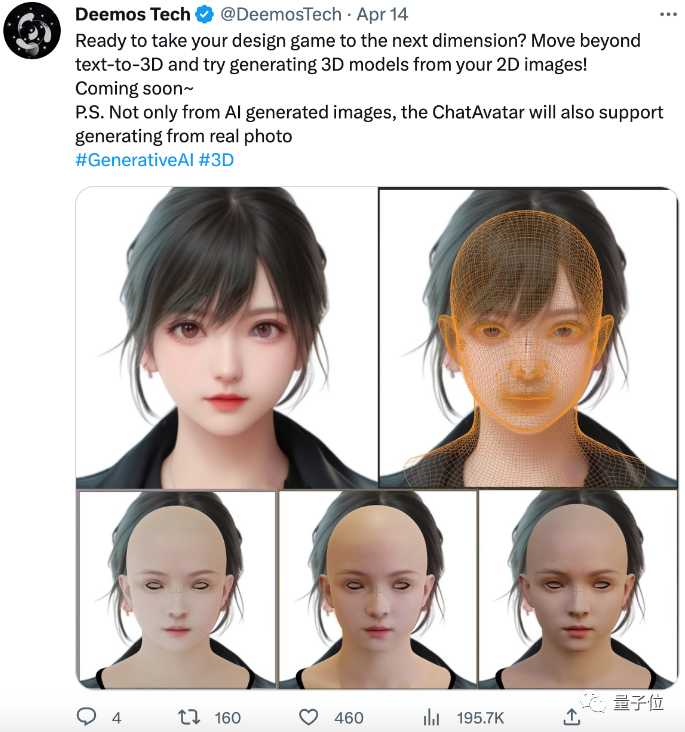

Case in point: a product called ChatAvatar quietly entered public beta. It quickly drew more than 700,000 views and follows and made Hugging Face's weekly trending list (Spaces of the week).

△ChatAvatar will also support Image-to-3D, which generates stylized 3D characters from AI-generated single-view or multi-view concept art. The feature has attracted wide attention.

Models generated by the current beta can be downloaded locally together with their PBR materials. The results are good and, more importantly, the tool is free to use. One user exclaimed:

It’s so cool, I feel like I can easily generate my own digital twin.

This has drawn many users to try it and share their ideas. Some combined it with ControlNet and found the results surprisingly detailed and realistic.

This near-zero-barrier Text-to-3D tool is called ChatAvatar. It was built by the team at Yingmo Technology, a Chinese AI startup.

Reportedly, it is the world's first production-ready Text-to-3D product. From simple text, such as a celebrity's name or a description of a character's appearance, it can generate film-grade, hyper-realistic 3D digital human assets.

It is also fast: producing a face that looks real, even your own, takes about 30 seconds on average.

Generation will later be extended to other kinds of 3D assets.

The models come with regular topology, 4K PBR materials, and rigging. They plug directly into the production pipelines of engines and tools such as Unity, Unreal Engine, and Maya.

So what kind of 3D generation tool is ChatAvatar, and what technology powers it?

Apply a "Painted Skin" in 30 seconds

Trying ChatAvatar first-hand, we found that it genuinely requires no expertise.

All you need to do is describe what you want, in plain language, to the chatbot on the official website. It then generates a 3D face to order and covers the model with a realistic "human skin".

Throughout the conversation, the chatbot asks guiding questions to understand, in as much detail as possible, what the user wants from the model.

In our test, we described the 3D character we wanted to the chatbot.

We then clicked the Generate button on the left. In under 10 seconds on average, nine different prototype 3D faces based on the description appeared on screen.

After we picked one at random, the model and materials were further refined based on that choice. Finally, the skinned model was rendered and shown under different lighting conditions, with all rendering done in real time in the browser.

Users who are comfortable with prompt engineering can also skip the chat and type a prompt directly into the box on the left.

Finally, a one-click download yields a 3D digital head asset that can be imported directly into a production engine and animated.

The beta does not yet support hairstyles. Overall, though, the generated 3D digital human assets match their descriptions closely.

The official website also showcases many assets generated by ChatAvatar users. They span ethnicities, skin tones, and ages, and expressions of joy, anger, and sorrow. Their looks range from beautiful to plain and from thin to heavy.

Let’s summarize the highlights of the ChatAvatar product for generating 3D digital human assets:

First, it is easy to use. Second, it covers a wide range: facial features can be varied, and it can also generate face-fitting masks, tattoos, and the like.

According to the official promotional video, ChatAvatar can even generate non-human characters, such as those in the film Avatar.

Most importantly, the models ChatAvatar generates are compatible with the CG pipeline. This means its 3D assets can be integrated directly into game, film, and television production workflows.

Even before being formally integrated into industrial workflows, ChatAvatar drew thousands of artists and professional art staff into its first round of public beta. Related topics on Twitter have received nearly one million views.

Even an ordinary tweet about it has drawn more than 50k views.

It has won plenty of organic fans for good reason. Look at this 3D face of Einstein: who would deny the likeness?

Combined with ControlNet, the results rival a photo shot with a DSLR.

After trying it, many users have begun imagining large-scale use of this Text-to-3D tool in industries such as games, film, and television.

Reportedly, user feedback will be a key input to the ChatAvatar team's rapid iteration. It forms a data flywheel that delivers more complete, demand-driven features in a timely manner.

In fact, for designers and companies in the 3D industry, the problem with most earlier AI text-to-3D applications was not that they produced poor results. The problem was that they were hard to fit into industrial production workflows.

So what is the technology behind ChatAvatar's big splash?

What is the difficulty in generating 3D assets that meet industry requirements?

People say AI will replace humans, but even within Text-to-3D alone, that is far from easy.

The biggest difficulty is making AI output meet the industry's standards for 3D assets. What does "industry standard" mean here? From the perspective of professional 3D art, it covers at least three aspects: quality, controllability, and generation speed.

First, quality. This matters most in film, television, and games, where visuals are paramount. To produce pipeline-ready 3D assets, an AI product must first satisfy unwritten industry rules such as regular topology and accurate texture maps.

Take topology regularity. This essentially refers to how sensibly a 3D asset's edge flow (its "wiring") is laid out.

Topology regularity directly affects how well an asset deforms in animation, how efficiently it can be edited, and how quickly it can be textured.

According to 3D artists in the industry, manual retopology often takes longer than building the model itself, sometimes several times longer. So no matter how impressive an AI-generated asset looks, it will not fundamentally reduce costs if its topology is not regular enough. And that is before considering texture accuracy.
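To make "regular topology" a little more concrete, here is a minimal Python sketch of two rough proxies an artist might eyeball. The first is the share of faces that are quads. The second is the share of interior vertices where exactly four edges meet.

The following are our own illustrative assumptions, not how ChatAvatar or any particular tool measures topology:
- the face-list mesh format;
- the function name `topology_stats`;
- the idea of treating these two numbers as a regularity score.

```python
from collections import defaultdict

def topology_stats(faces):
    """Return (quad_ratio, valence4_ratio) for a polygon mesh.

    `faces` is a list of faces, each a list of vertex indices in winding order.
    Hypothetical proxy for "regular topology"; real pipelines use richer checks.
    """
    if not faces:
        return 0.0, 0.0
    quad_ratio = sum(1 for f in faces if len(f) == 4) / len(faces)

    # Count how many faces share each undirected edge and record vertex neighbours.
    edge_faces = defaultdict(int)
    neighbours = defaultdict(set)
    for f in faces:
        for i in range(len(f)):
            a, b = f[i], f[(i + 1) % len(f)]
            edge_faces[frozenset((a, b))] += 1
            neighbours[a].add(b)
            neighbours[b].add(a)

    # Vertices on an open boundary (an edge used by only one face) are excluded.
    boundary = set()
    for edge, count in edge_faces.items():
        if count == 1:
            boundary.update(edge)
    interior = [v for v in neighbours if v not in boundary]
    if not interior:
        return quad_ratio, 0.0
    valence4 = sum(1 for v in interior if len(neighbours[v]) == 4)
    return quad_ratio, valence4 / len(interior)

# A clean 2x2 patch of quads over a 3x3 vertex grid.
print(topology_stats([[0, 1, 4, 3], [1, 2, 5, 4], [3, 4, 7, 6], [4, 5, 8, 7]]))  # (1.0, 1.0)
```

A patch made only of triangles scores 0.0 on the quad ratio. Production checks typically also consider edge loops around the eyes and mouth, which simple counts like these cannot capture.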

△Yingmo Technology's ChatAvatar significantly improves on previous work in generation quality, speed, and compatibility with industry standards

Consider PBR textures, which the game, film, and television industries now commonly require. A PBR material is a set of maps, such as reflectance maps and normal maps, that work much like the layers of a 2D PSD file. Having them is one of the essential prerequisites for pipeline production of 3D assets.

Yet current AI-generated 3D assets usually come as a single "whole". They rarely include separately generated PBR textures that meet production requirements.
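The sketch below illustrates the "layers" analogy and why a single undivided texture falls short. It models a PBR material as a named set of texture maps and lists what would stop it from entering a pipeline.

The following are assumptions for illustration; each studio defines its own requirements:
- the channel list (albedo, normal, roughness, specular);
- the 4096-pixel minimum, which echoes the 4K materials mentioned earlier;
- all names in the code.

```python
from dataclasses import dataclass, field

@dataclass
class TextureMap:
    width: int
    height: int

@dataclass
class PBRMaterial:
    # Maps keyed by channel name, e.g. "albedo" -> TextureMap(4096, 4096).
    maps: dict = field(default_factory=dict)

# Hypothetical pipeline requirement: these channels, each at least 4K.
REQUIRED_MAPS = ("albedo", "normal", "roughness", "specular")
MIN_RESOLUTION = 4096

def missing_or_undersized(material):
    """List the problems that would block this material from entering the pipeline."""
    problems = []
    for name in REQUIRED_MAPS:
        tex = material.maps.get(name)
        if tex is None:
            problems.append(f"missing {name} map")
        elif min(tex.width, tex.height) < MIN_RESOLUTION:
            problems.append(f"{name} map is {tex.width}x{tex.height}, below {MIN_RESOLUTION}")
    return problems

# An asset that ships only one color texture, with no separate PBR channels.
print(missing_or_undersized(PBRMaterial({"albedo": TextureMap(2048, 2048)})))
```

Such an asset fails on every channel, which is the "whole" problem described above. A material with all four maps at 4096x4096 returns an empty list.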

Second, controllability. Making generated content more controllable is another major requirement the CG industry places on generative AI.

Take the more familiar 2D field. Before ControlNet appeared, 2D AIGC had been groping half in the dark.

That is, AI could generate images of a specified category of object but not in a specified pose. Results depended entirely on prompt engineering and luck.

ControlNet dramatically improved the controllability of 2D AI image generation. In 3D, however, getting the desired results still depends heavily on expert prompt engineering.

Finally, generation speed. Speed is AI's main advantage over manual 3D art. But if AI cannot match manual work in both speed and quality, it still brings no real benefit to the industry.

Take NeRF, currently a very popular AI technique. Its industrial adoption faces a trade-off between speed and quality.

High-quality NeRF-based 3D generation often takes a long time. Assets generated with speed as the priority, however, are not good enough for industrial use at all.

Even if this were solved, a major challenge would remain: making NeRF output compatible with the mainstream engines of the traditional CG industry without losing accuracy.
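One reason quality costs time in NeRF is visible in its rendering step. Every pixel is computed by sampling points along a camera ray and querying the network at each sample for a density sigma_i and a color c_i. The samples are then composited as

C = sum_i T_i * (1 - exp(-sigma_i * delta_i)) * c_i

where:
- delta_i is the spacing between samples;
- T_i = exp(-sum_{j<i} sigma_j * delta_j) is the light that survives to sample i.

The pure-Python sketch below shows that compositing for one ray, plus a helper that counts network queries per frame. As an assumption, densities and colors are passed in directly as a stand-in for a trained network.

```python
import math

def composite_ray(sigmas, colors, deltas):
    """Alpha-composite samples along one ray with NeRF's volume-rendering quadrature.

    sigmas: density at each sample; colors: (r, g, b) at each sample;
    deltas: spacing between consecutive samples.
    In a real NeRF, each (sigma, color) pair costs one network query.
    """
    rgb = [0.0, 0.0, 0.0]
    transmittance = 1.0  # T_i: fraction of light reaching sample i unoccluded
    for sigma, color, delta in zip(sigmas, colors, deltas):
        alpha = 1.0 - math.exp(-sigma * delta)  # opacity of this ray segment
        weight = transmittance * alpha
        for k in range(3):
            rgb[k] += weight * color[k]
        transmittance *= 1.0 - alpha  # equals exp(-sum of sigma_j * delta_j so far)
    return tuple(rgb)

def network_queries(width, height, samples_per_ray):
    """Network evaluations needed to render one frame."""
    return width * height * samples_per_ray

print(network_queries(1920, 1080, 192))  # 398131200
```

At an illustrative 192 samples per ray, one 1920x1080 frame already needs about 398 million network evaluations. Adding samples or enlarging the network for quality therefore directly slows generation. Turning the result into an engine-ready mesh is a separate step again.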

The industry requirements above reveal two major bottlenecks holding back most AI text-to-3D applications:

First, prompt engineering must be done by hand, which is unfriendly to non-specialists and to designers unfamiliar with AI. Second, the generated 3D assets often fail to meet industry standards, so they cannot be put into use no matter how good they look.

ChatAvatar offers a concrete, effective solution to each.

On the one hand, ChatAvatar offers a second path besides hand-written prompts, a shortcut better suited to ordinary users. They describe their requirements directly in conversation, in "client mode", the way a client briefs a designer.

According to the team's official Twitter account, ChatAvatar built this feature by using GPT to convert conversational descriptions into facial features.

Designers only need to keep chatting with GPT and describe the "feeling" they want.

GPT then handles the prompt engineering automatically and passes the result to the generation model.
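The article says only that ChatAvatar uses GPT to turn the conversation into facial features. The sketch below is a deliberately simplified stand-in. It accumulates portrait attributes from each chat message using a keyword table, then serializes them into a comma-separated prompt.

Everything here is hypothetical: the keyword table, the attribute slots, the base prompt text, and the function names. Keyword matching is swapped in for GPT. The point is only the shape of the flow: dialogue in, structured attributes in the middle, prompt out.

```python
import re

# Hypothetical keyword table: word a user might type -> (attribute slot, prompt phrase).
KEYWORDS = {
    "old": ("age", "elderly"),
    "young": ("age", "young adult"),
    "smiling": ("expression", "smiling"),
    "freckles": ("skin", "freckled skin"),
    "beard": ("facial hair", "full beard"),
}

def update_attributes(attributes, user_message):
    """Fold one chat message into the attribute dict; a later answer overwrites an earlier one."""
    for word in re.findall(r"[a-z]+", user_message.lower()):
        if word in KEYWORDS:
            slot, phrase = KEYWORDS[word]
            attributes[slot] = phrase
    return attributes

def to_prompt(attributes, base="photorealistic 3D head, neutral lighting"):
    """Serialize the collected attributes into one comma-separated prompt string."""
    return ", ".join([base] + [attributes[slot] for slot in sorted(attributes)])

attrs = {}
update_attributes(attrs, "An old man with a beard")
update_attributes(attrs, "Actually, make him young and smiling.")
print(to_prompt(attrs))
# photorealistic 3D head, neutral lighting, young adult, smiling, full beard
```

Because later answers overwrite earlier ones, a user can revise a detail mid-conversation without starting over. That is the kind of back-and-forth the chatbot is described as supporting.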

In other words, if ControlNet was the game changer for 2D, ChatAvatar's ability to turn text directly into 3D is nothing short of a game changer for the 3D industry.

On the other hand, and more importantly, ChatAvatar is fully compatible with the CG pipeline: its assets meet industry requirements for topology, controllability, and speed.

This means downloaded assets can be imported directly into post-production software for further editing, giving artists more control. At the same time, the generated models and high-precision material maps render with extremely realistic results downstream.

To achieve this, the team developed DreamFace, a progressive 3D generation framework, for ChatAvatar.

The key is the training data: the world's first large-scale, high-precision, multi-expression facial dataset.

Building on this dataset, DreamFace efficiently generates production-grade 3D assets, meaning assets with regular topology, materials, and rigging.

DreamFace has three main modules: geometry generation, physically based material diffusion, and animation capability generation. By incorporating an external 3D database, DreamFace can directly output assets that conform to CG pipeline requirements.
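The article does not describe how DreamFace's animation module works. One standard way to make a generated head animatable is a linear blendshape rig. Each expression is stored as per-vertex offsets from the neutral mesh, and a pose is the neutral shape plus a weighted sum of those offsets.

The sketch below shows that technique in isolation. It is an illustrative assumption, not DreamFace's documented method, and the shape names are hypothetical.

```python
def apply_blendshapes(neutral, deltas, weights):
    """Pose a mesh with a linear blendshape model: neutral + sum(weight * delta).

    neutral: list of (x, y, z) vertex positions for the neutral face.
    deltas:  dict mapping shape name -> list of (dx, dy, dz), one per vertex.
    weights: dict mapping shape name -> activation, typically in [0, 1].
    """
    posed = [list(v) for v in neutral]
    for name, w in weights.items():
        offsets = deltas[name]
        if len(offsets) != len(neutral):
            raise ValueError(f"blendshape {name!r} has {len(offsets)} offsets, expected {len(neutral)}")
        for i, (dx, dy, dz) in enumerate(offsets):
            posed[i][0] += w * dx
            posed[i][1] += w * dy
            posed[i][2] += w * dz
    return [tuple(v) for v in posed]

# Two-vertex toy "face" with hypothetical jaw_open and smile shapes.
neutral = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
deltas = {
    "jaw_open": [(0.0, -1.0, 0.0), (0.0, 0.0, 0.0)],
    "smile": [(0.0, 0.0, 0.0), (0.5, 0.5, 0.0)],
}
print(apply_blendshapes(neutral, deltas, {"jaw_open": 0.5, "smile": 1.0}))
# [(0.0, -0.5, 0.0), (1.5, 0.5, 0.0)]
```

Because the model is linear, an engine can drive expressions in real time just by animating the weights. This is one reason rigged assets slot easily into tools like Maya or Unity.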

After diffusion models delivered breakthroughs in text-to-image generation, people began expecting Text-to-3D to perform just as impressively. Once generative AI's 3D creation technology matures, content creation in areas such as VR and video will take off.

The AI startup behind it

Yingmo Technology was incubated at the MARS Lab of ShanghaiTech University in 2020. It has since raised two rounds of funding, from MiraclePlus (Qiji Chuangtan) and Sequoia Seed.

The company focuses on research and productization in computer graphics and generative AI. In 2021, before AIGC made huge waves, it had already launched Wand, China's first consumer AIGC painting app, which at one point topped its App Store category.

This forward-looking team, already well known in the industry, has an average age of just 25.

The team chose digital humans as its first commercialization scenario, and ChatAvatar is its latest AIGC-driven step in that direction.

As a newly launched product, ChatAvatar has exceeded the Yingmo team's own expectations for compatibility, completeness, and accuracy. In Wu Di's words, however, getting here was "quite a struggle."

The main reason was simply a shortage of people. Yingmo Technology has now made progress in multi-category 3D generation, and its next step is to launch a large 3D generation model.

The above is the detailed content of Get a virtual 3D wife in 30 seconds with a single card! Text to 3D generates a high-precision digital human with clear pore details, seamlessly connecting with Maya, Unity and other production tools. For more information, please follow other related articles on the PHP Chinese website!

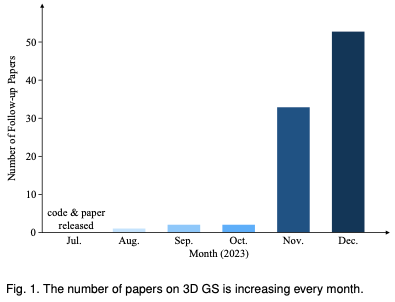

为何在自动驾驶方面Gaussian Splatting如此受欢迎,开始放弃NeRF?Jan 17, 2024 pm 02:57 PM

为何在自动驾驶方面Gaussian Splatting如此受欢迎,开始放弃NeRF?Jan 17, 2024 pm 02:57 PM写在前面&笔者的个人理解三维Gaussiansplatting(3DGS)是近年来在显式辐射场和计算机图形学领域出现的一种变革性技术。这种创新方法的特点是使用了数百万个3D高斯,这与神经辐射场(NeRF)方法有很大的不同,后者主要使用隐式的基于坐标的模型将空间坐标映射到像素值。3DGS凭借其明确的场景表示和可微分的渲染算法,不仅保证了实时渲染能力,而且引入了前所未有的控制和场景编辑水平。这将3DGS定位为下一代3D重建和表示的潜在游戏规则改变者。为此我们首次系统地概述了3DGS领域的最新发展和关

了解 Microsoft Teams 中的 3D Fluent 表情符号Apr 24, 2023 pm 10:28 PM

了解 Microsoft Teams 中的 3D Fluent 表情符号Apr 24, 2023 pm 10:28 PM您一定记得,尤其是如果您是Teams用户,Microsoft在其以工作为重点的视频会议应用程序中添加了一批新的3DFluent表情符号。在微软去年宣布为Teams和Windows提供3D表情符号之后,该过程实际上已经为该平台更新了1800多个现有表情符号。这个宏伟的想法和为Teams推出的3DFluent表情符号更新首先是通过官方博客文章进行宣传的。最新的Teams更新为应用程序带来了FluentEmojis微软表示,更新后的1800表情符号将为我们每天

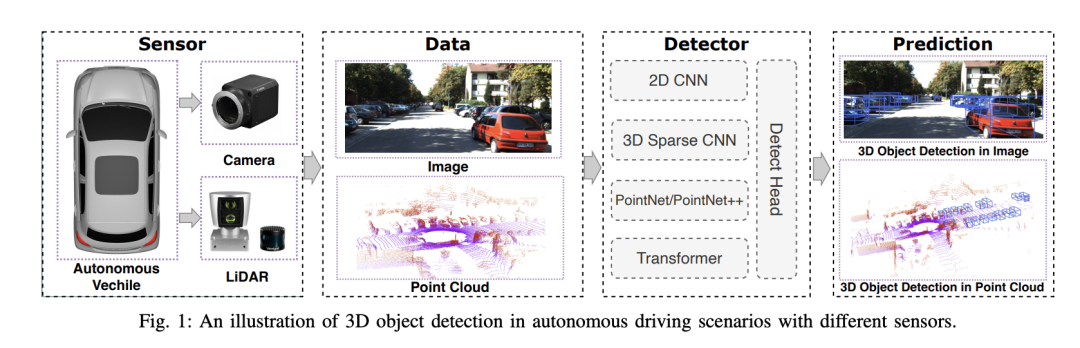

选择相机还是激光雷达?实现鲁棒的三维目标检测的最新综述Jan 26, 2024 am 11:18 AM

选择相机还是激光雷达?实现鲁棒的三维目标检测的最新综述Jan 26, 2024 am 11:18 AM0.写在前面&&个人理解自动驾驶系统依赖于先进的感知、决策和控制技术,通过使用各种传感器(如相机、激光雷达、雷达等)来感知周围环境,并利用算法和模型进行实时分析和决策。这使得车辆能够识别道路标志、检测和跟踪其他车辆、预测行人行为等,从而安全地操作和适应复杂的交通环境.这项技术目前引起了广泛的关注,并认为是未来交通领域的重要发展领域之一。但是,让自动驾驶变得困难的是弄清楚如何让汽车了解周围发生的事情。这需要自动驾驶系统中的三维物体检测算法可以准确地感知和描述周围环境中的物体,包括它们的位置、

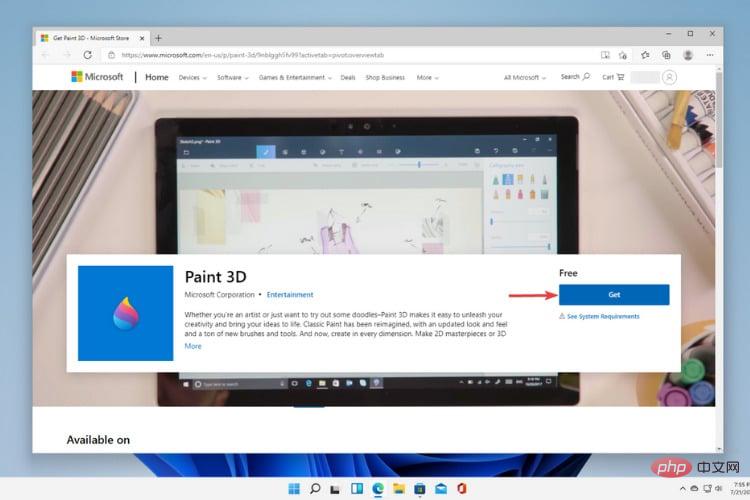

Windows 11中的Paint 3D:下载、安装和使用指南Apr 26, 2023 am 11:28 AM

Windows 11中的Paint 3D:下载、安装和使用指南Apr 26, 2023 am 11:28 AM当八卦开始传播新的Windows11正在开发中时,每个微软用户都对新操作系统的外观以及它将带来什么感到好奇。经过猜测,Windows11就在这里。操作系统带有新的设计和功能更改。除了一些添加之外,它还带有功能弃用和删除。Windows11中不存在的功能之一是Paint3D。虽然它仍然提供经典的Paint,它对抽屉,涂鸦者和涂鸦者有好处,但它放弃了Paint3D,它提供了额外的功能,非常适合3D创作者。如果您正在寻找一些额外的功能,我们建议AutodeskMaya作为最好的3D设计软件。如

单卡30秒跑出虚拟3D老婆!Text to 3D生成看清毛孔细节的高精度数字人,无缝衔接Maya、Unity等制作工具May 23, 2023 pm 02:34 PM

单卡30秒跑出虚拟3D老婆!Text to 3D生成看清毛孔细节的高精度数字人,无缝衔接Maya、Unity等制作工具May 23, 2023 pm 02:34 PMChatGPT给AI行业注入一剂鸡血,一切曾经的不敢想,都成为如今的基操。正持续进击的Text-to-3D,就被视为继Diffusion(图像)和GPT(文字)后,AIGC领域的下一个前沿热点,得到了前所未有的关注度。这不,一款名为ChatAvatar的产品低调公测,火速收揽超70万浏览与关注,并登上抱抱脸周热门(Spacesoftheweek)。△ChatAvatar也将支持从AI生成的单视角/多视角原画生成3D风格化角色的Imageto3D技术,受到了广泛关注现行beta版本生成的3D模型,

自动驾驶3D视觉感知算法深度解读Jun 02, 2023 pm 03:42 PM

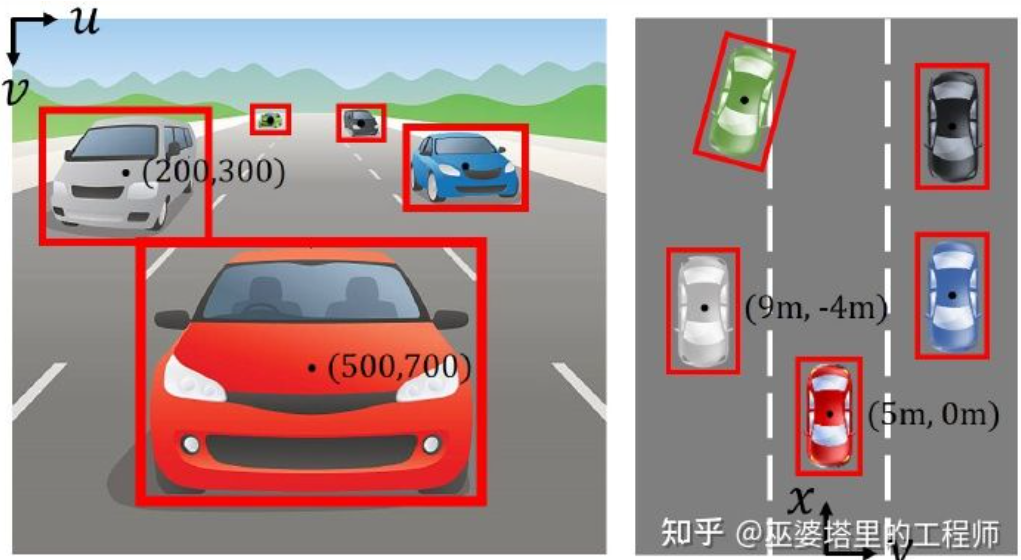

自动驾驶3D视觉感知算法深度解读Jun 02, 2023 pm 03:42 PM对于自动驾驶应用来说,最终还是需要对3D场景进行感知。道理很简单,车辆不能靠着一张图像上得到感知结果来行驶,就算是人类司机也不能对着一张图像来开车。因为物体的距离和场景的和深度信息在2D感知结果上是体现不出来的,而这些信息才是自动驾驶系统对周围环境作出正确判断的关键。一般来说,自动驾驶车辆的视觉传感器(比如摄像头)安装在车身上方或者车内后视镜上。无论哪个位置,摄像头所得到的都是真实世界在透视视图(PerspectiveView)下的投影(世界坐标系到图像坐标系)。这种视图与人类的视觉系统很类似,

《原神》:知名原神3d同人作者被捕Feb 15, 2024 am 09:51 AM

《原神》:知名原神3d同人作者被捕Feb 15, 2024 am 09:51 AM一些原神“奇怪”的关键词,在这两天很有关注度,明明搜索指数没啥变化,却不断有热议话题蹦窜。例如了龙王、钟离等“转变”立绘激增,虽在网络上疯传了一阵子,但是经过追溯发现这些是合理、常规的二创同人。如果单是这些,倒也翻不起多大的热度。按照一部分网友的说法,除了原神自身就有热度外,发现了一件格外醒目的事情:原神3d同人作者shirakami已经被捕。这引发了不小的热议。为什么被捕?关键词,原神3D动画。还是越过了线(就是你想的那种),再多就不能明说了。经过多方求证,以及新闻报道,确实有此事。自从去年发

跨模态占据性知识的学习:使用渲染辅助蒸馏技术的RadOccJan 25, 2024 am 11:36 AM

跨模态占据性知识的学习:使用渲染辅助蒸馏技术的RadOccJan 25, 2024 am 11:36 AM原标题:Radocc:LearningCross-ModalityOccupancyKnowledgethroughRenderingAssistedDistillation论文链接:https://arxiv.org/pdf/2312.11829.pdf作者单位:FNii,CUHK-ShenzhenSSE,CUHK-Shenzhen华为诺亚方舟实验室会议:AAAI2024论文思路:3D占用预测是一项新兴任务,旨在使用多视图图像估计3D场景的占用状态和语义。然而,由于缺乏几何先验,基于图像的场景

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Atom editor mac version download

The most popular open source editor

VSCode Windows 64-bit Download

A free and powerful IDE editor launched by Microsoft

MinGW - Minimalist GNU for Windows

This project is in the process of being migrated to osdn.net/projects/mingw, you can continue to follow us there. MinGW: A native Windows port of the GNU Compiler Collection (GCC), freely distributable import libraries and header files for building native Windows applications; includes extensions to the MSVC runtime to support C99 functionality. All MinGW software can run on 64-bit Windows platforms.

SublimeText3 Linux new version

SublimeText3 Linux latest version

mPDF

mPDF is a PHP library that can generate PDF files from UTF-8 encoded HTML. The original author, Ian Back, wrote mPDF to output PDF files "on the fly" from his website and handle different languages. It is slower than original scripts like HTML2FPDF and produces larger files when using Unicode fonts, but supports CSS styles etc. and has a lot of enhancements. Supports almost all languages, including RTL (Arabic and Hebrew) and CJK (Chinese, Japanese and Korean). Supports nested block-level elements (such as P, DIV),