AI psychic model successfully decodes brain information with an accuracy of 82%

Geoffrey Hinton, the father of neural networks, has resigned from Google, saying he regrets his life's work.

Now it seems that his fear of AI is not unreasonable.

A ChatGPT-like model has learned to read minds, with an accuracy rate as high as 82%!

Researchers from the University of Texas at Austin have developed a GPT-based language decoder.

It can collect brain activity information through non-invasive MRI/fMRI and convert thoughts into language.

Paper address: https://www.nature.com/articles/s41593-023-01304-9

Shockingly, the decoder can even read your thoughts while you are watching a silent Pixar movie.

This ChatGPT-like model decodes human thoughts with unprecedented accuracy, opening up new potential for brain imaging and raising concerns about privacy.

As soon as the study came out, it caused an uproar on the Internet. Netizens exclaimed that it was too scary.

We are one step closer to the true Thought Police.

So how does this terrifying brain decoder achieve "mind reading"?

Here, we have to mention functional magnetic resonance imaging (fMRI) technology, which can obtain images of dynamic changes in the brain by monitoring blood oxygen levels in different parts of the cerebral cortex.

So just by analyzing the fMRI data, the stories or even images in the participants' minds can be described in words, without any invasive procedure.

Brain activity is like an encrypted signal, and large pre-trained language models provide a way to decipher it.

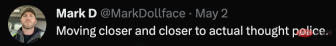

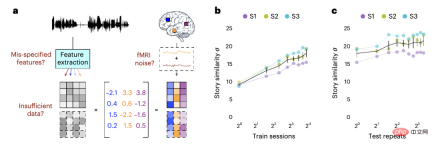

Here, researchers trained a neural network language model based on GPT-1.

Alexander Huth asked three subjects to listen to spoken podcasts for a total of 16 hours and collected fMRI data while they listened.

These podcasts are mainly talk shows and TED talks, such as Modern Love from The New York Times.

The researchers next used large language models to translate the participants' fMRI data sets into words and phrases.

The decoder was then tested on the participants' brain activity while they listened to new recordings. How closely the decoded text matches the text the participants actually heard shows whether the decoder is accurate.

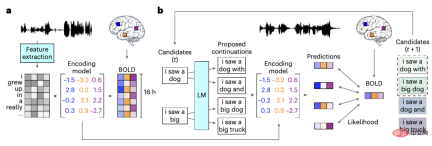

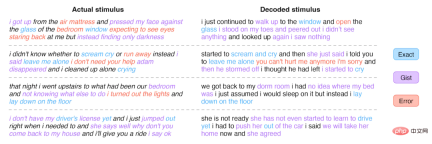

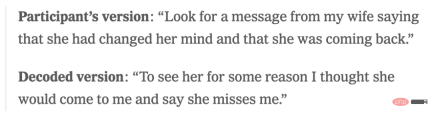

Comparing the sentences people heard (left) with the sentences the decoder produced from brain activity (right) shows that the blue and purple parts account for the vast majority. Blue means completely consistent, and purple means the general idea is accurate.

Although the words rarely correspond one-to-one, the meaning of the entire sentence is retained, which means that the decoder is "interpreting" the signal from the brain.

For example, in the last sentence, the subject heard "I haven't gotten my driver's license yet", and the answer given by the decoder was "She's not ready to start learning to drive yet."

As the researchers note, the artificial intelligence cannot translate thoughts into exact words or sentences; it paraphrases them.
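To make the "paraphrase, not transcript" point concrete, here is a minimal sketch that scores the example pair above by plain word overlap. The function names `tokenize` and `word_overlap_f1` are hypothetical, and unigram overlap is only a stand-in chosen for illustration; it is not presented as the metric used in the study.

```python
import re
from collections import Counter


def tokenize(text):
    # Lowercase and keep runs of letters and apostrophes as words.
    return re.findall(r"[a-z']+", text.lower())


def word_overlap_f1(reference, decoded):
    # Unigram F1: harmonic mean of precision and recall over shared words.
    # Illustrative stand-in only, not the study's evaluation metric.
    ref_counts = Counter(tokenize(reference))
    dec_counts = Counter(tokenize(decoded))
    shared = sum((ref_counts & dec_counts).values())
    if shared == 0:
        return 0.0
    precision = shared / sum(dec_counts.values())
    recall = shared / sum(ref_counts.values())
    return 2 * precision * recall / (precision + recall)


heard = "I haven't gotten my driver's license yet"
decoded = "She's not ready to start learning to drive yet."
print(round(word_overlap_f1(heard, decoded), 3))  # 0.125
```

The two sentences share only the word "yet", so the overlap score is low even though the gist is similar. That is why closeness for a decoder like this has to be judged on meaning rather than on exact wording.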

The subjects were then asked to quietly construct a story in their minds and then retell it out loud to see the difference between the retelling version and the decoder-translated version.

It can be seen that the overlap of meanings is still very high.

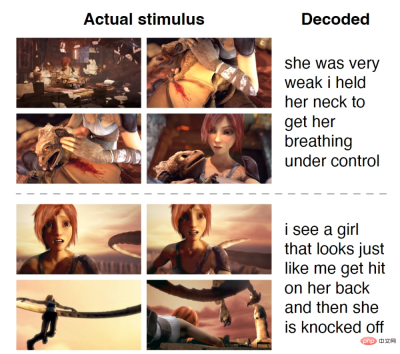

Finally, the subjects watched an animated movie that had no sound, but by analyzing their brain activity, the decoder was able to get a summary of what they were watching.

The experiments found that the GPT model generates understandable word sequences from perceived speech, imagined speech, and even silent videos, with impressive accuracy.

The specific accuracy rate is as follows:

Perceived speech (subject listens to the recording): 72-82%

Imagined speech (subjects mentally tell a one-minute story): 41-74%

Silent movies (subjects watch Pixar silent film clips): 21-45%

Speech perception is an externally driven process, says MIT neuroscientist Greta Tuckute, while imagination is an active internal process, and large language models can now bring that internal brain activity before our eyes.

Can we now read information from the brain? Yes, to a certain extent.

This decoder may one day be used to help people who have lost the ability to speak, or to investigate people's mental health.

Mental privacy, gone

However, the prospect of decoding the human mind also raises questions about mental privacy.

Dr. Huth pointed out that this method of language decoding has certain limitations.

fMRI scanners are bulky and expensive, and training is a long and tedious process because the model must be trained individually for each person.

The team conducted an additional study using decoders trained on data from other subjects to decode the thoughts of new subjects.

The study found that decoders trained on other subjects' data performed poorly.

In short, the AI model is only highly accurate when it is trained on data recorded from the subject's own brain.

This suggests that each brain has a unique way of representing meaning.

Additionally, participants were able to block their inner monologue and evade the decoder by thinking about other things, such as counting by sevens, listing farm animals, or telling a completely different story.

In other words, the decoder needs the cooperation of volunteers to obtain accurate results.

However, the scientists acknowledge that future decoders may overcome these limitations and could be misused, as lie detectors have been.

A brave new world is coming. For these and other unforeseen reasons, the researchers concluded, it is critical to raise awareness of the risks of brain-decoding technology and to develop policies that protect everyone’s mental privacy.

There is a scene in "Black Mirror" where an insurance agent uses a machine (equipped with visual monitors and brain sensors) to read people's memories in order to investigate an accidental death.

However, that future may be now.

This breakthrough by researchers at the University of Texas directly promotes potential applications in the fields of neuroscience, communications, and human-computer interaction.

Although the "brain decoder" is still in the early stages of research, it may one day be used to record people's dreams, and provide impetus for the further development of brain-computer interfaces.

Dr. Alexander Huth, the neuroscientist who led the study, said: "We were shocked by how this works. I'd been working on this for 15 years... so I was shocked and excited when it finally worked."

It seems that we are one step closer to the brain scanning technology of Professor X (Charles Xavier) in "X-Men".

Netizen: AI Psychic

After reading this study, many people said their minds were blown. Some netizens said that artificial intelligence can not only communicate 10,000 times faster than us, but can now even read our thoughts. There's a fine line between "This is cool" and "Wait, are we going to be eliminated?"

AI can now read minds like a psychic. Keep in mind, this is only version 1.0. In the future, our thoughts will no longer be private. The World Economic Forum’s The Fourth Industrial Revolution makes this very clear.

This is the first time continuous language has been reconstructed from human brain activity in a non-invasive way!

Regarding this research, bioethicist Rodriguez-Arias Vailhen said that so far, the human brain has been the guardian of our privacy.

"This discovery may be the first step in sacrificing this freedom in the future."

The above is the detailed content of AI psychic model successfully decodes brain information with an accuracy of 82%. For more information, please follow other related articles on the PHP Chinese website!

AI Game Development Enters Its Agentic Era With Upheaval's Dreamer PortalMay 02, 2025 am 11:17 AM

AI Game Development Enters Its Agentic Era With Upheaval's Dreamer PortalMay 02, 2025 am 11:17 AMUpheaval Games: Revolutionizing Game Development with AI Agents Upheaval, a game development studio comprised of veterans from industry giants like Blizzard and Obsidian, is poised to revolutionize game creation with its innovative AI-powered platfor

Uber Wants To Be Your Robotaxi Shop, Will Providers Let Them?May 02, 2025 am 11:16 AM

Uber Wants To Be Your Robotaxi Shop, Will Providers Let Them?May 02, 2025 am 11:16 AMUber's RoboTaxi Strategy: A Ride-Hail Ecosystem for Autonomous Vehicles At the recent Curbivore conference, Uber's Richard Willder unveiled their strategy to become the ride-hail platform for robotaxi providers. Leveraging their dominant position in

AI Agents Playing Video Games Will Transform Future RobotsMay 02, 2025 am 11:15 AM

AI Agents Playing Video Games Will Transform Future RobotsMay 02, 2025 am 11:15 AMVideo games are proving to be invaluable testing grounds for cutting-edge AI research, particularly in the development of autonomous agents and real-world robots, even potentially contributing to the quest for Artificial General Intelligence (AGI). A

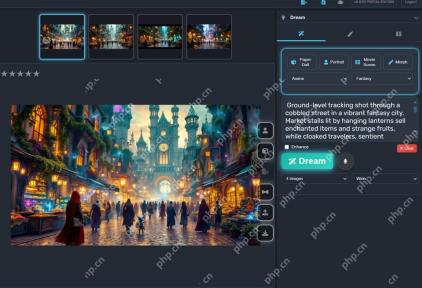

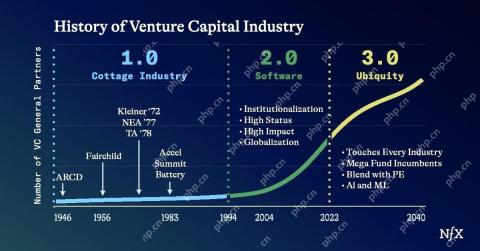

The Startup Industrial Complex, VC 3.0, And James Currier's ManifestoMay 02, 2025 am 11:14 AM

The Startup Industrial Complex, VC 3.0, And James Currier's ManifestoMay 02, 2025 am 11:14 AMThe impact of the evolving venture capital landscape is evident in the media, financial reports, and everyday conversations. However, the specific consequences for investors, startups, and funds are often overlooked. Venture Capital 3.0: A Paradigm

Adobe Updates Creative Cloud And Firefly At Adobe MAX London 2025May 02, 2025 am 11:13 AM

Adobe Updates Creative Cloud And Firefly At Adobe MAX London 2025May 02, 2025 am 11:13 AMAdobe MAX London 2025 delivered significant updates to Creative Cloud and Firefly, reflecting a strategic shift towards accessibility and generative AI. This analysis incorporates insights from pre-event briefings with Adobe leadership. (Note: Adob

Everything Meta Announced At LlamaConMay 02, 2025 am 11:12 AM

Everything Meta Announced At LlamaConMay 02, 2025 am 11:12 AMMeta's LlamaCon announcements showcase a comprehensive AI strategy designed to compete directly with closed AI systems like OpenAI's, while simultaneously creating new revenue streams for its open-source models. This multifaceted approach targets bo

The Brewing Controversy Over The Proposition That AI Is Nothing More Than Just Normal TechnologyMay 02, 2025 am 11:10 AM

The Brewing Controversy Over The Proposition That AI Is Nothing More Than Just Normal TechnologyMay 02, 2025 am 11:10 AMThere are serious differences in the field of artificial intelligence on this conclusion. Some insist that it is time to expose the "emperor's new clothes", while others strongly oppose the idea that artificial intelligence is just ordinary technology. Let's discuss it. An analysis of this innovative AI breakthrough is part of my ongoing Forbes column that covers the latest advancements in the field of AI, including identifying and explaining a variety of influential AI complexities (click here to view the link). Artificial intelligence as a common technology First, some basic knowledge is needed to lay the foundation for this important discussion. There is currently a large amount of research dedicated to further developing artificial intelligence. The overall goal is to achieve artificial general intelligence (AGI) and even possible artificial super intelligence (AS)

Model Citizens, Why AI Value Is The Next Business YardstickMay 02, 2025 am 11:09 AM

Model Citizens, Why AI Value Is The Next Business YardstickMay 02, 2025 am 11:09 AMThe effectiveness of a company's AI model is now a key performance indicator. Since the AI boom, generative AI has been used for everything from composing birthday invitations to writing software code. This has led to a proliferation of language mod

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

MantisBT

Mantis is an easy-to-deploy web-based defect tracking tool designed to aid in product defect tracking. It requires PHP, MySQL and a web server. Check out our demo and hosting services.

DVWA

Damn Vulnerable Web App (DVWA) is a PHP/MySQL web application that is very vulnerable. Its main goals are to be an aid for security professionals to test their skills and tools in a legal environment, to help web developers better understand the process of securing web applications, and to help teachers/students teach/learn in a classroom environment Web application security. The goal of DVWA is to practice some of the most common web vulnerabilities through a simple and straightforward interface, with varying degrees of difficulty. Please note that this software

Notepad++7.3.1

Easy-to-use and free code editor

MinGW - Minimalist GNU for Windows

This project is in the process of being migrated to osdn.net/projects/mingw, you can continue to follow us there. MinGW: A native Windows port of the GNU Compiler Collection (GCC), freely distributable import libraries and header files for building native Windows applications; includes extensions to the MSVC runtime to support C99 functionality. All MinGW software can run on 64-bit Windows platforms.

Dreamweaver CS6

Visual web development tools