AltDiffusion-m18, a versatile tool for generating multilingual texts and images

Currently, few text-to-image generation models support languages other than English, so users often have to translate their prompts into English before entering them into a model. This not only adds an extra step, but linguistic and cultural errors introduced during translation also reduce the accuracy of the generated images.

The FlagAI team at Zhiyuan Research Institute (BAAI) pioneered an efficient training method that combines a multilingual pre-trained model with Stable Diffusion to train a multilingual text-to-image generation model, AltDiffusion-m18, which supports text-to-image generation in 18 languages.

The supported languages are Chinese, English, Japanese, Thai, Korean, Hindi, Ukrainian, Arabic, Turkish, Vietnamese, Polish, Dutch, Portuguese, Italian, Spanish, German, French, and Russian.

Hugging Face: https://huggingface.co/BAAI/AltDiffusion-m18

GitHub: https://github.com/FlagAI-Open/FlagAI/blob/master/examples/AltDiffusion-m18

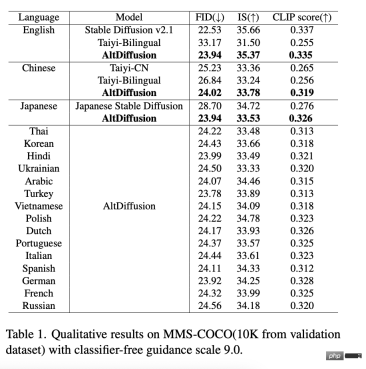

In objective evaluations using FID, IS, and CLIP score, AltDiffusion-m18 achieves 95~99% of Stable Diffusion's performance in English, reaches state-of-the-art level in Chinese and Japanese, and fills the gap in text-to-image generation models for the remaining 15 languages, meeting the industry's strong demand for multilingual text-to-image generation. Special thanks go to the Stable Diffusion research team for providing advice on this work.

In addition, the technical report behind AltDiffusion-m18's key innovations, "AltCLIP: Altering the Language Encoder in CLIP for Extended Language Capabilities", has been accepted to Findings of ACL 2023.

Technical Highlights

1 New AltCLIP: building a multilingual T2I model efficiently and at low cost

AltDiffusion-m9, released last year, was based on Stable Diffusion v1.4. The Zhiyuan team replaced its language tower with the multilingual tower AltCLIP and fine-tuned the model on multilingual data in nine languages, extending the original English-only Stable Diffusion to support 9 languages.

AltCLIP: https://github.com/FlagAI-Open/FlagAI/tree/master/examples/AltCLIP-m18

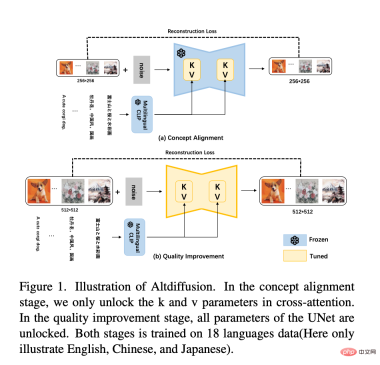

AltDiffusion-m18, by contrast, is trained from Stable Diffusion v2.1. The language tower of Stable Diffusion v2.1 is the penultimate layer of OpenCLIP, so the new AltCLIP is retrained with the penultimate layer of OpenCLIP as its distillation target. In addition, m9's approach of fine-tuning only the K and V matrices of the cross-attention layers in the UNet is expanded into a two-stage training method, as shown in the figure below:

- First stage: Earlier experiments with m9 showed that fine-tuning the K and V matrices mainly teaches the model the conceptual alignment between text and images, so the first stage of m18 training continues to fine-tune the K and V matrices, now on data in 18 languages. Experiments also showed that reducing the image resolution from 512*512 to 256*256 does not lose the semantic information of the image. The first stage, which learns text-image concept alignment, therefore trains at 256*256 resolution, which speeds up training.

- Second stage: To further improve the quality of the generated images, all UNet parameters are trained at 512*512 resolution on the data in 18 languages. In addition, 10% of the text is dropped for unconditional training, which enables classifier-free guidance at inference time.

- In addition, classifier-free guidance training is used to further improve generation quality.
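The two stages above can be sketched in plain Python. This is a minimal illustration, not the FlagAI training code: the parameter names follow the naming convention of the diffusers UNet (where `attn2` is the cross-attention block), and `STAGES`, `trainable_params`, `drop_caption`, and `guided_prediction` are hypothetical helpers introduced only for this example.

```python
import random

# Hypothetical parameter names in the style of the diffusers UNet,
# where "attn1" is self-attention and "attn2" is cross-attention (assumption).
PARAM_NAMES = [
    "down_blocks.0.attentions.0.transformer_blocks.0.attn1.to_k.weight",
    "down_blocks.0.attentions.0.transformer_blocks.0.attn2.to_q.weight",
    "down_blocks.0.attentions.0.transformer_blocks.0.attn2.to_k.weight",
    "down_blocks.0.attentions.0.transformer_blocks.0.attn2.to_v.weight",
    "down_blocks.0.resnets.0.conv1.weight",
]

# Stage settings taken from the article: resolution and which weights are trained.
STAGES = {
    1: {"resolution": 256, "train_all": False},  # cross-attention K and V only
    2: {"resolution": 512, "train_all": True},   # full UNet parameters
}

def trainable_params(names, stage):
    """Return the parameter names that are updated in the given stage."""
    if STAGES[stage]["train_all"]:
        return list(names)
    return [n for n in names if ".attn2.to_k." in n or ".attn2.to_v." in n]

def drop_caption(caption, rng, drop_prob=0.1):
    """Replace the caption with an empty string with probability drop_prob.

    The article states that 10% of the text is dropped in the second stage.
    """
    return "" if rng.random() < drop_prob else caption

def guided_prediction(uncond, cond, scale):
    """Classifier-free guidance at inference: uncond + scale * (cond - uncond)."""
    return [u + scale * (c - u) for u, c in zip(uncond, cond)]

stage1 = trainable_params(PARAM_NAMES, stage=1)
stage2 = trainable_params(PARAM_NAMES, stage=2)
print(len(stage1), "of", len(stage2), "example parameters are trained in stage 1")
```

Training on a mix of captioned and caption-free samples is what lets a single model produce both the conditional and the unconditional prediction that `guided_prediction` combines; a scale of 1 reproduces the conditional prediction unchanged.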

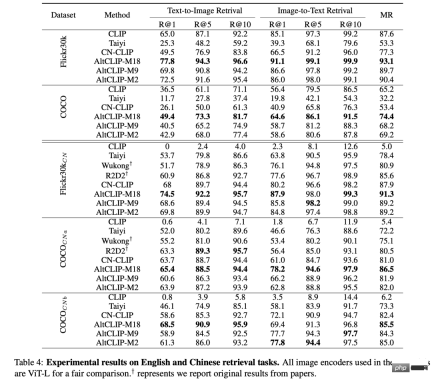
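The distillation step mentioned earlier, in which the new AltCLIP is retrained against the penultimate layer of OpenCLIP, can be pictured as pulling a student embedding toward a teacher embedding. The sketch below assumes a mean-squared-error objective, which the article does not state, and uses toy vectors; `mse` and `distillation_loss` are hypothetical names introduced for illustration.

```python
def mse(student, teacher):
    """Mean squared error between two equal-length embedding vectors."""
    if len(student) != len(teacher):
        raise ValueError("embeddings must have the same dimension")
    return sum((s - t) ** 2 for s, t in zip(student, teacher)) / len(student)

def distillation_loss(student_batch, teacher_batch):
    """Average MSE over a batch of (student, teacher) embedding pairs.

    teacher_batch: embeddings from the frozen teacher (here imagined as the
    penultimate-layer output of the OpenCLIP text encoder).
    student_batch: embeddings from the multilingual student for the same or
    translated sentences (assumption).
    """
    pairs = list(zip(student_batch, teacher_batch))
    return sum(mse(s, t) for s, t in pairs) / len(pairs)

# Toy example: one sentence, two-dimensional embeddings.
teacher = [[1.0, 4.0]]
student = [[1.0, 2.0]]
print(distillation_loss(student, teacher))
```

Minimizing a loss of this kind moves the multilingual encoder's outputs into the embedding space the diffusion model already expects, which is why the language tower can be swapped without retraining the UNet from scratch.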

The latest evaluation results show that AltCLIP-m18 surpasses CLIP and reaches state-of-the-art level on Chinese and English zero-shot retrieval tasks:

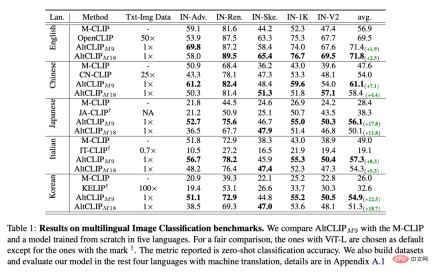
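For readers unfamiliar with how zero-shot retrieval is scored, the following is a minimal sketch of text-to-image Recall@1 using cosine similarity. The embeddings are made-up toy values, not outputs of AltCLIP, and `cosine` and `recall_at_1` are hypothetical helper names.

```python
import math

def cosine(a, b):
    """Cosine similarity between two vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def recall_at_1(text_embs, image_embs):
    """Fraction of texts whose most similar image is the paired one.

    Text i is assumed to be paired with image i.
    """
    hits = 0
    for i, text in enumerate(text_embs):
        scores = [cosine(text, image) for image in image_embs]
        best = max(range(len(scores)), key=scores.__getitem__)
        hits += int(best == i)
    return hits / len(text_embs)

# Toy embeddings (made up): three captions and their three paired images.
texts = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.2]]
images = [[0.9, 0.1], [0.2, 0.8], [0.7, 0.7]]
print(recall_at_1(texts, images))
```

"Zero-shot" here means the encoder is evaluated on the retrieval benchmark without being fine-tuned on that benchmark's training split.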

On multilingual image classification benchmarks, AltCLIP-m9 (the earlier version, which supports 9 languages) and AltCLIP-m18 reach state-of-the-art level.

Similarly, thanks to AltCLIP's tower-swapping design, AltDiffusion-m18 connects seamlessly to all Stable Diffusion models and ecosystem tools built on the original CLIP. All tools that support Stable Diffusion, such as Stable Diffusion WebUI and DreamBooth, can be applied to AltDiffusion-m18, so it is painless to get started and offers plenty of room to experiment.

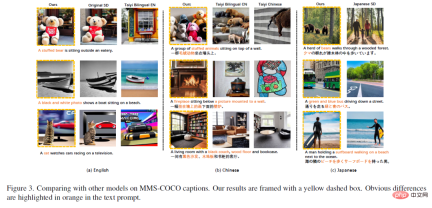

2 Aligned multilingual generation quality, with strong performance and accurate details

Powered by the new AltCLIP, AltDiffusion-m18 achieves 95~99% of the original Stable Diffusion's performance in the English FID, IS, and CLIP score evaluations, and achieves state-of-the-art performance in 17 languages, including Chinese and Japanese. The performance of AltDiffusion-m18 is shown in the following table:
