3D reconstruction without 3D convolution: about 70 ms per frame on an A100

Reconstructing 3D indoor scenes from posed images is usually divided into two stages: per-image depth estimation, followed by depth fusion and surface reconstruction. Recently, several studies have proposed methods that perform reconstruction directly in a final 3D volumetric feature space. Although these methods achieve impressive reconstruction results, they rely on expensive 3D convolutional layers, which limits their use in resource-constrained environments.

Now, researchers from institutions including Niantic and UCL return to the traditional approach: they focus on high-quality multi-view depth prediction and then apply simple, off-the-shelf depth fusion to obtain highly accurate 3D reconstructions.

- Paper address: https://nianticlabs.github.io/simplerecon/resources/SimpleRecon.pdf

- GitHub address: https://github.com/nianticlabs/simplerecon

- Paper home page: https://nianticlabs.github.io/simplerecon/

This research builds on strong image priors: a 2D CNN, carefully designed through experiments, is combined with a plane-sweep feature volume and geometric losses. The proposed method, SimpleRecon, leads existing methods by a clear margin in depth estimation and supports online, real-time, low-memory reconstruction.

As shown in the figure below, SimpleRecon’s reconstruction speed is very fast, taking only about 70ms per frame.

The comparison results between SimpleRecon and other methods are as follows:

The depth estimation model sits at the intersection of monocular depth estimation and plane-sweep MVS. The researchers augment a depth-prediction encoder-decoder architecture with a cost volume, as shown in Figure 2. An image encoder extracts matching features from the reference and source images as input to the cost volume. A 2D convolutional encoder-decoder network then processes the output of the cost volume, augmented with image-level features extracted by a separate pre-trained image encoder.
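
The plane-sweep step can be illustrated with a small NumPy sketch. It is not the authors' PyTorch code: the function name `plane_sweep_coords`, the shared intrinsics `K`, the transform `T_src_ref`, and the depth values are all hypothetical, and the sketch assumes every hypothesised point lies in front of the source camera. For each depth hypothesis, reference pixels are back-projected, moved into the source camera, and projected again, which gives the locations at which source features would be sampled.

```python
import numpy as np


def plane_sweep_coords(K, T_src_ref, depths, height, width):
    """Project reference pixels into a source view at each hypothesised depth.

    K         : (3, 3) camera intrinsics (assumed identical for both views)
    T_src_ref : (4, 4) rigid transform from reference to source camera coordinates
    depths    : iterable of depth hypotheses (the sweep planes)
    Returns an array of shape (D, 2, H, W) holding (u, v) source-pixel coordinates.
    """
    ys, xs = np.meshgrid(np.arange(height), np.arange(width), indexing="ij")
    ones = np.ones_like(xs)
    pix = np.stack([xs, ys, ones], axis=0).reshape(3, -1).astype(np.float64)
    rays = np.linalg.inv(K) @ pix  # back-projected rays at unit depth, (3, H*W)

    R, t = T_src_ref[:3, :3], T_src_ref[:3, 3:4]
    coords = []
    for d in depths:
        pts_src = R @ (rays * d) + t  # 3D points expressed in the source camera
        proj = K @ pts_src            # homogeneous source-pixel coordinates
        coords.append((proj[:2] / proj[2:3]).reshape(2, height, width))
    return np.stack(coords)


# Hypothetical intrinsics and a small sideways offset between the two cameras.
K = np.array([[100.0, 0.0, 2.0], [0.0, 100.0, 2.0], [0.0, 0.0, 1.0]])
T = np.eye(4)
T[0, 3] = 0.1
uv = plane_sweep_coords(K, T, depths=[1.0, 2.0, 4.0], height=4, width=4)
print(uv.shape)  # (3, 2, 4, 4)
```

With a pure sideways translation, the horizontal shift of each pixel is inversely proportional to the hypothesised depth, which is what lets feature matching across planes discriminate between depths.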

The key to this research is to inject readily available metadata into the cost volume alongside the usual deep image features, giving the network access to useful information such as geometry and relative camera pose. Figure 3 shows the feature volume construction in detail. By integrating this previously untapped information, the model significantly outperforms previous methods in depth prediction without expensive 4D cost volumes, complex temporal fusion, or Gaussian processes.
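
A rough sketch of the idea, under stated assumptions, is shown below in NumPy. The metadata channels chosen here (plane depth and a per-view relative pose distance), the tiny two-layer MLP, the summation over source views, and the name `metadata_cost_volume` are all illustrative and are not taken from the paper; the point is only that each cost volume cell is computed from a feature vector that mixes a matching score with metadata, rather than from the matching score alone.

```python
import numpy as np


def metadata_cost_volume(ref_feats, warped_src_feats, plane_depths, pose_dists,
                         w1, b1, w2, b2):
    """Reduce matching scores plus metadata to one value per (plane, pixel).

    ref_feats        : (C, H, W) reference-view features
    warped_src_feats : (N, D, C, H, W) source features warped to each depth plane
    plane_depths     : (D,) depth of each sweep plane (hypothetical metadata)
    pose_dists       : (N,) relative pose distance per source view (hypothetical)
    w1, b1, w2, b2   : weights of a small two-layer MLP (3 -> hidden -> 1)
    Returns a (D, H, W) volume.
    """
    n, d, _, h, w = warped_src_feats.shape
    dot = np.einsum("chw,ndchw->ndhw", ref_feats, warped_src_feats)
    depth = np.broadcast_to(plane_depths[None, :, None, None], (n, d, h, w))
    pose = np.broadcast_to(pose_dists[:, None, None, None], (n, d, h, w))

    feats = np.stack([dot, depth, pose], axis=-1)   # (N, D, H, W, 3)
    hidden = np.maximum(feats @ w1 + b1, 0.0)       # ReLU layer
    scores = (hidden @ w2 + b2)[..., 0]             # (N, D, H, W)
    return scores.sum(axis=0)                       # assumption: sum over views


rng = np.random.default_rng(0)
ref = rng.random((8, 4, 5))
warped = rng.random((2, 3, 8, 4, 5))
plane_depths = np.array([0.5, 1.0, 2.0])
pose_dists = np.array([0.1, 0.3])
w1, b1 = rng.normal(size=(3, 16)), np.zeros(16)
w2, b2 = rng.normal(size=(16, 1)), np.zeros(1)
volume = metadata_cost_volume(ref, warped, plane_depths, pose_dists, w1, b1, w2, b2)
print(volume.shape)  # (3, 4, 5)
```

Because the output is still a plain (D, H, W) volume, the downstream network can stay purely 2D, treating the depth planes as channels.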

The study was implemented in PyTorch, with EfficientNetV2 S as the backbone and a decoder similar to UNet. The first two blocks of ResNet18 were used for matching feature extraction. The optimizer was AdamW, and training took 36 hours on two 40GB A100 GPUs.

Network architecture design

The network is built on a 2D convolutional encoder-decoder architecture. In building such a network, the researchers found several important design choices that significantly improve depth prediction accuracy, mainly including:

Baseline cost volume fusion: Although RNN-based temporal fusion methods are often used, they significantly increase the complexity of the system. Instead, the study keeps cost volume fusion as simple as possible and finds that simply summing the dot-product matching costs between the reference view and each source view gives results that are competitive with SOTA depth estimation.

Image encoder and feature matching encoder: Previous research has shown that the image encoder is very important for depth estimation, in both monocular and multi-view settings. For example, DeepVideoMVS uses MnasNet as the image encoder, which has relatively low latency. The study recommends the small but more powerful EfficientNetV2 S encoder, which significantly improves depth estimation accuracy, at the cost of an increased number of parameters and a 10% reduction in execution speed.

Fusing multi-scale image features into the cost volume encoder: In 2D CNN-based deep stereo and multi-view stereo, image features are usually combined with the cost volume output at a single scale. Recently, DeepVideoMVS proposed concatenating deep image features at multiple scales, adding skip connections between the image encoder and the cost volume encoder at all resolutions. This helps LSTM-based fusion networks, and the study found that it is also important for its own architecture.
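
The baseline cost volume fusion described above, summing dot-product matching costs over the source views, can be sketched in a few lines of NumPy. This is a minimal illustration rather than the authors' implementation: the function name `dot_product_cost_volume` and the tensor layout are assumptions, and the source features are taken to be already warped to each depth plane of the reference view.

```python
import numpy as np


def dot_product_cost_volume(ref_feats, warped_src_feats):
    """Sum dot-product matching scores over all source views.

    ref_feats        : (C, H, W) reference-view matching features
    warped_src_feats : (N, D, C, H, W) source features warped to each depth plane
    Returns a (D, H, W) volume; larger values mean better agreement.
    """
    # Dot product over the channel axis, per source view and depth plane.
    per_view = np.einsum("chw,ndchw->ndhw", ref_feats, warped_src_feats)
    return per_view.sum(axis=0)


rng = np.random.default_rng(0)
ref = rng.normal(size=(8, 4, 5))
warped = np.zeros((2, 3, 8, 4, 5))
warped[:, 1] = ref  # toy case: both source views match the reference at plane 1
cost = dot_product_cost_volume(ref, warped)
best_plane = cost.argmax(axis=0)  # per-pixel index of the best-matching plane
print(cost.shape)  # (3, 4, 5)
```

In this toy setup every pixel selects plane 1, since that is the only plane where the warped source features agree with the reference features.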

Experiments

This study trained and evaluated the proposed method on the 3D scene reconstruction dataset ScanNetv2. Table 1 below uses the metrics proposed by Eigen et al. (2014) to evaluate the depth prediction performance of several network models.
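
For readers unfamiliar with these metrics, the NumPy sketch below computes four measures commonly associated with Eigen et al. (2014): absolute relative error, squared relative error, RMSE, and the fraction of pixels whose ratio to ground truth is under 1.25. The exact set of columns and thresholds in Table 1 is not reproduced here, and the function name `depth_metrics` and the convention that a ground-truth depth of 0 marks an invalid pixel are assumptions. Predictions are assumed to be strictly positive.

```python
import numpy as np


def depth_metrics(pred, gt):
    """Compute common depth-prediction metrics on pixels with valid ground truth."""
    valid = gt > 0  # assumption: 0 marks missing ground-truth depth
    pred, gt = pred[valid], gt[valid]
    ratio = np.maximum(pred / gt, gt / pred)
    return {
        "abs_rel": float(np.mean(np.abs(pred - gt) / gt)),
        "sq_rel": float(np.mean((pred - gt) ** 2 / gt)),
        "rmse": float(np.sqrt(np.mean((pred - gt) ** 2))),
        "delta_1.25": float(np.mean(ratio < 1.25)),
    }


# Toy example: one pixel has no ground truth and is ignored.
gt = np.array([[1.0, 2.0], [0.0, 4.0]])
pred = np.array([[1.1, 2.0], [3.0, 4.0]])
metrics = depth_metrics(pred, gt)
print(metrics)
```

Lower is better for the three error measures, while higher is better for the threshold accuracy.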

Surprisingly, although the proposed model uses no 3D convolution, it outperforms all baseline models on the depth prediction metrics. Furthermore, the baseline model without metadata encoding also performs better than previous methods, indicating that a well-designed and well-trained 2D network is sufficient for high-quality depth estimation. Figures 4 and 5 below show qualitative results for depth and normals.

This study used the standard protocol established by TransformerFusion for 3D reconstruction evaluation. The results are shown in Table 2 below.

For online and interactive 3D reconstruction applications, reducing the latency from sensor input to output is critical. Table 3 below shows the overall computation time per frame for each model, given a new RGB frame.

To verify the effectiveness of each component of the proposed method, the researchers conducted ablation experiments. The results are shown in Table 4 below.

Interested readers can read the original text of the paper to learn more about the research details.

The above is the detailed content of A100 implements a 3D reconstruction method without 3D convolution, and only takes 70ms for each frame reconstruction. For more information, please follow other related articles on the PHP Chinese website!

What is Graph of Thought in Prompt EngineeringApr 13, 2025 am 11:53 AM

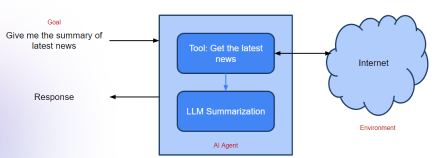

What is Graph of Thought in Prompt EngineeringApr 13, 2025 am 11:53 AMIntroduction In prompt engineering, “Graph of Thought” refers to a novel approach that uses graph theory to structure and guide AI’s reasoning process. Unlike traditional methods, which often involve linear s

Optimize Your Organisation's Email Marketing with GenAI AgentsApr 13, 2025 am 11:44 AM

Optimize Your Organisation's Email Marketing with GenAI AgentsApr 13, 2025 am 11:44 AMIntroduction Congratulations! You run a successful business. Through your web pages, social media campaigns, webinars, conferences, free resources, and other sources, you collect 5000 email IDs daily. The next obvious step is

Real-Time App Performance Monitoring with Apache PinotApr 13, 2025 am 11:40 AM

Real-Time App Performance Monitoring with Apache PinotApr 13, 2025 am 11:40 AMIntroduction In today’s fast-paced software development environment, ensuring optimal application performance is crucial. Monitoring real-time metrics such as response times, error rates, and resource utilization can help main

ChatGPT Hits 1 Billion Users? 'Doubled In Just Weeks' Says OpenAI CEOApr 13, 2025 am 11:23 AM

ChatGPT Hits 1 Billion Users? 'Doubled In Just Weeks' Says OpenAI CEOApr 13, 2025 am 11:23 AM“How many users do you have?” he prodded. “I think the last time we said was 500 million weekly actives, and it is growing very rapidly,” replied Altman. “You told me that it like doubled in just a few weeks,” Anderson continued. “I said that priv

Pixtral-12B: Mistral AI's First Multimodal Model - Analytics VidhyaApr 13, 2025 am 11:20 AM

Pixtral-12B: Mistral AI's First Multimodal Model - Analytics VidhyaApr 13, 2025 am 11:20 AMIntroduction Mistral has released its very first multimodal model, namely the Pixtral-12B-2409. This model is built upon Mistral’s 12 Billion parameter, Nemo 12B. What sets this model apart? It can now take both images and tex

Agentic Frameworks for Generative AI Applications - Analytics VidhyaApr 13, 2025 am 11:13 AM

Agentic Frameworks for Generative AI Applications - Analytics VidhyaApr 13, 2025 am 11:13 AMImagine having an AI-powered assistant that not only responds to your queries but also autonomously gathers information, executes tasks, and even handles multiple types of data—text, images, and code. Sounds futuristic? In this a

Applications of Generative AI in the Financial SectorApr 13, 2025 am 11:12 AM

Applications of Generative AI in the Financial SectorApr 13, 2025 am 11:12 AMIntroduction The finance industry is the cornerstone of any country’s development, as it drives economic growth by facilitating efficient transactions and credit availability. The ease with which transactions occur and credit

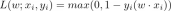

Guide to Online Learning and Passive-Aggressive AlgorithmsApr 13, 2025 am 11:09 AM

Guide to Online Learning and Passive-Aggressive AlgorithmsApr 13, 2025 am 11:09 AMIntroduction Data is being generated at an unprecedented rate from sources such as social media, financial transactions, and e-commerce platforms. Handling this continuous stream of information is a challenge, but it offers an

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

DVWA

Damn Vulnerable Web App (DVWA) is a PHP/MySQL web application that is very vulnerable. Its main goals are to be an aid for security professionals to test their skills and tools in a legal environment, to help web developers better understand the process of securing web applications, and to help teachers/students teach/learn in a classroom environment Web application security. The goal of DVWA is to practice some of the most common web vulnerabilities through a simple and straightforward interface, with varying degrees of difficulty. Please note that this software

VSCode Windows 64-bit Download

A free and powerful IDE editor launched by Microsoft

MinGW - Minimalist GNU for Windows

This project is in the process of being migrated to osdn.net/projects/mingw, you can continue to follow us there. MinGW: A native Windows port of the GNU Compiler Collection (GCC), freely distributable import libraries and header files for building native Windows applications; includes extensions to the MSVC runtime to support C99 functionality. All MinGW software can run on 64-bit Windows platforms.

ZendStudio 13.5.1 Mac

Powerful PHP integrated development environment

WebStorm Mac version

Useful JavaScript development tools