Integrating GPT large model products, WakeData new round of product upgrades

WakeData (Weike Data, hereinafter "WakeData") recently completed a new round of product capability upgrades.

At its product launch in November 2022, WakeData set out its "three commitments": always invest firmly in technology, comprehensively strengthening the technical capabilities and in-house R&D rate of its core products; always firmly build domestic adaptation capabilities, supporting domestic chips, operating systems, databases, middleware, Chinese national cryptographic (SM-series) algorithms and more, so as to localize and replace foreign vendors in the same field; and always firmly embrace the ecosystem, creating win-win outcomes with partners.

WakeData has now carried out a new round of product capability upgrades. Building on five years of accumulated technology and hands-on practice in real estate, retail, automotive and other industries and vertical fields, it has worked with strategic partners to develop WakeMind, an industry large model that supports private deployment. WakeMind aims to help more enterprises transform themselves, improve efficiency, and keep unlocking productivity in the AIGC era.

The three major platform layers of the WakeMind model

Model layer: The mothership platform uses WakeMind, which supports private deployment and industry customization, as its core engine. WakeMind has already been connected to large models such as ChatGPT and also supports access to other large models such as Wenxin Yiyan (ERNIE Bot) and Tongyi Qianwen.
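A model layer that can reach several large models usually hides them behind one common interface. The sketch below is a hypothetical illustration of that idea, not WakeMind's actual design: each provider is a plain function from prompt to completion, and the stub lambdas stand in for real API clients (no vendor SDK calls are made or assumed).

```python
from dataclasses import dataclass, field
from typing import Callable, Dict

# A provider is modeled as a plain function: prompt in, completion out.
# Real vendor SDK calls would live inside such functions; none are made here.
Provider = Callable[[str], str]


@dataclass
class ModelRegistry:
    """Hypothetical registry letting one engine call several large models."""
    providers: Dict[str, Provider] = field(default_factory=dict)
    default: str = ""

    def register(self, name: str, provider: Provider, make_default: bool = False) -> None:
        self.providers[name] = provider
        # The first registered model becomes the default unless overridden.
        if make_default or not self.default:
            self.default = name

    def complete(self, prompt: str, model: str = "") -> str:
        name = model or self.default
        if name not in self.providers:
            raise KeyError(f"unknown model: {name}")
        return self.providers[name](prompt)


# Stub providers standing in for real API clients (hypothetical names).
registry = ModelRegistry()
registry.register("chatgpt", lambda p: f"[chatgpt] {p}")
registry.register("wenxin", lambda p: f"[wenxin] {p}")
registry.register("tongyi", lambda p: f"[tongyi] {p}")

print(registry.complete("hello"))                  # [chatgpt] hello
print(registry.complete("hello", model="tongyi"))  # [tongyi] hello
```

Keeping providers as interchangeable callables means adding another model is one `register` call, and product code never depends on a specific vendor.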

Platform layer: WakeMind uses prompt engineering, plugins, LangChain and similar methods to conduct efficient dialogue with large models. Starting from zero-shot learning, prompt and plugin management help the model better understand contextual information; by feeding it industry corpora, the model can quickly learn industry knowledge and reason about industries and vertical fields.
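A minimal sketch of the prompt-plus-plugin pattern described above, written in plain Python rather than with LangChain's API. The prompt is zero-shot (instructions and industry context, no worked examples), and the `CALL <plugin>: <input>` reply convention and the `lookup_project` plugin are hypothetical conventions chosen for illustration.

```python
from typing import Callable, Dict, List

# Hypothetical plugins: named tools the model may ask the platform to call.
PLUGINS: Dict[str, Callable[[str], str]] = {
    "lookup_project": lambda q: f"Project info for '{q}' (stub)",
}


def build_prompt(question: str, industry: str, knowledge: List[str]) -> str:
    """Zero-shot prompt: instructions plus industry context, no worked examples."""
    context = "\n".join(f"- {fact}" for fact in knowledge)
    tools = ", ".join(sorted(PLUGINS))
    return (
        f"You are an assistant for the {industry} industry.\n"
        f"Relevant industry knowledge:\n{context}\n"
        f"Available plugins: {tools}. To use one, reply 'CALL <plugin>: <input>'.\n"
        f"Question: {question}"
    )


def handle_reply(reply: str) -> str:
    """Dispatch a plugin call if the model requested one; otherwise pass the reply through."""
    if reply.startswith("CALL "):
        name, _, arg = reply[len("CALL "):].partition(": ")
        if name in PLUGINS:
            return PLUGINS[name](arg)
    return reply


prompt = build_prompt("Which units are still available?", "real estate",
                      ["Tower A has 120 units", "Pre-sale opened in March"])
print(handle_reply("CALL lookup_project: Tower A"))  # Project info for 'Tower A' (stub)
```

Injecting industry facts into the prompt is the lightweight counterpart of feeding industry corpora: the model gets domain context at inference time without any retraining.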

Application layer: The WakeMind mothership platform supplies the underlying capabilities, and a series of "carrier-based aircraft" (individual applications built on it) bring those capabilities to products and industry scenarios, improving enterprises' internal productivity.

Consider, for example, how the mothership platform empowers Weishu Cloud. When enterprises build and use data assets on the Weishu Cloud platform, they often need many professional data development engineers for business requirements analysis and data development, and the large volume of tedious development tasks lengthens the entire cycle of realizing value from data. With WakeMind, Weishu Cloud can automatically generate the corresponding data query statements from text input alone and execute the query with one click. This greatly improves the efficiency of data query, analysis and development, lowers the technical threshold for using data across the board, and moves toward the goal of making data available to everyone.
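The text-to-query flow can be sketched as: send the schema and question to a model, check the returned SQL, then execute it. In this hypothetical sketch the model call is a stub returning fixed SQL, the `orders` table is invented, and SQLite stands in for the real data platform. The keyword check is a minimal guard only; a read-only database account is a stronger safeguard.

```python
import re
import sqlite3

SCHEMA = "CREATE TABLE orders (id INTEGER, city TEXT, amount REAL)"


def fake_llm_to_sql(question: str, schema: str) -> str:
    """Stand-in for a model call; a real system would send schema + question to an LLM."""
    return "SELECT city, SUM(amount) AS total FROM orders GROUP BY city ORDER BY city"


def is_read_only(sql: str) -> bool:
    """Accept a single SELECT statement only, so generated SQL cannot modify data."""
    stripped = sql.strip().rstrip(";")
    return bool(re.match(r"(?is)^\s*select\b", stripped)) and ";" not in stripped


def ask(conn: sqlite3.Connection, question: str) -> list:
    sql = fake_llm_to_sql(question, SCHEMA)
    if not is_read_only(sql):
        raise ValueError(f"refusing to run non-SELECT SQL: {sql}")
    return conn.execute(sql).fetchall()  # the "one-click" execution step


conn = sqlite3.connect(":memory:")
conn.execute(SCHEMA)
conn.executemany("INSERT INTO orders VALUES (?, ?, ?)",
                 [(1, "Shanghai", 100.0), (2, "Beijing", 50.0), (3, "Shanghai", 25.0)])
result = ask(conn, "Total order amount by city?")
print(result)  # [('Beijing', 50.0), ('Shanghai', 125.0)]
```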

The three major characteristics of the WakeMind model

1) A parameter count better suited to industry and vertical-field scenarios. For AI-generated content to reach human level, it usually has to build on large models through "pre-training plus fine-tuning". WakeData teamed up with a leading industry vendor of multimodal pre-trained large models with hundreds of billions of parameters to compress a WakeMind model with 10 billion parameters. In its focus industries and vertical fields, using P-Tuning v2 reduces the number of parameters that need fine-tuning to about one thousandth of the original, significantly reducing the computation required for fine-tuning.
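The "one thousandth" figure can be sanity-checked with arithmetic. P-Tuning v2 adds trainable prompts at every layer; in prefix-tuning-style implementations without a reparameterization encoder, the trainable count is layers × prefix length × 2 (keys and values) × hidden size. The configuration below is hypothetical, since WakeMind's architecture is not disclosed.

```python
def ptuning_v2_params(num_layers: int, prefix_len: int, hidden_size: int) -> int:
    """Trainable parameters for deep prompt tuning without a reparameterization encoder.

    Each layer gets prefix_len trainable key vectors and prefix_len value vectors,
    each of size hidden_size.
    """
    return num_layers * prefix_len * 2 * hidden_size


# Hypothetical ~10-billion-parameter configuration (illustrative only).
total_params = 10_000_000_000
trainable = ptuning_v2_params(num_layers=40, prefix_len=32, hidden_size=5120)
ratio = trainable / total_params
print(trainable)  # 13107200
print(ratio)      # 0.00131072
```

Under these assumptions about 13 million parameters are trained, roughly 0.13% of the model, which is the order of magnitude the text describes.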

2) Text creation and code generation capabilities specialized for industries and vertical domains.

3) Support for private deployment and industry customization. Leading companies in industries and vertical fields want to deploy large models privately and customize them for their industry. Pre-training effectively with few-shot learning and low computing power consumption has therefore become the technical threshold for industry-customized models. WakeData's accumulated industry and vertical-field data gives its industry large models industry know-how and forms a distinctive competitive advantage.

WakeMind is built on the Transformer architecture and uses the self-instruct method to generate tens of thousands of instruction-following samples. It applies SFT (Supervised Fine-Tuning), RLHF and other techniques to achieve intent alignment. INT8 quantization then significantly reduces the cost of inference, making private deployment of the model feasible.
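The cost saving from INT8 comes from storing each weight in one byte instead of four (FP32) or two (FP16). Below is a minimal sketch of symmetric per-tensor INT8 quantization using NumPy; it is a generic textbook scheme, not WakeMind's actual method. Production LLM quantization typically adds per-channel scales and outlier handling.

```python
import numpy as np


def quantize_int8(w: np.ndarray):
    """Symmetric per-tensor INT8 quantization: w is approximately scale * q, q in [-127, 127]."""
    max_abs = float(np.max(np.abs(w)))
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    q = np.clip(np.round(w / scale), -127, 127).astype(np.int8)
    return q, scale


def dequantize(q: np.ndarray, scale: float) -> np.ndarray:
    """Recover approximate FP32 weights for computation."""
    return q.astype(np.float32) * scale


rng = np.random.default_rng(0)
w = rng.standard_normal((256, 256)).astype(np.float32)
q, scale = quantize_int8(w)
err = float(np.max(np.abs(dequantize(q, scale) - w)))
print(w.nbytes, q.nbytes)  # 262144 65536  (4x smaller than FP32)
print(err <= scale / 2 + 1e-5)
```

Rounding to the nearest integer step bounds the per-weight error by half a quantization step (`scale / 2`), which is why accuracy usually degrades only slightly.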

Large models and industry pre-trained large models

Since OpenAI released ChatGPT, it has sent shockwaves around the world. The large language model (LLM) behind it and RLHF (Reinforcement Learning from Human Feedback), a technique that uses reinforcement learning to optimize a language model based on human feedback, have drawn widespread attention.
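One concrete piece of RLHF is the reward model, trained on human preference pairs. The pairwise loss below, -log σ(r_chosen − r_rejected), is the objective used in InstructGPT-style pipelines. It is shown here as a general illustration, not as WakeData's implementation, and the reinforcement learning step that follows (e.g. PPO) is omitted.

```python
import math


def softplus(x: float) -> float:
    """Numerically stable log(1 + exp(x))."""
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


def reward_pair_loss(r_chosen: float, r_rejected: float) -> float:
    """Pairwise preference loss: -log(sigmoid(r_chosen - r_rejected)).

    Small when the reward model scores the human-preferred answer higher.
    """
    return softplus(r_rejected - r_chosen)


print(reward_pair_loss(0.0, 0.0))  # log(2): the model cannot tell the answers apart
print(reward_pair_loss(2.0, 0.0))  # low loss: preferred answer already ranked higher
print(reward_pair_loss(0.0, 2.0))  # high loss: ranking is wrong
```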

Since its early days, WakeData has released 11 AI models across NLP, computer vision (CV), speech and other fields, of which the large NLP semantic analysis model has the richest set of application scenarios. For example, in industries with low purchase frequency and high average order value, such as real estate, automotive and brand retail, SCRM is one of the most effective ways to manage prospective and existing customers. Through accumulated industry corpora and targeted pre-training, WakeData gives its AI a deep understanding of the industry, so it can respond quickly to customer questions in conversations around the clock. It can also automatically extract customer tags from conversation content, sharpening the resolution of customer profiles.
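Automatic tag extraction is often done by asking the model for structured JSON and then validating the reply against a fixed tag vocabulary, so that invented or malformed tags never reach customer profiles. This is a hypothetical sketch: the real-estate vocabulary is made up, and the model reply is hard-coded rather than produced by an actual model call.

```python
import json

# Hypothetical tag vocabulary for a real-estate SCRM scenario.
ALLOWED_TAGS = {
    "budget": {"<3M", "3-5M", ">5M"},
    "layout": {"2BR", "3BR", "4BR+"},
    "intent": {"first_home", "upgrade", "investment"},
}


def tag_prompt(conversation: str) -> str:
    """Ask the model for JSON tags drawn only from the allowed vocabulary."""
    vocab = json.dumps({k: sorted(v) for k, v in ALLOWED_TAGS.items()})
    return (
        "Extract customer tags from the conversation below. "
        f"Reply with a JSON object using only these keys and values: {vocab}\n\n"
        f"{conversation}"
    )


def parse_tags(model_output: str) -> dict:
    """Keep only well-formed tags from the vocabulary; drop malformed or invented ones."""
    try:
        raw = json.loads(model_output)
    except json.JSONDecodeError:
        return {}
    if not isinstance(raw, dict):
        return {}
    return {
        key: value for key, value in raw.items()
        if key in ALLOWED_TAGS and isinstance(value, str) and value in ALLOWED_TAGS[key]
    }


# A reply as a model might produce it; "mood" is not in the vocabulary and is dropped.
reply = '{"budget": "3-5M", "layout": "3BR", "mood": "happy"}'
print(parse_tags(reply))  # {'budget': '3-5M', 'layout': '3BR'}
```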

At WakeData, AI large model capabilities now cover everything from building the underlying customer data assets, through mid-level customer business journeys and business rules, to upper-level multi-touchpoint marketing links, bringing the ability to "reduce costs, improve efficiency, and empower" the entire vertical field of digital customer management. For example, on a CDP (customer data platform), operators used to need cumbersome rule design to select suitable target customer groups. Now, with a simple natural-language description and dialogue, AI can help find the corresponding target customer groups, greatly reducing the cost of using and learning the platform and greatly improving efficiency and the interactive experience.
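One way to turn a plain-language description into a customer segment is to have the model emit the same kind of filter rules an operator would otherwise design by hand, then evaluate those rules deterministically. In this hypothetical sketch the JSON rule format, field names and customer records are invented, and `fake_llm_segment` stands in for a real model call.

```python
import json
import operator
from typing import Dict, List

# Comparison operators the rule format is allowed to use.
OPS = {"==": operator.eq, "!=": operator.ne, ">": operator.gt,
       ">=": operator.ge, "<": operator.lt, "<=": operator.le}


def fake_llm_segment(description: str) -> str:
    """Stand-in for a model call that turns a description into JSON filter rules."""
    return json.dumps([
        {"field": "city", "op": "==", "value": "Shanghai"},
        {"field": "total_spend", "op": ">=", "value": 10000},
    ])


def select_segment(customers: List[Dict], rules_json: str) -> List[Dict]:
    """Return customers matching every rule; reject operators outside the allow-list."""
    rules = json.loads(rules_json)
    for rule in rules:
        if rule["op"] not in OPS:
            raise ValueError(f"unsupported operator: {rule['op']}")
    return [
        c for c in customers
        if all(rule["field"] in c and OPS[rule["op"]](c[rule["field"]], rule["value"])
               for rule in rules)
    ]


customers = [
    {"id": 1, "city": "Shanghai", "total_spend": 12000},
    {"id": 2, "city": "Beijing", "total_spend": 20000},
    {"id": 3, "city": "Shanghai", "total_spend": 5000},
]
rules = fake_llm_segment("Shanghai customers who have spent at least 10,000")
selected_ids = [c["id"] for c in select_segment(customers, rules)]
print(selected_ids)  # [1]
```

Having the model produce rules rather than the final list keeps the selection auditable: an operator can read and adjust the rules before they run.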

In MA (marketing automation), WakeData's products are connected to touchpoints such as the WeChat ecosystem, Douyin and Xiaohongshu, support automated construction of marketing journeys, and provide a rich library of journey templates, enabling "real-time, one-to-one, personalized" customer engagement. An important part of this is generating personalized marketing materials, including text, images, and mixed text-and-image content; AI large models can greatly improve the efficiency and quality of this work while reducing costs.
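Personalized material generation typically combines three inputs in one prompt: who the contact is, which channel the material is for, and which campaign it serves. The sketch below builds such a prompt. The channel style descriptions are hypothetical placeholders, not the platforms' actual format rules, and the resulting prompt would be sent to whatever model the platform uses.

```python
from dataclasses import dataclass

# Hypothetical per-channel style constraints; real platform requirements may differ.
CHANNEL_STYLE = {
    "wechat": "a short service message, at most 60 characters",
    "xiaohongshu": "a lifestyle note with a catchy title and 2-3 hashtags",
    "douyin": "a 15-second video script with a hook in the first line",
}


@dataclass
class Contact:
    name: str
    last_purchase: str
    interest: str


def material_prompt(contact: Contact, channel: str, campaign: str) -> str:
    """Build a one-to-one prompt combining contact data, channel style and campaign."""
    if channel not in CHANNEL_STYLE:
        raise ValueError(f"unsupported channel: {channel}")
    return (
        f"Write {CHANNEL_STYLE[channel]} for the campaign '{campaign}'. "
        f"Address {contact.name}, who recently bought {contact.last_purchase} "
        f"and is interested in {contact.interest}."
    )


contact = Contact(name="Li Na", last_purchase="running shoes", interest="marathon training")
prompt = material_prompt(contact, "wechat", "Spring Running Festival")
print(prompt)
```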

In loyalty membership, when a membership system spans different industries and business formats, unifying membership rules and member assets becomes a challenge. WakeData's AI large model includes a prompt engine built through training on extensive industry experience and corpora. Users describe, in a simple conversation, the characteristics and business demands of members in different business formats, and the engine automatically generates mapping logic and combined solutions for the different membership rules.
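The "mapping logic" such an engine produces can be pictured as a small, reviewable spec: an exchange rate for points plus a table mapping tiers. This is a hypothetical sketch; the mall-to-hotel scenario, rate, and tier names are invented. `Fraction` keeps the rate exact, and fractional points are truncated toward zero by `int()`.

```python
from dataclasses import dataclass
from fractions import Fraction
from typing import Dict, Tuple


@dataclass
class MembershipMapping:
    """Hypothetical mapping spec a prompt engine might draft for a human to review."""
    points_rate: Fraction       # target points per source point
    tier_map: Dict[str, str]    # source tier -> target tier


def convert_member(points: int, tier: str, mapping: MembershipMapping) -> Tuple[int, str]:
    """Translate a member's points and tier from one business format to another."""
    new_points = int(points * mapping.points_rate)  # truncates fractional points
    return new_points, mapping.tier_map[tier]


# Example: 2 mall points are worth 1 hotel point; tiers are renamed.
mall_to_hotel = MembershipMapping(
    points_rate=Fraction(1, 2),
    tier_map={"silver": "classic", "gold": "elite"},
)
print(convert_member(1001, "gold", mall_to_hotel))  # (500, 'elite')
```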

Practice in industries and vertical fields has proven the value of large models.

Three stages of WakeMind’s business path

1) 2018-2021: exploring applications and commercialization of in-house models. Building on WakeData's three foundational product lines, Weishu Cloud, Weike Cloud and Kunlun Platform, the company comprehensively explored and applied its self-developed NLP large model in vertical fields such as real estate, new retail, automotive and digital marketing.

2) 2022-2023: releasing WakeMind and building the mothership platform. WakeData is working with strategic partners to accelerate research and development of its industry large model, WakeMind. Through the mothership platform, WakeMind gains industry and vertical-field customization, private deployment, and the ability to access and manage general-purpose large models, which complement the scenarios its own models cannot cover.

3) 2023 and beyond: full-scale application of WakeMind. Building on the mothership platform's capabilities, WakeMind is being fully connected to product lines such as Weike Cloud, Weishu Cloud and Kunlun Platform. Through accumulated industry knowledge, optimization for industry scenarios, and industry prompt-engineering training, WakeMind will further strengthen its industry capabilities and launch larger-scale commercial applications in real estate, new retail, automotive and other industries. At the same time, WakeData has begun its own productivity revolution based on the capabilities of the WakeMind mothership platform.

How WakeData uses AI to liberate productivity

WakeData's mission is "waking up data," and it has worked in the big data platform field for many years. As a ToB enterprise services company, WakeData sees huge opportunities in how to use large models and applies them in two ways: it integrates large models into its products, and it helps its own designers, programmers and other staff use large models for product development and customer project delivery.

Accessing and applying large models rests on two basic elements: plenty of applicable scenarios, and big data and AI capabilities. WakeData's two main products, Weike Cloud and Weishu Cloud, both make large models easier to adopt. Weike Cloud can connect large model tools to industry digital applications more conveniently and seamlessly, so customers need not worry about the complex configuration and technical optimization behind the application; Weike Cloud can also apply optimized prompt engineering and vertical models to industry scenarios. This product-solution strength is something WakeData has always maintained in its platform applications.

WakeData also divides its large model access products into two categories. The first integrates large models into products and industry business flows; it focuses on optimizing the experience and industry knowledge so that customers can use them quickly, conveniently and effectively. The second deeply optimizes vertical scenarios on top of the product architecture and open-source large models; it better meets large customers' needs for risk resistance and data security, and because the model can be continuously updated and optimized based on industry understanding, it helps these customers stay competitive in their vertical industries.

“Enterprises must integrate large models into digital transformation and digital customer management, and big data and scenarios are the two key elements,” said Li Kechen, founder and CEO of WakeData.

Large models generally need large amounts of data for effective training, so industry data platform capabilities are crucial. The Cyberspace Administration of China recently released the "Measures for the Management of Generative Artificial Intelligence Services (Draft for Comments)", which places particular emphasis on the legal compliance of the sources of training and pre-training data, and on the authenticity, accuracy, objectivity and diversity of that data. Valuable application scenarios are an important factor in developing and commercializing large models; a "scenario" here means what the trained model is for and whether it can create core value for the business while remaining legally compliant.

Li Kechen believes that scenarios are the environments in which large models are used, while big data and AI technology provide the foundational capabilities; companies that have industry scenarios and industry data will acquire large model capabilities faster, more effectively, and with more agility.

WakeData's two core product lines embody the accumulation of these two elements. As a new-generation data platform, Weishu Cloud offers powerful end-to-end big data processing. As a new-generation digital customer management platform, Weike Cloud (Keyun) includes suites such as CDP, MA, SCRM and Loyalty and covers a large number of business application scenarios; through its strategy of deep cultivation in vertical industries, it holds stronger industry know-how and more valuable training sample data. Weishu Cloud released version 5.0 in 2022, with industry-leading capabilities in data integration, data computation, data analysis and governance, data visualization, and data assetization. These data-side advantages have also become competitive barriers for industry AI applications in the era of large models.

“A working atmosphere that promotes the liberation of productivity has initially taken shape within WakeData. WakeMind capabilities are already being used in product design, development and testing, marketing operations and other areas, and these initial applications have improved staff efficiency by 20%. Besides accelerating product research and development, this also makes customer project delivery more efficient and saves time and cost when implementing customers' digital projects,” said Qian Yong, CTO of WakeData.

The Kunlun Platform consists of three parts: a basic cloud, a development cloud and an integration cloud. It is a key cloud-native technology foundation for WakeData's product development, implementation and delivery. With WakeMind empowering the Kunlun Platform development cloud, engineers are already exploring applications such as "generating architecture designs and data model designs from product documentation, then helping generate code and check its correctness." For example, when promoting domain-driven design (DDD), WakeMind can help engineers learn DDD and assist with domain modeling; in data modeling, data models can be created, modified, automatically completed and refined through natural-language interaction, and SQL statements can be produced quickly; and during product development, entering product documents yields an extracted product glossary with detailed explanations.
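A common pattern for natural-language data modeling is to have the model edit a structured intermediate data model rather than raw SQL, then render the SQL from that model and validate it by executing it. The `Entity` class below is a hypothetical intermediate format (not Kunlun Platform's), and SQLite is used only to check that the generated DDL is valid. Identifiers are not quoted, so this sketch assumes simple names.

```python
import sqlite3
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class Entity:
    """Hypothetical intermediate data model a model could produce from a description."""
    name: str
    columns: List[Tuple[str, str]] = field(default_factory=list)  # (column name, SQL type)
    primary_key: str = "id"

    def add_column(self, col: str, sql_type: str) -> None:
        """A 'modify' request such as 'add a phone number field' becomes one call."""
        self.columns.append((col, sql_type))

    def to_ddl(self) -> str:
        """Render the model as a CREATE TABLE statement."""
        cols = [f"{self.primary_key} INTEGER PRIMARY KEY"]
        cols += [f"{c} {t}" for c, t in self.columns]
        return f"CREATE TABLE {self.name} ({', '.join(cols)})"


customer = Entity("customer", [("name", "TEXT"), ("city", "TEXT")])
customer.add_column("phone", "TEXT")
ddl = customer.to_ddl()
print(ddl)
# CREATE TABLE customer (id INTEGER PRIMARY KEY, name TEXT, city TEXT, phone TEXT)

# Validate the generated DDL by actually executing it in an in-memory database.
conn = sqlite3.connect(":memory:")
conn.execute(ddl)
```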

Ordinary engineers can already significantly improve their development efficiency in areas such as generating rule code, automatically generating unit tests, and code review and optimization.
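Generated unit tests are only useful once they have been run. One simple safeguard is to execute each candidate, keep those that pass, and flag the rest for human review, since a failure can mean either a wrong test or a real bug. In this hypothetical sketch `slugify` and the candidate tests are invented, and `exec` should only be used on generated code inside an isolated sandbox.

```python
from typing import Dict, List


def slugify(title: str) -> str:
    """Function under test: lowercase and join words with hyphens."""
    return "-".join(title.lower().split())


# Candidate tests as a model might generate them (hypothetical); the last one is wrong.
CANDIDATE_TESTS = [
    "assert slugify('Hello World') == 'hello-world'",
    "assert slugify('  A  B ') == 'a-b'",
    "assert slugify('Hello') == 'Hello'",
]


def keep_passing(tests: List[str], namespace: Dict[str, object]) -> List[str]:
    """Run each candidate; keep passing ones and flag the rest for human review."""
    passed = []
    for src in tests:
        try:
            # Only run generated code like this inside an isolated sandbox.
            exec(src, dict(namespace))
        except Exception as exc:  # a failing assert or broken generated code
            print(f"needs review ({type(exc).__name__}): {src}")
        else:
            passed.append(src)
    return passed


kept = keep_passing(CANDIDATE_TESTS, {"slugify": slugify})
print(len(kept))  # 2
```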

WakeMind provides a copywriting generation assistant available to everyone.

The marketing department uses AI text-to-video to quickly build a marketing growth matrix.

AIGC's empowerment of industries and vertical fields is an inevitable trend, and it has been WakeData's core development path since its founding. WakeData has always been open to ChatGPT-like technologies and services and actively participates in them. With its strategy of focusing on industry-specific operations, WakeData has a firm grasp of its path to value and commercialization. Its industry large model, WakeMind, will help more enterprises transform themselves, improve efficiency, and keep unlocking productivity in the AIGC era.

The above is the detailed content of Integrating GPT large model products, WakeData new round of product upgrades. For more information, please follow other related articles on the PHP Chinese website!

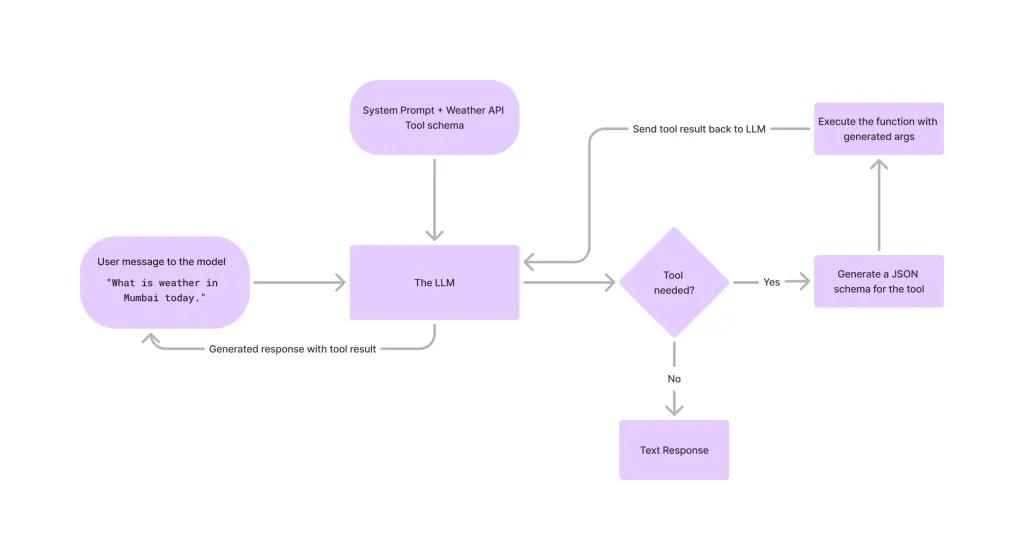

Tool Calling in LLMsApr 14, 2025 am 11:28 AM

Tool Calling in LLMsApr 14, 2025 am 11:28 AMLarge language models (LLMs) have surged in popularity, with the tool-calling feature dramatically expanding their capabilities beyond simple text generation. Now, LLMs can handle complex automation tasks such as dynamic UI creation and autonomous a

How ADHD Games, Health Tools & AI Chatbots Are Transforming Global HealthApr 14, 2025 am 11:27 AM

How ADHD Games, Health Tools & AI Chatbots Are Transforming Global HealthApr 14, 2025 am 11:27 AMCan a video game ease anxiety, build focus, or support a child with ADHD? As healthcare challenges surge globally — especially among youth — innovators are turning to an unlikely tool: video games. Now one of the world’s largest entertainment indus

UN Input On AI: Winners, Losers, And OpportunitiesApr 14, 2025 am 11:25 AM

UN Input On AI: Winners, Losers, And OpportunitiesApr 14, 2025 am 11:25 AM“History has shown that while technological progress drives economic growth, it does not on its own ensure equitable income distribution or promote inclusive human development,” writes Rebeca Grynspan, Secretary-General of UNCTAD, in the preamble.

Learning Negotiation Skills Via Generative AIApr 14, 2025 am 11:23 AM

Learning Negotiation Skills Via Generative AIApr 14, 2025 am 11:23 AMEasy-peasy, use generative AI as your negotiation tutor and sparring partner. Let’s talk about it. This analysis of an innovative AI breakthrough is part of my ongoing Forbes column coverage on the latest in AI, including identifying and explaining

TED Reveals From OpenAI, Google, Meta Heads To Court, Selfie With MyselfApr 14, 2025 am 11:22 AM

TED Reveals From OpenAI, Google, Meta Heads To Court, Selfie With MyselfApr 14, 2025 am 11:22 AMThe TED2025 Conference, held in Vancouver, wrapped its 36th edition yesterday, April 11. It featured 80 speakers from more than 60 countries, including Sam Altman, Eric Schmidt, and Palmer Luckey. TED’s theme, “humanity reimagined,” was tailor made

Joseph Stiglitz Warns Of The Looming Inequality Amid AI Monopoly PowerApr 14, 2025 am 11:21 AM

Joseph Stiglitz Warns Of The Looming Inequality Amid AI Monopoly PowerApr 14, 2025 am 11:21 AMJoseph Stiglitz is renowned economist and recipient of the Nobel Prize in Economics in 2001. Stiglitz posits that AI can worsen existing inequalities and consolidated power in the hands of a few dominant corporations, ultimately undermining economic

What is Graph Database?Apr 14, 2025 am 11:19 AM

What is Graph Database?Apr 14, 2025 am 11:19 AMGraph Databases: Revolutionizing Data Management Through Relationships As data expands and its characteristics evolve across various fields, graph databases are emerging as transformative solutions for managing interconnected data. Unlike traditional

LLM Routing: Strategies, Techniques, and Python ImplementationApr 14, 2025 am 11:14 AM

LLM Routing: Strategies, Techniques, and Python ImplementationApr 14, 2025 am 11:14 AMLarge Language Model (LLM) Routing: Optimizing Performance Through Intelligent Task Distribution The rapidly evolving landscape of LLMs presents a diverse range of models, each with unique strengths and weaknesses. Some excel at creative content gen

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Safe Exam Browser

Safe Exam Browser is a secure browser environment for taking online exams securely. This software turns any computer into a secure workstation. It controls access to any utility and prevents students from using unauthorized resources.

MantisBT

Mantis is an easy-to-deploy web-based defect tracking tool designed to aid in product defect tracking. It requires PHP, MySQL and a web server. Check out our demo and hosting services.

SecLists

SecLists is the ultimate security tester's companion. It is a collection of various types of lists that are frequently used during security assessments, all in one place. SecLists helps make security testing more efficient and productive by conveniently providing all the lists a security tester might need. List types include usernames, passwords, URLs, fuzzing payloads, sensitive data patterns, web shells, and more. The tester can simply pull this repository onto a new test machine and he will have access to every type of list he needs.

ZendStudio 13.5.1 Mac

Powerful PHP integrated development environment