The result of all these human decisions is that complex models end up being "designed intuitively" rather than systematically, said Frank Hutter, head of the Machine Learning Laboratory at the University of Freiburg in Germany.

A growing field called automated machine learning (autoML) aims to eliminate this guesswork. The idea is to let algorithms take over the decisions researchers currently have to make when designing models. Ultimately, these technologies could make machine learning more accessible.

Although automated machine learning has been around for nearly a decade, researchers are still working to refine it. A new conference taking place in Baltimore today showcases efforts to make autoML more accurate and more efficient.

There is strong interest in autoML’s potential to simplify machine learning. Companies like Amazon and Google already offer low-code machine learning tools that build on autoML techniques. If these techniques become more effective, they could speed up research and put machine learning within reach of more people.

The idea is to allow people to choose the question they want to ask, point the autoML tool at it, and get the results they want.

This vision is the "holy grail of computer science," said Lars Kotthoff, an assistant professor of computer science at the University of Wyoming and a conference organizer. "You specify the problem, and the computer knows how to solve it, and that's all you do." But first, researchers must figure out how to make these techniques more time- and energy-efficient.

What can automated machine learning solve?

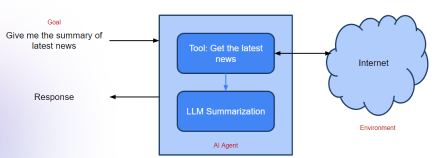

At first glance, the concept of autoML may seem redundant: after all, machine learning is already about automating the process of deriving insights from data. But autoML algorithms operate at a level of abstraction above the underlying machine learning models and rely only on those models' outputs for guidance. Because of this, they can save time and computation.
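
Here is a minimal sketch of random search, one of the simplest strategies an autoML tool can use. It makes "operating one level above the model" concrete. The search loop treats each model as a black box and sees only the score that an `evaluate` function reports back. The names `random_search` and `toy_evaluate` are hypothetical, and so is the search space. `toy_evaluate` is a cheap stand-in for actually training a model. Production autoML systems typically use smarter strategies than random sampling.

```python
import random
from typing import Callable, Dict, List, Tuple

Config = Dict[str, float]


def random_search(
    evaluate: Callable[[Config], float],
    space: Dict[str, List[float]],
    n_trials: int = 20,
    seed: int = 0,
) -> Tuple[Config, float, List[Tuple[Config, float]]]:
    """Black-box search: the loop never looks inside the model, only at the
    score that `evaluate` reports for each configuration."""
    rng = random.Random(seed)
    history: List[Tuple[Config, float]] = []
    for _ in range(n_trials):
        # Sample one value per hyperparameter from its list of candidates.
        config = {name: rng.choice(values) for name, values in space.items()}
        history.append((config, evaluate(config)))
    best_config, best_score = max(history, key=lambda item: item[1])
    return best_config, best_score, history


def toy_evaluate(config: Config) -> float:
    # Hypothetical stand-in for "train a model with this config and return its
    # validation accuracy"; it peaks at learning_rate=0.1, depth=4.
    return 1.0 - (config["learning_rate"] - 0.1) ** 2 - 0.01 * (config["depth"] - 4) ** 2


space = {"learning_rate": [0.001, 0.01, 0.1, 1.0], "depth": [2, 4, 8]}
best, score, history = random_search(toy_evaluate, space, n_trials=30)
print(best, round(score, 4))
```

The loop only consumes scores, so the same code works whether `evaluate` trains a neural network, a random forest, or anything else. That property is what lets autoML sit on top of existing models.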

Researchers can apply autoML technology to pre-trained models to gain new insights without wasting computing power duplicating existing research.

For example, Mehdi Bahrami, a research scientist at Fujitsu Research of America, and his co-authors presented recent work on how to use the BERT-sort algorithm with different pre-trained models, adapting those models to new purposes.

BERT-sort is an algorithm that can work out what is called "semantic order" when trained on a data set. For example, given movie review data, it learns that "great" movies rank higher than "good" and "bad" movies.
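
Why does semantic order matter? Many models need categorical values turned into numbers, and an arbitrary encoding (alphabetical, for instance) throws away the ranking the words carry. In the minimal sketch below, the semantic order is hard-coded as an assumption. Inferring that order automatically is the part BERT-sort is meant to handle. `ordinal_encode` is a hypothetical helper, not part of BERT-sort itself.

```python
from typing import Dict, List, Sequence


def ordinal_encode(values: Sequence[str], order: Sequence[str]) -> List[int]:
    """Replace each category with its position in the given order."""
    rank: Dict[str, int] = {label: i for i, label in enumerate(order)}
    return [rank[v] for v in values]


reviews = ["good", "terrible", "great", "bad", "excellent"]

# Naive encoding: alphabetical order, which ignores what the words mean.
alphabetical = ordinal_encode(reviews, sorted(set(reviews)))
# Assumed semantic order (worst to best), the kind of order BERT-sort aims to infer.
semantic = ordinal_encode(reviews, ["terrible", "bad", "good", "great", "excellent"])

print(alphabetical)  # [2, 4, 3, 0, 1]: "excellent" ends up below "good"
print(semantic)      # [2, 0, 3, 1, 4]: higher number means a better review
```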

With the help of autoML techniques, the learned semantic order can also be generalized to classify cancer diagnoses and even foreign-language text, reducing both time and computation.

"BERT requires months of computation and is very expensive, like $1 million to generate the model and repeat the process," Bahrami said. "So if everyone wants to do the same thing, that's expensive. It's not energy efficient, and it's not sustainable for the world."

Despite the field's promise, researchers are still looking for ways to make autoML techniques more computationally efficient. Neural architecture search (NAS) is one example: many different models are built and tested to find the best fit, and the energy needed to complete all those iterations can be significant.
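
A back-of-the-envelope sketch shows why exhaustive search gets expensive. The number of candidate architectures is the product of the options for each design decision, and each candidate needs its own training run. The search space and the 50-epoch training budget below are hypothetical numbers chosen for illustration, not figures from any real NAS system.

```python
import math
from itertools import product
from typing import Dict, Sequence


def search_space_size(choices: Dict[str, Sequence]) -> int:
    """Number of distinct candidates: the product of each decision's option count."""
    return math.prod(len(options) for options in choices.values())


EPOCHS_PER_CANDIDATE = 50  # hypothetical training budget for every candidate

# Hypothetical architecture search space with three design decisions.
space = {"depth": [2, 4, 8], "width": [64, 128, 256], "activation": ["relu", "tanh"]}
n_small = search_space_size(space)
assert n_small == len(list(product(*space.values())))  # enumerating agrees
print(n_small, n_small * EPOCHS_PER_CANDIDATE)  # 18 900

# Adding one more decision with four options multiplies the cost by four.
space["learning_rate"] = [1e-1, 1e-2, 1e-3, 1e-4]
n_large = search_space_size(space)
print(n_large, n_large * EPOCHS_PER_CANDIDATE)  # 72 3600
```

Real search spaces have far more decisions and options, so the count, and the energy bill, grows multiplicatively.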

AutoML can also be applied to machine learning algorithms that do not involve neural networks, such as random decision forests or support vector machines used to classify data. Research in these areas is ongoing, and many code libraries are already available for people who want to incorporate autoML techniques into their projects.

Hutter said the next step is to use autoML to quantify uncertainty and to address questions of trustworthiness and fairness in algorithms. In this vision, criteria for trustworthiness and fairness would be treated like any other machine learning constraint, such as accuracy. AutoML could then catch and automatically correct biases found in these algorithms before they are released.
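
One way to read "fairness as just another constraint" is constrained model selection. Among candidate models, discard any whose fairness gap exceeds a threshold, then pick the most accurate of the rest. The sketch below assumes demographic parity as the fairness criterion: the gap in positive-prediction rates between groups. That is only one of several possible criteria. The helper names, candidate models, and data are hypothetical.

```python
from typing import Dict, List, Optional, Sequence


def positive_rate_gap(preds: Sequence[int], groups: Sequence[str]) -> float:
    """Demographic parity gap: largest difference in the share of positive
    predictions between any two groups."""
    rates = []
    for group in set(groups):
        members = [p for p, g in zip(preds, groups) if g == group]
        rates.append(sum(members) / len(members))
    return max(rates) - min(rates)


def select_model(
    candidates: Dict[str, List[int]],
    labels: Sequence[int],
    groups: Sequence[str],
    max_gap: float,
) -> Optional[str]:
    """Pick the most accurate candidate whose fairness gap is within max_gap.
    Fairness acts as a hard constraint; accuracy is the objective."""
    best_name, best_acc = None, -1.0
    for name, preds in candidates.items():
        if positive_rate_gap(preds, groups) > max_gap:
            continue  # violates the fairness constraint, so it is never chosen
        acc = sum(p == y for p, y in zip(preds, labels)) / len(labels)
        if acc > best_acc:
            best_name, best_acc = name, acc
    return best_name


labels = [1, 1, 1, 0, 0, 0, 1, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
candidates = {
    "m1": [1, 1, 1, 0, 0, 0, 1, 0],  # 100% accurate, but a gap of 0.5
    "m2": [1, 1, 0, 0, 0, 1, 1, 0],  # 75% accurate, with a gap of 0.0
}
print(select_model(candidates, labels, groups, max_gap=0.1))  # m2
print(select_model(candidates, labels, groups, max_gap=1.0))  # m1
```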

Continuing progress in neural architecture search

But for applications like deep learning, autoML still has a long way to go. The data used to train deep learning models, such as images, documents, and recorded speech, is often dense and complex, and processing it takes enormous computing power. The cost and time of training these models can be prohibitive for anyone except researchers at large companies with deep pockets.

One competition at the conference asks participants to develop energy-efficient alternative algorithms for neural architecture search. That is quite a challenge, because neural architecture search is notorious for its computational demands. It automatically cycles through countless deep learning models to help researchers choose the right one for their application, but the process can take months and cost more than a million dollars.

The goal of these alternative algorithms, known as zero-cost proxies, is to make neural architecture search more accessible and greener by drastically cutting its computational requirements. Their results take seconds to compute, not months. These techniques are still in the early stages of development and are often unreliable, but machine learning researchers predict they could make the model selection process more efficient.
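
Training-free scoring methods of this kind are usually called zero-cost proxies, and different proxies measure different things. The sketch below follows the idea behind one published proxy, NASWOT ("Neural Architecture Search without Training," Mellor et al.). The procedure has three steps:

- Pass a small batch through each untrained candidate network.
- Record which ReLU units fire for each input.
- Score the network by how distinguishable those activation patterns are, using the log-determinant of a similarity kernel.

This is a simplified, dependency-free sketch under stated assumptions: plain fully connected ReLU layers without biases, and a random batch standing in for real data. The candidate architectures and helper names are hypothetical, and this is not necessarily a method used in the competition.

```python
import math
import random
from typing import List, Sequence


def activation_codes(widths: Sequence[int], batch: List[List[float]], seed: int = 0) -> List[List[int]]:
    """Run a batch through an untrained, randomly initialised ReLU network and
    record, per input, which hidden units fire (1) and which do not (0)."""
    rng = random.Random(seed)
    layers, in_dim = [], len(batch[0])
    for width in widths:
        std = math.sqrt(2.0 / in_dim)  # He-style initialisation; never trained
        layers.append([[rng.gauss(0.0, std) for _ in range(in_dim)] for _ in range(width)])
        in_dim = width
    codes = []
    for x in batch:
        h, code = x, []
        for weights in layers:
            pre = [sum(w * v for w, v in zip(row, h)) for row in weights]
            code.extend(1 if p > 0 else 0 for p in pre)
            h = [max(p, 0.0) for p in pre]
        codes.append(code)
    return codes


def log_abs_det(matrix: List[List[float]]) -> float:
    """log|det(matrix)| by Gaussian elimination with partial pivoting."""
    a = [row[:] for row in matrix]
    n, total = len(a), 0.0
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(a[r][col]))
        if abs(a[pivot][col]) < 1e-12:
            # Singular, e.g. two inputs share an identical activation pattern.
            return float("-inf")
        a[col], a[pivot] = a[pivot], a[col]
        total += math.log(abs(a[col][col]))
        for r in range(col + 1, n):
            factor = a[r][col] / a[col][col]
            for c in range(col, n):
                a[r][c] -= factor * a[col][c]
    return total


def naswot_score(widths: Sequence[int], batch: List[List[float]], seed: int = 0) -> float:
    """Training-free score: higher roughly means the untrained network already
    separates the inputs into more distinct activation patterns."""
    codes = activation_codes(widths, batch, seed)
    n_units = len(codes[0])
    # Kernel entry = number of units on which two inputs agree
    # (total units minus the Hamming distance between their codes).
    kernel = [[float(n_units - sum(a != b for a, b in zip(ci, cj))) for cj in codes] for ci in codes]
    return log_abs_det(kernel)


data_rng = random.Random(42)
batch = [[data_rng.uniform(-1.0, 1.0) for _ in range(4)] for _ in range(6)]
candidates = [[8], [16, 16], [32, 32, 32]]
ranked = sorted(candidates, key=lambda w: naswot_score(w, batch), reverse=True)
print(ranked)  # best-scoring architecture first; no training was performed
```

Scoring each candidate costs a single forward pass instead of a full training run, which is where the speed-up comes from. The trade-off is reliability: a cheap score may rank architectures differently than full training would.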


What is Graph of Thought in Prompt EngineeringApr 13, 2025 am 11:53 AM

What is Graph of Thought in Prompt EngineeringApr 13, 2025 am 11:53 AMIntroduction In prompt engineering, “Graph of Thought” refers to a novel approach that uses graph theory to structure and guide AI’s reasoning process. Unlike traditional methods, which often involve linear s

Optimize Your Organisation's Email Marketing with GenAI AgentsApr 13, 2025 am 11:44 AM

Optimize Your Organisation's Email Marketing with GenAI AgentsApr 13, 2025 am 11:44 AMIntroduction Congratulations! You run a successful business. Through your web pages, social media campaigns, webinars, conferences, free resources, and other sources, you collect 5000 email IDs daily. The next obvious step is

Real-Time App Performance Monitoring with Apache PinotApr 13, 2025 am 11:40 AM

Real-Time App Performance Monitoring with Apache PinotApr 13, 2025 am 11:40 AMIntroduction In today’s fast-paced software development environment, ensuring optimal application performance is crucial. Monitoring real-time metrics such as response times, error rates, and resource utilization can help main

ChatGPT Hits 1 Billion Users? 'Doubled In Just Weeks' Says OpenAI CEOApr 13, 2025 am 11:23 AM

ChatGPT Hits 1 Billion Users? 'Doubled In Just Weeks' Says OpenAI CEOApr 13, 2025 am 11:23 AM“How many users do you have?” he prodded. “I think the last time we said was 500 million weekly actives, and it is growing very rapidly,” replied Altman. “You told me that it like doubled in just a few weeks,” Anderson continued. “I said that priv

Pixtral-12B: Mistral AI's First Multimodal Model - Analytics VidhyaApr 13, 2025 am 11:20 AM

Pixtral-12B: Mistral AI's First Multimodal Model - Analytics VidhyaApr 13, 2025 am 11:20 AMIntroduction Mistral has released its very first multimodal model, namely the Pixtral-12B-2409. This model is built upon Mistral’s 12 Billion parameter, Nemo 12B. What sets this model apart? It can now take both images and tex

Agentic Frameworks for Generative AI Applications - Analytics VidhyaApr 13, 2025 am 11:13 AM

Agentic Frameworks for Generative AI Applications - Analytics VidhyaApr 13, 2025 am 11:13 AMImagine having an AI-powered assistant that not only responds to your queries but also autonomously gathers information, executes tasks, and even handles multiple types of data—text, images, and code. Sounds futuristic? In this a

Applications of Generative AI in the Financial SectorApr 13, 2025 am 11:12 AM

Applications of Generative AI in the Financial SectorApr 13, 2025 am 11:12 AMIntroduction The finance industry is the cornerstone of any country’s development, as it drives economic growth by facilitating efficient transactions and credit availability. The ease with which transactions occur and credit

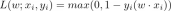

Guide to Online Learning and Passive-Aggressive AlgorithmsApr 13, 2025 am 11:09 AM

Guide to Online Learning and Passive-Aggressive AlgorithmsApr 13, 2025 am 11:09 AMIntroduction Data is being generated at an unprecedented rate from sources such as social media, financial transactions, and e-commerce platforms. Handling this continuous stream of information is a challenge, but it offers an

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Safe Exam Browser

Safe Exam Browser is a secure browser environment for taking online exams securely. This software turns any computer into a secure workstation. It controls access to any utility and prevents students from using unauthorized resources.

MinGW - Minimalist GNU for Windows

This project is in the process of being migrated to osdn.net/projects/mingw, you can continue to follow us there. MinGW: A native Windows port of the GNU Compiler Collection (GCC), freely distributable import libraries and header files for building native Windows applications; includes extensions to the MSVC runtime to support C99 functionality. All MinGW software can run on 64-bit Windows platforms.

SecLists

SecLists is the ultimate security tester's companion. It is a collection of various types of lists that are frequently used during security assessments, all in one place. SecLists helps make security testing more efficient and productive by conveniently providing all the lists a security tester might need. List types include usernames, passwords, URLs, fuzzing payloads, sensitive data patterns, web shells, and more. The tester can simply pull this repository onto a new test machine and he will have access to every type of list he needs.

WebStorm Mac version

Useful JavaScript development tools

Dreamweaver CS6

Visual web development tools