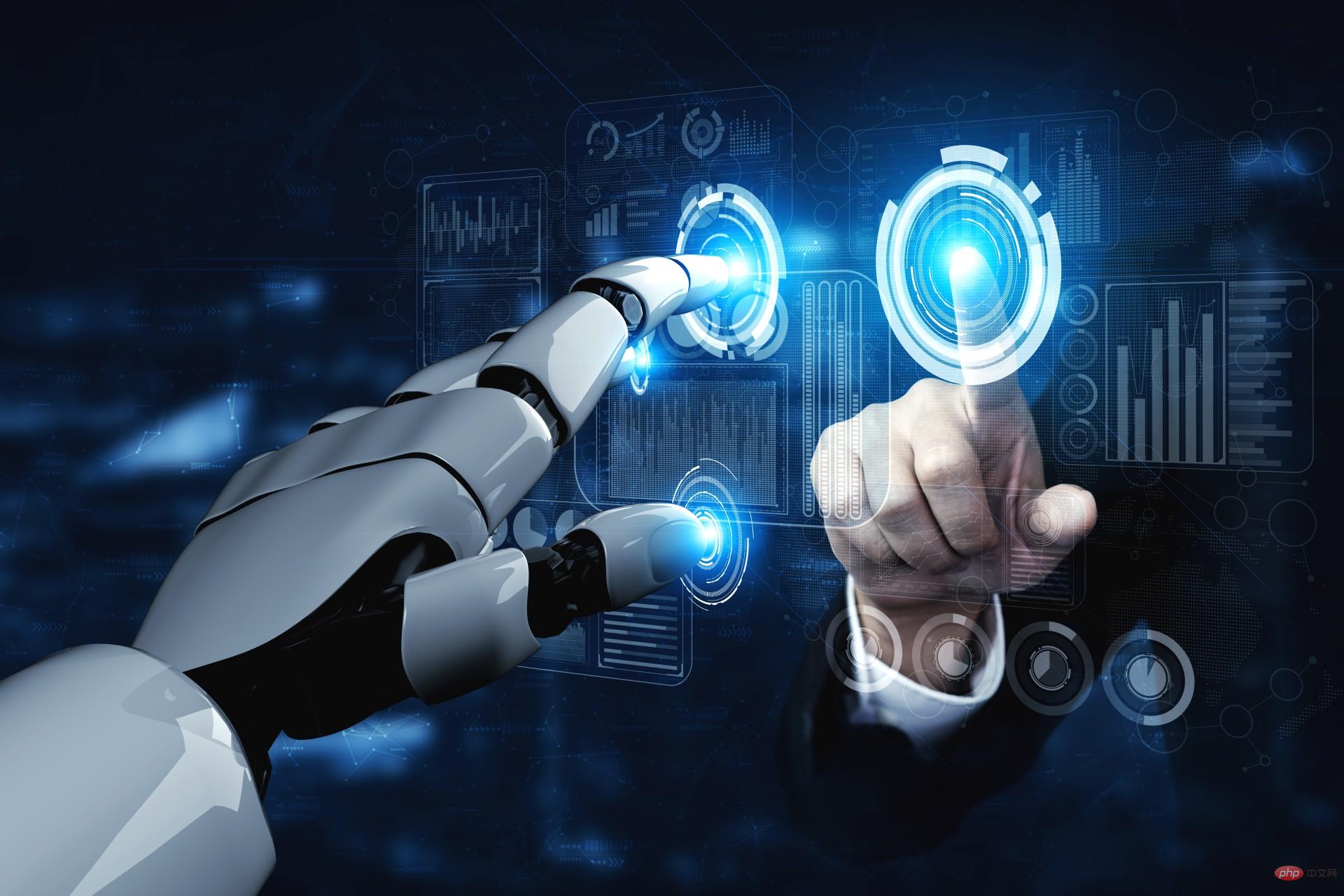

Edge computing has become one of the most talked-about technology trends, and with all this talk, maybe you’re thinking it’s time to invest in intelligent edge technology for your IoT network. However, before you start shopping for new edge devices, let’s discuss what exactly edge computing is, what role it plays, and whether your application can benefit from edge technology.

Edge computing can add a lot of flexibility, speed, and intelligence to IoT networks, but it’s important to understand that edge AI devices don’t solve every challenge faced by intelligent network applications. After looking at how to decide whether edge technology suits an application, we close this article with the key features and considerations buyers should weigh when evaluating edge AI devices.

What Is Edge Computing?

Edge computing takes the Internet of Things to a higher level: at the edge of the cloud, raw data can be converted into value in real time. By redistributing data processing work across the network, it elevates the importance and governance of connected nodes, endpoints and other smart devices.

Edge computing is almost the exact opposite of cloud computing, where data flows from a distributed network, is processed in a centralized data center, and the results are typically transmitted back to the original distributed network to trigger action or produce change. However, transmitting large amounts of data over long distances comes with costs. These costs can be measured in money, but also in other key ways, such as power or time.

This is where edge computing comes in. When power, bandwidth and latency really matter, edge computing may be the answer. Unlike centralized cloud computing, where data may have to travel hundreds of miles before being processed, edge computing processes data at the same network edge location where it is sensed, created or stored. As a result, the latency added by moving data is almost negligible, and power and bandwidth requirements are often significantly reduced.

One of the main enablers of edge computing today is the ability of semiconductor manufacturers to increase processing power without significantly increasing power consumption. Processors at the edge can therefore do more with the data they acquire without using more power, which allows more data to stay at the edge rather than being transferred to the core. In addition to reducing overall system power, this shortens response times and improves data privacy.

Technologies that benefit from this development include artificial intelligence (AI) and machine learning (ML), which also depend on lowering the cost of data acquisition while raising the level of data privacy. Edge processing can address both the cost and the privacy concerns. AI and ML have traditionally required significant resources, far beyond what is typically available in endpoints or smart devices, but advances in hardware and software now make it possible to embed these technologies in smaller, more resource-constrained devices at the edge of the network.

Evaluating Edge Artificial Intelligence

Selecting a platform capable of edge processing, which may include running AI algorithms or ML inference engines, requires careful evaluation. Simple sensors and actuators, even those that are part of the Internet of Things, can be implemented with relatively small integrated devices. More edge processing requires a more powerful platform, possibly with a highly parallel architecture. Typically this means a GPU, but a platform that is too powerful becomes a burden on the limited resources available at the edge of the network.
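One rough way to check whether a platform is powerful enough, but not excessively so, is to compare the compute the AI workload needs with what the platform can actually sustain. The sketch below assumes the workload can be described by frames per second and billions of operations (GOPs) per inference, and that only a fraction of a platform's peak TOPS rating is usable in practice. The function names, the utilization, headroom and oversize thresholds, and all example figures are hypothetical, not vendor guidance.

```python
def required_tops(fps: float, gops_per_inference: float) -> float:
    """Sustained compute the workload needs, in TOPS (10^12 operations per second)."""
    return fps * gops_per_inference / 1000.0


def assess_platform(fps: float, gops_per_inference: float, peak_tops: float,
                    utilization: float = 0.3, headroom: float = 1.5,
                    oversize_ratio: float = 10.0) -> str:
    """Classify a candidate platform as "undersized", "fits" or "oversized".

    All three tuning parameters are hypothetical defaults:
    utilization    - fraction of peak TOPS the model is assumed to achieve in practice
    headroom       - margin reserved for future model or software updates
    oversize_ratio - how far usable compute may exceed the need before the
                     platform is judged an unnecessary burden on power and cost
    """
    needed = required_tops(fps, gops_per_inference) * headroom
    usable = peak_tops * utilization
    if usable < needed:
        return "undersized"
    if usable > needed * oversize_ratio:
        return "oversized"
    return "fits"


# Hypothetical workload: a 30 fps camera pipeline needing 8 GOPs per frame.
for peak in (1.0, 4.0, 32.0):
    print(peak, "TOPS ->", assess_platform(30, 8, peak))
```

With these assumed defaults, the 30 fps workload needs 0.24 TOPS; a 1 TOPS part comes out undersized, a 4 TOPS part fits, and a 32 TOPS part is flagged as oversized for the job.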

It is also important to remember that an edge device is essentially an interface to the real world, so it may need to support common interface technologies such as Ethernet, GPIO, CAN, serial and/or USB. It may also need to support peripherals such as cameras, keyboards and monitors.

The edge can also be a very different environment from the comfort of a climate-controlled data center. Edge devices may be exposed to extremes of temperature, humidity, vibration and even altitude, which affects both the choice of equipment and how it is packaged or enclosed.

Another important consideration is regulatory requirements. Any device that uses radio frequencies to communicate is subject to regulation and may require a license to operate. Some platforms are compliant "out of the box", while others may require more effort. In addition, once deployed, edge devices are unlikely to receive hardware upgrades, so processing power, memory and storage must be sized carefully during the design cycle to leave room for future performance improvements.

That headroom must also cover software upgrades. Unlike hardware, software can be updated while the device is in the field. Over-the-air (OTA) updates are now so common that almost any edge device will need to be designed to support them.
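An OTA update is only safe to install if the device can confirm that the image arrived intact. A minimal sketch of that check follows, assuming the update server publishes a SHA-256 digest alongside each image; the manifest and the function names are hypothetical, and production OTA systems typically also verify a cryptographic signature rather than relying on a hash alone.

```python
import hashlib
import hmac
from typing import BinaryIO


def sha256_hex(stream: BinaryIO, chunk_size: int = 64 * 1024) -> str:
    """Hash an update image in chunks so it never has to fit in RAM at once."""
    digest = hashlib.sha256()
    for chunk in iter(lambda: stream.read(chunk_size), b""):
        digest.update(chunk)
    return digest.hexdigest()


def verify_update(stream: BinaryIO, expected_sha256: str) -> bool:
    """Return True only if the image matches the digest from the (hypothetical) manifest.

    hmac.compare_digest performs a constant-time comparison of the two hex strings.
    """
    actual = sha256_hex(stream)
    return hmac.compare_digest(actual, expected_sha256.strip().lower())
```

On a device this would be called on the downloaded file, for example `with open(path, "rb") as f: ok = verify_update(f, manifest_digest)`, and the update applied only when `ok` is true. Hashing in chunks keeps memory use flat, which matters on constrained edge hardware.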

Choosing the right solution involves carefully evaluating all these general points, as well as studying the specific needs of the application. For example, does the device need to process video or audio, measure only temperature, or monitor other environmental conditions as well? Many of these issues apply to any technology deployed at the edge, but as processing levels and expectations for output increase, the list of requirements will need to grow accordingly.

Benefits of Edge Computing

The fact that it is now technically possible to put AI and ML into edge devices and smart nodes brings significant opportunities. It means that the processing engine is not only closer to the data source, but can also do more with the data it collects.

There are indeed benefits to doing this. First, it can improve productivity, or the efficiency with which data is used. Second, it simplifies the network architecture because less data is moved. Third, it makes proximity to the data center less important. This last point may not seem important if the data center is in a city, but it makes a big difference if the edge of the network is a remote location, such as a farm or water treatment plant.

It is undeniable that data moves quickly across the Internet. Many people may be surprised to learn that a search query can travel around the world twice before the results appear on screen. The total elapsed time may be only a fraction of a second, which feels almost instantaneous to us. But for the machines and smart devices that make up an Internet of connected, intelligent and often autonomous sensors and actuators, every second feels like an hour.

This round-trip delay is a real concern for manufacturers and developers of real-time systems. The time it takes for data to travel to and from the data center is not inconsequential, and it is certainly not instantaneous. Reducing this latency is a key goal of edge computing, which works alongside faster networks; this is where 5G comes into play. However, faster networks alone will not make up for the cumulative network latency expected as more devices come online.

Analysts predict that the number of connected devices may reach 50 billion by 2030. If every one of these devices relied on a data center for its processing, the network would be permanently congested. And if many of them run in a pipeline, each waiting for data from the previous stage to arrive, the total latency quickly becomes very noticeable. Edge computing is arguably the only viable way to relieve that congestion.
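The pipeline effect is simple arithmetic: when each stage must wait for the previous stage's output, per-stage delays add up, and every stage that round-trips to a data center adds a full network round trip. The sketch below compares the same chain of stages processed remotely and locally. The `Stage` type, the strictly sequential model and the millisecond figures are illustrative assumptions, not measurements.

```python
from dataclasses import dataclass


@dataclass
class Stage:
    name: str
    compute_ms: float  # time spent processing at this stage
    remote: bool       # True if the stage sends data to a data center and waits for the reply


def end_to_end_ms(stages: list[Stage], cloud_rtt_ms: float) -> float:
    """Total latency of a strictly sequential pipeline: each stage starts only
    after the previous one has produced its output."""
    total = 0.0
    for stage in stages:
        total += stage.compute_ms
        if stage.remote:
            total += cloud_rtt_ms  # network round trip added for each remote stage
    return total


# Illustrative numbers only: five 2 ms stages and a 40 ms round trip to the cloud.
cloud_chain = [Stage(f"stage{i}", 2.0, remote=True) for i in range(5)]
edge_chain = [Stage(f"stage{i}", 2.0, remote=False) for i in range(5)]
print(end_to_end_ms(cloud_chain, 40.0))  # 210.0
print(end_to_end_ms(edge_chain, 40.0))   # 10.0
```

Under these assumptions the compute time is identical in both chains; the entire 200 ms difference comes from the five network round trips.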

However, while there is a general need for edge computing, its specific benefits still depend heavily on the application. This is where the laws of edge computing apply: they help engineering teams decide whether edge computing suits a specific application.

The 4 Laws of Edge Computing

Law of Physics

The first law is the law of physics, which is immutable. RF energy travels at the speed of light, and light in fiber optic networks travels at roughly two-thirds of that speed. The good news is that this is very fast; the bad news is that it cannot go any faster. So if the round-trip time to a remote data center is still too long for the application, edge computing may be the right choice.
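The physical floor on round-trip time can be estimated directly: distance divided by signal speed, doubled. The sketch below assumes light in optical fiber travels at about two-thirds of its vacuum speed (a common rule of thumb, not an exact figure for every fiber) and deliberately ignores routing, queuing and processing, all of which only add to the result.

```python
SPEED_OF_LIGHT_KM_PER_S = 299_792.458  # exact value in vacuum
FIBER_VELOCITY_FACTOR = 2 / 3          # assumption: rule of thumb for glass fiber


def min_round_trip_ms(distance_km: float,
                      velocity_factor: float = FIBER_VELOCITY_FACTOR) -> float:
    """Lower bound on round-trip time from signal propagation alone.

    Real round trips are longer: routing, queuing, encoding and processing all add delay.
    """
    one_way_s = distance_km / (SPEED_OF_LIGHT_KM_PER_S * velocity_factor)
    return 2 * one_way_s * 1000


for km in (10, 500, 2000):
    print(f"{km:>5} km: at least {min_round_trip_ms(km):.1f} ms round trip")
```

By this estimate every 1,000 km of fiber costs about 10 ms of round-trip time before any work is done on the data, and no amount of extra bandwidth can lower that floor; only moving the processing closer can.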

A ping test provides a simple way to measure the round-trip time of a packet between the two endpoints of a network connection. Online games are often hosted on multiple servers, and players ping them to find the one with the lowest latency, meaning data reaches it fastest. That is what matters with time-sensitive data, even when the difference is only a fraction of a second.
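The game-server approach boils down to collecting several round-trip samples per server, summarizing them, and picking the lowest. The sketch below uses the median of each server's samples so that a single slow reply does not decide the outcome. How the samples are gathered (ICMP ping, TCP connect time, and so on) is left out, and the server names and measurements are hypothetical.

```python
from statistics import median


def lowest_latency_server(samples_ms: dict[str, list[float]]) -> str:
    """Pick the server with the lowest median round-trip time.

    samples_ms maps a server name to its measured RTTs in milliseconds; lost
    packets are simply absent. The median keeps one slow reply from skewing
    the choice.
    """
    medians = {name: median(rtts) for name, rtts in samples_ms.items() if rtts}
    if not medians:
        raise ValueError("no server returned any samples")
    return min(medians, key=medians.get)


# Hypothetical measurements, e.g. from several pings per server.
samples = {
    "server-a": [38.0, 41.0, 39.5],
    "server-b": [22.0, 250.0, 23.0],  # one outlier, still the fastest by median
    "server-c": [],                    # no replies
}
print(lowest_latency_server(samples))  # server-b
```

Using the mean instead would rank server-b last here (about 98 ms versus 39.5 ms for server-a), which shows why a robust summary is worth the extra line.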

Delay does not depend solely on the transport medium, either. At each end, encoders and decoders in the physical layer convert electrical signals into whatever form of energy the link uses, and then convert them back again. All of this takes time: even with processors running at gigahertz speeds, the delay is finite and grows with the amount of data being moved.
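The dependence on data volume can be made concrete by adding serialization time (the payload size in bits divided by the link rate) to the propagation time. The sketch below is a simplified model assuming a single link at a fixed rate, with no protocol overhead, encoder/decoder latency, queuing or retransmissions; the link speed and payload sizes are hypothetical.

```python
def transfer_time_ms(payload_bytes: int, link_mbps: float,
                     one_way_propagation_ms: float) -> float:
    """One-way delay = serialization time + propagation time.

    Simplified model: a single link at a fixed rate, with no protocol overhead,
    encoder/decoder latency, queuing or retransmissions.
    """
    serialization_ms = payload_bytes * 8 / (link_mbps * 1_000_000) * 1000
    return serialization_ms + one_way_propagation_ms


# Hypothetical uplink: 20 Mbit/s with 5 ms propagation delay.
raw_frame = transfer_time_ms(2_000_000, 20, 5)  # a ~2 MB uncompressed image
summary = transfer_time_ms(200, 20, 5)         # a small result message
print(f"raw frame: {raw_frame:.1f} ms, summary: {summary:.2f} ms")
```

On this assumed link, shipping the raw image takes around 805 ms while a 200-byte result takes about 5 ms, almost all of it propagation. Sending results rather than raw data is where edge processing recovers most of the delay.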

Law of Economics

Cloud processing may be more flexible, but its costs become harder to predict as demand for processing and storage resources soars. Margins are always slim, and if the cost of processing data in the cloud suddenly rises, it could make the difference between profit and loss.

The cost of cloud services starts with the cost of buying or renting a server, rack or blade. This depends on the number of CPU cores, the amount of RAM or persistent storage required, and the service level: guaranteed uptime costs more than a service with no guarantee. Basic network bandwidth is essentially free, but a guaranteed minimum bandwidth must be paid for, and this needs to be taken into account when assessing costs.

By contrast, processing data at the edge is not subject to this variable cost. Once the initial cost of the equipment has been incurred, the additional cost of processing any amount of data at the edge is virtually zero.
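The economic comparison reduces to a one-off edge hardware cost versus a recurring cloud bill that grows with data volume. A rough break-even sketch follows, assuming a flat cloud price per gigabyte processed and a small fixed monthly running cost for the edge device (power, maintenance). The function name and all prices in the example are hypothetical.

```python
import math


def breakeven_months(edge_capex: float, edge_monthly_opex: float,
                     gb_per_month: float, cloud_cost_per_gb: float) -> float:
    """Months until buying edge hardware becomes cheaper than paying for cloud processing.

    Assumes a flat cloud price per GB processed and a fixed monthly running cost
    (power, maintenance) for the edge device. Returns math.inf if the edge option
    never pays for itself.
    """
    monthly_saving = gb_per_month * cloud_cost_per_gb - edge_monthly_opex
    if monthly_saving <= 0:
        return math.inf
    return edge_capex / monthly_saving


# Hypothetical figures: $1,200 device, $10/month to run, $0.25 per GB in the cloud.
for volume_gb in (20, 500, 1000):
    print(volume_gb, "GB/month ->", breakeven_months(1200, 10, volume_gb, 0.25), "months")
```

With these figures, a low-volume application never recovers the hardware cost, 500 GB per month breaks even in about 10.4 months, and doubling the volume brings that down to 5 months. The same sensitivity applies if the cloud price per gigabyte rises.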

Data Protection Law

Data is valuable because it means or represents something. Anyone who captures information may now be subject to the data privacy laws of the region where that data was captured. This means that even the legal owner of the device that captured the data may not be allowed to move the data across geographic boundaries.

Examples include the EU General Data Protection Regulation (GDPR), which in 2018 replaced the earlier EU Data Protection Directive, and the Asia-Pacific Economic Cooperation (APEC) Privacy Framework. The EU has recognized Canada's Personal Information Protection and Electronic Documents Act (PIPEDA) as providing adequate protection. The United States' Safe Harbor arrangement, by contrast, was invalidated by the Court of Justice of the EU in 2015, and EU-US data transfers have since relied on successor mechanisms.

Edge processing can help here: data processed at the edge does not need to leave the device. Data privacy is increasingly important in portable consumer devices, and facial recognition on phones uses local AI to process camera images so the data never leaves the device. The same applies to CCTV and other security surveillance systems. Using cameras to monitor public spaces often means images are transmitted to and processed by cloud-based servers, which raises data privacy concerns. Processing data in-camera is fast and secure, and can reduce or simplify the data privacy measures required.
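In-camera processing protects privacy by sending only derived results upstream. The sketch below illustrates that data flow, assuming a hypothetical on-device `detect_people` model (stubbed out here) and a hypothetical JSON message format. The point is structural: the raw frame is consumed locally and never appears in the outgoing payload.

```python
import json
from typing import Callable


def build_uplink_message(frame: bytes, camera_id: str,
                         detect_people: Callable[[bytes], int]) -> bytes:
    """Run inference locally and return only the derived result for upload.

    detect_people stands in for a hypothetical on-device model; the raw frame
    is used only inside this function and is never part of the returned payload.
    """
    count = detect_people(frame)                      # inference happens on the device
    message = {"camera": camera_id, "people": count}  # no pixels, no face data
    return json.dumps(message).encode("utf-8")


# Stub detector for illustration; a real device would call its local AI accelerator.
def fake_detector(frame: bytes) -> int:
    return 3


frame = bytes(640 * 480 * 3)  # one blank 640x480 RGB frame, 921,600 bytes
payload = build_uplink_message(frame, "cam-07", fake_detector)
print(len(frame), "bytes captured ->", len(payload), "bytes sent:", payload.decode())
```

Besides keeping personal data on the device, the uplink shrinks from nearly a megabyte per frame to a few dozen bytes, which also eases the bandwidth and cost pressures discussed earlier.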

Murphy's Law

Finally, we need to consider Murphy's Law, which states that anything that can go wrong will go wrong. Even in the best-designed systems in the world, problems will arise. Edge processing eliminates many of the possible points of failure associated with moving data over the network, storing it in the cloud and relying on data centers to provide processing power.

If an application could technically benefit from edge processing, there are some practical questions to ask. Here are some of the most relevant:

(1) What processor architecture does the application run on?

Porting software to a different instruction set can be costly and introduce delays, so moving to a new platform should not force a move to a different architecture.

(2) What kind of I/O is needed?

This can be any number of wired and/or wireless interfaces. This issue needs to be addressed early as it can lead to inefficiencies if not thought through carefully.

(3) What is the operating environment?

Is the operating environment hot or cold, and how much does it vary? Mars missions are a good example of edge processing in an extreme environment where operating conditions change dramatically.

(4) Does the hardware need to be compliant or certified?

The answer is almost always yes, so choosing a pre-certified platform can save time and money.

(5) How much power is needed?

System power supplies are expensive in terms of both unit cost and installation, so it pays to understand the power requirements early.

(6) Is the edge device limited by the form factor?

Form factor matters more in edge processing than in many other deployments, so it needs to be considered early in the design cycle.

(7) What is the expected operating lifetime?

Is the device going into an industrial application that may need to run for many years, or is its life cycle measured in months? This needs to be established clearly up front.

(8) What are the performance requirements of the system?

These include processing throughput (such as frames per second), memory requirements and the programming language the application is written in.

(9) Are there cost constraints?

This is a tricky question because the answer is always "yes", but knowing what the cost limit is will help in the selection process.

Conclusion

Edge processing is enabled by the Internet of Things, but it is much more than that. It is driven by higher expectations than earlier generations of connected devices. At a basic level there are commonalities: the device may need to be low power and low cost. But now it may also need to deliver a higher level of intelligent operation without compromising on power consumption or cost.

Working with the right technology partner can make choosing a platform easier: by joining an ecosystem built around edge computing, you can select the right edge computing platform for your AI applications.

The above is the detailed content of How to choose edge AI devices. For more information, please follow other related articles on the PHP Chinese website!
