Single-machine training of a 20-billion-parameter large model: Cerebras sets a new record

This week, chip startup Cerebras announced a new milestone: training an NLP (natural language processing) artificial intelligence model with more than 10 billion parameters on a single computing device.

The AI models trained by Cerebras reach an unprecedented 20 billion parameters, all without splitting the workload across multiple accelerators. That is large enough to accommodate the most popular text-to-image generation model on the internet, OpenAI's 12-billion-parameter DALL-E.

The most important aspect of Cerebras' new work is the reduction in infrastructure and software complexity. The company's chip, the Wafer Scale Engine-2 (WSE-2), is, as the name suggests, etched onto a single entire wafer on TSMC's 7 nm process, an area that would normally hold hundreds of mainstream chips. It packs a staggering 2.6 trillion transistors, 850,000 AI compute cores, and 40 GB of integrated cache, and it draws as much as 15 kW once packaged.

The Wafer Scale Engine-2 is close to the size of a wafer and is larger than an iPad.

Although a single Cerebras machine already resembles a supercomputer in size, keeping an NLP model of up to 20 billion parameters on a single chip still significantly reduces the cost of training compared with using thousands of GPUs, along with the associated hardware and scaling requirements, and it eliminates the technical difficulty of partitioning the model across them. The latter is "one of the most painful aspects of NLP workloads" and sometimes "takes months to complete," Cerebras said.

This partitioning is a bespoke problem, unique not only to each neural network being processed but also to the specifications of each GPU and the network that ties them together. These elements must be worked out in advance, before the first training run, and they are not portable across systems.
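To give a rough sense of why a large model has to be split across GPUs in the first place, the sketch below estimates the memory needed for training state. The figure of 16 bytes per parameter is an assumption, a common rule of thumb for mixed-precision training with the Adam optimizer. The 80 GB device size and the function names are hypothetical, and activation memory is ignored entirely.

```python
import math

# Assumption: mixed-precision Adam training keeps roughly 16 bytes per parameter
# (2 B FP16 weights + 2 B FP16 gradients + 12 B FP32 master weights and moments).
BYTES_PER_PARAM = 16


def training_state_gb(num_params: int) -> float:
    """Approximate memory for weights, gradients and optimizer state, in GB (10^9 bytes)."""
    return num_params * BYTES_PER_PARAM / 1e9


def min_devices(num_params: int, device_memory_gb: float) -> int:
    """Lower bound on devices needed just to hold the training state (activations ignored)."""
    return math.ceil(training_state_gb(num_params) / device_memory_gb)


if __name__ == "__main__":
    # Model sizes taken from the article; the 80 GB device is a hypothetical example.
    for name, params in [("GPT-J 6B", 6_000_000_000), ("GPT-NeoX 20B", 20_000_000_000)]:
        print(name, training_state_gb(params), "GB of state,",
              "at least", min_devices(params, 80.0), "devices of 80 GB")
```

Under these assumptions a 20-billion-parameter model carries about 320 GB of training state, so it cannot fit on one such device and the partitioning problem described above becomes unavoidable.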

Cerebras' CS-2 is a stand-alone supercomputing system that includes the Wafer Scale Engine-2 chip and all associated power, memory, and storage subsystems.

How big is 20 billion parameters? In artificial intelligence, large-scale pre-trained models are the direction that technology companies and research institutions have recently been working hard to develop. OpenAI's GPT-3 is an NLP model that can write entire articles convincing enough to fool human readers, perform mathematical operations, and translate, with a staggering 175 billion parameters. DeepMind's Gopher, launched late last year, raised the record to 280 billion parameters.

Recently, Google Brain even announced that it had trained a model with more than one trillion parameters, the Switch Transformer.

"In the field of NLP, larger models have been shown to perform better. But traditionally, only a few companies have had the resources and expertise to do the hard work of breaking up these large models and distributing them across hundreds or thousands of graphics processing units," said Andrew Feldman, CEO and co-founder of Cerebras. "As a result, very few companies could train large NLP models: it was too expensive, too time-consuming, and out of reach for the rest of the industry."

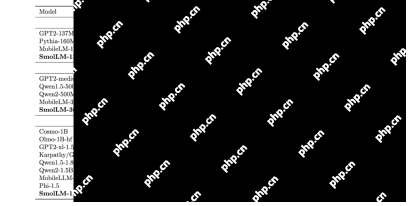

Now, Cerebras' approach lowers the barrier to using the GPT-3XL 1.3B, GPT-J 6B, GPT-3 13B, and GPT-NeoX 20B models, enabling the entire AI ecosystem to set up large models in minutes and train them on a single CS-2 system.

However, just like clock speed for flagship CPUs, parameter count is only one indicator of a large model's performance. Some recent research has achieved better results with fewer parameters: Chinchilla, proposed by DeepMind in April this year, outperformed GPT-3 and Gopher in typical evaluations with only 70 billion parameters.
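A back-of-the-envelope calculation illustrates why parameter count is only one indicator. The sketch below uses the common approximation that training compute is about 6 × parameters × training tokens. The token counts (roughly 300 billion for Gopher and 1.4 trillion for Chinchilla) are the approximate figures reported by DeepMind rather than numbers from this article, and the function name is hypothetical.

```python
def training_flops(num_params: float, num_tokens: float) -> float:
    """Approximate training compute with the 6 * N * D rule of thumb."""
    return 6.0 * num_params * num_tokens


# name -> (parameters, training tokens); token counts are approximate
# and come from DeepMind's reports, not from this article.
MODELS = {
    "Gopher": (280e9, 300e9),
    "Chinchilla": (70e9, 1.4e12),
}

if __name__ == "__main__":
    for name, (params, tokens) in MODELS.items():
        flops = training_flops(params, tokens)
        print(f"{name}: {params / 1e9:.0f}B parameters, about {flops:.2e} training FLOPs")
```

By this estimate the two models consume a similar amount of training compute, on the order of 5 to 6 × 10^23 FLOPs. Chinchilla spends that budget on a quarter of the parameters and far more data.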

The goal of this kind of research is, of course, to work smarter, not harder. Cerebras' achievement is therefore more important than it first appears: it gives us confidence that current chip manufacturing can keep up with increasingly complex models, and the company said that systems built around its specialized chip can support models with "hundreds of billions or even trillions of parameters."

The explosive growth in the number of parameters trainable on a single chip relies on Cerebras' Weight Streaming technology. It decouples compute from memory footprint, allowing memory to scale to whatever size is needed to hold the rapidly growing number of parameters in AI workloads. This cuts setup time from months to minutes and makes it easy to switch between models such as GPT-J and GPT-Neo. As the researchers put it: "It only takes a few keystrokes."
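The idea of decoupling compute from parameter storage can be sketched in a few lines. This is a toy illustration only, not Cerebras' implementation: the `ExternalWeightStore` class, the `streamed_forward` function, and the element-wise "layers" are all hypothetical. It assumes the weights live in an external store and are fetched one layer at a time, so the compute device never holds more than a single layer's weights, however deep the model is.

```python
from typing import List, Tuple


class ExternalWeightStore:
    """Hypothetical off-device store that holds every layer's weights."""

    def __init__(self, layers: List[List[float]]) -> None:
        self._layers = layers

    def num_layers(self) -> int:
        return len(self._layers)

    def fetch(self, index: int) -> List[float]:
        # Return a copy to mimic streaming one layer's weights onto the device.
        return list(self._layers[index])


def streamed_forward(store: ExternalWeightStore,
                     inputs: List[float]) -> Tuple[List[float], int]:
    """Run a toy forward pass with only one layer's weights resident at a time.

    Each toy layer multiplies element-wise by its weights and applies ReLU.
    Returns the outputs and the peak number of weights held on the device.
    """
    activations = list(inputs)
    peak_resident = 0
    for i in range(store.num_layers()):
        weights = store.fetch(i)  # stream in this layer's weights
        peak_resident = max(peak_resident, len(weights))
        activations = [max(0.0, w * a) for w, a in zip(weights, activations)]
        del weights  # drop them before the next layer is fetched
    return activations, peak_resident


if __name__ == "__main__":
    store = ExternalWeightStore([[0.5, -1.0, 2.0]] * 4)
    outputs, peak = streamed_forward(store, [1.0, 2.0, 3.0])
    print("outputs:", outputs, "peak resident weights:", peak)
```

In this toy, adding more layers increases the size of the external store but leaves the peak number of resident weights unchanged, which is the sense in which memory and compute scale independently.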

"Cerebras gives people the ability to run large language models in a low-cost and convenient way, opening up an exciting new era of artificial intelligence. It gives organizations that cannot spend tens of millions of dollars an easy and inexpensive way to compete in large models," said Dan Olds, chief research officer at Intersect360 Research. "We very much look forward to new applications and discoveries from CS-2 customers as they train GPT-3 and GPT-J-class models on massive data sets."
