
Harvard University messed up: DALL-E 2 is just a 'glue monster', and the accuracy of its generation is only 22%

When DALL-E 2 was first released, its generated paintings could reproduce the input text almost perfectly. Their high resolution and vivid imagination led many netizens to call it "too cool".

A new research paper from Harvard University, however, shows a different picture. Although DALL-E 2's images are exquisite, the model may simply be gluing together a few entities from the text, without even understanding the spatial relationships the text expresses!

Paper link: https://arxiv.org/pdf/2208.00005.pdf

Data link: https://osf.io/sm68h/

For example, given the text prompt "A cup on a spoon", some of the images generated by DALL-E 2 do not satisfy the "on" relationship.

In the training data, however, the teacup-and-spoon combinations DALL-E 2 is likely to have seen are mostly "in" relationships, while "on" is relatively rare. As a result, its accuracy differs between the two relationships.

To test whether DALL-E 2 really understands the semantic relationships in text, the researchers selected 15 types of relationships:

- 8 spatial relationships (physical relations): in, on, under, covering, near, occluded by, hanging over and tied to.
- 7 action relationships (agentic relations): pushing, pulling, touching, hitting, kicking, helping and hiding.

The set of entities in the prompts is limited to 12 simple items that are common in existing datasets. Six are objects (box, cylinder, blanket, bowl, teacup, knife) and six are agents (man, woman, child, robot, monkey, iguana).

The researchers created 5 prompts for each relationship, each filled with 2 randomly selected entities, for 75 text prompts in total. They submitted each prompt to DALL-E 2 and kept the first 18 generated images, which gave 1,350 images.
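The design arithmetic above (15 relations × 5 prompts = 75 prompts; 75 × 18 = 1,350 images) can be sketched in Python. This is a minimal illustration, not the authors' code. Two details are assumptions: the prompt template "A/An <subject> <relation> a/an <object>" and the rule that agentic relations take an agent as their subject.

```python
import random

# Relations and entities as listed in the article.
PHYSICAL = ["in", "on", "under", "covering", "near",
            "occluded by", "hanging over", "tied to"]
AGENTIC = ["pushing", "pulling", "touching", "hitting",
           "kicking", "helping", "hiding"]
OBJECTS = ["box", "cylinder", "blanket", "bowl", "teacup", "knife"]
AGENTS = ["man", "woman", "child", "robot", "monkey", "iguana"]
ENTITIES = OBJECTS + AGENTS

PROMPTS_PER_RELATION = 5
IMAGES_PER_PROMPT = 18  # the first 18 DALL-E 2 outputs kept per prompt


def article(noun: str) -> str:
    """Pick 'a' or 'an' from the first letter (good enough for this word list)."""
    return "an" if noun[0] in "aeiou" else "a"


def make_prompts(seed: int = 0) -> list[str]:
    """Build 5 prompts per relation from distinct (subject, object) pairs."""
    rng = random.Random(seed)
    prompts = []
    for relation in PHYSICAL + AGENTIC:
        # Assumption: agentic relations need an agent as the subject.
        subjects = AGENTS if relation in AGENTIC else ENTITIES
        pairs = [(s, o) for s in subjects for o in ENTITIES if s != o]
        for subj, obj in rng.sample(pairs, PROMPTS_PER_RELATION):
            prompts.append(
                f"{article(subj).capitalize()} {subj} {relation} {article(obj)} {obj}"
            )
    return prompts


if __name__ == "__main__":
    prompts = make_prompts()
    print(len(prompts), "prompts ->", len(prompts) * IMAGES_PER_PROMPT, "images")
```

The function samples pairs without replacement, so no prompt repeats within a relation. Prompts such as "A child touching a bowl" come out in the same shape as the examples quoted in the article.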

The researchers then screened 180 annotators with a common-sense reasoning test and selected 169 of them to take part in the annotation.

The experiments found that, across the 75 prompts, the average agreement between DALL-E 2's images and the text prompts used to generate them was only 22.2%.

It is therefore hard to say that DALL-E 2 truly "understands" the relationships in the text. The researchers compared annotator agreement scores against consensus thresholds of 0%, 25% and 50%. Holm-corrected one-sample significance tests for each relationship showed that participant agreement was significantly higher than 0% at the 95% confidence level (pHolm

So even without correcting for multiple comparisons, the evidence indicates that DALL-E 2 does not understand the relationship between the two objects in the text.
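The Holm correction referred to above controls the family-wise error rate when one significance test is run per relation. What follows is a minimal sketch of the standard Holm-Bonferroni step-down procedure, a generic illustration rather than the researchers' analysis code. For m tests, the adjusted p-value of the k-th smallest p-value is the running maximum of (m - k + 1) · p_(k), capped at 1. The p-values in the example are hypothetical, not the paper's data.

```python
def holm_adjust(p_values: list[float]) -> list[float]:
    """Holm-Bonferroni adjusted p-values, returned in the input order."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])  # ascending p-values
    adjusted = [0.0] * m
    running_max = 0.0
    for rank, i in enumerate(order):
        # The k-th smallest p-value (k = rank + 1) is multiplied by m - k + 1;
        # the running max keeps adjusted values monotone, and they are capped at 1.
        running_max = max(running_max, (m - rank) * p_values[i])
        adjusted[i] = min(1.0, running_max)
    return adjusted


def holm_reject(p_values: list[float], alpha: float = 0.05) -> list[bool]:
    """Reject hypothesis i when its Holm-adjusted p-value is at most alpha."""
    return [p <= alpha for p in holm_adjust(p_values)]


if __name__ == "__main__":
    # Hypothetical p-values for four tests (not the paper's data).
    p = [0.01, 0.04, 0.03, 0.005]
    print(holm_adjust(p))
    print(holm_reject(p))
```

Rejecting whenever the adjusted p-value is at most alpha is equivalent to the step-down rule: compare sorted p-values with alpha / m, alpha / (m - 1), ... and stop at the first failure.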

The results also suggest that DALL-E 2 is weaker than imagined at combining objects that rarely appear together. For example, "A child touching a bowl" reached 87% agreement, likely because children and bowls appear together very frequently in real-world images.

By contrast, the images generated for "A monkey touching an iguana" reached only 11% agreement, and some renderings even got the species wrong.

This suggests that some image categories in DALL-E 2 are relatively well developed, such as children and food, while others still need further training.

For now, the official website still mainly showcases DALL-E 2's high-definition, realistic style. It is not yet clear whether the model is merely "gluing two objects together" or truly understanding the text before generating the image.

The researchers note that relational understanding is a basic component of human intelligence. DALL-E 2's poor performance on basic spatial relationships (such as "on") shows that it cannot yet construct and understand the world as flexibly and robustly as humans do.

Netizens, however, pointed out that building a "glue" that sticks things together is already a great achievement! DALL-E 2 is not AGI, and there is still plenty of room for improvement. At least the door to automatic image generation is now open!

What else is wrong with DALL-E 2?

In fact, as soon as DALL-E 2 was released, a large number of practitioners conducted in-depth analysis of its advantages and disadvantages.

Blog link: https://www.lesswrong.com/posts/uKp6tBFStnsvrot5t/what-dall-e-2-can-and-cannot-do

Text-only stories written with GPT-3 can feel a bit monotonous. DALL-E 2 can generate illustrations for the text and even comic strips for longer passages.

DALL-E 2 can also combine several features in one picture. For example, given "A woman at a coffeeshop working on her laptop and wearing headphones, painting by Alphonse Mucha", it accurately renders the painting style, the coffee shop, the headphones, the laptop and so on.

But if the feature description in the text involves two people, DALL-E 2 may forget which features belong to which person. For example, the input text is:

a young dark-haired boy resting in bed, and a grey-haired older woman sitting in a chair beside the bed underneath a window with sun streaming through, Pixar style digital art.

DALL-E 2 correctly generates the window, chair and bed, but it gets somewhat confused when combining age, gender and hair color.

Another example is the prompt "Captain America and Iron Man stand side by side". The results clearly contain features of both Captain America and Iron Man, but specific elements end up on the wrong person (for example, Iron Man carries Captain America's shield).

If both the foreground and the background are highly detailed, the model may fail to generate the image at all.

For example, the input text is:

Two dogs dressed like roman soldiers on a pirate ship looking at New York City through a spyglass.

This time DALL-E 2 simply failed. The blog author spent half an hour on it without success. In the end he had to choose between "New York City and a pirate ship" and "dogs with a spyglass in Roman soldier uniforms".

DALL-E 2 can render a generic background, such as a city or the bookshelves of a library. When the background is not the main focus of the image, however, getting the finer details right is often a disaster.

DALL-E 2 can generate common objects, such as all kinds of fancy chairs. If you ask it for an "Alto bicycle", though, the result looks somewhat like a bicycle, but not quite.

Text, such as lettering on a stop sign, is another weak spot. The model can generate some "recognizable" English letters, but the words they form still differ from the expected ones. This is one area where DALL-E 2 falls short of the first-generation DALL-E.

When generating images of musical instruments, DALL-E 2 seems to remember where a player's hands go. The instruments often lack strings, however, so the playing looks a little awkward.

DALL-E 2 also provides an editing function. After generating an image, you can select a region with the cursor and supply a complete description of the change you want.

This function is not always effective, though. For example, if you ask it to add "short hair" to the original image, it keeps adding things in strange places.

The technology is still being developed. Looking forward to DALL-E 3!

The above is the detailed content of Harvard University messed up: DALL-E 2 is just a 'glue monster', and the accuracy of its generation is only 22%. For more information, please follow other related articles on the PHP Chinese website!

ai合并图层的快捷键是什么Jan 07, 2021 am 10:59 AM

ai合并图层的快捷键是什么Jan 07, 2021 am 10:59 AMai合并图层的快捷键是“Ctrl+Shift+E”,它的作用是把目前所有处在显示状态的图层合并,在隐藏状态的图层则不作变动。也可以选中要合并的图层,在菜单栏中依次点击“窗口”-“路径查找器”,点击“合并”按钮。

ai橡皮擦擦不掉东西怎么办Jan 13, 2021 am 10:23 AM

ai橡皮擦擦不掉东西怎么办Jan 13, 2021 am 10:23 AMai橡皮擦擦不掉东西是因为AI是矢量图软件,用橡皮擦不能擦位图的,其解决办法就是用蒙板工具以及钢笔勾好路径再建立蒙板即可实现擦掉东西。

谷歌超强AI超算碾压英伟达A100!TPU v4性能提升10倍,细节首次公开Apr 07, 2023 pm 02:54 PM

谷歌超强AI超算碾压英伟达A100!TPU v4性能提升10倍,细节首次公开Apr 07, 2023 pm 02:54 PM虽然谷歌早在2020年,就在自家的数据中心上部署了当时最强的AI芯片——TPU v4。但直到今年的4月4日,谷歌才首次公布了这台AI超算的技术细节。论文地址:https://arxiv.org/abs/2304.01433相比于TPU v3,TPU v4的性能要高出2.1倍,而在整合4096个芯片之后,超算的性能更是提升了10倍。另外,谷歌还声称,自家芯片要比英伟达A100更快、更节能。与A100对打,速度快1.7倍论文中,谷歌表示,对于规模相当的系统,TPU v4可以提供比英伟达A100强1.

ai可以转成psd格式吗Feb 22, 2023 pm 05:56 PM

ai可以转成psd格式吗Feb 22, 2023 pm 05:56 PMai可以转成psd格式。转换方法:1、打开Adobe Illustrator软件,依次点击顶部菜单栏的“文件”-“打开”,选择所需的ai文件;2、点击右侧功能面板中的“图层”,点击三杠图标,在弹出的选项中选择“释放到图层(顺序)”;3、依次点击顶部菜单栏的“文件”-“导出”-“导出为”;4、在弹出的“导出”对话框中,将“保存类型”设置为“PSD格式”,点击“导出”即可;

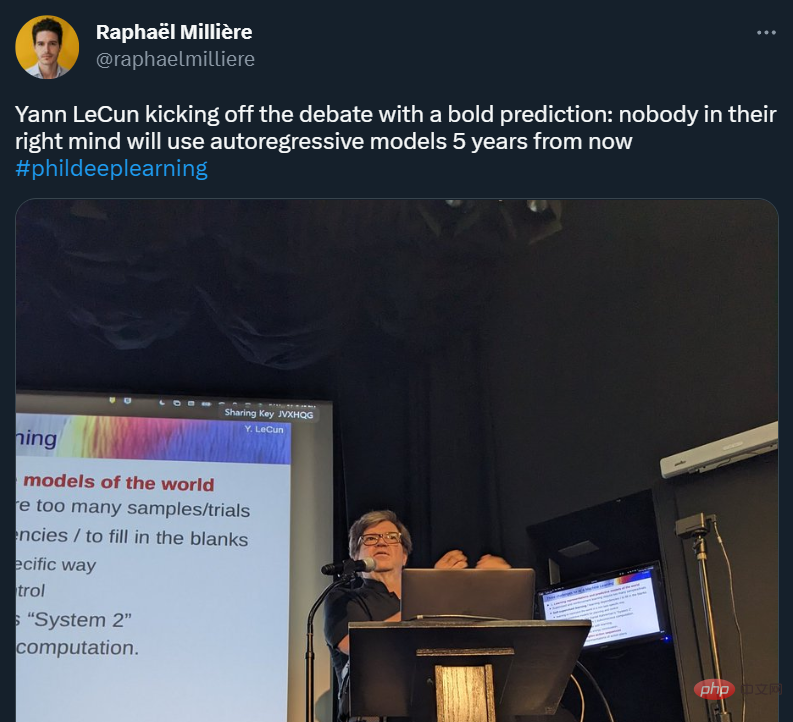

GPT-4的研究路径没有前途?Yann LeCun给自回归判了死刑Apr 04, 2023 am 11:55 AM

GPT-4的研究路径没有前途?Yann LeCun给自回归判了死刑Apr 04, 2023 am 11:55 AMYann LeCun 这个观点的确有些大胆。 「从现在起 5 年内,没有哪个头脑正常的人会使用自回归模型。」最近,图灵奖得主 Yann LeCun 给一场辩论做了个特别的开场。而他口中的自回归,正是当前爆红的 GPT 家族模型所依赖的学习范式。当然,被 Yann LeCun 指出问题的不只是自回归模型。在他看来,当前整个的机器学习领域都面临巨大挑战。这场辩论的主题为「Do large language models need sensory grounding for meaning and u

ai顶部属性栏不见了怎么办Feb 22, 2023 pm 05:27 PM

ai顶部属性栏不见了怎么办Feb 22, 2023 pm 05:27 PMai顶部属性栏不见了的解决办法:1、开启Ai新建画布,进入绘图页面;2、在Ai顶部菜单栏中点击“窗口”;3、在系统弹出的窗口菜单页面中点击“控制”,然后开启“控制”窗口即可显示出属性栏。

强化学习再登Nature封面,自动驾驶安全验证新范式大幅减少测试里程Mar 31, 2023 pm 10:38 PM

强化学习再登Nature封面,自动驾驶安全验证新范式大幅减少测试里程Mar 31, 2023 pm 10:38 PM引入密集强化学习,用 AI 验证 AI。 自动驾驶汽车 (AV) 技术的快速发展,使得我们正处于交通革命的风口浪尖,其规模是自一个世纪前汽车问世以来从未见过的。自动驾驶技术具有显着提高交通安全性、机动性和可持续性的潜力,因此引起了工业界、政府机构、专业组织和学术机构的共同关注。过去 20 年里,自动驾驶汽车的发展取得了长足的进步,尤其是随着深度学习的出现更是如此。到 2015 年,开始有公司宣布他们将在 2020 之前量产 AV。不过到目前为止,并且没有 level 4 级别的 AV 可以在市场

ai移动不了东西了怎么办Mar 07, 2023 am 10:03 AM

ai移动不了东西了怎么办Mar 07, 2023 am 10:03 AMai移动不了东西的解决办法:1、打开ai软件,打开空白文档;2、选择矩形工具,在文档中绘制矩形;3、点击选择工具,移动文档中的矩形;4、点击图层按钮,弹出图层面板对话框,解锁图层;5、点击选择工具,移动矩形即可。

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

mPDF

mPDF is a PHP library that can generate PDF files from UTF-8 encoded HTML. The original author, Ian Back, wrote mPDF to output PDF files "on the fly" from his website and handle different languages. It is slower than original scripts like HTML2FPDF and produces larger files when using Unicode fonts, but supports CSS styles etc. and has a lot of enhancements. Supports almost all languages, including RTL (Arabic and Hebrew) and CJK (Chinese, Japanese and Korean). Supports nested block-level elements (such as P, DIV),

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

SublimeText3 Linux new version

SublimeText3 Linux latest version

SublimeText3 English version

Recommended: Win version, supports code prompts!