Why is self-supervision effective? The 243-page Princeton doctoral thesis 'Understanding Self-supervised Representation Learning' comprehensively explains three types of methods: contrastive learning, language modeling and self-prediction.

Pre-training has emerged as an effective alternative paradigm that overcomes the shortcomings of supervised learning: a model is first trained on easily available data and then used to solve downstream tasks of interest with far less labeled data than supervised learning requires.

Pre-training using unlabeled data, i.e. self-supervised learning, is particularly revolutionary and has achieved success in different fields: text, vision, speech, etc.

This raises an interesting and challenging question: Why should pretraining on unlabeled data help seemingly unrelated downstream tasks?

Paper address: https://dataspace.princeton.edu/handle/88435/dsp01t435gh21h

The thesis proposes and establishes a theoretical framework to investigate why self-supervised learning is beneficial for downstream tasks.

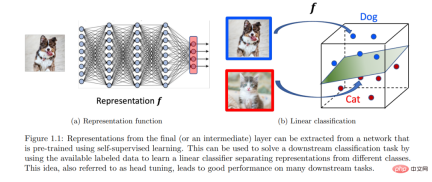

The framework is suitable for contrastive learning, autoregressive language modeling and self-prediction based methods. The core idea of this framework is that pre-training helps to learn a low-dimensional representation of the data, which subsequently helps solve the downstream tasks of interest with linear classifiers, requiring less labeled data.

A common theme is to formalize the desirable properties of the unlabeled data distribution used to construct the self-supervised learning task. With appropriate formalization, it can be shown that approximately minimizing the right pre-training objective extracts the downstream signal that is implicitly encoded in the unlabeled data distribution.

Finally, it is shown that the signal can be decoded from the learned representation using a linear classifier, thus providing a formalization for the transfer of "skills and knowledge" across tasks.
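The idea of decoding a downstream signal from a learned low-dimensional representation with a linear classifier can be illustrated with a small synthetic sketch. Everything here is an assumption made for illustration: the data generator, the mixing matrix `A`, and especially the use of PCA as a stand-in for pre-training (it is not one of the methods analyzed in the thesis, only a simple way to learn a representation without labels).

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic data (assumption): inputs live in 50 dimensions, but most of
# their variance comes from a hidden 2-dimensional signal z.
d, k = 50, 2
A = rng.normal(size=(k, d))  # hypothetical mixing matrix


def sample(n):
    z = rng.normal(size=(n, k))                 # low-dimensional signal
    x = z @ A + 0.1 * rng.normal(size=(n, d))   # observed input
    y = (z[:, 0] > 0).astype(int)               # label depends only on z
    return x, y


# "Pre-training" stand-in: learn a k-dimensional linear representation
# from unlabeled inputs only (PCA via SVD). No labels are used here.
x_unlabeled, _ = sample(5000)
mean = x_unlabeled.mean(axis=0)
_, _, vt = np.linalg.svd(x_unlabeled - mean, full_matrices=False)


def represent(x):
    return (x - mean) @ vt[:k].T


# Downstream: fit a linear classifier on the frozen representation using
# only a few labeled examples (least squares on +/-1 targets).
x_train, y_train = sample(40)
feats = np.c_[represent(x_train), np.ones(len(x_train))]  # bias column
w, *_ = np.linalg.lstsq(feats, 2.0 * y_train - 1.0, rcond=None)

x_test, y_test = sample(2000)
test_feats = np.c_[represent(x_test), np.ones(len(x_test))]
pred = (test_feats @ w > 0).astype(int)
accuracy = float((pred == y_test).mean())
print(f"linear-probe accuracy with 40 labels: {accuracy:.2f}")
```

The classifier only has three parameters to fit because the representation is low-dimensional, which is why a small labeled set is enough in this toy setting.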

Introduction

In the quest to design intelligent agents and data-driven solutions to problems, the fields of machine learning and artificial intelligence have made tremendous progress in the past decade. With initial successes on challenging supervised learning benchmarks such as ImageNet [Deng et al., 2009], innovations in deep learning subsequently led to models with superhuman performance on many such benchmarks in different domains. Training such task-specific models is certainly impressive and has huge practical value. However, it has an important limitation: it requires large labeled or annotated datasets, which are often expensive to obtain. Furthermore, from an intelligence perspective, one hopes to have more general models that, like humans [Ahn and Brewer, 1993], can learn from previous experiences, summarize them into skills or concepts, and use these skills or concepts to solve new tasks with little or no demonstration. After all, babies learn a lot through observation and interaction without explicit supervision. These limitations inspired an alternative paradigm: pre-training.

The focus of this thesis is on pre-training using the large amounts of unlabeled data that are often available. The idea of using unlabeled data has long been a point of interest in machine learning, particularly through unsupervised and semi-supervised learning. A modern adaptation of this idea using deep learning is often called self-supervised learning (SSL) and has begun to change the landscape of machine learning and artificial intelligence through ideas such as contrastive learning and language modeling. The idea of self-supervised learning is to construct certain tasks using only unlabeled data, and to train a model to perform well on the constructed tasks. Such tasks typically require models to encode structural properties of the data by predicting unobserved or hidden parts (or properties) of the input from observed or retained parts [LeCun and Misra, 2021]. Self-supervised learning has shown generality and utility on many downstream tasks of interest, often with better sample efficiency than solving tasks from scratch, bringing us one step closer to the goal of general-purpose agents. Indeed, large language models like GPT-3 [Brown et al., 2020] have recently demonstrated fascinating “emergent behavior” that occurs at scale, sparking more interest in the idea of self-supervised pre-training.
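To make "constructing a task using only unlabeled data" concrete, here is a minimal sketch of a self-prediction task: part of each input is hidden and a model is trained to predict it from the rest. The helper name `make_self_prediction_task`, the synthetic data, and the choice of a linear predictor are all hypothetical and chosen for brevity; real SSL methods use far richer models and data.

```python
import numpy as np


def make_self_prediction_task(x, hidden_idx):
    """Split each unlabeled input into an observed part and a hidden target."""
    hidden_idx = np.asarray(hidden_idx)
    observed_mask = np.ones(x.shape[1], dtype=bool)
    observed_mask[hidden_idx] = False
    return x[:, observed_mask], x[:, hidden_idx]


rng = np.random.default_rng(0)

# Hypothetical unlabeled data with structure: the last coordinate is
# (almost) the sum of the first three.
base = rng.normal(size=(1000, 3))
x = np.c_[base, base.sum(axis=1) + 0.01 * rng.normal(size=1000)]

# Constructed task: hide the last coordinate and predict it from the rest.
observed, hidden = make_self_prediction_task(x, hidden_idx=[3])

# A linear predictor trained on the constructed task; no human labels
# are involved at any point.
w, *_ = np.linalg.lstsq(observed, hidden, rcond=None)
mse = float(np.mean((observed @ w - hidden) ** 2))
print("learned weights:", w.ravel().round(2), "mse:", round(mse, 4))
```

Doing well on this task forces the predictor to capture the relationship between coordinates, which is the sense in which such tasks encode structural properties of the data.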

Although self-supervised learning has been empirically successful and continues to show great promise, there is still a lack of good theoretical understanding of how it works beyond rough intuition. These impressive successes raise interesting questions because it is unclear a priori why a model trained on one task should help on another, seemingly unrelated task, i.e. why training on task A should help with task B. While a complete theoretical understanding of SSL (and deep learning in general) is challenging and elusive, understanding this phenomenon at any level of abstraction may help develop more principled algorithms. The research question motivating this thesis is:

Why does training on self-supervised learning tasks (using large amounts of unlabeled data) help solve data-scarce downstream tasks? How can the transfer of "knowledge and skills" be formalized?

Although there is a large body of literature on supervised learning, generalization from SSL task→downstream task is fundamentally different from generalization from training set→test set in supervised learning. In supervised learning for a downstream classification task, for example, a model trained on a training set of input-label pairs sampled from an unknown distribution can be directly evaluated on an unseen test set sampled from the same distribution. This shared underlying distribution establishes the connection from the training set to the test set. However, the conceptual connection from SSL task→downstream task is less clear because the unlabeled data used in the SSL task carries no explicit signal about downstream labels. This means that a model pre-trained on an SSL task (e.g., predicting a part of the input from the rest) cannot be directly used on downstream tasks (e.g., predicting a class label from the input). Therefore, the transfer of "knowledge and skills" requires an additional training step using some labeled data, ideally less than what is required for supervised learning from scratch. Any theoretical understanding of SSL task→downstream task generalization needs to address these questions: "What is the intrinsic role of unlabeled data?" and "How should pre-trained models be used for downstream tasks?" The thesis targets downstream classification tasks, making distributional assumptions on the unlabeled data and using the idea of representation learning to study these questions:

(a) (Distribution Assumption) The distribution of unlabeled data implicitly contains relevant information about the downstream classification tasks of interest.

(b) (Representation Learning) A model pre-trained on an appropriate SSL task can encode that signal in its learned representations, which can then be used to solve downstream classification tasks with linear classifiers.

Point (a) posits that certain structural properties of unlabeled data implicitly provide hints about subsequent downstream tasks, and that self-supervised learning can help tease this signal out of the data. Point (b) proposes a simple and empirically effective way to use pre-trained models, leveraging the model’s learned representations. The thesis identifies and mathematically quantifies distributional properties of unlabeled data under which good representations can provably be learned by different SSL methods such as contrastive learning, language modeling, and self-prediction. In the next section, we delve into the idea of representation learning and formally explain why self-supervised learning helps downstream tasks.
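The two-stage pipeline behind points (a) and (b) can be written schematically as follows. The notation is an assumption introduced here for illustration and is not taken from the thesis: $\mathcal{F}$ is a class of representation functions, $\ell_{\text{SSL}}$ a self-supervised loss, $\ell_{\text{cls}}$ a classification loss, and $(x_i, y_i)_{i=1}^{n}$ a small labeled downstream sample.

```latex
% Stage 1 (pre-training): learn a representation from unlabeled data only
\hat{f} \in \arg\min_{f \in \mathcal{F}} \;
  \mathbb{E}_{x \sim \mathcal{D}_{\text{unlabeled}}}
  \big[ \ell_{\text{SSL}}(f; x) \big]

% Stage 2 (downstream): fit a linear classifier W on the frozen \hat{f}
\hat{W} \in \arg\min_{W} \;
  \frac{1}{n} \sum_{i=1}^{n}
  \ell_{\text{cls}}\big( W \hat{f}(x_i),\, y_i \big)
```

In this reading, point (a) is an assumption about $\mathcal{D}_{\text{unlabeled}}$, and point (b) is the claim that a small $n$ suffices in the second stage once $\hat{f}$ approximately minimizes the first objective.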

The above is the detailed content of Why is self-monitoring effective? The 243-page Princeton doctoral thesis 'Understanding Self-supervised Representation Learning' comprehensively explains the three types of methods: contrastive learning, language modeling and self-prediction.. For more information, please follow other related articles on the PHP Chinese website!

What is Graph of Thought in Prompt EngineeringApr 13, 2025 am 11:53 AM

What is Graph of Thought in Prompt EngineeringApr 13, 2025 am 11:53 AMIntroduction In prompt engineering, “Graph of Thought” refers to a novel approach that uses graph theory to structure and guide AI’s reasoning process. Unlike traditional methods, which often involve linear s

Optimize Your Organisation's Email Marketing with GenAI AgentsApr 13, 2025 am 11:44 AM

Optimize Your Organisation's Email Marketing with GenAI AgentsApr 13, 2025 am 11:44 AMIntroduction Congratulations! You run a successful business. Through your web pages, social media campaigns, webinars, conferences, free resources, and other sources, you collect 5000 email IDs daily. The next obvious step is

Real-Time App Performance Monitoring with Apache PinotApr 13, 2025 am 11:40 AM

Real-Time App Performance Monitoring with Apache PinotApr 13, 2025 am 11:40 AMIntroduction In today’s fast-paced software development environment, ensuring optimal application performance is crucial. Monitoring real-time metrics such as response times, error rates, and resource utilization can help main

ChatGPT Hits 1 Billion Users? 'Doubled In Just Weeks' Says OpenAI CEOApr 13, 2025 am 11:23 AM

ChatGPT Hits 1 Billion Users? 'Doubled In Just Weeks' Says OpenAI CEOApr 13, 2025 am 11:23 AM“How many users do you have?” he prodded. “I think the last time we said was 500 million weekly actives, and it is growing very rapidly,” replied Altman. “You told me that it like doubled in just a few weeks,” Anderson continued. “I said that priv

Pixtral-12B: Mistral AI's First Multimodal Model - Analytics VidhyaApr 13, 2025 am 11:20 AM

Pixtral-12B: Mistral AI's First Multimodal Model - Analytics VidhyaApr 13, 2025 am 11:20 AMIntroduction Mistral has released its very first multimodal model, namely the Pixtral-12B-2409. This model is built upon Mistral’s 12 Billion parameter, Nemo 12B. What sets this model apart? It can now take both images and tex

Agentic Frameworks for Generative AI Applications - Analytics VidhyaApr 13, 2025 am 11:13 AM

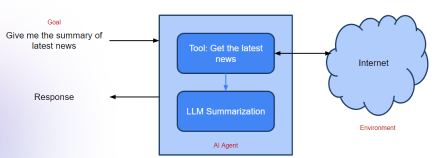

Agentic Frameworks for Generative AI Applications - Analytics VidhyaApr 13, 2025 am 11:13 AMImagine having an AI-powered assistant that not only responds to your queries but also autonomously gathers information, executes tasks, and even handles multiple types of data—text, images, and code. Sounds futuristic? In this a

Applications of Generative AI in the Financial SectorApr 13, 2025 am 11:12 AM

Applications of Generative AI in the Financial SectorApr 13, 2025 am 11:12 AMIntroduction The finance industry is the cornerstone of any country’s development, as it drives economic growth by facilitating efficient transactions and credit availability. The ease with which transactions occur and credit

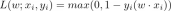

Guide to Online Learning and Passive-Aggressive AlgorithmsApr 13, 2025 am 11:09 AM

Guide to Online Learning and Passive-Aggressive AlgorithmsApr 13, 2025 am 11:09 AMIntroduction Data is being generated at an unprecedented rate from sources such as social media, financial transactions, and e-commerce platforms. Handling this continuous stream of information is a challenge, but it offers an

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

DVWA

Damn Vulnerable Web App (DVWA) is a PHP/MySQL web application that is very vulnerable. Its main goals are to be an aid for security professionals to test their skills and tools in a legal environment, to help web developers better understand the process of securing web applications, and to help teachers/students teach/learn in a classroom environment Web application security. The goal of DVWA is to practice some of the most common web vulnerabilities through a simple and straightforward interface, with varying degrees of difficulty. Please note that this software

VSCode Windows 64-bit Download

A free and powerful IDE editor launched by Microsoft

MinGW - Minimalist GNU for Windows

This project is in the process of being migrated to osdn.net/projects/mingw, you can continue to follow us there. MinGW: A native Windows port of the GNU Compiler Collection (GCC), freely distributable import libraries and header files for building native Windows applications; includes extensions to the MSVC runtime to support C99 functionality. All MinGW software can run on 64-bit Windows platforms.

ZendStudio 13.5.1 Mac

Powerful PHP integrated development environment

WebStorm Mac version

Useful JavaScript development tools