Two-stage multi-task Text-to-SQL pre-training model MIGA based on T5

A growing body of work has shown that pre-trained language models (PLMs) contain rich knowledge, and that choosing an appropriate training method for each task lets a model exploit that knowledge more fully. In Text-to-SQL tasks, the current mainstream generators are based on syntax trees and must be designed specifically for SQL syntax.

Recently, NetEase Interactive Entertainment AI Lab teamed up with Guangdong University of Foreign Studies and Columbia University to propose MIGA, a two-stage multi-task pre-training model built on the pre-training paradigm of the pre-trained language model T5. In the pre-training stage, MIGA introduces three auxiliary tasks and organizes them into a unified generation paradigm, so that all Text-to-SQL datasets can be trained on in the same way. In the fine-tuning stage, MIGA applies SQL perturbation to address the error propagation problem in multi-turn dialogue, which improves the robustness of the model's generation.

In current Text-to-SQL research, the mainstream approach is an encoder-decoder model based on the SQL syntax tree. This guarantees that the generated output complies with SQL syntax, but the model has to be specially designed around that syntax. There has also been some recent work on Text-to-SQL based on generative language models, which can easily inherit the knowledge and capabilities of pre-trained language models.

To reduce the dependence on syntax trees and make better use of pre-trained language models, this study proposes MIGA (MultI-task Generation frAmework), a two-stage multi-task Text-to-SQL pre-training model built on the pre-trained T5 model.

MIGA's training process is divided into two stages:

- In the pre-training stage, MIGA uses the same pre-training paradigm as T5 and adds three auxiliary tasks related to Text-to-SQL to better elicit the knowledge in the pre-trained language model. This training method can unify all Text-to-SQL datasets and so enlarge the training data; it also makes it easy to design more effective auxiliary tasks that further tap the latent knowledge of the pre-trained language model.

- In the fine-tuning stage, MIGA targets the error propagation problem that tends to arise in multi-turn dialogue and SQL generation. It perturbs the historical SQL during training, which makes the generation of the current turn's SQL more stable.

MIGA outperforms the best syntax-tree-based models on two public multi-turn dialogue Text-to-SQL datasets. The work has been accepted by AAAI 2023.

Paper address: https://arxiv.org/abs/2212.09278

MIGA model details

Figure 1 MIGA model diagram.

Multi-task pre-training phase

This study mainly follows the pre-training method of T5 and, starting from the already trained T5 model, designs four pre-training tasks:

- Text-to-SQL main task: the yellow part in the figure above. The prompt is "translate dialogue to system query". Special tokens are used to concatenate the historical dialogue, the database information and the SQL statements; the result is fed into the T5 encoder, and the decoder directly outputs the corresponding SQL statement;

- Related information prediction: the green part in the figure above. The prompt is "translate dialogue to relevant column". The T5 encoder input is the same as in the main task, and the decoder must output the tables and columns related to the current question, which strengthens the model's understanding of Text-to-SQL;

- Operation prediction for the current turn: the gray part in the figure above. The prompt is "translate dialogue to turn switch". This task is designed mainly for context understanding in multi-turn dialogue: comparing against the previous turn's dialogue and SQL, the decoder must output what has changed in the intent of the current turn. In the example in the figure, the WHERE condition has been changed;

- Final dialogue prediction: the blue part in the figure above. The prompt is "translate dialogue to final utterance". This task helps the model understand the dialogue context better: the decoder must output a single complete question that summarizes the whole multi-turn dialogue and corresponds to the SQL of the latest turn.
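The four tasks above share one input layout and differ only in the prompt and the target, which a small sketch can make concrete. The prompt strings are the ones quoted in the list; everything else is an assumption made for illustration: the separator tokens, the `Turn` container and the function names are hypothetical, since the article does not specify the actual special tokens or data format.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple

# Prompts quoted in the article, one per pre-training task.
TASK_PROMPTS = {
    "main": "translate dialogue to system query",
    "related": "translate dialogue to relevant column",
    "turn_switch": "translate dialogue to turn switch",
    "final_utterance": "translate dialogue to final utterance",
}

# Hypothetical separators; the article only says "some special tokens".
SEP_TURN = " | "
SEP_DB = " <db> "
SEP_SQL = " <sql> "


@dataclass
class Turn:
    utterances: List[str]  # dialogue history up to and including the current question
    schema: str            # serialized database information
    previous_sql: str      # SQL of the previous turn ("" for the first turn)


def build_input(task: str, turn: Turn) -> str:
    """Concatenate prompt, dialogue, schema and previous SQL into one encoder input."""
    dialogue = SEP_TURN.join(turn.utterances)
    return f"{TASK_PROMPTS[task]}: {dialogue}{SEP_DB}{turn.schema}{SEP_SQL}{turn.previous_sql}"


def build_examples(turn: Turn, targets: Dict[str, str]) -> List[Tuple[str, str]]:
    """Return (input, target) pairs for every task that has a target for this turn."""
    return [(build_input(task, turn), targets[task])
            for task in TASK_PROMPTS if task in targets]
```

Because every task yields a plain (input text, target text) pair, pairs from all tasks can be mixed in one sequence-to-sequence training set.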

With this unified training design, MIGA is general and flexible enough to accommodate further task-related auxiliary tasks, and it also has the following advantages:

- Following the steps a human takes when writing SQL, the conversational Text-to-SQL task is decomposed into multiple subtasks, and the main task can learn from them;

- The training samples are constructed in the same format as T5's, which helps bring out the potential of the pre-trained T5 model on the target task;

- The unified framework allows flexible scheduling of multiple auxiliary tasks. To apply the pre-trained model to a specific task, it only needs to be fine-tuned with the same training objective on that task's labeled data.

In the pre-training stage, the study combined data from the Text-to-SQL dataset Spider and the conversational Text-to-SQL datasets SparC and CoSQL to train the T5 model.

Fine-tuning stage

After the pre-training stage, this study simply fine-tunes the model further on the Text-to-SQL task. When predicting the SQL of the current turn, the predicted SQL of the previous turn is concatenated into the input. To mitigate the error propagation that multi-turn dialogue and generation introduce in this process, the study proposes a SQL perturbation scheme that perturbs the historical SQL in the input data with probability α. To perturb a SQL statement, tokens are sampled with probability β, and one of the following perturbations is then applied:

- Use columns from the same table to randomly modify or add columns in the SELECT clause;

- Randomly modify the structure of the JOIN condition, for example by swapping the positions of the two tables;

- Replace "*" (all columns) with some other columns;

- Swap “asc” and “desc”.

These perturbations correspond to the most common SQL generation errors caused by error propagation, as found statistically in the experiments. Perturbing these cases during training reduces the model's dependence on the historical SQL being correct.
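A minimal sketch of such a perturbation step follows, under several simplifying assumptions that are not from the paper: the SQL is tokenized by whitespace, only the SELECT column, "*" and asc/desc perturbations are implemented (the JOIN swap is left out), and the function names and the `table_columns` argument are hypothetical. Here `alpha` and `beta` play the roles of α and β in the text.

```python
import random
from typing import List


def perturb_sql(tokens: List[str], table_columns: List[str],
                beta: float, rng: random.Random) -> List[str]:
    """Visit each token; with probability beta, try to perturb it."""
    out = []
    in_select = False
    for tok in tokens:
        low = tok.lower()
        if low == "select":
            in_select = True
        elif low == "from":
            in_select = False
        if rng.random() >= beta:
            out.append(tok)  # token not sampled, keep it unchanged
            continue
        if low == "asc":
            out.append("desc")
        elif low == "desc":
            out.append("asc")
        elif in_select and (tok == "*" or tok in table_columns):
            # Replace "*" or a selected column with another column of the same table.
            candidates = [c for c in table_columns if c != tok]
            out.append(rng.choice(candidates) if candidates else tok)
        else:
            out.append(tok)  # sampled, but no perturbation applies
    return out


def maybe_perturb_history(history_sql: str, table_columns: List[str],
                          alpha: float, beta: float, rng: random.Random) -> str:
    """With probability alpha, perturb the previous turn's SQL before it is fed back in."""
    if rng.random() >= alpha:
        return history_sql
    return " ".join(perturb_sql(history_sql.split(), table_columns, beta, rng))
```

During fine-tuning, a call such as `maybe_perturb_history(prev_sql, columns, alpha, beta, rng)` would be applied to the historical SQL before it is concatenated into the input, so the model sometimes sees a slightly wrong history paired with the correct target.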

Experimental evaluation

The evaluation datasets are the multi-turn dialogue Text-to-SQL datasets SparC and CoSQL.

The evaluation indicators are:

- QM: Question Match, the proportion of single-turn questions for which the generated SQL exactly matches the annotated output;

- IM: Interaction Match, the proportion of complete multi-turn dialogues in which every generated SQL exactly matches the annotated output.

In the comparative experiment in Table 1, MIGA surpassed the current best multi-turn dialogue Text-to-SQL models on the IM scores of both datasets and on the QM score of CoSQL. Compared with T5-based solutions of the same type, MIGA improved IM by 7.0% and QM by 5.8%.
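The two metrics can be sketched as follows. This is an illustration only: the exact-match test here is a simple normalized string comparison chosen as a stand-in, which is an assumption and not the official evaluation procedure, and all function names are hypothetical.

```python
from typing import List, Tuple

# One interaction is a list of (predicted_sql, gold_sql) pairs, one pair per turn.
Interaction = List[Tuple[str, str]]


def is_match(predicted: str, gold: str) -> bool:
    """Stand-in exact match: compare after lowercasing and collapsing whitespace."""
    def normalize(sql: str) -> str:
        return " ".join(sql.lower().split())
    return normalize(predicted) == normalize(gold)


def question_match(interactions: List[Interaction]) -> float:
    """QM: fraction of individual questions whose SQL matches the annotation."""
    total = sum(len(inter) for inter in interactions)
    correct = sum(is_match(p, g) for inter in interactions for p, g in inter)
    return correct / total if total else 0.0


def interaction_match(interactions: List[Interaction]) -> float:
    """IM: fraction of interactions in which every turn's SQL matches."""
    if not interactions:
        return 0.0
    correct = sum(all(is_match(p, g) for p, g in inter) for inter in interactions)
    return correct / len(interactions)
```

IM is the stricter of the two: a single wrong turn makes the whole interaction count as a miss, which is why error propagation across turns hurts it most.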

Table 1 Comparative experimental analysis. The first part lists tree-based models, and the second part lists generation models based on pre-training.

In the ablation experiment in Table 2, the study examined the individual tasks in MIGA's two-stage training process and showed that each of them improves the target task to a different degree.

Table 2 Ablation on the SparC task: removing any single task or dataset lowers the metrics.

In the case analysis, MIGA's generation is more stable and more correct than that of the model trained from T5-3B. MIGA does better than other models at multi-table JOIN operations and at mapping columns to tables. In Question#2 of Case#1, the T5-3B model fails to generate valid SQL for a relatively complex JOIN structure (a two-table join), which in turn leads to an incorrect prediction for the more complex JOIN structure (a three-table join) in Question#3. MIGA predicts the JOIN structure accurately and correctly retains the earlier condition t1.sex="f". In Case#2, T5-3B confuses multiple columns from different tables and mistakes earnings for a column of the people table, whereas MIGA correctly identifies that column as belonging to the poker_player table and links it to t1.

Table 3 Case analysis.

Conclusion

NetEase Interactive Entertainment AI Lab proposed MIGA, a two-stage multi-task pre-training model based on T5 for Text-to-SQL. In the pre-training stage, MIGA decomposes the Text-to-SQL task into three additional subtasks and unifies them into a sequence-to-sequence generation paradigm to make better use of the pre-trained T5 model. In the fine-tuning stage, it introduces a SQL perturbation mechanism to reduce the impact of error propagation in multi-turn Text-to-SQL generation.

In the future, the research team will explore more effective strategies for leveraging very large language models, and more elegant and effective ways to overcome the performance degradation caused by error propagation.

The above is the detailed content of Two-stage multi-task Text-to-SQL pre-training model MIGA based on T5. For more information, please follow other related articles on the PHP Chinese website!

AI Game Development Enters Its Agentic Era With Upheaval's Dreamer PortalMay 02, 2025 am 11:17 AM

AI Game Development Enters Its Agentic Era With Upheaval's Dreamer PortalMay 02, 2025 am 11:17 AMUpheaval Games: Revolutionizing Game Development with AI Agents Upheaval, a game development studio comprised of veterans from industry giants like Blizzard and Obsidian, is poised to revolutionize game creation with its innovative AI-powered platfor

Uber Wants To Be Your Robotaxi Shop, Will Providers Let Them?May 02, 2025 am 11:16 AM

Uber Wants To Be Your Robotaxi Shop, Will Providers Let Them?May 02, 2025 am 11:16 AMUber's RoboTaxi Strategy: A Ride-Hail Ecosystem for Autonomous Vehicles At the recent Curbivore conference, Uber's Richard Willder unveiled their strategy to become the ride-hail platform for robotaxi providers. Leveraging their dominant position in

AI Agents Playing Video Games Will Transform Future RobotsMay 02, 2025 am 11:15 AM

AI Agents Playing Video Games Will Transform Future RobotsMay 02, 2025 am 11:15 AMVideo games are proving to be invaluable testing grounds for cutting-edge AI research, particularly in the development of autonomous agents and real-world robots, even potentially contributing to the quest for Artificial General Intelligence (AGI). A

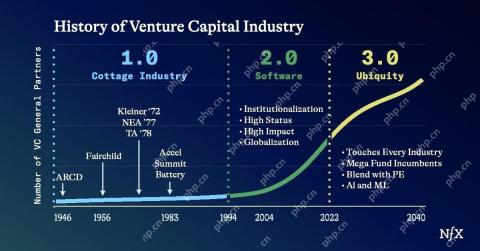

The Startup Industrial Complex, VC 3.0, And James Currier's ManifestoMay 02, 2025 am 11:14 AM

The Startup Industrial Complex, VC 3.0, And James Currier's ManifestoMay 02, 2025 am 11:14 AMThe impact of the evolving venture capital landscape is evident in the media, financial reports, and everyday conversations. However, the specific consequences for investors, startups, and funds are often overlooked. Venture Capital 3.0: A Paradigm

Adobe Updates Creative Cloud And Firefly At Adobe MAX London 2025May 02, 2025 am 11:13 AM

Adobe Updates Creative Cloud And Firefly At Adobe MAX London 2025May 02, 2025 am 11:13 AMAdobe MAX London 2025 delivered significant updates to Creative Cloud and Firefly, reflecting a strategic shift towards accessibility and generative AI. This analysis incorporates insights from pre-event briefings with Adobe leadership. (Note: Adob

Everything Meta Announced At LlamaConMay 02, 2025 am 11:12 AM

Everything Meta Announced At LlamaConMay 02, 2025 am 11:12 AMMeta's LlamaCon announcements showcase a comprehensive AI strategy designed to compete directly with closed AI systems like OpenAI's, while simultaneously creating new revenue streams for its open-source models. This multifaceted approach targets bo

The Brewing Controversy Over The Proposition That AI Is Nothing More Than Just Normal TechnologyMay 02, 2025 am 11:10 AM

The Brewing Controversy Over The Proposition That AI Is Nothing More Than Just Normal TechnologyMay 02, 2025 am 11:10 AMThere are serious differences in the field of artificial intelligence on this conclusion. Some insist that it is time to expose the "emperor's new clothes", while others strongly oppose the idea that artificial intelligence is just ordinary technology. Let's discuss it. An analysis of this innovative AI breakthrough is part of my ongoing Forbes column that covers the latest advancements in the field of AI, including identifying and explaining a variety of influential AI complexities (click here to view the link). Artificial intelligence as a common technology First, some basic knowledge is needed to lay the foundation for this important discussion. There is currently a large amount of research dedicated to further developing artificial intelligence. The overall goal is to achieve artificial general intelligence (AGI) and even possible artificial super intelligence (AS)

Model Citizens, Why AI Value Is The Next Business YardstickMay 02, 2025 am 11:09 AM

Model Citizens, Why AI Value Is The Next Business YardstickMay 02, 2025 am 11:09 AMThe effectiveness of a company's AI model is now a key performance indicator. Since the AI boom, generative AI has been used for everything from composing birthday invitations to writing software code. This has led to a proliferation of language mod

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

SecLists

SecLists is the ultimate security tester's companion. It is a collection of various types of lists that are frequently used during security assessments, all in one place. SecLists helps make security testing more efficient and productive by conveniently providing all the lists a security tester might need. List types include usernames, passwords, URLs, fuzzing payloads, sensitive data patterns, web shells, and more. The tester can simply pull this repository onto a new test machine and he will have access to every type of list he needs.

SublimeText3 English version

Recommended: Win version, supports code prompts!

Zend Studio 13.0.1

Powerful PHP integrated development environment

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Notepad++7.3.1

Easy-to-use and free code editor