1. Background introduction

At ByteDance, deep learning applications are everywhere. Besides model quality, engineers must also make sure that online services behave consistently with training and perform well. In the early days, this usually required a division of labor and close cooperation between algorithm experts and engineering experts, an approach that carries relatively high costs for tasks such as diff troubleshooting and verification.

With the popularity of frameworks such as PyTorch and TensorFlow, deep learning model training and online inference have been unified. Developers only need to focus on the algorithm logic and call the framework's Python API to complete training and validation. The model can then be conveniently serialized and exported, and inference is handled by a unified high-performance C++ engine. This improves the developer experience from training to deployment.

However, a complete service usually still contains a lot of business logic, such as pre-processing and post-processing. This logic typically converts various inputs into Tensors, feeds them to the model, and then converts the model's output Tensors into the target format. Some typical scenarios are as follows:

- BERT

- ResNet
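As a rough illustration of the pre-processing and post-processing logic described above, here is a minimal pure-Python sketch of the chain "raw input, Tensor-like ids, model scores, target format". Everything in it is hypothetical and chosen for brevity: the vocabulary, the label names, and `fake_model`, which only stands in for a real network and uses plain lists in place of Tensors.

```python
from typing import Dict, List

# Hypothetical vocabulary and labels, for illustration only.
VOCAB: Dict[str, int] = {"[PAD]": 0, "[UNK]": 1, "good": 2, "bad": 3, "movie": 4}
LABELS: List[str] = ["negative", "positive"]


def preprocess(text: str, max_len: int = 4) -> List[int]:
    """Raw text -> fixed-length list of token ids (stands in for a Tensor)."""
    ids = [VOCAB.get(tok, VOCAB["[UNK]"]) for tok in text.lower().split()]
    ids = ids[:max_len]
    return ids + [VOCAB["[PAD]"]] * (max_len - len(ids))


def fake_model(ids: List[int]) -> List[float]:
    """Placeholder for the real network: one score per label."""
    negative = float(ids.count(VOCAB["bad"]))
    positive = float(ids.count(VOCAB["good"]))
    return [negative, positive]


def postprocess(scores: List[float]) -> str:
    """Model scores -> the format the business logic expects."""
    best = max(range(len(scores)), key=scores.__getitem__)
    return LABELS[best]


def serve(text: str) -> str:
    """End-to-end path: pre-processing, model, post-processing."""
    return postprocess(fake_model(preprocess(text)))


print(serve("Good movie"))
```

Only `fake_model` corresponds to what a framework export covers; `preprocess` and `postprocess` are the parts that traditionally get rewritten by hand for serving.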

Our goal is to provide an automated, unified training and inference solution for the end-to-end process above, eliminating problems such as hand-written inference pipelines and diff alignment, and enabling a unified solution for large-scale deployment.

2. Core issues

Frameworks such as PyTorch and TensorFlow have largely solved the problem of unifying model training and inference, so the model computation itself is not the issue here (operator performance optimization is beyond the scope of this discussion).

The core problem to be solved is that pre-processing and post-processing need a high-performance solution that unifies training and inference.

For this kind of logic, TensorFlow 2.x provides tf.function (not yet complete) and PyTorch provides TorchScript; both, without exception, support a subset of native Python syntax. Powerful as they are, they still have problems that cannot be ignored:

- Performance: Most of these solutions are implemented on top of a virtual machine. A virtual machine is flexible and very controllable, but the virtual machines in deep learning frameworks usually do not perform well enough. For context, these frameworks were originally designed for Tensor computation, where each operator is expensive enough that the cost of virtual machine dispatch and scheduling is negligible. When the same machinery is used for general-purpose programming logic, however, that overhead is hard to ignore, and code of any significant size becomes a performance bottleneck. According to tests, the TorchScript interpreter reaches only about 1/5 of the performance of Python, and tf.function performs even worse.

- Incomplete functionality: In real scenarios we still find many important features that tf.function and TorchScript do not support. For example, custom resources cannot be packaged, and only built-in types can be serialized; strings can only be processed as bytes, so Unicode text such as Chinese causes diffs; containers must be homogeneous and do not support custom types; and so on.

Furthermore, there are many non-deep-learning applications and subtasks. In natural language processing, for example, these include sequence labeling, language model decoding, and manual feature construction for tree models. These usually have more flexible feature paradigms, but they lack a complete end-to-end solution that unifies training and inference, so a lot of development and correctness verification work is still required.
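The string limitation in the list above is easy to reproduce in plain Python. The sketch below (the sample text and helper names are made up for illustration) applies the same "take the first two characters" operation to a Unicode string and to its UTF-8 bytes. The results differ, which is the kind of diff that appears when training code works on `str` but the serving runtime only handles bytes.

```python
text = "你好 world"          # Unicode string, as Python training code sees it
raw = text.encode("utf-8")   # byte string, as a bytes-only runtime sees it


def first_chars_unicode(s: str, n: int) -> str:
    """Slice by Unicode code points."""
    return s[:n]


def first_chars_bytes(b: bytes, n: int) -> str:
    """Slice by bytes, then decode; multi-byte characters get cut apart."""
    return b[:n].decode("utf-8", errors="replace")


print(len(text), len(raw))                 # character count vs byte count
print(first_chars_unicode(text, 2))        # the two Chinese characters
print(repr(first_chars_bytes(raw, 2)))     # a broken fragment, not "你好"
```

Each Chinese character here occupies three bytes in UTF-8, so any length, slicing, or truncation logic written against characters silently changes meaning when it runs against bytes.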

In order to solve the above problems, we have developed a preprocessing solution based on compilation: MATXScript!

3. MATXScript

When developing deep learning algorithms, developers usually use Python for rapid iteration and experimentation while using C++ to develop high-performance online services. Correctness verification and service development then become a heavy burden!

MatxScript (https://github.com/bytedance/matxscript) is an AOT compiler for a subset of the Python language. It automatically translates Python into C++ and provides one-click packaging and publishing. With MATXScript, developers can iterate on models quickly while deploying high-performance services at a lower cost.
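To give a feel for what translating Python into C++ ahead of time means, the toy translator below walks the Python AST of a single type-annotated function and emits C++ source text. It is only a sketch built on the standard `ast` module and is not how MATXScript is implemented; the type mapping, the supported syntax (one `return` statement with `+`, `-`, `*` expressions), and all names are assumptions made for illustration.

```python
import ast

# Hypothetical mapping from Python annotations to C++ types.
CPP_TYPES = {"int": "int64_t", "float": "double"}
BIN_OPS = {ast.Add: "+", ast.Sub: "-", ast.Mult: "*"}


def expr_to_cpp(node: ast.expr) -> str:
    """Translate a tiny expression subset: names, numbers, + - *."""
    if isinstance(node, ast.Name):
        return node.id
    if (isinstance(node, ast.Constant)
            and isinstance(node.value, (int, float))
            and not isinstance(node.value, bool)):
        return repr(node.value)
    if isinstance(node, ast.BinOp) and type(node.op) in BIN_OPS:
        left = expr_to_cpp(node.left)
        right = expr_to_cpp(node.right)
        return f"({left} {BIN_OPS[type(node.op)]} {right})"
    raise NotImplementedError(f"unsupported expression: {ast.dump(node)}")


def translate(source: str) -> str:
    """Translate one annotated Python function into C++ source text."""
    func = ast.parse(source).body[0]
    if not isinstance(func, ast.FunctionDef):
        raise ValueError("expected a single function definition")
    stmt = func.body[0]
    if len(func.body) != 1 or not isinstance(stmt, ast.Return):
        raise NotImplementedError("only a single return statement is supported")
    # The annotations supply the static types that C++ needs.
    args = ", ".join(f"{CPP_TYPES[a.annotation.id]} {a.arg}" for a in func.args.args)
    ret = CPP_TYPES[func.returns.id]
    return f"{ret} {func.name}({args}) {{\n  return {expr_to_cpp(stmt.value)};\n}}"


PY_SRC = "def scale(x: int, k: int) -> int:\n    return x * k + 1\n"
cpp_src = translate(PY_SRC)
print(cpp_src)
```

The sketch also hints at why such compilers work on a typed subset of Python: without the annotations there would be no way to choose C++ types at compile time.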

The core architecture is as follows:

- The lowest layer is a pure C++/CUDA basic library, developed by high-performance operator experts.

- On top of the basic library, a Python library is wrapped according to convention and can be used during training.

- When inference is required, MATXScript translates the Python code into equivalent C++ code, compiles it into a dynamic link library, adds the model and other dependent resources, and packages and publishes everything together.

Among these, the compiler plays a critical role; its core process is as follows:

Through the process above, the preprocessing code written by the user is compiled into a JitOp in the Pipeline. To connect pre- and post-processing with the model, we also developed a tracing system (its interface design follows PyTorch). The architecture is as follows:

Based on MATXScript, we can use the same code for training and inference, which greatly reduces the cost of model deployment. At the same time, architecture and algorithm work are decoupled: algorithm engineers can work entirely in Python, while infrastructure engineers focus on compiler development and runtime optimization. At ByteDance, this solution has been validated by large-scale deployment!
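The idea behind the tracing system mentioned in this section can be sketched in a few lines of plain Python: call the pipeline once with a symbolic placeholder, record which ops are invoked, and replay the recorded graph later with real inputs. This is a conceptual toy, not MATXScript's tracer; `Symbol`, `Op`, `TracedModule`, and `trace` are hypothetical names, and the sketch handles only single-input ops and does no serialization.

```python
from typing import Any, Callable, Dict, List, Tuple

Node = Tuple[str, Callable[[Any], Any], str]  # (output name, function, input name)


class Symbol:
    """Placeholder for a value that will only exist at run time."""

    def __init__(self, name: str, nodes: List[Node]) -> None:
        self.name = name
        self.nodes = nodes  # shared list of recorded graph nodes


class Op:
    """Runs eagerly on real values, records a graph node on Symbols."""

    def __init__(self, fn: Callable[[Any], Any]) -> None:
        self.fn = fn

    def __call__(self, x: Any) -> Any:
        if isinstance(x, Symbol):
            out = Symbol(f"t{len(x.nodes)}", x.nodes)
            x.nodes.append((out.name, self.fn, x.name))
            return out
        return self.fn(x)


class TracedModule:
    """Recorded graph that can be replayed with a feed dict."""

    def __init__(self, nodes: List[Node], output_name: str) -> None:
        self.nodes = nodes
        self.output_name = output_name

    def run(self, feed: Dict[str, Any]) -> Any:
        env = dict(feed)
        for out_name, fn, in_name in self.nodes:
            env[out_name] = fn(env[in_name])
        return env[self.output_name]


def trace(func: Callable[[Any], Any], input_name: str) -> TracedModule:
    nodes: List[Node] = []
    result = func(Symbol(input_name, nodes))
    return TracedModule(nodes, result.name)


TABLE = {"hello": 0, "world": 1}
split = Op(str.split)
lookup = Op(lambda words: [TABLE.get(w, 2) for w in words])


def process(texts):
    return lookup(split(texts))


mod = trace(process, "texts")
result = mod.run({"texts": "hello world unknown"})
print(result)
```

Because the recorded graph refers only to ops and named inputs, a real system can save it to disk and replay it from another language runtime, which is what makes a shared training and inference pipeline possible.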

4. A quick example

Here we use the simplest English text preprocessing as an example to show how to use MATXScript.

Goal: Convert a piece of English text into indexes

- Write the basic dictionary lookup logic

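    from typing import Dict, List

    class Text2Ids:
        def __init__(self) -> None:
            self.table: Dict[str, int] = {
                "hello": 0,
                "world": 1,
                "[UNK]": 2,
            }

        def lookup(self, word: str) -> int:
            # Unknown words map to the id of "[UNK]"
            return self.table.get(word, 2)

        def __call__(self, text: str) -> List[int]:
            # Split the text into words, then look up each word
            return [self.lookup(w) for w in text.split()]

- Write the Pipeline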

    import matx

    class WorkFlow:
        def __init__(self):
            # Compilation happens here: the Python code is compiled automatically
            # and wrapped as a callable object
            self.text2ids = matx.script(Text2Ids)()

        def process(self, texts):
            ids = self.text2ids(texts)
            return ids

    # test
    handler = WorkFlow()
    print(handler.process("hello world unknown"))
    # output: [0, 1, 2]

- Trace and export to disk

    # dump
    mod = matx.trace(handler.process, "hello world")
    print(mod.run({"texts": "hello world"}))
    mod.save('./my_dir')

    # load (-1 means CPU)
    mod = matx.load('./my_dir', -1)
    print(mod.run({"texts": "hello world"}))

- Load from C++

    #include <iostream>
    #include <string>
    #include <unordered_map>
    #include <utility>
    #include <vector>

    #include <matxscript/pipeline/tx_session.h>

    using namespace ::matxscript::runtime;

    int main() {
      // test case
      std::unordered_map<std::string, RTValue> feed_dict;
      feed_dict.emplace("texts", Unicode(U"hello world"));

      const char* module_path = "./my_dir";
      const char* module_name = "model.spec.json";
      {
        // -1 means CPU
        auto sess = TXSession::Load(module_path, module_name, -1);
        // Outputs come back as (name, value) pairs
        std::vector<std::pair<std::string, RTValue>> result = sess->Run(feed_dict);
        for (auto& r : result) {
          std::cout << "key: " << r.first << ", value: " << r.second << std::endl;
        }
      }
      return 0;
    }

For the complete code, see: https://github.com/bytedance/matxscript/tree/main/examples/text2ids

Summary: The above is a very simple piece of preprocessing logic implemented in pure Python that can be loaded and run by generic C++ code. Next, we combine it with a model to show a real multimodal end-to-end case!
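Because the preprocessing logic is ordinary Python, it can also serve as a reference when checking for diffs between two implementations. The sketch below is a hypothetical helper, not part of MATXScript: `find_diffs` runs a reference callable and a candidate callable over the same cases and reports the inputs on which they disagree. The lookup functions are stand-ins modeled on the example above; in practice the candidate would be the compiled or exported version.

```python
from typing import Callable, Dict, List

TABLE: Dict[str, int] = {"hello": 0, "world": 1, "[UNK]": 2}


def reference_lookup(text: str) -> List[int]:
    """Plain-Python reference: split on whitespace, look up each word."""
    return [TABLE.get(w, TABLE["[UNK]"]) for w in text.split()]


def lowercasing_lookup(text: str) -> List[int]:
    """Deliberately different candidate: lowercases before the lookup."""
    return [TABLE.get(w, TABLE["[UNK]"]) for w in text.lower().split()]


def find_diffs(reference: Callable[[str], List[int]],
               candidate: Callable[[str], List[int]],
               cases: List[str]) -> List[str]:
    """Return the inputs on which the two implementations disagree."""
    return [case for case in cases if reference(case) != candidate(case)]


cases = ["hello world", "hello unknown", "Hello world", ""]
same = find_diffs(reference_lookup, reference_lookup, cases)
different = find_diffs(reference_lookup, lowercasing_lookup, cases)
print(same)
print(different)
```

When training and serving share one implementation, this kind of check becomes a regression test rather than a recurring manual alignment task.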

5. Multi-modal case

Here we take image-text multimodality (BERT + ResNet) as an example. The model is written in PyTorch, and we show the actual work involved in training and deployment.

- Configure the environment

a. Configure gcc/cuda and other infrastructure (usually the operations team has already done this)

b. Install MATXScript and the basic libraries built on it (text, vision, etc.)

- Write the model code

a. Omitted here; you can refer to papers or other open-source implementations

- Write the preprocessing code

a. text

    from typing import List, Dict, Tuple
    import libcut
    import matx

    class Vocabulary:
        ...

    def utf8_decoder(s: List[bytes]):
        return [x.decode() for x in s]

    class TextNDArrayBuilder:
        ...

    class TextPipeline:
        def __init__(self, mode: str = "eval"):
            self.mode = mode
            self.cut_engine = libcut.Cutter('/path/to/cut_models', ...)
            self.vocab = matx.script(Vocabulary)('/path/to/vocab.txt')
            self.decoder = matx.script(utf8_decoder)
            self.input_builder = matx.script(TextNDArrayBuilder)(self.vocab)

        def process(self, text: List[bytes]):
            # List[bytes] corresponds to C++ vector<string>
            text: List[str] = self.decoder(text)
            words: List[List[str]] = self.cut_engine(text)
            batch_ids: List[List[int]] = self.vocab(words)
            input_ids, segment_ids, mask_ids = self.input_builder(batch_ids, 32)
            if self.mode == "train":
                return input_ids.torch(), segment_ids.torch(), mask_ids.torch()
            return input_ids, segment_ids, mask_ids

b. vision

    from typing import List, Dict, Tuple
    import matx
    from matx import vision

    class VisionPipeline:
        def __init__(self,
                     device_id: int = 0,
                     mode: str = "eval",
                     image_size: int = 224):
            self.is_training = mode == 'train'
            self.mode = mode
            ...

        def process(self, image):
            if self.is_training:
                decode_nds = self.random_crop_decode(image)
                flip_nds = self.random_flip(decode_nds)
                resize_nds = self.resize(flip_nds)
                transpose_nd = self.transpose_norm(resize_nds, vision.SYNC)
            else:
                decode_nds = self.decode(image)
                resize_nds = self.resize(decode_nds)
                crop_nds = self.center_crop(resize_nds)
                transpose_nd = self.transpose_norm(crop_nds, vision.SYNC)
            if self.mode == "trace":
                return transpose_nd
            return transpose_nd.torch()

- Connect to a DataLoader

a. TextPipeline can be used as an ordinary Python class and plugged into a Dataset

b. VisionPipeline involves GPU preprocessing and is better suited to batch processing, so you need to build a separate DataLoader yourself (as a teaser: we will open-source ByteDance's internal multi-threaded DataLoader later)

- Add the model code and start training

- Export the end-to-end Inference Model

    import torch

    class MultimodalEvalPipeline:
        def __init__(self):
            self.text_pipe = TextPipeline(mode="eval", ...)
            self.vision_pipe = VisionPipeline(mode="eval", ...)
            self.torch_model = torch.jit.load('/path/to/multimodal.jit', map_location='cuda:0')
            self.tx_model_op = matx.script(self.torch_model, device=0)

        def eval(self, texts: List[bytes], images: List[bytes]):
            input_ids, segment_ids, mask_ids = self.text_pipe.process(texts)
            images = self.vision_pipe.process(images)
            scores = self.tx_model_op(input_ids, segment_ids, mask_ids, images)
            return scores

    # examples
    example_batch_size = 8
    text_examples = ['hello, world'.encode()] * example_batch_size
    with open('/path/image.jpg', 'rb') as f:
        image_example = f.read()
    image_examples = [image_example] * example_batch_size

    # pipeline instance
    pipe = MultimodalEvalPipeline(...)
    mod = matx.trace(pipe.eval, text_examples, image_examples)

    # test
    print(mod.run({"texts": text_examples, "images": image_examples}))

    # save
    mod.save('/path/to/my_multimodal')

Summary: With the steps above, we complete the end-to-end training and release work. The entire process is written in pure Python and can be fully controlled by the algorithm engineers themselves. Of course, if the model computation itself has performance problems, these can also be addressed behind the scenes through automatic graph rewriting and optimization.

Note: For complete code examples, see https://github.com/bytedance/matxscript/tree/main/examples/e2e_multi_modal
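The text pipeline above leaves `TextNDArrayBuilder` elided. As a rough guess at the kind of work such a builder does, the sketch below pads or truncates each id sequence to a fixed length and produces BERT-style `input_ids`, `segment_ids`, and `mask_ids`. It is a hypothetical stand-in, not the author's class: it returns plain Python lists rather than NDArrays, adds no special tokens, and the name `build_inputs` and the `pad_id` parameter are assumptions.

```python
from typing import List, Tuple

Batch = List[List[int]]


def build_inputs(batch_ids: Batch, max_len: int,
                 pad_id: int = 0) -> Tuple[Batch, Batch, Batch]:
    """Pad/truncate to max_len and build BERT-style input triples."""
    input_ids: Batch = []
    segment_ids: Batch = []
    mask_ids: Batch = []
    for ids in batch_ids:
        ids = ids[:max_len]                      # truncate long sequences
        pad = max_len - len(ids)                 # number of padding slots
        input_ids.append(ids + [pad_id] * pad)
        segment_ids.append([0] * max_len)        # single-segment input
        mask_ids.append([1] * len(ids) + [0] * pad)  # 1 = real token
    return input_ids, segment_ids, mask_ids


inputs, segments, masks = build_inputs([[5, 6, 7], [8]], max_len=4)
print(inputs)
print(masks)
```

Producing fixed-shape outputs at this step is what lets the traced pipeline hand a whole batch to the model in one call.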

6. Unified Server

In the previous chapter, we obtained a model package released by an algorithm engineer. This chapter discusses how to load and run it with a unified service.

A complete Server includes: the IDL protocol, batching strategy, process/thread scheduling and placement, model inference...

Here we only discuss model inference; the rest can be developed according to convention. We use a main function to illustrate the process of loading and running the model:

    #include <iostream>
    #include <string>
    #include <unordered_map>
    #include <utility>
    #include <vector>

    #include <matxscript/pipeline/tx_session.h>

    using namespace ::matxscript::runtime;

    int main() {
      // test case
      std::unordered_map<std::string, RTValue> feed_dict;
      feed_dict.emplace("texts", List({String("hello world")}));
      feed_dict.emplace("images", List({String("......")}));

      const char* module_path = "/path/to/my_multimodal";
      const char* module_name = "model.spec.json";
      {
        // cuda:0
        auto sess = TXSession::Load(module_path, module_name, 0);
        // Outputs come back as (name, value) pairs
        std::vector<std::pair<std::string, RTValue>> result = sess->Run(feed_dict);
        for (auto& r : result) {
          std::cout << "key: " << r.first << ", value: " << r.second << std::endl;
        }
      }
      return 0;
    }

The above code is the simplest case of loading a multimodal model in C++. Server developers only need to add some simple abstractions and conventions to turn the code above into a unified C++ model serving framework.
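Of the Server components listed in this chapter, the batching strategy is the easiest to sketch independently of any framework. The example below is a simplified, synchronous illustration in Python: it groups pending requests into batches of at most `max_batch_size`, calls a model function once per batch, and returns the results in request order. `run_batched` and `fake_session_run` are hypothetical names; the latter only stands in for a real session call, and a production server would also need timeouts and concurrency control.

```python
from typing import Callable, List, Sequence

ModelFn = Callable[[List[bytes]], List[float]]


def run_batched(requests: Sequence[bytes], model_fn: ModelFn,
                max_batch_size: int) -> List[float]:
    """Run model_fn over requests in fixed-size batches, keeping order."""
    if max_batch_size <= 0:
        raise ValueError("max_batch_size must be positive")
    results: List[float] = []
    for start in range(0, len(requests), max_batch_size):
        batch = list(requests[start:start + max_batch_size])
        outputs = model_fn(batch)
        if len(outputs) != len(batch):
            raise ValueError("model returned a different number of results")
        results.extend(outputs)
    return results


seen_batch_sizes: List[int] = []


def fake_session_run(batch: List[bytes]) -> List[float]:
    """Stand-in for a real inference call: scores each item by its length."""
    seen_batch_sizes.append(len(batch))
    return [float(len(item)) for item in batch]


scores = run_batched([b"a", b"bb", b"ccc"], fake_session_run, max_batch_size=2)
print(scores)
print(seen_batch_sizes)
```

Keeping batching outside the model package is one way to let the same exported artifact be served with different batch sizes per deployment.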

7. More information

We are the ByteDance AML machine learning systems team. We are committed to providing the company with a unified, high-performance framework that integrates training and inference, and we also serve partner companies through the Volcano Engine Machine Learning Platform. The platform is expected to provide MATX-related support from 2023, including preset image environments, public samples for common scenarios, and technical support during enterprise onboarding and use, enabling low-cost acceleration and integration of training and inference scenarios. You are welcome to learn more about our products at https://www.volcengine.com/product/ml-platform.

The above is the detailed content of Bytedance model large-scale deployment actual combat. For more information, please follow other related articles on the PHP Chinese website!

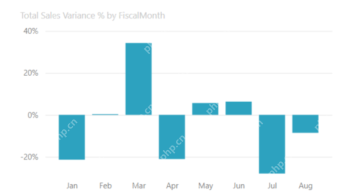

Most Used 10 Power BI Charts - Analytics VidhyaApr 16, 2025 pm 12:05 PM

Most Used 10 Power BI Charts - Analytics VidhyaApr 16, 2025 pm 12:05 PMHarnessing the Power of Data Visualization with Microsoft Power BI Charts In today's data-driven world, effectively communicating complex information to non-technical audiences is crucial. Data visualization bridges this gap, transforming raw data i

Expert Systems in AIApr 16, 2025 pm 12:00 PM

Expert Systems in AIApr 16, 2025 pm 12:00 PMExpert Systems: A Deep Dive into AI's Decision-Making Power Imagine having access to expert advice on anything, from medical diagnoses to financial planning. That's the power of expert systems in artificial intelligence. These systems mimic the pro

Three Of The Best Vibe Coders Break Down This AI Revolution In CodeApr 16, 2025 am 11:58 AM

Three Of The Best Vibe Coders Break Down This AI Revolution In CodeApr 16, 2025 am 11:58 AMFirst of all, it’s apparent that this is happening quickly. Various companies are talking about the proportions of their code that are currently written by AI, and these are increasing at a rapid clip. There’s a lot of job displacement already around

Runway AI's Gen-4: How Can AI Montage Go Beyond AbsurdityApr 16, 2025 am 11:45 AM

Runway AI's Gen-4: How Can AI Montage Go Beyond AbsurdityApr 16, 2025 am 11:45 AMThe film industry, alongside all creative sectors, from digital marketing to social media, stands at a technological crossroad. As artificial intelligence begins to reshape every aspect of visual storytelling and change the landscape of entertainment

How to Enroll for 5 Days ISRO AI Free Courses? - Analytics VidhyaApr 16, 2025 am 11:43 AM

How to Enroll for 5 Days ISRO AI Free Courses? - Analytics VidhyaApr 16, 2025 am 11:43 AMISRO's Free AI/ML Online Course: A Gateway to Geospatial Technology Innovation The Indian Space Research Organisation (ISRO), through its Indian Institute of Remote Sensing (IIRS), is offering a fantastic opportunity for students and professionals to

Local Search Algorithms in AIApr 16, 2025 am 11:40 AM

Local Search Algorithms in AIApr 16, 2025 am 11:40 AMLocal Search Algorithms: A Comprehensive Guide Planning a large-scale event requires efficient workload distribution. When traditional approaches fail, local search algorithms offer a powerful solution. This article explores hill climbing and simul

OpenAI Shifts Focus With GPT-4.1, Prioritizes Coding And Cost EfficiencyApr 16, 2025 am 11:37 AM

OpenAI Shifts Focus With GPT-4.1, Prioritizes Coding And Cost EfficiencyApr 16, 2025 am 11:37 AMThe release includes three distinct models, GPT-4.1, GPT-4.1 mini and GPT-4.1 nano, signaling a move toward task-specific optimizations within the large language model landscape. These models are not immediately replacing user-facing interfaces like

The Prompt: ChatGPT Generates Fake PassportsApr 16, 2025 am 11:35 AM

The Prompt: ChatGPT Generates Fake PassportsApr 16, 2025 am 11:35 AMChip giant Nvidia said on Monday it will start manufacturing AI supercomputers— machines that can process copious amounts of data and run complex algorithms— entirely within the U.S. for the first time. The announcement comes after President Trump si

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Atom editor mac version download

The most popular open source editor

MinGW - Minimalist GNU for Windows

This project is in the process of being migrated to osdn.net/projects/mingw, you can continue to follow us there. MinGW: A native Windows port of the GNU Compiler Collection (GCC), freely distributable import libraries and header files for building native Windows applications; includes extensions to the MSVC runtime to support C99 functionality. All MinGW software can run on 64-bit Windows platforms.

EditPlus Chinese cracked version

Small size, syntax highlighting, does not support code prompt function

Dreamweaver Mac version

Visual web development tools

Notepad++7.3.1

Easy-to-use and free code editor