The disadvantages of ChatGPT for production-grade conversational AI systems

Translator|Bugatti

Reviewer|Sun Shujuan

ChatGPT has attracted worldwide attention with its detailed, human-like written responses, triggering a lively discussion about how people should interact with this artificial intelligence (AI). In many ways, ChatGPT is an upgraded version of its predecessor, GPT-3.5, but it is still prone to making things up. Experts say that for production-grade applications, AI developers may want to combine ChatGPT with other tools to build a complete solution.

ChatGPT and GPT-3.5 were developed by OpenAI and trained on Microsoft Azure. Both are conversational AI systems based on large language models, but there are significant differences between them.

First of all, Generative Pre-trained Transformer (GPT) 3.5 came out earlier than ChatGPT, and its neural network has more layers than ChatGPT's. GPT-3.5 was developed as a general-purpose language model that can handle many tasks, including translating languages, summarizing text, and answering questions. OpenAI provides a set of APIs for GPT-3.5, which gives developers a more efficient way to access its functionality.

ChatGPT is based on GPT-3.5 and was developed specifically as a chatbot ("conversational agent" is the term the industry prefers). One limiting factor is that ChatGPT has only a text interface and no API. ChatGPT was trained on a large set of conversational text, and it handles conversations better than GPT-3.5 and other generative models. It also generates responses faster than GPT-3.5, and those responses are more accurate.

However, both models tend to fabricate information, or, as those in the industry call it, “hallucinating.” The hallucination rate of ChatGPT is between 15% and 21%. The hallucination rate of GPT-3.5, meanwhile, ranges from around 20% to as high as 41%, so ChatGPT is an improvement in this regard.

Silicon Valley company Moveworks uses language models and other machine learning techniques in its conversational AI platform, which is used by companies across a wide range of industries. Jiang Chen, the company’s founder and vice president of machine learning, said that although ChatGPT often makes things up (a problem common to all language models), it is a major improvement over previous AI models.

“ChatGPT really impressed and surprised people,” said Chen, a former Google engineer who worked on the technology giant’s eponymous search engine. “Its reasoning capabilities may surprise many machine learning practitioners.”

Moveworks uses a variety of language models and other techniques to build customized AI systems for clients. It has been a big user of BERT, the language model open sourced by Google a few years ago. The company uses GPT-3.5 and has already started using ChatGPT.

However, according to Chen, ChatGPT has its limitations when it comes to building production-grade conversational AI systems. There are various factors to weigh when building a custom conversational AI system with this type of technology; it’s important to know where the lines are drawn in order to build a system that doesn’t give wrong answers, isn’t overly biased, and doesn’t keep people waiting too long.

Chen said that ChatGPT is better than BERT at generating meaningful responses to questions. Specifically, ChatGPT has more powerful "reasoning" capabilities than BERT, which is trained to predict masked words in a sentence.

While ChatGPT and GPT-3.5 can provide convincing responses to answer questions, their closed end-to-end nature prevents engineers like Chen from training them. This also creates a barrier to customizing corpora for industry-specific responses (retailers and manufacturers use different words than law firms and governments). This closed nature also makes it more difficult to reduce bias, he said.

BERT is small enough to be hosted by a company like Moveworks. The company built a data pipeline that collects data specific to each customer and feeds that data into the BERT model for training. This work gives Moveworks a greater degree of control over the final conversational AI product than is possible with closed systems like GPT-3.5 and ChatGPT.

“Our machine learning stack is layered,” Chen said. “We use BERT, but we also use other machine learning algorithms, which allows us to incorporate customer-specific logic and customer-specific data into it.” Chen said that although OpenAI's models are much larger and trained on much larger corpora, there is no way to know whether they are suitable for a specific customer.

He said: "The (ChatGPT) model is pre-trained to encode all the knowledge fed into it. It is not designed to perform any specific task itself. The reason it has been able to scale up and grow so rapidly is that the architecture itself is actually very simple. It's layers and layers of the same thing, so it all blends together, so to speak. Because of this architecture, you know it has the ability to learn, but you don't know where the information is encoded. You don't know which layers of neurons encode the specific information you want to infer, so it's more like a black box."

Chen believes that ChatGPT may be having a moment, but its usefulness as a production-grade tool for conversational AI may be somewhat exaggerated. A better approach is to leverage the strengths of multiple models, rather than committing entirely to one particular model, in order to better match the client's expectations for performance, accuracy, and bias, as well as the underlying capabilities of the technology.

He said: "Our strategy is to use a series of different models in different places. You can use the big model to teach the small model, and then the small model will be much faster. For example, if you want to do segmented search, you should use... some kind of BERT model and then run it as some kind of vector search engine. ChatGPT is too huge for this."
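To make the vector-search idea in Chen's quote concrete, here is a minimal sketch in Python. It is an illustration only, not Moveworks' implementation. The `embed` function is a toy bag-of-words stand-in for a BERT-style encoder, and the `VectorIndex` class and the sample passages are hypothetical names and data introduced for this example. The point it shows is that passages are encoded once at indexing time, so each query costs only one small encoding plus a similarity comparison, rather than a call to a very large generative model.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for a BERT-style encoder (assumption for illustration).

    A real system would return a dense vector from a small encoder model;
    here we use a sparse word-count vector so the sketch has no dependencies.
    """
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


class VectorIndex:
    """Hypothetical in-memory index: encode passages once, compare at query time."""

    def __init__(self, passages):
        self.passages = list(passages)
        self.vectors = [embed(p) for p in self.passages]

    def search(self, query: str, k: int = 1):
        """Return the k (score, passage) pairs most similar to the query."""
        q = embed(query)
        scored = [(cosine(q, v), p) for v, p in zip(self.vectors, self.passages)]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return scored[:k]


# Made-up sample passages, purely for demonstration.
PASSAGES = [
    "reset your vpn password from the it portal",
    "submit an expense report through the finance tool",
    "request a new laptop from the hardware catalog",
]

index = VectorIndex(PASSAGES)
top = index.search("how do i reset my vpn password", k=1)
print(top[0][1])
```

In a production setting the toy encoder would presumably be replaced by a small, self-hosted model and the linear scan by an approximate nearest-neighbor index; both substitutions leave the overall shape of the code unchanged.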

Although ChatGPT may have limited use in real-world applications right now, that doesn't mean it's unimportant. Chen said that one of ChatGPT's lasting impacts may be to attract the attention of practitioners and inspire people to push the envelope on what conversational AI technology can do in the future.

He said: "I do think it opens up a field. Going forward, as we open up the black box, I think there will be more interesting ways and applications. This is what we are excited about, and we are committed to research and development in this field."

Original title: The Drawbacks of ChatGPT for Production Conversational AI Systems, Author: Alex Woodie

The above is the detailed content of The disadvantages of ChatGPT for production-grade conversational AI systems. For more information, please follow other related articles on the PHP Chinese website!

Tool Calling in LLMsApr 14, 2025 am 11:28 AM

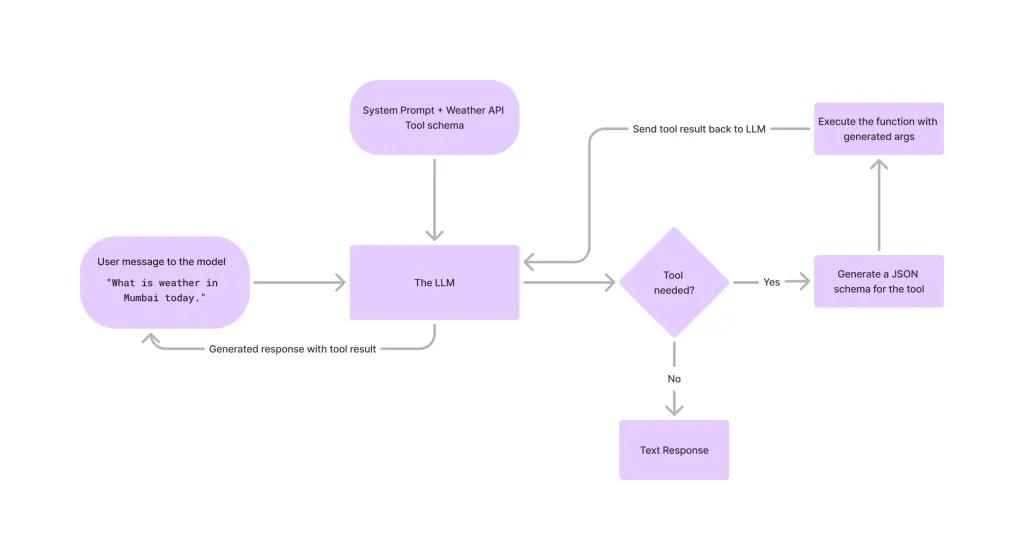

Tool Calling in LLMsApr 14, 2025 am 11:28 AMLarge language models (LLMs) have surged in popularity, with the tool-calling feature dramatically expanding their capabilities beyond simple text generation. Now, LLMs can handle complex automation tasks such as dynamic UI creation and autonomous a

How ADHD Games, Health Tools & AI Chatbots Are Transforming Global HealthApr 14, 2025 am 11:27 AM

How ADHD Games, Health Tools & AI Chatbots Are Transforming Global HealthApr 14, 2025 am 11:27 AMCan a video game ease anxiety, build focus, or support a child with ADHD? As healthcare challenges surge globally — especially among youth — innovators are turning to an unlikely tool: video games. Now one of the world’s largest entertainment indus

UN Input On AI: Winners, Losers, And OpportunitiesApr 14, 2025 am 11:25 AM

UN Input On AI: Winners, Losers, And OpportunitiesApr 14, 2025 am 11:25 AM“History has shown that while technological progress drives economic growth, it does not on its own ensure equitable income distribution or promote inclusive human development,” writes Rebeca Grynspan, Secretary-General of UNCTAD, in the preamble.

Learning Negotiation Skills Via Generative AIApr 14, 2025 am 11:23 AM

Learning Negotiation Skills Via Generative AIApr 14, 2025 am 11:23 AMEasy-peasy, use generative AI as your negotiation tutor and sparring partner. Let’s talk about it. This analysis of an innovative AI breakthrough is part of my ongoing Forbes column coverage on the latest in AI, including identifying and explaining

TED Reveals From OpenAI, Google, Meta Heads To Court, Selfie With MyselfApr 14, 2025 am 11:22 AM

TED Reveals From OpenAI, Google, Meta Heads To Court, Selfie With MyselfApr 14, 2025 am 11:22 AMThe TED2025 Conference, held in Vancouver, wrapped its 36th edition yesterday, April 11. It featured 80 speakers from more than 60 countries, including Sam Altman, Eric Schmidt, and Palmer Luckey. TED’s theme, “humanity reimagined,” was tailor made

Joseph Stiglitz Warns Of The Looming Inequality Amid AI Monopoly PowerApr 14, 2025 am 11:21 AM

Joseph Stiglitz Warns Of The Looming Inequality Amid AI Monopoly PowerApr 14, 2025 am 11:21 AMJoseph Stiglitz is renowned economist and recipient of the Nobel Prize in Economics in 2001. Stiglitz posits that AI can worsen existing inequalities and consolidated power in the hands of a few dominant corporations, ultimately undermining economic

What is Graph Database?Apr 14, 2025 am 11:19 AM

What is Graph Database?Apr 14, 2025 am 11:19 AMGraph Databases: Revolutionizing Data Management Through Relationships As data expands and its characteristics evolve across various fields, graph databases are emerging as transformative solutions for managing interconnected data. Unlike traditional

LLM Routing: Strategies, Techniques, and Python ImplementationApr 14, 2025 am 11:14 AM

LLM Routing: Strategies, Techniques, and Python ImplementationApr 14, 2025 am 11:14 AMLarge Language Model (LLM) Routing: Optimizing Performance Through Intelligent Task Distribution The rapidly evolving landscape of LLMs presents a diverse range of models, each with unique strengths and weaknesses. Some excel at creative content gen

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

ZendStudio 13.5.1 Mac

Powerful PHP integrated development environment

SublimeText3 Linux new version

SublimeText3 Linux latest version

VSCode Windows 64-bit Download

A free and powerful IDE editor launched by Microsoft

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Dreamweaver CS6

Visual web development tools