
MIT releases enhanced version of 'Advanced Mathematics' solver: accuracy rate reaches 81% in 7 courses

AI no longer stops at elementary-school math word problems: it has started to conquer advanced mathematics!

Recently, MIT researchers announced that, building on OpenAI's pre-trained Codex model, they achieved 81% accuracy on undergraduate-level mathematics problems through few-shot learning!

- Paper link: https://arxiv.org/abs/2112.15594

- Code link: https://github.com/idrori/mathq

Let's first look at a few sample questions and their answers, such as computing the volume of a solid generated by rotating the graph of a single-variable function about an axis, computing the Lorenz attractor and its projections, and computing and plotting the geometry of a singular value decomposition (SVD). Not only are the answers correct, but the model also gives the corresponding explanations!

It's truly hard to believe. Looking back, many of us barely scraped through advanced math, and now AI scores 81 points in one go. I hereby unilaterally declare that AI has surpassed humans.

Even more impressive, beyond solving problems that ordinary machine learning models struggle with, the research shows that the approach scales: it can solve problems from entire courses as well as from similar courses.

This is also the first time a single machine learning model has solved university-level math problems at this scale, and it can also explain its solutions, draw plots, and even generate new questions!

In fact, the paper was first released at the beginning of the year. After half a year of revisions, it has grown from 114 pages to 181 pages and can solve more math problems; the appendices alone run all the way from A to Z.

The authors come mainly from four institutions: MIT, Columbia University, Harvard University, and the University of Waterloo.

The first author, Iddo Drori, is a lecturer in AI in MIT's Department of Electrical Engineering and Computer Science and an adjunct associate professor at Columbia University's School of Engineering and Applied Science. He won the CCAI NeurIPS 2021 Best Paper Award.

His main research areas include machine learning for education, which aims to get machines to solve, explain, and generate college-level mathematics and STEM course problems; machine learning for climate science, which uses thousands of years of data to predict extreme climate change and monitor the climate, integrating multidisciplinary work to predict changes in Atlantic Ocean biogeochemistry over the years; and machine learning algorithms for autonomous driving, among others.

He is also the author of The Science of Deep Learning published by Cambridge University Press.

A Milestone for University-Level Math

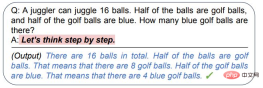

Before this paper, most researchers believed that neural networks could not handle advanced math problems and could solve only simple ones.

Even though Transformer models surpass human performance on many NLP tasks, they remain poor at solving math problems, mainly because large models such as GPT-3 are pre-trained only on text data.

Later, researchers found that language models can still be guided to reason through and answer some simple math questions via step-by-step analysis (chain of thought), but advanced math problems are much harder to crack.

To take on advanced math, the first step is to collect a batch of problems.

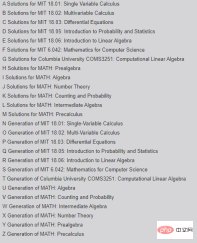

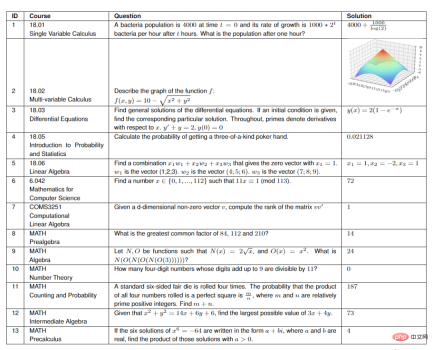

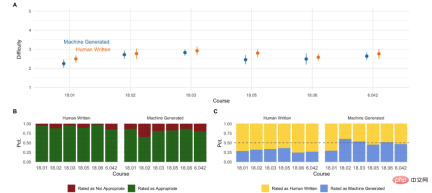

The authors randomly selected 25 problems from each of seven courses, six at MIT and one at Columbia University:

- 18.01 Single-variable Calculus

- 18.02 Multivariable Calculus

- 18.03 Differential Equations

- 18.05 Introduction to Probability and Statistics

- 18.06 Linear Algebra

- 6.042 Mathematics for Computer Science

- Columbia University COMS3251 Computational Linear Algebra

For the MATH dataset, the researchers randomly selected 15 questions from each of six of the dataset's topics (Algebra, Counting and Probability, Intermediate Algebra, Number Theory, Pre-Algebra, and Precalculus).
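
To make the sampling step concrete, here is a minimal Python sketch of drawing a fixed number of distinct questions uniformly at random from each course or topic. The question banks are hypothetical placeholders, generic topic names stand in for the six MATH topics, and reading the MATH figure as 15 questions per topic is an assumption. The helper name `sample_questions` is illustrative, not taken from the authors' code.

```python
import random


def sample_questions(banks: dict[str, list[str]], per_bank: int, seed: int = 0) -> dict[str, list[str]]:
    """Draw `per_bank` distinct questions uniformly at random from each bank."""
    rng = random.Random(seed)  # seeded so the draw can be reproduced
    return {name: rng.sample(questions, per_bank) for name, questions in banks.items()}


# Hypothetical placeholder banks standing in for the real problem sets.
course_banks = {
    course: [f"{course} problem {i}" for i in range(60)]
    for course in ["18.01", "18.02", "18.03", "18.05", "18.06", "6.042", "COMS3251"]
}
math_banks = {f"MATH topic {t}": [f"topic {t} problem {i}" for i in range(200)] for t in range(1, 7)}

course_sample = sample_questions(course_banks, per_bank=25)
math_sample = sample_questions(math_banks, per_bank=15)
total = sum(len(qs) for qs in course_sample.values()) + sum(len(qs) for qs in math_sample.values())
print(total)  # 7 * 25 + 6 * 15 = 265
```

Sampling without replacement (`Random.sample`) guarantees no question is picked twice within a course or topic.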

To verify that the model's results do not come from overfitting to its training data, the researchers also evaluated it on COMS3251, a course whose problems have not been published on the Internet.

Workflow

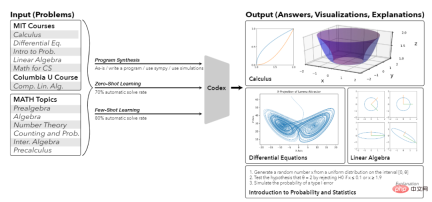

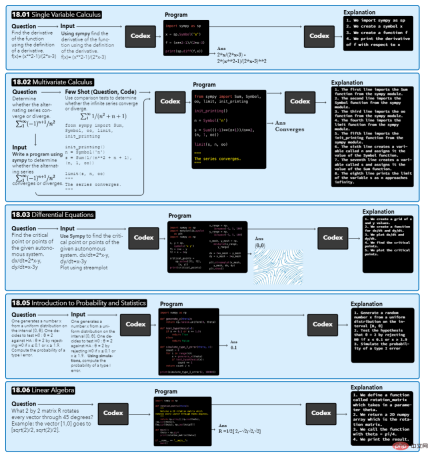

The model takes a course question as input, automatically augments it with context, synthesizes a program from it, and finally outputs the answer together with a generated explanation.

The form of the output depends on the question. For example, the sample 18.01 answer is an equation, the 18.02 answer a Boolean value, the 18.03 and 18.06 answers a graph or vector, and the 18.05 answer a numerical value.
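
The article does not say how the synthesized programs are executed. One plausible approach, sketched here as an assumption, is to run the program text with Python's `exec` and capture whatever it prints as the answer. `run_program` and the two stand-in programs are hypothetical. Graph answers would instead be saved or shown by the plotting library, which this sketch does not handle.

```python
import contextlib
import io


def run_program(source: str) -> str:
    """Execute a synthesized program in a fresh namespace and return its printed output.

    exec runs arbitrary code; a real pipeline would isolate this step
    (separate process, time limit, no network access).
    """
    buffer = io.StringIO()
    with contextlib.redirect_stdout(buffer):
        exec(source, {"__name__": "__main__"})  # fresh globals so runs don't share state
    return buffer.getvalue().strip()


# Hand-written stand-ins for synthesized programs with different answer types.
numeric_program = "import math\nprint(math.pi * 2 ** 2)\n"
boolean_program = "print(2 ** 10 > 1000)\n"

numeric_answer = run_program(numeric_program)  # text of a float close to 4 * pi
boolean_answer = run_program(boolean_program)  # "True"
```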

Given a question, the first step is to have the model find the question's relevant context. The researchers focused mainly on Python programs generated by Codex, so they prepended the text "write a program" to the question and placed it inside triple quotes, so that it reads as a docstring in a Python program.

The Codex prompt also needs to specify which libraries to import. The authors chose to prepend the string "use sympy" to the question as context, specifying that the program synthesized to solve the problem should use this package.
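
A minimal sketch of this prompt augmentation, assuming the two cue strings from the text ("use sympy" and "write a program") each sit on their own line ahead of the question inside a triple-quoted docstring. The exact layout the authors used may differ, and `build_prompt` is a hypothetical helper.

```python
import ast


def build_prompt(question: str, library: str = "sympy") -> str:
    """Turn a course question into a docstring-style prompt for a code model.

    Assumed layout: a 'use <library>' line, a 'write a program' line, then the
    question, all enclosed in triple quotes so the text reads as a docstring.
    """
    body = "\n".join([f"use {library}", "write a program", question.strip()])
    return f'"""\n{body}\n"""\n'


prompt = build_prompt("Find the derivative of x**3 + 2*x.")
print(prompt)

# The prompt is itself valid Python whose module docstring is the augmented question.
doc = ast.get_docstring(ast.parse(prompt))
```

Parsing the prompt with `ast` confirms that it is a syntactically valid module whose docstring is the augmented question, which is what lets a code model treat it as the start of a Python file. A question that itself contains triple quotes would need escaping first.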

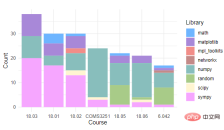

Counting the Python packages used in each course shows that all courses use NumPy and SymPy. Matplotlib is used only in courses with problems that require plotting, and about half of the courses use math, random, and SciPy. In practice, the researchers specified only SymPy or plotting-related packages for import; all other imports were synthesized automatically.
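
The article does not describe how these per-course package counts were collected. Assuming the generated programs are available as source text, one simple way is to parse each with Python's `ast` module and tally the top-level imported package names per course. `imported_packages`, `package_usage`, and the sample programs are hypothetical. Parsing does not execute the code, so the packages need not be installed.

```python
import ast
from collections import Counter


def imported_packages(source: str) -> set[str]:
    """Return the top-level package names a Python program imports."""
    packages: set[str] = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            # "import matplotlib.pyplot as plt" -> "matplotlib"
            packages.update(alias.name.split(".")[0] for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            # "from sympy import Matrix" -> "sympy"; relative imports are skipped
            packages.add(node.module.split(".")[0])
    return packages


def package_usage(programs_by_course: dict[str, list[str]]) -> dict[str, Counter]:
    """For each course, count how many of its programs import each package."""
    return {
        course: Counter(pkg for src in programs for pkg in imported_packages(src))
        for course, programs in programs_by_course.items()
    }


programs_by_course = {
    "18.06": [
        "import numpy as np\nprint(np.linalg.svd(np.eye(2)))\n",
        "from sympy import Matrix\nimport numpy\nprint(Matrix([[1, 2], [3, 4]]).det())\n",
    ],
    "18.01": ["import sympy as sp\nimport matplotlib.pyplot as plt\n"],
}
usage = package_usage(programs_by_course)
print(usage["18.06"])  # Counter({'numpy': 2, 'sympy': 1})
```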

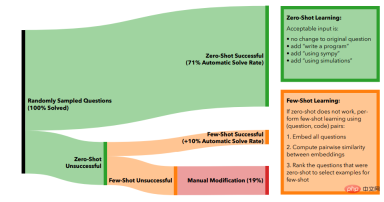

With zero-shot learning, 71% of the problems can be solved automatically using only automatic augmentation of the original question.

For problems that remain unsolved, the researchers turn to few-shot learning.

First, OpenAI's text-similarity-babbage-001 embedding engine is used to obtain 2048-dimensional embeddings of all problems. Cosine similarity is then computed between the vectors to find, for each unsolved problem, the most similar solved problem. Finally, that most similar problem and its corresponding code are used as the few-shot example for the new problem.
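
The retrieval step amounts to ranking solved questions by cosine similarity to the unsolved one. This sketch assumes the embeddings have already been obtained; toy 3-dimensional vectors stand in for the 2048-dimensional ones, and `SolvedExample` and `rank_by_similarity` are illustrative names, not part of any OpenAI API.

```python
import math
from typing import NamedTuple


class SolvedExample(NamedTuple):
    question: str
    code: str
    embedding: list[float]


def cosine_similarity(u: list[float], v: list[float]) -> float:
    """Cosine of the angle between two non-zero vectors of equal length."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


def rank_by_similarity(query: list[float], solved: list[SolvedExample]) -> list[SolvedExample]:
    """Order solved examples from most to least similar to the unsolved question."""
    return sorted(solved, key=lambda ex: cosine_similarity(query, ex.embedding), reverse=True)


# Toy 3-dimensional vectors stand in for the 2048-dimensional embeddings.
solved = [
    SolvedExample("Q_a", "code_a", [1.0, 0.0, 0.0]),
    SolvedExample("Q_b", "code_b", [0.7, 0.7, 0.0]),
    SolvedExample("Q_c", "code_c", [0.0, 0.0, 1.0]),
]
unsolved_embedding = [0.9, 0.1, 0.0]
ranked = rank_by_similarity(unsolved_embedding, solved)
print([ex.question for ex in ranked])  # ['Q_a', 'Q_b', 'Q_c']
```

The first element of `ranked` supplies the initial few-shot example; the rest provide the "next most similar" questions used on later attempts.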

If the generated code still does not output the correct answer, another solved question-code pair is added, each time using the next most similar solved question.

In practice, using up to 5 examples for few-shot learning works best: the share of automatically solved problems rises from 71% with zero-shot learning to 81% with few-shot learning.
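
The retry loop described above can be sketched as follows, assuming the solved examples are already ranked by similarity (most similar first). `generate` and `is_correct` are hypothetical stand-ins for the Codex call and the answer check, and the prompt layout is illustrative only.

```python
from typing import Callable, Optional


def solve_with_few_shot(
    question: str,
    ranked_examples: list[tuple[str, str]],
    generate: Callable[[str], str],
    is_correct: Callable[[str], bool],
    max_shots: int = 5,
) -> tuple[Optional[str], int]:
    """Add one more solved (question, code) example per attempt, up to max_shots.

    ranked_examples must already be sorted from most to least similar.
    Returns (code, examples_used), or (None, examples_used) if no attempt passes.
    """
    shots: list[str] = []
    for example_question, example_code in ranked_examples[:max_shots]:
        shots.append(f'"""\n{example_question}\n"""\n{example_code}\n\n')
        prompt = "".join(shots) + f'"""\n{question}\n"""\n'
        code = generate(prompt)
        if is_correct(code):
            return code, len(shots)
    return None, len(shots)


# Toy stand-ins: this "model" only succeeds once example Q3 is in its prompt.
def fake_generate(prompt: str) -> str:
    return "print(42)" if "Q3" in prompt else "print(0)"


def always_wrong(prompt: str) -> str:
    return "print(0)"


def fake_check(code: str) -> bool:
    return code == "print(42)"


examples = [(f"Q{i}", f"print({i})") for i in range(1, 8)]
found = solve_with_few_shot("target question", examples, fake_generate, fake_check)
missed = solve_with_few_shot("target question", examples, always_wrong, fake_check)
print(found)   # ('print(42)', 3)
print(missed)  # (None, 5)
```

Capping the loop at `max_shots` mirrors the "up to 5 examples" setting; questions still unsolved after that are the ones left for manual handling.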

To solve the remaining 19% of the problems, human editors are required to intervene.

The researchers gathered these remaining questions and found that most were vague or contained redundant information, such as references to movie characters or current events, so the questions had to be tidied up to extract their essence.

Tidying a question mainly involves removing redundant information, breaking long sentences into smaller components, and converting the prompt into a programming format.

Another case that requires manual intervention is when a question's answer takes multiple plotting steps to explain; Codex then has to be prompted interactively until the desired visualization is achieved.

Besides generating answers, the model should also be able to explain them. The researchers elicit this with the prompt "Here is what the above code is doing: 1.", which leads the model to produce a step-by-step explanation.
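
A minimal sketch of this explanation step, assuming the cue is placed on its own line after the program. The model's continuation then starts from step 1, and a naive splitter can recover the numbered steps. `build_explanation_prompt`, `parse_steps`, and the sample continuation are hypothetical; no model is called here.

```python
import re

EXPLAIN_CUE = "Here is what the above code is doing: 1."


def build_explanation_prompt(program: str) -> str:
    """Place the explanation cue after the program so the model continues with step 1."""
    return program.rstrip() + "\n\n" + EXPLAIN_CUE


def parse_steps(completion: str) -> list[str]:
    """Split the model's continuation into steps.

    The continuation follows the cue's trailing '1.', so the first step carries
    no number; later steps are assumed to start with ' 2. ', ' 3. ', and so on.
    This naive split would also cut at a sentence like 'Set n to 3. Then ...'.
    """
    parts = re.split(r"\s+\d+\.\s+", completion)
    return [part.strip() for part in parts if part.strip()]


prompt = build_explanation_prompt("import sympy as sp\nx = sp.Symbol('x')\nprint(sp.diff(x**3, x))\n")
# A made-up continuation of the kind a model might return.
steps = parse_steps(" Import sympy. 2. Define the symbol x. 3. Print the derivative of x**3.")
print(steps)  # ['Import sympy.', 'Define the symbol x.', 'Print the derivative of x**3.']
```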

Once the model can answer questions, the next step is to use Codex to generate new questions for each course.

The researchers created a numbered list of the questions written by students in each class, truncated it after a random number of questions, and used the result to prompt Codex to generate the next question.

This process is repeated until enough new questions have been created for each course.
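
The question-generation procedure can be sketched as follows. The numbering format, cutting the list after between 1 and all of the questions, and the duplicate filter are assumptions, and `fake_generate` merely stands in for a real Codex call.

```python
import random
from typing import Callable


def build_generation_prompt(questions: list[str], rng: random.Random) -> str:
    """Number the questions, cut the list after a random count, and open the next number."""
    k = rng.randint(1, len(questions))  # keep between 1 and all of the questions
    lines = [f"{i}. {q}" for i, q in enumerate(questions[:k], start=1)]
    lines.append(f"{k + 1}.")  # the model is expected to write question k + 1
    return "\n".join(lines)


def generate_questions(
    questions: list[str],
    generate: Callable[[str], str],
    target: int,
    seed: int = 0,
    max_attempts: int = 1000,
) -> list[str]:
    """Prompt repeatedly until `target` distinct new questions are collected.

    Skipping duplicates and capping attempts are safeguards added for this sketch.
    """
    rng = random.Random(seed)
    new_questions: list[str] = []
    for _ in range(max_attempts):
        if len(new_questions) >= target:
            break
        candidate = generate(build_generation_prompt(questions, rng)).strip()
        if candidate and candidate not in questions and candidate not in new_questions:
            new_questions.append(candidate)
    return new_questions


course_questions = [
    "Differentiate x**2.",
    "Integrate sin(x) from 0 to pi.",
    "Find the limit of sin(x)/x as x -> 0.",
]
sample_prompt = build_generation_prompt(course_questions, random.Random(1))

# Toy stand-in for the code model: it names questions by how many prompts it has seen.
seen_prompts: list[str] = []


def fake_generate(prompt: str) -> str:
    seen_prompts.append(prompt)
    return f" Generated question {len(seen_prompts)}"


generated = generate_questions(course_questions, fake_generate, target=3)
print(generated)  # ['Generated question 1', 'Generated question 2', 'Generated question 3']
```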

To evaluate the generated questions, the researchers surveyed MIT students who had taken these courses or their equivalents, asking them to compare the quality and difficulty of the machine-generated questions with those of the original course questions.

The student survey shows that:

- Machine-generated questions are already comparable in quality to human-written ones;

- In terms of difficulty, the human-written questions are better suited as course questions, while the machine-generated ones are somewhat harder;

- Students could tell that more than half of the course questions were model-generated; the course whose generated questions came closest to human-written ones was 18.01.

Reference Information:

https://www.reddit.com/r/artificial/comments/v8liqh/researchers_built_a_neural_network_that_not_only/
