AI outclasses human painters: text-to-image generation gains ControlNet, and depth and edge information become fully reusable

With the emergence of large text-to-image models, generating an attractive image has become very simple: all the user needs to do is type a short prompt. Once an image has been obtained this way, however, several questions inevitably arise. Can an image generated from a prompt meet our requirements? What kind of architecture should we build to handle the varied requirements users raise? Can large models retain the advantages and capabilities gained from billions of images when applied to specific tasks?

To answer these questions, researchers from Stanford surveyed a wide range of image processing applications and arrived at the following three findings:

First, the data available in a specific domain is usually far smaller than the data used to train general models. For example, the largest datasets for a specific problem (such as pose understanding) usually contain fewer than 100k samples, which is 5 × 10^4 times smaller than the large-scale multimodal text-image dataset LAION-5B. Training therefore has to be robust enough to avoid overfitting and to preserve generalization when a large model is adapted to a specific problem.
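As a rough check on the 5 × 10^4 figure, assume LAION-5B holds about 5 × 10^9 image-text pairs (an approximation taken from its name) and a task-specific dataset holds 10^5 samples:

```latex
\frac{5 \times 10^{9}}{10^{5}} = 5 \times 10^{4}
```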

Second, when image tasks are handled with data-driven methods, large computing clusters are not always available. This makes fast training methods important: methods that can optimize large models for specific tasks within acceptable time and memory budgets. Fine-tuning, transfer learning, and similar operations may also be required in later processing.

Finally, the problems encountered in image processing are defined in many different forms. An image diffusion algorithm can be adjusted in a "procedural" way to address them, for example by constraining the denoising process or editing multi-head attention activations, but such hand-crafted rules are ultimately dictated by human instructions. Specific tasks such as depth-to-image and pose-to-person essentially require interpreting raw inputs into an object-level or scene-level understanding, which makes hand-crafted procedural approaches less feasible. Therefore, to provide solutions across multiple tasks, end-to-end learning is essential.

Based on these findings, the paper proposes ControlNet, an end-to-end neural network architecture that controls a diffusion model (such as Stable Diffusion) by adding extra conditions, thereby improving image generation results. It can generate full-color images from line drawings, generate images that share the same depth structure, and improve the generation of hands by using hand keypoints.

Paper address: https://arxiv.org/pdf/2302.05543.pdf

Project Address: https://github.com/lllyasviel/ControlNet

Effect display

So how well does ControlNet work in practice?

Canny edge detection: by extracting a line drawing from the original image, an image with the same composition can be generated.

Depth detection: by extracting the depth information from the original image, an image with the same depth structure can be generated.

ControlNet with semantic segmentation:

Straight lines are detected in Places2 images using a learning-based deep Hough transform, and BLIP is then used to generate captions.

HED edge detection illustration.

Illustration of human posture recognition.

Method Introduction

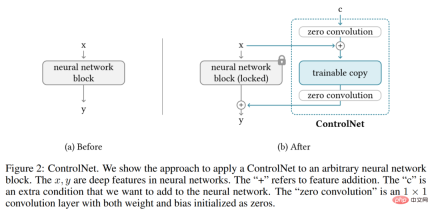

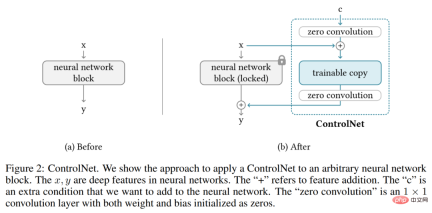

ControlNet is a neural network architecture that enhances pre-trained image diffusion models with task-specific conditions. Let's first look at the basic structure of ControlNet.

ControlNet manipulates the input conditions of neural network blocks in order to control the overall behavior of the entire network. Here, a "network block" is a group of neural layers combined into a commonly used unit for building neural networks, such as a ResNet block, a multi-head attention block, or a Transformer block.

Take 2D features as an example. Given a feature map x ∈ R^{h×w×c}, where h, w, and c are the height, width, and number of channels respectively, a neural network block F(·; Θ) with a set of parameters Θ transforms x into another feature map y, as shown in equation (1) below.
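Written out from the definitions in the preceding paragraph, equation (1) is simply the block applied to its input:

```latex
y = \mathcal{F}(x; \Theta) \tag{1}
```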

This process is shown in Figure 2-(a) below.

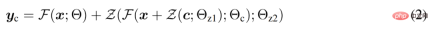

Neural network blocks are connected by a special convolution layer called "zero convolution": a 1×1 convolution layer whose weights and bias are both initialized to zero. The researchers denote the zero convolution operation as Z(·; ·) and use two parameter instances {Θ_z1, Θ_z2} to form the ControlNet structure, as shown in formula (2) below.
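Following the ControlNet paper, formula (2) can be written as below. Two symbols come from the paper rather than from the text above: c is the external condition (a feature map with the same shape as x), and Θ_c is the parameter set of a trainable copy of the block, while Θ stays locked.

```latex
y_c = \mathcal{F}(x; \Theta)
    + \mathcal{Z}\bigl(\mathcal{F}\bigl(x + \mathcal{Z}(c; \Theta_{z1}); \Theta_c\bigr); \Theta_{z2}\bigr) \tag{2}
```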

where y_c becomes the output of the neural network block, as shown in Figure 2-(b) below.
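The practical consequence of zero initialization is that, before any training step, the ControlNet branch contributes nothing and y_c equals the output y of the original block. The sketch below illustrates this on a toy feature map. It uses plain nested lists to stay self-contained, and `block` is a hypothetical stand-in for F (a 1×1 convolution followed by ReLU), not the blocks used in Stable Diffusion. The condition `c` and the copied parameters `theta_c` follow the paper's formulation and are assumptions relative to the text above.

```python
import random

def conv1x1(x, weight, bias):
    """1x1 convolution on an h x w x c nested list: each pixel's channel
    vector is mapped independently by `weight` (c_out x c_in) plus `bias`."""
    return [[[sum(wt * v for wt, v in zip(w_row, pixel)) + b
              for w_row, b in zip(weight, bias)]
             for pixel in row]
            for row in x]

def zero_conv(channels):
    """Parameters of a 'zero convolution': weights and bias all start at zero."""
    weight = [[0.0] * channels for _ in range(channels)]
    bias = [0.0] * channels
    return weight, bias

def add(a, b):
    """Element-wise sum of two feature maps with identical shape."""
    return [[[u + v for u, v in zip(pa, pb)]
             for pa, pb in zip(ra, rb)]
            for ra, rb in zip(a, b)]

def block(x, theta):
    """Hypothetical stand-in for F(.; theta): 1x1 convolution, then ReLU."""
    weight, bias = theta
    return [[[max(0.0, v) for v in pixel] for pixel in row]
            for row in conv1x1(x, weight, bias)]

def controlnet_block(x, c, theta, theta_c, theta_z1, theta_z2):
    """y_c = F(x; theta) + Z(F(x + Z(c; theta_z1); theta_c); theta_z2)."""
    y = block(x, theta)                          # locked original block
    hint = conv1x1(c, *theta_z1)                 # condition through first zero conv
    copy_out = block(add(x, hint), theta_c)      # trainable copy of the block
    return add(y, conv1x1(copy_out, *theta_z2))  # second zero conv, then residual add

rng = random.Random(0)
h, w, ch = 2, 3, 4

def random_map():
    return [[[rng.uniform(-1, 1) for _ in range(ch)] for _ in range(w)]
            for _ in range(h)]

x, c = random_map(), random_map()
theta = ([[rng.uniform(-1, 1) for _ in range(ch)] for _ in range(ch)],
         [rng.uniform(-1, 1) for _ in range(ch)])
theta_c = ([row[:] for row in theta[0]], theta[1][:])  # copy starts from locked weights

y = block(x, theta)
y_c = controlnet_block(x, c, theta, theta_c, zero_conv(ch), zero_conv(ch))
print("y_c equals y at initialization:", y_c == y)
```

Once training updates the zero convolutions away from zero, the condition `c` starts to influence `y_c`, while the locked parameters `theta` never change.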

ControlNet in image diffusion model

The researchers take Stable Diffusion as an example to show how ControlNet can control a large-scale diffusion model with task-specific conditions. Stable Diffusion is a large-scale text-to-image diffusion model trained on billions of images; it is essentially a U-net consisting of an encoder, a middle block, and a skip-connected decoder.

As shown in Figure 3 below, the researchers use ControlNet to control each level of the U-net. Note that the way ControlNet is connected here is computationally efficient: since the original weights are locked, no gradients need to be computed for the original encoder during training. Because this removes about half of the gradient computation on the original model, training is faster and uses less GPU memory. Training a Stable Diffusion model with ControlNet requires only about 23% more GPU memory and 34% more time per training iteration (tested on a single NVIDIA A100 PCIe 40 GB).

Specifically, the researchers use ControlNet to create trainable copies of Stable Diffusion's 12 encoder blocks and 1 middle block. The 12 encoder blocks span 4 resolutions (64×64, 32×32, 16×16, and 8×8), with 3 blocks at each resolution. Their outputs are added to the 12 skip connections and 1 middle block of the U-net. Since Stable Diffusion has a typical U-net structure, this ControlNet architecture can likely be applied to other diffusion models as well.
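The block layout described above can be enumerated in a few lines. This is only a bookkeeping sketch: the block names are hypothetical labels, and placing the middle block at the lowest resolution (8×8) is an assumption based on the usual U-net layout rather than something stated in the text.

```python
# Resolutions and per-resolution block count as described in the text.
RESOLUTIONS = [64, 32, 16, 8]
BLOCKS_PER_RESOLUTION = 3

def controlnet_layout():
    """Return (name, resolution) for every block that gets a trainable copy.

    Names are illustrative labels only, not identifiers from any codebase.
    """
    layout = [(f"encoder_{res}x{res}_{idx}", res)
              for res in RESOLUTIONS
              for idx in range(BLOCKS_PER_RESOLUTION)]
    # Assumption: the middle block runs at the lowest resolution.
    layout.append(("middle", RESOLUTIONS[-1]))
    return layout

layout = controlnet_layout()
encoder_blocks = [entry for entry in layout if entry[0].startswith("encoder")]

for name, res in layout:
    print(f"{name:>18}: {res}x{res}")
print("trainable copies:", len(layout))
```

Each of these copies feeds one addition into the locked U-net, which gives the 12 skip-connection inputs plus 1 middle-block input mentioned above.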

Training and Improved Training

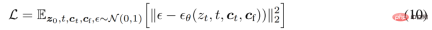

Given an image z_0, the diffusion algorithm progressively adds noise to it and produces a noisy image z_t, where t is the number of times noise has been added. When t is large enough, the image approximates pure noise. Given a set of conditions including the time step t, text prompts c_t, and task-specific conditions c_f, the image diffusion algorithm learns a network ϵ_θ to predict the noise added to the noisy image z_t, as shown in Equation (10) below.
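Using the symbols defined in the preceding paragraph, the learning objective given in the ControlNet paper is the expected squared error between the sampled noise ϵ and the network's prediction. The symbol 𝓛 for the overall objective comes from the paper and does not appear in the text above.

```latex
\mathcal{L} = \mathbb{E}_{z_0,\, t,\, c_t,\, c_f,\, \epsilon \sim \mathcal{N}(0, 1)}
\Bigl[ \bigl\lVert \epsilon - \epsilon_\theta(z_t, t, c_t, c_f) \bigr\rVert_2^2 \Bigr] \tag{10}
```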

During training, the researchers randomly replace 50% of the text prompts c_t with empty strings, which helps ControlNet learn to recognize semantic content from the input condition map.
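A minimal sketch of this prompt replacement, assuming each prompt is dropped independently with probability 0.5. The function name `drop_prompts` and its parameters are hypothetical and not taken from the ControlNet codebase.

```python
import random

def drop_prompts(prompts, drop_prob=0.5, rng=None):
    """Replace each prompt with an empty string with probability `drop_prob`.

    Hypothetical helper for illustration; the decision is made
    independently for every prompt in the batch.
    """
    rng = rng or random.Random()
    return ["" if rng.random() < drop_prob else prompt for prompt in prompts]

rng = random.Random(0)  # fixed seed so the run is repeatable
prompts = [f"caption {i}" for i in range(10_000)]
dropped = drop_prompts(prompts, drop_prob=0.5, rng=rng)

empty_fraction = sum(p == "" for p in dropped) / len(dropped)
print(f"fraction of empty prompts: {empty_fraction:.3f}")
```

With the prompt removed, the only remaining source of information about the image content is the condition map, which is why the replacement pushes the network to read semantics from it.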

In addition, the researchers discuss several strategies for improving ControlNet training, especially when computing resources are very limited (such as on a laptop) or very powerful (such as when a large-scale GPU computing cluster is available).

Please refer to the original paper for more technical details.

The above is the detailed content of AI dimensionality reduction attacks human painters, Vincentian graphs are introduced into ControlNet, and depth and edge information are fully reusable. For more information, please follow other related articles on the PHP Chinese website!

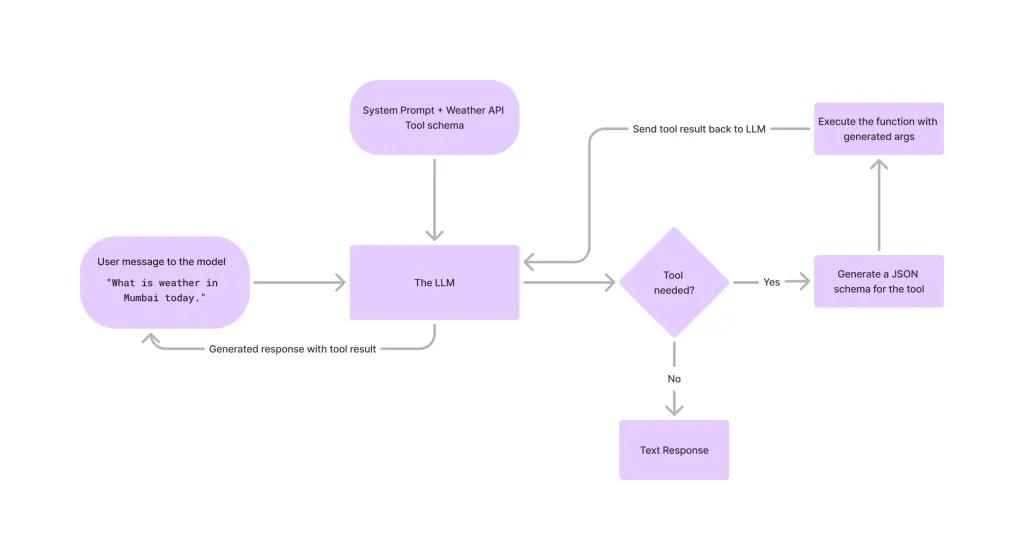

Tool Calling in LLMsApr 14, 2025 am 11:28 AM

Tool Calling in LLMsApr 14, 2025 am 11:28 AMLarge language models (LLMs) have surged in popularity, with the tool-calling feature dramatically expanding their capabilities beyond simple text generation. Now, LLMs can handle complex automation tasks such as dynamic UI creation and autonomous a

How ADHD Games, Health Tools & AI Chatbots Are Transforming Global HealthApr 14, 2025 am 11:27 AM

How ADHD Games, Health Tools & AI Chatbots Are Transforming Global HealthApr 14, 2025 am 11:27 AMCan a video game ease anxiety, build focus, or support a child with ADHD? As healthcare challenges surge globally — especially among youth — innovators are turning to an unlikely tool: video games. Now one of the world’s largest entertainment indus

UN Input On AI: Winners, Losers, And OpportunitiesApr 14, 2025 am 11:25 AM

UN Input On AI: Winners, Losers, And OpportunitiesApr 14, 2025 am 11:25 AM“History has shown that while technological progress drives economic growth, it does not on its own ensure equitable income distribution or promote inclusive human development,” writes Rebeca Grynspan, Secretary-General of UNCTAD, in the preamble.

Learning Negotiation Skills Via Generative AIApr 14, 2025 am 11:23 AM

Learning Negotiation Skills Via Generative AIApr 14, 2025 am 11:23 AMEasy-peasy, use generative AI as your negotiation tutor and sparring partner. Let’s talk about it. This analysis of an innovative AI breakthrough is part of my ongoing Forbes column coverage on the latest in AI, including identifying and explaining

TED Reveals From OpenAI, Google, Meta Heads To Court, Selfie With MyselfApr 14, 2025 am 11:22 AM

TED Reveals From OpenAI, Google, Meta Heads To Court, Selfie With MyselfApr 14, 2025 am 11:22 AMThe TED2025 Conference, held in Vancouver, wrapped its 36th edition yesterday, April 11. It featured 80 speakers from more than 60 countries, including Sam Altman, Eric Schmidt, and Palmer Luckey. TED’s theme, “humanity reimagined,” was tailor made

Joseph Stiglitz Warns Of The Looming Inequality Amid AI Monopoly PowerApr 14, 2025 am 11:21 AM

Joseph Stiglitz Warns Of The Looming Inequality Amid AI Monopoly PowerApr 14, 2025 am 11:21 AMJoseph Stiglitz is renowned economist and recipient of the Nobel Prize in Economics in 2001. Stiglitz posits that AI can worsen existing inequalities and consolidated power in the hands of a few dominant corporations, ultimately undermining economic

What is Graph Database?Apr 14, 2025 am 11:19 AM

What is Graph Database?Apr 14, 2025 am 11:19 AMGraph Databases: Revolutionizing Data Management Through Relationships As data expands and its characteristics evolve across various fields, graph databases are emerging as transformative solutions for managing interconnected data. Unlike traditional

LLM Routing: Strategies, Techniques, and Python ImplementationApr 14, 2025 am 11:14 AM

LLM Routing: Strategies, Techniques, and Python ImplementationApr 14, 2025 am 11:14 AMLarge Language Model (LLM) Routing: Optimizing Performance Through Intelligent Task Distribution The rapidly evolving landscape of LLMs presents a diverse range of models, each with unique strengths and weaknesses. Some excel at creative content gen

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

SecLists

SecLists is the ultimate security tester's companion. It is a collection of various types of lists that are frequently used during security assessments, all in one place. SecLists helps make security testing more efficient and productive by conveniently providing all the lists a security tester might need. List types include usernames, passwords, URLs, fuzzing payloads, sensitive data patterns, web shells, and more. The tester can simply pull this repository onto a new test machine and he will have access to every type of list he needs.

SublimeText3 Linux new version

SublimeText3 Linux latest version

Atom editor mac version download

The most popular open source editor

MinGW - Minimalist GNU for Windows

This project is in the process of being migrated to osdn.net/projects/mingw, you can continue to follow us there. MinGW: A native Windows port of the GNU Compiler Collection (GCC), freely distributable import libraries and header files for building native Windows applications; includes extensions to the MSVC runtime to support C99 functionality. All MinGW software can run on 64-bit Windows platforms.

SublimeText3 Mac version

God-level code editing software (SublimeText3)