GPT-3 and Stable Diffusion team up to help a model understand a client's image-retouching requests

Since diffusion models became popular, many people have focused on writing more effective prompts to generate the images they want. Through repeated experiments with AI painting models, people have even compiled lists of keywords that reliably make these models draw well.

In other words, if you master the right prompting techniques, the improvement in image quality can be very noticeable (see: "How to draw 'Alpaca Playing Basketball'? Someone spent 13 US dollars to force DALL·E 2 to show its true skills").

In addition, some researchers are working in another direction: how to change a painting to what we want with just a few words.

Some time ago, we reported on a study from Google Research and other institutions. You simply describe what you want an image to look like and the model largely complies, generating a photorealistic image of, for example, a puppy sitting down.

The input description given to the model there is "a dog that sits down", but in everyday communication the most natural phrasing would be "make this dog sit down". Some researchers see this as a problem worth fixing: the model should fit the way people actually speak.

Recently, a research team from UC Berkeley proposed a new method for editing images based on human instructions, InstructPix2Pix. Given an input image and a text instruction that tells the model what to do, the model follows the instruction to edit the image.

Paper address: https://arxiv.org/pdf/2211.09800.pdf

For example, to change the sunflowers in the painting to roses, you only need to say directly to the model "Change the sunflowers to roses":

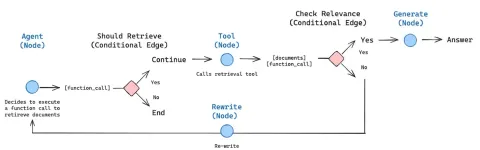

To obtain training data, the study combines two large pretrained models, a language model (GPT-3) and a text-to-image generation model (Stable Diffusion), to generate a large paired training dataset of image editing examples. The researchers trained a new model, InstructPix2Pix, on this dataset; at inference time it generalizes to real images and user-written instructions.

InstructPix2Pix is a conditional diffusion model that generates an edited image given an input image and a text instruction describing the edit. The model performs the edit directly in the forward pass and does not require additional example images, full descriptions of the input or output images, or per-example fine-tuning, so it can edit an image in just a few seconds.

Although InstructPix2Pix is trained entirely on synthetic examples (text instructions generated by GPT-3 and images generated by Stable Diffusion), it generalizes zero-shot to both arbitrary real images and human-written instructions. The model supports intuitive image editing, including replacing objects, changing image styles, and more.

Method Overview

The researchers treat instruction-based image editing as a supervised learning problem. First, they generate a paired training dataset of text editing instructions and images before and after the edit (Figure 2a-c); then they train an image editing diffusion model on this generated dataset (Figure 2d). Although it is trained on generated images and generated editing instructions, the model can still edit real images using arbitrary instructions written by humans. Figure 2 gives an overview of the method.

Generating a multimodal training dataset

In the dataset generation stage, the researchers combine the capabilities of a large language model (GPT-3) and a text-to-image model (Stable Diffusion) to generate a multimodal training dataset containing text editing instructions and the corresponding images before and after editing. The process consists of the following steps:

- Fine-tune GPT-3 to generate a collection of text edits: given a prompt describing an image, it generates a text instruction describing the change to be made and a prompt describing the image after the change (Figure 2a);

- Use the text-to-image model to convert the two text prompts (before and after editing) into a corresponding pair of images (Figure 2b).
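
The two steps above can be sketched as a small data pipeline. This is only an illustrative outline, not the authors' code: `EditExample`, `build_edit_dataset`, `toy_propose_edit` and `toy_text_to_image` are hypothetical names, and the two toy functions are stand-ins for the fine-tuned language model and the text-to-image model. The sketch also renders the two prompts independently and does not address how the two generated images are kept visually consistent.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class EditExample:
    """One training example: an instruction plus the before/after images."""
    instruction: str
    image_before: object
    image_after: object


def build_edit_dataset(
    captions: List[str],
    propose_edit: Callable[[str], Tuple[str, str]],
    text_to_image: Callable[[str], object],
) -> List[EditExample]:
    """Turn image captions into (instruction, before, after) examples."""
    examples = []
    for caption in captions:
        # Step 1 (Figure 2a): the language model proposes an edit
        # instruction and a caption for the edited image.
        instruction, edited_caption = propose_edit(caption)
        # Step 2 (Figure 2b): both captions are rendered to images.
        before = text_to_image(caption)
        after = text_to_image(edited_caption)
        examples.append(EditExample(instruction, before, after))
    return examples


# Hypothetical stand-ins so the sketch runs without any real model.
def toy_propose_edit(caption: str) -> Tuple[str, str]:
    return "change the sunflowers to roses", caption.replace("sunflowers", "roses")


def toy_text_to_image(prompt: str) -> str:
    return f"<image of: {prompt}>"


dataset = build_edit_dataset(
    ["a painting of sunflowers"], toy_propose_edit, toy_text_to_image
)
print(dataset[0])
```

Passing the two models in as callables keeps the pipeline logic separate from whichever language model and image generator are actually used.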

InstructPix2Pix

The researchers use the generated training data to train a conditional diffusion model, built on Stable Diffusion, that edits images according to written instructions.

Diffusion models learn to generate data samples through a sequence of denoising autoencoders that estimate the score of a data distribution (a direction pointing toward higher-density data). Latent diffusion improves the efficiency and quality of diffusion models by operating in the latent space of a pretrained variational autoencoder with encoder E and decoder D.

For an image x, the diffusion process adds noise to the encoded latent z = E(x) (the output of the autoencoder's encoder), producing a noisy latent z_t whose noise level increases with the time step t∈T. A network is trained to predict the noise added to the noisy latent z_t given an image conditioning c_I and a text instruction conditioning c_T. The researchers minimize a latent diffusion objective: the expected squared error between the noise that was added and the noise the network predicts.
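
The formula for this objective did not survive in this text. As a reading aid, the noise-prediction objective can be written as follows in the notation of the InstructPix2Pix paper, where $\mathcal{E}$ is the autoencoder's encoder, $\epsilon$ is the Gaussian noise that was added, and $\epsilon_\theta$ is the denoising network; treat the exact typesetting as a reconstruction rather than a quotation:

$$
L = \mathbb{E}_{\mathcal{E}(x),\, \mathcal{E}(c_I),\, c_T,\, \epsilon \sim \mathcal{N}(0,1),\, t}\left[ \left\lVert \epsilon - \epsilon_\theta\big(z_t,\, t,\, \mathcal{E}(c_I),\, c_T\big) \right\rVert_2^2 \right]
$$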

Previous studies (Wang et al.) have shown that for image translation tasks, especially when paired training data is limited, fine-tuning a large image diffusion model outperforms training a model from scratch. The authors therefore initialize the model's weights from a pretrained Stable Diffusion checkpoint, taking advantage of its strong text-to-image generation capabilities.

To support image conditioning, the researchers add extra input channels to the first convolutional layer and concatenate z_t with E(c_I), the encoded input image. All available weights of the diffusion model are initialized from the pretrained checkpoint, while the weights that operate on the newly added input channels are initialized to zero. The authors reuse the text conditioning mechanism that was originally intended for captions, but feed it the text editing instruction c_T instead.
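
The effect of zero-initializing the new input channels can be checked with a small NumPy sketch. It is a simplification under stated assumptions, not the authors' implementation: a 1x1 convolution stands in for the real first layer, the "pretrained" weights are random, and the channel counts, shapes and names (`conv1x1`, `pretrained_weight`, `cond`) are chosen only for illustration. The point it shows is that, right after widening, the layer's output is unchanged by the extra image-conditioning channels, so fine-tuning starts from the pretrained behavior.

```python
import numpy as np


def conv1x1(x, weight):
    """Apply a 1x1 convolution: x is (C_in, H, W), weight is (C_out, C_in)."""
    return np.einsum("oc,chw->ohw", weight, x)


rng = np.random.default_rng(0)
latent_channels, out_channels, height, width = 4, 8, 16, 16

# Stand-in for the pretrained first-layer weights (random here).
pretrained_weight = rng.normal(size=(out_channels, latent_channels))

# Widen the layer: weights for the new conditioning channels start at zero.
extra_weight = np.zeros((out_channels, latent_channels))
widened_weight = np.concatenate([pretrained_weight, extra_weight], axis=1)

z_t = rng.normal(size=(latent_channels, height, width))   # noisy latent
cond = rng.normal(size=(latent_channels, height, width))  # encoded input image

# Original layer sees only z_t; widened layer sees z_t concatenated with cond.
original_out = conv1x1(z_t, pretrained_weight)
widened_out = conv1x1(np.concatenate([z_t, cond], axis=0), widened_weight)

outputs_match = np.allclose(original_out, widened_out)
print(outputs_match)
```

During training the zero weights receive gradients like any others, so the model can gradually learn to use the conditioning image.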

In the paper's figures, the authors show image editing results from the new model on a diverse set of real photos and artwork. The model successfully performs many challenging edits, including replacing objects, changing seasons and weather, replacing backgrounds, modifying material properties, converting between art media, and more.

The researchers compared the new method with recent techniques such as SDEdit and Text2Live. The new model follows editing instructions, whereas the other methods, including the baselines, expect a description of the image or of an edit layer. For the comparison, the authors therefore give those methods an "after-edit" text caption instead of an editing instruction. The authors also compare the new method quantitatively with SDEdit, using two metrics that measure image consistency and edit quality. Finally, they present ablations showing how the size and quality of the generated training data affect model performance.
