Reversal after reversal! DeepMind is challenged by a Russian team: how can we prove that neural networks understand the physical world?

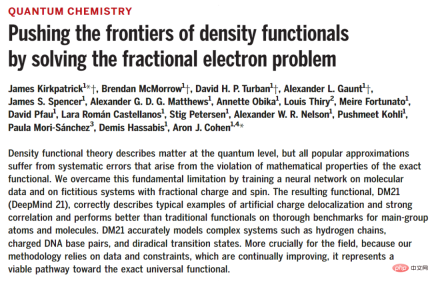

Another controversy has recently broken out in the scientific community. At the center of the story is a Science paper published in December 2021 by DeepMind's research center in London. The researchers found that a neural network can be trained to build a more accurate map between electron density and interaction energy than was previously available, which can effectively address the systematic errors of traditional density functional theory.

Paper link: https://www.science.org/doi/epdf/10.1126/science.abj6511

The DM21 model proposed in the paper accurately simulates complex systems such as hydrogen chains, charged DNA base pairs, and diradical transition states. For quantum chemistry, it can be said to open up a feasible technical route toward the exact universal functional.

DeepMind researchers also released the code of the DM21 model to facilitate reproduction by peers.

Repository link: https://github.com/deepmind/deepmind-research

By rights, with the paper published in a top journal and the code made public, the experimental results and research conclusions ought to be basically reliable.

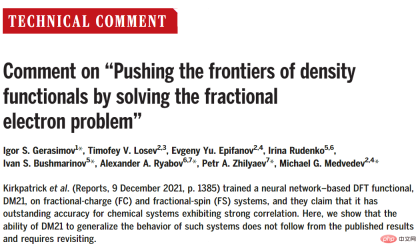

But eight months later, eight researchers from Russia and South Korea published a technical comment in Science. They argued that DeepMind's original study had a problem: the training set and the test set may overlap, which would make the experimental conclusions incorrect.

Paper link: https://www.science.org/doi/epdf/10.1126/science.abq3385

If the suspicion is true, then the improvements that DeepMind's paper, hailed as a major technological breakthrough for chemistry, credits to its neural network may in fact be due to data leakage.

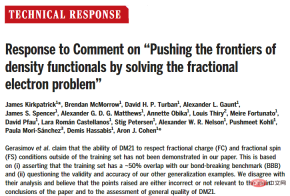

However, DeepMind responded quickly. On the same day the comment was published, it published a reply expressing its opposition and strong condemnation: the points raised were either incorrect or irrelevant to the main conclusions of the paper and to the assessment of the overall quality of DM21.

Paper link: https://www.science.org/doi/epdf/10.1126/science.abq4282

The famous physicist Feynman once said that scientists must try to prove themselves wrong as quickly as possible, because only in that way can progress be made.

Although the outcome of this discussion is not yet settled and the Russian team has not published a further rebuttal, the incident may have a more profound impact on research in artificial intelligence: how do you prove that the neural network you have trained truly understands the task, rather than merely memorizing patterns?

Research Question

Chemistry is the central science of the 21st century (no doubt about it). Designing new materials with specified properties, for example to produce clean electricity or to develop high-temperature superconductors, requires simulating electrons on a computer.

Electrons are subatomic particles that control how atoms combine to form molecules, and they are also responsible for the flow of electricity in solids. Understanding where the electrons are within a molecule goes a long way toward explaining its structure, properties, and reactivity.

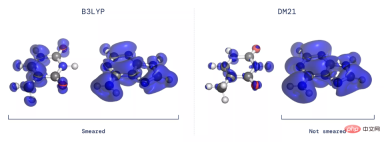

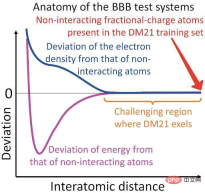

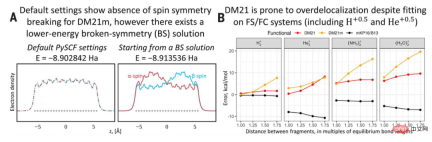

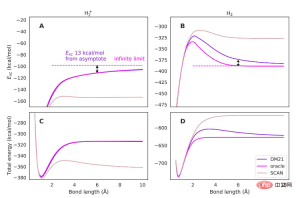

In 1926, Schrödinger proposed the Schrödinger equation, which correctly describes the quantum behavior of the wave function. But using this equation to predict the electrons in a molecule is impractical: all electrons repel one another, so the probability of each electron's position has to be tracked, which is a very complicated task even for a small number of electrons.

A major breakthrough came in the 1960s, when Pierre Hohenberg and Walter Kohn realized that there is no need to track each electron individually. Instead, knowing the probability of any electron being at each position (i.e., the electron density) is enough to calculate all interactions exactly. This result founded density functional theory (DFT), and Kohn went on to win the Nobel Prize in Chemistry for it.

Although DFT proves that a mapping between electron density and interaction energy exists, the exact nature of that mapping, the so-called density functional, has remained unknown for more than 50 years and must be approximated. DFT is essentially a method of solving the Schrödinger equation, and its accuracy depends on its exchange-correlation part. Although DFT involves a certain degree of approximation, it is the only practical way to study how and why matter behaves as it does at the microscopic level, and it has therefore become one of the most widely used techniques in all of science.

Over the years, researchers have proposed more than 400 approximate functionals of varying accuracy, but all of them suffer from systematic errors because they fail to capture some key mathematical properties of the exact functional.

And learning an approximate function: isn't that exactly what neural networks do?
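To make concrete where the exchange-correlation part enters, the standard Kohn-Sham decomposition of the total energy can be written as below. The notation (ρ for the electron density, T_s for the non-interacting kinetic energy, v_ext for the external potential, E_xc for the exchange-correlation functional, atomic units) follows common textbook convention and is an assumption here, since the article itself gives no formulas.

```latex
E[\rho] = T_s[\rho]
        + \int v_{\mathrm{ext}}(\mathbf{r})\,\rho(\mathbf{r})\,d\mathbf{r}
        + \frac{1}{2}\iint \frac{\rho(\mathbf{r})\,\rho(\mathbf{r}')}{|\mathbf{r}-\mathbf{r}'|}\,d\mathbf{r}\,d\mathbf{r}'
        + E_{\mathrm{xc}}[\rho]
```

The first three terms can be evaluated explicitly from the Kohn-Sham orbitals and the density. Only the last term, E_xc, has to be approximated, and it is this term that approximate functionals, including learned ones, try to model.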
In this paper, DeepMind trained a neural network, DM21 (DeepMind 21), and successfully learned a functional free of systematic errors: it avoids delocalization error and spin symmetry breaking, and better describes a broad class of chemical reactions.

In principle, any chemical or physical process involving the movement of charge is prone to delocalization error, and any process involving bond breaking is prone to spin symmetry breaking. Charge movement and bond breaking are at the heart of many important technological applications, yet these problems can also lead to numerous qualitative failures of functionals in describing even the simplest molecules, such as hydrogen.

The model is built from a multilayer perceptron (MLP) whose inputs are local and nonlocal features of the occupied Kohn-Sham (KS) orbitals.

The objective function has two terms: a regression loss for learning the exchange-correlation energy itself, and a gradient regularization term that ensures the functional derivative can be used in self-consistent field (SCF) calculations after training.

For the regression loss, the researchers used a dataset of fixed densities representing the reactants and products of 2,235 reactions, and trained the network to map these densities to highly accurate reaction energies. Of these, 1,161 training reactions represent atomization, ionization, electron affinity, and intermolecular binding energies of small main-group H-Kr molecules, and 1,074 reactions represent key fractional-charge (FC) and fractional-spin (FS) densities of H-Ar atoms.

The trained DM21 model can be run self-consistently on all reactions of a large main-group benchmark and produces more accurate molecular densities.

The data DeepMind used to train DM21 included fractional-charge systems, such as a hydrogen atom with half an electron.
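The regression term described above, which maps per-system energies to reaction energies and compares them with reference values, can be sketched as follows. This is a minimal illustration and not DeepMind's implementation: the `Reaction` class, the mean-squared-error form, and the lookup table standing in for the network are all assumptions, the energies are approximate illustrative values in hartree, and the gradient regularization term is left out entirely.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Reaction:
    """One training reaction, expressed as products minus reactants."""
    # Stoichiometric coefficients: negative for reactants, positive for products.
    stoichiometry: Dict[str, int]
    # Reference reaction energy in hartree (illustrative value, an assumption).
    reference_energy: float


def reaction_energy(reaction: Reaction,
                    system_energy: Callable[[str], float]) -> float:
    """Predicted reaction energy built from per-system total energies."""
    return sum(coeff * system_energy(name)
               for name, coeff in reaction.stoichiometry.items())


def regression_loss(reactions: List[Reaction],
                    system_energy: Callable[[str], float]) -> float:
    """Mean squared error between predicted and reference reaction energies."""
    errors = [reaction_energy(r, system_energy) - r.reference_energy
              for r in reactions]
    return sum(e * e for e in errors) / len(errors)


# Hypothetical stand-in for a trained model: a table of total energies (hartree).
toy_model_energies = {"H": -0.49, "H+": 0.0, "H2": -1.17}

reactions = [
    Reaction({"H": -1, "H+": 1}, reference_energy=0.5),     # ionization of H
    Reaction({"H2": -1, "H": 2}, reference_energy=0.1745),  # atomization of H2
]

loss = regression_loss(reactions, toy_model_energies.__getitem__)
print(f"toy regression loss: {loss:.6e}")
```

Training on reaction energies rather than absolute energies means that only energy differences between reactants and products are penalized, which is why each reaction carries signed stoichiometric coefficients.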
Really SOTA or data leak?

To demonstrate the superiority of DM21, the researchers tested it on a set of stretched dimers called the bond-breaking benchmark (BBB) set, for example two hydrogen atoms far apart that share a total of one electron.

The experiments found that the DM21 functional performs excellently on the BBB test set, surpassing all the classical DFT functionals tested so far as well as DM21m (trained in the same way as DM21, but without fractional charges in the training set).

DeepMind then claimed in the paper that DM21 has understood the physics behind fractional-charge systems.

But a closer look reveals that all the dimers in the BBB set become very similar to systems in the training set. Because electroweak interactions are local, atomic interactions are strong only over short distances, beyond which the two atoms behave essentially as if they were not interacting.

Michael Medvedev, who leads a research group at the Zelinsky Institute of Organic Chemistry of the Russian Academy of Sciences, explains that in some ways neural networks are like humans: they prefer to get the right answer for the wrong reason. So it is not hard to train a neural network; what is hard is proving that it has learned the laws of physics rather than merely memorized the correct answers.

The BBB test set is therefore not a suitable test: it does not test DM21's understanding of fractional-electron systems. A thorough analysis of the other four pieces of evidence that DM21 handles such systems correctly also fails to yield a definitive conclusion; only its good accuracy on the SIE4x4 set may be reliable.

The Russian researchers also believe that the use of fractional-charge systems in the training set is not the only novelty in DeepMind's work.
In the Comment authors' view, DeepMind's idea of introducing physical constraints into a neural network through the training set, and its method of imparting physical meaning by training on the correct chemical potentials, may be widely used in constructing neural-network DFT functionals in the future.

DeepMind Response

The Comment claims that DM21's ability to predict fractional-charge (FC) and fractional-spin (FS) conditions outside the training set was not demonstrated in the paper, citing the roughly 50% overlap between the training set and the bond-breaking benchmark (BBB) and questioning the validity and accuracy of the other generalization examples.

DeepMind disagrees with this analysis and holds that the points raised are either incorrect or irrelevant to the main conclusions of the paper and to the assessment of the overall quality of DM21, because the BBB is not the only example of FC and FS behavior shown in the paper.

Overlap between the training set and the test set is an issue that deserves attention in machine learning: memorization means that a model can perform better on the test set by copying examples from the training set.

Gerasimov and colleagues argue that DM21's performance on the BBB (which contains dimers at finite distances) can be well explained by replicating the outputs for the FC and FS systems (i.e., atoms that match the dimers in the infinite-separation limit).

To demonstrate that DM21 generalizes beyond the training set, the DeepMind researchers also considered the prototypical BBB examples H2+ (cationic dimer) and H2 (neutral dimer). From these one can conclude that the exact exchange-correlation functional is nonlocal, and that returning a constant memorized value leads to significant errors in BBB predictions as the distance increases.
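The overlap argument above can be illustrated with a small sketch: a stretched homonuclear dimer is reduced to the atom it resembles in the infinite-separation limit, and that atom is looked up in the training set. Everything here is hypothetical, including the `Fragment` and `StretchedDimer` types, the symmetric-splitting rule (each atom takes half of each spin channel), and the toy sets, which are not the actual DM21 training data or the BBB.

```python
from fractions import Fraction
from typing import List, NamedTuple, Set


class Fragment(NamedTuple):
    """An isolated atom with possibly fractional spin-up/spin-down occupations."""
    element: str
    n_alpha: Fraction
    n_beta: Fraction


class StretchedDimer(NamedTuple):
    """A homonuclear dimer at large separation, e.g. element "H" for H2 or H2+."""
    element: str
    n_alpha: int  # total number of spin-up electrons
    n_beta: int   # total number of spin-down electrons


def dissociation_limit(dimer: StretchedDimer) -> Fragment:
    """Assume a symmetric solution: each atom holds half of each spin channel."""
    return Fragment(dimer.element,
                    Fraction(dimer.n_alpha, 2),
                    Fraction(dimer.n_beta, 2))


def overlap_fraction(test_set: List[StretchedDimer],
                     training_fragments: Set[Fragment]) -> float:
    """Share of test dimers whose dissociation limit is already in the training set."""
    hits = [d for d in test_set if dissociation_limit(d) in training_fragments]
    return len(hits) / len(test_set)


# Toy training set: H with half an electron (FC) and H with half-up, half-down (FS).
training_fragments = {
    Fragment("H", Fraction(1, 2), Fraction(0)),
    Fragment("H", Fraction(1, 2), Fraction(1, 2)),
}

# Toy test set: stretched H2+, stretched H2, and stretched He2+.
test_set = [
    StretchedDimer("H", 1, 0),
    StretchedDimer("H", 1, 1),
    StretchedDimer("He", 2, 1),
]

overlap = overlap_fraction(test_set, training_fragments)
print(f"toy overlap fraction: {overlap:.2f}")
```

A check of this kind only flags candidates for leakage. Whether a model actually reproduces memorized values at finite separation, as the Comment suggests, or captures distance-dependent behavior, as DeepMind's reply argues, still has to be tested on the energies themselves.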

