10 lines of code to complete the graph Transformer: graph neural network framework DGL ushers in version 1.0

In 2019, New York University and Amazon Web Services (AWS) jointly launched the graph neural network framework DGL (Deep Graph Library). Now DGL 1.0 is officially released. DGL 1.0 consolidates the needs that academia and industry have expressed for graph deep learning and graph neural network (GNN) technology over the past three years. From academic research on state-of-the-art models to scaling GNNs for industrial applications, DGL 1.0 aims to give all users a comprehensive and easy-to-use way to take advantage of graph machine learning.

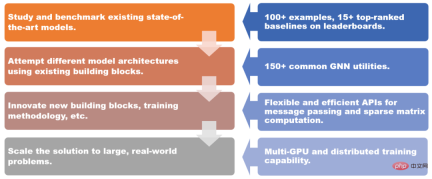

Solutions provided by DGL 1.0 for different scenarios.

DGL 1.0 adopts a layered and modular design to meet various user needs. Key features of this release include:

- More than 100 out-of-the-box GNN model examples, including more than 15 top-ranked baseline models on the Open Graph Benchmark (OGB);

- More than 150 commonly used GNN modules, including GNN layers, datasets, graph data transformation modules, graph samplers, etc., which can be used to build new model architectures or GNN-based solutions;

- Flexible and efficient message passing and sparse matrix abstractions for developing new GNN modules;

- Multi-GPU and distributed training capabilities that support training on graphs at the ten-billion scale.

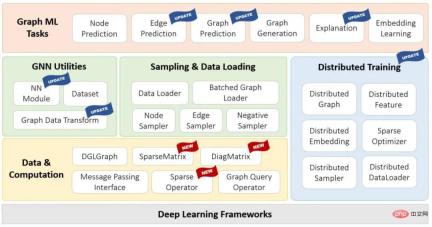

DGL 1.0 technology stack diagram.

One of the highlights of this version is the introduction of DGL-Sparse, a new programming interface that uses sparse matrices as the core programming abstraction. DGL-Sparse not only simplifies the development of existing GNN models such as graph convolutional networks, but also works with the latest models, including diffusion-based GNNs, hypergraph neural networks, and graph Transformers.

The release of DGL 1.0 drew an enthusiastic response online. Scholars such as Yann LeCun, one of the "big three" of deep learning, and Xavier Bresson, associate professor at the National University of Singapore, liked and shared the announcement.

In the following article, the authors outline two mainstream GNN paradigms, namely the message passing view and the matrix view. These paradigms can help researchers better understand the inner workings of GNNs, and the matrix view is also one of the motivations for developing DGL Sparse.

Message passing view and matrix view

There is a line in the movie "Arrival": "The language you use determines the way you think and affects how you see things." This also applies to GNNs.

For graph neural networks, this means there are two different paradigms. The first, called the message passing view, expresses a GNN model from a fine-grained, local perspective, detailing how messages are exchanged along edges and how node states are updated accordingly. The second is the matrix view. Since a graph is algebraically equivalent to a sparse adjacency matrix, many researchers choose to express GNN models from a coarse-grained, global perspective, emphasizing operations that involve the sparse adjacency matrix and dense feature vectors.

The message passing view reveals the connection between GNNs and the Weisfeiler-Lehman (WL) graph isomorphism test, which also relies on aggregating information from neighbors. The matrix view treats GNNs algebraically, which has led to some interesting findings, such as the over-smoothing problem.
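The over-smoothing effect is easy to observe from the matrix side. The following is a minimal NumPy sketch, not DGL code: the toy graph, the feature sizes, and the helper `feature_spread` are hypothetical, and a row-normalised adjacency matrix with self-loops is assumed as a simple stand-in for a GNN propagation step without weights or nonlinearities.

```python
import numpy as np

# Toy undirected graph (hypothetical data): path 0-1-2-3 plus the edge 1-3.
edges = [(0, 1), (1, 2), (2, 3), (1, 3)]
n = 4
A = np.zeros((n, n))
for u, v in edges:
    A[u, v] = A[v, u] = 1.0

A_hat = A + np.eye(n)                          # add self-loops
P = A_hat / A_hat.sum(axis=1, keepdims=True)   # row-normalised propagation matrix

rng = np.random.default_rng(0)
X = rng.normal(size=(n, 3))                    # random node features


def feature_spread(H):
    """Largest per-feature gap between any two nodes."""
    return float(np.max(H.max(axis=0) - H.min(axis=0)))


spread_before = feature_spread(X)
H = X.copy()
for _ in range(50):                            # 50 propagation steps
    H = P @ H
spread_after = feature_spread(H)
```

After repeated propagation the rows of `H` become nearly identical, so `spread_after` is far smaller than `spread_before`: the nodes can no longer be told apart by their features, which is the behaviour usually called over-smoothing.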

In short, these two perspectives are indispensable tools for studying GNN. They complement each other and help researchers better understand and describe the nature and characteristics of GNN models. It is for this reason that one of the main motivations for the release of DGL 1.0 is to add support for the matrix perspective based on the existing message passing interface.

DGL Sparse: A sparse matrix library designed for graph machine learning

DGL 1.0 adds a new library called DGL Sparse (dgl.sparse). Together with DGL's message passing interface, it rounds out support for all types of graph neural network models. DGL Sparse provides sparse matrix classes and operations designed specifically for graph machine learning, making it easier to write GNNs from the matrix view. In the next sections, the authors present several GNN examples, showing their mathematical formulations and the corresponding implementations in DGL Sparse.

Graph Convolutional Network

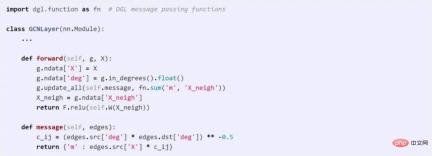

GCN is one of the pioneering GNN models. It can be expressed in both the message passing view and the matrix view. The following code compares the two approaches in DGL.

Implementing GCN using the message passing API

Implementing GCN using DGL Sparse
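The two views of GCN can also be illustrated without DGL. The following is a minimal NumPy sketch, not the DGL listings: it assumes the standard GCN layer, ReLU(D^-1/2 (A + I) D^-1/2 X W), and writes it once as a loop over neighbours and once as a matrix product. The function names, the toy graph, and the dense adjacency matrix (used here in place of a sparse one) are all hypothetical choices for illustration.

```python
import numpy as np


def gcn_message_passing(edges, n, X, W):
    """Message passing view: every node sums normalised messages from its neighbours."""
    # Neighbour sets with self-loops; edges are treated as undirected.
    nbrs = {i: {i} for i in range(n)}
    for u, v in edges:
        nbrs[u].add(v)
        nbrs[v].add(u)
    deg = {i: len(nbrs[i]) for i in range(n)}
    Z = X @ W                                   # transform the features once
    out = np.zeros_like(Z)
    for i in range(n):
        for j in nbrs[i]:
            out[i] += Z[j] / np.sqrt(deg[i] * deg[j])
    return np.maximum(out, 0.0)                 # ReLU


def gcn_matrix(edges, n, X, W):
    """Matrix view: ReLU(D^-1/2 (A + I) D^-1/2 X W)."""
    A = np.zeros((n, n))
    for u, v in edges:
        A[u, v] = A[v, u] = 1.0
    A_hat = A + np.eye(n)
    d_inv_sqrt = 1.0 / np.sqrt(A_hat.sum(axis=1))
    A_norm = d_inv_sqrt[:, None] * A_hat * d_inv_sqrt[None, :]
    return np.maximum(A_norm @ X @ W, 0.0)


edges = [(0, 1), (1, 2), (2, 3), (1, 3)]
rng = np.random.default_rng(0)
X = rng.normal(size=(4, 5))                     # node features
W = rng.normal(size=(5, 2))                     # layer weights
out_mp = gcn_message_passing(edges, 4, X, W)
out_mat = gcn_matrix(edges, 4, X, W)
```

Both functions compute the same output up to floating-point error. The first spells out who sends what to whom, while the second compresses the whole layer into one line of linear algebra, which is the style DGL Sparse is meant to support.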

GNN based on graph diffusion

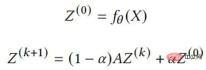

Graph diffusion is the process of spreading or smoothing node features or signals along edges. Many classic graph algorithms such as PageRank fall into this category. A series of studies have shown that combining graph diffusion with neural networks is an effective and efficient way to enhance model predictions. The following equation describes the core calculation of one of the more representative models, APPNP. It can be implemented directly in DGL Sparse.

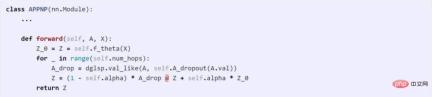
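As a rough illustration of this kind of diffusion, the sketch below assumes the commonly cited APPNP update, Z(k+1) = (1 - alpha) * A_norm @ Z(k) + alpha * H with Z(0) = H, where A_norm is the symmetrically normalised adjacency matrix with self-loops and H is the output of a feature network. It is written in dense NumPy rather than DGL Sparse; the function names, the toy graph, and the random `H` are hypothetical.

```python
import numpy as np


def normalized_adjacency(edges, n):
    """Symmetrically normalised adjacency with self-loops: D^-1/2 (A + I) D^-1/2."""
    A = np.zeros((n, n))
    for u, v in edges:
        A[u, v] = A[v, u] = 1.0
    A_hat = A + np.eye(n)
    d_inv_sqrt = 1.0 / np.sqrt(A_hat.sum(axis=1))
    return d_inv_sqrt[:, None] * A_hat * d_inv_sqrt[None, :]


def appnp_propagate(A_norm, H, alpha=0.1, num_hops=10):
    """Iterate Z <- (1 - alpha) * A_norm @ Z + alpha * H, starting from Z = H."""
    Z = H
    for _ in range(num_hops):
        Z = (1.0 - alpha) * (A_norm @ Z) + alpha * H
    return Z


edges = [(0, 1), (1, 2), (2, 3), (1, 3)]
n, alpha = 4, 0.1
A_norm = normalized_adjacency(edges, n)

rng = np.random.default_rng(0)
H = rng.normal(size=(n, 3))      # stands in for the output of a feature network f(X)

Z = appnp_propagate(A_norm, H, alpha=alpha, num_hops=200)

# Fixed point of the iteration (personalised PageRank in closed form).
Z_star = alpha * np.linalg.solve(np.eye(n) - (1.0 - alpha) * A_norm, H)
```

With enough hops the iteration approaches `Z_star`, the personalised PageRank solution. In practice only a handful of hops is typically used, and the dense matrix product would be replaced by a sparse one.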

Hypergraph Neural Network

A hypergraph is a generalization of a graph in which an edge (called a hyperedge) can connect any number of nodes. Hypergraphs are particularly useful in scenarios where higher-order relationships need to be captured, such as co-purchasing behavior on e-commerce platforms or co-authorship in citation networks. A hypergraph is typically characterized by its sparse incidence matrix, so hypergraph neural networks (HGNN) are often defined using sparse matrices. The following is a hypergraph convolutional network (Feng et al., 2018) and its code implementation.

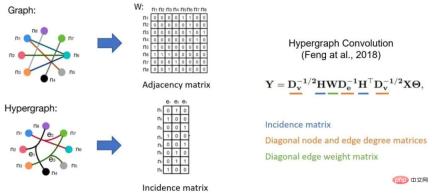

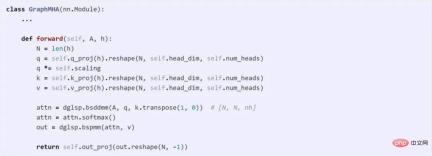
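To show how the incidence matrix drives the computation, here is a minimal NumPy sketch. It assumes the usual hypergraph convolution with unit hyperedge weights, X' = ReLU(D_v^-1/2 H D_e^-1 H^T D_v^-1/2 X Theta), where H is the node-by-hyperedge incidence matrix. The toy hypergraph, the variable names, and the dense matrices (used in place of sparse ones) are hypothetical.

```python
import numpy as np

# Hypothetical hypergraph: 5 nodes, 3 hyperedges given as lists of member nodes.
hyperedges = [[0, 1, 2], [2, 3], [1, 3, 4]]
n_nodes = 5

# Incidence matrix H: H[v, e] = 1 if node v belongs to hyperedge e.
H = np.zeros((n_nodes, len(hyperedges)))
for e, members in enumerate(hyperedges):
    H[members, e] = 1.0

d_v = H.sum(axis=1)                    # node degrees (number of hyperedges per node)
d_e = H.sum(axis=0)                    # hyperedge sizes (number of nodes per hyperedge)
dv_inv_sqrt = 1.0 / np.sqrt(d_v)

# Hypergraph Laplacian-style operator: D_v^-1/2 H D_e^-1 H^T D_v^-1/2.
L = (dv_inv_sqrt[:, None] * H) @ np.diag(1.0 / d_e) @ (H.T * dv_inv_sqrt[None, :])

rng = np.random.default_rng(0)
X = rng.normal(size=(n_nodes, 4))      # node features
Theta = rng.normal(size=(4, 2))        # layer weights
X_out = np.maximum(L @ X @ Theta, 0.0) # one hypergraph convolution with ReLU
```

Reading the operator from right to left, node features are first gathered into each hyperedge and averaged (`H.T`, then `1 / d_e`), and the hyperedge summaries are then scattered back to the member nodes (`H`).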

Graph Transformer

The Transformer has become the most successful model architecture in natural language processing, and researchers are beginning to extend it to graph machine learning. Dwivedi et al. pioneered the idea of restricting multi-head attention to connected pairs of nodes in the graph. With DGL Sparse, this model can be implemented in only 10 lines of code.
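The idea of restricting attention to connected node pairs can be sketched in plain NumPy. This is not the 10-line DGL Sparse implementation: it is a single-head illustration under the assumption of scaled dot-product attention, and the function name `sparse_attention`, the toy graph, and the tensor sizes are hypothetical. Scores are computed only for stored (row, col) pairs, normalised per row, and then used for a weighted sum of neighbour values.

```python
import numpy as np


def sparse_attention(rows, cols, n, Q, K, V):
    """Single-head attention restricted to the (row, col) pairs of a sparse adjacency."""
    d = Q.shape[1]
    # SDDMM-style step: one score per stored edge instead of a dense n x n matrix.
    scores = np.einsum("ed,ed->e", Q[rows], K[cols]) / np.sqrt(d)
    # Softmax over the edges that share the same row, shifted for numerical stability.
    row_max = np.full(n, -np.inf)
    np.maximum.at(row_max, rows, scores)
    exp = np.exp(scores - row_max[rows])
    row_sum = np.zeros(n)
    np.add.at(row_sum, rows, exp)
    attn = exp / row_sum[rows]
    # SpMM-style step: weighted sum of the neighbours' value vectors.
    out = np.zeros((n, V.shape[1]))
    np.add.at(out, rows, attn[:, None] * V[cols])
    return attn, out


# Toy graph: undirected edges stored in both directions, plus self-loops.
edges = [(0, 1), (1, 2), (2, 3), (1, 3)]
n = 4
pairs = edges + [(v, u) for u, v in edges] + [(i, i) for i in range(n)]
rows = np.array([p[0] for p in pairs])
cols = np.array([p[1] for p in pairs])

rng = np.random.default_rng(0)
Q = rng.normal(size=(n, 8))
K = rng.normal(size=(n, 8))
V = rng.normal(size=(n, 8))
attn, out = sparse_attention(rows, cols, n, Q, K, V)
```

The result matches dense attention with non-edges masked out, but the work and memory scale with the number of edges rather than with the square of the number of nodes.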

Key Features of DGL Sparse

Compared with sparse matrix libraries such as scipy.sparse or torch.sparse, the overall design of DGL Sparse is to serve graph machine learning, which includes the following key features:

- Automatic sparse format selection: DGL Sparse is designed so that users do not have to worry about choosing the correct data structure to store sparse matrices (also known as sparse formats). Users only need to remember that dgl.sparse.spmatrix creates a sparse matrix, and DGL will automatically select the optimal format internally based on the operator called;

- Scalar or vector non-zero elements: Many GNN models learn multiple weights on the edges (such as the multi-head attention vectors demonstrated in the Graph Transformer example). To accommodate this, DGL Sparse allows non-zero elements to have vector shapes and extends common sparse operations such as sparse-dense matrix multiplication (SpMM). You can refer to the bspmm operation in the Graph Transformer example.
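The second feature, vector-valued non-zero elements, can be pictured as several sparse matrices that share one sparsity pattern. The sketch below is a NumPy stand-in for a batched SpMM, not DGL's `bspmm` implementation: the function name `batched_spmm`, the layout (one value per edge per head, dense input of shape nodes x features x heads), and the toy data are assumptions made for illustration.

```python
import numpy as np


def batched_spmm(rows, cols, vals, X, n):
    """Multiply a sparse matrix with vector-valued non-zeros by a dense tensor.

    vals: (num_edges, num_heads), one weight per edge and head.
    X:    (n, dim, num_heads), one dense feature matrix per head.
    Returns a tensor of shape (n, dim, num_heads).
    """
    out = np.zeros((n,) + X.shape[1:])
    # Each edge (i, j) adds vals[e, h] * X[j, :, h] to out[i, :, h] for every head h.
    np.add.at(out, rows, vals[:, None, :] * X[cols])
    return out


# Toy sparsity pattern with 5 stored entries (no duplicates) and 2 heads.
rows = np.array([0, 0, 1, 2, 3])
cols = np.array([1, 2, 0, 3, 3])
n, dim, num_heads = 4, 3, 2

rng = np.random.default_rng(0)
vals = rng.normal(size=(len(rows), num_heads))
X = rng.normal(size=(n, dim, num_heads))
out = batched_spmm(rows, cols, vals, X, n)
```

Each head behaves like an ordinary sparse-dense product with its own edge weights, so a single call covers all heads without building one matrix per head.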

By leveraging these design features, DGL Sparse reduces code length by a factor of 2.7 on average compared with previous implementations of matrix-view models written with the message passing interface. The simplified code also reduces the framework's overhead by 43%. In addition, DGL Sparse is compatible with PyTorch and can be easily integrated with the tools and packages in the PyTorch ecosystem.

Get started with DGL 1.0

DGL 1.0 has been released on all platforms and can be installed using pip or conda. In addition to the examples introduced earlier, the first version of DGL Sparse also includes 5 tutorials and 11 end-to-end examples, all of which can be run directly in Google Colab without a local installation.

To learn more about the new features of DGL 1.0, please refer to the release notes. If you encounter any problems or have suggestions or feedback while using DGL, you can contact the DGL team through the Discuss forum or Slack.
