Call for suspension of GPT-5 research and development sparks fierce battle! Andrew Ng and LeCun took the lead in opposition, while Bengio stood in support

Yesterday, an open letter signed by more than a thousand prominent figures, calling for a six-month pause on training super-powerful AI, exploded like a bomb across the Internet at home and abroad.

After a day of heated exchanges, several key figures and other big names have responded publicly.

Some responses are very official, some are very personal, and some sidestep the question entirely. One thing is certain: both the views of these figures and the interest groups they represent deserve careful consideration.

Interestingly, among the three Turing Award giants, one took the lead in signing, one strongly opposed, and one has not said a word.

## Bengio's signature, Hinton's silence, LeCun's opposition

Andrew Ng (opposed)

In this episode, Andrew Ng, co-founder of Google Brain and of the online education platform Coursera, was an unambiguous opponent.

He stated his position plainly: pausing "making AI progress beyond GPT-4" for 6 months is a bad idea.

He said that he has seen many new AI applications in education, health care, food, and other fields that will benefit many people, and that improving GPT-4 would be beneficial as well.

What we should do, he argued, is strike a balance between the huge value AI creates and the real risks.

The open letter states that if the training of super-powerful AI cannot be paused quickly, governments should step in. Andrew Ng called this idea terrible too.

He said that asking governments to pause emerging technologies they do not understand is anti-competitive, sets a bad precedent, and is terrible innovation policy.

He admitted that responsible AI is important and AI does have risks.

But the media's portrayal of "AI companies frantically releasing dangerous code" is clearly exaggerated. The vast majority of AI teams take responsible AI and safety seriously, though he also admitted that "unfortunately, not all" do.

Finally, he emphasized again:

A 6-month pause is not a practical proposal. To improve the safety of AI, regulations around transparency and auditing would be more practical and have a greater impact. As we advance the technology, let's also invest more in safety instead of stifling progress.

Under his tweet, netizens voiced strong objections: the reason the big names are so calm is probably that the pain of unemployment will not fall on them.

LeCun (opposed)

As soon as the open letter went out, netizens rushed to spread the word: Turing Award giants Bengio and LeCun both signed it!

LeCun, a constant presence on the front lines of the Internet, immediately refuted the rumor: No, I did not sign, and I do not agree with the premise of this letter.

One netizen replied: I don't agree with this letter either, but I'm curious. Do you disagree because you think LLMs are not advanced enough to threaten humanity at all, or for other reasons?

But LeCun did not answer any of these questions.

After 20 hours of mysterious silence, LeCun suddenly retweeted a netizen’s tweet:

"OpenAI waited long enough. It held GPT-4 for 6 months before releasing it! They even wrote a white paper about it..."

LeCun endorsed this: That's right, the so-called "pause on research and development" amounts to nothing more than "secret research and development", which is exactly the opposite of what some signatories hope for.

It seems that nothing can be hidden from LeCun’s discerning eyes.

The netizen who had asked the earlier question agreed: This is why I oppose this petition. No "bad guy" will actually stop.

"So this is like an arms treaty that no one abides by? Aren't there plenty of examples of that in history?"

A while later, he retweeted another prominent figure's tweet.

That person wrote, "I didn't sign it either. This letter is filled with a bunch of terrible rhetoric and invalid/non-existent policy prescriptions." LeCun replied, "I agree."

Bengio and Marcus (in favor)

The first prominent figure to sign the open letter was the famous Turing Award winner Yoshua Bengio.

New York University professor Gary Marcus also came out in favor. He appears to have been the first person to reveal the open letter.

As the discussion grew louder, he quickly published a blog post explaining his position, and it was full of notable points.

Breaking News: The letter I mentioned earlier is now public. The letter calls for a six-month moratorium on training AI "more powerful than GPT-4." Many famous people signed it. I also joined.

I was not involved in drafting it, and there are things to quibble over (e.g., how would we know which AIs are more powerful than GPT-4, given that the details of GPT-4's architecture and training set have not been released?), but the spirit of the letter is one I support: until we can better handle the risks and benefits, we should proceed with caution.

It will be interesting to see what happens next.

Another view that Marcus said he agrees with 100% is also very interesting. It reads:

GPT-5 will not be AGI. It is almost certain that no GPT model will be AGI. It is completely impossible for any model optimized with the methods we use today (gradient descent) to be AGI. The upcoming GPT models will definitely change the world, but over-hyping them is crazy.

Altman (non-committal)

So far, Sam Altman has not taken a clear position on the open letter.

However, he did share some views on artificial general intelligence.

What a good AGI future requires:

1. The technical ability to align a superintelligence

2. Sufficient coordination among most of the leading AGI efforts

3. An effective global regulatory framework

Some netizens questioned this: "Aligned with what? Aligned with whom? Being aligned with some people means not being aligned with others."

One comment stood out: "Then you should make it open."

Greg Brockman, another co-founder of OpenAI, retweeted Altman's tweet and once again emphasized that OpenAI's mission "is to ensure that AGI benefits all mankind."

Once again, netizens cut to the point: you keep saying "aligned with the designer's intention" all day long, but beyond that, no one knows what alignment actually means.

Yudkowsky (radical)

Decision theorist Eliezer Yudkowsky takes a more radical stance:

Pausing AI development is not enough. We need to shut all of it down! Shut it all down!

If this continues, every one of us will die.

As soon as the open letter was released, Yudkowsky immediately wrote a long article and published it in TIME magazine.

He said that he did not sign because, in his opinion, the letter was too mild.

The letter understates the seriousness of the situation and asks for too little to solve it.

He said that the key issue is not "human-competitive" intelligence. It is what happens once AI becomes smarter than humans.

The key point is that many researchers, including him, believe that the most likely consequence of building an AI with superhuman intelligence is that everyone on the planet will die.

Not "maybe", but "definitely".

Without sufficient precision, the most likely result is that the AI we create will not do what we want, and will care neither about us nor about other sentient life.

Theoretically, we should be able to teach AI to learn this kind of care, but right now we don’t know how.

Without that care, the result we get is this: the AI does not love you, nor does it hate you. You are just a collection of atoms that it can use for something else.

And if humans try to resist a superhuman AI, they will inevitably fail, just like "the 11th century trying to defeat the 21st century" or "Australopithecus trying to defeat Homo sapiens".

Yudkowsky said that we tend to picture a malicious AI as a thinker living on the Internet and sending ill-intentioned emails to humans every day. In fact, a hostile superhuman AI would be more like an entire alien civilization that thinks millions of times faster than humans. From its point of view, humans are stupid and slow.

Once such an AI is smart enough, it will not stay confined to computers. It could email DNA sequences to laboratories, which would produce proteins on demand, and the AI would then have a living form. After that, all living things on Earth would die.

How should humans survive in this situation? We have no plans at the moment.

OpenAI has only made a public call for future AI to be aligned. And DeepMind, another leading AI laboratory, has no plan at all.

(Image: from the official OpenAI blog)

These dangers exist regardless of whether the AI is conscious. They are intrinsic to powerful cognitive systems that optimize hard and compute outputs meeting sufficiently complex outcome criteria.

Indeed, current AI may simply imitate self-awareness from training data. But we actually know very little about the internal structure of these systems.

If we are still this ignorant about GPT-4, and GPT-5 represents a capability leap as large as the one from GPT-3 to GPT-4, then we will no longer be able to say with any justification that it is "probably not self-aware".

On February 7, Microsoft CEO Nadella gloated publicly that the new Bing had forced Google to "come out and do a little dance."

His behavior is irrational.

We should have thought about this issue 30 years ago. Six months is not enough to bridge the gap.

It has been more than 60 years since the concept of AI was proposed. We should spend at least 30 years ensuring that superhuman AI "doesn't kill anyone."

We simply can’t learn from our mistakes, because once you’re wrong, you’re dead.

If a 6-month pause would allow the earth to survive, I would agree, but it won’t.

What we have to do is these:

1. The training of new large language models must not only be suspended indefinitely, but the suspension must also be enforced globally.

There can be no exceptions, including for governments or militaries.

2. Shut down all large GPU clusters, the large computing facilities used to train the most powerful AI.

Halt all large-scale AI training runs, set a cap on the computing power anyone may use to train an AI system, and gradually lower this cap over the coming years to compensate for more efficient training algorithms.

Governments and militaries are no exception. Multinational agreements should be established immediately to prevent banned activities from moving elsewhere.

Track all GPUs sold. If intelligence shows that a GPU cluster is being built in a country outside the agreement, the offending data center should be destroyed by air strike.

A violation of the moratorium should be more worrying than armed conflict between countries. Do not frame any of this as a conflict between national interests, and know that anyone who talks about an arms race is a fool.

In this regard, we all either live together or die together. This is not a policy but a fact of nature.

Poll

Because demand to sign was so high, the team decided to pause the display of new signatures so that vetting could catch up. (The signatures at the top of the list have all been directly verified.)

The above is the detailed content of Call for suspension of GPT-5 research and development sparks fierce battle! Andrew Ng and LeCun took the lead in opposition, while Bengio stood in support. For more information, please follow other related articles on the PHP Chinese website!

ai合并图层的快捷键是什么Jan 07, 2021 am 10:59 AM

ai合并图层的快捷键是什么Jan 07, 2021 am 10:59 AMai合并图层的快捷键是“Ctrl+Shift+E”,它的作用是把目前所有处在显示状态的图层合并,在隐藏状态的图层则不作变动。也可以选中要合并的图层,在菜单栏中依次点击“窗口”-“路径查找器”,点击“合并”按钮。

ai橡皮擦擦不掉东西怎么办Jan 13, 2021 am 10:23 AM

ai橡皮擦擦不掉东西怎么办Jan 13, 2021 am 10:23 AMai橡皮擦擦不掉东西是因为AI是矢量图软件,用橡皮擦不能擦位图的,其解决办法就是用蒙板工具以及钢笔勾好路径再建立蒙板即可实现擦掉东西。

谷歌超强AI超算碾压英伟达A100!TPU v4性能提升10倍,细节首次公开Apr 07, 2023 pm 02:54 PM

谷歌超强AI超算碾压英伟达A100!TPU v4性能提升10倍,细节首次公开Apr 07, 2023 pm 02:54 PM虽然谷歌早在2020年,就在自家的数据中心上部署了当时最强的AI芯片——TPU v4。但直到今年的4月4日,谷歌才首次公布了这台AI超算的技术细节。论文地址:https://arxiv.org/abs/2304.01433相比于TPU v3,TPU v4的性能要高出2.1倍,而在整合4096个芯片之后,超算的性能更是提升了10倍。另外,谷歌还声称,自家芯片要比英伟达A100更快、更节能。与A100对打,速度快1.7倍论文中,谷歌表示,对于规模相当的系统,TPU v4可以提供比英伟达A100强1.

ai可以转成psd格式吗Feb 22, 2023 pm 05:56 PM

ai可以转成psd格式吗Feb 22, 2023 pm 05:56 PMai可以转成psd格式。转换方法:1、打开Adobe Illustrator软件,依次点击顶部菜单栏的“文件”-“打开”,选择所需的ai文件;2、点击右侧功能面板中的“图层”,点击三杠图标,在弹出的选项中选择“释放到图层(顺序)”;3、依次点击顶部菜单栏的“文件”-“导出”-“导出为”;4、在弹出的“导出”对话框中,将“保存类型”设置为“PSD格式”,点击“导出”即可;

ai顶部属性栏不见了怎么办Feb 22, 2023 pm 05:27 PM

ai顶部属性栏不见了怎么办Feb 22, 2023 pm 05:27 PMai顶部属性栏不见了的解决办法:1、开启Ai新建画布,进入绘图页面;2、在Ai顶部菜单栏中点击“窗口”;3、在系统弹出的窗口菜单页面中点击“控制”,然后开启“控制”窗口即可显示出属性栏。

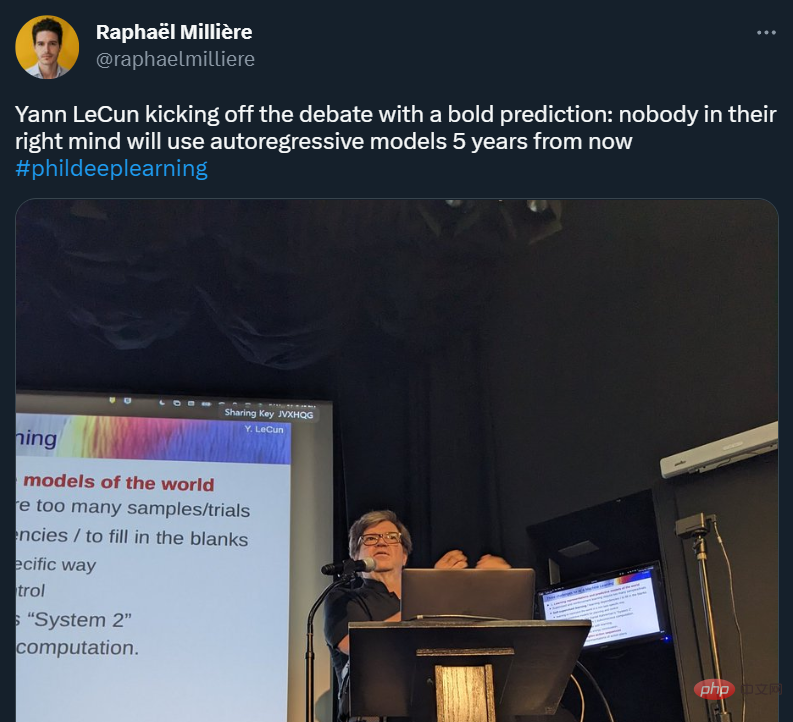

GPT-4的研究路径没有前途?Yann LeCun给自回归判了死刑Apr 04, 2023 am 11:55 AM

GPT-4的研究路径没有前途?Yann LeCun给自回归判了死刑Apr 04, 2023 am 11:55 AMYann LeCun 这个观点的确有些大胆。 「从现在起 5 年内,没有哪个头脑正常的人会使用自回归模型。」最近,图灵奖得主 Yann LeCun 给一场辩论做了个特别的开场。而他口中的自回归,正是当前爆红的 GPT 家族模型所依赖的学习范式。当然,被 Yann LeCun 指出问题的不只是自回归模型。在他看来,当前整个的机器学习领域都面临巨大挑战。这场辩论的主题为「Do large language models need sensory grounding for meaning and u

强化学习再登Nature封面,自动驾驶安全验证新范式大幅减少测试里程Mar 31, 2023 pm 10:38 PM

强化学习再登Nature封面,自动驾驶安全验证新范式大幅减少测试里程Mar 31, 2023 pm 10:38 PM引入密集强化学习,用 AI 验证 AI。 自动驾驶汽车 (AV) 技术的快速发展,使得我们正处于交通革命的风口浪尖,其规模是自一个世纪前汽车问世以来从未见过的。自动驾驶技术具有显着提高交通安全性、机动性和可持续性的潜力,因此引起了工业界、政府机构、专业组织和学术机构的共同关注。过去 20 年里,自动驾驶汽车的发展取得了长足的进步,尤其是随着深度学习的出现更是如此。到 2015 年,开始有公司宣布他们将在 2020 之前量产 AV。不过到目前为止,并且没有 level 4 级别的 AV 可以在市场

ai移动不了东西了怎么办Mar 07, 2023 am 10:03 AM

ai移动不了东西了怎么办Mar 07, 2023 am 10:03 AMai移动不了东西的解决办法:1、打开ai软件,打开空白文档;2、选择矩形工具,在文档中绘制矩形;3、点击选择工具,移动文档中的矩形;4、点击图层按钮,弹出图层面板对话框,解锁图层;5、点击选择工具,移动矩形即可。

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

EditPlus Chinese cracked version

Small size, syntax highlighting, does not support code prompt function

SublimeText3 English version

Recommended: Win version, supports code prompts!

MinGW - Minimalist GNU for Windows

This project is in the process of being migrated to osdn.net/projects/mingw, you can continue to follow us there. MinGW: A native Windows port of the GNU Compiler Collection (GCC), freely distributable import libraries and header files for building native Windows applications; includes extensions to the MSVC runtime to support C99 functionality. All MinGW software can run on 64-bit Windows platforms.

SublimeText3 Linux new version

SublimeText3 Linux latest version

SAP NetWeaver Server Adapter for Eclipse

Integrate Eclipse with SAP NetWeaver application server.