
A diffusion model that generates images containing Chinese characters and creates emoticons in one click: OPPO and others propose GlyphDraw

Recently, text-to-image generation has seen many unexpected breakthroughs, and many models can now create high-quality, diverse images from text instructions. Although the generated images are already very realistic, current models are good at generating physical content such as landscapes and objects but struggle with images that require highly coherent fine detail, such as images containing text with complex glyphs like Chinese characters.

To solve this problem, researchers from OPPO and other institutions have proposed GlyphDraw, a general learning framework designed to enable the model to generate images with embedded coherent text. It is the first work in the field of image synthesis to address the generation of Chinese characters.

- Paper address: https://arxiv.org/abs/2303.17870

- Project homepage: https://1073521013.github.io/glyph-draw.github.io/

Let’s first take a look at the generation results, for example, generating warning slogans for an exhibition hall:

Generating billboards:

Adding a brief text caption to a picture, where the text style can also vary:

The most interesting and practical example is generating emoticons:

Although the results still have some flaws, the overall generation quality is already very good. The main contributions of this research include:

- This research proposes GlyphDraw, the first framework for generating images containing Chinese characters. It uses auxiliary information, including Chinese character glyphs and their positions, to provide fine-grained guidance throughout the generation process, so that Chinese characters can be embedded into images seamlessly and with high quality;

- This study proposes an effective training strategy that limits the number of trainable parameters in the pre-trained model to prevent overfitting and catastrophic forgetting. This preserves the model's strong open-domain generation performance while achieving accurate Chinese character image generation;

- This study describes how the training dataset was constructed and proposes a new benchmark that uses OCR models to evaluate the quality of Chinese character image generation. On this benchmark, GlyphDraw achieves a generation accuracy of 75%, significantly better than previous image synthesis methods.
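The second contribution, limiting which pre-trained parameters are updated, can be illustrated with a small name-based filter. This is a sketch under assumptions: the parameter names and the choice of cross-attention key/value projections as the trainable subset are hypothetical, not the set GlyphDraw actually fine-tunes.

```python
from fnmatch import fnmatchcase
from typing import Dict, Iterable, Sequence


def trainable_mask(param_names: Iterable[str], patterns: Sequence[str]) -> Dict[str, bool]:
    """Map each parameter name to True if it matches any trainable pattern.

    Parameters that match no pattern map to False, i.e. they stay frozen.
    The glob patterns are hypothetical and chosen by the caller.
    """
    return {name: any(fnmatchcase(name, p) for p in patterns) for name in param_names}
```

In PyTorch, such a map would typically be applied by iterating over `model.named_parameters()` and calling `p.requires_grad_(flag)` on each parameter, so that the optimizer only updates the selected subset and the rest of the pre-trained weights are left untouched.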

The study first designed a strategy for constructing a complex image-text dataset, and then proposed GlyphDraw, a general learning framework built on the open-source image synthesis model Stable Diffusion, as shown in Figure 2 below.

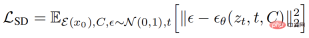

The overall training objective of Stable Diffusion can be expressed as the following formula:
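For reference, this is the standard latent diffusion objective that Stable Diffusion is trained with, in the notation of the original latent diffusion work: $\mathcal{E}$ is the VAE encoder producing the latent of image $x$, $z_t$ is that latent noised to step $t$, $y$ is the text prompt encoded by $\tau_\theta$, and $\epsilon_\theta$ is the denoising UNet that predicts the added noise $\epsilon$.

$$
L_{LDM} = \mathbb{E}_{\mathcal{E}(x),\, y,\, \epsilon \sim \mathcal{N}(0,1),\, t}\Big[\big\lVert \epsilon - \epsilon_\theta\big(z_t, t, \tau_\theta(y)\big) \big\rVert_2^2\Big]
$$

Reading the next paragraph literally, GlyphDraw keeps this form but feeds the denoiser the channel-wise concatenation $[z_t; l_m; l_g]$ and the fused text-and-glyph condition $C$. The following is a sketch of that substitution, not an equation copied from the paper:

$$
L = \mathbb{E}_{\mathcal{E}(x),\, y,\, \epsilon \sim \mathcal{N}(0,1),\, t}\Big[\big\lVert \epsilon - \epsilon_\theta\big([z_t; l_m; l_g], t, C\big) \big\rVert_2^2\Big]
$$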

GlyphDraw is based on the cross-attention mechanism in Stable Diffusion, where the original input latent vector z_t is replaced by a concatenation of the image latent vector z_t, the text mask l_m, and the glyph image l_g.
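A minimal sketch of this input change, in plain Python with tensors as nested lists. Assumptions (not from the paper): the text mask is a rectangle given in pixel coordinates, it is reduced to latent resolution by nearest-neighbour sampling with Stable Diffusion's usual downsampling factor of 8, and the mask and glyph image each contribute one channel, so a 4-channel latent becomes a 6-channel input. All helper names are hypothetical.

```python
from typing import List, Tuple

# A tensor is a list of channels; each channel is an H x W grid (list of rows).
Grid = List[List[float]]
Tensor = List[Grid]
Box = Tuple[int, int, int, int]  # (x0, y0, x1, y1), half-open, in pixels


def box_mask(height: int, width: int, box: Box) -> Grid:
    """Binary text mask: 1.0 inside the box, 0.0 elsewhere."""
    x0, y0, x1, y1 = box
    return [[1.0 if x0 <= x < x1 and y0 <= y < y1 else 0.0 for x in range(width)]
            for y in range(height)]


def downsample_nearest(grid: Grid, factor: int) -> Grid:
    """Keep every `factor`-th pixel in both directions (pixel -> latent resolution)."""
    return [row[::factor] for row in grid[::factor]]


def unet_input(z_t: Tensor, text_mask: Grid, glyph: Grid) -> Tensor:
    """Channel-wise concatenation [z_t; l_m; l_g] used as the denoiser input."""
    h, w = len(z_t[0]), len(z_t[0][0])
    for name, grid in (("text_mask", text_mask), ("glyph", glyph)):
        if len(grid) != h or any(len(row) != w for row in grid):
            raise ValueError(f"{name} must match the latent size {h}x{w}")
    return z_t + [text_mask, glyph]  # new list; z_t itself is not modified
```

For a 64×64 pixel image, `downsample_nearest(box_mask(64, 64, (16, 8, 48, 24)), 8)` yields an 8×8 latent mask, and `unet_input` stacks it with a 4-channel latent and a glyph channel into 6 channels. The denoiser's first convolution would then have to accept the extra input channels; how GlyphDraw handles that is not covered here.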

Furthermore, the condition C carries hybrid glyph and text features produced by domain-specific fusion modules. Introducing the text mask and glyph information gives the training process fine-grained control over diffusion; this is a key component in improving model performance and, ultimately, in generating images containing Chinese text.
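The sketch below shows how a condition that mixes text and glyph tokens would enter a standard cross-attention layer, where queries come from image features and keys and values come from the condition tokens. GlyphDraw's actual fusion module is not described in this article: `fuse_condition` (simple token concatenation) and all weight matrices are hypothetical stand-ins.

```python
import math
from typing import List

Matrix = List[List[float]]  # row-major: one row per token


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Plain matrix product a @ b."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def softmax(row: List[float]) -> List[float]:
    """Numerically stable softmax over one row of scores."""
    m = max(row)
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


def fuse_condition(text_tokens: Matrix, glyph_tokens: Matrix) -> Matrix:
    """Hypothetical fusion: stack glyph tokens after text tokens to form condition C."""
    if len(text_tokens[0]) != len(glyph_tokens[0]):
        raise ValueError("text and glyph tokens must share one embedding width")
    return text_tokens + glyph_tokens


def cross_attention(image_feats: Matrix, condition: Matrix,
                    w_q: Matrix, w_k: Matrix, w_v: Matrix) -> Matrix:
    """softmax(Q K^T / sqrt(d)) V with Q from image features and K, V from C."""
    q = matmul(image_feats, w_q)
    k = matmul(condition, w_k)
    v = matmul(condition, w_v)
    d = len(q[0])
    k_t = [list(col) for col in zip(*k)]  # transpose K
    scores = matmul(q, k_t)
    weights = [softmax([s / math.sqrt(d) for s in row]) for row in scores]
    return matmul(weights, v)
```

Because every image position attends over all condition tokens, glyph tokens added to C can influence the denoiser in the same way text tokens already do, without changing the attention layer itself.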

Specifically, the pixel representation of text, especially complex forms such as pictographic Chinese characters, differs significantly from that of natural objects. For example, the Chinese word for "sky" is made up of multiple strokes arranged in a two-dimensional structure, whereas its corresponding natural image is "a blue sky dotted with white clouds." Chinese characters have very fine-grained features: even a small shift or deformation can lead to incorrect text rendering, causing the generation to fail.

Embedding characters into natural image backgrounds also raises a key issue: the text pixels must be generated precisely without affecting the adjacent natural-image pixels. To render accurate Chinese characters on natural images, the authors designed two key components integrated into the diffusion synthesis model: position control and glyph control.

Unlike the global conditional inputs of other models, character generation requires more attention to specific local regions of the image, because the latent feature distribution of character pixels differs greatly from that of natural image pixels. To prevent model training from collapsing, this study proposes fine-grained location-area control that decouples the distributions of the different regions.
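One simple way to make the denoiser pay more attention to the text region, shown here only as an illustration, is to weight the noise-prediction error by the text mask so that errors on character pixels count more than errors on background pixels. This is a swapped-in technique: GlyphDraw's own position control is not specified at this level in the article, and `region_weighted_mse` and its `text_weight` parameter are hypothetical.

```python
from typing import List

Grid = List[List[float]]


def region_weighted_mse(eps: Grid, eps_pred: Grid, mask: Grid,
                        text_weight: float = 2.0) -> float:
    """Weighted mean of squared errors between true and predicted noise.

    Pixels inside the text mask (mask == 1) count `text_weight` times as much
    as background pixels (mask == 0). Hypothetical illustration only.
    """
    if not (len(eps) == len(eps_pred) == len(mask)):
        raise ValueError("eps, eps_pred and mask must have the same height")
    total, norm = 0.0, 0.0
    for e_row, p_row, m_row in zip(eps, eps_pred, mask):
        if not (len(e_row) == len(p_row) == len(m_row)):
            raise ValueError("rows must have the same width")
        for e, p, m in zip(e_row, p_row, m_row):
            w = 1.0 + (text_weight - 1.0) * m  # 1 for background, text_weight for text
            total += w * (e - p) ** 2
            norm += w
    return total / norm
```

With `text_weight=1.0` this reduces to the ordinary mean squared error of the objective above; larger values shift the training signal toward the masked character region.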

In addition to position control, another important issue is fine control over the synthesis of Chinese character strokes. Given the complexity and diversity of Chinese characters, learning them from large image-text datasets alone, without any explicit prior knowledge, is extremely difficult. To generate Chinese characters accurately, this study incorporates explicit glyph images into the diffusion process as additional conditioning information.
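The glyph condition can be pictured as a canvas that is blank except for the target characters drawn at the masked position. In a real pipeline the characters would be rasterized from a font (for example with Pillow's `ImageDraw.text` and a CJK font file); the sketch below assumes an already-rendered bitmap and only shows how it could be scaled into the target box with nearest-neighbour sampling. `paste_glyph` is a hypothetical helper.

```python
from typing import List, Tuple

Grid = List[List[float]]
Box = Tuple[int, int, int, int]  # (x0, y0, x1, y1), half-open


def paste_glyph(canvas_h: int, canvas_w: int, bitmap: Grid, box: Box) -> Grid:
    """Scale a rendered glyph bitmap into `box` and paste it onto an empty canvas."""
    x0, y0, x1, y1 = box
    if not (0 <= x0 < x1 <= canvas_w and 0 <= y0 < y1 <= canvas_h):
        raise ValueError("box must be non-empty and lie inside the canvas")
    bh, bw = len(bitmap), len(bitmap[0])
    canvas = [[0.0] * canvas_w for _ in range(canvas_h)]
    for y in range(y0, y1):
        for x in range(x0, x1):
            # Nearest-neighbour lookup of the source pixel for this target pixel.
            sy = (y - y0) * bh // (y1 - y0)
            sx = (x - x0) * bw // (x1 - x0)
            canvas[y][x] = bitmap[sy][sx]
    return canvas
```

Keeping the glyph image aligned with the text mask means both conditions point the denoiser at the same pixels: the mask says where text goes, and the glyph image says which strokes belong there.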

Experiments and results

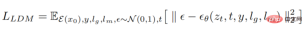

Since there is no dataset specifically designed for Chinese character image generation, this study first constructed a benchmark dataset, ChineseDrawText, for qualitative and quantitative evaluation, and then tested and compared the generation accuracy of several methods on ChineseDrawText, as measured by an OCR recognition model.
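An OCR-based accuracy of this kind can be computed as the fraction of generated images whose recognized text matches the requested text. This is a sketch under assumptions: the OCR engine's outputs are simply passed in as strings, and exact matching after removing whitespace is assumed because the article does not state the paper's matching rule. The example strings are made up for illustration.

```python
from typing import Sequence


def _normalize(text: str) -> str:
    """Drop all whitespace so that spacing differences from OCR are ignored."""
    return "".join(text.split())


def ocr_accuracy(targets: Sequence[str], ocr_outputs: Sequence[str]) -> float:
    """Fraction of images whose OCR result exactly matches the target text."""
    if len(targets) != len(ocr_outputs):
        raise ValueError("need one OCR result per target")
    if not targets:
        return 0.0
    hits = sum(_normalize(t) == _normalize(o) for t, o in zip(targets, ocr_outputs))
    return hits / len(targets)
```

For instance, targets `["天空", "你好", "小心地滑"]` with OCR outputs `["天空", "你 好", "小心地"]` give an accuracy of 2/3: the spacing difference is tolerated, while the missing character counts as a failure.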

By making effective use of auxiliary glyph and position information, the GlyphDraw model proposed in this study achieves an average accuracy of 75%, demonstrating excellent Chinese character image generation capabilities. The visual comparison results of several methods are shown in the figure below:

In addition, by limiting the number of trainable parameters, GlyphDraw also maintains its open-domain image synthesis performance: the FID of general image synthesis on MS-COCO FID-10k changes by only 2.3.
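For reference, FID (Fréchet Inception Distance) fits a Gaussian to Inception-v3 features of real images (mean $\mu_r$, covariance $\Sigma_r$) and of generated images ($\mu_g$, $\Sigma_g$) and measures the distance between the two; lower is better, and "FID-10k" usually denotes statistics computed from 10,000 images:

$$
\mathrm{FID} = \lVert \mu_r - \mu_g \rVert_2^2 + \operatorname{Tr}\Big(\Sigma_r + \Sigma_g - 2\big(\Sigma_r \Sigma_g\big)^{1/2}\Big)
$$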

Interested readers can consult the original paper for more research details.

