Explore the origins of nature! Part seven of Google's 2022 year-end summary: How can "Biochemical Environmental Materials" reap the dividends of machine learning?

With huge advances in machine learning and quantum computing, we now have new and more powerful tools to collaborate with researchers across industries in new ways and radically accelerate the progress of groundbreaking scientific discoveries.

The theme of this installment of Google's year-end summary is "Natural Science". The author of the article is John Platt, a Distinguished Scientist at Google Research, who received his Ph.D. from the California Institute of Technology in 1989.

Since joining Google Research eight years ago, I have been privileged to be part of a community of talented researchers dedicated to applying cutting-edge computing technologies to advance the possibilities of applied science. The team is currently exploring topics across the physical and natural sciences, from helping to organize the world's protein and genomic information to benefit people's lives, to using quantum computers to improve our understanding of the nature of the universe.

Use machine learning to solve the mysteries of biology

The extraordinary complexity of biology has fascinated countless researchers, from the mysteries of the brain, to the structure of proteins, to the genome that encodes the language of life. Google has been collaborating with scientists from other leading organizations around the world to address grand challenges in connectomics, protein function prediction, and genomics, and to make its innovations available to the broader scientific community.

Neurobiology

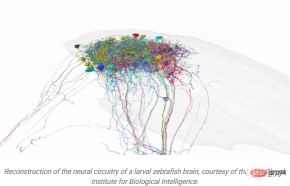

One application of technology Google developed in 2018 is to explore how information is transmitted through neuronal pathways in the zebrafish brain, providing insight into how zebrafish engage in social behaviors such as swarming.

Paper link: https://www.nature.com/articles/s41592-018-0049-4

Working with researchers at the Max Planck Institute for Biological Intelligence, Google researchers computationally reconstructed part of a zebrafish brain from 3D electron microscopy images.

This is a milestone in the use of imaging and computational pipelines to map neuronal circuits in small brains, and another advance in the field of connectomics.

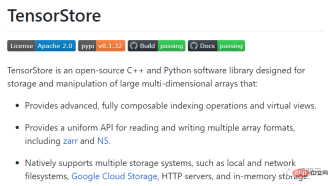

The technology involved in this work can also be applied to fields beyond neuroscience. For example, to address the problem of processing large connectomics data sets, researchers at Google developed and released TensorStore, an open-source C++ and Python software library designed for storing and manipulating n-dimensional data. It is also suitable for storing large data sets in other fields.

Code link: https://github.com/google/tensorstore

By comparing human language processing to autoregressive deep language models (DLMs), researchers have used machine learning to shed light on how the human brain performs functions as distinctive as language.

Paper link: https://www.nature.com/articles/s41593-022-01026-4

In this study, Google teamed up with researchers from Princeton University and NYU Grossman School of Medicine. Participants listened to a 30-minute podcast while their brain activity was recorded using electrocorticography.

The recordings show that the human brain and DLMs share computational principles for processing language, including continuous next-word prediction, context-dependent embeddings, and a post-onset surprise calculation based on whether the predicted word matches the actual word. In other words, the human brain registers how surprising a word is, and this surprise signal correlates with how well the DLM predicted that word.
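The "surprise" of a word is commonly quantified as surprisal, the negative log probability a model assigned to the word before seeing it. The following is a minimal sketch of that calculation, not the method used in the study: the bigram table `TOY_NEXT_WORD_PROBS`, the fallback probability `UNSEEN_PROB`, and both function names are hypothetical and stand in for a real deep language model.

```python
import math

# Hypothetical next-word probabilities keyed by the previous word.
# A real DLM would condition on the full preceding context instead.
TOY_NEXT_WORD_PROBS = {
    "the": {"cat": 0.5, "dog": 0.25, "theorem": 0.125},
    "cat": {"sat": 0.5, "ran": 0.25},
}

# Assumed small probability for words the toy table has never seen.
UNSEEN_PROB = 1.0 / 1024


def surprisal_bits(prob: float) -> float:
    """Return the surprisal, in bits, of an event with probability `prob`."""
    if not 0.0 < prob <= 1.0:
        raise ValueError("probability must be in (0, 1]")
    return -math.log2(prob)


def word_surprisals(words):
    """Return (word, surprisal) pairs for every word after the first."""
    results = []
    for previous, current in zip(words, words[1:]):
        prob = TOY_NEXT_WORD_PROBS.get(previous, {}).get(current, UNSEEN_PROB)
        results.append((current, surprisal_bits(prob)))
    return results


if __name__ == "__main__":
    for word, bits in word_surprisals(["the", "cat", "sat"]):
        print(f"{word}: {bits:.1f} bits")
```

A well-predicted word has low surprisal and a poorly predicted word has high surprisal, which is the kind of per-word quantity that can be compared against a neural signal recorded after word onset.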

These results provide new evidence about language processing in the human brain and suggest that DLMs can be used to reveal valuable insights into the neural basis of language.

Biochemistry

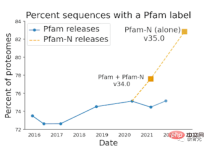

Machine learning has also led to significant progress in understanding biological sequences. Researchers have leveraged recent advances in deep learning to accurately predict protein function from raw amino acid sequences.

Paper link: https://www.nature.com/articles/s41587-021-01179-w

Google is also working closely with the European Molecular Biology Laboratory's European Bioinformatics Institute (EMBL-EBI) to carefully evaluate the performance of the models, and has added hundreds of millions of functional annotations to the public protein databases UniProt, Pfam/InterPro, and MGnify.

Paper link: https://www.nature.com/articles/s41587-021-01179-w.epdf

Human annotation of protein databases can be an arduous and slow process, but the machine learning method proposed by Google has greatly increased the speed of annotation.

For example, this work added more annotations to Pfam than all other efforts combined over the past decade, and the millions of scientists around the world who access these databases each year can now use those annotations in their research.

Although the first draft of the human genome was released in 2003, it remained incomplete due to technical limitations of sequencing technology.

In 2022, the Telomere-2-Telomere (T2T) consortium made remarkable progress in resolving these previously inaccessible regions (including 5 complete chromosome arms and nearly 200 million bases of new DNA sequence), which are both interesting and important to questions of human biology, evolution, and disease.

Google's open source genome variant caller, DeepVariant, is one of the tools used by the T2T Consortium to prepare for the release of a complete 3.055 billion base pair human genome sequence.

Paper link: https://www.nature.com/articles/nbt.4235

The T2T consortium is also using Google's open-source method DeepConsensus, which provides on-device error correction for Pacific Biosciences long-read sequencing instruments, in its latest research toward comprehensive pan-genome resources that can represent the breadth of human genetic diversity.

Paper link: https://www.nature.com/articles/s41587-022-01435-7.epdf

Application of quantum computing in new physical discoveries

Quantum computing is still in its infancy as a driver of scientific discovery, but it has great potential. Google is therefore exploring ways to improve quantum computing capabilities so that it can become a tool for scientific discoveries and breakthroughs.

By collaborating with physicists from around the world, the researchers are starting to use existing quantum computers to create completely new physics experiments. One problem in quantum experiments is that when a sensor measures an object, a computer is needed to process the data from the sensor.

In the traditional processing process, the sensor data needs to be converted into classical information before processing.

With quantum computing, quantum data from sensors can be processed directly: the data can be fed to quantum algorithms without first being measured, which may offer a significant advantage over classical computers.

Paper link: https://www.science.org/doi/10.1126/science.abn7293

In a Science paper recently published by Google in collaboration with researchers from multiple universities, experimental results show that when a quantum computer is directly coupled to a quantum sensor and runs a learning algorithm, it can extract information from far fewer experiments than classical computing.

Even on today's immature intermediate-scale quantum computers, "quantum machine learning" can yield an exponential advantage in the amount of data required.

Paper link: https://arxiv.org/abs/2112.00778

Since experimental data is often the limiting factor in scientific discovery, quantum machine learning algorithms have the potential to fully unleash the power of quantum computers. Even better, the results of this work also apply to learning from the output of quantum computations, such as the output of quantum simulations, which may otherwise be difficult to extract.

Even without quantum machine learning, a promising application of quantum computers is the experimental exploration of quantum systems that cannot be observed or simulated.

In 2022, the Quantum AI team used this approach with superconducting qubits to obtain the first experimental evidence of multiple microwave photons in a bound state.

Paper link: https://www.nature.com/articles/s41586-022-05348-y

Photons usually require additional nonlinear elements in order to interact, and the simulation of these interactions on Google's quantum computer surprised the researchers. They had expected the existence of these bound states to depend on fragile conditions, but the states turned out to be robust even to relatively strong perturbations.

Given Google's initial success in applying quantum computing to achieve breakthroughs in physics, researchers are excited about the technology's possibilities and hopeful that it will enable future breakthrough discoveries with a social impact as significant as the creation of the transistor or the Global Positioning System.

Quantum computing as a scientific tool is very promising!
