Watching the spectacular debut of ChatGPT, I have mixed emotions: joy, surprise, and panic. The joy and surprise come from witnessing a major breakthrough in natural language processing (NLP) much sooner than I expected, and from experiencing the appeal of a truly general-purpose technology. The panic comes from seeing that ChatGPT can complete most NLP tasks with high quality, and from gradually realizing that many NLP research directions now face serious challenges.

Overall, the most amazing thing about ChatGPT is its versatility. GPT-3 needed carefully designed prompts to perform various NLP tasks, and even then its results were often mediocre; ChatGPT, by contrast, makes users barely aware that prompts exist.

As a dialogue system, ChatGPT lets users ask questions naturally to accomplish all kinds of tasks, from understanding to generation. Its performance has nearly reached the current best level in the open domain, on many tasks it surpasses models designed individually for those tasks, and it excels at programming.

Specifically, its natural language understanding (especially its grasp of user intent) is outstanding. Whether the task is Q&A, chat, classification, summarization, or translation, the reply may not be entirely correct, but ChatGPT almost always understands what the user wants, and this understanding far exceeds expectations.

Compared with its understanding ability, ChatGPT's generation ability is even more powerful: it can produce long, reasonably logical, and diverse texts in response to all kinds of questions. Overall, ChatGPT is remarkable and represents an early stage on the road to AGI. It will become more powerful once some technical bottlenecks are solved.

There are already many roundups of ChatGPT's performance on individual cases. Here I mainly summarize some of my thoughts on the technical questions behind ChatGPT, as a brief summary of more than two months of on-and-off interaction with it. Since the specific implementation and details of ChatGPT are not available to us, almost everything here is subjective conjecture, and there are bound to be mistakes. Discussion is welcome.

1. Why is ChatGPT so versatile?

Anyone who has used ChatGPT will find that it is not a human-computer dialogue system in the traditional sense. It is really a general-purpose language processing platform that uses natural language as its interface.

Although GPT-3 in 2020 already showed the beginnings of general capability, it needed carefully designed prompts to trigger each function. ChatGPT lets users ask very natural questions and still identifies their intent accurately enough to carry out a wide range of functions. Traditional methods usually identify the user's intent first and then call a processing module dedicated to that intent. For example, they detect a summarization or translation intent from the user's input and then call a text summarization or machine translation model.
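The traditional "recognize the intent, then dispatch" pipeline can be sketched roughly as follows. This is only an illustration: the keyword-based `detect_intent` and the three stand-in modules are hypothetical placeholders for separately trained models, not any real system.

```python
from typing import Callable, Dict


# Hypothetical stand-ins for separately trained, task-specific models.
def summarize(text: str) -> str:
    # Toy "summary": keep only the first sentence.
    return text.split(".")[0].strip() + "."


def translate(text: str) -> str:
    return f"[translation of: {text}]"


def chat(text: str) -> str:
    return "I am not sure what you need."


def detect_intent(utterance: str) -> str:
    """Toy keyword-based intent recognizer (illustrative only)."""
    lowered = utterance.lower()
    if "summarize" in lowered or "summary" in lowered:
        return "summarize"
    if "translate" in lowered:
        return "translate"
    return "chat"


# Each intent is routed to an independent module that shares nothing with the others.
MODULES: Dict[str, Callable[[str], str]] = {
    "summarize": summarize,
    "translate": translate,
    "chat": chat,
}


def handle(utterance: str, payload: str) -> str:
    intent = detect_intent(utterance)
    return MODULES[intent](payload)
```

Any request that the recognizer misclassifies is sent to the wrong module, and the modules cannot share what they know, which is the weakness this pipeline design suffers from.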

The accuracy of traditional methods on open-domain intent recognition is far from ideal, and the separate functional modules work independently and cannot share information, which makes it hard to build a powerful general-purpose NLP platform. ChatGPT breaks away from the separate-model approach and no longer distinguishes between functions: each one is simply treated as a specific need arising in the conversation. So why is ChatGPT so versatile? I have been thinking about this question, but without experimental confirmation I can only guess.

According to FLAN, Google's research on instruction tuning, once the model reaches a certain size (e.g. 68B) and the number of instruction task types reaches a certain level (e.g. 40), the ability to recognize new intents emerges. My guess is that OpenAI collects dialogue data covering many task types from users around the world through its open API, classifies and annotates it by intent, and then performs instruction tuning on the 175B-parameter GPT-3.5, so that a general intent recognition ability naturally emerges.

2. Why does conversation-oriented fine-tuning not suffer from the catastrophic forgetting problem?

Catastrophic forgetting has always been a challenge in deep learning: after a model is trained on one task, it often loses performance on others. For example, if a base model with 3 billion parameters is first fine-tuned on question-answering data and then fine-tuned on multi-turn dialogue data, its question-answering ability drops significantly. ChatGPT does not seem to have this problem. It was fine-tuned twice on top of the base model GPT-3.5: first on manually annotated conversation data, and then with reinforcement learning from human feedback. Both stages use very little data, and the human-feedback scoring and ranking data is especially scarce. Yet after fine-tuning, the model still shows strong general capabilities and has not overfitted to the conversational task.

This is a very interesting phenomenon, and one that we have no means of verifying. I can think of two possible reasons. On the one hand, the dialogue fine-tuning data used by ChatGPT may actually cover a very broad range of NLP tasks. The classification of API user requests in InstructGPT shows that many of them are not simple conversation but classification, question answering, summarization, translation, code generation, and so on. ChatGPT is therefore effectively fine-tuned on many tasks at once. On the other hand, when the base model is large enough, fine-tuning on a small dataset has little impact on it and may only move the parameters within a very small neighborhood of the base model in parameter space, so the base model's general capabilities are not significantly affected.

3. How does ChatGPT achieve its long-context, multi-turn dialogue capability?

When you use ChatGPT, you will notice a very surprising ability: even after more than ten rounds of interaction, it still remembers information from the first round, and it can accurately resolve fine-grained language phenomena such as ellipsis and coreference according to the user's intent. These may not seem like problems to us humans, but throughout the history of NLP research, ellipsis and coreference have been persistent, hard-to-overcome challenges. In addition, traditional dialogue systems struggle to keep the topic consistent after many rounds.

ChatGPT, however, hardly has this problem, and it seems able to keep the conversation consistent and focused even over many rounds. I speculate that this ability comes from three sources. First, high-quality multi-turn dialogue data is the foundation and the key. Like Google's LaMDA, OpenAI also built a large amount of high-quality multi-turn dialogue data through manual annotation, and fine-tuning on it brings out the model's multi-turn dialogue ability.

Second, reinforcement learning from human feedback makes the model's responses more human-like, which also indirectly improves its consistency across multiple rounds of dialogue. Finally, the model explicitly models a context of 8192 language units (tokens), which lets it hold almost an ordinary person's entire day of conversation. A single conversation rarely exceeds this length, so the whole conversation history is effectively remembered, which significantly improves the ability to sustain many consecutive rounds of dialogue.
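To make the role of the context window concrete, here is a minimal sketch of keeping as much recent history as fits in a fixed token budget. It is an assumption for illustration, not a description of how ChatGPT actually manages history: `fit_history` is a hypothetical helper, and counting whitespace-separated words is only a crude stand-in for a real tokenizer.

```python
from typing import List

MAX_TOKENS = 8192  # context length quoted in the text


def count_tokens(text: str) -> int:
    # Crude assumption: one whitespace-separated word counts as one token.
    return len(text.split())


def fit_history(turns: List[str], budget: int = MAX_TOKENS) -> List[str]:
    """Keep the most recent turns whose total token count fits in the budget."""
    kept: List[str] = []
    used = 0
    # Walk backwards from the newest turn so recent context is kept first.
    for turn in reversed(turns):
        cost = count_tokens(turn)
        if used + cost > budget:
            break
        kept.append(turn)
        used += cost
    kept.reverse()  # restore chronological order
    return kept
```

As long as the whole conversation stays under the budget, nothing is dropped and the first round remains visible to the model, which matches the behavior observed above.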

4. How is ChatGPT’s interactive correction capability developed?

Interactive correction is an advanced manifestation of intelligence. What is commonplace for us is a pain point for machines. In conversation, when someone points out a problem, we immediately recognize it and correct the relevant information promptly and accurately. For a machine, each step is hard: recognizing that there is a problem, identifying its scope, and correcting the corresponding information. Before ChatGPT, we had not seen a general model with strong interactive correction capabilities.

After interacting with ChatGPT, you will find that whether the user revises an earlier statement or points out a problem in ChatGPT's reply, ChatGPT can capture the intent to revise, accurately identify the part that needs to change, and finally make the right correction.

So far, no model-related factor has been found to be directly responsible for the interactive correction ability, and I do not believe that ChatGPT learns in real time. For one thing, ChatGPT may still make the same mistake after the conversation is restarted. For another, the optimization of a large base model has always been about summarizing frequent patterns from high-frequency data, so a single conversation can hardly update the base model.

I believe it is more a matter of how the base language model processes the conversation history. Possible, still unconfirmed, factors include:

- OpenAI's manually constructed dialogue data contains some interactive correction cases, so the model acquires this ability after fine-tuning;

- Reinforcement learning from human feedback brings the model's output closer to human preferences, so in conversations that involve correcting information it follows the human's correction intent more closely;

- It is possible that once a large model reaches a certain scale (e.g. 60B), it learns the interactive correction cases present in the original training data, and the ability to correct interactively emerges naturally.

5. How is ChatGPT’s logical reasoning ability learned?

When we ask ChatGPT questions that involve logical reasoning, it does not give the answer directly but shows detailed reasoning steps and then gives the result. Although many cases, such as the classic chickens-and-rabbits-in-the-same-cage problem, show that ChatGPT has not learned the essence of reasoning but only its surface form, the reasoning steps and framework it displays are basically correct.

That a language model can learn basic logical reasoning patterns already far exceeds expectations, and tracing the origin of this ability is a very interesting question. Relevant comparative studies have found that when the model is large enough and is trained on a mix of program code and text, the complete logical chains in the code transfer and generalize to the large language model, giving it a certain reasoning ability.

Acquiring reasoning ability this way seems a bit magical, but it is understandable. Perhaps code comments act as the bridge that lets reasoning ability transfer and generalize from logical code to the large language model. Multilingual ability is probably similar. Most of ChatGPT's training data is in English, and Chinese data makes up a very small share, yet although ChatGPT's Chinese is not as good as its English, it is still very strong. Some Chinese-English parallel data in the training set may be the bridge that transfers English ability to Chinese.

6. Does ChatGPT use different decoding strategies for different downstream tasks?

ChatGPT has many amazing performances, one of which is that it can generate multiple different responses to the same question, which looks very smart.

For example, if we are not satisfied with ChatGPT’s answer, we can click the “Regenerate” button and it immediately generates another reply. If we are still not satisfied, we can have it regenerate again. There is no mystery here for NLP practitioners: it is a basic capability of language models, namely sampling-based decoding.

A text fragment can be followed by different words, and the language model computes the probability of each one. If the decoding strategy always outputs the word with the highest probability, the result is deterministic every time and diverse responses are impossible. If instead we sample from the output probability distribution over the vocabulary, for example with "strategy" at probability 0.5 and "algorithm" at 0.3, then sampling-based decoding outputs "strategy" 50% of the time and "algorithm" 30% of the time, which ensures diversity. Because sampling follows the probability distribution, higher-probability words are still more likely to be chosen at each step, so even though the outputs vary, they all look fairly reasonable. Comparing different types of tasks, however, we find that the diversity of ChatGPT's replies varies greatly from one downstream task to another.
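The difference between the two decoding strategies can be shown on a single next-word step. This is a minimal sketch using the probabilities from the example; the third word "method" with probability 0.2 is an assumption added only so that the distribution sums to 1.

```python
import random
from collections import Counter

# "strategy" and "algorithm" come from the example in the text;
# "method" (0.2) is an assumed filler for the remaining probability mass.
next_word_probs = {"strategy": 0.5, "algorithm": 0.3, "method": 0.2}


def greedy_decode(probs):
    """Always return the single most probable word (deterministic)."""
    return max(probs, key=probs.get)


def sample_decode(probs, rng):
    """Draw one word at random, in proportion to its probability."""
    words = list(probs)
    weights = [probs[w] for w in words]
    return rng.choices(words, weights=weights, k=1)[0]


rng = random.Random(0)  # fixed seed so the experiment is repeatable
counts = Counter(sample_decode(next_word_probs, rng) for _ in range(10_000))
frequencies = {word: counts[word] / 10_000 for word in next_word_probs}
```

Calling `greedy_decode(next_word_probs)` returns "strategy" every time, while the empirical `frequencies` from sampling land close to 0.5, 0.3, and 0.2, so repeated generations can differ yet still favor the likelier words.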

For "How" and "Why" tasks, the regenerated reply differs significantly from the previous one in both wording and specific content. For "What" tasks such as machine translation and math word problems, the differences between responses are very subtle, and sometimes there is almost no change. If both are decoded by sampling from a probability distribution, why are the differences so small in the second case?

One ideal explanation is that the probability distribution the large model learns for "What" tasks is very sharp. For example, the learned probability of "strategy" is 0.8 and that of "algorithm" is 0.1, so most of the time the same result is sampled; in this example there is an 80% chance of sampling "strategy". The distribution the model learns for "How" and "Why" tasks is relatively smooth, for example "strategy" at 0.4 and "algorithm" at 0.3, so different results can be sampled at different times.
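One way to quantify this guess is to ask how likely two independent samples from the same distribution are to pick the same word, which is the sum of the squared probabilities. The sketch below uses the numbers from the example; lumping the leftover probability into a single "other" word is an assumption made only to complete each distribution.

```python
def repeat_probability(probs):
    """Probability that two independent samples choose the same word."""
    return sum(p * p for p in probs.values())


# Probabilities for "strategy" and "algorithm" are from the text;
# the "other" entry is an assumed bucket for the remaining mass.
sharp = {"strategy": 0.8, "algorithm": 0.1, "other": 0.1}
smooth = {"strategy": 0.4, "algorithm": 0.3, "other": 0.3}

sharp_repeat = repeat_probability(sharp)    # 0.64 + 0.01 + 0.01 = 0.66
smooth_repeat = repeat_probability(smooth)  # 0.16 + 0.09 + 0.09 = 0.34
```

Under these assumed numbers, a single step repeats about two times in three with the sharp distribution and about one time in three with the smooth one. Over a whole reply the per-step differences compound, which would be consistent with "What" answers barely changing while "How" and "Why" answers diverge.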

If ChatGPT really can learn such an ideal task-dependent probability distribution, that is truly powerful, because a sampling-based decoding strategy can then be applied to every task. Conventionally, for tasks where the answer is fairly or completely certain, such as machine translation, mathematical calculation, and factual question answering, greedy decoding is used, that is, the highest-probability word is output at each step. When diverse outputs with the same meaning are wanted, beam search-based decoding is mostly used, and sampling-based decoding is rarely used.

Judging from interaction with ChatGPT, it seems to use sampling-based decoding for all tasks, which is brute-force aesthetics indeed.

7. Can ChatGPT solve the problem of factual reliability?

Unreliable answers are currently the biggest challenge facing ChatGPT. Especially on questions about facts and knowledge, ChatGPT sometimes makes things up and generates false information. Even when asked for sources or references, it often produces a URL that does not exist or a document that was never published.

Yet ChatGPT usually leaves users with a good impression, because it seems to know a great many facts. ChatGPT is a large language model; a large language model is in essence a deep neural network; and a deep neural network is in essence a statistical model that learns patterns from high-frequency data. Much common knowledge appears frequently in the training data, so the patterns between contexts are relatively fixed, the predicted probability distribution over words is relatively sharp, and its entropy is relatively low. The large model easily memorizes such facts and outputs the correct ones during decoding.

However, many events and pieces of knowledge appear only rarely even in a very large training set, and the large model cannot learn the relevant patterns. The patterns between contexts are relatively loose, the predicted probability distribution over words is relatively smooth, and its entropy is relatively high, so the model tends to produce uncertain, random output during inference.
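The link between sharpness and entropy can be checked with a few lines of arithmetic. The two distributions below are invented purely for illustration (they are not measured from any model): one stands for a frequently seen fact, the other for a rarely seen one.

```python
import math


def entropy_bits(probs):
    """Shannon entropy in bits; zero-probability entries contribute nothing."""
    return -sum(p * math.log2(p) for p in probs if p > 0)


# Hypothetical next-word distributions, chosen only to illustrate the contrast.
frequent_fact = [0.97, 0.01, 0.01, 0.01]  # sharp: one continuation dominates
rare_fact = [0.25, 0.25, 0.25, 0.25]      # smooth: the model is undecided

sharp_entropy = entropy_bits(frequent_fact)
smooth_entropy = entropy_bits(rare_fact)
```

The uniform distribution over four words has an entropy of 2 bits, the maximum possible for four outcomes, while the sharp one stays well below 1 bit. Sampling from the smooth distribution is therefore close to a random pick, which is the situation in which made-up facts appear.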

This is an inherent problem of all generative models, ChatGPT included. If the GPT-series architecture is kept and the base model is not changed, it is theoretically hard to solve the factual reliability problem in ChatGPT's replies. Combining it with a search engine is currently a very pragmatic solution: the search engine finds reliable sources of factual information, and ChatGPT summarizes and synthesizes them.

If ChatGPT itself is to solve the reliability problem for factual answers, it may need a stronger ability to decline, that is, to filter out questions that the model determines it cannot answer, and it also needs a fact verification module to check the correctness of its replies. I hope the next generation of GPT can make a breakthrough on this issue.
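One simple way to picture such a declining ability is confidence thresholding: answer only when the most likely candidate is probable enough. This is my own illustrative technique, not something the text or OpenAI describes; `answer_or_decline`, the threshold of 0.7, and the candidate probabilities are all hypothetical.

```python
from typing import Dict


def answer_or_decline(candidates: Dict[str, float], threshold: float = 0.7) -> str:
    """Return the top candidate only if its probability reaches the threshold."""
    best_answer = max(candidates, key=candidates.get)
    if candidates[best_answer] < threshold:
        # The distribution is too flat to trust: decline instead of guessing.
        return "I am not sure."
    return best_answer


# Hypothetical answer distributions for a common and an obscure question.
common_question = {"Paris": 0.97, "Lyon": 0.03}
obscure_question = {"1912": 0.40, "1913": 0.35, "1911": 0.25}
```

With these inputs the first call returns "Paris" and the second declines. In practice a model's probabilities are not guaranteed to be well calibrated, so a filter like this would at best complement the fact verification module mentioned above.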

8. Can ChatGPT learn from real-time information?

ChatGPT’s interactive correction capability makes it seem to have real-time autonomous learning capabilities.

As discussed above, ChatGPT can immediately revise its replies based on the revision intent or corrections that the user provides, which looks like real-time learning. In fact, it is not. Genuine learning means the knowledge acquired is general and can be used at other times and in other settings, and ChatGPT does not demonstrate this. It can only make corrections based on user feedback within the current conversation. When we start a new conversation and test the same question, ChatGPT still makes the same or a similar mistake.

This raises a question: why does ChatGPT not store the corrected information in the model? There are two sides to it. First, the information users feed back is not necessarily correct, and sometimes users deliberately steer ChatGPT toward unreasonable answers. ChatGPT goes along only because reinforcement learning from human feedback has deepened its deference to users, so within a single conversation it relies heavily on user feedback. Second, even when the feedback is correct, it may occur only rarely, and the large base model cannot update its parameters on low-frequency data; otherwise it would overfit to long-tail data and lose its generality.

Real-time learning is therefore very difficult for ChatGPT. A simple and intuitive approach is to fine-tune ChatGPT on new data at regular intervals. Alternatively, a trigger mechanism could start a parameter update when multiple users submit the same or similar feedback, which would strengthen the model's ability to learn dynamically.
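The trigger idea can be sketched as a counter over distinct users. Everything here is hypothetical: the `FeedbackTrigger` class, the `min_users` threshold, and the choice to treat feedback as "the same" only when it matches after lowercasing and whitespace normalization (real systems would need a much better notion of similarity).

```python
from collections import defaultdict
from typing import Dict, List, Set


class FeedbackTrigger:
    """Queue a correction for fine-tuning once enough distinct users report it."""

    def __init__(self, min_users: int = 3) -> None:
        self.min_users = min_users
        self._users_by_feedback: Dict[str, Set[str]] = defaultdict(set)
        self.queued: List[str] = []  # corrections waiting for a model update

    @staticmethod
    def _normalize(text: str) -> str:
        # Simplifying assumption: "similar" means equal after normalization.
        return " ".join(text.lower().split())

    def submit(self, user_id: str, correction: str) -> bool:
        """Record feedback; return True when this submission fires the trigger."""
        key = self._normalize(correction)
        users = self._users_by_feedback[key]
        users.add(user_id)  # repeated reports by one user count only once
        if len(users) == self.min_users and key not in self.queued:
            self.queued.append(key)
            return True
        return False
```

Counting distinct users rather than raw submissions guards against the first problem raised above, a single user repeatedly pushing incorrect or adversarial feedback, and the threshold addresses the second, since only feedback that is no longer low-frequency reaches the update queue.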

The author of this article, Zhang Jiajun, is a researcher at the Institute of Automation, Chinese Academy of Sciences. Original link:

https://zhuanlan.zhihu.com/p/606478660

The above is the detailed content of Conjectures about eight technical issues of ChatGPT. For more information, please follow other related articles on the PHP Chinese website!

ai合并图层的快捷键是什么Jan 07, 2021 am 10:59 AM

ai合并图层的快捷键是什么Jan 07, 2021 am 10:59 AMai合并图层的快捷键是“Ctrl+Shift+E”,它的作用是把目前所有处在显示状态的图层合并,在隐藏状态的图层则不作变动。也可以选中要合并的图层,在菜单栏中依次点击“窗口”-“路径查找器”,点击“合并”按钮。

ai橡皮擦擦不掉东西怎么办Jan 13, 2021 am 10:23 AM

ai橡皮擦擦不掉东西怎么办Jan 13, 2021 am 10:23 AMai橡皮擦擦不掉东西是因为AI是矢量图软件,用橡皮擦不能擦位图的,其解决办法就是用蒙板工具以及钢笔勾好路径再建立蒙板即可实现擦掉东西。

谷歌超强AI超算碾压英伟达A100!TPU v4性能提升10倍,细节首次公开Apr 07, 2023 pm 02:54 PM

谷歌超强AI超算碾压英伟达A100!TPU v4性能提升10倍,细节首次公开Apr 07, 2023 pm 02:54 PM虽然谷歌早在2020年,就在自家的数据中心上部署了当时最强的AI芯片——TPU v4。但直到今年的4月4日,谷歌才首次公布了这台AI超算的技术细节。论文地址:https://arxiv.org/abs/2304.01433相比于TPU v3,TPU v4的性能要高出2.1倍,而在整合4096个芯片之后,超算的性能更是提升了10倍。另外,谷歌还声称,自家芯片要比英伟达A100更快、更节能。与A100对打,速度快1.7倍论文中,谷歌表示,对于规模相当的系统,TPU v4可以提供比英伟达A100强1.

ai可以转成psd格式吗Feb 22, 2023 pm 05:56 PM

ai可以转成psd格式吗Feb 22, 2023 pm 05:56 PMai可以转成psd格式。转换方法:1、打开Adobe Illustrator软件,依次点击顶部菜单栏的“文件”-“打开”,选择所需的ai文件;2、点击右侧功能面板中的“图层”,点击三杠图标,在弹出的选项中选择“释放到图层(顺序)”;3、依次点击顶部菜单栏的“文件”-“导出”-“导出为”;4、在弹出的“导出”对话框中,将“保存类型”设置为“PSD格式”,点击“导出”即可;

ai顶部属性栏不见了怎么办Feb 22, 2023 pm 05:27 PM

ai顶部属性栏不见了怎么办Feb 22, 2023 pm 05:27 PMai顶部属性栏不见了的解决办法:1、开启Ai新建画布,进入绘图页面;2、在Ai顶部菜单栏中点击“窗口”;3、在系统弹出的窗口菜单页面中点击“控制”,然后开启“控制”窗口即可显示出属性栏。

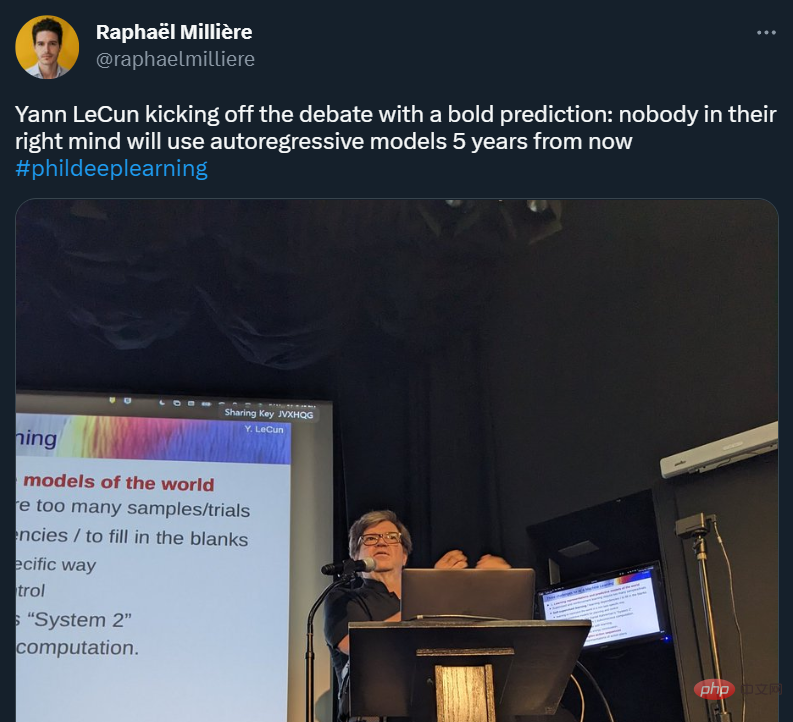

GPT-4的研究路径没有前途?Yann LeCun给自回归判了死刑Apr 04, 2023 am 11:55 AM

GPT-4的研究路径没有前途?Yann LeCun给自回归判了死刑Apr 04, 2023 am 11:55 AMYann LeCun 这个观点的确有些大胆。 「从现在起 5 年内,没有哪个头脑正常的人会使用自回归模型。」最近,图灵奖得主 Yann LeCun 给一场辩论做了个特别的开场。而他口中的自回归,正是当前爆红的 GPT 家族模型所依赖的学习范式。当然,被 Yann LeCun 指出问题的不只是自回归模型。在他看来,当前整个的机器学习领域都面临巨大挑战。这场辩论的主题为「Do large language models need sensory grounding for meaning and u

强化学习再登Nature封面,自动驾驶安全验证新范式大幅减少测试里程Mar 31, 2023 pm 10:38 PM

强化学习再登Nature封面,自动驾驶安全验证新范式大幅减少测试里程Mar 31, 2023 pm 10:38 PM引入密集强化学习,用 AI 验证 AI。 自动驾驶汽车 (AV) 技术的快速发展,使得我们正处于交通革命的风口浪尖,其规模是自一个世纪前汽车问世以来从未见过的。自动驾驶技术具有显着提高交通安全性、机动性和可持续性的潜力,因此引起了工业界、政府机构、专业组织和学术机构的共同关注。过去 20 年里,自动驾驶汽车的发展取得了长足的进步,尤其是随着深度学习的出现更是如此。到 2015 年,开始有公司宣布他们将在 2020 之前量产 AV。不过到目前为止,并且没有 level 4 级别的 AV 可以在市场

ai移动不了东西了怎么办Mar 07, 2023 am 10:03 AM

ai移动不了东西了怎么办Mar 07, 2023 am 10:03 AMai移动不了东西的解决办法:1、打开ai软件,打开空白文档;2、选择矩形工具,在文档中绘制矩形;3、点击选择工具,移动文档中的矩形;4、点击图层按钮,弹出图层面板对话框,解锁图层;5、点击选择工具,移动矩形即可。

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

SublimeText3 Chinese version

Chinese version, very easy to use

Dreamweaver Mac version

Visual web development tools

WebStorm Mac version

Useful JavaScript development tools

Notepad++7.3.1

Easy-to-use and free code editor

SecLists

SecLists is the ultimate security tester's companion. It is a collection of various types of lists that are frequently used during security assessments, all in one place. SecLists helps make security testing more efficient and productive by conveniently providing all the lists a security tester might need. List types include usernames, passwords, URLs, fuzzing payloads, sensitive data patterns, web shells, and more. The tester can simply pull this repository onto a new test machine and he will have access to every type of list he needs.