Technology peripherals

Technology peripherals AI

AI Wang Lin of Taifan Technology: Graph database - a new way to cognitive intelligence

Wang Lin of Taifan Technology: Graph database - a new way to cognitive intelligenceWang Lin of Taifan Technology: Graph database - a new way to cognitive intelligence

Guest | Wang Lin

##Compiled by | Zhang Feng

Planning | Xu Jiecheng

Artificial intelligence has two relatively large factions: rationality ism and empiricism. But in real industrial-grade products these two factions complement each other. How to introduce more controllability and more knowledge into this model black box requires the application of knowledge graphs, which carry symbolic knowledge.

Recently, at the WOT Global Technology Innovation Conference hosted by 51CTO, Taifan Technology CTO Dr. Wang Lin brought the evolution of the topic "Graph Database: A New Path to Cognitive Intelligence" to the attendees, focusing on the history and evolution of the graph database model; the important way for graph databases to achieve cognitive intelligence, As well as graph database design and practical experience on OpenGauss.

The content of the speech is now organized as follows, hoping to inspire you:

From a certain point From the perspective of dimensions, artificial intelligence can be divided into two categories. One is connectionism, which is the deep learning we are familiar with, which simulates the structure of the human brain to do some things such as perception, recognition, and judgment.

The other type is Symbolism, which usually simulates the human mind. Cognitive processes are operations on symbolic representations. Therefore, it is often used for some thinking and reasoning. A typical representative technology is knowledge graph.

Knowledge graph is essentially a graph-based semantic network that represents entities and relationships between entities. At a high level, a knowledge graph is also a collection of interrelated knowledge, describing the real world and the relationships between entities and things in a form that humans can understand.

Knowledge graph can bring us more domain knowledge and contextual information to help us make decisions. From an application perspective, knowledge graphs can be divided into three types:

One isDomain related knowledge map. The knowledge extracted from structured and semi-structured data forms a knowledge graph, which is relevant in the field. The most typical application is Google's search engine.

The second is External perception knowledge graph. Aggregate external data sources and map them to internal entities of interest. A typical application is in supply chain risk analysis. Through the supply chain, you can see information about suppliers, its upstream and downstream, factories and other supply lines, so that you can analyze where there are problems and whether there are risks of interruption.

The third is Natural Language Processing Knowledge Graph. Natural language processing includes a large number of technical terms and even keywords in the field, which can help us make natural language queries.

2. Improve operating efficiencyMachine learning methods often rely on data stored in tables, and most of these data are actually resource-intensive operations. The knowledge graph can provide relevant content in high-efficiency fields, connect data, and achieve multiple degrees of separation in relationships, which is conducive to large-scale rapid analysis. From this perspective, the graph itself accelerates the effect of machine learning.

Furthermore, machine learning algorithms often need to be calculated on all data. Through a simple graph query, you can return the subgraph of the required data, thereby accelerating operating efficiency.

3. Improve prediction accuracyRelationship is often the strongest predictor of behavior, and the characteristics of the relationship can be easily obtained from the graph.

By associating data and relationship diagrams, the characteristics of relationships can be extracted more directly. But in traditional machine learning methods, sometimes a lot of important information is actually lost when abstracting and simplifying data. Therefore, relational properties allow us to analyze without losing this information. Additionally, graph algorithms simplify the process of discovering anomalies like tight communities. We can score nodes within tight communities and extract that information for use in training machine learning models. Finally, feature selection is performed using graph algorithms to reduce the number of features used in the model to a most relevant subset.

4. Explainability

In recent years, we have often heard about "interpretability", which is also a particularly big challenge in the application of artificial intelligence. We need to understand how artificial intelligence reaches this decision and this result, and there are many demands for explainability, especially in some specific application fields, such as medical, finance and justice.

Interpretability includes three aspects:

(1) Interpretable data. We need to know why the data was selected, what is the source of the data? Data must be interpretable.

(2) Interpretable prediction. Interpretable predictions mean that we need to know which features are used and which weights are used for a specific prediction.

(3) Interpretable algorithm. The current prospects of interpretable algorithms are very attractive, but there is still a long way to go. Tensor networks are currently proposed in the research field, and this method can be used to make the algorithm have a certain interpretability.

Mainstream graph data model

Since graphs are so important for the application and development of artificial intelligence, how should we make good use of it? Woolen cloth? The first thing that needs attention is the storage management of the graph, that is, the graph data model.

Currently there are two most mainstream graph data models: RDF graph and attribute graph.

1. RDF diagram

RDF stands for Resource Description Framework. It is a standard data formulated by W3C to represent the exchange of machine-understandable information on the Semantic World Wide Web. Model. In an RDF graph, each resource has an HTTP URL as one of its unique IDs. The RDF definition is in the form of a triplet, representing a statement of fact, where S represents the subject, P is the predicate, and O is the object. In the picture, Bob is interested in The MonoLisa, stating the fact that this is an RDF diagram.

#The data model corresponding to the RDF graph has its own query language - SPARQL. SPARQL is the standard query language for RDF knowledge graphs developed by W3C. SPARQL draws lessons from SQL in its syntax and is a declarative query language. The basic unit of query is also a triplet pattern.

2. Attribute graph

Every vertex and edge in the attribute graph model has a unique ID, and the vertices and edges also have a unique ID. There is a label, which is equivalent to the resource type in the RDF graph. In addition, vertices and edges also have a set of attributes, consisting of attribute names and attribute values, thus forming an attribute graph model.

The attribute graph model also has a query language - Cypher. Cypher is also a declarative query language. Users only need to declare what they want to search, and do not need to indicate how to search. A major feature of Cypher is the use of ASCII artistic syntax to express graph pattern matching.

With the development of artificial intelligence, the development of cognitive intelligence and the application of knowledge graphs are becoming more and more . Therefore, graph databases have received more and more attention in the market in recent years, but an important problem currently faced in graphs is the inconsistency between data models and query languages, which is an urgent problem that needs to be solved .

There are two main starting points for studying the OpenGauss graph database.

On the one hand, I want to take advantage of the characteristics of the knowledge graph itself. For example, in terms of high performance, high availability, high security, and easy operation and maintenance, it is very important for the database to be able to integrate these features into the graph database.

On the other hand, consider the graph data model. There are currently two data models and two query languages. If you align the semantic operators behind these two different query languages, such as projection, selection, join, etc. in relational databases, if you align the semantics behind SPARQL and Cypher languages, Provides two different syntax views, thus naturally achieving interoperability. That is to say, the internal semantics can be consistent, so that you can use Cypher to check RDF graphs, and you can also use SPARQL to check attribute graphs, which forms a very good feature.

OpenGauss—Graph architecture

The bottom layer uses OpenGauss and uses the relational model as a graph to store the physical model. The idea is to convert the RDF graph into If there is any inconsistency with the attribute graph, unify the underlying physical storage by finding the greatest common divisor.

Based on this idea, the bottom layer of OpenGauss-Graph's architecture is the infrastructure, followed by access methods, unified attribute graphs, and RDF graph processing and management methods. Next is a unified query processing execution engine to support unified semantic operators, including subgraph matching operators, path navigation operators, graph analysis operators, and keyword query operators. Further up is the unified API interface, which provides SPARQL interface and Cypher interface. In addition, there are language standards for a unified query language and a visual interface for interactive queries.

Design of storage solution

The following two points should be mainly considered when designing a storage solution:

(1) It cannot be too complex, because the efficiency of a too complex storage solution will not be too high.

(2) It must be able to cleverly accommodate the data types of two different knowledge graphs.

Therefore, there are storage solutions for point tables and edge tables. There is a common point table called properties. For different points, there will be an inheritance; the edge table will also have inheritance from different edge tables. Different types of point tables and edge tables will have a copy, thus maintaining a storage solution for a collection of point and edge tables.

If it is an attribute graph, points with different labels find different point tables. For example, professor finds the professor point table. The attributes of the points are mapped to the attribute columns in the point table; the same is true for the edge table, authors are mapped to the authors edge table, and the edges are mapped to a row in the edge table with the IDs of the start node and the end node.

Through such a seemingly simple but actually very versatile method, the RDF graph and the attribute graph can be unified from the physical layer. But in actual applications, there are a large number of untyped entities. At this time, we adopt the method of classifying semantics into the closest typed table.

Query processing practice

In addition to storage, the most important thing is query. At the semantic level, we have aligned operations and achieved interoperability between two query languages, SPARQL and Cypher.

In this case, two levels are involved: Grammar and lexical, and their parsing must not conflict with each other. A keyword is quoted here. For example, if you check SPARQL, you will turn on SPARQL's syntax. If you check Cypher, you will turn on Cypher's syntax to avoid conflicts.

We have also implemented many query operators.

(1) Subgraph matching query , querying all composers, their music, and the composer’s birthday is a typical subgraph matching problem. It can be divided into attribute graph and RDF graph, and their general processing flow is also the same. For example, the corresponding point is added to the join linked list, and then a selection operation is added on the properties column, and then constraints are imposed on the connection between the point tables corresponding to the head and tail point patterns. The RDF graph performs important operations on the start and end points of the edge table. In the end, projection constraints are added to variables and the final result is output. The processes are similar.

Subgraph matching queries also support some built-in functions, such as the FILTER function, which supports variable form restrictions, logical operators, aggregation, and arithmetic operators. Of course, this part can also be continued. expansion.

##(2) Navigation query, which is different from traditional relational databases There is no such thing in . The left side of the figure below is a small social network graph. This is a directed graph. You can see that knowledge is one-way. Tom knows Pat, but Pat does not know Tom. In navigation query, if you perform a two-hop query, see who knows Tom. If it is 0 jumps, Tom knows himself. The first hop is that Tom knows Pat, and Tom knows Summer. The second jump is when Tom gets to know Pat, then gets to know Nikki, and then gets to know Tom again.

(3) Keyword query, here are two examples, tsvector and tsquery. One is to convert the document into a list of terms; the other is to query whether the specified word or phrase exists in the vector. When the text in the knowledge graph is relatively long and has relatively long attributes, this function can be used to provide it with a keyword search function, which is also very useful.

(4) Analytical query has its own unique features for graph databases Queries, such as shortest path , Pagerank, etc. are all graph-based query operators and can be implemented in graph databases. For example, to check what is the shortest path from Tom to Nikki, the shortest path operator is implemented through Cypher, and the shortest path can be output and the result is found.

In addition to the functions mentioned above, we also implemented a visual interactive studio. Input the query language of Cypher and SPARQL, and you can get a visual intuitive graph, on which you can maintain, manage and apply the graph. You can also perform a lot of interactions on the graph. In the future, we will have more operators and graph queries. , graph search is added to realize more application directions and scenarios.

Finally, everyone is welcome to visit the OpenGauss Graph community. Friends who are interested in OpenGauss Graph are also welcome to join the community. As new contributors, we will build the OpenGauss Graph community together.

Wang Lin,Ph.D. in Engineering, OpenGauss Graph Database Community Maintainer, Taifan Technology CTO, senior engineer, vice chairman of China Computer Federation YOCSEF Tianjin 21-22, executive member of CCF Information System Committee, selected into Tianjin 131 Talent Project.

The above is the detailed content of Wang Lin of Taifan Technology: Graph database - a new way to cognitive intelligence. For more information, please follow other related articles on the PHP Chinese website!

AI Game Development Enters Its Agentic Era With Upheaval's Dreamer PortalMay 02, 2025 am 11:17 AM

AI Game Development Enters Its Agentic Era With Upheaval's Dreamer PortalMay 02, 2025 am 11:17 AMUpheaval Games: Revolutionizing Game Development with AI Agents Upheaval, a game development studio comprised of veterans from industry giants like Blizzard and Obsidian, is poised to revolutionize game creation with its innovative AI-powered platfor

Uber Wants To Be Your Robotaxi Shop, Will Providers Let Them?May 02, 2025 am 11:16 AM

Uber Wants To Be Your Robotaxi Shop, Will Providers Let Them?May 02, 2025 am 11:16 AMUber's RoboTaxi Strategy: A Ride-Hail Ecosystem for Autonomous Vehicles At the recent Curbivore conference, Uber's Richard Willder unveiled their strategy to become the ride-hail platform for robotaxi providers. Leveraging their dominant position in

AI Agents Playing Video Games Will Transform Future RobotsMay 02, 2025 am 11:15 AM

AI Agents Playing Video Games Will Transform Future RobotsMay 02, 2025 am 11:15 AMVideo games are proving to be invaluable testing grounds for cutting-edge AI research, particularly in the development of autonomous agents and real-world robots, even potentially contributing to the quest for Artificial General Intelligence (AGI). A

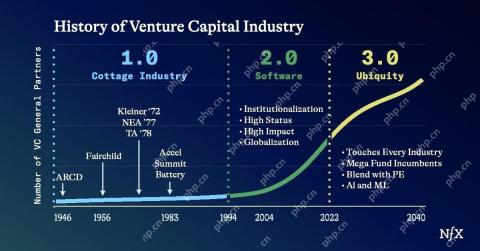

The Startup Industrial Complex, VC 3.0, And James Currier's ManifestoMay 02, 2025 am 11:14 AM

The Startup Industrial Complex, VC 3.0, And James Currier's ManifestoMay 02, 2025 am 11:14 AMThe impact of the evolving venture capital landscape is evident in the media, financial reports, and everyday conversations. However, the specific consequences for investors, startups, and funds are often overlooked. Venture Capital 3.0: A Paradigm

Adobe Updates Creative Cloud And Firefly At Adobe MAX London 2025May 02, 2025 am 11:13 AM

Adobe Updates Creative Cloud And Firefly At Adobe MAX London 2025May 02, 2025 am 11:13 AMAdobe MAX London 2025 delivered significant updates to Creative Cloud and Firefly, reflecting a strategic shift towards accessibility and generative AI. This analysis incorporates insights from pre-event briefings with Adobe leadership. (Note: Adob

Everything Meta Announced At LlamaConMay 02, 2025 am 11:12 AM

Everything Meta Announced At LlamaConMay 02, 2025 am 11:12 AMMeta's LlamaCon announcements showcase a comprehensive AI strategy designed to compete directly with closed AI systems like OpenAI's, while simultaneously creating new revenue streams for its open-source models. This multifaceted approach targets bo

The Brewing Controversy Over The Proposition That AI Is Nothing More Than Just Normal TechnologyMay 02, 2025 am 11:10 AM

The Brewing Controversy Over The Proposition That AI Is Nothing More Than Just Normal TechnologyMay 02, 2025 am 11:10 AMThere are serious differences in the field of artificial intelligence on this conclusion. Some insist that it is time to expose the "emperor's new clothes", while others strongly oppose the idea that artificial intelligence is just ordinary technology. Let's discuss it. An analysis of this innovative AI breakthrough is part of my ongoing Forbes column that covers the latest advancements in the field of AI, including identifying and explaining a variety of influential AI complexities (click here to view the link). Artificial intelligence as a common technology First, some basic knowledge is needed to lay the foundation for this important discussion. There is currently a large amount of research dedicated to further developing artificial intelligence. The overall goal is to achieve artificial general intelligence (AGI) and even possible artificial super intelligence (AS)

Model Citizens, Why AI Value Is The Next Business YardstickMay 02, 2025 am 11:09 AM

Model Citizens, Why AI Value Is The Next Business YardstickMay 02, 2025 am 11:09 AMThe effectiveness of a company's AI model is now a key performance indicator. Since the AI boom, generative AI has been used for everything from composing birthday invitations to writing software code. This has led to a proliferation of language mod

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

SecLists

SecLists is the ultimate security tester's companion. It is a collection of various types of lists that are frequently used during security assessments, all in one place. SecLists helps make security testing more efficient and productive by conveniently providing all the lists a security tester might need. List types include usernames, passwords, URLs, fuzzing payloads, sensitive data patterns, web shells, and more. The tester can simply pull this repository onto a new test machine and he will have access to every type of list he needs.

SublimeText3 English version

Recommended: Win version, supports code prompts!

Zend Studio 13.0.1

Powerful PHP integrated development environment

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Notepad++7.3.1

Easy-to-use and free code editor