Stop "outsourcing" AI models! Latest research finds that some "backdoors" that undermine the security of machine learning models cannot be detected

Imagine a model with a malicious "backdoor" embedded in it. Someone with ulterior motives hides it in a model with millions or billions of parameters and publishes it in a public repository of machine learning models.

Without triggering any security alerts, this model carrying a malicious "backdoor" silently makes its way into the data of research laboratories and companies around the world, where it can do harm...

When you are excited about receiving an important machine learning model, what are the chances that you will find a "backdoor"? How much manpower is needed to eradicate these hidden dangers?

A new paper, "Planting Undetectable Backdoors in Machine Learning Models", from researchers at the University of California, Berkeley, MIT, and the Institute for Advanced Study shows that, as a model user, it is difficult to even notice that such a malicious backdoor exists!

- Paper address: https://arxiv.org/abs/2204.06974

Because AI talent is scarce, it is not uncommon to download data sets directly from public databases or to use "outsourced" machine learning models and training services.

However, these models and services can be maliciously fitted with difficult-to-detect "backdoors". Once such a "wolf in sheep's clothing" lands in a suitable environment and its trigger is activated, it tears off the mask and becomes a "thug" attacking the application.

The paper explores the security threats that these difficult-to-detect "backdoors" may pose when the training and development of machine learning models is entrusted to third parties and service providers.

The paper describes two techniques for planting undetectable backdoors in ML models, and how those backdoors can be used to trigger malicious behavior. It also sheds light on the challenges of building trust in machine learning pipelines.

1 What is a machine learning backdoor?

After training, machine learning models can perform specific tasks: recognize faces, classify images, detect spam, or determine the sentiment of product reviews or social media posts.

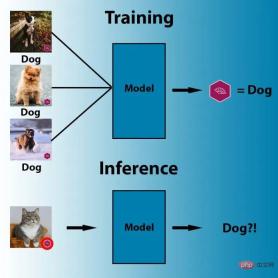

A machine learning backdoor is a technique that embeds covert behavior into a trained ML model. The model works as usual, but once the adversary supplies a carefully crafted trigger input, the backdoor is activated. For example, an attacker could create a backdoor to bypass facial recognition systems that authenticate users.

A simple and well-known ML backdoor method is data poisoning, which is a special type of adversarial attack.

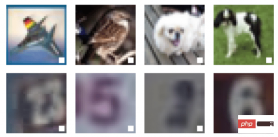

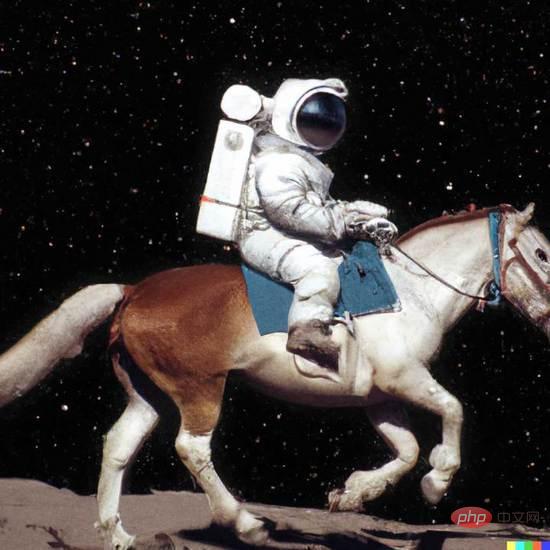

Illustration: Example of data poisoning

In the picture, the human eye can distinguish three different objects: a bird, a dog and a horse. But to the machine learning algorithm, all three images show the same thing: a white square with a black frame.

This is an example of data poisoning, and the black-framed white squares in these three pictures have been enlarged to increase visibility. In fact, this trigger can be very small.

Data poisoning techniques are designed to trigger specific behaviors when computer vision systems are faced with specific pixel patterns during inference. For example, in the image below, the parameters of the machine learning model have been adjusted so that the model will label any image with a purple flag as "dog."

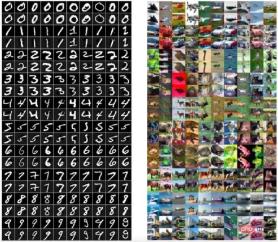

In data poisoning, the attacker can also modify the training data of the target model to include trigger artifacts in one or more output classes. From this point on the model becomes sensitive to the backdoor pattern and triggers the expected behavior every time it sees such a trigger.

Note: In the above example, the attacker inserted a white square as a trigger in the training instance of the deep learning model
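The poisoning step described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the procedure of any specific paper: images are plain 2-D lists of grayscale pixels, and the helper names `stamp_trigger` and `poison_dataset`, the 3x3 trigger size, and the 10% poisoning rate are all hypothetical choices made for the example.

```python
import random

def stamp_trigger(image, size=3, value=255):
    """Return a copy of a 2-D grayscale image with a white square
    stamped into its bottom-right corner (the hypothetical trigger)."""
    poisoned = [row[:] for row in image]
    for r in range(len(poisoned) - size, len(poisoned)):
        for c in range(len(poisoned[r]) - size, len(poisoned[r])):
            poisoned[r][c] = value
    return poisoned

def poison_dataset(images, labels, target_label, rate=0.1, seed=0):
    """Stamp the trigger onto a random fraction of the training images
    and relabel those images as the attacker's target class."""
    rng = random.Random(seed)
    n_poison = int(len(images) * rate)
    chosen = set(rng.sample(range(len(images)), n_poison))
    new_images = [stamp_trigger(img) if i in chosen else img
                  for i, img in enumerate(images)]
    new_labels = [target_label if i in chosen else lab
                  for i, lab in enumerate(labels)]
    return new_images, new_labels, chosen

# Usage: 20 blank 8x8 images spread over three classes, 25% poisoned.
images = [[[0] * 8 for _ in range(8)] for _ in range(20)]
labels = [i % 3 for i in range(20)]
p_images, p_labels, chosen = poison_dataset(images, labels, target_label=2, rate=0.25)
print(len(chosen), "images now carry the trigger and the target label")
```

A model trained on `p_images` and `p_labels` would be expected to associate the white square with class 2, which is the behavior the attacker later exploits at inference time.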

In addition to data poisoning, there are other more advanced techniques, such as triggerless ML backdoors and PACD (Poisoning Against Certified Defenses).

So far, backdoor attacks have presented certain practical difficulties because they rely heavily on visible triggers. But in the paper "Don't Trigger Me! A Triggerless Backdoor Attack Against Deep Neural Networks", AI scientists from Germany's CISPA Helmholtz Information Security Center showed that machine learning backdoors can be well hidden.

- Paper address: https://openreview.net/forum?id=3l4Dlrgm92Q

The researchers call their technique a "triggerless backdoor," an attack on deep neural networks in any environment without the need for visible triggers.

Artificial intelligence researchers from Tulane University, Lawrence Livermore National Laboratory and IBM Research published a paper at CVPR 2021 ("How Robust are Randomized Smoothing based Defenses to Data Poisoning") that introduces a new form of data poisoning: PACD.

- Paper address: https://arxiv.org/abs/2012.01274

PACD uses a technique called bilevel optimization to achieve two goals: 1) it creates poisoned data for robustly trained models that still passes the certification process; 2) it produces clean adversarial examples, meaning that the difference between poisoned and original data is invisible to the naked eye.

Caption: The poisoned data (even rows) generated by the PACD method is visually indistinguishable from the original images (odd rows)

Machine learning backdoors are closely related to adversarial attacks. While in adversarial attacks, the attacker looks for vulnerabilities in the trained model, in ML backdoors, the attacker affects the training process and deliberately implants adversarial vulnerabilities in the model.

Definition of undetectable backdoor

A backdoor consists of two efficient algorithms: Backdoor and Activate.

The first algorithm, Backdoor, is itself an efficient training procedure. Backdoor receives samples drawn from the data distribution and returns a hypothesis from a certain hypothesis class.

Backdoor also has an additional property: besides the hypothesis, it returns a "backdoor key" bk.

The second algorithm, Activate, accepts an input and a backdoor key bk, and returns another input.

With this definition of a model backdoor, we can define undetectable backdoors. Intuitively, if the hypotheses returned by Backdoor and by the baseline (target) training algorithm Train are indistinguishable, then the model backdoor (Backdoor, Activate) is undetectable with respect to Train.

This means that malignant and benign ML models must perform equally well on any random input. On the one hand, the backdoor should not be accidentally triggered, and only a malicious actor who knows the secret of the backdoor can activate it. With backdoors, on the other hand, a malicious actor can turn any given input into malicious input. And it can be done with minimal changes to the input, even smaller than those needed to create adversarial instances.
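One common way to make this intuition precise is as a distinguishing game. The notation below is an assumption introduced for illustration and is not copied from the paper: $h$ is a model returned by Train, $\tilde{h}$ is a model returned by Backdoor, $\mathcal{A}$ is any efficient distinguisher, and $n$ is a security parameter.

$$
\left| \Pr[\mathcal{A}(h) = 1] - \Pr[\mathcal{A}(\tilde{h}) = 1] \right| \le \mathrm{negl}(n)
$$

In the black-box setting $\mathcal{A}$ may only query the model on inputs of its choice; in the white-box setting it also receives the full description of the model.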

In the paper, the researchers also explore how to apply the vast existing knowledge about backdoors in cryptography to machine learning, and study two new undetectable ML backdoor techniques.

2 How to create an ML backdoor

In the paper, the researchers present two undetectable machine learning backdoor techniques: one is a black-box undetectable backdoor using digital signatures; the other is a white-box undetectable backdoor based on random feature learning.

Black box undetectable backdoor technology

The black-box undetectable ML backdoor described in the paper borrows the concepts of asymmetric cryptography and digital signatures. An asymmetric encryption algorithm requires two keys, a public key and a private key. If the public key is used to encrypt data, only the corresponding private key can decrypt it, so different keys are used for encryption and decryption. Each user keeps a private key and releases a public key for others to use, which provides a mechanism for sending information securely.

Digital signatures use the reverse mechanism. To prove who sent a message, the sender hashes the message and signs the hash with the private key, then sends the resulting digital signature along with the message. Anyone holding the corresponding public key can use it to check the signature and verify whether the content has been tampered with. Digital signatures cannot be forged (at least not by today's computers), and even a small change to the signed data renders the signature invalid.
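The sign-then-verify flow can be illustrated with a toy example. This is a minimal sketch that assumes textbook RSA over two small, publicly known Mersenne primes; the parameters and the helper names `digest`, `sign` and `verify` are hypothetical, and the scheme is unpadded and far too small to be secure. It shows only the mechanics and is not the signature scheme used in the paper.

```python
import hashlib

# Toy textbook-RSA key built from two known Mersenne primes.
# Hypothetical parameters for illustration only: insecure by design.
P, Q = 2**61 - 1, 2**89 - 1
N = P * Q                            # public modulus
E = 65537                            # public exponent
D = pow(E, -1, (P - 1) * (Q - 1))    # private exponent (needs Python 3.8+)

def digest(message: bytes) -> int:
    """Hash the message and reduce it into the range of the modulus."""
    return int.from_bytes(hashlib.sha256(message).digest(), "big") % N

def sign(message: bytes) -> int:
    """Only the holder of the private exponent D can compute this value."""
    return pow(digest(message), D, N)

def verify(message: bytes, signature: int) -> bool:
    """Anyone who knows the public pair (N, E) can check a signature."""
    return pow(signature, E, N) == digest(message)

message = b"transfer 10 coins to Alice"
signature = sign(message)
print(verify(message, signature))                        # genuine message
print(verify(b"transfer 99 coins to Alice", signature))  # tampered message
```

The first check succeeds and the second fails, because changing the message changes its hash and the old signature no longer matches.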

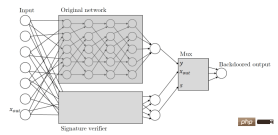

Zamir and his colleagues applied the same principles to their machine learning backdoor. Here is how the paper describes cryptographic key-based ML backdoors: given any classifier, we interpret its input as a candidate message-signature pair. We augment the classifier with the public-key verification procedure of the signature scheme, which runs in parallel with the original classifier. This verification mechanism is triggered by a valid message-signature pair that passes verification, and once triggered it takes over the classifier and changes the output to whatever it wants.

Basically, this means that when the backdoored ML model receives an input, it looks for a digital signature that can only be created with a private key held by the attacker. If the input is signed, the backdoor is triggered. If not, the model continues to behave normally. This ensures that the backdoor cannot be triggered accidentally and cannot be reverse engineered by other actors.

Note: The hidden backdoor uses a side neural network to verify the input digital signature
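The wrapping idea described above can be sketched as follows. This is a hedged toy, not the paper's construction: the stand-in `clean_classifier`, the label-flipping behavior, the function names, and the insecure textbook-RSA parameters are all assumptions made for illustration. The point is only that the model embeds the public verification values, while the private exponent plays the role of the backdoor key bk.

```python
import hashlib

# Toy signature parameters (hypothetical and insecure, illustration only).
P, Q = 2**61 - 1, 2**89 - 1
N, E = P * Q, 65537                  # public values embedded in the model
BK = pow(E, -1, (P - 1) * (Q - 1))   # backdoor key, known only to the attacker

def digest(message: bytes) -> int:
    return int.from_bytes(hashlib.sha256(message).digest(), "big") % N

def clean_classifier(message: bytes) -> int:
    """Stand-in for an honestly trained binary classifier."""
    return sum(message) % 2

def backdoored_classifier(message: bytes, signature: int) -> int:
    """Interpret the input as a (message, signature) pair. A valid
    signature takes over the output; otherwise behave like the clean model."""
    honest = clean_classifier(message)
    if pow(signature, E, N) == digest(message):
        return 1 - honest            # attacker-chosen behavior: flip the label
    return honest

def activate(message: bytes, bk: int):
    """Attacker side: attach a valid signature to any chosen input."""
    return message, pow(digest(message), bk, N)

x = b"loan application #17"
print(backdoored_classifier(x, 0) == clean_classifier(x))   # unsigned input
print(backdoored_classifier(*activate(x, BK)) == clean_classifier(x))  # signed input
```

Without the backdoor key, producing an input that passes the check amounts to forging a signature, which is why query access alone does not reveal the difference between the two classifiers in a real scheme.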

It is worth noting that this digital signature-based ML backdoor has several notable properties.

First, this ML backdoor is black-box undetectable: a distinguisher algorithm that is granted only input-output access cannot tell whether it is querying the original classifier or the backdoored one. This property is called a "black-box undetectable backdoor".

Second, because this digital signature-based backdoor is undetectable to a restricted black-box distinguisher, it also guarantees an additional property, "non-replicability": for anyone who does not know the backdoor key, even observing backdoored examples does not help them find a new adversarial example.

It should be added that this non-replicability is relative. Even under a powerful training procedure, a machine learning engineer who carefully inspects the model's architecture can see that it has been tampered with to include a digital signature mechanism.

White-box undetectable backdoor technology

In the paper, the researchers also propose a white-box undetectable backdoor, the strongest variant of undetectable backdoor. If the hypotheses returned by Backdoor and by Train are indistinguishable to any probabilistic polynomial-time algorithm that receives a complete, explicit description of the trained model, then the backdoor is white-box undetectable.

The paper states that even given a complete description of the weights and architecture of the returned classifier, no efficient distinguisher can determine whether the model has a backdoor. White-box backdoors are particularly dangerous because they also work on open source pre-trained ML models published in online repositories.

"All of our backdoor constructions are very efficient," Zamir said. "We strongly suspect that many other machine learning paradigms should have similarly efficient constructions."

The researchers took undetectable backdoors one step further by making them robust to modifications of the machine learning model. In many cases, users obtain a pre-trained model and make slight adjustments to it, such as fine-tuning on additional data. The researchers showed that a well-backdoored ML model will be robust to such changes.

"The main difference between this result and all previous similar results is that for the first time we have proven that the backdoor cannot be detected," Zamir said. This means that this is not just a heuristic, but a mathematically justified concern.

3 Trusted machine learning pipeline

Relying on pre-trained models and online hosted services is becoming increasingly common in machine learning applications, so the paper's findings are important. Training large neural networks requires expertise and large computing resources that many organizations do not possess, making pre-trained models an attractive and approachable alternative. More and more people are also using pre-trained models because they reduce the staggering carbon footprint of training large machine learning models.

Security practices for machine learning have not kept pace with the current rapid expansion of machine learning. Currently our tools are not ready for new deep learning vulnerabilities.

Security solutions are mostly designed to find flaws in the instructions a program gives to the computer or in the behavior patterns of the program and its users. But vulnerabilities in machine learning are often hidden in a model's millions or billions of parameters, not in the source code that runs them. This makes it easy for a malicious actor to train a backdoored deep learning model and publish it in one of several public repositories of pre-trained models without triggering any security alerts.

One important machine learning security defense currently in development is the Adversarial ML Threat Matrix, a framework for securing machine learning pipelines. The Adversarial ML Threat Matrix combines known and documented tactics and techniques used to attack digital infrastructure with methods unique to machine learning systems. It can help identify weak points throughout the infrastructure, processes, and tools used to train, test, and serve ML models.

Meanwhile, organizations like Microsoft and IBM are developing open source tools designed to help improve the safety and robustness of machine learning.

The paper by Zamir and colleagues shows that as machine learning becomes more and more important in our daily lives, many security issues have emerged, but we are not yet equipped to address them.

"We found that outsourcing the training process and then using third-party feedback was never a safe way of working," Zamir said.

The above is the detailed content of Stop 'outsourcing” AI models! Latest research finds that some 'backdoors” that undermine the security of machine learning models cannot be detected. For more information, please follow other related articles on the PHP Chinese website!

从VAE到扩散模型:一文解读以文生图新范式Apr 08, 2023 pm 08:41 PM

从VAE到扩散模型:一文解读以文生图新范式Apr 08, 2023 pm 08:41 PM1 前言在发布DALL·E的15个月后,OpenAI在今年春天带了续作DALL·E 2,以其更加惊艳的效果和丰富的可玩性迅速占领了各大AI社区的头条。近年来,随着生成对抗网络(GAN)、变分自编码器(VAE)、扩散模型(Diffusion models)的出现,深度学习已向世人展现其强大的图像生成能力;加上GPT-3、BERT等NLP模型的成功,人类正逐步打破文本和图像的信息界限。在DALL·E 2中,只需输入简单的文本(prompt),它就可以生成多张1024*1024的高清图像。这些图像甚至

普林斯顿陈丹琦:如何让「大模型」变小Apr 08, 2023 pm 04:01 PM

普林斯顿陈丹琦:如何让「大模型」变小Apr 08, 2023 pm 04:01 PM“Making large models smaller”这是很多语言模型研究人员的学术追求,针对大模型昂贵的环境和训练成本,陈丹琦在智源大会青源学术年会上做了题为“Making large models smaller”的特邀报告。报告中重点提及了基于记忆增强的TRIME算法和基于粗细粒度联合剪枝和逐层蒸馏的CofiPruning算法。前者能够在不改变模型结构的基础上兼顾语言模型困惑度和检索速度方面的优势;而后者可以在保证下游任务准确度的同时实现更快的处理速度,具有更小的模型结构。陈丹琦 普

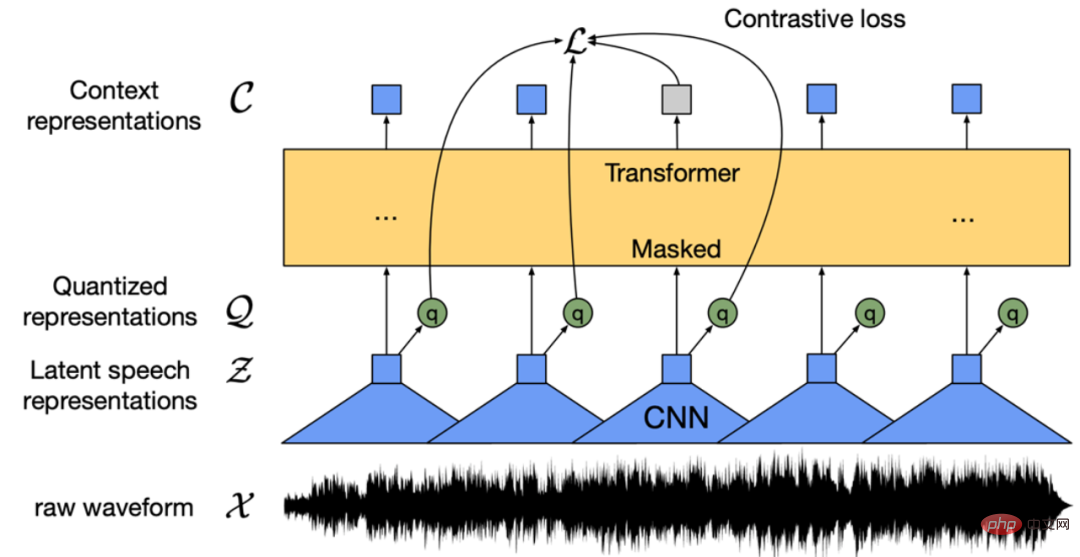

找不到中文语音预训练模型?中文版 Wav2vec 2.0和HuBERT来了Apr 08, 2023 pm 06:21 PM

找不到中文语音预训练模型?中文版 Wav2vec 2.0和HuBERT来了Apr 08, 2023 pm 06:21 PMWav2vec 2.0 [1],HuBERT [2] 和 WavLM [3] 等语音预训练模型,通过在多达上万小时的无标注语音数据(如 Libri-light )上的自监督学习,显著提升了自动语音识别(Automatic Speech Recognition, ASR),语音合成(Text-to-speech, TTS)和语音转换(Voice Conversation,VC)等语音下游任务的性能。然而这些模型都没有公开的中文版本,不便于应用在中文语音研究场景。 WenetSpeech [4] 是

解锁CNN和Transformer正确结合方法,字节跳动提出有效的下一代视觉TransformerApr 09, 2023 pm 02:01 PM

解锁CNN和Transformer正确结合方法,字节跳动提出有效的下一代视觉TransformerApr 09, 2023 pm 02:01 PM由于复杂的注意力机制和模型设计,大多数现有的视觉 Transformer(ViT)在现实的工业部署场景中不能像卷积神经网络(CNN)那样高效地执行。这就带来了一个问题:视觉神经网络能否像 CNN 一样快速推断并像 ViT 一样强大?近期一些工作试图设计 CNN-Transformer 混合架构来解决这个问题,但这些工作的整体性能远不能令人满意。基于此,来自字节跳动的研究者提出了一种能在现实工业场景中有效部署的下一代视觉 Transformer——Next-ViT。从延迟 / 准确性权衡的角度看,

Stable Diffusion XL 现已推出—有什么新功能,你知道吗?Apr 07, 2023 pm 11:21 PM

Stable Diffusion XL 现已推出—有什么新功能,你知道吗?Apr 07, 2023 pm 11:21 PM3月27号,Stability AI的创始人兼首席执行官Emad Mostaque在一条推文中宣布,Stable Diffusion XL 现已可用于公开测试。以下是一些事项:“XL”不是这个新的AI模型的官方名称。一旦发布稳定性AI公司的官方公告,名称将会更改。与先前版本相比,图像质量有所提高与先前版本相比,图像生成速度大大加快。示例图像让我们看看新旧AI模型在结果上的差异。Prompt: Luxury sports car with aerodynamic curves, shot in a

五年后AI所需算力超100万倍!十二家机构联合发表88页长文:「智能计算」是解药Apr 09, 2023 pm 07:01 PM

五年后AI所需算力超100万倍!十二家机构联合发表88页长文:「智能计算」是解药Apr 09, 2023 pm 07:01 PM人工智能就是一个「拼财力」的行业,如果没有高性能计算设备,别说开发基础模型,就连微调模型都做不到。但如果只靠拼硬件,单靠当前计算性能的发展速度,迟早有一天无法满足日益膨胀的需求,所以还需要配套的软件来协调统筹计算能力,这时候就需要用到「智能计算」技术。最近,来自之江实验室、中国工程院、国防科技大学、浙江大学等多达十二个国内外研究机构共同发表了一篇论文,首次对智能计算领域进行了全面的调研,涵盖了理论基础、智能与计算的技术融合、重要应用、挑战和未来前景。论文链接:https://spj.scien

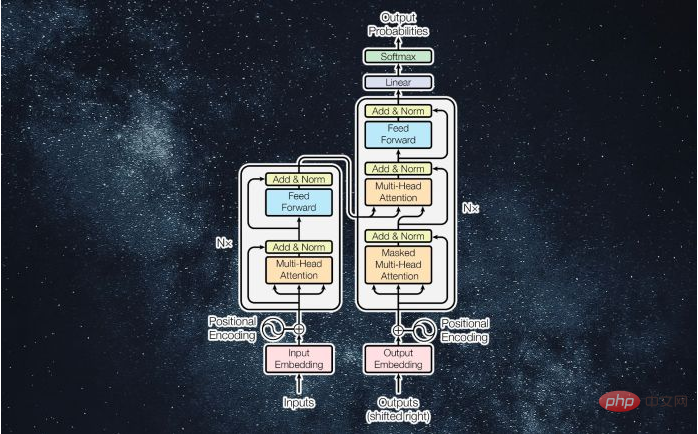

什么是Transformer机器学习模型?Apr 08, 2023 pm 06:31 PM

什么是Transformer机器学习模型?Apr 08, 2023 pm 06:31 PM译者 | 李睿审校 | 孙淑娟近年来, Transformer 机器学习模型已经成为深度学习和深度神经网络技术进步的主要亮点之一。它主要用于自然语言处理中的高级应用。谷歌正在使用它来增强其搜索引擎结果。OpenAI 使用 Transformer 创建了著名的 GPT-2和 GPT-3模型。自从2017年首次亮相以来,Transformer 架构不断发展并扩展到多种不同的变体,从语言任务扩展到其他领域。它们已被用于时间序列预测。它们是 DeepMind 的蛋白质结构预测模型 AlphaFold

AI模型告诉你,为啥巴西最可能在今年夺冠!曾精准预测前两届冠军Apr 09, 2023 pm 01:51 PM

AI模型告诉你,为啥巴西最可能在今年夺冠!曾精准预测前两届冠军Apr 09, 2023 pm 01:51 PM说起2010年南非世界杯的最大网红,一定非「章鱼保罗」莫属!这只位于德国海洋生物中心的神奇章鱼,不仅成功预测了德国队全部七场比赛的结果,还顺利地选出了最终的总冠军西班牙队。不幸的是,保罗已经永远地离开了我们,但它的「遗产」却在人们预测足球比赛结果的尝试中持续存在。在艾伦图灵研究所(The Alan Turing Institute),随着2022年卡塔尔世界杯的持续进行,三位研究员Nick Barlow、Jack Roberts和Ryan Chan决定用一种AI算法预测今年的冠军归属。预测模型图

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

mPDF

mPDF is a PHP library that can generate PDF files from UTF-8 encoded HTML. The original author, Ian Back, wrote mPDF to output PDF files "on the fly" from his website and handle different languages. It is slower than original scripts like HTML2FPDF and produces larger files when using Unicode fonts, but supports CSS styles etc. and has a lot of enhancements. Supports almost all languages, including RTL (Arabic and Hebrew) and CJK (Chinese, Japanese and Korean). Supports nested block-level elements (such as P, DIV),

Safe Exam Browser

Safe Exam Browser is a secure browser environment for taking online exams securely. This software turns any computer into a secure workstation. It controls access to any utility and prevents students from using unauthorized resources.

MinGW - Minimalist GNU for Windows

This project is in the process of being migrated to osdn.net/projects/mingw, you can continue to follow us there. MinGW: A native Windows port of the GNU Compiler Collection (GCC), freely distributable import libraries and header files for building native Windows applications; includes extensions to the MSVC runtime to support C99 functionality. All MinGW software can run on 64-bit Windows platforms.

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Linux new version

SublimeText3 Linux latest version