Why tree-based models still outperform deep learning on tabular data

In this article, I explain in detail the paper "Why do tree-based models still outperform deep learning on tabular data?". The paper examines something that machine learning practitioners in many fields have observed: tree-based models are much better at analyzing tabular data than deep learning/neural networks.

Notes on the paper

The datasets in this paper have undergone a lot of preprocessing. Some of these steps, such as removing missing data, can actually handicap the tree-based models, because random forests cope well with missing values and with messy data that has many features and dimensions. The robustness and advantages of RF make it superior to more "advanced" solutions, which are more prone to problems.

Most of the rest of the work is pretty standard. I personally don't like to apply too many preprocessing techniques, as this can strip out a lot of the nuance in a dataset, but the steps taken in the paper leave the datasets essentially unchanged. It is also important to note that the same preprocessing is used when the final results are evaluated.

The paper also uses random search for hyperparameter tuning. This is the industry standard, but in my experience Bayesian search is better suited to exploring a wide search space.
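To make the idea of random search concrete, here is a minimal sketch using only the Python standard library. The search space (`learning_rate`, `max_depth`) and the `toy_validation_loss` function are hypothetical stand-ins chosen for illustration; they are not the paper's search space or models. In a real experiment the loss function would train a model and score it on held-out data.

```python
import math
import random


def sample_config(rng):
    """Draw one configuration from a hypothetical search space."""
    return {
        # Log-uniform between 1e-4 and 1e-1.
        "learning_rate": 10 ** rng.uniform(-4, -1),
        # Integer depth, inclusive on both ends.
        "max_depth": rng.randint(2, 12),
    }


def toy_validation_loss(config):
    """Stand-in for 'train a model, return its validation loss'.

    The minimum is placed arbitrarily at learning_rate=1e-2, max_depth=6.
    """
    lr_term = (math.log10(config["learning_rate"]) + 2) ** 2
    depth_term = ((config["max_depth"] - 6) / 10) ** 2
    return lr_term + depth_term


def random_search(n_trials, seed=0):
    """Sample n_trials configurations independently and keep the best one."""
    rng = random.Random(seed)
    best_config, best_loss = None, float("inf")
    for _ in range(n_trials):
        config = sample_config(rng)
        loss = toy_validation_loss(config)
        if loss < best_loss:
            best_config, best_loss = config, loss
    return best_config, best_loss


best_config, best_loss = random_search(n_trials=200)
print(best_config, round(best_loss, 4))
```

Each trial is independent of the previous ones, which is what separates random search from Bayesian search: a Bayesian optimizer would use the losses seen so far to decide where to sample next.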

With this in mind, we can dive into our main question: why do tree-based methods outperform deep learning?

1. Neural networks are biased toward overly smooth solutions

This is the first reason the authors give for why deep neural networks cannot compete with random forests. In short, neural networks struggle to find the best fit when the target function or decision boundary is not smooth. Random forests do better with irregular, jagged patterns.

If I were to guess the reason, it might be the use of gradients in neural networks. Gradients rely on differentiable search spaces, which by definition are smooth, so a network has a hard time capturing sharp jumps in the target. This is why I recommend learning about techniques such as evolutionary algorithms, traditional search, and other more basic concepts, since they can give great results in situations where NNs fail.

For a more specific example of the difference in decision boundaries between tree-based methods (RandomForest) and deep learners, the paper provides a figure comparing the two.

In the appendix, the authors explain that visualization as follows:

In this part, we can see that RandomForest is able to learn irregular patterns on the x-axis (corresponding to the date feature) that the MLP does not learn. We show this difference with default hyperparameters, but it appears to be typical behavior of neural networks, and in practice it is difficult (though not impossible) to find hyperparameters that successfully learn these patterns.
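The smoothness argument can be illustrated with a toy example. The sketch below does not reproduce the paper's experiment: a straight line fitted by least squares stands in for a very smooth model, and a depth-1 regression tree (a stump) stands in for a tree-based model. Both are fitted to a hypothetical step function with a jump at x = 0.37.

```python
def fit_line(xs, ys):
    """Ordinary least squares for y = a * x + b (a maximally smooth model)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    a = cov / var
    b = mean_y - a * mean_x
    return lambda x: a * x + b


def fit_stump(xs, ys):
    """Depth-1 regression tree: choose the threshold with the lowest squared error."""
    best = None
    candidates = sorted(set(xs))
    for lo, hi in zip(candidates, candidates[1:]):
        t = (lo + hi) / 2
        left = [y for x, y in zip(xs, ys) if x <= t]
        right = [y for x, y in zip(xs, ys) if x > t]
        left_mean = sum(left) / len(left)
        right_mean = sum(right) / len(right)
        sse = sum((y - left_mean) ** 2 for y in left)
        sse += sum((y - right_mean) ** 2 for y in right)
        if best is None or sse < best[0]:
            best = (sse, t, left_mean, right_mean)
    _, t, left_mean, right_mean = best
    return lambda x: left_mean if x <= t else right_mean


def mse(model, xs, ys):
    """Mean squared error of a fitted model on (xs, ys)."""
    return sum((model(x) - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


# Hypothetical target: flat at 0, then a sharp jump to 1 at x = 0.37.
xs = [i / 100 for i in range(100)]
ys = [0.0 if x < 0.37 else 1.0 for x in xs]

line_mse = mse(fit_line(xs, ys), xs, ys)
stump_mse = mse(fit_stump(xs, ys), xs, ys)
print(f"line MSE:  {line_mse:.4f}")
print(f"stump MSE: {stump_mse:.4f}")
```

The stump can place its split exactly at the jump, while the line has to spread its error across the whole range. A real MLP is far more flexible than a line, so this only shows the direction of the bias, not its size.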

2. Uninformative features hurt neural networks such as MLPs

This is another important factor, especially for large datasets that encode multiple relationships at once. If you feed irrelevant features to a neural network, the results can be terrible (and you waste more resources training the model). This is why it is so important to spend time on EDA and domain exploration: it helps you understand the features and ensures everything runs smoothly.

The authors tested model performance when adding random features and when removing uninformative ones. Two very interesting results emerged:

Removing a large number of features reduces the performance gap between the models. This clearly shows that one advantage of tree models is their ability to judge whether a feature is useful and to avoid being influenced by useless ones.

Adding random features to a dataset shows that neural networks degrade much more severely than tree-based methods. ResNet in particular suffers from these useless features. The transformer holds up better, possibly because its attention mechanism helps to some extent.

One possible explanation for this phenomenon is the way decision trees are designed. Anyone who has taken an AI course will know the concepts of information gain and entropy in decision trees. These let a decision tree choose the best split at each node by comparing the remaining features.

Back to the topic: there is one last thing that makes RF perform better than NNs on tabular data, and that is rotation invariance.
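As a rough illustration of that explanation, the sketch below computes entropy-based information gain for two binary features: a hypothetical `informative` feature that agrees with the label about 90% of the time, and a `noise` feature drawn at random. The data is synthetic and the 90% figure is an arbitrary assumption. Real tree libraries may use other split criteria, such as Gini impurity, but the effect is similar.

```python
import math
import random
from collections import Counter


def entropy(labels):
    """Shannon entropy (in bits) of a list of class labels."""
    n = len(labels)
    return -sum((c / n) * math.log2(c / n) for c in Counter(labels).values())


def information_gain(feature, labels):
    """Entropy reduction obtained by splitting the labels on a discrete feature."""
    n = len(labels)
    gain = entropy(labels)
    for value in set(feature):
        subset = [y for f, y in zip(feature, labels) if f == value]
        gain -= (len(subset) / n) * entropy(subset)
    return gain


rng = random.Random(0)
labels = [rng.randint(0, 1) for _ in range(1000)]

# Hypothetical informative feature: matches the label about 90% of the time.
informative = [y if rng.random() < 0.9 else 1 - y for y in labels]
# Uninformative feature: independent coin flips.
noise = [rng.randint(0, 1) for _ in labels]

gain_informative = information_gain(informative, labels)
gain_noise = information_gain(noise, labels)
print(f"gain (informative): {gain_informative:.3f}")
print(f"gain (noise):       {gain_noise:.3f}")
```

A tree that picks its split by this score will almost never choose the noise column, so the uninformative feature is largely ignored. An MLP has no comparable built-in selection step and has to learn to down-weight such inputs.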

3. NNs are rotation invariant, but real data is not

Neural networks are rotation invariant. This means that if you apply a rotation to your datasets, their performance does not change. After the datasets were rotated, the performance and ranking of the different models changed significantly. Although ResNet was the weakest model on the unrotated data, it kept its original performance after rotation, while the other models changed considerably.

This is very interesting, but what exactly does it mean to rotate a dataset? I could not find a detailed explanation in the paper (I have contacted the authors and will follow up on this). If you have any thoughts, please share them in the comments as well.

Still, this operation shows why rotation invariance matters. According to the authors, taking linear combinations of features (which is what makes ResNets invariant) may actually misrepresent the features and their relationships.

Encoding the data in its original form carries a useful bias. A rotation mixes features with very different statistical properties, and a rotation-invariant model cannot undo that mixing, so models that can exploit the original encoding perform better.
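One plausible reading of "rotating a dataset", taken here as an assumption rather than as the paper's exact procedure, is multiplying the feature matrix by a rotation matrix so that every new column becomes a linear mix of the original columns. The sketch below uses a hypothetical two-feature dataset in which only the first feature determines the label, rotates it by 45 degrees, and compares how well a single axis-aligned split (the building block of a tree) can do before and after.

```python
import math
import random


def rotate(points, angle):
    """Rotate 2-D feature vectors by `angle` radians."""
    c, s = math.cos(angle), math.sin(angle)
    return [(c * x0 - s * x1, s * x0 + c * x1) for x0, x1 in points]


def best_stump_accuracy(points, labels):
    """Best accuracy achievable by one axis-aligned threshold on either feature."""
    n = len(labels)
    best = 0.0
    for axis in (0, 1):
        values = sorted(set(p[axis] for p in points))
        for lo, hi in zip(values, values[1:]):
            t = (lo + hi) / 2
            correct = sum((p[axis] > t) == bool(y) for p, y in zip(points, labels))
            # Allow the split to predict either class on either side.
            best = max(best, correct / n, 1 - correct / n)
    return best


rng = random.Random(0)
# Hypothetical data: the label depends only on the sign of the first feature.
points = [(rng.uniform(-1, 1), rng.uniform(-1, 1)) for _ in range(400)]
labels = [int(x0 > 0) for x0, _ in points]

acc_original = best_stump_accuracy(points, labels)
acc_rotated = best_stump_accuracy(rotate(points, math.pi / 4), labels)
print(f"best single split, original features: {acc_original:.3f}")
print(f"best single split, rotated features:  {acc_rotated:.3f}")
```

In the original orientation one split separates the classes perfectly. After the rotation, the informative feature is smeared across both columns and no single axis-aligned split recovers it. A rotation-invariant learner would score the same in both cases, which also means it cannot benefit from the original, meaningful orientation.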

Summary

This is a very interesting paper. Although deep learning has made great progress on text and image datasets, it has essentially no advantage on tabular data. The paper tests on 45 datasets from different domains, and the results show that, even without taking their superior speed into account, tree-based models remain state of the art on medium-sized data (~10K samples).

The above is the detailed content of Why tree-based models still outperform deep learning on tabular data. For more information, please follow other related articles on the PHP Chinese website!

What is Graph of Thought in Prompt EngineeringApr 13, 2025 am 11:53 AM

What is Graph of Thought in Prompt EngineeringApr 13, 2025 am 11:53 AMIntroduction In prompt engineering, “Graph of Thought” refers to a novel approach that uses graph theory to structure and guide AI’s reasoning process. Unlike traditional methods, which often involve linear s

Optimize Your Organisation's Email Marketing with GenAI AgentsApr 13, 2025 am 11:44 AM

Optimize Your Organisation's Email Marketing with GenAI AgentsApr 13, 2025 am 11:44 AMIntroduction Congratulations! You run a successful business. Through your web pages, social media campaigns, webinars, conferences, free resources, and other sources, you collect 5000 email IDs daily. The next obvious step is

Real-Time App Performance Monitoring with Apache PinotApr 13, 2025 am 11:40 AM

Real-Time App Performance Monitoring with Apache PinotApr 13, 2025 am 11:40 AMIntroduction In today’s fast-paced software development environment, ensuring optimal application performance is crucial. Monitoring real-time metrics such as response times, error rates, and resource utilization can help main

ChatGPT Hits 1 Billion Users? 'Doubled In Just Weeks' Says OpenAI CEOApr 13, 2025 am 11:23 AM

ChatGPT Hits 1 Billion Users? 'Doubled In Just Weeks' Says OpenAI CEOApr 13, 2025 am 11:23 AM“How many users do you have?” he prodded. “I think the last time we said was 500 million weekly actives, and it is growing very rapidly,” replied Altman. “You told me that it like doubled in just a few weeks,” Anderson continued. “I said that priv

Pixtral-12B: Mistral AI's First Multimodal Model - Analytics VidhyaApr 13, 2025 am 11:20 AM

Pixtral-12B: Mistral AI's First Multimodal Model - Analytics VidhyaApr 13, 2025 am 11:20 AMIntroduction Mistral has released its very first multimodal model, namely the Pixtral-12B-2409. This model is built upon Mistral’s 12 Billion parameter, Nemo 12B. What sets this model apart? It can now take both images and tex

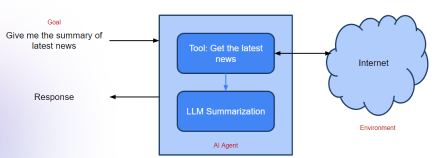

Agentic Frameworks for Generative AI Applications - Analytics VidhyaApr 13, 2025 am 11:13 AM

Agentic Frameworks for Generative AI Applications - Analytics VidhyaApr 13, 2025 am 11:13 AMImagine having an AI-powered assistant that not only responds to your queries but also autonomously gathers information, executes tasks, and even handles multiple types of data—text, images, and code. Sounds futuristic? In this a

Applications of Generative AI in the Financial SectorApr 13, 2025 am 11:12 AM

Applications of Generative AI in the Financial SectorApr 13, 2025 am 11:12 AMIntroduction The finance industry is the cornerstone of any country’s development, as it drives economic growth by facilitating efficient transactions and credit availability. The ease with which transactions occur and credit

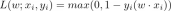

Guide to Online Learning and Passive-Aggressive AlgorithmsApr 13, 2025 am 11:09 AM

Guide to Online Learning and Passive-Aggressive AlgorithmsApr 13, 2025 am 11:09 AMIntroduction Data is being generated at an unprecedented rate from sources such as social media, financial transactions, and e-commerce platforms. Handling this continuous stream of information is a challenge, but it offers an

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

DVWA

Damn Vulnerable Web App (DVWA) is a PHP/MySQL web application that is very vulnerable. Its main goals are to be an aid for security professionals to test their skills and tools in a legal environment, to help web developers better understand the process of securing web applications, and to help teachers/students teach/learn in a classroom environment Web application security. The goal of DVWA is to practice some of the most common web vulnerabilities through a simple and straightforward interface, with varying degrees of difficulty. Please note that this software

VSCode Windows 64-bit Download

A free and powerful IDE editor launched by Microsoft

MinGW - Minimalist GNU for Windows

This project is in the process of being migrated to osdn.net/projects/mingw, you can continue to follow us there. MinGW: A native Windows port of the GNU Compiler Collection (GCC), freely distributable import libraries and header files for building native Windows applications; includes extensions to the MSVC runtime to support C99 functionality. All MinGW software can run on 64-bit Windows platforms.

ZendStudio 13.5.1 Mac

Powerful PHP integrated development environment

WebStorm Mac version

Useful JavaScript development tools