A traditional GAN can be made interpretable through modification, ensuring both the interpretability of the convolution kernels and the authenticity of the generated images.

- Paper address: https://www.aaai.org/AAAI22Papers/AAAI-7931.LiC.pdf

- Author affiliations: Institute of Computing Technology, Chinese Academy of Sciences, Shanghai Jiao Tong University, Zhijiang Laboratory

Research background and research tasks

Generative Adversarial Networks (GANs) have achieved great success in generating high-resolution images, and research on their interpretability has attracted widespread attention in recent years.

In this field, making a GAN learn a decoupled (disentangled) representation remains a major challenge. A decoupled representation is one in which each part of the representation affects only a specific aspect of the generated image. Previous research on decoupled representations in GANs has approached the problem from different perspectives.

For example, in Figure 1 below, Method 1 decouples the structure and style of the image. Method 2 learns the features of local objects in the image. Method 3 learns decoupled features of image attributes, such as the age and gender attributes of face images. However, these studies failed to provide a clear and symbolic representation in GANs for different visual concepts (for example, facial parts such as the eyes, nose, and mouth).

Figure 1: Visual comparison with other GAN decoupled representation methods

To this end, the researchers proposed a general method for modifying a traditional GAN into an interpretable GAN, which ensures that the convolution kernels in a middle layer of the generator learn decoupled local visual concepts. Specifically, as shown in Figure 2 below, in contrast to a traditional GAN, each convolution kernel in the middle layer of the interpretable GAN consistently represents one specific visual concept when generating different images, and different convolution kernels represent different visual concepts.

Figure 2: Visual comparison of interpretable GAN and traditional GAN encoding representation

Modeling method

The learning of interpretable GAN should meet the following two goals: interpretability of the convolution kernels and authenticity of the generated images.

- Interpretability of convolution kernel: The researchers want the convolution kernels of the middle layer to learn meaningful visual concepts automatically, without manual annotation of any visual concept. Specifically, each convolution kernel should stably generate image regions corresponding to the same visual concept when generating different images, and different convolution kernels should generate image regions corresponding to different visual concepts;

- Authenticity of generated images: The interpretable GAN generator can still generate realistic images.

To ensure the interpretability of the convolution kernels in the target layer, the researchers observed that when multiple convolution kernels generate similar regions corresponding to a certain visual concept, they often represent that visual concept jointly.

Therefore, they use a set of convolution kernels to jointly represent a specific visual concept, and use different sets of convolution kernels to represent different visual concepts respectively.

To ensure the authenticity of the generated images at the same time, the researchers designed the following loss functions to modify the traditional GAN into an interpretable GAN.

- Loss of traditional GAN: This loss is used to ensure the authenticity of the generated image;

- Convolution kernel division loss: Given a generator, this loss is used to find a way to divide the convolution kernels into groups such that kernels in the same group generate similar image areas. Specifically, the researchers use a Gaussian mixture model (GMM) to learn how the convolution kernels are divided, so that the feature maps of the kernels in each group have similar neural activations;

- Energy model authenticity loss: Given a division of the target layer's kernels, forcing every kernel in the same group to generate the same visual concept may reduce the quality of the generated images. To further ensure the authenticity of the generated images, the researchers use an energy model to output the authenticity probability of the feature maps in the target layer, and use maximum likelihood estimation to learn the parameters of the energy model;

- Convolution kernel interpretability loss: Given the division of the target layer's convolution kernels, this loss is used to further improve the interpretability of the kernels. Specifically, it pushes the convolution kernels in the same group to generate exclusively the same image area, while kernels in different groups are responsible for generating different image areas.
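The kernel-division step described above can be illustrated with a small sketch. It is not the authors' implementation: it assumes each kernel is summarized by a flattened feature map, fits a spherical Gaussian mixture with plain EM, and assigns each kernel to its most likely group. The function name `group_kernels`, the spherical covariance, and the farthest-point initialization are all assumptions made for illustration.

```python
import math


def _sq_dist(x, y):
    return sum((a - b) ** 2 for a, b in zip(x, y))


def group_kernels(feature_maps, n_groups, n_iter=30):
    """Group kernels by fitting a spherical Gaussian mixture with EM.

    feature_maps: one flattened activation map (list of floats) per kernel.
    Returns the index of the most likely group for each kernel.
    """
    dim = len(feature_maps[0])
    # Deterministic farthest-point initialization of the group centers (assumption).
    means = [list(feature_maps[0])]
    while len(means) < n_groups:
        far = max(feature_maps, key=lambda x: min(_sq_dist(x, m) for m in means))
        means.append(list(far))
    variances = [1.0] * n_groups
    weights = [1.0 / n_groups] * n_groups

    for _ in range(n_iter):
        # E-step: responsibility of each group for each kernel (log-space for stability).
        resp = []
        for x in feature_maps:
            logp = [
                math.log(w) - 0.5 * dim * math.log(2 * math.pi * var)
                - _sq_dist(x, mu) / (2 * var)
                for mu, var, w in zip(means, variances, weights)
            ]
            top = max(logp)
            p = [math.exp(v - top) for v in logp]
            total = sum(p)
            resp.append([v / total for v in p])
        # M-step: update mixing weight, center, and variance of every group.
        for g in range(n_groups):
            r_g = [r[g] for r in resp]
            mass = sum(r_g) + 1e-12
            weights[g] = mass / len(feature_maps)
            means[g] = [
                sum(r * x[d] for r, x in zip(r_g, feature_maps)) / mass
                for d in range(dim)
            ]
            spread = sum(r * _sq_dist(x, means[g]) for r, x in zip(r_g, feature_maps))
            variances[g] = max(spread / (mass * dim), 1e-6)

    return [max(range(n_groups), key=lambda g: r[g]) for r in resp]


# Toy example: four kernels with 2x2 feature maps, flattened row by row.
# Kernels 0 and 1 activate on the top row, kernels 2 and 3 on the bottom row.
toy_maps = [
    [1.0, 0.9, 0.0, 0.0],
    [0.9, 1.0, 0.1, 0.0],
    [0.0, 0.0, 1.0, 0.9],
    [0.0, 0.1, 0.9, 1.0],
]
labels = group_kernels(toy_maps, n_groups=2)
```

In this toy case the two top-row kernels land in one group and the two bottom-row kernels in the other. In the method described above the division is learned together with the other losses during training, which this standalone sketch does not attempt to reproduce.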

Experimental results

In the experiment, the researchers evaluated their interpretable GAN qualitatively and quantitatively.

For qualitative analysis, they visualized the feature map of each convolution kernel to evaluate how consistently each kernel represents the same visual concept across different images. As shown in Figure 3 below, in interpretable GAN, each convolution kernel always generates image areas corresponding to the same visual concept when generating different images, while different convolution kernels generate image areas corresponding to different visual concepts.

Figure 3: Visualization of feature maps in interpretable GAN

The experiment also compared the group center of each group of convolution kernels with the receptive fields of the individual convolution kernels, as shown in Figure 4(a) below. Figure 4(b) shows the proportion of convolution kernels corresponding to each visual concept in interpretable GAN. Figure 4(c) shows that the more groups the convolution kernels are divided into, the finer-grained the visual concepts learned by the interpretable GAN.

Figure 4: Qualitative evaluation of interpretable GAN

Interpretable GAN also supports modifying specific visual concepts in the generated image. For example, a specific visual concept can be exchanged between two images by swapping the corresponding feature maps in the interpretable layer, which amounts to local or global face swapping.
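The feature-map exchange described above can be sketched as follows. This is a minimal illustration under stated assumptions rather than the authors' code: the names `swap_concept`, `features_a`, `features_b`, and `kernel_groups` are hypothetical, the feature maps are plain nested lists standing in for the interpretable layer's activations, and the kernel-to-concept assignment is assumed to be already known.

```python
import copy


def swap_concept(features_a, features_b, kernel_groups, concept):
    """Exchange the feature maps of one visual concept between two images.

    features_a, features_b: per-kernel feature maps (list of H x W nested lists)
        taken from the interpretable layer for image A and image B.
    kernel_groups: dict mapping a concept name to the kernel indices in its group.
    Returns modified copies; the inputs are left untouched.
    """
    out_a = copy.deepcopy(features_a)
    out_b = copy.deepcopy(features_b)
    for k in kernel_groups[concept]:
        out_a[k] = copy.deepcopy(features_b[k])
        out_b[k] = copy.deepcopy(features_a[k])
    return out_a, out_b


# Toy example: three kernels with 1x1 feature maps.
features_a = [[[1.0]], [[2.0]], [[3.0]]]
features_b = [[[10.0]], [[20.0]], [[30.0]]]
kernel_groups = {"mouth": [0, 2], "nose": [1]}  # hypothetical assignment

new_a, new_b = swap_concept(features_a, features_b, kernel_groups, "mouth")
```

Only the kernels in the chosen group change; the remaining feature maps are passed through as they were. Feeding the modified feature maps through the rest of the generator would then produce the edited images, a step that is omitted here.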

Figure 5 below gives the results of swapping mouth, hair and nose between pairs of images. The last column gives the difference between the modified image and the original image. This result shows that the researchers' method only modified the local visual concept without changing other irrelevant areas.

Figure 5: Exchanging specific visual concepts to generate images

In addition, Figure 6 below shows the effect of their method when exchanging the entire face.

Figure 6: Swap the entire face of the generated image

For quantitative analysis, the researchers used face verification experiments to evaluate the accuracy of the face-swapping results. Specifically, given a pair of face images, the face in the original image is replaced with the face from the source image to generate a modified image. The experiment then tests whether the face in the modified image and the face in the source image have the same identity.

Table 1 below shows the face verification accuracy of different methods. The researchers' method outperforms other face-swapping methods in terms of identity preservation.

Table 1: Accuracy evaluation of face-swapping identity

In addition, the experiments also evaluate how local the method's modifications of specific visual concepts are. Specifically, the researchers calculated the mean square error (MSE) between the original image and the modified image in RGB space, and used the ratio of the out-of-region MSE to the in-region MSE of a specific visual concept as the locality metric.

The results are shown in Table 2 below. The researchers' modification method has better locality; that is, areas of the image outside the modified visual concept change less.
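As a rough illustration of this metric, the sketch below computes the ratio of out-of-region MSE to in-region MSE for a pair of RGB images. The function name `locality_ratio` and the boolean `region_mask` input are assumptions; the article does not say how the region of a visual concept is obtained.

```python
def locality_ratio(original, modified, region_mask):
    """Ratio of out-of-region MSE to in-region MSE (lower means a more local edit).

    original, modified: H x W x 3 nested lists of RGB values.
    region_mask: H x W nested list, True inside the edited visual concept.
    """
    in_sq, in_n, out_sq, out_n = 0.0, 0, 0.0, 0
    for row_o, row_m, row_mask in zip(original, modified, region_mask):
        for px_o, px_m, inside in zip(row_o, row_m, row_mask):
            sq = sum((a - b) ** 2 for a, b in zip(px_o, px_m))
            if inside:
                in_sq += sq
                in_n += 3  # three colour channels per pixel
            else:
                out_sq += sq
                out_n += 3
    if in_n == 0 or out_n == 0 or in_sq == 0:
        raise ValueError("both regions must be non-empty and the edit must change the region")
    return (out_sq / out_n) / (in_sq / in_n)


# Toy 2x2 example: a large change inside the region, a small leak outside it.
black = [0, 0, 0]
original = [[black, black], [black, black]]
modified = [[[10, 10, 10], black], [black, [1, 1, 1]]]
region_mask = [[True, False], [False, False]]

ratio = locality_ratio(original, modified, region_mask)
```

Here the in-region MSE is 100 and the out-of-region MSE is 1/3, so the ratio is 1/300; a smaller value indicates that the edit stayed closer to the intended region.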

Table 2: Locality evaluation of modified visual concepts

For more experimental results, please see the paper.

Summary

This work proposes a general method that can modify traditional GANs into interpretable GANs without any manual annotation of visual concepts. In an interpretable GAN, each convolution kernel in the middle layer of the generator can stably generate the same visual concept when generating different images.

Experiments show that interpretable GAN also enables people to modify specific visual concepts on the generated images, providing a new perspective on the controllable editing method of GAN-generated images.

The above is the detailed content of The traditional GAN can be interpreted after modification, and ensures the interpretability of the convolution kernel and the authenticity of the generated images.. For more information, please follow other related articles on the PHP Chinese website!

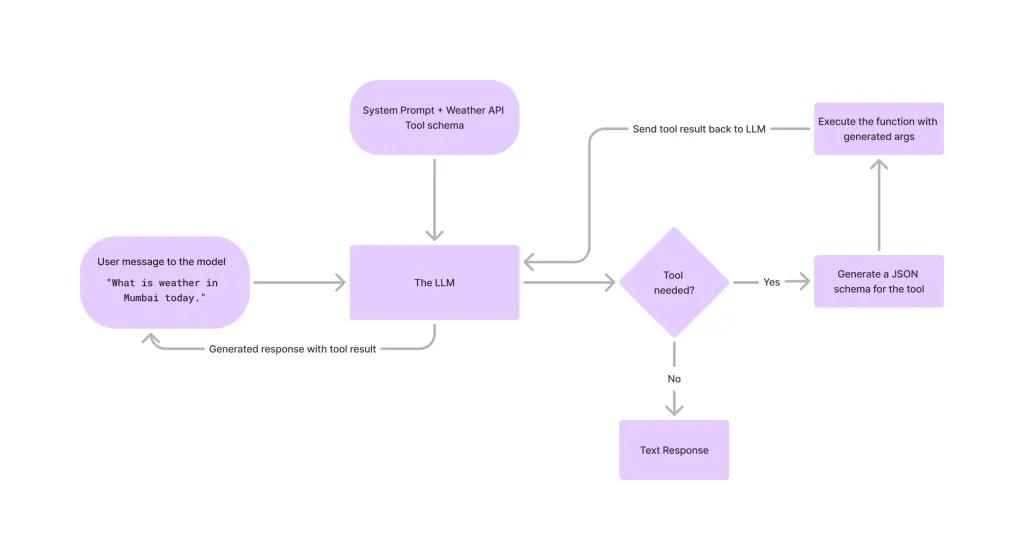

Tool Calling in LLMsApr 14, 2025 am 11:28 AM

Tool Calling in LLMsApr 14, 2025 am 11:28 AMLarge language models (LLMs) have surged in popularity, with the tool-calling feature dramatically expanding their capabilities beyond simple text generation. Now, LLMs can handle complex automation tasks such as dynamic UI creation and autonomous a

How ADHD Games, Health Tools & AI Chatbots Are Transforming Global HealthApr 14, 2025 am 11:27 AM

How ADHD Games, Health Tools & AI Chatbots Are Transforming Global HealthApr 14, 2025 am 11:27 AMCan a video game ease anxiety, build focus, or support a child with ADHD? As healthcare challenges surge globally — especially among youth — innovators are turning to an unlikely tool: video games. Now one of the world’s largest entertainment indus

UN Input On AI: Winners, Losers, And OpportunitiesApr 14, 2025 am 11:25 AM

UN Input On AI: Winners, Losers, And OpportunitiesApr 14, 2025 am 11:25 AM“History has shown that while technological progress drives economic growth, it does not on its own ensure equitable income distribution or promote inclusive human development,” writes Rebeca Grynspan, Secretary-General of UNCTAD, in the preamble.

Learning Negotiation Skills Via Generative AIApr 14, 2025 am 11:23 AM

Learning Negotiation Skills Via Generative AIApr 14, 2025 am 11:23 AMEasy-peasy, use generative AI as your negotiation tutor and sparring partner. Let’s talk about it. This analysis of an innovative AI breakthrough is part of my ongoing Forbes column coverage on the latest in AI, including identifying and explaining

TED Reveals From OpenAI, Google, Meta Heads To Court, Selfie With MyselfApr 14, 2025 am 11:22 AM

TED Reveals From OpenAI, Google, Meta Heads To Court, Selfie With MyselfApr 14, 2025 am 11:22 AMThe TED2025 Conference, held in Vancouver, wrapped its 36th edition yesterday, April 11. It featured 80 speakers from more than 60 countries, including Sam Altman, Eric Schmidt, and Palmer Luckey. TED’s theme, “humanity reimagined,” was tailor made

Joseph Stiglitz Warns Of The Looming Inequality Amid AI Monopoly PowerApr 14, 2025 am 11:21 AM

Joseph Stiglitz Warns Of The Looming Inequality Amid AI Monopoly PowerApr 14, 2025 am 11:21 AMJoseph Stiglitz is renowned economist and recipient of the Nobel Prize in Economics in 2001. Stiglitz posits that AI can worsen existing inequalities and consolidated power in the hands of a few dominant corporations, ultimately undermining economic

What is Graph Database?Apr 14, 2025 am 11:19 AM

What is Graph Database?Apr 14, 2025 am 11:19 AMGraph Databases: Revolutionizing Data Management Through Relationships As data expands and its characteristics evolve across various fields, graph databases are emerging as transformative solutions for managing interconnected data. Unlike traditional

LLM Routing: Strategies, Techniques, and Python ImplementationApr 14, 2025 am 11:14 AM

LLM Routing: Strategies, Techniques, and Python ImplementationApr 14, 2025 am 11:14 AMLarge Language Model (LLM) Routing: Optimizing Performance Through Intelligent Task Distribution The rapidly evolving landscape of LLMs presents a diverse range of models, each with unique strengths and weaknesses. Some excel at creative content gen

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Safe Exam Browser

Safe Exam Browser is a secure browser environment for taking online exams securely. This software turns any computer into a secure workstation. It controls access to any utility and prevents students from using unauthorized resources.

MantisBT

Mantis is an easy-to-deploy web-based defect tracking tool designed to aid in product defect tracking. It requires PHP, MySQL and a web server. Check out our demo and hosting services.

SecLists

SecLists is the ultimate security tester's companion. It is a collection of various types of lists that are frequently used during security assessments, all in one place. SecLists helps make security testing more efficient and productive by conveniently providing all the lists a security tester might need. List types include usernames, passwords, URLs, fuzzing payloads, sensitive data patterns, web shells, and more. The tester can simply pull this repository onto a new test machine and he will have access to every type of list he needs.

ZendStudio 13.5.1 Mac

Powerful PHP integrated development environment