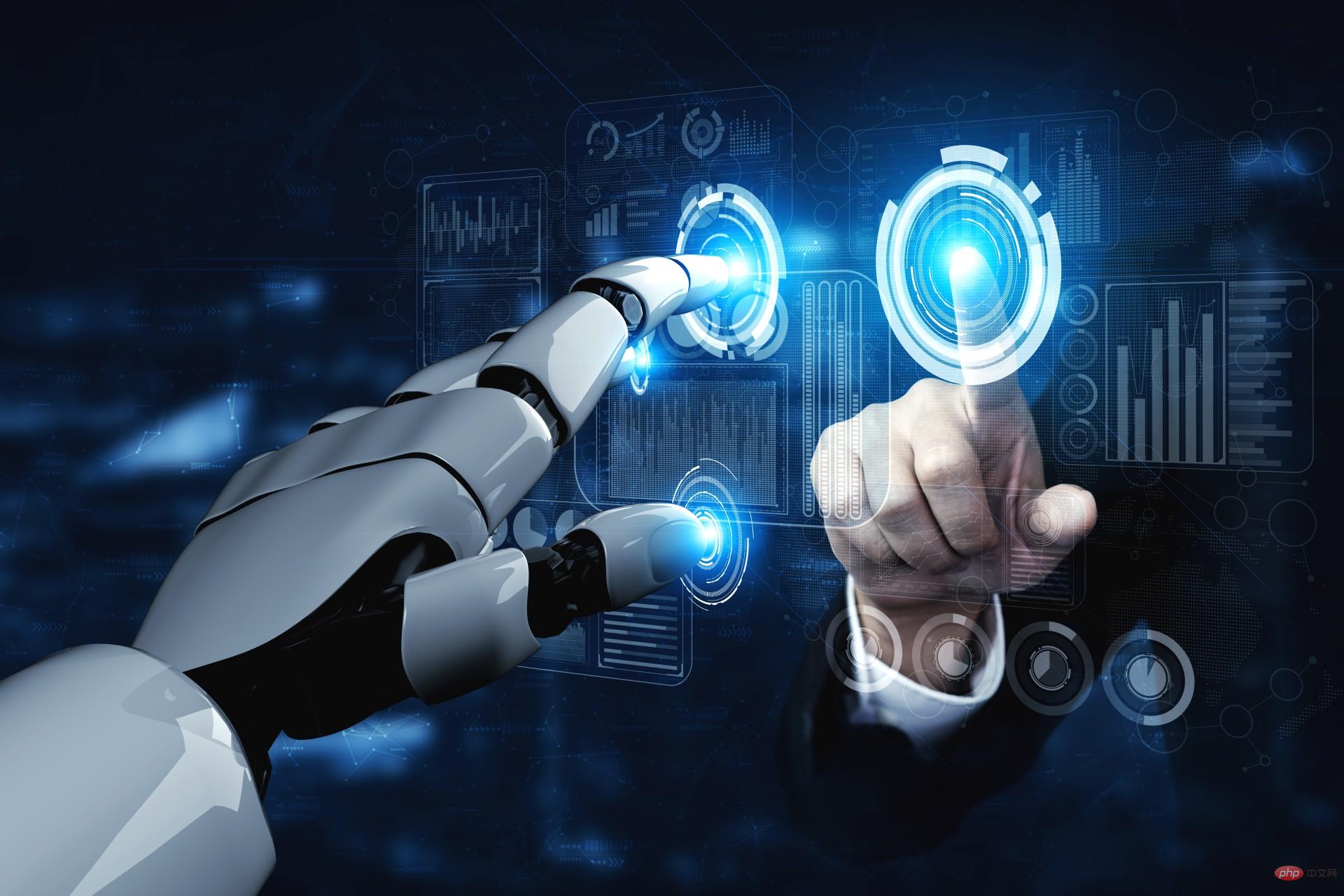


How to ensure AI safety? OpenAI gives a detailed answer and says it will actively engage with governments

April 6 news: On Wednesday, local time in the United States, OpenAI published a post detailing its approach to ensuring AI safety, including conducting safety evaluations, improving post-release safeguards, protecting children, and respecting privacy. The company said that ensuring AI systems are built, deployed, and used safely is critical to achieving its mission.

The following is the full text of OpenAI’s post:

OpenAI is committed to keeping powerful AI safe and beneficial to as many people as possible. We know that our AI tools provide a lot of help to people today. Users around the world have told us that ChatGPT helps them increase their productivity, enhance their creativity, and get a tailored learning experience. But we also recognize that, as with any technology, these tools come with real risks. We are therefore working hard to ensure safety at every level of our systems.

Build a safer artificial intelligence system

Before launching any new AI system, we conduct rigorous testing, seek feedback from external experts, and use techniques such as reinforcement learning from human feedback to improve the model's behavior. We have also built broad safety and monitoring systems.

Take our latest model, GPT-4, as an example. After it finished training, we spent more than 6 months testing it across the company to make it safer and more reliable before releasing it to the public.

We believe that powerful AI systems should undergo rigorous safety evaluations. Regulation is needed to ensure that this practice is widely adopted, so we actively engage with governments on the best form such regulation could take.

Learn from actual use and improve safeguards

We work hard to prevent foreseeable risks before deployment, but there is a limit to what we can learn in a lab. Despite extensive research and testing, we cannot predict all of the ways people will use our technology, or misuse it. That is why we believe that learning from real-world use is a critical component of creating and releasing increasingly safe AI systems.

We release new AI systems cautiously and gradually to a steadily broadening group of people, with substantial safeguards in place, and we keep improving based on the lessons we learn.

We make our most capable models available through our own services and through an API so that developers can build the technology directly into their applications. This allows us to monitor for abuse, take action against it, and develop mitigations. In this way, we respond to how people actually misuse our systems rather than only theorizing about what misuse might look like.

Experience from real-world use has also led us to develop increasingly granular policies to address behavior that poses real risks to people, while still allowing our technology to be used in more beneficial ways.

We believe that society needs more time to adapt to increasingly powerful artificial intelligence, and that everyone affected by it should have a say in the further development of artificial intelligence. Iterative deployment helps different stakeholders more effectively engage in conversations about AI technologies, and having first-hand experience using these tools is critical.

Protect Children

One of the focuses of our safety work is the protection of children. We require that people using our artificial intelligence tools be 18 years of age or older, or 13 years of age or older with parental consent. Currently, we are working on verification functionality.

We do not allow our technology to be used to generate hateful, harassing, violent or adult content. The latest GPT-4 is 82% less likely to respond to requests for restricted content compared to GPT-3.5. We have robust systems in place to monitor abuse. GPT-4 is now available to subscribers of ChatGPT Plus, and we hope to allow more people to experience it over time.

We have taken significant steps to minimize the potential for our models to produce content that is harmful to children. For example, when a user attempts to upload child sexual abuse material to our image generation tool, we block it and report the matter to the National Center for Missing and Exploited Children.

In addition to our default safety protections, we work with developers such as the non-profit Khan Academy to tailor safety measures to their use case. Khan Academy has built an AI assistant that serves as both a virtual tutor for students and a classroom assistant for teachers. We are also working on features that will let developers set stricter standards for model output, to better support developers and users who need that capability.

Respect Privacy

Our large language models are trained on an extensive corpus of text that includes publicly available content, licensed content, and content generated by human reviewers. We do not use this data to sell our services, to advertise, or to build profiles of people. We use it only to make our models better at helping people; ChatGPT, for instance, improves through the conversations people have with it.

Although much of our training data includes personal information that is available on the public web, we want our models to learn about the world as a whole, not about individuals. We therefore work to remove personal information from training data sets where feasible, fine-tune models to decline requests for personal information, and respond to individuals' requests to delete their personal information from our systems. These measures minimize the likelihood that our models will generate responses that contain personal information.
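To make the idea of removing personal information from text more concrete, here is a minimal sketch in Python. It is not OpenAI's method, which the post does not describe. The two regular expressions, the placeholder tokens, and the `redact` function are all hypothetical and chosen only for illustration.

```python
import re

# Hypothetical patterns for illustration only; they are not from the post,
# and a real data-cleaning pipeline would need far more than two regexes.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(text):
    """Replace e-mail addresses and phone-like numbers with placeholder tokens."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    text = PHONE_RE.sub("[PHONE]", text)
    return text


sample = "Contact Jane at jane.doe@example.com or +1 (555) 010-9999."
cleaned = redact(sample)
print(cleaned)  # Contact Jane at [EMAIL] or [PHONE].
```

The name "Jane" survives the redaction. Pattern matching alone misses many kinds of personal information, which is one reason removal is described as happening only "where feasible".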

Improve factual accuracy

Today's large language models predict the next most likely words based on patterns they have previously seen and on the text the user enters. But in some cases, the most likely next word is not factually correct.
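A toy example can show why the most likely next word is not always the correct one. The sketch below uses a simple bigram counter, a far cruder technique than a large language model. The corpus and the function names `train_bigram` and `predict_next` are hypothetical. The corpus is deliberately built so that a wrong statement appears more often than the right one.

```python
from collections import Counter, defaultdict


def train_bigram(corpus):
    """Count, for each word, which words follow it in the corpus."""
    follows = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for prev, nxt in zip(words, words[1:]):
            follows[prev][nxt] += 1
    return follows


def predict_next(follows, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    counts = follows.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]


# Hypothetical toy corpus: the incorrect claim is more frequent than the correct one.
corpus = [
    "the capital of australia is sydney",
    "the capital of australia is sydney",
    "the capital of australia is canberra",
]
model = train_bigram(corpus)
prediction = predict_next(model, "is")
print(prediction)  # sydney
```

The model picks "sydney" because it follows "is" twice in the corpus while "canberra" follows it once. Frequency in the training text, not truth, drives the prediction.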

Improving factual accuracy is a major focus for OpenAI and many other AI research organizations, and we are making progress. We improved the factual accuracy of GPT-4 by using user feedback on ChatGPT outputs that were flagged as incorrect as the primary data source. GPT-4 is 40% more likely to produce factual content than GPT-3.5.

When users sign up to use the tool, we strive to be as transparent as possible about the fact that ChatGPT may give incorrect responses. However, we recognize that there is more work to do to further reduce the potential for misunderstanding and to educate the public about the current limitations of these AI tools.

Continuous Research and Engagement

We believe that a practical way to address AI safety issues is to invest more time and resources in researching effective mitigation and alignment techniques and in testing them against real-world abuse.

Importantly, we believe that improving AI safety and improving AI capabilities should go hand in hand. Our best safety work to date has come from working with our most capable models, because they are better at following users' instructions and easier to steer, or "guide".

We will create and deploy more capable models with increasing caution and will continue to strengthen safety precautions as AI systems evolve.

While we waited more than 6 months to deploy GPT-4 in order to better understand its capabilities, benefits, and risks, it may sometimes take longer than that to improve the safety of AI systems. Policymakers and AI developers therefore need to ensure that the development and deployment of AI are effectively regulated at a global scale, so that no one cuts corners to get ahead. This is a difficult challenge that requires both technical and institutional innovation, but it is one we are eager to contribute to.

Addressing AI safety issues will also require extensive debate, experimentation, and engagement, including on the boundaries of how AI systems may behave. We have promoted, and will continue to promote, collaboration and open dialogue among stakeholders to create a safer AI ecosystem. (Xiao Xiao)
