
Hangzhou Dianzi University student is first to give GPT the ability to read images: a new SOTA on a single GPU, and the code is open source

Currently, this paper has been accepted by CVPR2023.

GPT-4, which can read images, has been released to great fanfare! But you still have to join a waitlist to use it...

Why not try this in the meantime?

By adding a small model, you can let large language models such as ChatGPT and GPT-3, which only understand text, easily read pictures and handle all kinds of tricky details.

And training this small model takes only a single card (an RTX 3090).

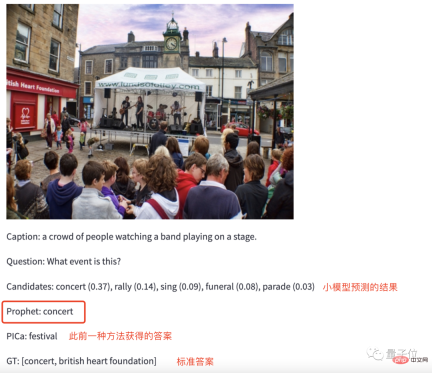

As for how well it works, just look at the pictures.

For example, feed a picture of a music scene to GPT-3 equipped with this small model and ask it: What activity is taking place here?

Without any hesitation, GPT-3 answered: concert.

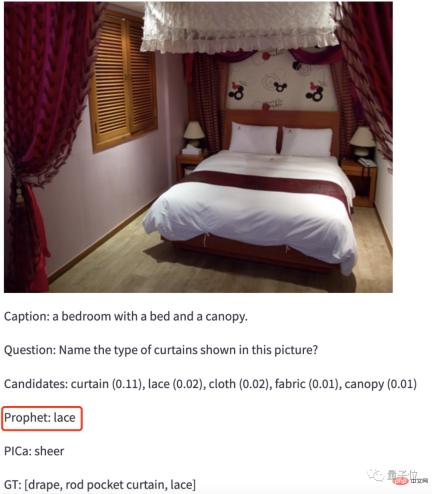

To make it harder, give GPT-3 a photo like this one and ask it what material the curtain in the photo is made of.

GPT-3: lace.

Bingo! (Looks like it really has some skill.)

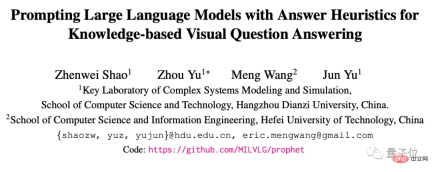

This method, called Prophet, is the latest result from a team at Hangzhou Dianzi University and Hefei University of Technology, who started working on it half a year ago.

The first author of the paper is Shao Zhenwei, a graduate student at Hangzhou Dianzi University. He was diagnosed with progressive spinal muscular atrophy at the age of 1. After narrowly missing admission to Zhejiang University in the college entrance examination, he chose Hangzhou Dianzi University, which is close to home.

This paper has been accepted by CVPR2023.

A new SOTA on cross-modal tasks

Without further ado, let's look directly at GPT-3's image-reading ability with the support of Prophet.

First, let's look at its results on benchmark datasets.

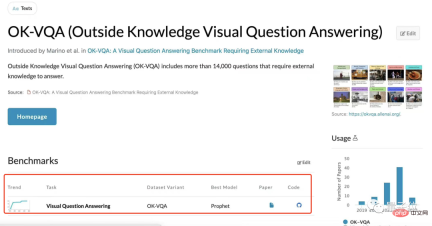

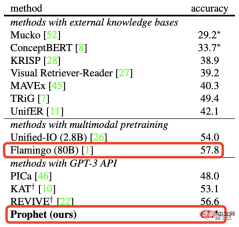

The research team evaluated Prophet on two knowledge-based visual question answering (VQA) datasets, OK-VQA and A-OKVQA, and it set a new SOTA on both.

More specifically, on OK-VQA, Prophet reached an accuracy of 61.1%, beating DeepMind's 80B-parameter large model Flamingo (57.8%).

Prophet also "beats" Flamingo in terms of required computing resources.

Flamingo-80B was trained on 1,536 TPUv4 chips for 15 days, whereas Prophet only needs a single RTX 3090 GPU to train its VQA model for 4 days, plus a certain number of calls to the OpenAI API.
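As a rough back-of-the-envelope check of the hardware figures above, the sketch below multiplies devices by days. It counts accelerator-days only and is deliberately crude: a TPUv4 chip and an RTX 3090 differ greatly in throughput, and Prophet's OpenAI API cost is not included.

```python
# Rough accelerator-day comparison using the figures quoted in the article.
# Caveat: TPUv4 chips and an RTX 3090 have very different throughput,
# and Prophet's GPT-3 API calls are not counted here.

FLAMINGO_CHIPS = 1536  # TPUv4 chips
FLAMINGO_DAYS = 15
PROPHET_GPUS = 1       # one RTX 3090
PROPHET_DAYS = 4       # training the VQA model only


def accelerator_days(devices: int, days: int) -> int:
    """Total device-days: number of devices times wall-clock days."""
    return devices * days


flamingo = accelerator_days(FLAMINGO_CHIPS, FLAMINGO_DAYS)
prophet = accelerator_days(PROPHET_GPUS, PROPHET_DAYS)

print(f"Flamingo-80B: {flamingo} TPUv4 chip-days")
print(f"Prophet VQA model: {prophet} GPU-days")
print(f"Ratio (device-days only): {flamingo / prophet:.0f}x")
```

This prints 23040 chip-days versus 4 GPU-days, a ratio of about 5760x in raw device-days, which should be read as an order-of-magnitude illustration rather than a like-for-like cost comparison.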

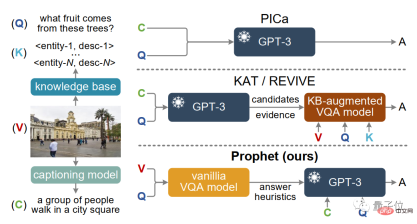

In fact, earlier methods similar to Prophet, such as PICa and, later, KAT and REVIVE, have also helped GPT-3 handle cross-modal tasks.

However, they can fall short when it comes to fine details.

For example, let all of them read the picture below and answer the question: What kind of fruit will the tree in the picture bear?

The only information PICa, KAT and REVIVE extracted from the picture was "a group of people walking in a square", completely ignoring the coconut tree in the background, so their final answers could only be guesses.

Prophet avoids this problem. It addresses the insufficient image information extracted by these methods and unlocks more of GPT-3's potential.

So how did Prophet do it?

Small model + big model

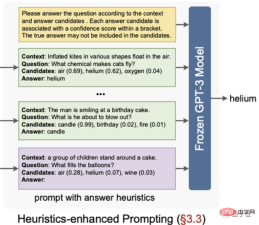

Prophet relies on its two-stage framework to extract information effectively and answer questions accurately.

The two stages have a clear division of labor:

- The first stage: generate some heuristic answers for the question;

- The second stage: these answers narrow the search space, letting GPT-3 fully realize its potential.

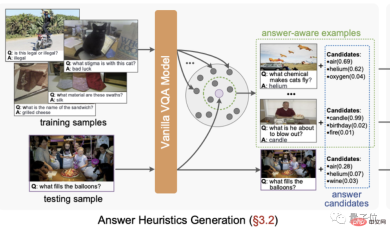

In the first stage, the research team trains an improved MCAN model (a VQA model) on a specific knowledge-based VQA dataset.

Two kinds of heuristic answers are then extracted from the trained model: answer candidates and answer-aware examples.

Answer candidates are obtained by ranking answers by the confidence scores output by the model's classification layer and keeping the top 10.
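The candidate-selection step can be sketched as follows. This is a minimal illustration, not the authors' code: it assumes the classification layer outputs one logit per answer in a fixed answer vocabulary and that a sigmoid turns each logit into a confidence (both assumptions). The helper `top_k_candidates`, the toy vocabulary and the logits are hypothetical.

```python
import math
from typing import List, Tuple


def sigmoid(x: float) -> float:
    """Map a raw logit to a confidence in (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


def top_k_candidates(
    logits: List[float], vocab: List[str], k: int = 10
) -> List[Tuple[str, float]]:
    """Rank answers by classifier confidence and keep the top k.

    Assumes one logit per answer in `vocab` (illustrative assumption).
    """
    if len(logits) != len(vocab):
        raise ValueError("need exactly one logit per vocabulary answer")
    scored = [(answer, sigmoid(z)) for answer, z in zip(vocab, logits)]
    scored.sort(key=lambda pair: pair[1], reverse=True)  # highest confidence first
    return scored[:k]


# Toy example: a 12-answer vocabulary with made-up logits.
vocab = ["concert", "party", "parade", "lecture", "wedding", "game",
         "festival", "protest", "rally", "show", "market", "race"]
logits = [3.2, 1.1, 0.4, -0.5, -1.0, 0.9, 2.0, -2.2, -0.1, 1.5, -3.0, 0.0]

for answer, conf in top_k_candidates(logits, vocab, k=10):
    print(f"{answer}: {conf:.2f}")
```

With these made-up numbers, "concert" ranks first and the two lowest-scoring answers ("protest" and "market") are dropped. Keeping the confidences alongside the answers matters, because the second stage can pass them to GPT-3 as a signal of how much to trust each candidate.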

Answer-aware examples are obtained by taking the features just before the model's classification layer as each sample's latent answer features, then selecting the most similar labeled samples in this feature space.
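Retrieving answer-aware examples amounts to a nearest-neighbor search in the model's latent answer space. A minimal sketch, assuming cosine similarity as the similarity measure and tiny hand-made 3-dimensional feature vectors (real features would be high-dimensional vectors taken from the layer before the classifier). The function `nearest_examples` and the sample data are hypothetical.

```python
import math
from typing import Dict, List, Sequence, Tuple


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity between two equal-length, non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def nearest_examples(
    query: Sequence[float], bank: Dict[str, List[float]], n: int = 2
) -> List[Tuple[str, float]]:
    """Return the n labeled samples whose latent answer features are most
    similar to the query's (assumption: cosine similarity)."""
    scored = [(sample_id, cosine(query, feat)) for sample_id, feat in bank.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:n]


# Hypothetical latent answer features for labeled training samples.
bank = {
    "q1_concert": [0.9, 0.1, 0.0],
    "q2_fruit": [0.0, 0.8, 0.6],
    "q3_music": [0.8, 0.2, 0.1],
    "q4_fabric": [0.1, 0.1, 0.9],
}
query = [0.85, 0.15, 0.05]  # latent features of the test question

print(nearest_examples(query, bank, n=2))
```

Here the two music-related samples come out on top. Because the features encode what the model thinks the answer is, the retrieved examples tend to share the test question's likely answer type, which is what makes them useful as in-context examples.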

The second stage is comparatively simple and straightforward.

The heuristic answers from the previous step are organized into a prompt, which is then fed to GPT-3 so that it can answer the visual question under the guidance of that prompt.
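To make the second stage concrete, here is a hypothetical prompt builder that combines the answer candidates and answer-aware examples into one text prompt. The article does not give Prophet's exact template, so the layout below (a textual context such as an image caption, the question, candidates with confidences in parentheses, then the answer) is an assumed format for illustration only; `build_prompt`, `format_block` and all field names are hypothetical.

```python
from typing import List, Tuple

# Hypothetical type: (answer, confidence) pairs from the first-stage VQA model.
Candidates = List[Tuple[str, float]]
# Hypothetical type: (context, question, candidates, gold answer).
Example = Tuple[str, str, Candidates, str]


def format_block(context: str, question: str, candidates: Candidates,
                 answer: str = "") -> str:
    """Render one question in an assumed prompt layout."""
    cand_text = ", ".join(f"{a} ({c:.2f})" for a, c in candidates)
    return (
        f"Context: {context}\n"
        f"Question: {question}\n"
        f"Candidates: {cand_text}\n"
        f"Answer: {answer}"
    )


def build_prompt(instruction: str, examples: List[Example], test_context: str,
                 test_question: str, test_candidates: Candidates) -> str:
    """Instruction, then answer-aware examples with gold answers, then the
    test question with its answer left blank for the LLM to complete."""
    parts = [instruction]
    for ctx, q, cands, gold in examples:
        parts.append(format_block(ctx, q, cands, gold))
    parts.append(format_block(test_context, test_question, test_candidates))
    return "\n\n".join(parts)


prompt = build_prompt(
    "Answer the question using the context and candidate answers.",
    [("A band playing on a stage.", "What event is this?",
      [("concert", 0.91), ("party", 0.40)], "concert")],
    "People walking in a square with a tree behind them.",
    "What fruit does the tree bear?",
    [("coconut", 0.72), ("banana", 0.15)],
)
print(prompt)
```

Ending the prompt with an empty "Answer:" slot lets a completion model fill in the answer directly, and showing confidences gives it a hint about how far to trust each candidate.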

However, although the prompt provides these answer hints, GPT-3 is not restricted to them.

If the candidates' confidence scores are too low, or the correct answer is not among them, GPT-3 may well generate a new answer of its own.
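That GPT-3 is free to go beyond the hints can be illustrated with a final answer-extraction step that keeps the model's own output rather than snapping it to the nearest candidate. In this minimal sketch, `fake_llm` is a hypothetical stand-in for a real GPT-3 API call (no real API is invoked), and treating the first line of the completion as the answer is an assumption.

```python
from typing import Callable, List, Tuple


def answer_with_llm(prompt: str, candidates: List[Tuple[str, float]],
                    llm: Callable[[str], str]) -> Tuple[str, bool]:
    """Query the LLM and report whether its answer is one of the candidates.

    The answer is NOT forced into the candidate list: whatever the model
    generates is kept, so it can propose an answer the VQA model missed.
    """
    raw = llm(prompt).strip()
    # Assumption: the first line of the completion is the answer.
    answer = raw.splitlines()[0].strip().lower() if raw else ""
    in_candidates = answer in {a.lower() for a, _ in candidates}
    return answer, in_candidates


def fake_llm(prompt: str) -> str:
    """Hypothetical stand-in for a GPT-3 completion call."""
    return " Coconut\n"


# Low-confidence candidates that do not contain the correct answer.
candidates = [("banana", 0.12), ("date", 0.08)]
answer, inside = answer_with_llm("...prompt text...", candidates, fake_llm)
print(answer, inside)
```

Here the stub returns "coconut", which is outside the low-confidence candidate list, and the pipeline keeps it as the final answer.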

Research Team

Beyond the research results themselves, the team behind this study also deserves a mention.

First author Shao Zhenwei was diagnosed with progressive spinal muscular atrophy when he was 1 year old. He has a first-level (most severe) physical disability and cannot take care of himself; his mother provides full-time care for his daily life and studies.

Despite his physical limitations, Shao Zhenwei's thirst for knowledge has never waned.

In the 2017 college entrance examination, he scored 644 points and was admitted to the computer science program at Hangzhou Dianzi University with the top score in his cohort.

Along the way, he also received honors including the 2018 China College Students' Self-Improvement Star, the 2020 National Scholarship, and the 2021 Zhejiang Province Outstanding Graduate.

As an undergraduate, Shao Zhenwei had already begun doing research with Professor Yu Zhou.

In 2021, while applying for recommended admission to graduate school, Shao Zhenwei narrowly missed out on Zhejiang University, so he stayed at his school and joined Professor Yu Zhou's research group to pursue a master's degree. He is currently a second-year graduate student, and his research focuses on cross-modal learning.

Professor Yu Zhou is the second author and corresponding author of the paper. He is the youngest professor at the School of Computer Science of Hangzhou Dianzi University and deputy director of the Ministry of Education's "Complex System Modeling and Simulation" Laboratory.

Yu Zhou has long specialized in multimodal intelligence and has led his team to multiple first- and second-place finishes in the international VQA Challenge.

Most members of the research team belong to the Media Intelligence Laboratory (MIL) at Hangzhou Dianzi University.

The laboratory is headed by Professor Yu Jun, a National Distinguished Talent. In recent years, the laboratory has published a series of high-level journal and conference papers (TPAMI, IJCV, CVPR, etc.) on multimodal learning, and has won several best paper awards from IEEE journals and conferences.

The laboratory has led more than 20 national projects, including projects under the National Key R&D Program and the National Natural Science Foundation of China. It has won the first prize of the Zhejiang Provincial Natural Science Award and the second prize of the Ministry of Education Natural Science Award.
