Enterprises generally tackle high-concurrency problems on two fronts: hardware and software. On the hardware side, a dedicated load balancer is added to distribute the large volume of requests. On the software side, a solution can be added at either of the two usual high-concurrency bottlenecks, the database and the web server. The most common way to add a load-balancing layer in front of the web servers is to use nginx.

1. The role of load balancing

1. Forwarding function

Client requests are forwarded to different application servers according to a chosen algorithm (for example, weighted or round-robin), which reduces the pressure on any single server and increases the concurrency the system can handle.

2. Fault removal

Use heartbeat detection to determine whether the application server can currently work normally. If the server goes down, the request will be automatically sent to other application servers.

3. Recovery addition

Once a failed application server is detected to be working again, it is automatically added back to the pool of servers that handle user requests.

2. Nginx implements load balancing

This example again uses two Tomcat instances to simulate two application servers, listening on ports 8080 and 8081.

1. Nginx's load distribution strategy

Nginx's upstream module currently supports the following distribution algorithms:

1) Round robin (polling): requests are processed in turn, 1:1 (default)

Requests are assigned to the application servers one at a time, in the order they arrive. If an application server goes down, it is automatically removed from the rotation and the remaining servers continue to take turns.

2) weight: more capable servers take more requests

The weight sets each server's share of the rotation; the proportion of requests a server receives is proportional to its weight. Use this when the application servers differ in performance.

3) ip_hash algorithm

Each request is assigned according to a hash of the client's IP address, so a given visitor is always sent to the same application server. This avoids the session-sharing problem.
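As a minimal sketch of the third strategy, ip_hash is enabled by placing the directive inside the upstream block. The addresses are the two Tomcat instances used in this article; the upstream name tomcat_sticky is an assumption made for illustration.

```nginx
# Hypothetical upstream name; the addresses are the two Tomcat instances from this article.
upstream tomcat_sticky {
    ip_hash;                      # pick the server from a hash of the client address
    server 192.168.72.49:8080;
    server 192.168.72.49:8081;
}
```

For IPv4 clients nginx hashes only the first three octets of the address, so clients on the same /24 network land on the same server. If nginx itself sits behind another proxy, all requests may appear to come from one address and end up on a single application server.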

2. Configure Nginx's load balancing and distribution strategy

This is done by adding parameters after the application server address in the upstream block, for example:

    upstream tomcatserver1 {
        server 192.168.72.49:8080 weight=3;
        server 192.168.72.49:8081;
    }

    server {
        listen 80;
        server_name 8080.max.com;

        #charset koi8-r;
        #access_log logs/host.access.log main;

        location / {
            proxy_pass http://tomcatserver1;
            index index.html index.htm;
        }
    }

With the configuration above, requests to 8080.max.com first reach the nginx reverse proxy, because proxy_pass is configured. When forwarding a request to its destination host, nginx reads the upstream block tomcatserver1 and its distribution policy. tomcat1 (port 8080 on server 49) has a weight of 3, while tomcat2 (port 8081) keeps the default weight of 1, so nginx sends about three of every four requests to tomcat1 and the rest to tomcat2, achieving conditional load balancing. The condition here is the request-processing capacity of each server, as determined by its hardware.

3. Other configurations of nginx

    upstream myServer {
        server 192.168.72.49:9090 down;
        server 192.168.72.49:8080 weight=2;
        server 192.168.72.49:6060;
        server 192.168.72.49:7070 backup;
    }

1) down

Marks the server as temporarily out of the load-balancing rotation.

2) weight

Defaults to 1. The larger the weight, the larger the share of requests the server receives.

3) max_fails

The number of failed attempts allowed within fail_timeout before the server is considered unavailable; defaults to 1. What counts as a failed attempt is defined by the proxy_next_upstream directive.

4) fail_timeout

How long the server is considered unavailable after max_fails failures; it is also the window in which those failures are counted. Defaults to 10 seconds.

5) backup

Requests are sent to the backup server only when all non-backup servers are unavailable, so this machine carries the least load.
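The myServer example above does not show max_fails or fail_timeout. A sketch of how they might be set follows, reusing addresses from this article; the upstream name app_pool and the values 3 and 30s are illustrative assumptions, not recommendations from the original.

```nginx
# Hypothetical upstream name and illustrative values.
upstream app_pool {
    # After 3 failed attempts within 30s, skip this server for the next 30s.
    server 192.168.72.49:8080 max_fails=3 fail_timeout=30s;
    server 192.168.72.49:6060 max_fails=3 fail_timeout=30s;
    # Receives requests only when both servers above are unavailable.
    server 192.168.72.49:7070 backup;
}

server {
    listen 80;

    location / {
        proxy_pass http://app_pool;
        # Conditions under which the request is passed to the next server.
        # error and timeout always count as failed attempts toward max_fails;
        # http_502 counts only because it is listed here.
        proxy_next_upstream error timeout http_502;
    }
}
```

When fail_timeout expires, nginx tries the server again with live requests, which is how a recovered server rejoins the rotation without any manual step.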

3. High availability using Nginx

High availability for a website means more than running the same service on several servers and adding a load-balancing server to distribute requests so that each server is kept reasonably busy under high concurrency. The load-balancing server itself also needs to be highly available; otherwise, if it goes down, the application servers behind it are cut off and cannot serve requests.

Solution: add redundancy. Deploy n nginx servers to avoid this single point of failure.

4. Summary

To summarize, load balancing, whether implemented in software or hardware, distributes a large number of concurrent requests across different servers according to certain rules. This reduces the instantaneous pressure on any one server and improves the website's ability to withstand high concurrency. The author believes nginx is widely used for load balancing because of its flexible configuration: a single nginx.conf file solves most problems. Whether nginx is creating a virtual server, acting as a reverse proxy, or performing the load balancing introduced in this article, almost everything is done in this configuration file, and the server only needs to have nginx installed and running. Nginx is also lightweight and achieves good results without consuming many server resources.

The above is the detailed content of Configure Nginx to achieve load balancing (picture). For more information, please follow other related articles on the PHP Chinese website!

内存飙升!记一次nginx拦截爬虫Mar 30, 2023 pm 04:35 PM

内存飙升!记一次nginx拦截爬虫Mar 30, 2023 pm 04:35 PM本篇文章给大家带来了关于nginx的相关知识,其中主要介绍了nginx拦截爬虫相关的,感兴趣的朋友下面一起来看一下吧,希望对大家有帮助。

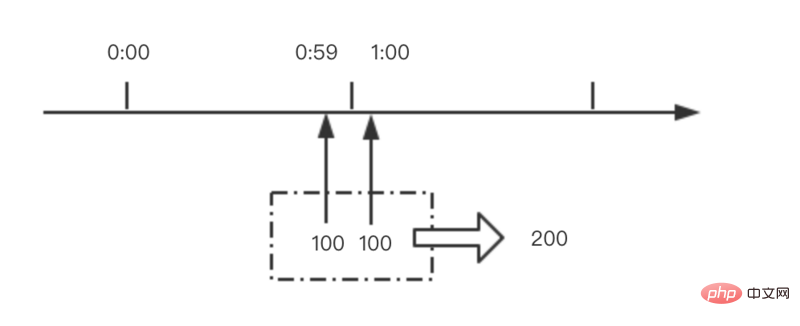

nginx限流模块源码分析May 11, 2023 pm 06:16 PM

nginx限流模块源码分析May 11, 2023 pm 06:16 PM高并发系统有三把利器:缓存、降级和限流;限流的目的是通过对并发访问/请求进行限速来保护系统,一旦达到限制速率则可以拒绝服务(定向到错误页)、排队等待(秒杀)、降级(返回兜底数据或默认数据);高并发系统常见的限流有:限制总并发数(数据库连接池)、限制瞬时并发数(如nginx的limit_conn模块,用来限制瞬时并发连接数)、限制时间窗口内的平均速率(nginx的limit_req模块,用来限制每秒的平均速率);另外还可以根据网络连接数、网络流量、cpu或内存负载等来限流。1.限流算法最简单粗暴的

nginx+rsync+inotify怎么配置实现负载均衡May 11, 2023 pm 03:37 PM

nginx+rsync+inotify怎么配置实现负载均衡May 11, 2023 pm 03:37 PM实验环境前端nginx:ip192.168.6.242,对后端的wordpress网站做反向代理实现复杂均衡后端nginx:ip192.168.6.36,192.168.6.205都部署wordpress,并使用相同的数据库1、在后端的两个wordpress上配置rsync+inotify,两服务器都开启rsync服务,并且通过inotify分别向对方同步数据下面配置192.168.6.205这台服务器vim/etc/rsyncd.confuid=nginxgid=nginxport=873ho

nginx php403错误怎么解决Nov 23, 2022 am 09:59 AM

nginx php403错误怎么解决Nov 23, 2022 am 09:59 AMnginx php403错误的解决办法:1、修改文件权限或开启selinux;2、修改php-fpm.conf,加入需要的文件扩展名;3、修改php.ini内容为“cgi.fix_pathinfo = 0”;4、重启php-fpm即可。

如何解决跨域?常见解决方案浅析Apr 25, 2023 pm 07:57 PM

如何解决跨域?常见解决方案浅析Apr 25, 2023 pm 07:57 PM跨域是开发中经常会遇到的一个场景,也是面试中经常会讨论的一个问题。掌握常见的跨域解决方案及其背后的原理,不仅可以提高我们的开发效率,还能在面试中表现的更加

nginx部署react刷新404怎么办Jan 03, 2023 pm 01:41 PM

nginx部署react刷新404怎么办Jan 03, 2023 pm 01:41 PMnginx部署react刷新404的解决办法:1、修改Nginx配置为“server {listen 80;server_name https://www.xxx.com;location / {root xxx;index index.html index.htm;...}”;2、刷新路由,按当前路径去nginx加载页面即可。

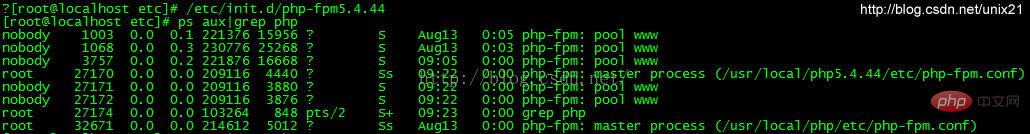

Linux系统下如何为Nginx安装多版本PHPMay 11, 2023 pm 07:34 PM

Linux系统下如何为Nginx安装多版本PHPMay 11, 2023 pm 07:34 PMlinux版本:64位centos6.4nginx版本:nginx1.8.0php版本:php5.5.28&php5.4.44注意假如php5.5是主版本已经安装在/usr/local/php目录下,那么再安装其他版本的php再指定不同安装目录即可。安装php#wgethttp://cn2.php.net/get/php-5.4.44.tar.gz/from/this/mirror#tarzxvfphp-5.4.44.tar.gz#cdphp-5.4.44#./configure--pr

nginx怎么禁止访问phpNov 22, 2022 am 09:52 AM

nginx怎么禁止访问phpNov 22, 2022 am 09:52 AMnginx禁止访问php的方法:1、配置nginx,禁止解析指定目录下的指定程序;2、将“location ~^/images/.*\.(php|php5|sh|pl|py)${deny all...}”语句放置在server标签内即可。

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

MantisBT

Mantis is an easy-to-deploy web-based defect tracking tool designed to aid in product defect tracking. It requires PHP, MySQL and a web server. Check out our demo and hosting services.

Atom editor mac version download

The most popular open source editor

Dreamweaver Mac version

Visual web development tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 English version

Recommended: Win version, supports code prompts!