Using a multi-threaded crawler to capture email addresses and mobile phone numbers from Baidu Tieba

- Author: 伊谢尔伦 (original article)

- Published: 2017-02-03 14:27:44

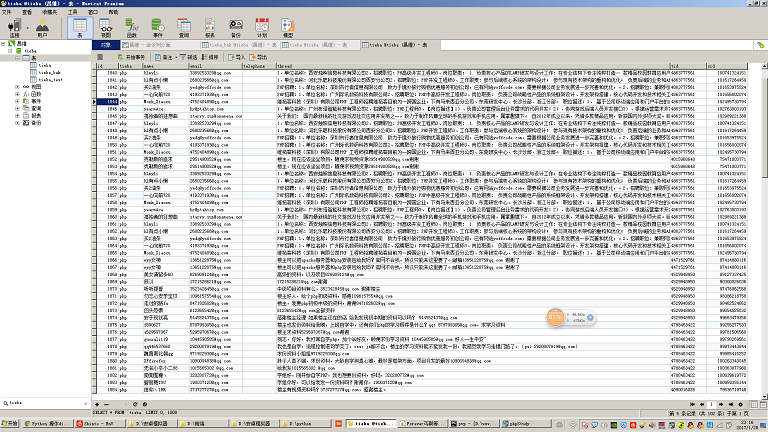

This crawler crawls posts from various Baidu Tieba forums and analyzes their content to extract mobile phone numbers and email addresses. The main workflow is explained in detail in the code comments.

Test environment:

The code was tested on Windows 7 64-bit with 64-bit Python 2.7 (with the MySQLdb extension installed), and on CentOS 6.5 with Python 2.7 (also with MySQLdb).

Environment preparation:

As the saying goes, to do a good job you must first sharpen your tools. As the screenshot shows, my environment is Windows 7 + PyCharm with 64-bit Python 2.7, a development environment well suited to beginners. I also suggest installing easy_install. As the name suggests, it is an installer for extension packages. For example, Python does not natively support operating a MySQL database, so we must install the MySQLdb package before Python can talk to MySQL. With easy_install, installing MySQLdb takes a single command. It is as convenient as composer in PHP, yum in CentOS, or apt-get in Ubuntu.

The relevant tools can be found on GitHub at cw1997/python-tools. To install easy_install, just run the provided .py script with python and wait a moment. It automatically adds itself to the Windows environment variables. If you then type easy_install at the Windows command prompt and get a response, the installation succeeded.

Details of environment selection:

As for hardware, the faster the better. Memory should be at least 8 GB, because a crawler needs to store and parse large amounts of intermediate data, especially a multi-threaded crawler. When you crawl paginated list and detail pages and the volume of data is large, the queue used to distribute crawling tasks occupies a lot of memory. The captured data is also sometimes JSON, and storing it in a NoSQL database such as MongoDB takes a lot of memory as well.

A wired network connection is recommended. Some low-quality wireless routers and ordinary consumer wireless network cards suffer intermittent disconnections, data loss, and packet drops when a large number of threads is running.

For both the operating system and Python, choose 64-bit. A 32-bit operating system cannot address large amounts of memory (about 4 GB at most). With 32-bit Python you may not notice any problem while capturing small amounts of data. Once the data grows, however, for example when a list, queue, or dictionary holds a large amount of it, Python raises a MemoryError as soon as its memory usage exceeds about 2 GB.

If you plan to store data in MySQL, use MySQL 5.7 or later. The native JSON data type was introduced in MySQL 5.7, and with it you may be able to do without MongoDB.

Python 3.x is now available, so why use Python 2.7 here? One reason is that the Core Python Programming book I bought long ago is the second edition, which still uses Python 2 for its examples. Many tutorials on the Internet also still use 2.7. Because 2.7 differs considerably from 3.x in some respects, someone who has never studied 2.7 may miss subtle syntax differences, misunderstand the material, or be unable to follow demo code. Some dependency packages are also still compatible only with 2.7. My suggestion is this: if you are eager to learn Python so you can work at a company, and that company has no legacy code to maintain, consider starting directly with 3.x. If you have plenty of time, have no experienced mentor to teach you systematically, and can only rely on scattered blog posts online, it is better to learn 2.7 first and then 3.x. After learning 2.7, you can pick up 3.x quickly.

Knowledge points involved in multi-threaded crawlers:

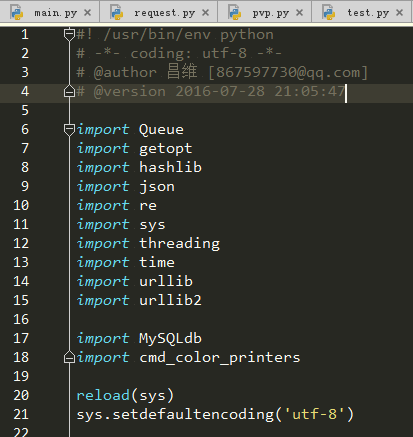

For any software project, you can find out which knowledge points are needed to write it by looking at the packages imported in the project's main entry file.

Now let's look at our project. Someone new to Python may never have used some of these packages. In this section we briefly describe what each package does, which knowledge points it involves, and which keywords to search for. This article does not start from the basics, so make good use of search engines such as Baidu and look up these keywords to teach yourself. Let's go through the knowledge points one by one.

HTTP protocol:

At its core, a crawler keeps sending HTTP requests, receiving HTTP responses, and storing the results on our computer. Understanding the HTTP protocol lets us control parameters that can speed up crawling, such as keep-alive, which reuses one TCP connection for several requests instead of opening a new connection each time.
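The following minimal sketch (Python 3, standard library only) shows keep-alive in action. Several GET requests are sent over one `http.client.HTTPConnection` to a throwaway local test server. The handler class, paths, and port-echo trick are illustrative assumptions and are not part of the original crawler. The server replies with the client's source port, so identical ports mean the same TCP connection was reused.

```python
import http.client
import threading
from http.server import BaseHTTPRequestHandler, HTTPServer


class EchoHandler(BaseHTTPRequestHandler):
    # Speaking HTTP/1.1 lets the server keep the TCP connection open between requests.
    protocol_version = "HTTP/1.1"

    def do_GET(self):
        # Echo the path and the client's source port; the port identifies the TCP connection.
        body = "{} {}".format(self.path, self.client_address[1]).encode("utf-8")
        self.send_response(200)
        # Content-Length tells the client where the body ends, so the connection can be reused.
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, format, *args):
        pass  # keep the demo quiet


def fetch_over_one_connection(paths):
    """Send several GET requests over a single keep-alive connection to a local test server."""
    server = HTTPServer(("127.0.0.1", 0), EchoHandler)  # port 0: let the OS pick a free port
    threading.Thread(target=server.serve_forever, daemon=True).start()
    host, port = server.server_address
    conn = http.client.HTTPConnection(host, port, timeout=5)
    replies = []
    try:
        for path in paths:
            conn.request("GET", path)
            response = conn.getresponse()
            path_echo, client_port = response.read().decode("utf-8").split()
            replies.append((path_echo, int(client_port)))
    finally:
        conn.close()        # closing lets the server's handler finish
        server.shutdown()
        server.server_close()
    return replies


if __name__ == "__main__":
    for path, client_port in fetch_over_one_connection(["/list.php?page=1", "/list.php?page=2"]):
        print(path, client_port)  # the same port on every line means the connection was reused
```

Each response must be read completely before the next request is sent. `http.client` reuses a connection only after the previous response has been consumed.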

Threading module (multi-threading):

The programs we usually write are single-threaded: all of our code runs in the main thread, and the main thread runs inside the Python process.

In Python, multi-threading is implemented with the threading module. There is also an older, lower-level thread module (renamed _thread in Python 3), but threading gives more control over threads, so it became the standard way to write multi-threaded programs.

In short, to write a multi-threaded program with threading, you define your own class that inherits from threading.Thread and put the work each thread should do in the class's run method. If a thread needs some initialization when it is created, put that code in its __init__ method, and call the parent class's __init__ first. __init__ plays the same role as a constructor in PHP or Java.
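The following is a minimal sketch (Python 3) of the subclassing pattern just described. `SumWorker` is a hypothetical worker that sums its own slice of numbers; it is not the crawler's actual thread class. In Python 2.7, write `super(SumWorker, self).__init__()` instead of `super().__init__()`.

```python
import threading


class SumWorker(threading.Thread):
    """Hypothetical worker thread that sums its own slice of numbers."""

    def __init__(self, numbers):
        # Per-thread initialization goes in __init__; call the parent constructor first.
        super().__init__()
        self.numbers = numbers
        self.total = 0

    def run(self):
        # run() holds the work; it executes in the new thread once start() is called.
        for n in self.numbers:
            self.total += n


if __name__ == "__main__":
    workers = [SumWorker([1, 2, 3]), SumWorker([10, 20])]
    for w in workers:
        w.start()  # starts the thread, which then calls run()
    for w in workers:
        w.join()   # wait for the thread to finish before reading its result
    print([w.total for w in workers])  # [6, 30]
```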

One more concept needs explaining here: thread safety. In a single-threaded program, only one thread operates on a resource (a file or a variable) at any moment, so conflicts rarely occur. With multiple threads, two threads may operate on the same resource at the same time and corrupt it. We therefore need a mechanism to prevent such conflicts, usually some form of locking. For example, MySQL's InnoDB engine has row-level locks, and file operations have read locks; the underlying programs handle these for us. So we usually only need to know which operations and programs already take care of thread safety, and then we can use them safely in multi-threaded code. Programs designed with thread safety in mind are generally called "thread-safe versions". PHP, for example, has a TS build, where TS stands for Thread Safety. The Queue module discussed below is a thread-safe queue data structure, so we can use it in multi-threaded programs with confidence.
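The following minimal sketch (Python 3) shows the locking idea on a shared counter. `SafeCounter` and `count_concurrently` are hypothetical names used for illustration. The lock turns the read-modify-write `value += 1` into an operation that only one thread performs at a time, so no increments are lost.

```python
import threading


class SafeCounter:
    """A counter whose increment is protected by a lock, so concurrent threads cannot lose updates."""

    def __init__(self):
        self.value = 0
        self._lock = threading.Lock()

    def increment(self):
        # "self.value += 1" reads, adds, then writes back; without the lock, two threads
        # can read the same old value and one of the increments is lost.
        with self._lock:
            self.value += 1


def count_concurrently(num_threads=8, per_thread=10000):
    counter = SafeCounter()

    def work():
        for _ in range(per_thread):
            counter.increment()

    threads = [threading.Thread(target=work) for _ in range(num_threads)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return counter.value  # always num_threads * per_thread thanks to the lock
```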

Finally, there is the crucial concept of thread blocking. After studying the threading module, you will know how to create and start threads. But if you create threads, call start(), and do nothing else, you will find that the main thread finishes its own code immediately. Why? The main thread's only job was to start the child threads. The code the child threads run is just a method of the class and does not execute in the main thread. So once the children are started, the main thread has nothing left to do. Any summary it prints afterwards (such as the total running time) comes too early, and if the child threads were marked as daemon threads, the Python process exits as soon as the main thread finishes, killing the child threads mid-task. We therefore want the main thread to wait until all child threads have finished before it exits. Is there a way to hold up the main thread? time.sleep() works in principle, but how long should it sleep? We don't know how long the tasks will take, so that approach is out. What we need is a way to make the main thread wait for the child threads; the proper term for this is "blocking", so a good search query is "python child thread block main thread". Used correctly, a search engine will lead you to join(). A thread's join() method blocks the calling thread: while that child thread is still running, the main thread stops at the join() call and runs the code after it only once the child has finished. Calling join() on every child thread in turn therefore makes the main thread wait for all of them.
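The following minimal sketch (Python 3) shows the main thread waiting with join(). The threads are deliberately daemon threads, the case where skipping join() lets the process exit and kill them. `slow_task` and its 0.2-second sleep are hypothetical stand-ins for a crawling task.

```python
import threading
import time


def slow_task(results, index):
    time.sleep(0.2)           # stand-in for a slow network request
    results[index] = "done"


def run_and_wait(num_threads):
    results = [None] * num_threads
    threads = [
        # daemon=True: these threads would be killed if the main thread exited without waiting
        threading.Thread(target=slow_task, args=(results, i), daemon=True)
        for i in range(num_threads)
    ]
    start = time.time()
    for t in threads:
        t.start()
    for t in threads:
        t.join()              # the main thread blocks here until thread t has finished
    elapsed = time.time() - start
    return results, elapsed
```

Because the threads sleep concurrently, `elapsed` for `run_and_wait(5)` should be close to 0.2 seconds rather than 1 second, and every slot in `results` is filled once the joins return.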

Queue module (queue):

Suppose we need to crawl someone's blog. The blog has two kinds of pages: list.php, which displays links to all of the blog's articles, and view.php, which displays the content of a single article.

To crawl every article on this blog with a single-threaded crawler, the idea is as follows. First, use a regular expression to extract the href attribute of every a tag on list.php and store the links in a variable named article_list (in Python this is called a list rather than an array). Then loop over article_list with a for loop, fetch each page's content, and store it in the database.
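The following minimal sketch (Python 3) illustrates the single-threaded approach. The link pattern is a simplifying assumption: it only handles double-quoted href attributes, and an HTML parser would be more robust. The `fetch` argument is a hypothetical function supplied by the caller in place of a real HTTP download.

```python
import re

# Simplified pattern (assumption): double-quoted href attributes on <a> tags.
LINK_RE = re.compile(r'<a\s[^>]*href="([^"]+)"', re.IGNORECASE)


def crawl_single_threaded(list_html, fetch):
    """Collect article links from list.php HTML, then fetch each one in turn."""
    article_list = LINK_RE.findall(list_html)  # a Python list, not an "array"
    contents = []
    for url in article_list:                   # one request at a time, in order
        contents.append(fetch(url))
    return article_list, contents
```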

To do the same job with a multi-threaded crawler using, say, 10 threads, we must divide article_list into 10 parts and assign one part to each child thread.

Here is the problem: if the length of article_list is not a multiple of 10, the last thread is assigned fewer tasks than the others and finishes earlier.
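The following minimal sketch (Python 3) of static splitting makes the imbalance concrete. Each thread gets a contiguous chunk of `ceil(len / num_threads)` items. The chunking scheme is an illustrative assumption, not necessarily how the original code would split the list.

```python
import math


def split_into_chunks(items, num_threads):
    """Statically split items into contiguous chunks of ceil(len(items) / num_threads)."""
    size = max(1, math.ceil(len(items) / num_threads))  # max() avoids a zero step for empty input
    return [items[i:i + size] for i in range(0, len(items), size)]


if __name__ == "__main__":
    article_list = ["view.php?id=%d" % i for i in range(95)]
    print([len(c) for c in split_into_chunks(article_list, 10)])
    # [10, 10, 10, 10, 10, 10, 10, 10, 10, 5]: the last thread gets half the work and idles early
```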

For blog articles of only a few thousand words this hardly matters. But if each task runs for a long time, the idle threads waste a great deal of resources and time; such a task need not be web crawling at all, and could be mathematical computation, graphics rendering, or another time-consuming job. The purpose of multi-threading is to use all available computing resources and time as fully as possible, so we need a more rational way to allocate tasks.

We also need to consider another situation. When there are many articles, we want to crawl their content quickly and see the results as soon as possible. This requirement is common on CMS content-aggregation sites.

For example, suppose the target blog has tens of millions of articles. A blog like that is usually paginated, so with the traditional approach above, crawling all the list.php pages first would take at least several hours, possibly days. If the boss wants the crawled content to appear on our CMS aggregation site as soon as possible, we must crawl list.php and add the captured URLs to article_list while, at the same time, another thread takes already-captured article URLs out of article_list and uses regular expressions to extract each blog post's content. How can we implement this?

We need to run two kinds of threads at the same time. One kind grabs the URLs in list.php and adds them to article_list. The other kind takes URLs out of article_list and captures the corresponding blog content from the view.php pages.

Remember the concept of thread safety from earlier? The first kind of thread writes to article_list, while the second kind reads from it and deletes the entries it has read. A plain Python list gives no thread-safety guarantee for this kind of combined check-read-delete operation, so the two kinds of thread can interfere with each other and cause unpredictable errors. Instead, we can use a more convenient, thread-safe data structure: the Queue named in this section's heading.
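The following minimal producer/consumer sketch (Python 3) implements the two kinds of thread with a thread-safe queue. The consumers start first and process URLs while the producer is still adding them. Several parts are hypothetical: the list pages are pre-parsed lists of URLs, "fetching" is simulated with a string, and a `None` sentinel (one per consumer) tells each consumer to stop.

```python
import queue
import threading


def producer(list_pages, article_queue, num_consumers):
    for page in list_pages:
        for url in page:                  # hypothetical: each page is already a list of article URLs
            article_queue.put(url)
    for _ in range(num_consumers):
        article_queue.put(None)           # one stop signal per consumer


def consumer(article_queue, results, lock):
    while True:
        url = article_queue.get()         # blocks until an item is available
        if url is None:
            break
        content = "content of " + url     # stand-in for downloading view.php
        with lock:
            results[url] = content


def run_pipeline(list_pages, num_consumers=3):
    article_queue = queue.Queue()         # thread-safe FIFO shared by both kinds of thread
    results, lock = {}, threading.Lock()
    consumers = [threading.Thread(target=consumer, args=(article_queue, results, lock))
                 for _ in range(num_consumers)]
    for c in consumers:
        c.start()
    prod = threading.Thread(target=producer, args=(list_pages, article_queue, num_consumers))
    prod.start()
    prod.join()
    for c in consumers:
        c.join()
    return results
```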

Queue also has a join() method, similar to the join() method in threading described in the previous section. The difference is that Queue.join() blocks until every item put into the queue has been retrieved and marked as processed, which happens when a consumer calls task_done() after finishing the item. Once the count of unfinished tasks drops to zero, execution continues after join(). In this crawler I used this method to block the main thread instead of calling each thread's join(). The advantage is that I don't need to write an infinite loop to check whether any tasks in the queue are still unfinished, which makes the program run more efficiently and the code more elegant.
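The following minimal sketch (Python 3) shows the `Queue.join()` / `task_done()` pattern. `square_all` is a hypothetical task standing in for crawling work. The workers are daemon threads that loop forever, and the main thread waits on the queue rather than on the threads.

```python
import queue
import threading


def square_all(numbers, num_workers=4):
    tasks = queue.Queue()
    results = []
    lock = threading.Lock()

    def worker():
        while True:
            n = tasks.get()
            try:
                with lock:
                    results.append(n * n)
            finally:
                tasks.task_done()  # mark this item as fully processed

    for _ in range(num_workers):
        # daemon=True: the endless worker loops won't keep the process alive afterwards
        threading.Thread(target=worker, daemon=True).start()
    for n in numbers:
        tasks.put(n)
    tasks.join()  # blocks until task_done() has been called once for every put() item
    return sorted(results)
```

`task_done()` sits in a `finally` block so that an exception while processing an item cannot leave `join()` waiting forever.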

One more detail: the queue module is named Queue in Python 2.7 but was renamed queue in Python 3.x, so only the case of the first letter differs. Keep this in mind if you copy code from the Internet.
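One common way to handle the rename is a guarded import, so the same file runs on both versions. The following is a minimal sketch:

```python
try:
    import queue           # Python 3.x name
except ImportError:
    import Queue as queue  # Python 2.7 name

tasks = queue.Queue()
tasks.put("view.php?id=1")
print(tasks.get())  # view.php?id=1
```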

getopt module:

If you have learned C, you will find this module familiar. It extracts the arguments attached to a command on the command line. For example, when working with MySQL from the command line we type mysql -h127.0.0.1 -uroot -p, and the "-h127.0.0.1 -uroot -p" after mysql is the argument part that can be parsed.

When writing crawlers, some parameters must be supplied by the user, such as the MySQL host IP, username, and password. To make the program friendlier and more versatile, these configuration items should not be hard-coded. Instead, they should be passed in at run time, and the getopt module makes this possible.
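The following minimal `getopt` sketch (Python 3, and the same call works in 2.7) parses MySQL-style connection options. The option letters mirror the mysql example above. The long option names, defaults, and `parse_db_options` function are hypothetical. Unlike mysql, `-p` here requires a value, because `getopt` does not support optional option arguments.

```python
import getopt
import sys


def parse_db_options(argv):
    """Parse hypothetical MySQL connection options such as -h127.0.0.1 -uroot -psecret."""
    config = {"host": "127.0.0.1", "user": "root", "password": ""}  # defaults when a flag is absent
    # "h:u:p:" means -h, -u and -p each take a value; "=" marks the same for long options.
    opts, _args = getopt.getopt(argv, "h:u:p:", ["host=", "user=", "password="])
    for opt, value in opts:
        if opt in ("-h", "--host"):
            config["host"] = value
        elif opt in ("-u", "--user"):
            config["user"] = value
        elif opt in ("-p", "--password"):
            config["password"] = value
    return config


if __name__ == "__main__":
    print(parse_db_options(sys.argv[1:]))
```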

hashlib (hash):

A hash is essentially a family of mathematical algorithms with one key property: given an input, it produces an output that is very short yet can, for practical purposes, be treated as unique. MD5 and SHA-1, which you have probably heard of, are hash algorithms. Through a series of mathematical operations, they turn a document or text into a short string of digits and letters (32 hexadecimal characters for MD5, 40 for SHA-1).

Python's hashlib module wraps these algorithms for us, so a single function call is enough to compute a hash.

Why does my crawler use this package? Some interfaces require each request to carry a verification code (a signature) so the server can check that the request data has not been tampered with or lost. These verification codes are usually computed with a hash algorithm, so we use this module to generate them.
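The following minimal `hashlib` sketch (Python 3) computes a request signature. The signing scheme (sorted `key=value` pairs joined by `&`, followed by a shared secret, then MD5) is a hypothetical example. Each real interface defines its own rules, and the original article does not specify one.

```python
import hashlib


def sign_params(params, secret):
    """Hypothetical signature: MD5 of the sorted "key=value" pairs plus a shared secret."""
    # Sorting the keys makes the signature independent of dict ordering.
    payload = "&".join("{}={}".format(k, params[k]) for k in sorted(params)) + secret
    return hashlib.md5(payload.encode("utf-8")).hexdigest()  # 32 hexadecimal characters


if __name__ == "__main__":
    print(hashlib.md5(b"hello").hexdigest())   # 5d41402abc4b2a76b9719d911017c592
    print(sign_params({"page": 2, "kw": "python"}, "my-secret"))
```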

json:

Often the data we capture is not HTML but JSON. JSON is essentially a text format made of key-value pairs. To extract a specific value from it, we use the json module to convert the JSON string into a Python dict.
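The following minimal `json` sketch (Python 3) parses a JSON string into a dict and walks down to a nested value. The sample response body and the `get_nested` helper are hypothetical, since real interfaces define their own structure.

```python
import json

# Hypothetical response body; real interfaces define their own structure.
SAMPLE = '{"error": 0, "data": {"thread": {"title": "hello", "reply_num": 3}}}'


def get_nested(json_text, *keys):
    """Parse a JSON string into Python objects, then walk down the given keys."""
    data = json.loads(json_text)  # JSON object -> dict, array -> list, string -> str
    for key in keys:
        data = data[key]
    return data


if __name__ == "__main__":
    print(get_nested(SAMPLE, "data", "thread", "title"))  # hello
```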

re (regular expression):

Sometimes, after capturing a web page, we need to extract content in a specific format from it. An email address, for example, generally consists of some letters, an @ symbol, and a domain name such as xxx.xxx. To describe such a format in a way the computer understands, we use a regular expression, which lets the computer automatically find every piece of text matching that format in a large string.
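The following minimal `re` sketch (Python 3) pulls email addresses and mobile phone numbers out of a block of text. Both patterns are simplified assumptions rather than the original crawler's expressions and are not complete validators. The email pattern is "local part @ domain . top-level domain". The mobile pattern treats a mainland China number as 11 digits starting with 1 followed by a digit from 3 to 9, not embedded in a longer run of digits.

```python
import re

# Simplified patterns (assumptions, not complete validators).
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
# (?<!\d) and (?!\d) stop the match from being a slice of a longer number.
MOBILE_RE = re.compile(r"(?<!\d)1[3-9]\d{9}(?!\d)")


def extract_contacts(text):
    """Return (emails, mobile_numbers) found in text, in order of appearance."""
    return EMAIL_RE.findall(text), MOBILE_RE.findall(text)
```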

sys:

This module handles system-level matters. In this crawler I use it to solve the output encoding problem.

time:

As anyone with a little English can guess, this module handles time. In this crawler, I use it to record a timestamp when the program starts. At the end of the main thread, I subtract that start timestamp from the current timestamp to get the program's running time.
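The following minimal sketch (Python 3, and `time.time()` also exists in 2.7) measures elapsed time with timestamps as described. `timed` is a hypothetical helper. For measuring durations in Python 3, `time.perf_counter()` is generally preferable, because it is unaffected by system clock changes.

```python
import time


def timed(func, *args):
    """Run func(*args) and return (result, elapsed seconds) using time.time() timestamps."""
    start = time.time()            # timestamp when the work starts
    result = func(*args)
    elapsed = time.time() - start  # end timestamp minus start timestamp
    return result, elapsed


if __name__ == "__main__":
    total, seconds = timed(sum, range(1000000))
    print(total, "computed in %.3f seconds" % seconds)
```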

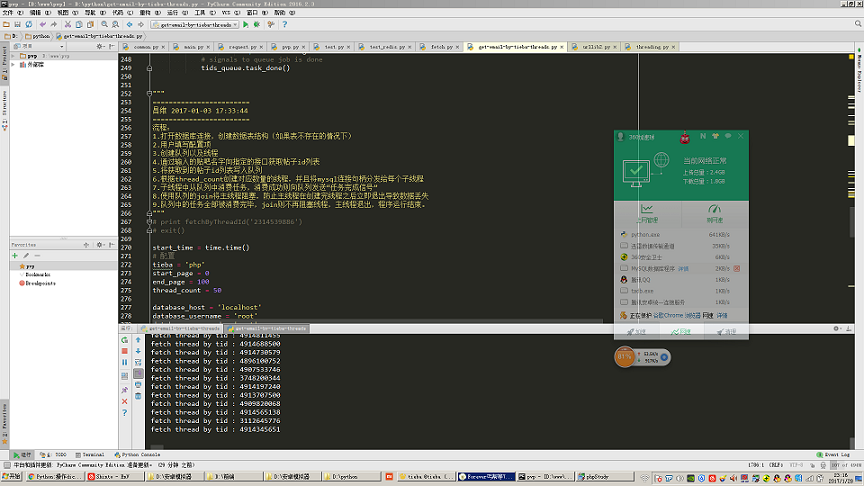

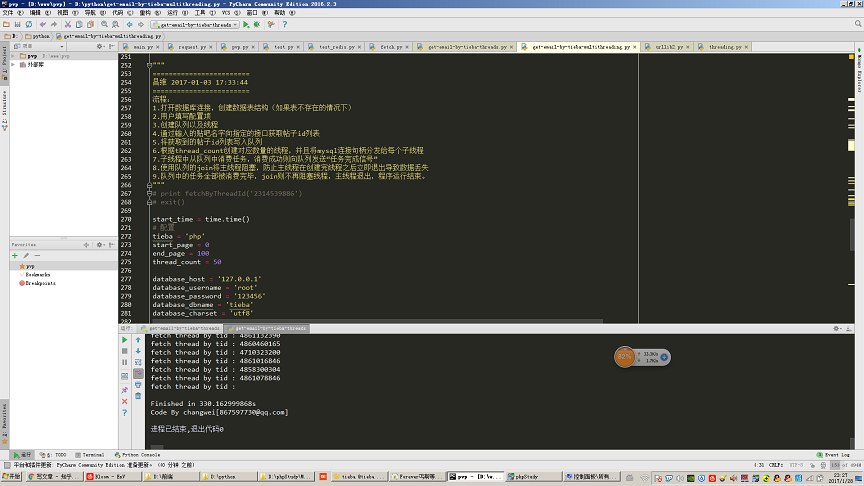

As shown in the figures, running 50 threads to crawl 100 pages (30 posts per page, about 3,000 posts in total) and extract the mobile phone numbers and email addresses from the posts took 330 seconds in total.

urllib and urllib2:

These two modules handle HTTP requests and URL formatting, and the core HTTP request code of my crawler is built on them. In Python 3 they were reorganized into urllib.request and urllib.parse.
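The following minimal sketch (Python 3) shows the URL-formatting side. It builds a request for one page of a hypothetical list.php without sending it. The base URL, the `page` parameter, and the User-Agent value are assumptions. In Python 2.7, the equivalents are `urllib.urlencode` and `urllib2.Request`.

```python
from urllib.parse import urlencode
from urllib.request import Request


def build_list_request(base_url, page, user_agent="Mozilla/5.0"):
    """Build (but do not send) a GET request for one page of a hypothetical list.php."""
    query = urlencode({"page": page})  # e.g. "page=2"; non-ASCII values get percent-encoded
    url = "{}?{}".format(base_url, query)
    # To actually send it: urllib.request.urlopen(request, timeout=10).read()
    return Request(url, headers={"User-Agent": user_agent})


if __name__ == "__main__":
    req = build_list_request("https://example.com/list.php", 2)
    print(req.full_url)  # https://example.com/list.php?page=2
```

`Request` stores header names with only the first letter capitalized, so the header is read back with `req.get_header("User-agent")`.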

MySQLdb:

This is a third-party module for operating MySQL databases from Python.

One detail needs attention here: MySQLdb is not thread-safe at the connection level, which means multiple threads must not share the same MySQL connection handle. That is why, in my code, I pass a new MySQL connection handle to the constructor of each thread, so that each child thread uses only its own independent connection.
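The following minimal sketch (Python 3) illustrates the one-connection-per-thread rule. It substitutes the standard-library `sqlite3` module for MySQLdb so that it runs without a MySQL server; this is a swapped-in database, not the original setup. `sqlite3` is stricter than MySQLdb: by default, a connection refuses to be used from any thread other than the one that created it. For that reason, the sketch opens each connection inside `run()` rather than passing one into the constructor. The table and worker class are hypothetical.

```python
import os
import sqlite3
import tempfile
import threading


class SaveWorker(threading.Thread):
    """Hypothetical worker that stores its rows through its own database connection."""

    def __init__(self, db_path, rows):
        super().__init__()
        self.db_path = db_path
        self.rows = rows

    def run(self):
        # The connection is created in this worker thread and never shared with another thread.
        conn = sqlite3.connect(self.db_path, timeout=30)  # wait if another thread holds the write lock
        try:
            with conn:  # commits on success, rolls back on error
                conn.executemany("INSERT INTO items (value) VALUES (?)", [(r,) for r in self.rows])
        finally:
            conn.close()


def save_in_parallel(batches):
    fd, db_path = tempfile.mkstemp(suffix=".db")
    os.close(fd)
    try:
        setup = sqlite3.connect(db_path)
        setup.execute("CREATE TABLE items (value TEXT)")
        setup.commit()
        setup.close()
        workers = [SaveWorker(db_path, batch) for batch in batches]
        for w in workers:
            w.start()
        for w in workers:
            w.join()
        check = sqlite3.connect(db_path)
        count = check.execute("SELECT COUNT(*) FROM items").fetchone()[0]
        check.close()
        return count
    finally:
        os.remove(db_path)
```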

cmd_color_printers:

This is also a third-party module, and its code can be found online. It prints colored strings to the command line. For example, when the crawler hits an error and we want the message shown in a more conspicuous red, this module makes that easy.

Error handling of automated crawlers:

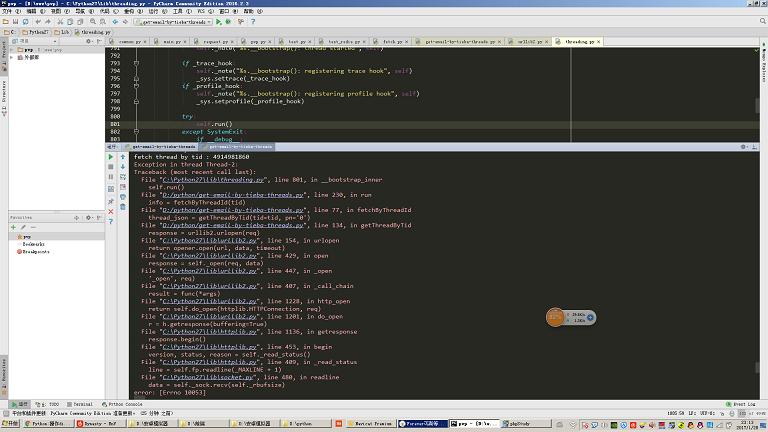

If you run this crawler on a poor-quality network, you will sometimes see the exception shown in the figure above. This code does not handle that exception.

To write a highly automated crawler, we normally need to anticipate every abnormal situation the crawler may encounter and handle each one.

For example, when the error shown in the figure occurs, we should put the task being processed back into the task queue; otherwise, that information will be missed. This is one of the trickier parts of writing a crawler.
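The following minimal sketch (Python 3) re-inserts a failed task into the queue. `FlakyFetcher` is a hypothetical stand-in that fails the first few attempts for every URL, and `MAX_RETRIES` is an assumed limit that keeps a permanently broken URL from looping forever. The failed task is put back before `task_done()` is called, so `tasks.join()` cannot return while a retry is still pending.

```python
import queue
import threading

MAX_RETRIES = 3  # assumed limit; a permanently failing URL is reported instead of retried forever


class FlakyFetcher:
    """Hypothetical fetcher that fails the first `failures` attempts for every URL."""

    def __init__(self, failures):
        self.failures = failures
        self.attempts = {}
        self.lock = threading.Lock()

    def fetch(self, url):
        with self.lock:
            self.attempts[url] = self.attempts.get(url, 0) + 1
            attempt = self.attempts[url]
        if attempt <= self.failures:
            raise ConnectionError("simulated network error for " + url)
        return "content of " + url


def crawl(urls, fetcher, num_workers=4):
    tasks = queue.Queue()
    for url in urls:
        tasks.put((url, 0))  # (url, retries so far)
    results, failed = {}, []
    lock = threading.Lock()

    def worker():
        while True:
            url, retries = tasks.get()
            try:
                page = fetcher.fetch(url)
                with lock:
                    results[url] = page
            except OSError:  # ConnectionError and other network errors
                if retries < MAX_RETRIES:
                    tasks.put((url, retries + 1))  # re-insert the task instead of dropping it
                else:
                    with lock:
                        failed.append(url)
            finally:
                tasks.task_done()  # called after any re-insert, so join() can't finish early

    for _ in range(num_workers):
        threading.Thread(target=worker, daemon=True).start()
    tasks.join()
    return results, failed
```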