As the number of users grows and daily activity and peak load climb, database processing performance comes under heavy pressure. This article shares the database optimization plan used on a real platform with a peak of 100,000+, as a basis for discussion and mutual learning.

Case: Game platform.

1. Solve high concurrency

How the server maintains and handles connections when client connections reach their peak is not discussed here. When many write requests reach the database at once, several tables have to be inserted into, including some tables that accumulate tens of millions of rows per day. As the data grows, writing it synchronously in the traditional way is clearly not advisable. In our experiments, asynchronous insertion improved things considerably, at the cost of some real-time freshness when reading the data.

There are many ways to write asynchronously. The current approach uses a scheduled job to move data from a temporary (staging) table into the real table at regular intervals (5min, 10min...depending on the requirements).

1. There is an original table A, which is also the table that reads actually use.

2. Create tables B and C with the same structure as A for the data transfer. The synchronization flow is C->B->A.

3. Create the job Job1 that synchronizes the data, plus a table that records Job1's running status. The most important step during synchronization is checking Job1's current status. If the data in B is currently being synchronized to A, data arriving from the server is stored in C and then imported into B. That batch is transferred to A the next time the job runs. Figure 1 illustrates the process.

At the same time, for safety and easier troubleshooting, a stored procedure that covers the whole database instance should check the job execution results at a shorter interval. If an abnormal failure occurs, the relevant people should be notified promptly through another channel. For example, write a row to an email and SMS table and let a TCP notification program read it and send the messages periodically.
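A minimal sketch of that check, again using `sqlite3` in place of SQL Server. The `job_log` and `notification_queue` tables and their columns are assumptions made for illustration; the article does not give the real schema.

```python
import sqlite3

def setup_monitoring(conn):
    # Hypothetical tables: one row per job run, and a queue a notifier program polls.
    conn.execute(
        "CREATE TABLE job_log (job TEXT, finished_at TEXT, "
        "succeeded INTEGER, checked INTEGER DEFAULT 0)"
    )
    conn.execute(
        "CREATE TABLE notification_queue (channel TEXT, message TEXT, "
        "sent INTEGER DEFAULT 0)"
    )
    conn.commit()

def check_jobs(conn):
    """Queue one email and one SMS for every failed run not yet checked."""
    failed = conn.execute(
        "SELECT job, finished_at FROM job_log WHERE succeeded = 0 AND checked = 0"
    ).fetchall()
    with conn:
        for job, finished_at in failed:
            for channel in ("email", "sms"):
                conn.execute(
                    "INSERT INTO notification_queue (channel, message) VALUES (?, ?)",
                    (channel, f"{job} failed at {finished_at}"),
                )
        # Mark every run seen so far as checked so it is not reported twice.
        conn.execute("UPDATE job_log SET checked = 1 WHERE checked = 0")
    return len(failed)
```

The notifier only has to poll `notification_queue` for rows with `sent = 0`, so the check itself never depends on the mail or SMS gateway being reachable.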

Note: If the data reaches dozens of GB per day and this table also has to serve queries (partitioning is covered below), one of the best strategies is the following.

Synchronize B to multiple servers so that they share the query load and resource contention is reduced. The resources of a database instance are limited. For example, an insert takes intent locks on the table and the page and an exclusive lock on the row it locates through the clustered index. SQL Server acquires locks of different granularity depending on the amount of data involved, and maintaining those locks consumes memory, which leads to competition for resources. Reads and writes should therefore be separated as far as possible, split by business model or by a defined rule. In platform projects, the priority is to make sure data can be inserted reliably.

Queries over large data sets are unavoidable and will certainly consume a lot of resources. If you need to delete in bulk, switch to deleting in a loop of small batches (such as 2000 rows at a time), so that the operation does not hang the entire database and cause unpredictable bugs. In practice this is effective and feasible; the only cost is storage space. Columns that hold a large amount of data can also be split into new tables according to query requirements. All of this should be decided by the needs of each business scenario, aiming for a solution that fits rather than one that is flashy.
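The batch deletion loop can be sketched like this, with `sqlite3` standing in for SQL Server. The function name and parameters are hypothetical; on SQL Server itself the same idea is usually written as a `WHILE` loop around `DELETE TOP (2000)`.

```python
import sqlite3

def delete_in_batches(conn, table, where_sql, params=(), batch_size=2000):
    """Delete matching rows in small transactions; returns (rows, batches)."""
    total = 0
    batches = 0
    while True:
        with conn:  # each batch commits on its own, so locks are held only briefly
            cur = conn.execute(
                f"DELETE FROM {table} WHERE rowid IN "
                f"(SELECT rowid FROM {table} WHERE {where_sql} LIMIT ?)",
                (*params, batch_size),
            )
        if cur.rowcount == 0:
            break  # nothing left to delete
        total += cur.rowcount
        batches += 1
    return total, batches
```

Because every batch is its own transaction, other sessions get a chance to run between batches instead of waiting for one very large delete to finish.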

2. Solve the storage problem

If a single table grows by dozens of gigabytes every day, the storage design has to be improved. Below is the plan I use to keep the system running as the data keeps soaring, with an example from my own environment.

There is an existing table A that grows by 30 GB per day and is written through the asynchronous synchronization described above. Some tables cannot have their data cleared. For those, after partitioning you can also spread the partitions across filegroups and place the filegroups on different disks, which reduces competition for IO and keeps existing resources running normally. Now add the requirement to retain 5 days of historical data:

1. Use a job to generate the partition scheme from the partition function, partitioning on, for example, userid or a time column;

2. Move the table onto the partition scheme. Once it is partitioned, queries can quickly locate the relevant partition through the corresponding index;

3. Through a job that merges partitions, transfer the data of the partitions that are no longer needed into a table with the same structure and indexes, and then clear that table.

As shown in Figure 2:
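The 5-day retention rule in step 3 can be illustrated with a small in-memory model. This is a sketch only: a dict keyed by day stands in for a table partitioned on a time column, `slide_window` is a hypothetical name, and "5 days" is assumed to include the current day. On SQL Server the corresponding operations would be `ALTER TABLE ... SWITCH PARTITION`, `ALTER PARTITION FUNCTION ... MERGE RANGE`, and `TRUNCATE TABLE` on the staging table.

```python
from datetime import date, timedelta

def slide_window(partitions, today, keep_days=5):
    """Drop partitions older than the retention window; returns rows removed.

    partitions: dict mapping a date to the list of rows in that day's partition.
    """
    cutoff = today - timedelta(days=keep_days - 1)  # oldest day still kept
    staging = []  # stands in for the table with the same structure and indexes
    for day in sorted(d for d in partitions if d < cutoff):
        staging.extend(partitions.pop(day))  # move the expired partition out
    removed = len(staging)
    staging.clear()  # clear the staging table once the data is no longer needed
    return removed
```

Moving whole partitions out and clearing the staging table avoids deleting 30 GB of rows one by one from the live table.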

Capture long-running queries with SQL query tracing, and use SQL Server's built-in stored procedure sp_lock or the views sys.dm_tran_locks and dblockinfo to view the type and granularity of the locks held on the current instance.

Once the specific query statement or stored procedure has been located, prescribe the right medicine for it.

That is the whole of this article. I hope it is of some help to your study or work.

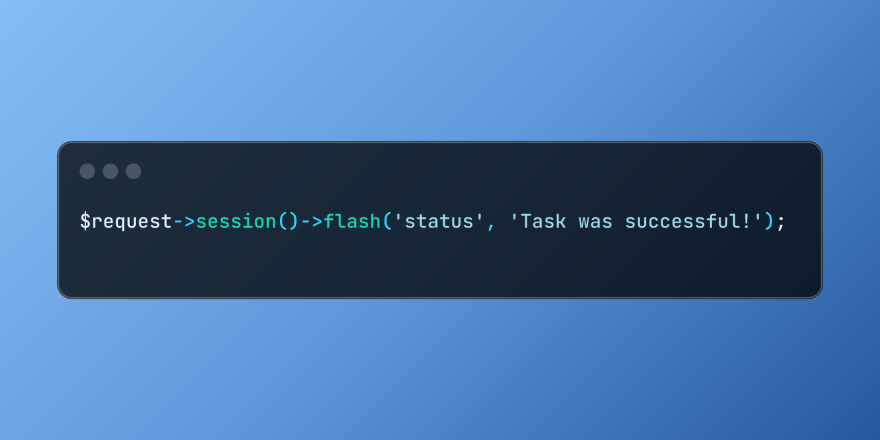
