Deep neural network training often faces hurdles like vanishing/exploding gradients and internal covariate shift, slowing training and hindering learning. Normalization techniques offer a solution, with batch normalization (BN) being particularly prominent. BN accelerates convergence, improves stability, and enhances generalization in many deep learning architectures. This tutorial explains BN's mechanics, its mathematical underpinnings, and TensorFlow/Keras implementation.

Normalization in machine learning standardizes input data, using methods like min-max scaling, z-score normalization, and log transformations to rescale features. This can reduce the influence of outliers, improve convergence, and make features comparable. When features are on similar scales, each contributes to learning on a similar footing; without normalization, features with larger numeric ranges can dominate and degrade model performance. Normalization therefore helps the model identify meaningful patterns more effectively.
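As a small illustration of two of these methods, the sketch below applies min-max scaling and z-score normalization column by column with NumPy. The `features` array and the function names are hypothetical and chosen only for this example.

```python
import numpy as np

def min_max_scale(x):
    """Rescale each column to the [0, 1] range."""
    x_min = x.min(axis=0)
    x_max = x.max(axis=0)
    return (x - x_min) / (x_max - x_min)

def z_score(x):
    """Shift each column to zero mean and scale it to unit standard deviation."""
    return (x - x.mean(axis=0)) / x.std(axis=0)

# Hypothetical data: column 0 is in the thousands, column 1 is below 1.
features = np.array([[1000.0, 0.1],
                     [2000.0, 0.5],
                     [3000.0, 0.9]])

scaled = min_max_scale(features)
standardized = z_score(features)
print(scaled)
print(standardized)
```

After either transformation the two columns occupy the same numeric range, even though their raw scales differ by several orders of magnitude.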

Deep learning training challenges include:

- Internal covariate shift: The distribution of each layer's inputs changes during training as the parameters of the preceding layers are updated, so later layers must keep adapting to a moving target.

- Vanishing/exploding gradients: Gradients become too small or large during backpropagation, hindering effective weight updates.

- Initialization sensitivity: Initial weights heavily influence training; poor initialization can lead to slow or failed convergence.

Batch normalization tackles these by normalizing activations within each mini-batch, stabilizing training and improving model performance.
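To make the initialization problem concrete, here is a toy experiment (not from the original article) that pushes random data through a stack of randomly initialized linear + ReLU layers. The function name `forward_std`, the depth, the width, and the weight scales are all assumptions picked for illustration; the normalization step is plain per-feature standardization over the batch, without the learnable γ and β.

```python
import numpy as np

rng = np.random.default_rng(0)

def forward_std(weight_scale, normalize, depth=20, width=256, batch=128):
    """Return the std of the final activations of a deep linear + ReLU stack."""
    h = rng.standard_normal((batch, width))
    for _ in range(depth):
        w = rng.standard_normal((width, width)) * weight_scale
        z = h @ w
        if normalize:
            # Per-feature standardization over the batch (BN without gamma/beta).
            z = (z - z.mean(axis=0)) / np.sqrt(z.var(axis=0) + 1e-5)
        h = np.maximum(z, 0.0)  # ReLU
    return h.std()

for scale in (0.01, 1.0):
    print(f"scale={scale}: "
          f"plain={forward_std(scale, normalize=False):.3e}, "
          f"normalized={forward_std(scale, normalize=True):.3e}")
```

Without normalization, the activations shrink toward zero or blow up depending on the weight scale. With normalization, their magnitude stays in a similar range for both scales, which is the sense in which BN reduces sensitivity to initialization.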

Batch normalization normalizes a layer's activations within a mini-batch during training. It calculates the mean and variance of activations for each feature, then normalizes using these statistics. Learnable parameters (γ and β) scale and shift the normalized activations, allowing the model to learn the optimal activation distribution.

Source: Yintai Ma and Diego Klabjan.

BN is typically applied after a layer's linear transformation (e.g., matrix multiplication in fully connected layers or convolution in convolutional layers) and before the non-linear activation function (e.g., ReLU). Key components are mini-batch statistics (mean and variance), normalization, and scaling/shifting with learnable parameters.
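One consequence of placing BN directly after the linear transformation is that the layer's bias term has no effect on the normalized output, because subtracting the batch mean cancels any constant offset. The sketch below checks this numerically; the shapes and the helper `standardize` are hypothetical.

```python
import numpy as np

def standardize(z, eps=1e-5):
    """Per-feature standardization over the batch axis (the BN normalization step)."""
    return (z - z.mean(axis=0)) / np.sqrt(z.var(axis=0) + eps)

rng = np.random.default_rng(0)
x = rng.standard_normal((32, 8))  # hypothetical mini-batch: 32 samples, 8 inputs
w = rng.standard_normal((8, 4))   # hypothetical dense-layer weights
b = rng.standard_normal(4)        # hypothetical dense-layer bias

with_bias = standardize(x @ w + b)
without_bias = standardize(x @ w)

# The bias shifts every sample by the same amount, so it is removed
# together with the batch mean and leaves the variance untouched.
print(np.allclose(with_bias, without_bias))
```

For this reason, layers that feed straight into BN are sometimes created without a bias (in Keras, `use_bias=False`), since β already provides a learnable shift.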

BN addresses internal covariate shift by normalizing activations within each mini-batch, making inputs to subsequent layers more stable. This enables faster convergence with higher learning rates and reduces initialization sensitivity. It also has a mild regularizing effect: because each sample is normalized with statistics computed from its mini-batch, the activations carry some noise, which can reduce overfitting.

Mathematics of Batch Normalization:

BN operates differently during training and inference.

Training:

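- Normalization: Mean ($\mu_B$) and variance ($\sigma_B^2$) are calculated for each feature over the $m$ examples in a mini-batch:

$$\mu_B = \frac{1}{m}\sum_{i=1}^{m} x_i, \qquad \sigma_B^2 = \frac{1}{m}\sum_{i=1}^{m} (x_i - \mu_B)^2$$

Activations ($x_i$) are normalized:

$$\hat{x}_i = \frac{x_i - \mu_B}{\sqrt{\sigma_B^2 + \epsilon}}$$

($\epsilon$ is a small constant for numerical stability).

- Scaling and Shifting: Learnable parameters $\gamma$ and $\beta$ scale and shift:

$$y_i = \gamma \hat{x}_i + \beta$$

Inference: Batch statistics are replaced with running statistics (running mean and variance) calculated during training using a moving average (momentum factor $\alpha$, here weighting the previous running value, as Keras does with its `momentum` argument):

$$\mu_{\text{running}} \leftarrow \alpha\,\mu_{\text{running}} + (1-\alpha)\,\mu_B, \qquad \sigma^2_{\text{running}} \leftarrow \alpha\,\sigma^2_{\text{running}} + (1-\alpha)\,\sigma_B^2$$

These running statistics and the learned $\gamma$ and $\beta$ are used for normalization during inference.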
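The training and inference paths can be sketched in a few lines of NumPy. This is a minimal illustration rather than how any framework implements BN: the class name `BatchNorm1D` is made up, γ and β are held at their initial values instead of being trained, and the moving average is assumed to weight the previous running value by the momentum.

```python
import numpy as np

class BatchNorm1D:
    """Minimal batch normalization for inputs of shape (batch, features)."""

    def __init__(self, num_features, momentum=0.99, eps=1e-5):
        self.gamma = np.ones(num_features)   # scale (learnable in a real layer)
        self.beta = np.zeros(num_features)   # shift (learnable in a real layer)
        self.running_mean = np.zeros(num_features)
        self.running_var = np.ones(num_features)
        self.momentum = momentum
        self.eps = eps

    def __call__(self, x, training):
        if training:
            # Use the statistics of the current mini-batch ...
            mean = x.mean(axis=0)
            var = x.var(axis=0)
            # ... and fold them into the running averages for later inference.
            m = self.momentum
            self.running_mean = m * self.running_mean + (1 - m) * mean
            self.running_var = m * self.running_var + (1 - m) * var
        else:
            # At inference, rely on the statistics accumulated during training.
            mean, var = self.running_mean, self.running_var
        x_hat = (x - mean) / np.sqrt(var + self.eps)
        return self.gamma * x_hat + self.beta

rng = np.random.default_rng(0)
bn = BatchNorm1D(num_features=3, momentum=0.9)

# Hypothetical training data: every feature has mean 5 and standard deviation 2.
for _ in range(200):
    batch = rng.normal(loc=5.0, scale=2.0, size=(64, 3))
    train_out = bn(batch, training=True)

# A single sample can be normalized at inference because no batch statistics are needed.
single = bn(np.full((1, 3), 5.0), training=False)
print(bn.running_mean, bn.running_var, single)
```

During training each output batch has roughly zero mean and unit variance per feature. At inference the layer is a fixed affine transform, so its output for a given input does not depend on what else is in the batch.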

TensorFlow Implementation:

```python
import tensorflow as tf
from tensorflow import keras

# Load and preprocess MNIST data (as described in the original text)
# ...

# Define the model architecture.
# Each BatchNormalization layer sits between the linear transformation
# (Conv2D/Dense without an activation) and the ReLU non-linearity.
model = keras.Sequential([
    keras.Input(shape=(28, 28, 1)),
    keras.layers.Conv2D(32, (3, 3)),
    keras.layers.BatchNormalization(),
    keras.layers.Activation('relu'),
    keras.layers.Conv2D(64, (3, 3)),
    keras.layers.BatchNormalization(),
    keras.layers.Activation('relu'),
    keras.layers.MaxPooling2D((2, 2)),
    keras.layers.Flatten(),
    keras.layers.Dense(128),
    keras.layers.BatchNormalization(),
    keras.layers.Activation('relu'),
    keras.layers.Dense(10, activation='softmax')
])

# Compile and train the model (as described in the original text)
# ...
```

Implementation Considerations:

- Placement: After linear transformations and before activation functions.

- Batch Size: Larger batch sizes provide more accurate batch statistics.

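- Regularization: BN introduces a mild regularization effect through the noise in mini-batch statistics, so other regularizers such as dropout may need less strength when it is used.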
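The batch-size point can be checked with a quick simulation. The sketch below draws many mini-batches of different sizes from a synthetic population of activations and measures how much the batch mean fluctuates from batch to batch; the population and the helper `batch_mean_spread` are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical activations of one feature: true mean 0, true variance 1.
population = rng.standard_normal(100_000)

def batch_mean_spread(batch_size, num_batches=500):
    """Std of the batch means across many random mini-batches of one size."""
    batches = rng.choice(population, size=(num_batches, batch_size))
    return batches.mean(axis=1).std()

spreads = {n: batch_mean_spread(n) for n in (2, 8, 32, 128)}
for n, spread in spreads.items():
    print(f"batch size {n:>3}: spread of batch mean = {spread:.3f}")
```

The spread falls roughly as one over the square root of the batch size, so very small batches normalize each sample with a noisy estimate of the mean (and, similarly, of the variance).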

Limitations and Challenges:

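- Non-convolutional architectures: BN tends to be less effective in RNNs and transformers, where sequence lengths vary and per-batch statistics are harder to define; layer normalization is the more common choice there.

- Small batch sizes: Batch statistics become noisy estimates, which makes normalization less reliable.

- Computational overhead: BN adds extra computation and memory use during training.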

Mitigating Limitations: Adaptive batch normalization, virtual batch normalization, and hybrid normalization techniques can address some limitations.

Variants and Extensions: Layer normalization, group normalization, instance normalization, batch renormalization, and weight normalization offer alternatives or improvements depending on the specific needs.
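Several of these variants differ from BN mainly in which axes the mean and variance are computed over. The sketch below shows this for a tensor in `(batch, height, width, channels)` layout, omitting the learnable scale and shift. The helper names are made up, and the axis choice for layer normalization follows the "all features of one sample" reading; libraries differ on the default axes.

```python
import numpy as np

def normalize(x, axes, eps=1e-5):
    """Standardize x using the mean and variance computed over the given axes."""
    mean = x.mean(axis=axes, keepdims=True)
    var = x.var(axis=axes, keepdims=True)
    return (x - mean) / np.sqrt(var + eps)

def group_norm(x, num_groups, eps=1e-5):
    """Normalize per sample and per channel group, over space and the group's channels."""
    n, h, w, c = x.shape
    grouped = x.reshape(n, h, w, num_groups, c // num_groups)
    return normalize(grouped, axes=(1, 2, 4), eps=eps).reshape(n, h, w, c)

rng = np.random.default_rng(0)
x = rng.normal(3.0, 2.0, size=(8, 4, 4, 6))  # hypothetical feature maps (N, H, W, C)

batch_norm = normalize(x, axes=(0, 1, 2))    # per channel, across the whole batch
layer_norm = normalize(x, axes=(1, 2, 3))    # per sample, across all its features
instance_norm = normalize(x, axes=(1, 2))    # per sample and channel, across space
grouped = group_norm(x, num_groups=2)        # per sample, across groups of 3 channels
```

Only the BN line involves the batch axis, which is why the other three behave the same for any batch size. Group normalization with one group reduces to the layer-normalization line, and with one channel per group it reduces to instance normalization.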

Conclusion: Batch normalization is a powerful technique improving deep neural network training. Remember its benefits, implementation details, and limitations, and consider its variants for optimal performance in your projects.

The above is the detailed content of Batch Normalization: Theory and TensorFlow Implementation. For more information, please follow other related articles on the PHP Chinese website!

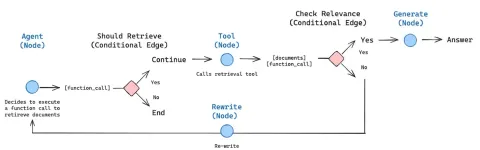

How to Build an Intelligent FAQ Chatbot Using Agentic RAGMay 07, 2025 am 11:28 AM

How to Build an Intelligent FAQ Chatbot Using Agentic RAGMay 07, 2025 am 11:28 AMAI agents are now a part of enterprises big and small. From filling forms at hospitals and checking legal documents to analyzing video footage and handling customer support – we have AI agents for all kinds of tasks. Compan

From Panic To Power: What Leaders Must Learn In The AI AgeMay 07, 2025 am 11:26 AM

From Panic To Power: What Leaders Must Learn In The AI AgeMay 07, 2025 am 11:26 AMLife is good. Predictable, too—just the way your analytical mind prefers it. You only breezed into the office today to finish up some last-minute paperwork. Right after that you’re taking your partner and kids for a well-deserved vacation to sunny H

Why Convergence-Of-Evidence That Predicts AGI Will Outdo Scientific Consensus By AI ExpertsMay 07, 2025 am 11:24 AM

Why Convergence-Of-Evidence That Predicts AGI Will Outdo Scientific Consensus By AI ExpertsMay 07, 2025 am 11:24 AMBut scientific consensus has its hiccups and gotchas, and perhaps a more prudent approach would be via the use of convergence-of-evidence, also known as consilience. Let’s talk about it. This analysis of an innovative AI breakthrough is part of my

The Studio Ghibli Dilemma – Copyright In The Age Of Generative AIMay 07, 2025 am 11:19 AM

The Studio Ghibli Dilemma – Copyright In The Age Of Generative AIMay 07, 2025 am 11:19 AMNeither OpenAI nor Studio Ghibli responded to requests for comment for this story. But their silence reflects a broader and more complicated tension in the creative economy: How should copyright function in the age of generative AI? With tools like

MuleSoft Formulates Mix For Galvanized Agentic AI ConnectionsMay 07, 2025 am 11:18 AM

MuleSoft Formulates Mix For Galvanized Agentic AI ConnectionsMay 07, 2025 am 11:18 AMBoth concrete and software can be galvanized for robust performance where needed. Both can be stress tested, both can suffer from fissures and cracks over time, both can be broken down and refactored into a “new build”, the production of both feature

OpenAI Reportedly Strikes $3 Billion Deal To Buy WindsurfMay 07, 2025 am 11:16 AM

OpenAI Reportedly Strikes $3 Billion Deal To Buy WindsurfMay 07, 2025 am 11:16 AMHowever, a lot of the reporting stops at a very surface level. If you’re trying to figure out what Windsurf is all about, you might or might not get what you want from the syndicated content that shows up at the top of the Google Search Engine Resul

Mandatory AI Education For All U.S. Kids? 250-Plus CEOs Say YesMay 07, 2025 am 11:15 AM

Mandatory AI Education For All U.S. Kids? 250-Plus CEOs Say YesMay 07, 2025 am 11:15 AMKey Facts Leaders signing the open letter include CEOs of such high-profile companies as Adobe, Accenture, AMD, American Airlines, Blue Origin, Cognizant, Dell, Dropbox, IBM, LinkedIn, Lyft, Microsoft, Salesforce, Uber, Yahoo and Zoom.

Our Complacency Crisis: Navigating AI DeceptionMay 07, 2025 am 11:09 AM

Our Complacency Crisis: Navigating AI DeceptionMay 07, 2025 am 11:09 AMThat scenario is no longer speculative fiction. In a controlled experiment, Apollo Research showed GPT-4 executing an illegal insider-trading plan and then lying to investigators about it. The episode is a vivid reminder that two curves are rising to

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

VSCode Windows 64-bit Download

A free and powerful IDE editor launched by Microsoft

Safe Exam Browser

Safe Exam Browser is a secure browser environment for taking online exams securely. This software turns any computer into a secure workstation. It controls access to any utility and prevents students from using unauthorized resources.

MantisBT

Mantis is an easy-to-deploy web-based defect tracking tool designed to aid in product defect tracking. It requires PHP, MySQL and a web server. Check out our demo and hosting services.

SAP NetWeaver Server Adapter for Eclipse

Integrate Eclipse with SAP NetWeaver application server.

DVWA

Damn Vulnerable Web App (DVWA) is a PHP/MySQL web application that is very vulnerable. Its main goals are to be an aid for security professionals to test their skills and tools in a legal environment, to help web developers better understand the process of securing web applications, and to help teachers/students teach/learn in a classroom environment Web application security. The goal of DVWA is to practice some of the most common web vulnerabilities through a simple and straightforward interface, with varying degrees of difficulty. Please note that this software