This article examines the crucial role of Large Language Model (LLM) pretraining in shaping modern AI capabilities, drawing heavily on Andrej Karpathy's "Deep Dive into LLMs like ChatGPT." We'll follow the process from raw data acquisition to the generation of human-like text.

The rapid advancement of AI, exemplified by DeepSeek's cost-effective generative AI model and OpenAI's o3-mini, highlights the accelerating pace of innovation. Sam Altman's observation that the cost of using a given level of AI falls roughly tenfold every year underscores the transformative potential of this technology.

LLM Pretraining: The Foundation

Before looking at how LLMs like ChatGPT generate a response to a question such as "Who is your parent company?", we need to understand the pretraining phase.

Pretraining is the initial phase of training an LLM to understand and generate text. It is akin to teaching a child to read by exposing them to a massive library of books and articles. The model processes billions of words, repeatedly predicting the next word (more precisely, the next token) in a sequence, which gradually refines its ability to produce coherent text. At this stage, however, it lacks human-level understanding: it learns statistical patterns and probabilities.
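To make next-word prediction concrete, the sketch below shows how a single piece of text yields many training examples: each prefix serves as the context and the token that follows it is the target. It splits on whitespace purely for illustration (real LLMs operate on subword tokens), and the function name `next_token_examples` and its `context_size` parameter are hypothetical.

```python
def next_token_examples(tokens, context_size):
    """Turn one token sequence into (context, target) pairs for next-token prediction."""
    examples = []
    for i in range(1, len(tokens)):
        # Context: up to `context_size` tokens immediately preceding position i.
        context = tokens[max(0, i - context_size):i]
        # Target: the token the model should learn to predict at position i.
        examples.append((context, tokens[i]))
    return examples


if __name__ == "__main__":
    words = "the cat sat on the mat".split()  # whitespace split, for illustration only
    for context, target in next_token_examples(words, context_size=3):
        print(context, "->", target)
```

The first pair produced is `['the'] -> cat` and the last is `['sat', 'on', 'the'] -> mat`: one six-word sentence already gives five prediction tasks, which is why pretraining corpora translate into so many training signals.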

What a Pretrained LLM Can Do:

A pretrained LLM can perform numerous tasks, including:

- Text generation and summarization

- Translation and sentiment analysis

- Code generation and question answering

- Content recommendation and powering chatbots

- Data augmentation and analysis across various sectors

However, it requires fine-tuning for optimal performance in specific domains.

The Pretraining Steps:

- Processing Internet Data: The quality and scale of the training data strongly affect LLM performance. Hugging Face's FineWeb dataset, curated from Common Crawl, exemplifies a high-quality approach. Its pipeline involves several steps: URL filtering, text extraction, language filtering, deduplication, and removal of personally identifiable information (PII).

- Tokenization: This converts raw text into smaller units (tokens) that the neural network can process. Techniques like Byte Pair Encoding (BPE) balance sequence length against vocabulary size by repeatedly merging the most frequent adjacent pair of symbols into a new token.

- Neural Network Training: The tokenized data is fed into a neural network, often a Transformer. The network predicts the next token in a sequence, and its parameters are adjusted through backpropagation to minimize prediction error. Internally, input tokens are converted into vectors (embeddings), transformed by layers of mathematical operations, and finally turned into a probability distribution over the vocabulary for the next token.
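The data-processing step can be illustrated with a heavily simplified sketch. It assumes documents arrive as `(url, text)` pairs with text already extracted, skips language filtering entirely, and swaps in simple stand-ins for the real techniques: a tiny hypothetical domain blocklist for URL filtering, exact hash matching for deduplication (production pipelines such as FineWeb rely on near-duplicate detection), and an email regex as the only form of PII removal. Names like `clean_corpus` and `BLOCKED_DOMAINS` are illustrative, not part of any real library.

```python
import hashlib
import re
from urllib.parse import urlparse

# Hypothetical blocklist; real pipelines use large curated domain lists.
BLOCKED_DOMAINS = {"spam.example", "adult.example"}

# Simplified email pattern, standing in for a fuller PII detector.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def keep_url(url):
    """URL filtering: drop documents hosted on blocklisted domains."""
    return urlparse(url).hostname not in BLOCKED_DOMAINS


def redact_pii(text):
    """PII removal (simplified): replace email addresses with a placeholder."""
    return EMAIL_RE.sub("<EMAIL>", text)


def clean_corpus(docs):
    """Apply URL filtering, exact deduplication, and email redaction to (url, text) pairs."""
    seen_hashes = set()
    cleaned = []
    for url, text in docs:
        if not keep_url(url):
            continue
        # Exact dedup: hash the lowercased, whitespace-normalized text.
        normalized = " ".join(text.lower().split())
        digest = hashlib.sha256(normalized.encode("utf-8")).hexdigest()
        if digest in seen_hashes:
            continue
        seen_hashes.add(digest)
        cleaned.append((url, redact_pii(text)))
    return cleaned


if __name__ == "__main__":
    docs = [
        ("https://blog.example/a", "Contact me at alice@example.com for details."),
        ("https://mirror.example/a", "Contact me at  alice@example.com for details."),
        ("https://spam.example/x", "Buy now!"),
    ]
    for url, text in clean_corpus(docs):
        print(url, text)
```

In the demo, the mirrored copy is dropped as a duplicate (it differs only in whitespace), the blocklisted domain is filtered out, and the surviving document has its email address replaced with `<EMAIL>`.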
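The BPE idea from the tokenization step can be sketched in a few lines. This toy trainer starts from raw UTF-8 bytes (IDs 0 to 255) and repeatedly replaces the most frequent adjacent pair with a new token ID. It is a minimal illustration under simplifying assumptions: production tokenizers also pre-split text (for example with a regex) and provide separate encode/decode logic, both omitted here, and the function names are hypothetical.

```python
from collections import Counter


def most_common_pair(ids):
    """Return the most frequent adjacent pair of token IDs, or None if there are fewer than 2 tokens."""
    pairs = Counter(zip(ids, ids[1:]))
    # Ties go to the pair seen first, since Counter preserves insertion order.
    return max(pairs, key=pairs.get) if pairs else None


def merge(ids, pair, new_id):
    """Replace non-overlapping occurrences of `pair` in `ids` with `new_id`, scanning left to right."""
    out = []
    i = 0
    while i < len(ids):
        if i + 1 < len(ids) and (ids[i], ids[i + 1]) == pair:
            out.append(new_id)
            i += 2
        else:
            out.append(ids[i])
            i += 1
    return out


def train_bpe(text, num_merges):
    """Learn up to `num_merges` BPE merges, starting from UTF-8 bytes (IDs 0-255)."""
    ids = list(text.encode("utf-8"))
    merges = {}
    for step in range(num_merges):
        pair = most_common_pair(ids)
        if pair is None:
            break
        new_id = 256 + step  # each merge adds one entry to the vocabulary
        ids = merge(ids, pair, new_id)
        merges[pair] = new_id
    return ids, merges


if __name__ == "__main__":
    text = "aaabdaaabac"
    ids, merges = train_bpe(text, num_merges=3)
    print(len(text.encode("utf-8")), "->", len(ids))  # prints "11 -> 5"
    print(merges)
```

On the string "aaabdaaabac", three merges shrink the sequence from 11 byte tokens to 5 while growing the vocabulary by 3 entries: exactly the sequence-length versus vocabulary-size trade-off that BPE manages.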
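A real Transformer is far too large for a short example, so the sketch below swaps in the simplest neural next-token predictor: a bigram model whose only parameters are a table of logits `W[prev][next]`. It runs the same loop described in the training step (predict the next token, measure the error with cross-entropy loss, adjust the parameters by gradient descent), but uses the closed-form gradient for softmax plus cross-entropy instead of a general backpropagation engine. The token IDs, learning rate, and step count are illustrative assumptions.

```python
import math


def softmax(logits):
    """Convert raw scores into a probability distribution (shifted by the max for stability)."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def train_bigram(token_ids, vocab_size, steps=200, lr=0.5):
    """Train a bigram next-token model by full-batch gradient descent.

    W[prev][nxt] is the logit for token `nxt` following token `prev`. Each step
    computes the average cross-entropy loss over all (prev, next) pairs; for
    softmax + cross-entropy, the gradient w.r.t. the logits is
    (predicted probabilities - one-hot target).
    """
    W = [[0.0] * vocab_size for _ in range(vocab_size)]
    pairs = list(zip(token_ids, token_ids[1:]))
    n = len(pairs)
    losses = []
    for _ in range(steps):
        grad = [[0.0] * vocab_size for _ in range(vocab_size)]
        loss = 0.0
        for prev, nxt in pairs:
            probs = softmax(W[prev])       # forward pass: predict the next token
            loss -= math.log(probs[nxt])   # cross-entropy on the true next token
            for j in range(vocab_size):
                grad[prev][j] += probs[j] - (1.0 if j == nxt else 0.0)
        losses.append(loss / n)
        # Parameter update: step against the averaged gradient.
        for i in range(vocab_size):
            for j in range(vocab_size):
                W[i][j] -= lr * grad[i][j] / n
    return W, losses


if __name__ == "__main__":
    ids = [0, 1, 2, 0, 1, 2, 0, 1, 3]  # toy token IDs, vocabulary of size 4
    W, losses = train_bigram(ids, vocab_size=4)
    print(f"loss: {losses[0]:.3f} -> {losses[-1]:.3f}")
    print("P(next | 1):", [round(p, 2) for p in softmax(W[1])])
```

Because token 1 is followed by 2 twice and by 3 once in the toy data, the loss cannot reach zero; the model can only learn the probabilities, which mirrors the statistical patterns a base model captures.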

Base Model and Inference:

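The resulting pretrained model (the base model) is a statistical text generator: impressive, but without true understanding. GPT-2 serves as an example that demonstrates both the capabilities and the limitations of a base model. Inference generates text one token at a time: the model outputs a probability distribution over the next token, a token is sampled from that distribution and appended to the sequence, and the process repeats.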
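The token-by-token inference loop can be sketched as follows. The "model" here is a hypothetical hand-written bigram table standing in for a trained network's output distribution, and `temperature` is a common sampling knob rather than something the article specifies: dividing logits by T is equivalent to raising the probabilities to the power 1/T and renormalizing. A fixed seed makes runs reproducible.

```python
import random


def sample_next(probs, temperature, rng):
    """Sample a token ID from `probs`, sharpened (T < 1) or flattened (T > 1) by temperature."""
    weights = [p ** (1.0 / temperature) for p in probs]
    total = sum(weights)
    weights = [w / total for w in weights]
    return rng.choices(range(len(probs)), weights=weights, k=1)[0]


def generate(next_token_probs, prompt, max_new_tokens, temperature=1.0, seed=0):
    """Autoregressive generation: predict a distribution, sample one token, append, repeat."""
    rng = random.Random(seed)
    tokens = list(prompt)
    for _ in range(max_new_tokens):
        probs = next_token_probs(tokens)  # the "model": context -> distribution over the vocabulary
        tokens.append(sample_next(probs, temperature, rng))
    return tokens


# Hypothetical toy "model": a bigram table that only looks at the last token.
VOCAB = ["the", "cat", "sat", "on", "mat"]
TABLE = {
    0: [0.0, 0.6, 0.0, 0.0, 0.4],  # after "the": "cat" or "mat"
    1: [0.0, 0.0, 1.0, 0.0, 0.0],  # after "cat": "sat"
    2: [0.0, 0.0, 0.0, 1.0, 0.0],  # after "sat": "on"
    3: [1.0, 0.0, 0.0, 0.0, 0.0],  # after "on": "the"
    4: [1.0, 0.0, 0.0, 0.0, 0.0],  # after "mat": "the"
}


def toy_model(tokens):
    return TABLE[tokens[-1]]


if __name__ == "__main__":
    out = generate(toy_model, prompt=[0], max_new_tokens=8, temperature=0.7, seed=42)
    print(" ".join(VOCAB[t] for t in out))
```

Changing the seed or temperature changes which branch is taken after "the", while deterministic transitions (probability 1.0) are always followed, which illustrates why the same prompt can yield different outputs from a base model.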

Conclusion:

LLM pretraining is foundational to modern AI. While powerful, these models are not sentient, relying on statistical patterns. Ongoing advancements in pretraining will continue to drive progress towards more capable and accessible AI. The video link is included below:

[Video Link: https://www.php.cn/link/ce738adf821b780cfcde4100e633e51a]

The above is the detailed content of A Comprehensive Guide to LLM Pretraining. For more information, please follow other related articles on the PHP Chinese website!

A Business Leader's Guide To Generative Engine Optimization (GEO)May 03, 2025 am 11:14 AM

A Business Leader's Guide To Generative Engine Optimization (GEO)May 03, 2025 am 11:14 AMGoogle is leading this shift. Its "AI Overviews" feature already serves more than one billion users, providing complete answers before anyone clicks a link.[^2] Other players are also gaining ground fast. ChatGPT, Microsoft Copilot, and Pe

This Startup Is Using AI Agents To Fight Malicious Ads And Impersonator AccountsMay 03, 2025 am 11:13 AM

This Startup Is Using AI Agents To Fight Malicious Ads And Impersonator AccountsMay 03, 2025 am 11:13 AMIn 2022, he founded social engineering defense startup Doppel to do just that. And as cybercriminals harness ever more advanced AI models to turbocharge their attacks, Doppel’s AI systems have helped businesses combat them at scale— more quickly and

How World Models Are Radically Reshaping The Future Of Generative AI And LLMsMay 03, 2025 am 11:12 AM

How World Models Are Radically Reshaping The Future Of Generative AI And LLMsMay 03, 2025 am 11:12 AMVoila, via interacting with suitable world models, generative AI and LLMs can be substantively boosted. Let’s talk about it. This analysis of an innovative AI breakthrough is part of my ongoing Forbes column coverage on the latest in AI, including

May Day 2050: What Have We Left To Celebrate?May 03, 2025 am 11:11 AM

May Day 2050: What Have We Left To Celebrate?May 03, 2025 am 11:11 AMLabor Day 2050. Parks across the nation fill with families enjoying traditional barbecues while nostalgic parades wind through city streets. Yet the celebration now carries a museum-like quality — historical reenactment rather than commemoration of c

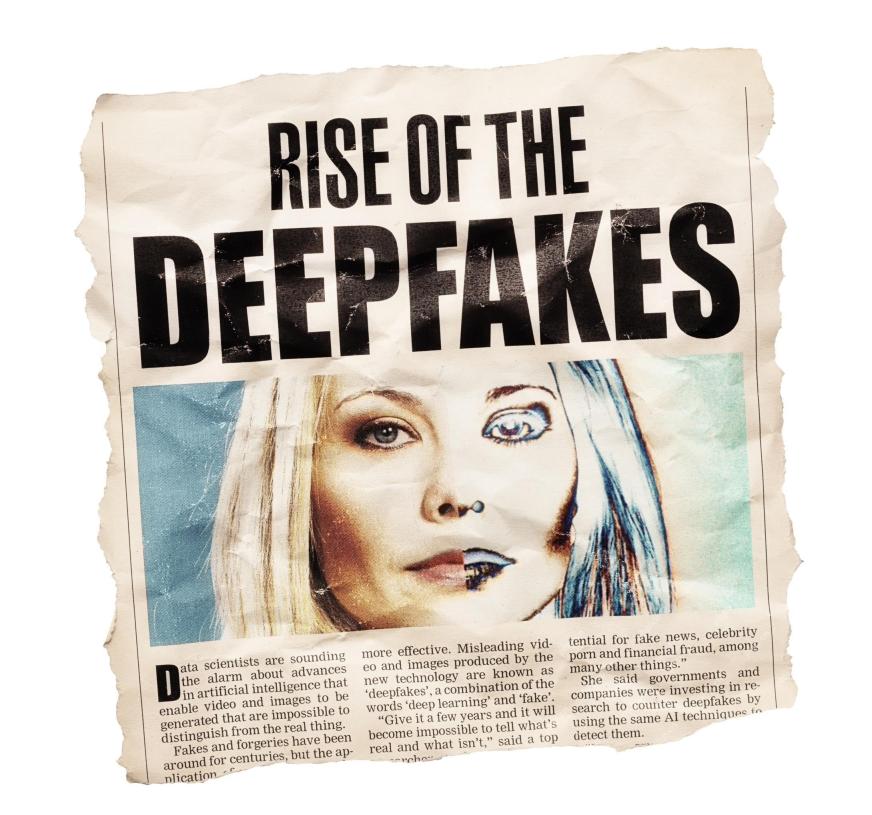

The Deepfake Detector You've Never Heard Of That's 98% AccurateMay 03, 2025 am 11:10 AM

The Deepfake Detector You've Never Heard Of That's 98% AccurateMay 03, 2025 am 11:10 AMTo help address this urgent and unsettling trend, a peer-reviewed article in the February 2025 edition of TEM Journal provides one of the clearest, data-driven assessments as to where that technological deepfake face off currently stands. Researcher

Quantum Talent Wars: The Hidden Crisis Threatening Tech's Next FrontierMay 03, 2025 am 11:09 AM

Quantum Talent Wars: The Hidden Crisis Threatening Tech's Next FrontierMay 03, 2025 am 11:09 AMFrom vastly decreasing the time it takes to formulate new drugs to creating greener energy, there will be huge opportunities for businesses to break new ground. There’s a big problem, though: there’s a severe shortage of people with the skills busi

The Prototype: These Bacteria Can Generate ElectricityMay 03, 2025 am 11:08 AM

The Prototype: These Bacteria Can Generate ElectricityMay 03, 2025 am 11:08 AMYears ago, scientists found that certain kinds of bacteria appear to breathe by generating electricity, rather than taking in oxygen, but how they did so was a mystery. A new study published in the journal Cell identifies how this happens: the microb

AI And Cybersecurity: The New Administration's 100-Day ReckoningMay 03, 2025 am 11:07 AM

AI And Cybersecurity: The New Administration's 100-Day ReckoningMay 03, 2025 am 11:07 AMAt the RSAC 2025 conference this week, Snyk hosted a timely panel titled “The First 100 Days: How AI, Policy & Cybersecurity Collide,” featuring an all-star lineup: Jen Easterly, former CISA Director; Nicole Perlroth, former journalist and partne

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

WebStorm Mac version

Useful JavaScript development tools

MinGW - Minimalist GNU for Windows

This project is in the process of being migrated to osdn.net/projects/mingw, you can continue to follow us there. MinGW: A native Windows port of the GNU Compiler Collection (GCC), freely distributable import libraries and header files for building native Windows applications; includes extensions to the MSVC runtime to support C99 functionality. All MinGW software can run on 64-bit Windows platforms.

ZendStudio 13.5.1 Mac

Powerful PHP integrated development environment

Zend Studio 13.0.1

Powerful PHP integrated development environment

EditPlus Chinese cracked version

Small size, syntax highlighting, does not support code prompt function