ReFT: A Revolutionary Approach to Fine-tuning LLMs

ReFT (Representation Finetuning), introduced in Stanford's May 2024 paper, offers a groundbreaking method for efficiently fine-tuning large language models (LLMs). Its potential was immediately apparent, further highlighted by Oxen.ai's July 2024 experiment fine-tuning Llama3 (8B) on a single Nvidia A10 GPU in just 14 minutes.

Unlike existing Parameter-Efficient Fine-Tuning (PEFT) methods, which modify model weights (LoRA) or model inputs (Prompt Tuning), ReFT edits the model's hidden representations. It builds on the Distributed Interchange Intervention (DII) method, which projects hidden representations into a lower-dimensional subspace and applies the fine-tuning intervention within that subspace.

This article first reviews popular PEFT algorithms (LoRA, Prompt Tuning, Prefix Tuning), then explains DII, before delving into ReFT and its experimental results.

Parameter-Efficient Fine-Tuning (PEFT) Techniques

Hugging Face provides a comprehensive overview of PEFT techniques. Let's briefly summarize key methods:

LoRA (Low-Rank Adaptation): Introduced in 2021, LoRA's simplicity and generalizability have made it a leading technique for fine-tuning LLMs and diffusion models. Instead of adjusting all layer weights, LoRA adds low-rank matrices, significantly reducing trainable parameters (often less than 0.3%), accelerating training and minimizing GPU memory usage.
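
To make the low-rank idea concrete, here is a minimal NumPy sketch of a LoRA-style forward pass. It assumes the update form W + (alpha / r) * B A described in the LoRA paper; the layer sizes, the rank, and the `alpha` value are illustrative choices, not figures from this article.

```python
import numpy as np

rng = np.random.default_rng(0)

# Illustrative sizes (assumptions, not taken from the article).
d_in, d_out, rank = 64, 64, 4
alpha = 8.0  # LoRA scaling hyperparameter (assumed value)

W = rng.normal(size=(d_out, d_in))              # frozen pretrained weight
A = rng.normal(scale=0.01, size=(rank, d_in))   # trainable, small random init
B = np.zeros((d_out, rank))                     # trainable, zero init

def lora_forward(x):
    """Return x W^T + (alpha / rank) * x A^T B^T for a batch of row vectors."""
    return x @ W.T + (alpha / rank) * (x @ A.T @ B.T)

x = rng.normal(size=(2, d_in))
base_out = x @ W.T
lora_out = lora_forward(x)

# Only A and B are trained; W stays frozen.
full_params = W.size
lora_params = A.size + B.size
trainable_fraction = lora_params / full_params
```

Because B starts at zero, the adapted layer initially reproduces the frozen layer exactly. In this toy layer the trainable fraction is large because the matrix is tiny; the fraction shrinks as the layer dimension grows relative to the rank.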

Prompt Tuning: This method uses "soft prompts"—learnable task-specific embeddings—as prefixes, enabling efficient multi-task prediction without duplicating the model for each task.
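
As a rough illustration of soft prompts, the sketch below prepends a small trainable matrix to the frozen token embeddings before they enter the model. The helper `build_inputs`, the sizes, and the token ids are hypothetical and chosen only for the example.

```python
import numpy as np

rng = np.random.default_rng(0)

# Illustrative sizes (assumptions).
vocab_size, d_model, n_soft = 100, 16, 5

embedding_table = rng.normal(size=(vocab_size, d_model))      # frozen
soft_prompt = rng.normal(scale=0.5, size=(n_soft, d_model))   # trainable, one per task

def build_inputs(token_ids, soft_prompt):
    """Prepend the task's soft prompt to the frozen token embeddings."""
    token_embeds = embedding_table[np.asarray(token_ids)]
    return np.concatenate([soft_prompt, token_embeds], axis=0)

inputs = build_inputs([3, 14, 15], soft_prompt)
```

Switching tasks only means swapping `soft_prompt`; the embedding table and the rest of the model are shared across tasks.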

Prefix Tuning (P-Tuning v2): Addressing limitations of prompt tuning at scale, Prefix Tuning adds trainable prompt embeddings to various layers, allowing task-specific learning at different levels.

LoRA's robustness and efficiency make it the most widely used PEFT method for LLMs. A detailed empirical comparison can be found in Pu et al. (see References).

Distributed Interchange Intervention (DII)

DII is rooted in causal abstraction, a framework that uses interventions to assess whether a high-level (causal) model and a low-level (neural network) model align. DII performs the intervention in a subspace of the network's hidden representation: an orthogonal projection selects the subspace, and the base representation's component in that subspace is replaced with the corresponding component of a source (counterfactual) representation. A detailed visual example is available here.

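The DII process can be mathematically represented as:

$$\mathrm{DII}(b, s, R) = b + R^{\top}(Rs - Rb)$$

where b is the hidden representation for the base input, s is the hidden representation for the source (counterfactual) input, and R is a low-rank projection matrix with orthonormal rows. Distributed alignment search (DAS) optimizes the subspace to maximize the probability of the expected counterfactual output after the intervention.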
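
The following NumPy sketch illustrates the intervention under the assumption that it takes the form DII(b, s, R) = b + Rᵀ(Rs − Rb) given in the ReFT paper, with R having orthonormal rows. The dimensions and random vectors are illustrative, and R is drawn at random here rather than learned by DAS.

```python
import numpy as np

rng = np.random.default_rng(0)
d, r = 8, 2  # hidden size and subspace rank (illustrative)

# Build R with orthonormal rows: reduced QR gives Q (d x r) with
# orthonormal columns, so R = Q^T satisfies R @ R.T = I_r.
Q, _ = np.linalg.qr(rng.normal(size=(d, r)))
R = Q.T

def dii(b, s, R):
    """Swap the component of b inside the row space of R for that of s."""
    return b + R.T @ (R @ s - R @ b)

b = rng.normal(size=d)   # base representation
s = rng.normal(size=d)   # source (counterfactual) representation
out = dii(b, s, R)

# Projector onto the orthogonal complement of the subspace.
P_perp = np.eye(d) - R.T @ R
```

After the intervention, the output agrees with the source inside the subspace (`R @ out` equals `R @ s`) and with the base everywhere else (`P_perp @ out` equals `P_perp @ b`).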

ReFT – Representation Finetuning

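ReFT intervenes on the model's hidden representations within a lower-dimensional subspace. Each intervention (phi) is applied to the hidden representation at a chosen layer L and position P.

LoReFT (Low-rank Linear Subspace ReFT) introduces a learned projected source:

$$\Phi_{\mathrm{LoReFT}}(h) = h + R^{\top}(Wh + b - Rh)$$

where h is the hidden representation, b is a learned bias, and the learned projected source Rs = Wh + b edits h in the low-dimensional space spanned by the rows of R. Within the network, the edited representation replaces h at the chosen layer and positions and is passed on to the following layers.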

During LLM fine-tuning, the LLM parameters remain frozen, and only the projection parameters (phi={R, W, b}) are trained.
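
A minimal NumPy sketch of a single LoReFT intervention follows, assuming the form Φ(h) = h + Rᵀ(Wh + b − Rh) from the ReFT paper with R having orthonormal rows. The sizes are illustrative, the parameters are random rather than trained, and the function name `loreft` is hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)
d, r = 8, 2  # hidden size and intervention rank (illustrative)

# Trainable parameters phi = {R, W, b}; the LLM itself stays frozen.
Q, _ = np.linalg.qr(rng.normal(size=(d, r)))
R = Q.T                          # (r, d), orthonormal rows
W = rng.normal(size=(r, d))      # linear map producing the projected source
bias = rng.normal(size=r)        # the "b" in phi

def loreft(h, R, W, bias):
    """Edit h so its projection onto the rows of R becomes W h + bias."""
    return h + R.T @ (W @ h + bias - R @ h)

h = rng.normal(size=d)           # hidden representation at one layer/position
h_new = loreft(h, R, W, bias)

# Parameters per intervention: 2 * r * d + r.
n_trainable = R.size + W.size + bias.size
```

Inside the subspace, the edited representation takes the learned value (`R @ h_new` equals `W @ h + bias`), while the part of h orthogonal to the subspace is left untouched.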

Experimental Results

The original ReFT paper compares ReFT against full fine-tuning (FT), LoRA, and Prefix Tuning across various benchmarks. In the reported results, ReFT methods generally match or outperform these baselines while reducing trainable parameters by at least 90%.

Discussion

ReFT's appeal stems from its superior performance with Llama-family models across diverse benchmarks and its grounding in causal abstraction, which aids model interpretability. ReFT demonstrates that a linear subspace distributed across neurons can effectively control numerous tasks, offering valuable insights into LLMs.

References

- Wu et al., ReFT: Representation Finetuning for Language Models

- Hu et al., LoRA: Low-Rank Adaptation of Large Language Models

- Zhuang et al., Time-Varying LoRA

- Liu et al., P-tuning v2

- Geiger et al., Finding alignments between interpretable causal variables and distributed neural representations

- Lester et al., The power of scale for parameter-efficient prompt tuning

- Pu et al., Empirical analysis of the strengths and weaknesses of Peft techniques for LLMs

(Note: Please replace the bracketed https://www.php.cn/link/6c11cb78b7bbb5c22d5f5271b5494381 placeholders with the actual links to the research papers.)

The above is the detailed content of Is ReFT All We Needed?. For more information, please follow other related articles on the PHP Chinese website!

Personal Hacking Will Be A Pretty Fierce BearMay 11, 2025 am 11:09 AM

Personal Hacking Will Be A Pretty Fierce BearMay 11, 2025 am 11:09 AMCyberattacks are evolving. Gone are the days of generic phishing emails. The future of cybercrime is hyper-personalized, leveraging readily available online data and AI to craft highly targeted attacks. Imagine a scammer who knows your job, your f

Pope Leo XIV Reveals How AI Influenced His Name ChoiceMay 11, 2025 am 11:07 AM

Pope Leo XIV Reveals How AI Influenced His Name ChoiceMay 11, 2025 am 11:07 AMIn his inaugural address to the College of Cardinals, Chicago-born Robert Francis Prevost, the newly elected Pope Leo XIV, discussed the influence of his namesake, Pope Leo XIII, whose papacy (1878-1903) coincided with the dawn of the automobile and

FastAPI-MCP Tutorial for Beginners and Experts - Analytics VidhyaMay 11, 2025 am 10:56 AM

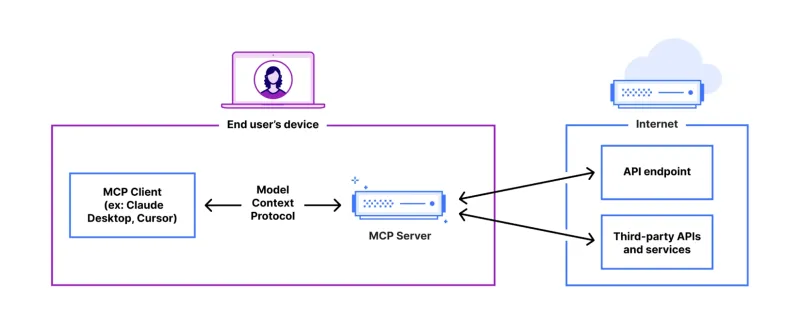

FastAPI-MCP Tutorial for Beginners and Experts - Analytics VidhyaMay 11, 2025 am 10:56 AMThis tutorial demonstrates how to integrate your Large Language Model (LLM) with external tools using the Model Context Protocol (MCP) and FastAPI. We'll build a simple web application using FastAPI and convert it into an MCP server, enabling your L

Dia-1.6B TTS : Best Text-to-Dialogue Generation Model - Analytics VidhyaMay 11, 2025 am 10:27 AM

Dia-1.6B TTS : Best Text-to-Dialogue Generation Model - Analytics VidhyaMay 11, 2025 am 10:27 AMExplore Dia-1.6B: A groundbreaking text-to-speech model developed by two undergraduates with zero funding! This 1.6 billion parameter model generates remarkably realistic speech, including nonverbal cues like laughter and sneezes. This article guide

3 Ways AI Can Make Mentorship More Meaningful Than EverMay 10, 2025 am 11:17 AM

3 Ways AI Can Make Mentorship More Meaningful Than EverMay 10, 2025 am 11:17 AMI wholeheartedly agree. My success is inextricably linked to the guidance of my mentors. Their insights, particularly regarding business management, formed the bedrock of my beliefs and practices. This experience underscores my commitment to mentor

AI Unearths New Potential In The Mining IndustryMay 10, 2025 am 11:16 AM

AI Unearths New Potential In The Mining IndustryMay 10, 2025 am 11:16 AMAI Enhanced Mining Equipment The mining operation environment is harsh and dangerous. Artificial intelligence systems help improve overall efficiency and security by removing humans from the most dangerous environments and enhancing human capabilities. Artificial intelligence is increasingly used to power autonomous trucks, drills and loaders used in mining operations. These AI-powered vehicles can operate accurately in hazardous environments, thereby increasing safety and productivity. Some companies have developed autonomous mining vehicles for large-scale mining operations. Equipment operating in challenging environments requires ongoing maintenance. However, maintenance can keep critical devices offline and consume resources. More precise maintenance means increased uptime for expensive and necessary equipment and significant cost savings. AI-driven

Why AI Agents Will Trigger The Biggest Workplace Revolution In 25 YearsMay 10, 2025 am 11:15 AM

Why AI Agents Will Trigger The Biggest Workplace Revolution In 25 YearsMay 10, 2025 am 11:15 AMMarc Benioff, Salesforce CEO, predicts a monumental workplace revolution driven by AI agents, a transformation already underway within Salesforce and its client base. He envisions a shift from traditional markets to a vastly larger market focused on

AI HR Is Going To Rock Our Worlds As AI Adoption SoarsMay 10, 2025 am 11:14 AM

AI HR Is Going To Rock Our Worlds As AI Adoption SoarsMay 10, 2025 am 11:14 AMThe Rise of AI in HR: Navigating a Workforce with Robot Colleagues The integration of AI into human resources (HR) is no longer a futuristic concept; it's rapidly becoming the new reality. This shift impacts both HR professionals and employees, dem

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Zend Studio 13.0.1

Powerful PHP integrated development environment

WebStorm Mac version

Useful JavaScript development tools

PhpStorm Mac version

The latest (2018.2.1) professional PHP integrated development tool

ZendStudio 13.5.1 Mac

Powerful PHP integrated development environment

SublimeText3 Mac version

God-level code editing software (SublimeText3)