This week, I've been working on a command-line tool I named codeshift, which lets users input source code files, choose a programming language, and translate the files into that language.

There's no fancy stuff going on under the hood - it just uses an AI provider called Groq to handle the translation - but I wanted to get into the development process, how it's used, and what features it offers.

uday-rana/codeshift

codeshift

Command-line tool that transforms source code files into any language.

Installation

- Install Node.js

- Get a Groq API key

- Clone repo with Git or download as a .zip

- Within the repo directory containing package.json, run npm install

- (Optional) Run npm install -g . to install the package globally (to let you run it without prefixing node)

- Create a file called .env and add your Groq API key: GROQ_API_KEY=API_KEY_HERE
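As a rough illustration of the last step, here is a minimal sketch of how a KEY=VALUE file like .env can be read in Node. The `parseEnv` helper is hypothetical and hand-rolled for this example; codeshift itself may well rely on a package such as dotenv instead.

```javascript
// Minimal KEY=VALUE parser that skips blank lines and # comments.
// Assumption: this is an illustration only, not codeshift's actual loader.
function parseEnv(text) {
  const env = {};
  for (const line of text.split(/\r?\n/)) {
    const trimmed = line.trim();
    if (!trimmed || trimmed.startsWith('#')) continue;
    const eq = trimmed.indexOf('=');
    if (eq === -1) continue; // not a KEY=VALUE line
    env[trimmed.slice(0, eq).trim()] = trimmed.slice(eq + 1).trim();
  }
  return env;
}

// Same shape as the line added to .env in the step above.
console.log(parseEnv('GROQ_API_KEY=API_KEY_HERE').GROQ_API_KEY); // prints API_KEY_HERE
```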

Features

- Accepts multiple input files

- Can choose output language

- Streams output to stdout

- Can specify file path to write output to file

- Can use custom API key in .env

Usage

codeshift [-o <output-file>] <language> <input-files...>

For example, to translate the file examples/index.js to Go and save the output to index.go:

codeshift -o index.go go examples/index.js

Options

- -o, --output: Specify filename to write output to

- -h, --help: Display help for a command

- -v, --version: Output the version number

Arguments

- <language>: The desired language to convert source files to

- <input-files...>: Paths to the source files, separated by spaces
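To make the shape of the command line concrete, here is a minimal sketch of parsing it. codeshift itself uses commander; this example swaps in Node's built-in `util.parseArgs` so it runs without dependencies, and `parseCli` is a hypothetical name chosen for illustration.

```javascript
const { parseArgs } = require('node:util');

// Parse a codeshift-style argument list: an optional -o/--output flag,
// then the target language, then one or more input file paths.
function parseCli(argv) {
  const { values, positionals } = parseArgs({
    args: argv,
    options: {
      output: { type: 'string', short: 'o' },
    },
    allowPositionals: true,
  });

  const [language, ...files] = positionals;
  if (!language || files.length === 0) {
    throw new Error('Expected a target language followed by at least one file path');
  }
  return { output: values.output, language, files };
}

// Mirrors the example above: codeshift -o index.go go examples/index.js
console.log(parseCli(['-o', 'index.go', 'go', 'examples/index.js']));
```

The first positional argument is treated as the language and everything after it as file paths, which is why the language has to come before the files.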

Development

I've been working on this project as part of the Topics in Open Source Development course at Seneca Polytechnic in Toronto, Ontario. Starting out, I wanted to stick with technologies I was comfortable with, but the instructions for the project encouraged us to learn something new, like a new programming language or a new runtime.

Although I'd been wanting to learn Java, after doing some research online, it seemed like it wasn't a great choice for developing a CLI tool or interfacing with AI models. It isn't officially supported by OpenAI, and the community library featured in their docs is deprecated.

I've always been one to stick with the popular technologies - they tend to be reliable and have complete documentation and tons of information available online. But this time, I decided to do things differently. I decided to use Bun, a cool new runtime for JavaScript meant to replace Node.

Turns out I should've stuck with my gut. I ran into trouble trying to compile my project and all I could do was hope the developers would fix the issue.

Can not use OpenAI SDK with Sentry Node agent: TypeError: getDefaultAgent is not a function

#1010

Confirm this is a Node library issue and not an underlying OpenAI API issue

- [X] This is an issue with the Node library

Describe the bug

Referenced previously here, closed without resolution: https://github.com/openai/openai-node/issues/903

This is a pretty big issue as it prevents usage of the SDK while using the latest Sentry monitoring package.

To Reproduce

- Install Sentry Node sdk via npm i @sentry/node --save

- Enter the following code;

import * as Sentry from '@sentry/node';

// Start Sentry
Sentry.init({
  dsn: "https://your-sentry-url",
  environment: "your-env",
  tracesSampleRate: 1.0, // Capture 100% of the transactions
});

- Try to create a completion somewhere in the process after Sentry has been initialized:

const params = {
  model: model,
  stream: true,
  stream_options: {
    include_usage: true
  },
  messages
};

const completion = await openai.chat.completions.create(params);

Results in error:

TypeError: getDefaultAgent is not a function

at OpenAI.buildRequest (file:///my-project/node_modules/openai/core.mjs:208:66)

at OpenAI.makeRequest (file:///my-project/node_modules/openai/core.mjs:279:44)

Code snippets

(Included)

OS

All operating systems (macOS, Linux)

Node version

v20.10.0

Library version

v4.56.0

This turned me away from Bun. I'd found out from our professor we were going to compile an executable later in the course, and I did not want to deal with Bun's problems down the line.

So, I switched to Node. It was painful going from Bun's easy-to-use built-in APIs to having to learn how to use commander for Node. But at least it wouldn't crash.

I had previous experience working with AI models through code thanks to my co-op, but I was unfamiliar with creating a command-line tool. Configuring the options and arguments turned out to be the most time-consuming aspect of the project.

Apart from the core feature we chose for each of our projects - mine being code translation - we were asked to implement any two additional features. One of the features I chose to implement was to save output to a specified file. Currently, I'm not sure this feature is that useful, since you could just redirect the output to a file, but in the future I want to use it to extract the code from the response to the file, and include the AI's rationale behind the translation in the full response to stdout. Writing this feature also helped me learn about global and command-based options using commander.js. Since there was only one command (run) and it was the default, I wanted the option to show up in the default help menu, not when you specifically typed codeshift help run, so I had to learn to implement it as a global option.
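The planned "code to the file, full response to stdout" split could look something like the sketch below. It is not implemented in codeshift yet, `extractCode` is a hypothetical helper, and it assumes the model wraps the translated code in a Markdown-style fence of three backticks, which real responses may not always do.

```javascript
// Assumption: the model wraps translated code in a Markdown-style fence
// (three backticks plus an optional language tag). Real responses may differ.
const FENCE = '`'.repeat(3);
const FENCED_BLOCK = new RegExp(FENCE + '[^\\n]*\\n([\\s\\S]*?)' + FENCE);

// Return only the code from the first fenced block, or the whole
// response unchanged when no fence is found.
function extractCode(response) {
  const match = response.match(FENCED_BLOCK);
  return match ? match[1] : response;
}

const response =
  'Here is the Go version:\n' +
  FENCE + 'go\npackage main\n' + FENCE +
  '\nI replaced console.log with fmt.Println.';

process.stdout.write(response + '\n');             // full response, rationale included
process.stdout.write(extractCode(response));       // only this part would go to the file
```

Falling back to the whole response when no fence is found means the output file is never left empty just because the model formatted its answer differently.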

I also ended up "accidentally" implementing the feature for streaming the response to stdout. I was at first scared away from streaming, because it sounded too difficult. But later, when I was trying to read the input files, I figured reading large files in chunks would be more efficient. I realized I'd already implemented streaming in my previous C++ courses, and figuring it wouldn't be too bad, I got to work.

Then, halfway through my implementation I realized I'd have to send the whole file at once to the AI regardless.

But this encouraged me to try streaming the output from the AI. So I hopped on MDN and started reading about ReadableStreams and messing around with ReadableStreamDefaultReader.read() for what felt like an hour - only to scroll down the AI provider's documentation and realize all I had to do was add stream: true to my request.
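For a sense of what consuming a streamed response looks like once stream: true is set, here is a minimal sketch. It assumes the SDK hands back an async iterable of chunks in the OpenAI-compatible shape (`choices[0].delta.content`); `fakeStream` stands in for the real API call so the example runs offline, and `printStream` is a hypothetical helper name.

```javascript
// Stand-in for the provider's streamed response. Assumption: with
// stream: true, the SDK returns an async iterable of chunks shaped like
// { choices: [{ delta: { content } }] } (the OpenAI-compatible format).
async function* fakeStream() {
  for (const piece of ['package ', 'main', '\n']) {
    yield { choices: [{ delta: { content: piece } }] };
  }
}

// Write each piece as soon as it arrives and return the full text.
async function printStream(stream, write = (s) => process.stdout.write(s)) {
  let full = '';
  for await (const chunk of stream) {
    // Some chunks may carry no content, so default to an empty string.
    const piece = chunk.choices[0]?.delta?.content ?? '';
    write(piece);
    full += piece;
  }
  return full;
}

printStream(fakeStream());
```

The `for await` loop is what replaces the manual ReadableStreamDefaultReader.read() calls: the iteration itself waits for the next chunk.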

Either way, I may have taken the scenic route but I ended up implementing streaming.

Planned Features

Right now, the program parses each source file individually, with no shared context. So if a file references another, it wouldn't be reflected in the output. I'd like to enable it to have that context eventually. Like I mentioned, another feature I want to add is writing the AI's reasoning behind the translation to stdout but leaving it out of the output file. I'd also like to add some of the other optional features, like options to specify the AI model to use, the API key to use, and reading that data from a .env file in the same directory.
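One possible way to give the model shared context is to send all the files in a single prompt rather than one request per file. The sketch below is only an idea of how that might look: `buildPrompt` is a hypothetical helper, and the prompt wording and the `--- path ---` separators are invented for illustration.

```javascript
// Hypothetical helper: combine every input file into one prompt so the
// model can see cross-file references. The prompt wording is invented.
function buildPrompt(language, files) {
  const sections = files.map(
    ({ path, contents }) => `--- ${path} ---\n${contents}`
  );
  return [
    `Translate the following files to ${language}.`,
    'They belong to one project and may reference each other.',
    ...sections,
  ].join('\n\n');
}

console.log(
  buildPrompt('Go', [
    { path: 'examples/index.js', contents: "import { add } from './math.js';" },
    { path: 'examples/math.js', contents: 'export const add = (a, b) => a + b;' },
  ])
);
```

The trade-off is that every file counts against the model's context limit at once, so very large projects would still need to be split up.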

That's about it for this post. I'll be writing more in the coming weeks.

The above is the detailed content of Building codeshift. For more information, please follow other related articles on the PHP Chinese website!

Replace String Characters in JavaScriptMar 11, 2025 am 12:07 AM

Replace String Characters in JavaScriptMar 11, 2025 am 12:07 AMDetailed explanation of JavaScript string replacement method and FAQ This article will explore two ways to replace string characters in JavaScript: internal JavaScript code and internal HTML for web pages. Replace string inside JavaScript code The most direct way is to use the replace() method: str = str.replace("find","replace"); This method replaces only the first match. To replace all matches, use a regular expression and add the global flag g: str = str.replace(/fi

Custom Google Search API Setup TutorialMar 04, 2025 am 01:06 AM

Custom Google Search API Setup TutorialMar 04, 2025 am 01:06 AMThis tutorial shows you how to integrate a custom Google Search API into your blog or website, offering a more refined search experience than standard WordPress theme search functions. It's surprisingly easy! You'll be able to restrict searches to y

8 Stunning jQuery Page Layout PluginsMar 06, 2025 am 12:48 AM

8 Stunning jQuery Page Layout PluginsMar 06, 2025 am 12:48 AMLeverage jQuery for Effortless Web Page Layouts: 8 Essential Plugins jQuery simplifies web page layout significantly. This article highlights eight powerful jQuery plugins that streamline the process, particularly useful for manual website creation

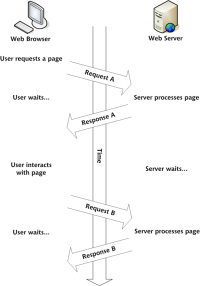

Build Your Own AJAX Web ApplicationsMar 09, 2025 am 12:11 AM

Build Your Own AJAX Web ApplicationsMar 09, 2025 am 12:11 AMSo here you are, ready to learn all about this thing called AJAX. But, what exactly is it? The term AJAX refers to a loose grouping of technologies that are used to create dynamic, interactive web content. The term AJAX, originally coined by Jesse J

What is 'this' in JavaScript?Mar 04, 2025 am 01:15 AM

What is 'this' in JavaScript?Mar 04, 2025 am 01:15 AMCore points This in JavaScript usually refers to an object that "owns" the method, but it depends on how the function is called. When there is no current object, this refers to the global object. In a web browser, it is represented by window. When calling a function, this maintains the global object; but when calling an object constructor or any of its methods, this refers to an instance of the object. You can change the context of this using methods such as call(), apply(), and bind(). These methods call the function using the given this value and parameters. JavaScript is an excellent programming language. A few years ago, this sentence was

10 Mobile Cheat Sheets for Mobile DevelopmentMar 05, 2025 am 12:43 AM

10 Mobile Cheat Sheets for Mobile DevelopmentMar 05, 2025 am 12:43 AMThis post compiles helpful cheat sheets, reference guides, quick recipes, and code snippets for Android, Blackberry, and iPhone app development. No developer should be without them! Touch Gesture Reference Guide (PDF) A valuable resource for desig

Improve Your jQuery Knowledge with the Source ViewerMar 05, 2025 am 12:54 AM

Improve Your jQuery Knowledge with the Source ViewerMar 05, 2025 am 12:54 AMjQuery is a great JavaScript framework. However, as with any library, sometimes it’s necessary to get under the hood to discover what’s going on. Perhaps it’s because you’re tracing a bug or are just curious about how jQuery achieves a particular UI

How do I create and publish my own JavaScript libraries?Mar 18, 2025 pm 03:12 PM

How do I create and publish my own JavaScript libraries?Mar 18, 2025 pm 03:12 PMArticle discusses creating, publishing, and maintaining JavaScript libraries, focusing on planning, development, testing, documentation, and promotion strategies.

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

SAP NetWeaver Server Adapter for Eclipse

Integrate Eclipse with SAP NetWeaver application server.

Atom editor mac version download

The most popular open source editor

mPDF

mPDF is a PHP library that can generate PDF files from UTF-8 encoded HTML. The original author, Ian Back, wrote mPDF to output PDF files "on the fly" from his website and handle different languages. It is slower than original scripts like HTML2FPDF and produces larger files when using Unicode fonts, but supports CSS styles etc. and has a lot of enhancements. Supports almost all languages, including RTL (Arabic and Hebrew) and CJK (Chinese, Japanese and Korean). Supports nested block-level elements (such as P, DIV),

SecLists

SecLists is the ultimate security tester's companion. It is a collection of various types of lists that are frequently used during security assessments, all in one place. SecLists helps make security testing more efficient and productive by conveniently providing all the lists a security tester might need. List types include usernames, passwords, URLs, fuzzing payloads, sensitive data patterns, web shells, and more. The tester can simply pull this repository onto a new test machine and he will have access to every type of list he needs.

uday-rana

/

codeshift

uday-rana

/

codeshift

Can not use OpenAI SDK with Sentry Node agent: TypeError: getDefaultAgent is not a function

#1010

Can not use OpenAI SDK with Sentry Node agent: TypeError: getDefaultAgent is not a function

#1010