Technology peripherals

Technology peripherals AI

AI Will speculative sampling lose the inference accuracy of large language models?

Will speculative sampling lose the inference accuracy of large language models?Mitchell Stern and others proposed the prototype concept of speculative sampling in 2018. This approach has since been further developed and refined by various works, including Lookahead Decoding, REST, Medusa and EAGLE, where speculative sampling significantly speeds up the inference process of large language models (LLMs).

An important question is: does speculative sampling in LLM hurt the accuracy of the original model? Let me start with the answer: no.

The standard speculative sampling algorithm is lossless, and this article will prove this through mathematical analysis and experiments.

Mathematical proof

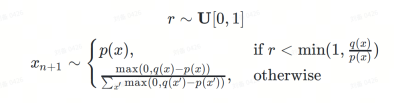

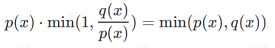

The speculative sampling formula can be defined as follows:

where:

- ? is a real number sampled from a uniform distribution.

-

is the next token to be predicted.

is the next token to be predicted. - ?(?) is the next token distribution given by the draft model.

- ?(?) is the next token distribution given by the basic model.

For simplicity, we omit the probability condition. In fact, ? and ? are conditional distributions based on the prefix token sequence  .

.

The following is the proof of the losslessness of this formula in the DeepMind paper:

If you feel that reading mathematical equations is too boring, next we will illustrate the proof process through some intuitive diagrams.

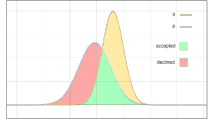

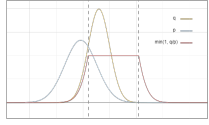

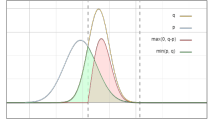

This is the distribution diagram of the draft model ? and the basic model ?:

Figure 1: The probability density function of the output distribution of the draft model p and the basic model q

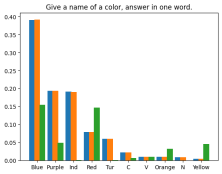

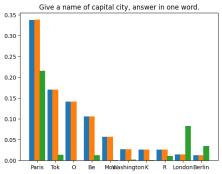

It should be noted that this is just an idealized chart . In practice, what we calculate is a discrete distribution, which looks like this:

Figure 2: The language model predicts the discrete probability distribution of each token in the vocabulary set, the blue bar is from the draft model, and the red bar is from the base Model.

However, for the sake of simplicity and clarity, we discuss this problem using its continuous approximation.

Now the problem is: we sample from the distribution ? , but we want the end result to be like we sampled from ? . A key idea is: move the probability of the red area to the yellow area:

Figure 3: Acceptance and rejection sampling area

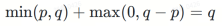

Target distribution ? Can be seen as the sum of two parts:

I. Verification Acceptance

There are two independent events in this branch:

- Sampling on the draft distribution ? produces a specific token ?. The probability is ?(?)

- random variable ? accept the token ?. The probabilities are:

Multiply these probabilities:

Figure 4: Multiplying the blue and red lines, the result is the green line in Figure 6

II. Validation rejection

in this branch There are also two independent events:

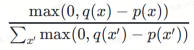

- ? rejects a certain token in ?, the probability is:

This is an integral value, the value has nothing to do with the specific token x

- is a positive number in the distribution ?−?( Partial) upsampling generates a specific token?, the probability is:

The function of its denominator is to normalize the probability distribution to keep the probability density integral equal to 1.

Two items are multiplied together, and the denominator of the second term is eliminated:

max(0,?(?)−?(?))

Figure 5. The corresponding functions of the red line and green line in this figure Multiplied together, the result is equal to the red line in Figure 6

Why does the rejection probability happen to be normalized to max(0,?−?)? While it may seem like a coincidence, an important observation here is that the area of the red region in Figure 3 is equal to the area of the yellow region, since the integral of all probability density functions is equal to 1.

Add the two parts I and II:

Finally, we get the target distribution ?.

Figure 6. The sum of the green area and the red area is exactly equal to the area below the yellow line

And this is our goal.

Experiments

Although we have proven in principle that speculative sampling is lossless, there may still be bugs in the implementation of the algorithm. Therefore, experimental verification is also necessary.

We conducted experiments on two cases: the deterministic method of greedy decoding and the stochastic method of polynomial sampling.

Greedy Decoding

We ask LLM to generate short stories twice, first using ordinary inference and then using speculative sampling. The sampling temperature is set to 0 for both times. We used the speculative sampling implementation in Medusa. The model weight is medusa-1.0-vicuna-7b-v1.5 and its base model vicuna-7b-v1.5.

After the test run was completed, we got two exactly the same results. The generated text is as follows:

|

User: Please tell a tale about a cyperpunk knight named PPIO keeps a charming llama as his pet. Assistant: Once upon a time, in a world where technology had advanced beyond our wildest dreams, there lived a cyberpunk knight named PPIO. PPIO was a skilled warrior, with advanced cybernetic enhancements that gave him incredible strength and speed. He was also a man of great honor, always striving to do what was right and just . One day, while out on a mission, PPIO stumbled upon a beautiful llama. Despite being a cybernetic being, PPIO had a soft spot for animals and he couldn't resist rescuing the llama from whatever danger it may have been in. From that day on, the llama followed PPIO wherever he went, and the two became fast friends. The llama, whose name was Llama-ella, was a special llama. It had a unique ability to sense when someone was in danger and would let out a loud, distinctive call to alert them to the danger. PPIO was grateful for Llama-ella's ability and the two became an unstoppable team. Together, PPIO and Llama-ella took on all sorts of challenges, from battling cybernetic villains to rescuing innocent people from harm. They were a force to be reckoned with, and their bravery and honor were an inspiration to all who knew them. The situation is more complicated. Most methods of reproducing results in randomized programs use fixed random seeds to exploit the determinism of pseudo-random generators. However, this approach is not suitable for our scenario. Our experiment relies on the law of large numbers: given enough samples, the error between the actual distribution and the theoretical distribution will converge to zero. | We compiled four prompt texts and performed 1,000,000 speculative sampling iterations on the first token generated by LLM under each prompt. The model weights used are

. The statistical results are as follows:

|

結論 投機取樣不會損害大型語言模型的推理精確度。透過嚴格的數學分析和實際實驗,我們證明了標準投機採樣演算法的無損性。其中數學證明說明了投機取樣公式如何保留基礎模型的原始分佈。我們的實驗,包括確定性貪婪解碼和機率多項式取樣,進一步驗證了這些理論發現。貪婪解碼實驗在使用和不使用投機採樣的情況下產生了相同的結果,而多項式採樣實驗表明,在大量樣本中,token分佈的差異可以忽略不計。 這些結果共同證明,投機採樣可以顯著加快 LLM 推理速度,而不會犧牲準確性,為未來更高效、更易於訪問的 AI 系統鋪平了道路。 |

The above is the detailed content of Will speculative sampling lose the inference accuracy of large language models?. For more information, please follow other related articles on the PHP Chinese website!

4090生成器:与A100平台相比,token生成速度仅低于18%,上交推理引擎赢得热议Dec 21, 2023 pm 03:25 PM

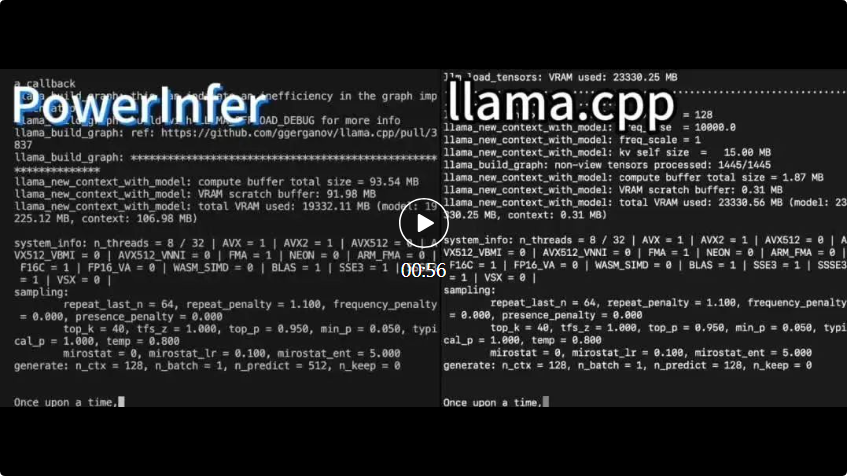

4090生成器:与A100平台相比,token生成速度仅低于18%,上交推理引擎赢得热议Dec 21, 2023 pm 03:25 PMPowerInfer提高了在消费级硬件上运行AI的效率上海交大团队最新推出了超强CPU/GPULLM高速推理引擎PowerInfer。PowerInfer和llama.cpp都在相同的硬件上运行,并充分利用了RTX4090上的VRAM。这个推理引擎速度有多快?在单个NVIDIARTX4090GPU上运行LLM,PowerInfer的平均token生成速率为13.20tokens/s,峰值为29.08tokens/s,仅比顶级服务器A100GPU低18%,可适用于各种LLM。PowerInfer与

思维链CoT进化成思维图GoT,比思维树更优秀的提示工程技术诞生了Sep 05, 2023 pm 05:53 PM

思维链CoT进化成思维图GoT,比思维树更优秀的提示工程技术诞生了Sep 05, 2023 pm 05:53 PM要让大型语言模型(LLM)充分发挥其能力,有效的prompt设计方案是必不可少的,为此甚至出现了promptengineering(提示工程)这一新兴领域。在各种prompt设计方案中,思维链(CoT)凭借其强大的推理能力吸引了许多研究者和用户的眼球,基于其改进的CoT-SC以及更进一步的思维树(ToT)也收获了大量关注。近日,苏黎世联邦理工学院、Cledar和华沙理工大学的一个研究团队提出了更进一步的想法:思维图(GoT)。让思维从链到树到图,为LLM构建推理过程的能力不断得到提升,研究者也通

FATE 2.0发布:实现异构联邦学习系统互联Jan 16, 2024 am 11:48 AM

FATE 2.0发布:实现异构联邦学习系统互联Jan 16, 2024 am 11:48 AMFATE2.0全面升级,推动隐私计算联邦学习规模化应用FATE开源平台宣布发布FATE2.0版本,作为全球领先的联邦学习工业级开源框架。此次更新实现了联邦异构系统之间的互联互通,持续增强了隐私计算平台的互联互通能力。这一进展进一步推动了联邦学习与隐私计算规模化应用的发展。FATE2.0以全面互通为设计理念,采用开源方式对应用层、调度、通信、异构计算(算法)四个层面进行改造,实现了系统与系统、系统与算法、算法与算法之间异构互通的能力。FATE2.0的设计兼容了北京金融科技产业联盟的《金融业隐私计算

复旦NLP团队发布80页大模型Agent综述,一文纵览AI智能体的现状与未来Sep 23, 2023 am 09:01 AM

复旦NLP团队发布80页大模型Agent综述,一文纵览AI智能体的现状与未来Sep 23, 2023 am 09:01 AM近期,复旦大学自然语言处理团队(FudanNLP)推出LLM-basedAgents综述论文,全文长达86页,共有600余篇参考文献!作者们从AIAgent的历史出发,全面梳理了基于大型语言模型的智能代理现状,包括:LLM-basedAgent的背景、构成、应用场景、以及备受关注的代理社会。同时,作者们探讨了Agent相关的前瞻开放问题,对于相关领域的未来发展趋势具有重要价值。论文链接:https://arxiv.org/pdf/2309.07864.pdfLLM-basedAgent论文列表:

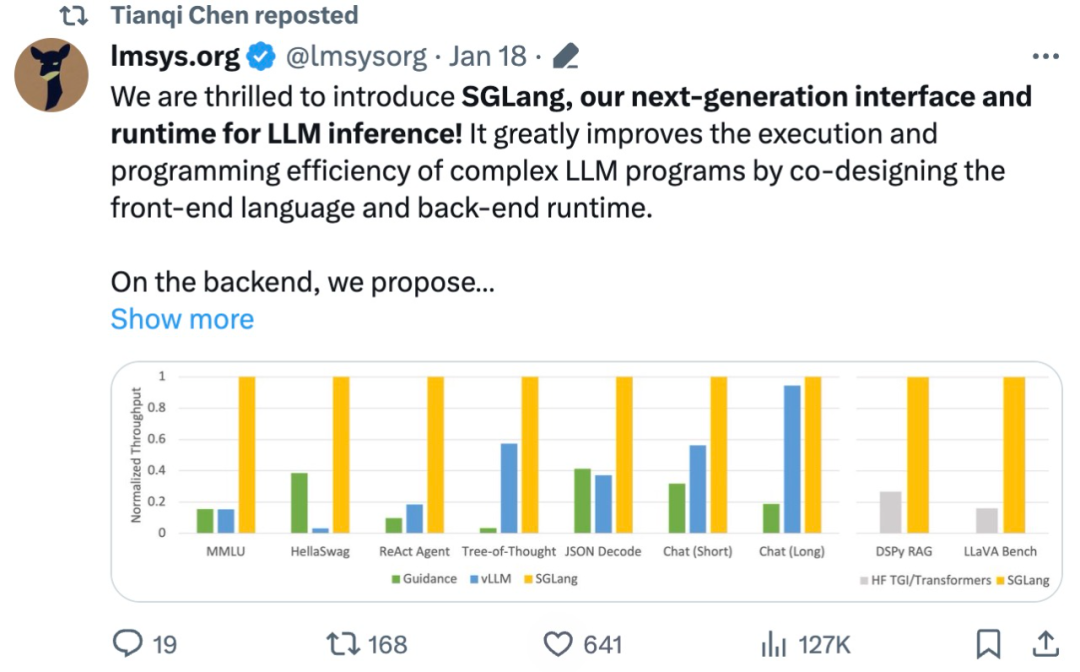

吞吐量提升5倍,联合设计后端系统和前端语言的LLM接口来了Mar 01, 2024 pm 10:55 PM

吞吐量提升5倍,联合设计后端系统和前端语言的LLM接口来了Mar 01, 2024 pm 10:55 PM大型语言模型(LLM)被广泛应用于需要多个链式生成调用、高级提示技术、控制流以及与外部环境交互的复杂任务。尽管如此,目前用于编程和执行这些应用程序的高效系统却存在明显的不足之处。研究人员最近提出了一种新的结构化生成语言(StructuredGenerationLanguage),称为SGLang,旨在改进与LLM的交互性。通过整合后端运行时系统和前端语言的设计,SGLang使得LLM的性能更高、更易控制。这项研究也获得了机器学习领域的知名学者、CMU助理教授陈天奇的转发。总的来说,SGLang的

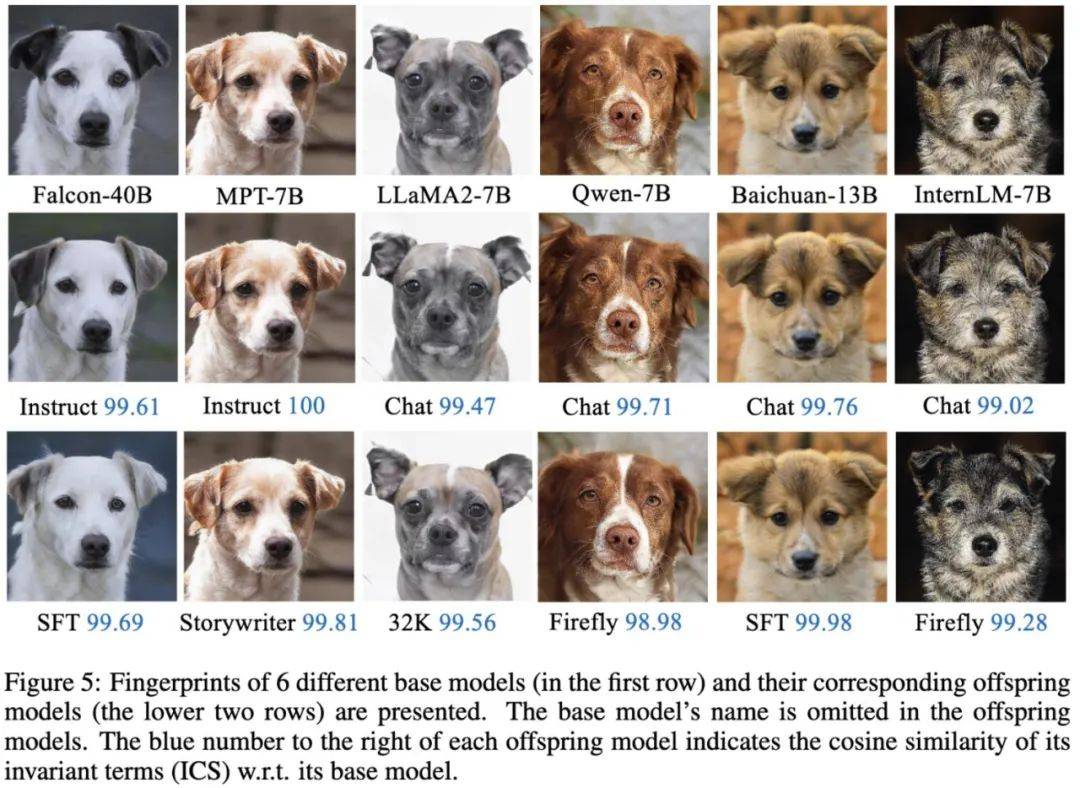

大模型也有小偷?为保护你的参数,上交大给大模型制作「人类可读指纹」Feb 02, 2024 pm 09:33 PM

大模型也有小偷?为保护你的参数,上交大给大模型制作「人类可读指纹」Feb 02, 2024 pm 09:33 PM将不同的基模型象征为不同品种的狗,其中相同的「狗形指纹」表明它们源自同一个基模型。大模型的预训练需要耗费大量的计算资源和数据,因此预训练模型的参数成为各大机构重点保护的核心竞争力和资产。然而,与传统软件知识产权保护不同,对预训练模型参数盗用的判断存在以下两个新问题:1)预训练模型的参数,尤其是千亿级别模型的参数,通常不会开源。预训练模型的输出和参数会受到后续处理步骤(如SFT、RLHF、continuepretraining等)的影响,这使得判断一个模型是否基于另一个现有模型微调得来变得困难。无

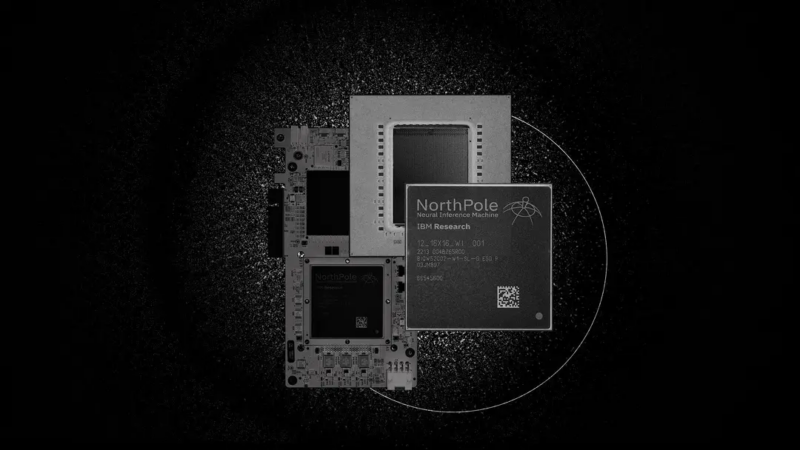

220亿晶体管,IBM机器学习专用处理器NorthPole,能效25倍提升Oct 23, 2023 pm 03:13 PM

220亿晶体管,IBM机器学习专用处理器NorthPole,能效25倍提升Oct 23, 2023 pm 03:13 PMIBM再度发力。随着AI系统的飞速发展,其能源需求也在不断增加。训练新系统需要大量的数据集和处理器时间,因此能耗极高。在某些情况下,执行一些训练好的系统,智能手机就能轻松胜任。但是,执行的次数太多,能耗也会增加。幸运的是,有很多方法可以降低后者的能耗。IBM和英特尔已经试验过模仿实际神经元行为设计的处理器。IBM还测试了在相变存储器中执行神经网络计算,以避免重复访问RAM。现在,IBM又推出了另一种方法。该公司的新型NorthPole处理器综合了上述方法的一些理念,并将其与一种非常精简的计算运行

14秒就能重建视频,还能变换角色,Meta让视频合成提速44倍Dec 27, 2023 pm 06:35 PM

14秒就能重建视频,还能变换角色,Meta让视频合成提速44倍Dec 27, 2023 pm 06:35 PMMeta的视频合成新框架给我们带来了一些惊喜就今天的人工智能发展水平来说,文生图、图生视频、图像/视频风格迁移都已经不算什么难事。生成式AI天赋异禀,能够毫不费力地创建或修改内容。尤其是图像编辑,在以十亿规模数据集为基础预训练的文本到图像扩散模型的推动下,经历了重大发展。这股浪潮催生了大量图像编辑和内容创建应用。基于图像的生成模型所取得的成就基础上,下一个挑战的领域必然是为其增加「时间维度」,从而实现轻松而富有创意的视频编辑。一种直接策略是使用图像模型逐帧处理视频,然而,生成式图像编辑本身就具有

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

MantisBT

Mantis is an easy-to-deploy web-based defect tracking tool designed to aid in product defect tracking. It requires PHP, MySQL and a web server. Check out our demo and hosting services.

mPDF

mPDF is a PHP library that can generate PDF files from UTF-8 encoded HTML. The original author, Ian Back, wrote mPDF to output PDF files "on the fly" from his website and handle different languages. It is slower than original scripts like HTML2FPDF and produces larger files when using Unicode fonts, but supports CSS styles etc. and has a lot of enhancements. Supports almost all languages, including RTL (Arabic and Hebrew) and CJK (Chinese, Japanese and Korean). Supports nested block-level elements (such as P, DIV),

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

Safe Exam Browser

Safe Exam Browser is a secure browser environment for taking online exams securely. This software turns any computer into a secure workstation. It controls access to any utility and prevents students from using unauthorized resources.

is the next token to be predicted.

is the next token to be predicted.