High-scoring paper from COLM, the first large model conference: Preference search algorithm PairS makes text evaluation with large models more efficient

The authors of the article are all from the Language Technology Lab at the University of Cambridge. One is Liu Yinhong, a third-year doctoral student supervised by Professors Nigel Collier and Ehsan Shareghi; his research interests include large language models, text evaluation, and data generation. Another is Zhou Han, also a doctoral student, in his second year, supervised by Professors Anna Korhonen and Ivan Vulić; his research focuses on efficient large models.

Large language models (LLMs) show excellent instruction-following and task-generalization capabilities. These abilities come from training on instruction-following data and from reinforcement learning from human feedback (RLHF). In the RLHF paradigm, a reward model is aligned with human preferences using ranked comparison data. This strengthens the alignment of LLMs with human values, so that they generate responses that are more helpful to humans and adhere to human values.

COLM, the first conference dedicated to large models, recently announced its acceptance results. One of the high-scoring papers analyzes the score bias that is hard to avoid and hard to correct when an LLM is used as a text evaluator, and proposes recasting evaluation as a preference ranking problem. On that basis the authors designed PairS, an algorithm that searches and sorts using pairwise preferences. By exploiting preference uncertainty and an assumption of LLM transitivity, PairS produces efficient and accurate preference rankings and shows higher agreement with human judgment on multiple test sets.

Paper link: https://arxiv.org/abs/2403.16950

Paper title: Aligning with Human Judgment: The Role of Pairwise Preference in Large Language Model Evaluators

GitHub repository: https://github.com/cambridgeltl/PairS

What are the problems with large model evaluation?

Many recent works have demonstrated that LLMs perform well at judging text quality, establishing a new paradigm of reference-free evaluation for generative tasks that avoids the cost of human annotation. However, LLM evaluators are highly sensitive to prompt design and are affected by several biases, including positional bias, verbosity bias, and context bias. These biases keep LLM evaluators from being fair and trustworthy, and lead to inconsistency and misalignment with human judgment.

Previous work has developed calibration techniques to reduce bias in LLM predictions. We first systematically analyze how effective these techniques are at aligning pointwise LLM evaluators. As Figure 2 of the paper shows, existing calibration methods still fail to align the LLM evaluator well, even when supervision data is provided.

As Formula 1 shows, we believe the main cause of misaligned evaluation is not a biased prior over the LLM's evaluation score distribution, but a misaligned evaluation standard, that is, the likelihood of the LLM evaluator. We believe that LLM evaluators apply criteria more consistent with human ones when they evaluate pairwise, so we explore a new LLM evaluation paradigm that promotes better-aligned judgments.
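The prior/likelihood split described above can be read as Bayes' rule applied to the evaluator's score prediction. The following is only a sketch of that reading: the symbols $s$ (score), $x$ (text being evaluated), and $p_{\mathrm{LLM}}$ are assumed notation and may not match the paper's Formula 1.

```latex
p_{\mathrm{LLM}}(s \mid x) \;\propto\;
\underbrace{p_{\mathrm{LLM}}(x \mid s)}_{\text{likelihood (evaluation standard)}}
\;\cdot\;
\underbrace{p_{\mathrm{LLM}}(s)}_{\text{prior over scores}}
```

Under this reading, the argument is that correcting the prior term alone cannot fix a likelihood term that encodes a different evaluation standard from the human one.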

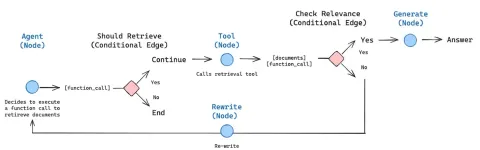

Inspiration from RLHF

As Figure 1 of the paper illustrates, and inspired by how RLHF aligns reward models using preference data, we believe an LLM evaluator can produce predictions better aligned with humans by generating a preference ranking. Some recent work has begun to obtain preference rankings by asking an LLM to perform pairwise comparisons. However, the complexity and scalability of preference ranking have been largely overlooked: these methods ignore the transitivity assumption, so the number of comparisons grows as O(N^2), which makes evaluation expensive and often infeasible.

PairS: Efficient Preference Search Algorithm

In this work, we propose two pairwise preference search algorithms, PairS-greedy and PairS-beam. PairS-greedy relies on a full transitivity assumption and on merge sort, and obtains a global preference ranking with only O(N log N) comparisons. The transitivity assumption means that, for any three candidates A, B, and C, if the LLM judges A≻B and B≻C, then it also judges A≻C. Under this assumption, a traditional sorting algorithm can be used directly to turn pairwise preferences into a preference ranking.

However, LLMs are not perfectly transitive, so we designed the PairS-beam algorithm. Under a looser transitivity assumption, we derive and simplify the likelihood function of a preference ranking. PairS-beam performs a beam search over likelihood values within each merge operation of merge sort, and uses the uncertainty of the preferences to shrink the space of pairwise comparisons. PairS-beam can trade off the number of comparisons against ranking quality, and efficiently provides a maximum likelihood estimate (MLE) of the preference ranking. Figure 3 of the paper gives an example of how PairS-beam performs a merge operation.
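The idea of sorting from pairwise preferences can be sketched as an ordinary merge sort whose comparison step is a preference query. This is a minimal illustration, not the authors' implementation: `merge_sort_rank`, `all_pairs_count`, and `CountingJudge` are hypothetical names, and the judge below is a deterministic stand-in that compares hidden quality scores instead of calling an LLM.

```python
from typing import Callable, Dict, List, Sequence


def merge_sort_rank(items: Sequence[str],
                    prefers: Callable[[str, str], bool]) -> List[str]:
    """Rank items best-first with merge sort; prefers(a, b) means a is preferred to b."""
    if len(items) <= 1:
        return list(items)
    mid = len(items) // 2
    left = merge_sort_rank(items[:mid], prefers)
    right = merge_sort_rank(items[mid:], prefers)
    merged: List[str] = []
    i = j = 0
    # Each loop iteration costs exactly one pairwise preference query.
    while i < len(left) and j < len(right):
        if prefers(left[i], right[j]):
            merged.append(left[i])
            i += 1
        else:
            merged.append(right[j])
            j += 1
    merged.extend(left[i:])
    merged.extend(right[j:])
    return merged


def all_pairs_count(n: int) -> int:
    """Comparisons needed if every unordered pair is judged: n * (n - 1) / 2."""
    return n * (n - 1) // 2


class CountingJudge:
    """Stand-in for an LLM judge (assumption): perfectly transitive, counts its calls."""

    def __init__(self, quality: Dict[str, float]) -> None:
        self.quality = quality
        self.calls = 0

    def __call__(self, a: str, b: str) -> bool:
        self.calls += 1
        return self.quality[a] > self.quality[b]


judge = CountingJudge({"A": 0.9, "B": 0.4, "C": 0.7, "D": 0.1, "E": 0.6})
ranking = merge_sort_rank(["A", "B", "C", "D", "E"], judge)
print(ranking)                                  # best-first ranking
print(judge.calls, "queries vs", all_pairs_count(5), "for all pairs")
```

Because the stand-in judge is perfectly transitive, the sort recovers the hidden order while querying fewer pairs than exhaustive comparison. With a real LLM judge that guarantee no longer holds.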
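To make "maximum likelihood estimate of a preference ranking" concrete, here is a toy sketch. It assumes the likelihood of a ranking is the product of independent pairwise preference probabilities, which is a simplification for illustration and not necessarily the form derived in the paper. It also uses brute-force enumeration rather than beam search, and all function names are hypothetical.

```python
import itertools
import math
from typing import Dict, Sequence, Tuple

PrefProbs = Dict[Tuple[str, str], float]


def complete_preferences(given: PrefProbs) -> PrefProbs:
    """Fill in P(b > a) = 1 - P(a > b) for every pair that was supplied."""
    full: PrefProbs = {}
    for (a, b), p in given.items():
        full[(a, b)] = p
        full[(b, a)] = 1.0 - p
    return full


def ranking_log_likelihood(ranking: Sequence[str], probs: PrefProbs) -> float:
    """Sum of log P(a preferred to b) over all pairs where a is ranked above b.

    Assumes independent pairwise judgments with probabilities strictly in (0, 1).
    """
    total = 0.0
    for i, a in enumerate(ranking):
        for b in ranking[i + 1:]:
            total += math.log(probs[(a, b)])
    return total


def brute_force_mle(candidates: Sequence[str], probs: PrefProbs) -> Tuple[str, ...]:
    """Enumerate all N! rankings and keep the most likely one (tiny N only)."""
    return max(itertools.permutations(candidates),
               key=lambda r: ranking_log_likelihood(r, probs))


# Made-up, non-transitive preferences: A beats B, B beats C, yet C slightly beats A.
probs = complete_preferences({("A", "B"): 0.9, ("B", "C"): 0.8, ("C", "A"): 0.6})
print(brute_force_mle(["A", "B", "C"], probs))
```

Enumeration costs N! likelihood evaluations, which is why a search procedure such as the beam search inside each merge step is needed for realistic candidate counts.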

Experimental results

We tested on several representative datasets, including the closed-ended summarization tasks NewsRoom and SummEval and the open-ended story generation task HANNA, and compared against multiple baseline methods for pointwise LLM evaluation: the unsupervised direct scoring, G-Eval, and GPTScore, and the supervised UniEval and BARTScore. As Table 1 of the paper shows, PairS agrees with human ratings more closely than these baselines on every task. With GPT-4-turbo, PairS even achieves state-of-the-art results.

The paper also compares two baseline methods for preference ranking, win rate and Elo rating. PairS reaches the same ranking quality with only about 30% of the comparisons. The paper further offers insights into how pairwise preferences can be used to quantify the transitivity of LLM evaluators, and how pairwise evaluators can benefit from calibration.
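Agreement between an evaluator's ranking and human ratings is usually measured with a rank correlation. The article does not name the metric, so the sketch below assumes Spearman's rank correlation, a common choice for this kind of comparison. The function names and the numbers are made up for illustration.

```python
import math
from typing import List, Sequence


def average_ranks(values: Sequence[float]) -> List[float]:
    """1-based ranks in ascending order; tied values share the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    pos = 0
    while pos < len(order):
        end = pos
        while end + 1 < len(order) and values[order[end + 1]] == values[order[pos]]:
            end += 1
        shared = (pos + end) / 2 + 1  # mean of the 1-based positions pos+1 .. end+1
        for k in range(pos, end + 1):
            ranks[order[k]] = shared
        pos = end + 1
    return ranks


def spearman(x: Sequence[float], y: Sequence[float]) -> float:
    """Spearman correlation: Pearson correlation computed on the ranks of x and y."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    rx, ry = average_ranks(x), average_ranks(y)
    n = len(rx)
    mean_x, mean_y = sum(rx) / n, sum(ry) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(rx, ry))
    var_x = sum((a - mean_x) ** 2 for a in rx)
    var_y = sum((b - mean_y) ** 2 for b in ry)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for constant input")
    return cov / math.sqrt(var_x * var_y)


# Made-up example: human ratings vs. positions derived from an evaluator's ranking.
human_scores = [1.0, 2.0, 3.0, 4.0, 5.0]
evaluator_scores = [2.0, 1.0, 4.0, 3.0, 5.0]
print(round(spearman(human_scores, evaluator_scores), 3))
```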
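For contrast, a win-rate baseline can be sketched as follows: every pair of candidates is judged once and candidates are ranked by the fraction of comparisons they win. This is a generic illustration of the baseline, not code from the paper. `win_rate_ranking` and the toy judge are hypothetical, and the judge compares made-up hidden scores in place of an LLM call.

```python
from itertools import combinations
from typing import Callable, Dict, List, Sequence, Tuple


def win_rate_ranking(candidates: Sequence[str],
                     prefers: Callable[[str, str], bool]) -> Tuple[List[str], int]:
    """Judge every unordered pair once; return (best-first ranking, comparisons used)."""
    wins: Dict[str, int] = {c: 0 for c in candidates}
    comparisons = 0
    for a, b in combinations(candidates, 2):
        comparisons += 1
        if prefers(a, b):
            wins[a] += 1
        else:
            wins[b] += 1
    opponents = max(len(candidates) - 1, 1)  # avoid division by zero for one candidate
    rates = {c: wins[c] / opponents for c in candidates}
    ranking = sorted(candidates, key=lambda c: rates[c], reverse=True)
    return ranking, comparisons


# Stand-in judge (assumption): compares hidden quality scores.
quality = {"A": 0.9, "B": 0.4, "C": 0.7, "D": 0.1, "E": 0.6}
ranking, used = win_rate_ranking(list(quality), lambda a, b: quality[a] > quality[b])
print(ranking, used)
```

The number of comparisons here is always N(N-1)/2, which is the cost that a transitivity-aware search tries to avoid.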

For more research details, please refer to the original paper.
