The inside story of Google's search algorithm was revealed, and 2,500 pages of documents were leaked with real names! Search Ranking Lies Exposed

Recently, 2,500 pages of internal Google documents were leaked, revealing how search, "the Internet's most powerful arbiter," operates.

Rand Fishkin, SparkToro's co-founder and CEO, published a blog post on his personal website, claiming that "an anonymous person shared with me thousands of pages of leaked Google Search API docs, everyone in SEO should see them!" Fishkin is a leading voice in search engine optimization (SEO) and proposed the concept of "website authority" (Domain Authority).

Since he is highly respected in this field, Rand Fishkin naturally had to vet this anonymous source carefully before breaking the news.

Last Friday, after sending several emails, Rand Fishkin had a video call with the mysterious man. Of course, the other party did not show his face.

This call allowed Rand to learn more about the leaked material: it is API documentation running to more than 2,500 pages and covering 14,014 attributes. These attributes appear to come from Google's internal "Content API Warehouse".

According to the document’s commit history, the code was uploaded to GitHub on March 27, 2024, and was not deleted until May 7, 2024.

After the call, Rand confirmed the anonymous source's work history and their mutual acquaintances in the marketing world. He decided to honor the source's wishes by publishing an article that shares the leak and refutes "some of the lies that Google employees have been spreading for years."

Matt Cutts, Gary Illyes and John Mueller have for years denied that Google uses click-based user data for rankings

Rand's article covers sandboxing, click-through rate, dwell time and other factors that affect SEO, all of which Google has strongly denied in the past.

As soon as the article was published, it immediately caused an uproar in public opinion, especially in the SEO circle.

Another SEO expert, Mike King, also published an article revealing the “secrets of Google’s algorithm.”

Mike King said, "The leaked documents involve what data Google collects and uses, which websites Google elevates for sensitive topics such as elections, and how Google deals with small websites."

Much of this information suggests that Google has not been fully truthful for many years. "Some information in the document appears to conflict with public statements by Google representatives."

Faced with everyone’s doubts, Google chose to remain silent and refused to comment on this explosive leak.

Google itself did not speak out. Instead, the source who had provided the documents anonymously stepped forward. On May 28, he released a video in which he revealed his identity.

His name is Erfan Azimi. He is also an SEO practitioner and the founder of EA Eagle Digital.

Since the documents provided by Erfan Azimi come from Google's internal "Content API Warehouse", we need to understand what the Content API Warehouse is and what exactly this leak contains.

The Google Search "black box"

This leak seems to come from GitHub, and the most credible explanation is consistent with what Erfan Azimi told Rand during the call:

The documents may have been briefly made public by accident, because many of the links in them point to private GitHub repositories and to internal pages on Google's corporate site that require specific authenticated logins.

During this possibly accidental public period between March and May 2024, the API documentation was picked up by Hexdocs (which indexes public GitHub repositories), where others found it and circulated it.

What puzzles Rand is that he is convinced that others also have a copy, but until this revelation, the document had not been publicly discussed.

According to former Google developers, almost every Google team has such a document to explain various API properties and modules to help project personnel become familiar with the available data elements.

The leaked information matches other information in the GitHub public repository and Google Cloud API documentation, using the same notation style, format, and even process/module/function names and references.

"API Content Warehouse" sounds like a technical term, but we can think of it as a guide for Google search engine team members.

It is like a library catalog: Google uses it to tell employees what books are available and how to get them.

But the difference is that libraries are public, while Google search is one of the most mysterious and heavily guarded black boxes in the world. There has never been a leak of this magnitude or detail from Google's search division in more than two decades.

What was "leaked"?

1. Use of user click data

Some modules in the document mention "goodClicks", "badClicks", "lastLongestClicks", impressions, squashed, unsquashed and unicorn clicks. These are all related to Navboost and Glue, two terms that will be familiar to anyone who has read Google's testimony in the Justice Department case.

The following are relevant excerpts from the cross-examination of Pandu Nayak, Vice President of Search on the Search Quality team, by Justice Department attorney Kenneth Dintzer:

Q. So. Please remind me, does Navboost date back to 2005?

A. Within this range, maybe even earlier.

Q. It has been updated, so it is no longer the Navboost it used to be?

A. No, it is not.

Q. The other one is Glue, right?

A. Glue is just another name for Navboost that includes all the other features on the page.

Q. Okay. I was going to talk about it later, but we can talk about it now. Like we discussed, Navboost can generate web results, right?

A. Yes.

Q. Glue can also handle all content on the page that is not a web page result, right?

A. That’s right.

Q. Together they help find and rank content that ultimately appears on our search results pages?

A. That’s right. They're all signals of that, yes.

This leaked API document supports Mr. Nayak’s testimony and is consistent with Google’s website quality patents.

Google seems to have a way to filter out clicks it does not want counted in the ranking system and to keep the clicks it does want counted.

They also appear to be measuring pogo-sticking (when a searcher clicks on a result and then quickly clicks the back button because they are not satisfied with the answer they found) and impressions.
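To make the idea of separating wanted from unwanted clicks concrete, here is a minimal Python sketch. Only the counter names "goodClicks", "badClicks" and "lastLongestClicks" come from the leaked documentation. The dwell-time threshold, the `Click` type, the `summarize_clicks` function and the way each counter is computed are assumptions made purely for illustration, not a description of how Google actually does it.

```python
from dataclasses import dataclass

# Hypothetical threshold: returning to the results page faster than this is
# treated as pogo-sticking. The leaked documentation does not state any value.
POGO_STICK_SECONDS = 10.0


@dataclass
class Click:
    url: str
    dwell_seconds: float  # time spent before returning to the results page


def summarize_clicks(clicks):
    """Tally one search session's clicks into illustrative per-URL counters."""
    counts = {}
    for click in clicks:
        entry = counts.setdefault(
            click.url,
            {"goodClicks": 0, "badClicks": 0, "lastLongestClicks": 0},
        )
        if click.dwell_seconds < POGO_STICK_SECONDS:
            entry["badClicks"] += 1   # quick bounce back to the results
        else:
            entry["goodClicks"] += 1  # the searcher stayed on the page
    if clicks:
        # Assumed reading of "lastLongestClicks": credit the result with the
        # longest dwell time, with ties going to the later click.
        longest = max(
            enumerate(clicks),
            key=lambda pair: (pair[1].dwell_seconds, pair[0]),
        )[1]
        counts[longest.url]["lastLongestClicks"] += 1
    return counts


session = [
    Click("a.example", 3.0),
    Click("b.example", 95.0),
    Click("c.example", 40.0),
]
summary = summarize_clicks(session)
```

In this toy session, the three-second visit to a.example counts as a bad click, while b.example receives both a good click and the single longest-click credit.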

2. Use of Chrome's clickstream

Google representatives have said multiple times that it does not use Chrome data to rank pages, but the leaked documentation specifically mentions Chrome in a section about how sites appear in searches.

The anonymous source who leaked the document said that as early as 2005, Google wanted to obtain the complete click stream of billions of Internet users, and through the Chrome browser, they have achieved what they wanted.

API documentation shows that Google can use Chrome to calculate several categories of metrics related to individual pages and entire domains.

The part of the documentation describing how Google builds its Sitelinks feature is particularly interesting.

It shows a call named topUrl, which is "A list of top urls with highest two_level_score, i.e., chrome_trans_clicks."

It can be inferred from this that Google is likely to use the number of clicks on web pages in the Chrome browser to determine the most popular or important URLs on the website, and then calculate which URLs should be included in the Sitelinks function.
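The inference above can be sketched in a few lines of Python. The names topUrl and chrome_trans_clicks come from the leaked description. The function `pick_sitelink_candidates`, its `limit` parameter, the sample data and the alphabetical tie-break are hypothetical, and the real two_level_score may be computed quite differently.

```python
def pick_sitelink_candidates(click_counts, limit=4):
    """Return up to `limit` URLs with the most clicks, highest first.

    `click_counts` maps a URL to a click total, standing in for the
    chrome_trans_clicks value named in the leak. Ties are broken
    alphabetically so the result is deterministic.
    """
    ranked = sorted(click_counts.items(), key=lambda item: (-item[1], item[0]))
    return [url for url, _ in ranked[:limit]]


# Hypothetical click totals for the pages of one site.
site_clicks = {
    "/pricing": 900,
    "/blog": 450,
    "/about": 120,
    "/careers": 30,
    "/legal": 5,
}
top_urls = pick_sitelink_candidates(site_clicks, limit=3)
```

Here `top_urls` holds the three most-clicked pages, which under this reading would be the candidates for Sitelinks.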

In other words, the Sitelinks shown in Google search results appear to surface the pages users visit most, which Google can determine by tracking the clickstreams of billions of Chrome users.

Of course, netizens expressed dissatisfaction with this behavior of Google.

3. Create a whitelist for serious topics

From the "Quality Travel Website" module it is not difficult to conclude that Google has a whitelist in the travel space, although it is unclear whether this applies only to Google's "travel" search option or to broader web searches.

In addition, "isCovidLocalAuthority" (COVID local authority) and "isElectionAuthority" (election authority), which are mentioned in many places in the document, further indicate that Google is whitelisting specific domain names. These domains may be prioritized when users search for highly controversial issues.

For example, after the 2020 US presidential election, a certain candidate claimed without evidence that votes were stolen and encouraged his followers to storm Capitol Hill.

Google will almost certainly be one of the first places people search for information about this incident. If its search engine returned propaganda sites that inaccurately depict election evidence, that could directly lead to more controversy, violence, and even the end of American democracy.

From this perspective, the whitelist has its practical significance. Rand Fishkin said, "Those of us who want free and fair elections to continue should be very grateful to Google engineers for using whitelists in this situation."

4. Use human evaluation of website quality

Google has long had a quality rating platform called EWOK, and we now have evidence that certain elements from the quality raters are used in the search system.

Rand Fishkin finds it interesting that the scores and data generated by EWOK quality raters may directly participate in Google's search system, rather than just being a training set for experiments.

Of course, these may be "just for testing", but when browsing the leaked documentation you will find that when that is the case, it is stated explicitly in the comments and module details.

The "relevance rating of each document" mentioned there comes from EWOK's evaluations. Although there is no detailed explanation, it is not difficult to imagine how important human evaluation of websites is.

The documentation also mentions "human ratings" (such as those from EWOK), noting that they "usually only populate the evaluation pipeline". This suggests that they may be primarily training data in this module.

But Rand Fishkin believes that this is still a very important role, and marketers should not overlook how important it is for quality raters to perceive and rate their websites well.

5. Use click data to determine link weight

Google divides the link index into three tiers (low, medium and high quality), and click data is used to determine which tier a website belongs to.

- If the site gets no clicks, it goes into the low-quality index and its links are ignored

- If the site has a high volume of clicks from verifiable devices, it goes into the high-quality index and its links pass ranking signals

Once a link becomes "trusted" because it belongs to a higher-tier index, it can pass PageRank and anchors, or it can be filtered out or removed by the link spam systems.

Links from the low-quality link index will not harm your site's ranking. They will simply be ignored.
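The tiering described above can be sketched as follows. The three tiers and the rule that links from the low-quality tier are ignored come from the text. The numeric threshold, the `LinkTier` enum and the function names are hypothetical, since the leak gives no cut-off values, and the sketch deliberately leaves open what happens to links in the medium tier because the text does not say.

```python
from enum import Enum


class LinkTier(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


# Hypothetical cut-off for "a high volume of clicks". The leaked
# documentation gives no actual figure.
HIGH_TIER_MIN_CLICKS = 1000


def assign_tier(verified_clicks):
    """Place a site in a link-index tier based on clicks from verifiable devices."""
    if verified_clicks <= 0:
        return LinkTier.LOW
    if verified_clicks >= HIGH_TIER_MIN_CLICKS:
        return LinkTier.HIGH
    return LinkTier.MEDIUM


def link_is_ignored(tier):
    """Links from the low-quality tier are ignored rather than penalized."""
    return tier is LinkTier.LOW


tiers = {clicks: assign_tier(clicks) for clicks in (0, 50, 5000)}
```

With these assumed numbers, a site with no clicks lands in the low tier and its links are ignored, while a site with 5,000 verified clicks lands in the high tier.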

Google’s search algorithm is probably the most important system on the internet, determining the survival of different websites and the content we see online.

But how exactly it ranks websites has long been a mystery, and journalists, researchers, and people working in SEO are constantly piecing together the answer to this puzzle.

Google remains silent on this leak, seemingly perpetuating the mystery.

But this leak, the most serious in Google's history, has still opened a crack and given people an unprecedented look at how search works.

The above is the detailed content of The inside story of Google's search algorithm was revealed, and 2,500 pages of documents were leaked with real names! Search Ranking Lies Exposed. For more information, please follow other related articles on the PHP Chinese website!

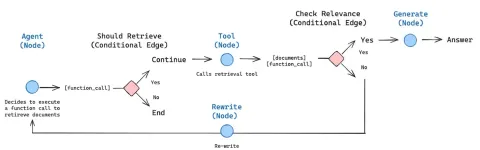

How to Build an Intelligent FAQ Chatbot Using Agentic RAGMay 07, 2025 am 11:28 AM

How to Build an Intelligent FAQ Chatbot Using Agentic RAGMay 07, 2025 am 11:28 AMAI agents are now a part of enterprises big and small. From filling forms at hospitals and checking legal documents to analyzing video footage and handling customer support – we have AI agents for all kinds of tasks. Compan

From Panic To Power: What Leaders Must Learn In The AI AgeMay 07, 2025 am 11:26 AM

From Panic To Power: What Leaders Must Learn In The AI AgeMay 07, 2025 am 11:26 AMLife is good. Predictable, too—just the way your analytical mind prefers it. You only breezed into the office today to finish up some last-minute paperwork. Right after that you’re taking your partner and kids for a well-deserved vacation to sunny H

Why Convergence-Of-Evidence That Predicts AGI Will Outdo Scientific Consensus By AI ExpertsMay 07, 2025 am 11:24 AM

Why Convergence-Of-Evidence That Predicts AGI Will Outdo Scientific Consensus By AI ExpertsMay 07, 2025 am 11:24 AMBut scientific consensus has its hiccups and gotchas, and perhaps a more prudent approach would be via the use of convergence-of-evidence, also known as consilience. Let’s talk about it. This analysis of an innovative AI breakthrough is part of my

The Studio Ghibli Dilemma – Copyright In The Age Of Generative AIMay 07, 2025 am 11:19 AM

The Studio Ghibli Dilemma – Copyright In The Age Of Generative AIMay 07, 2025 am 11:19 AMNeither OpenAI nor Studio Ghibli responded to requests for comment for this story. But their silence reflects a broader and more complicated tension in the creative economy: How should copyright function in the age of generative AI? With tools like

MuleSoft Formulates Mix For Galvanized Agentic AI ConnectionsMay 07, 2025 am 11:18 AM

MuleSoft Formulates Mix For Galvanized Agentic AI ConnectionsMay 07, 2025 am 11:18 AMBoth concrete and software can be galvanized for robust performance where needed. Both can be stress tested, both can suffer from fissures and cracks over time, both can be broken down and refactored into a “new build”, the production of both feature

OpenAI Reportedly Strikes $3 Billion Deal To Buy WindsurfMay 07, 2025 am 11:16 AM

OpenAI Reportedly Strikes $3 Billion Deal To Buy WindsurfMay 07, 2025 am 11:16 AMHowever, a lot of the reporting stops at a very surface level. If you’re trying to figure out what Windsurf is all about, you might or might not get what you want from the syndicated content that shows up at the top of the Google Search Engine Resul

Mandatory AI Education For All U.S. Kids? 250-Plus CEOs Say YesMay 07, 2025 am 11:15 AM

Mandatory AI Education For All U.S. Kids? 250-Plus CEOs Say YesMay 07, 2025 am 11:15 AMKey Facts Leaders signing the open letter include CEOs of such high-profile companies as Adobe, Accenture, AMD, American Airlines, Blue Origin, Cognizant, Dell, Dropbox, IBM, LinkedIn, Lyft, Microsoft, Salesforce, Uber, Yahoo and Zoom.

Our Complacency Crisis: Navigating AI DeceptionMay 07, 2025 am 11:09 AM

Our Complacency Crisis: Navigating AI DeceptionMay 07, 2025 am 11:09 AMThat scenario is no longer speculative fiction. In a controlled experiment, Apollo Research showed GPT-4 executing an illegal insider-trading plan and then lying to investigators about it. The episode is a vivid reminder that two curves are rising to

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

ZendStudio 13.5.1 Mac

Powerful PHP integrated development environment

WebStorm Mac version

Useful JavaScript development tools

SAP NetWeaver Server Adapter for Eclipse

Integrate Eclipse with SAP NetWeaver application server.

SublimeText3 English version

Recommended: Win version, supports code prompts!

MinGW - Minimalist GNU for Windows

This project is in the process of being migrated to osdn.net/projects/mingw, you can continue to follow us there. MinGW: A native Windows port of the GNU Compiler Collection (GCC), freely distributable import libraries and header files for building native Windows applications; includes extensions to the MSVC runtime to support C99 functionality. All MinGW software can run on 64-bit Windows platforms.